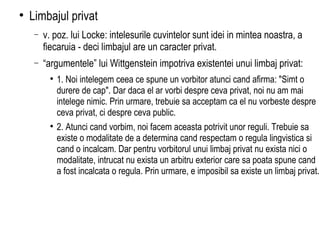

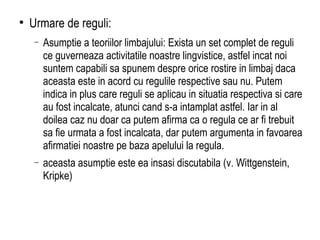

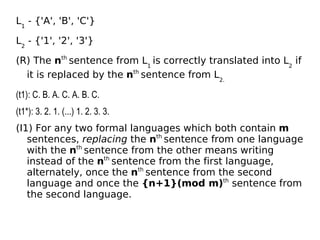

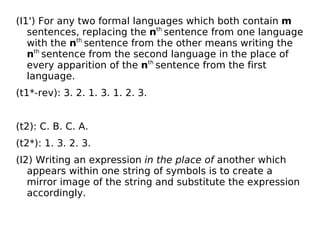

The document surveys philosophical perspectives on language, focusing on theories of translation and interpretation, in particular Quine's indeterminacy of translation and Davidson's radical interpretation. It critiques the idea of a private language, drawing on Wittgenstein's argument that linguistic practices require public rules. Finally, it highlights the difficulty of formulating a semantic theory that reliably distinguishes meaningful from meaningless expressions, and questions whether normative theories of language are feasible.

![Translation and interpretation. Quine: indeterminacy of translation, inscrutability of reference, ontological relativity [see the 'Gavagai' example]. Davidson: radical interpretation, Principle of Charity](https://image.slidesharecdn.com/limbaj13-090520055437-phpapp01/85/Filosofia-limbajului-curs-13-2-320.jpg)