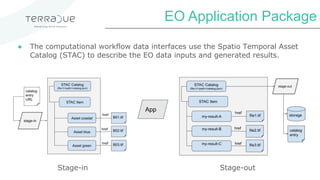

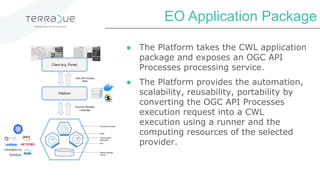

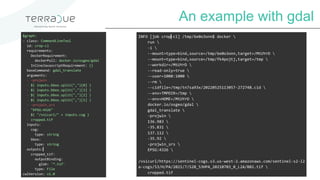

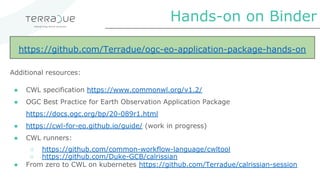

Terradue is an ESA spin-off company based in Rome that helps application builders exploit satellite data with algorithms written in different programming models and languages. It helps them deploy and operate Earth observation (EO) data processing applications on multiple clouds without lock-in. An Earth observation application is a set of command-line tools organized as a computational workflow that takes EO data and numeric or textual parameters as input. An application package uses the Common Workflow Language (CWL) to describe the workflow's input/output interface and the orchestration of its tools, which provides automation, scalability, and portability across environments. The package containerizes the tools and their dependencies, while the workflow and its interfaces are described in CWL. The platform takes the package and exposes an OGC API - Processes service, automating