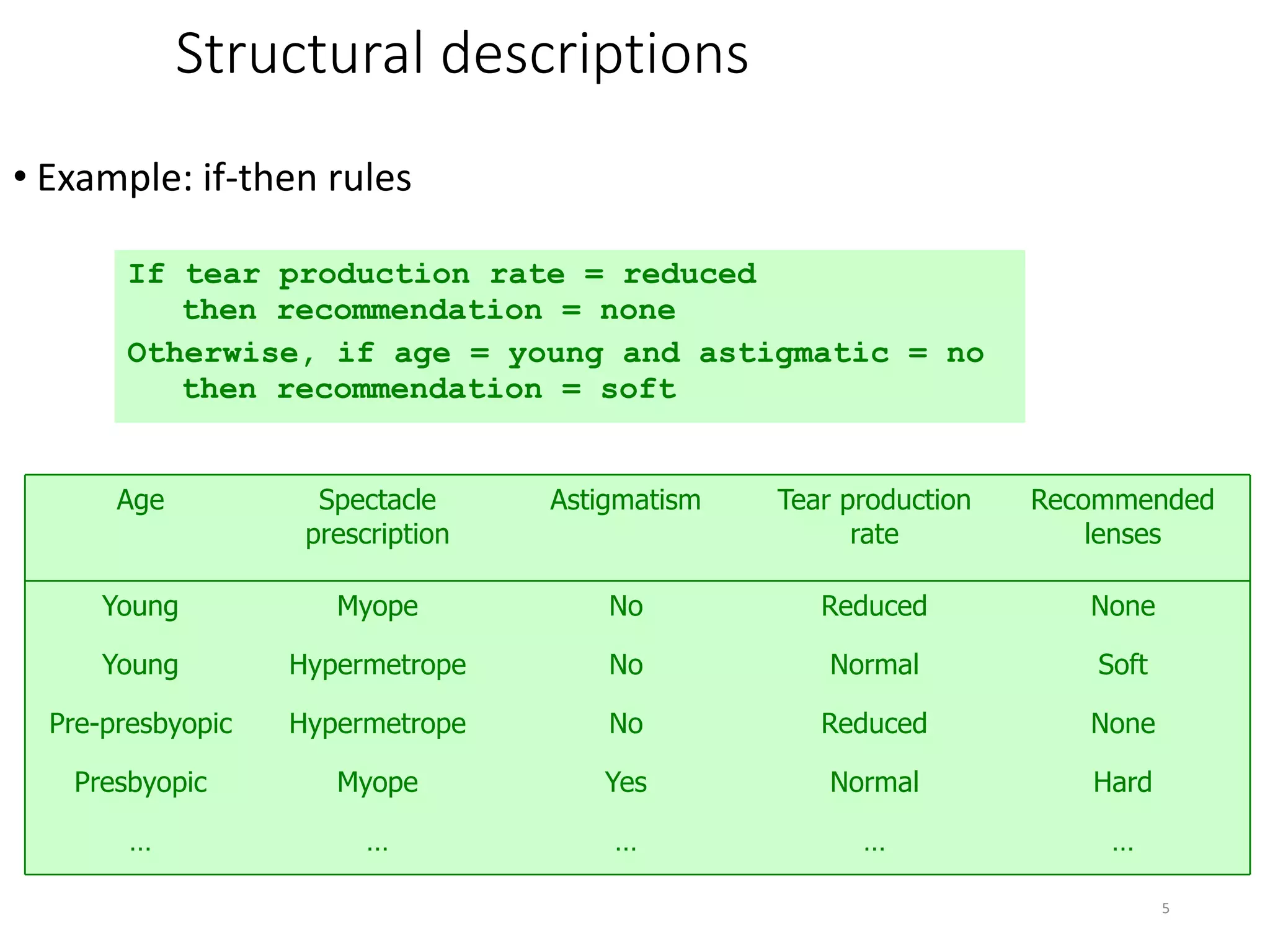

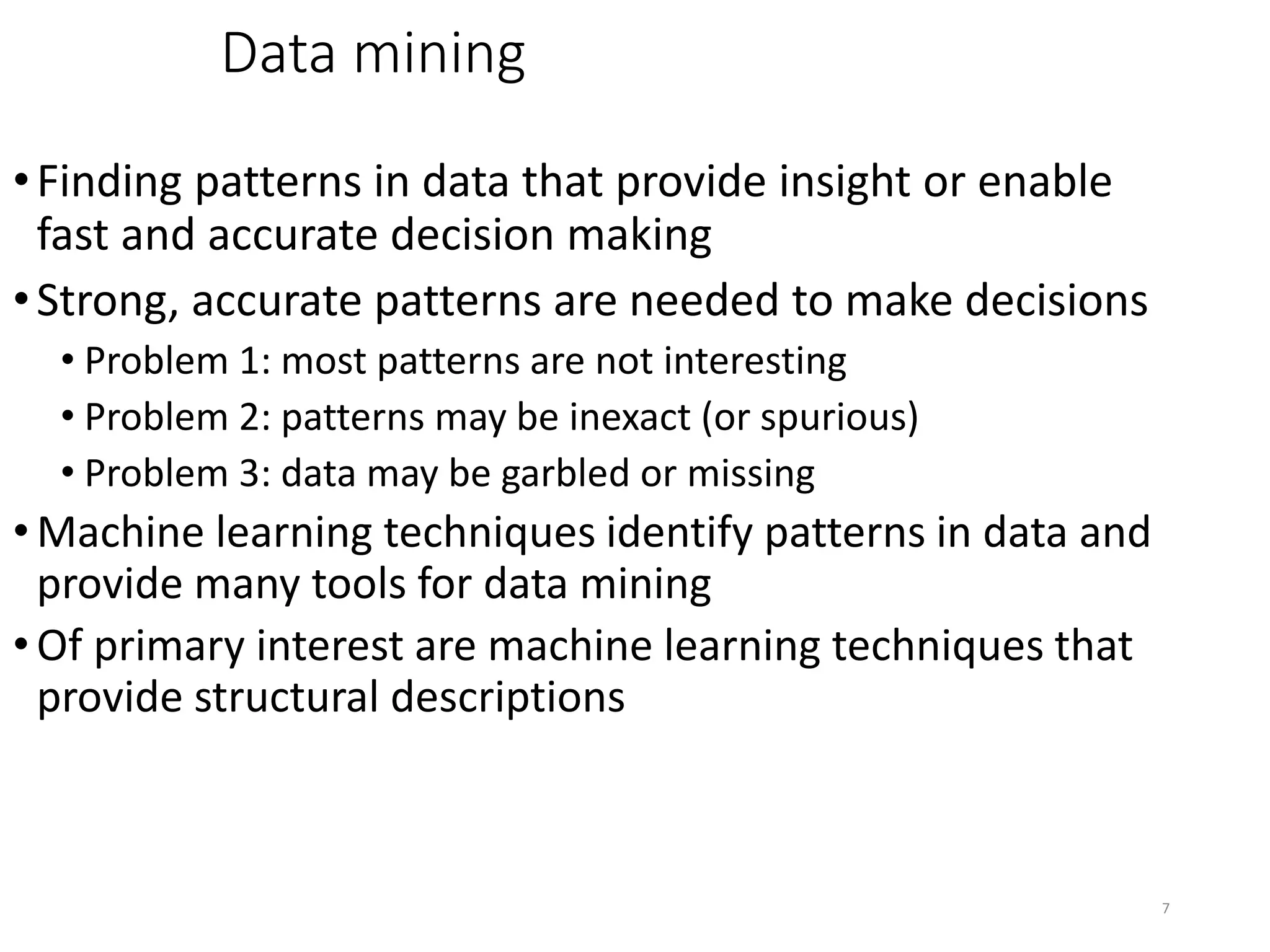

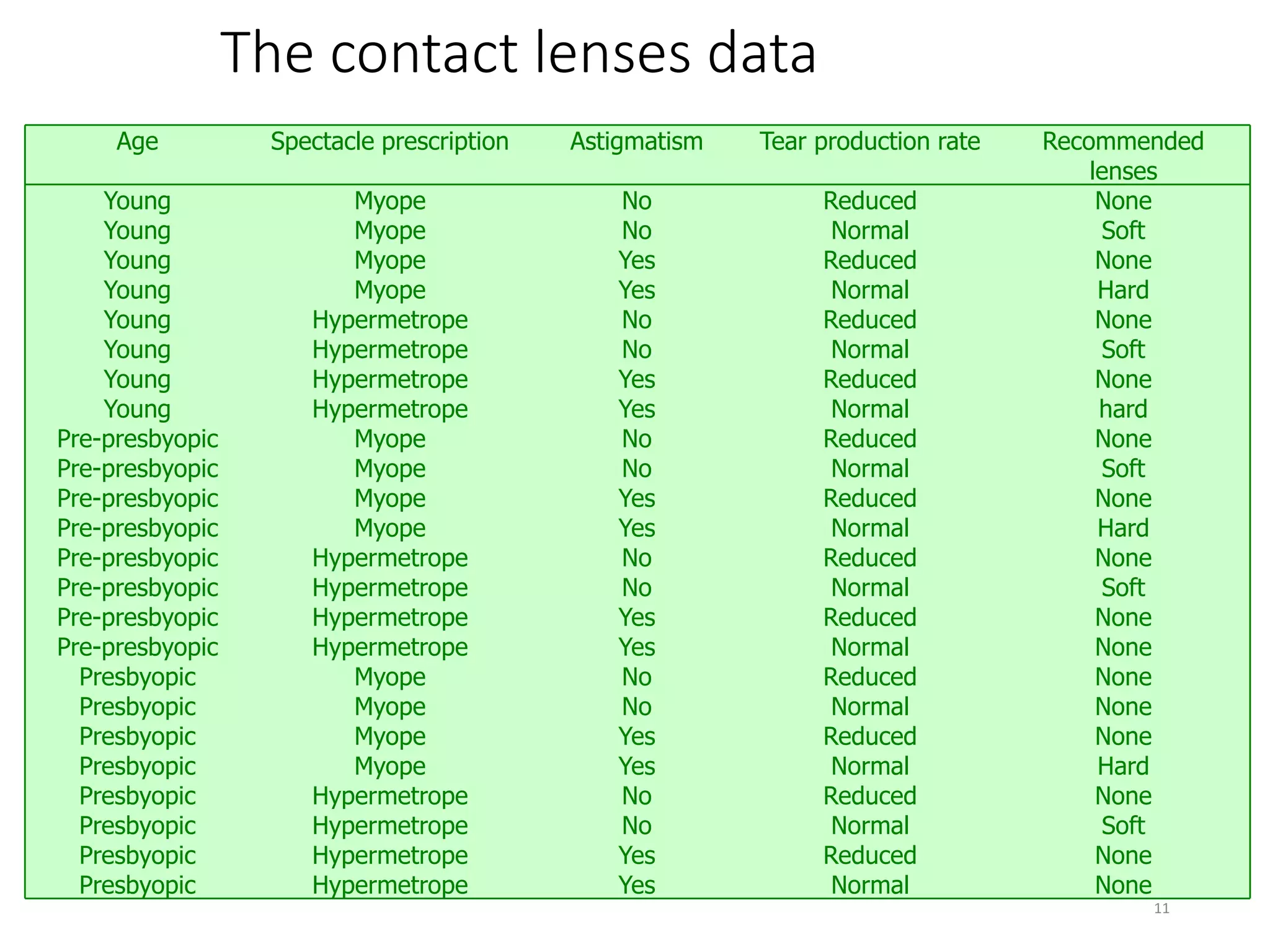

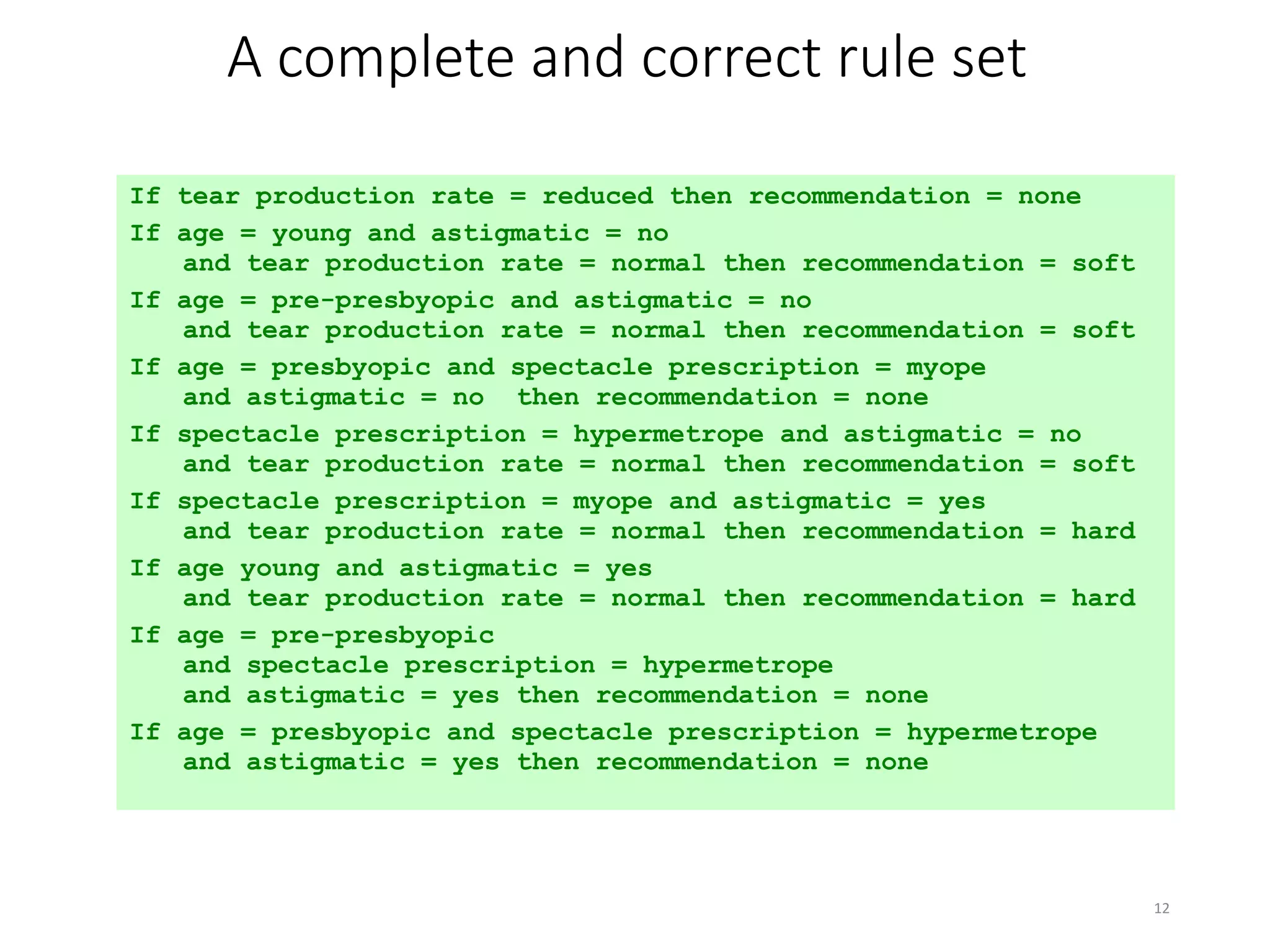

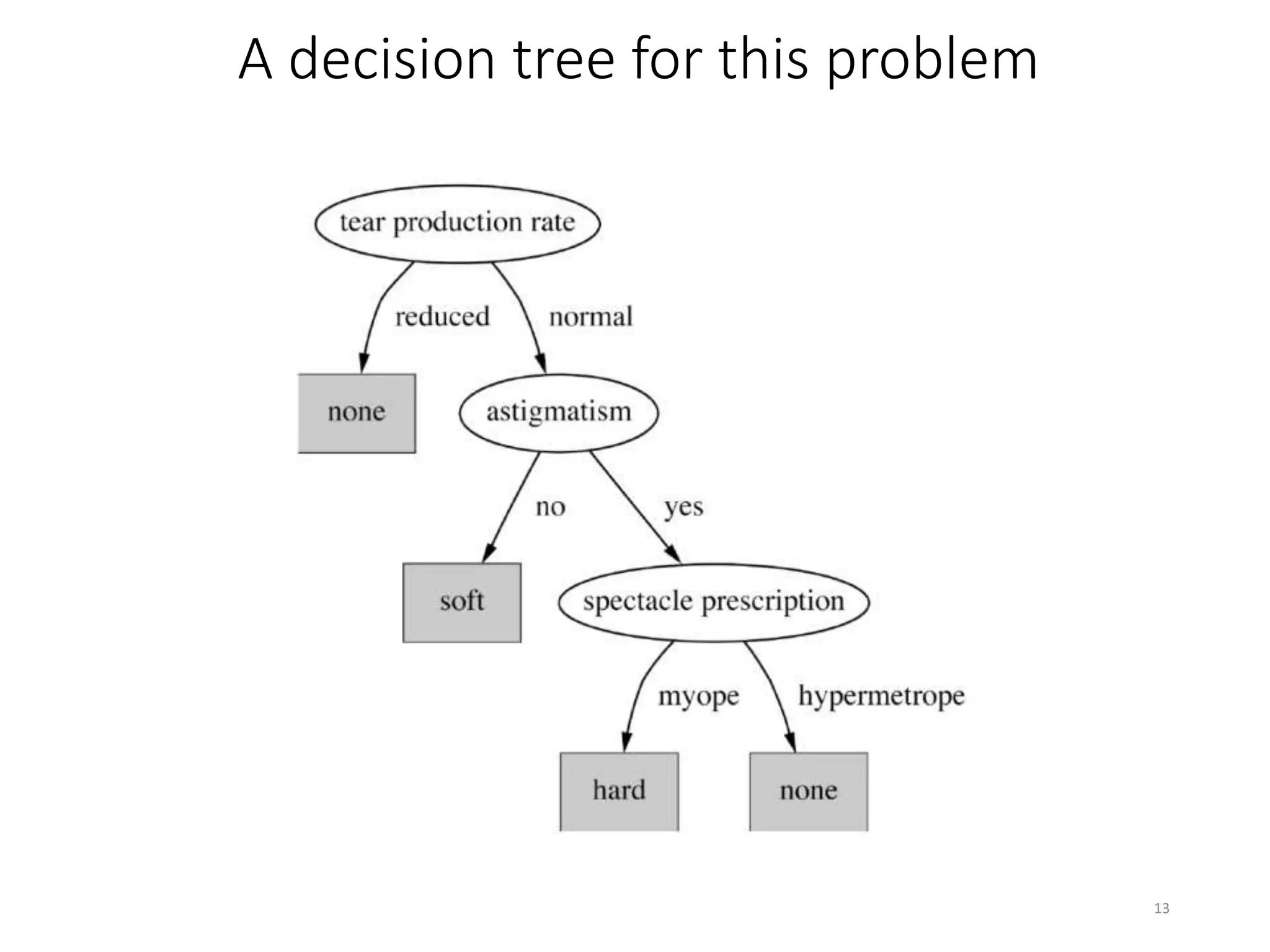

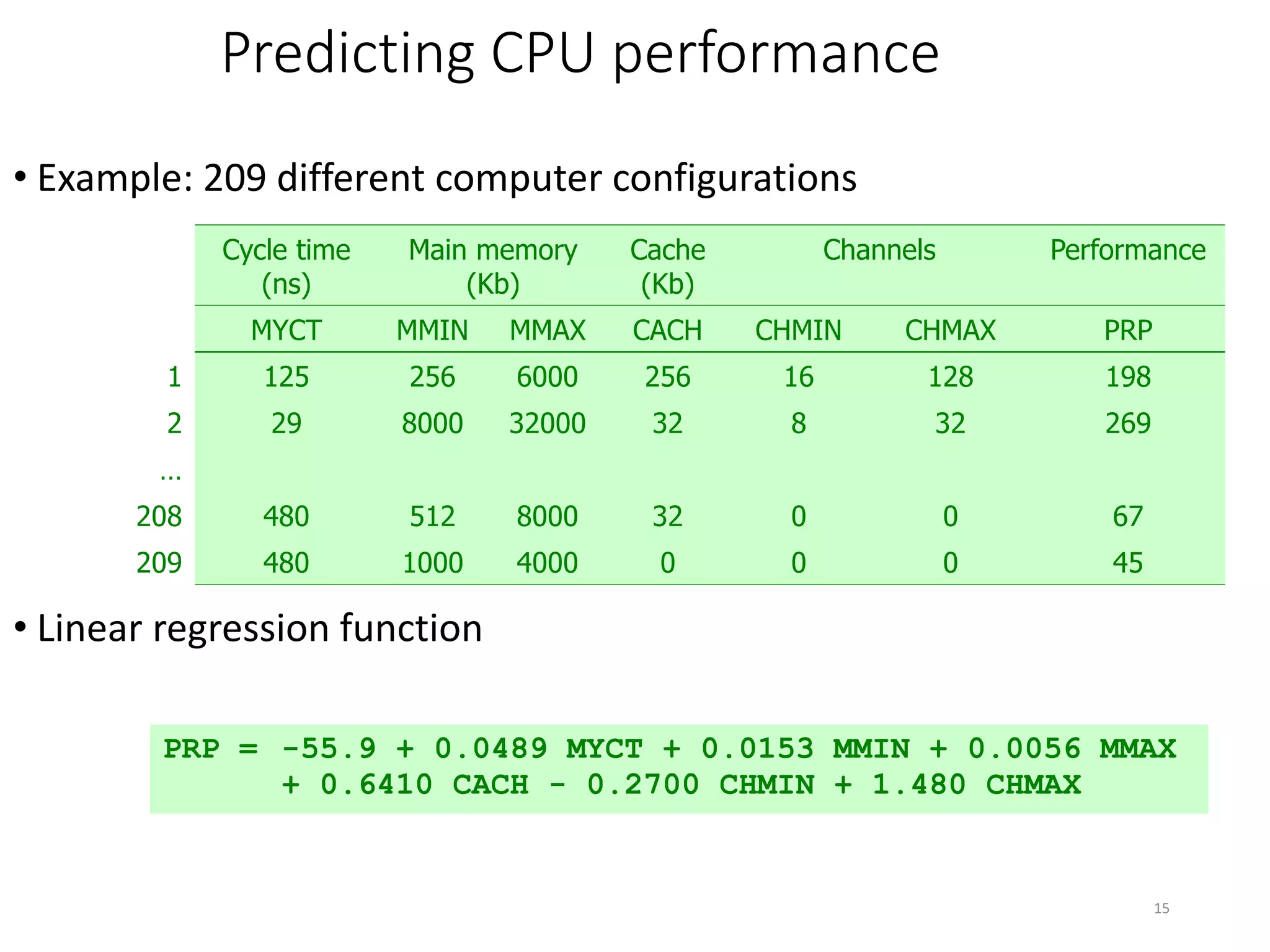

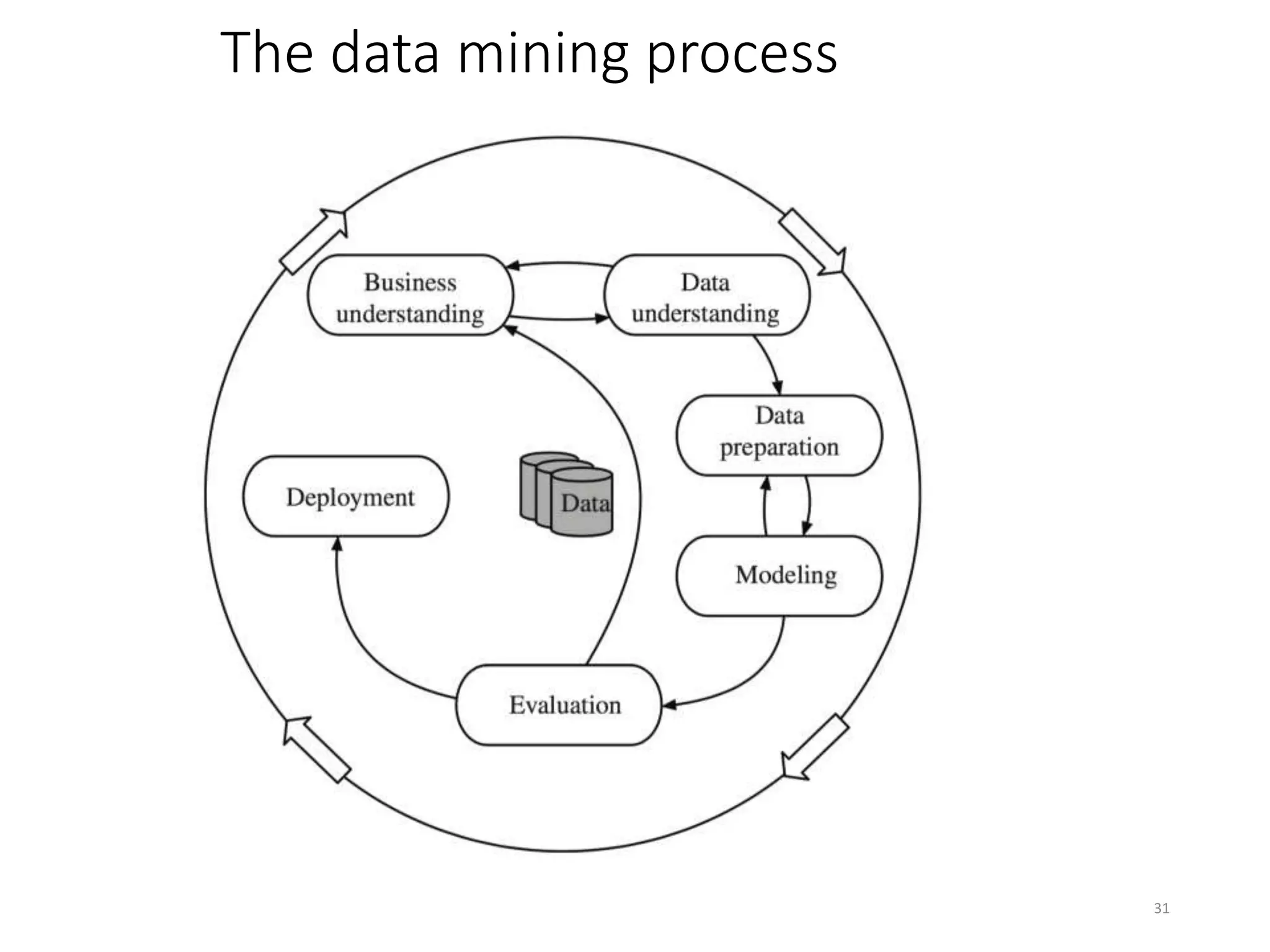

This document gives an overview of data mining and machine learning techniques. It discusses how machine learning can extract patterns and insights from large amounts of data, with example applications in credit lending, oil spill detection, load forecasting, and machine fault diagnosis. It also covers key data mining concepts, including the generalization process and bias, along with some ethical considerations in using these techniques.