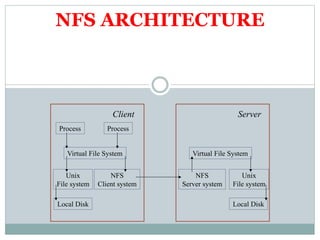

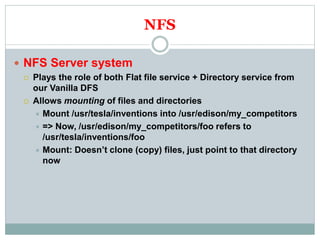

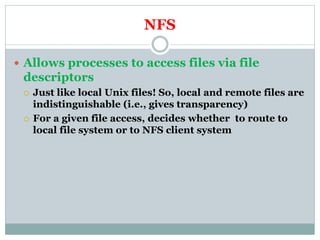

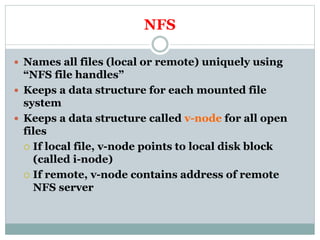

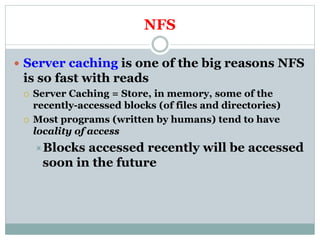

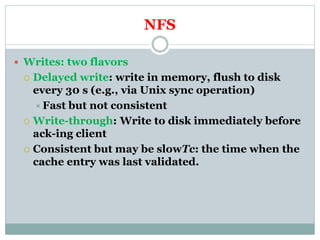

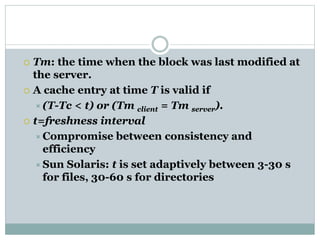

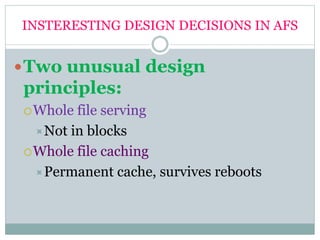

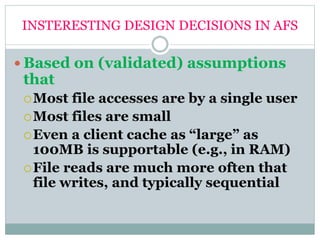

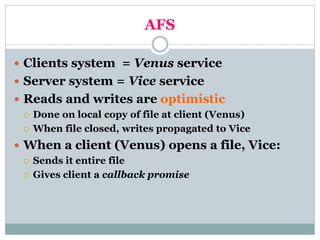

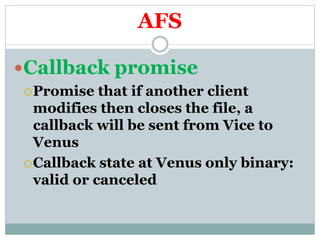

This document summarizes the Sun Network File System (NFS) and the Coda File System. NFS, developed by Sun Microsystems in the 1980s, lets processes access remote files through ordinary file descriptors, so local and remote files look the same to applications. It uses file handles to name files uniquely, and the server caches frequently accessed file blocks to improve read performance. The Andrew File System (AFS), Coda's predecessor designed at CMU, takes a different approach: it serves whole files rather than individual blocks and caches entire files at the clients.
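The contrast between the two caching strategies can be illustrated with a small sketch. This is a toy in-memory model, not the NFS or AFS protocol: the `FileHandle` fields, the class names `BlockServer` and `WholeFileClient`, and the 4-byte `BLOCK_SIZE` are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 4  # unrealistically small, so the block boundaries are easy to see


@dataclass(frozen=True)
class FileHandle:
    """Opaque name for a file. The fields are hypothetical, for illustration."""
    volume_id: int
    inode: int
    generation: int


@dataclass
class BlockServer:
    """NFS-style sketch: serves single blocks and caches hot blocks at the server."""
    disk: dict  # maps FileHandle -> file contents (bytes)
    cache: dict = field(default_factory=dict)
    disk_reads: int = 0

    def read_block(self, fh: FileHandle, block_no: int) -> bytes:
        key = (fh, block_no)
        if key not in self.cache:
            # Cache miss: go to "disk" once, then remember the block.
            self.disk_reads += 1
            start = block_no * BLOCK_SIZE
            self.cache[key] = self.disk[fh][start:start + BLOCK_SIZE]
        return self.cache[key]


@dataclass
class WholeFileClient:
    """AFS-style sketch: fetches the entire file on open and caches it at the client."""
    server_files: dict  # maps FileHandle -> file contents (bytes)
    local_cache: dict = field(default_factory=dict)
    fetches: int = 0

    def open(self, fh: FileHandle) -> bytes:
        if fh not in self.local_cache:
            # First open: transfer the whole file from the server.
            self.fetches += 1
            self.local_cache[fh] = self.server_files[fh]
        return self.local_cache[fh]


fh = FileHandle(volume_id=1, inode=42, generation=0)
data = b"hello, world"

server = BlockServer(disk={fh: data})
first_block = server.read_block(fh, 0)
server.read_block(fh, 0)  # second read of the same block is served from the cache

client = WholeFileClient(server_files={fh: data})
whole_file = client.open(fh)
client.open(fh)  # second open is served from the client's local copy
```

In this model a repeated block read touches the server's disk only once, while a repeated open touches the server only once per file. The sketch leaves out everything that makes the real systems hard, such as cache consistency, write handling, and failure recovery.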