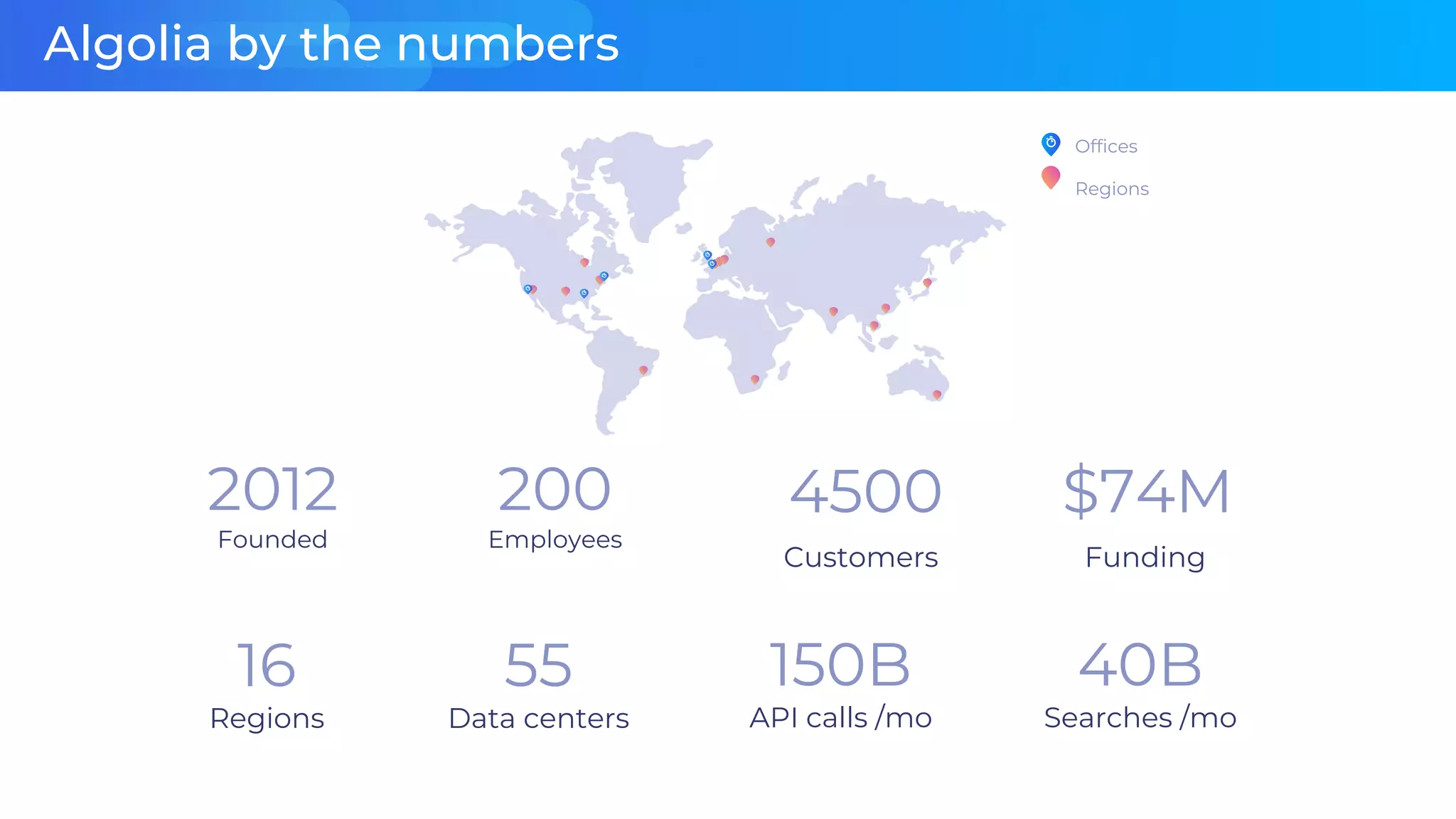

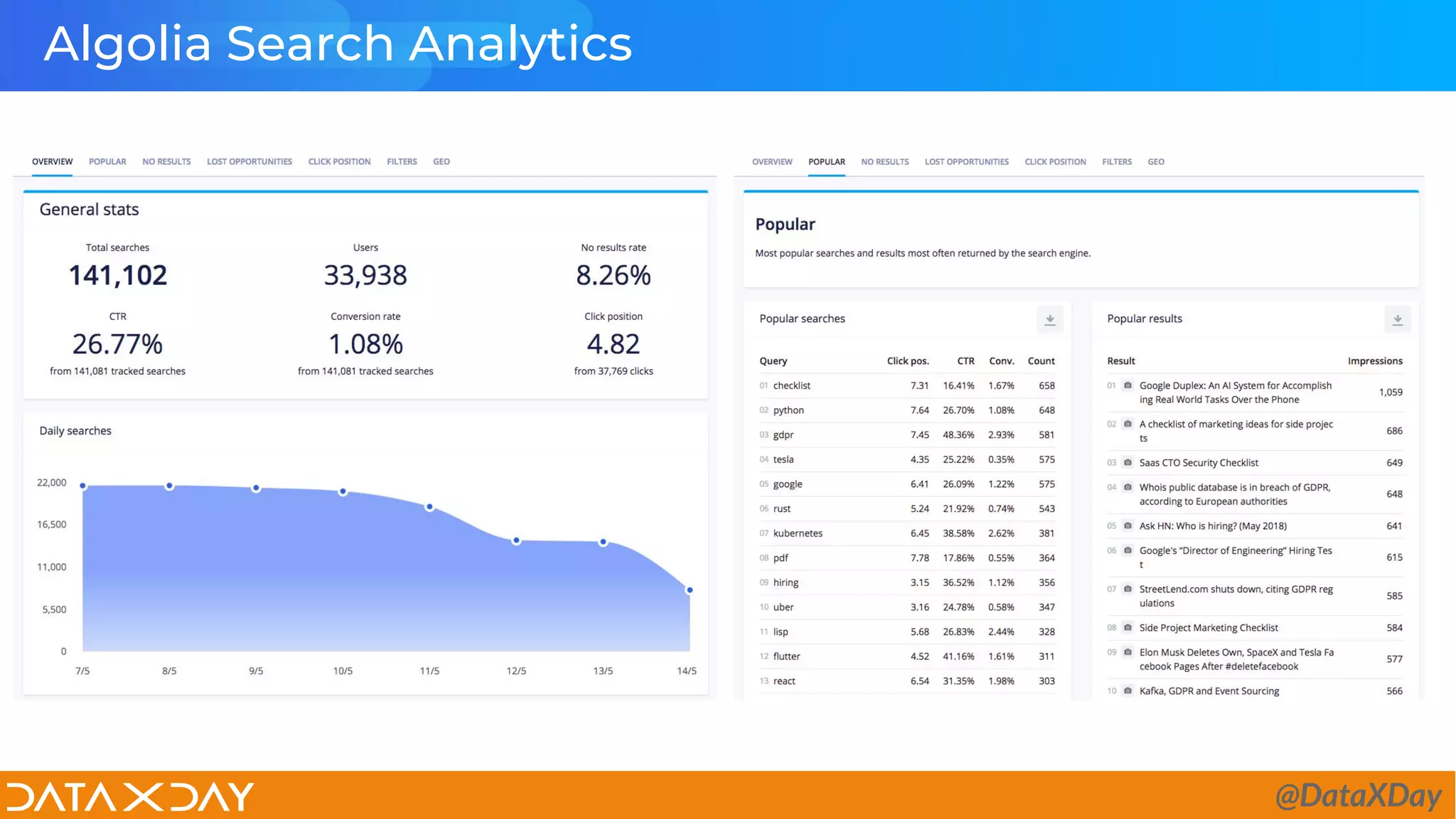

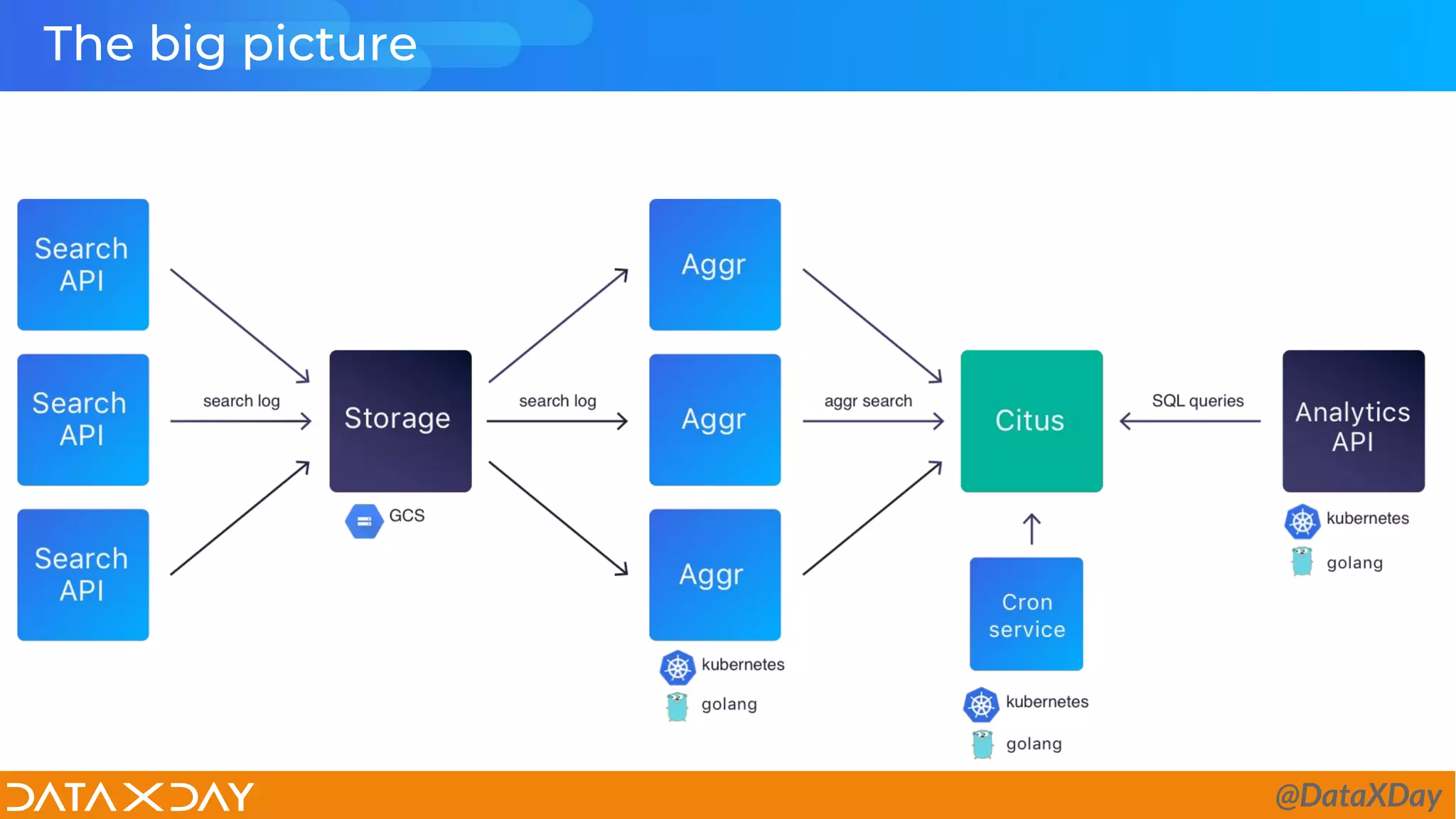

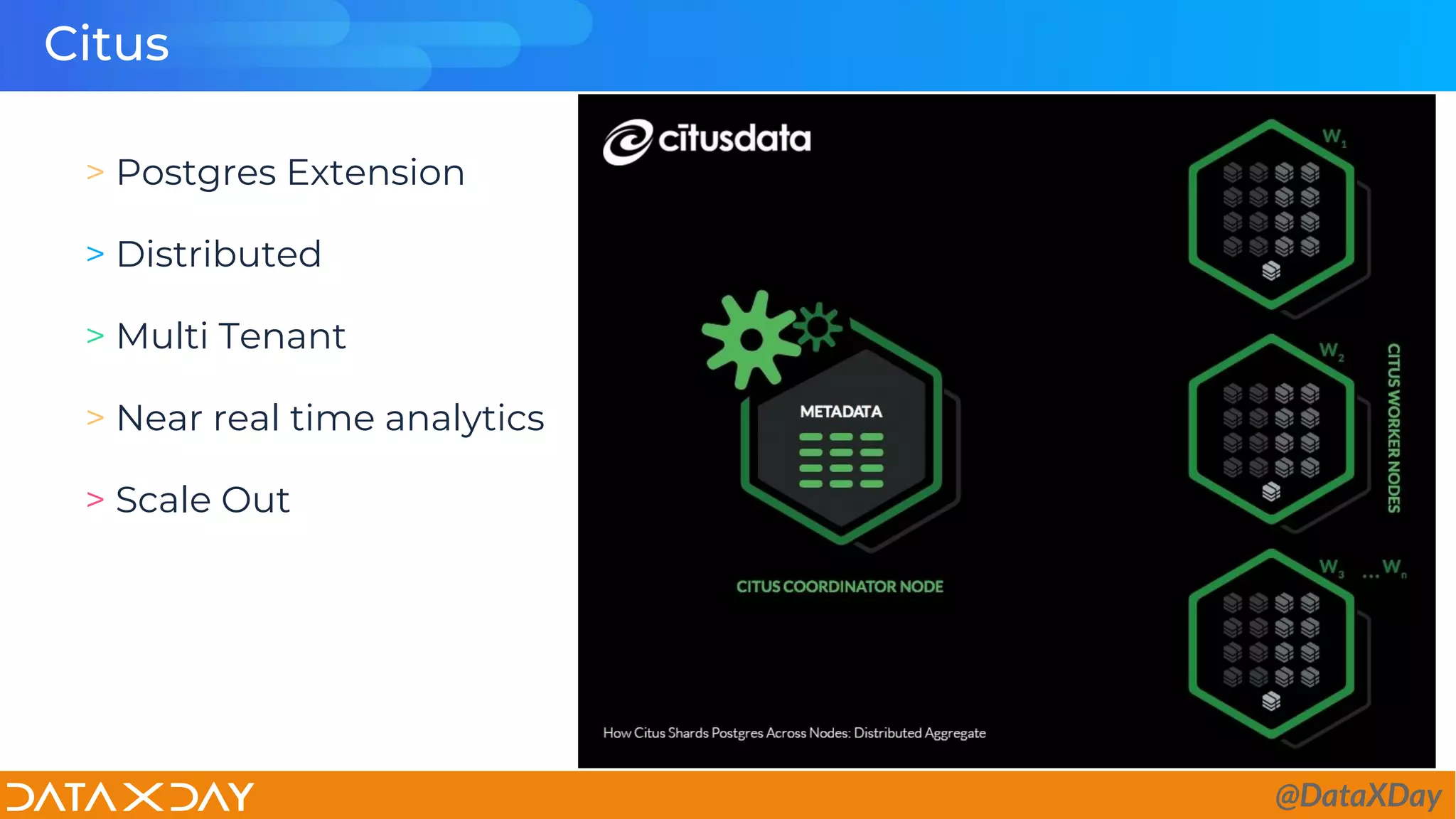

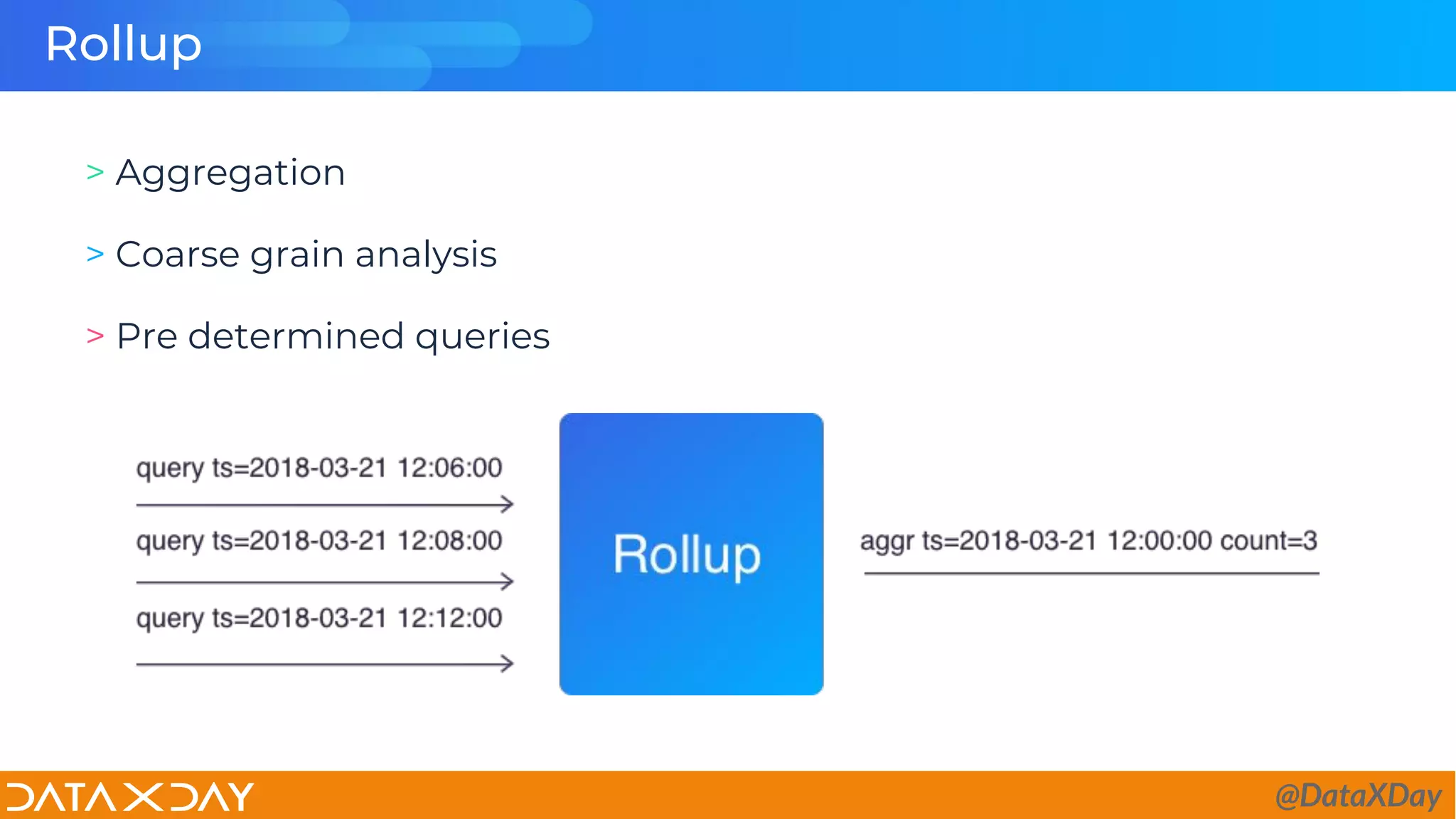

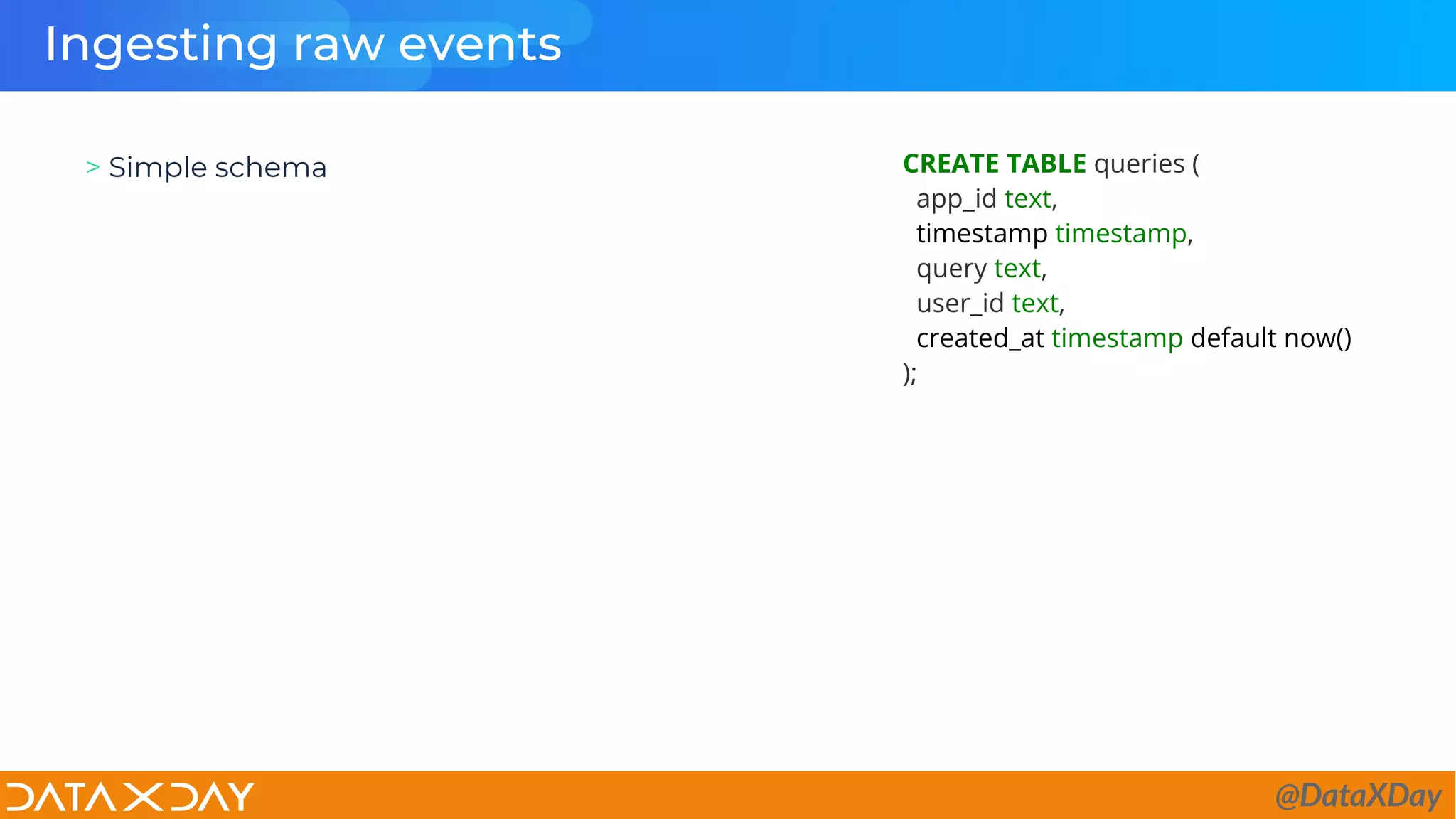

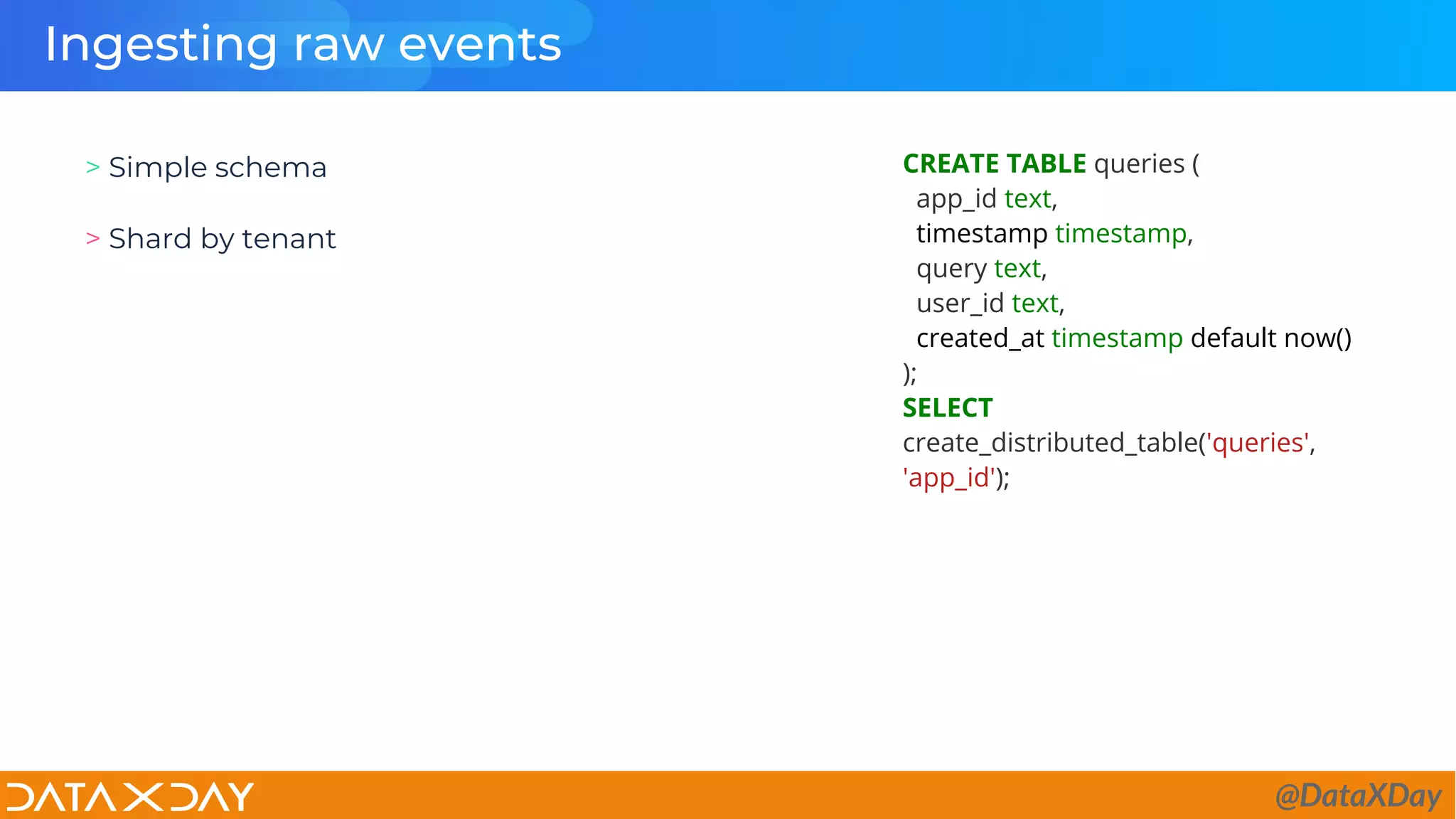

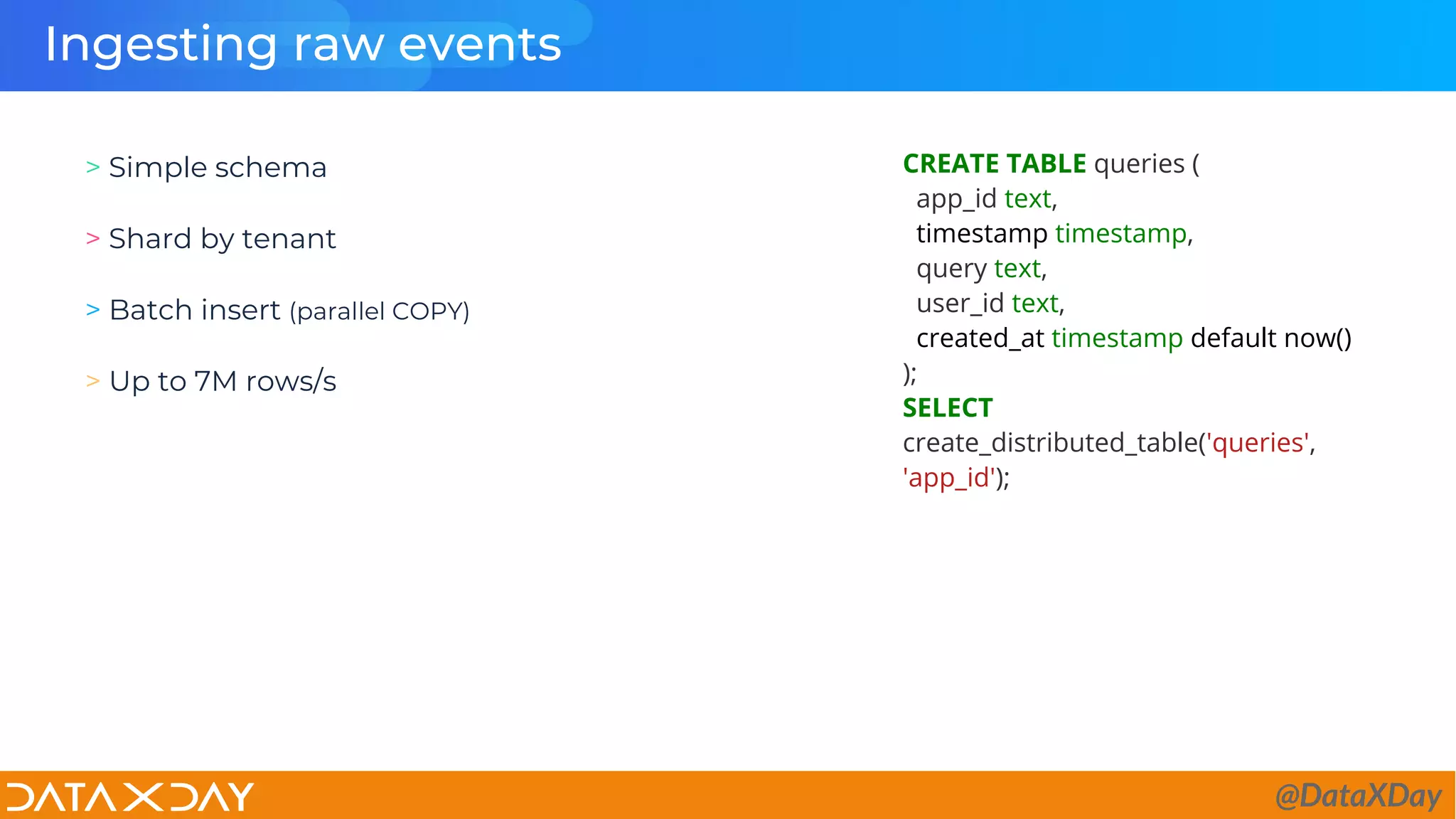

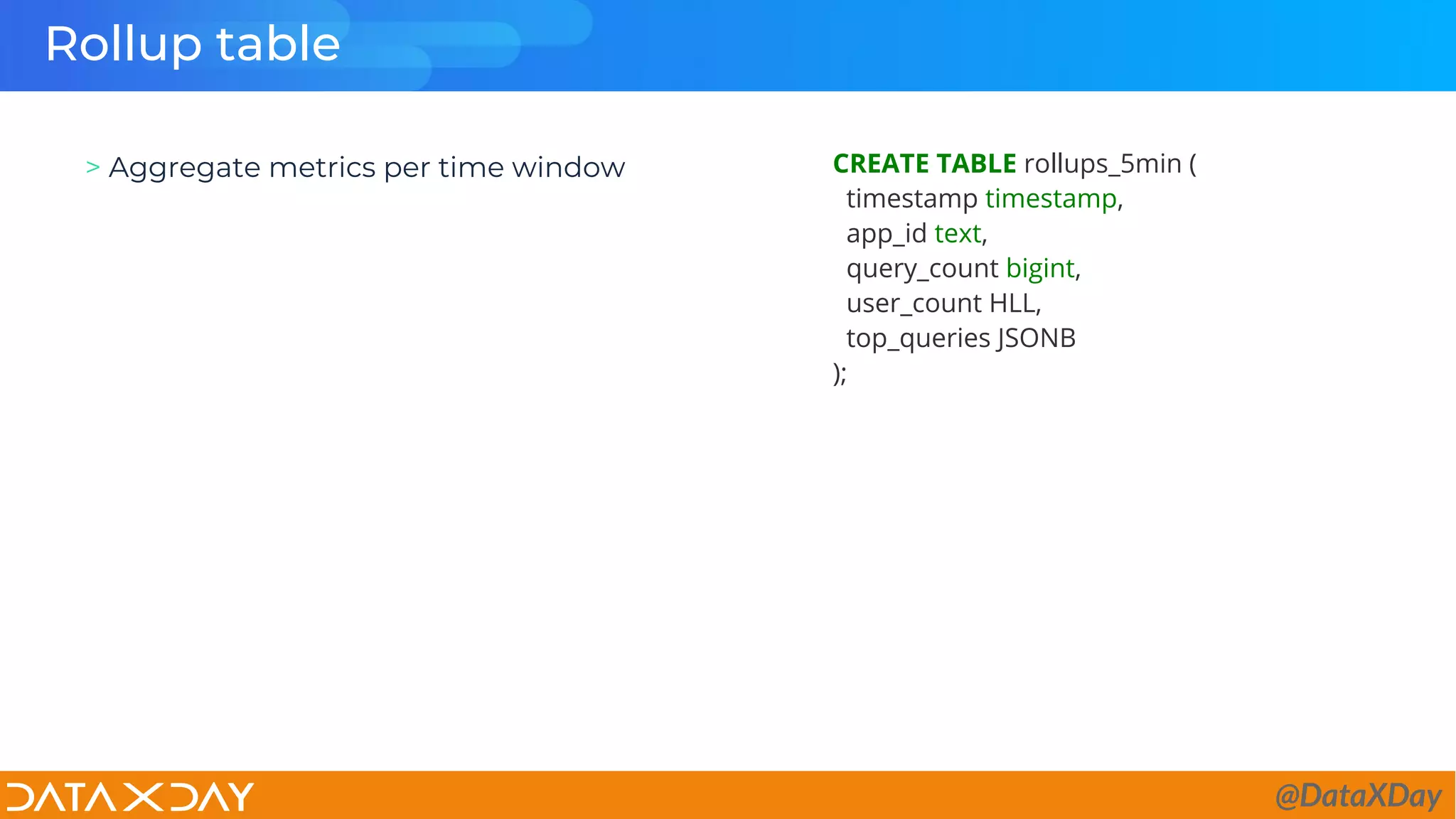

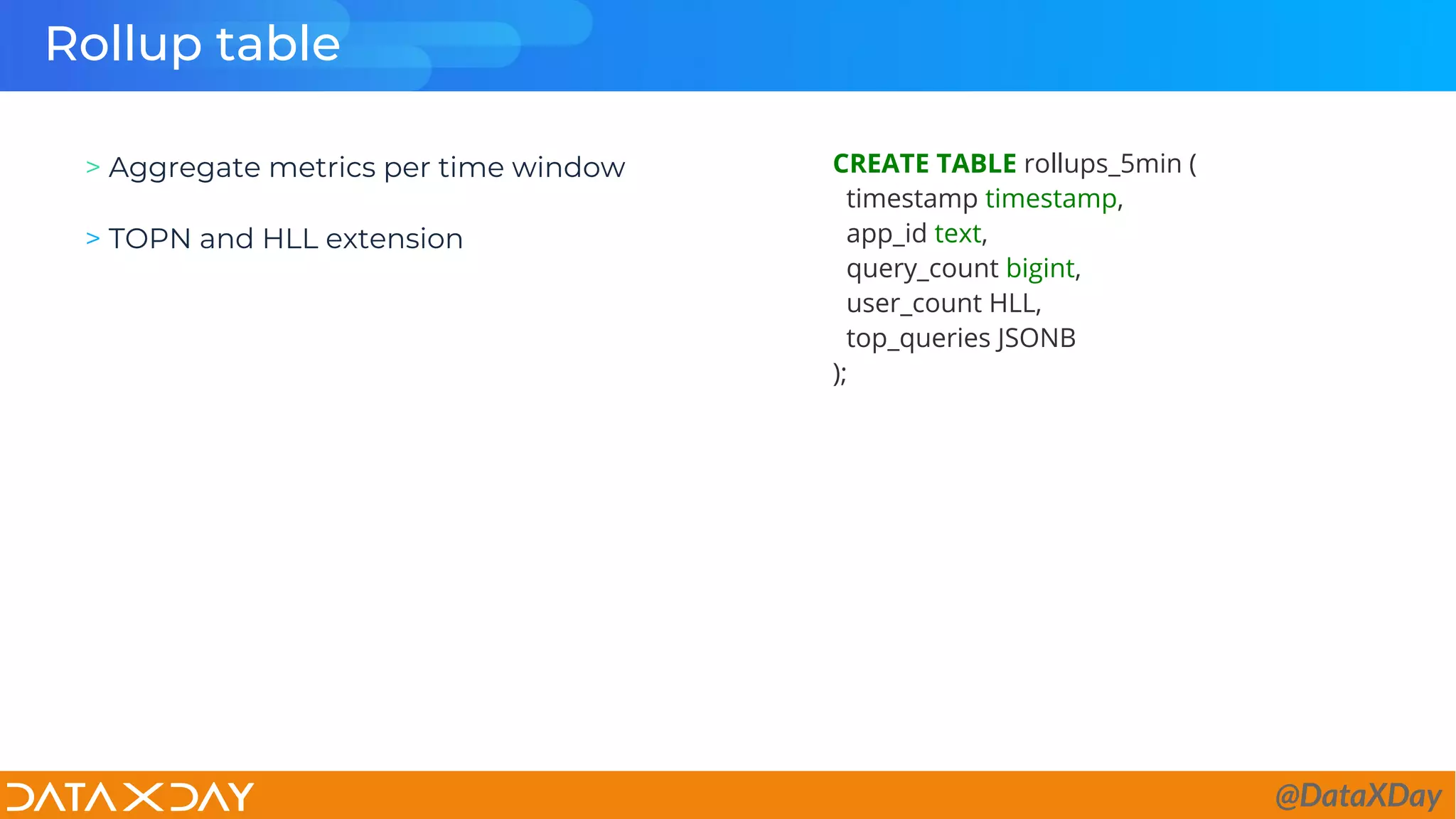

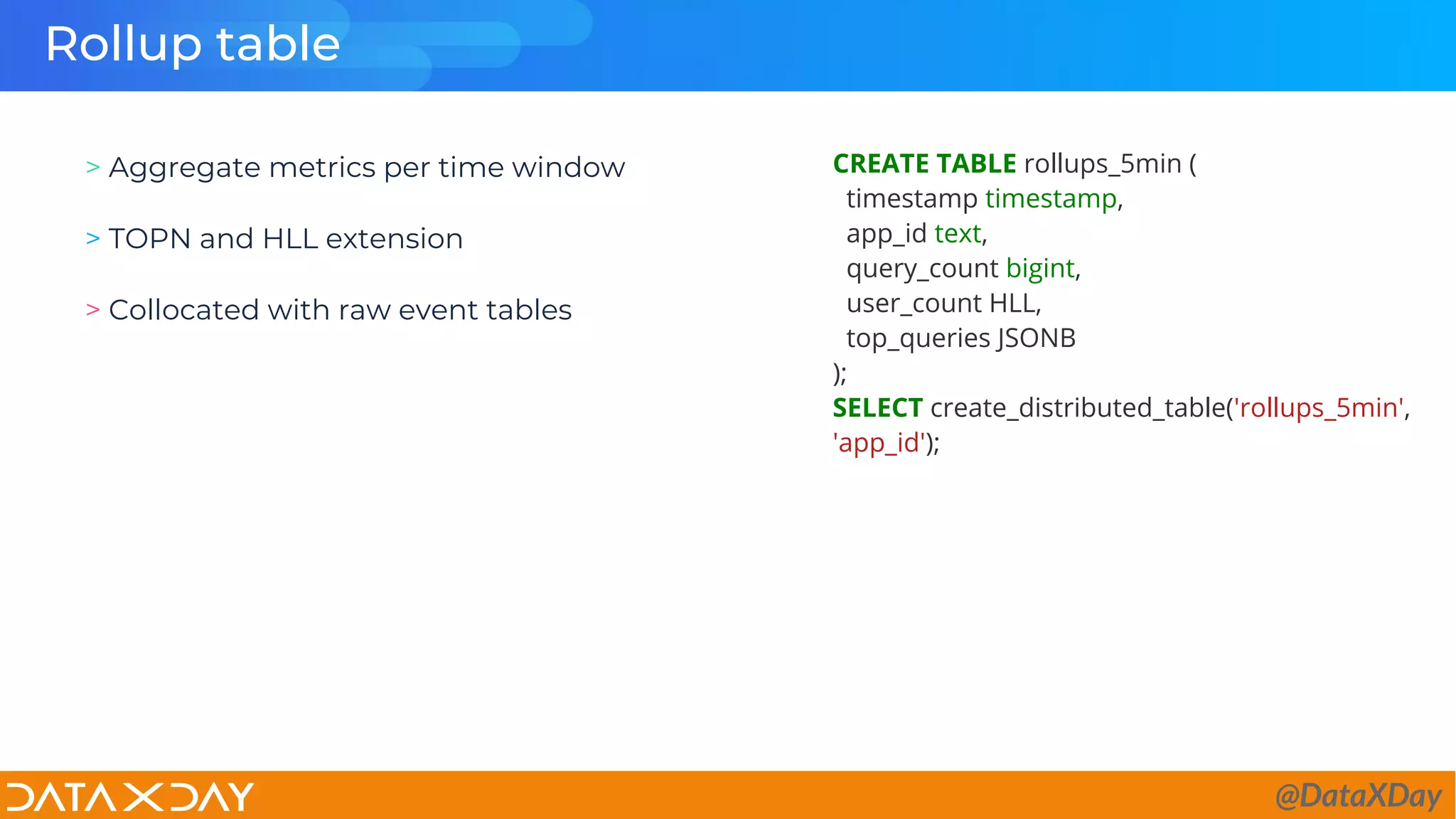

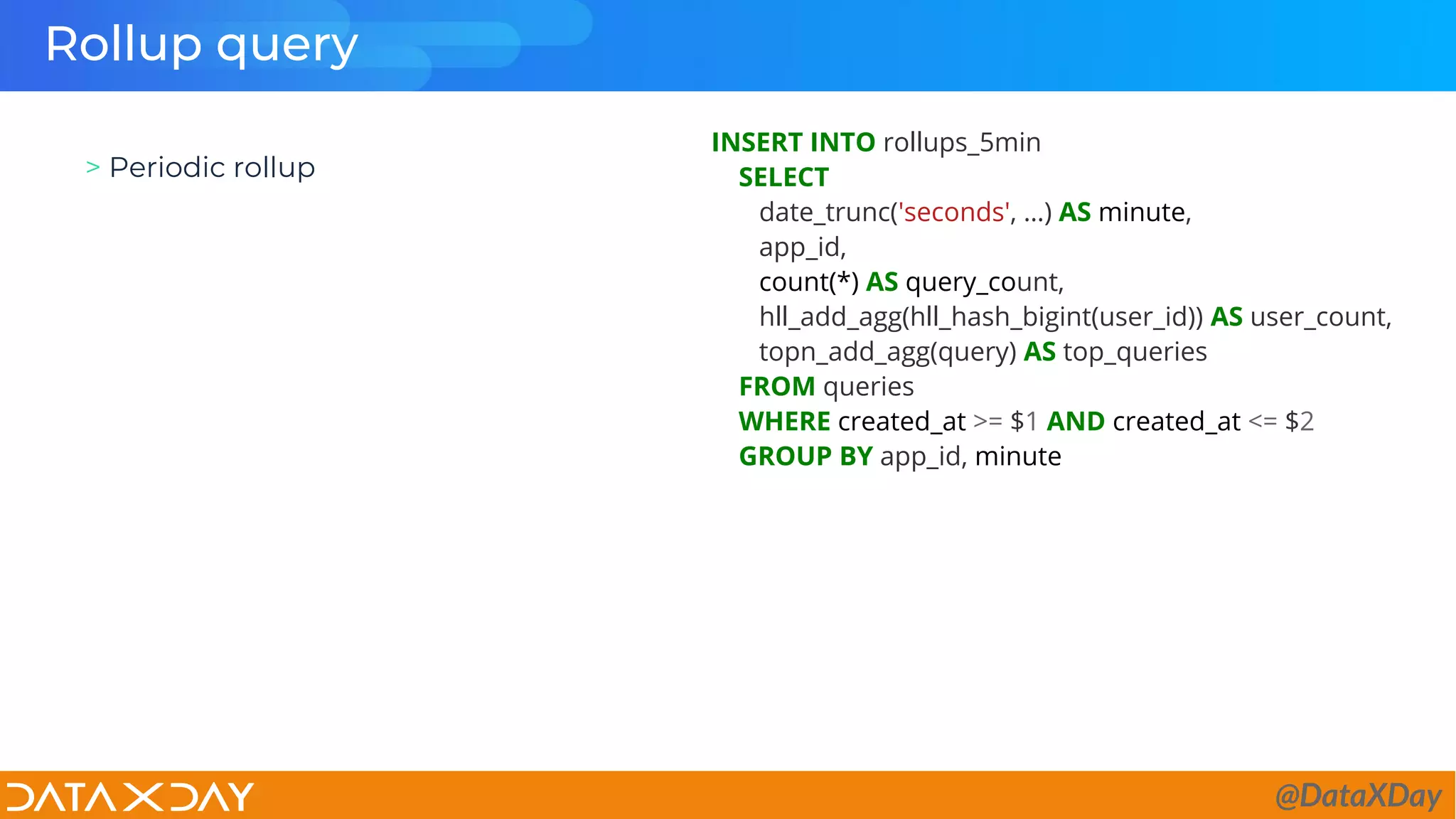

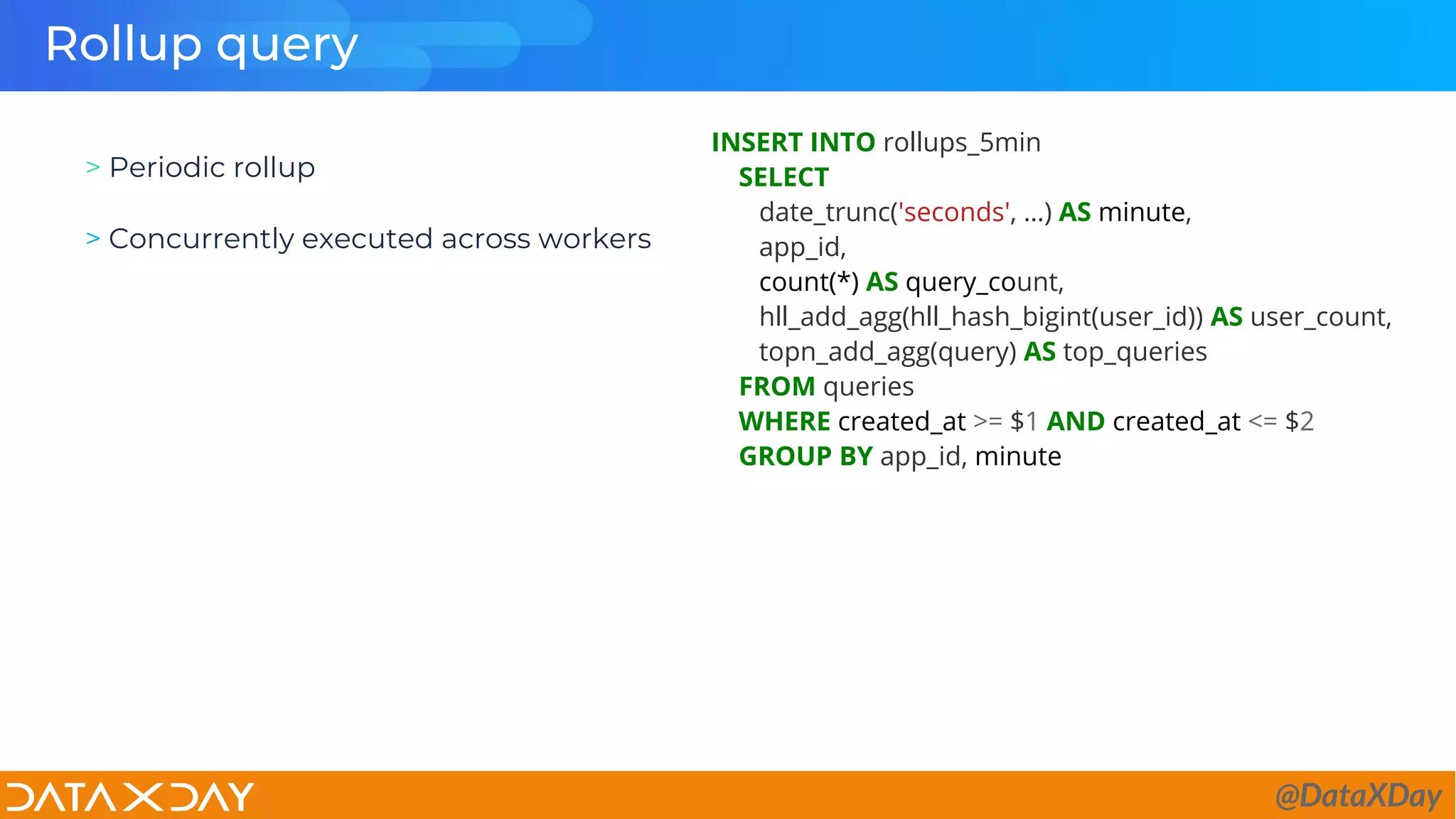

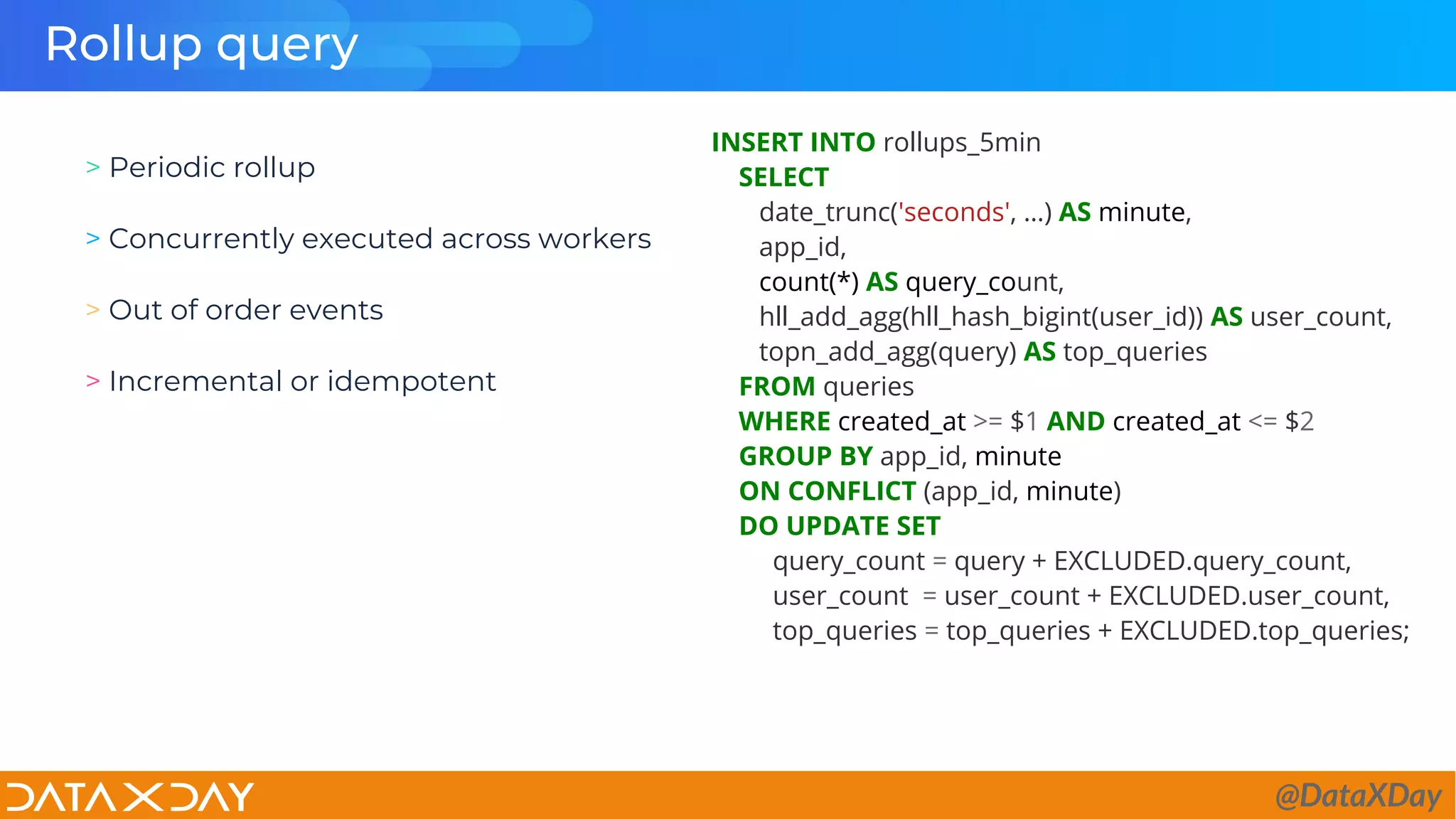

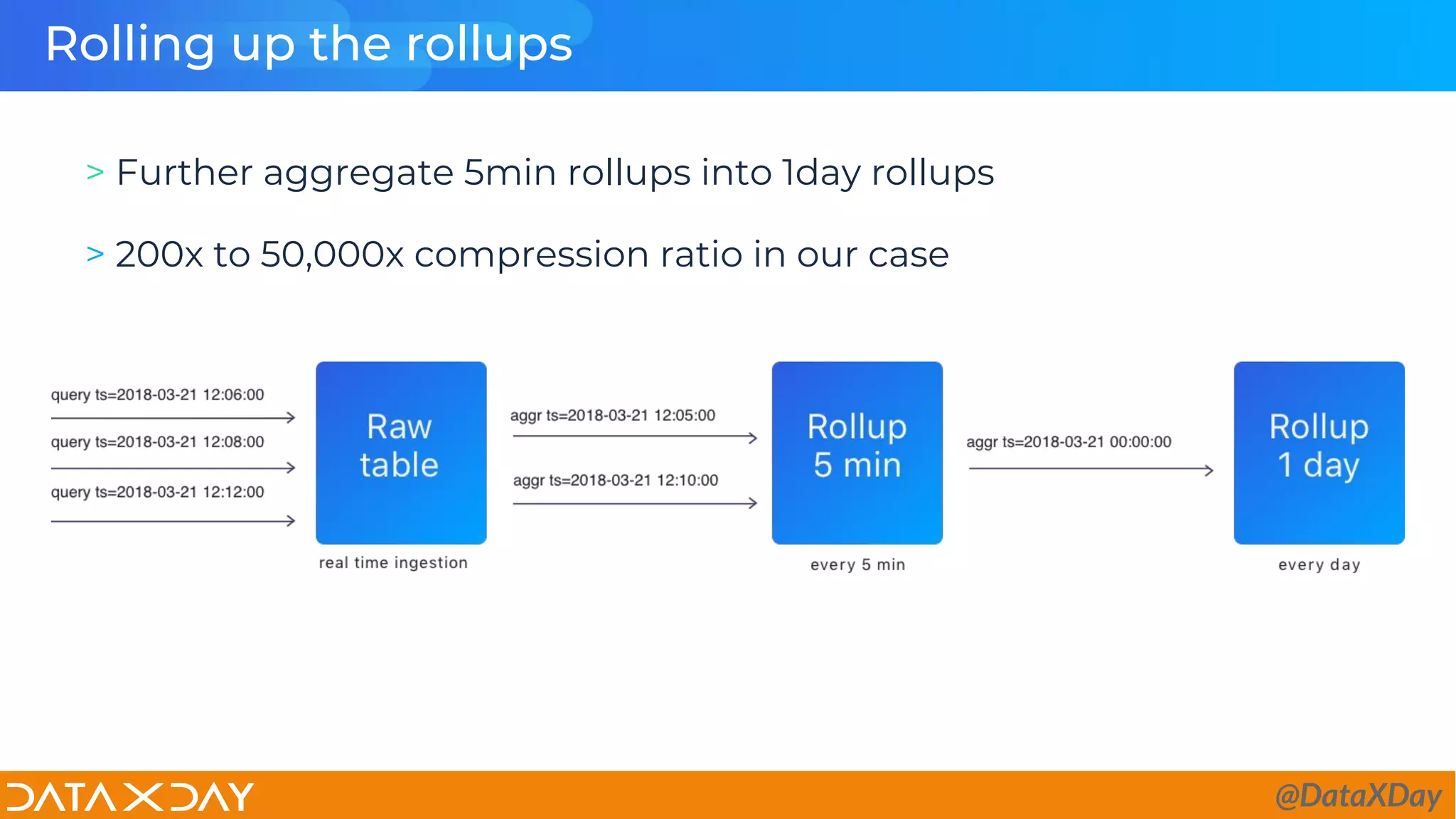

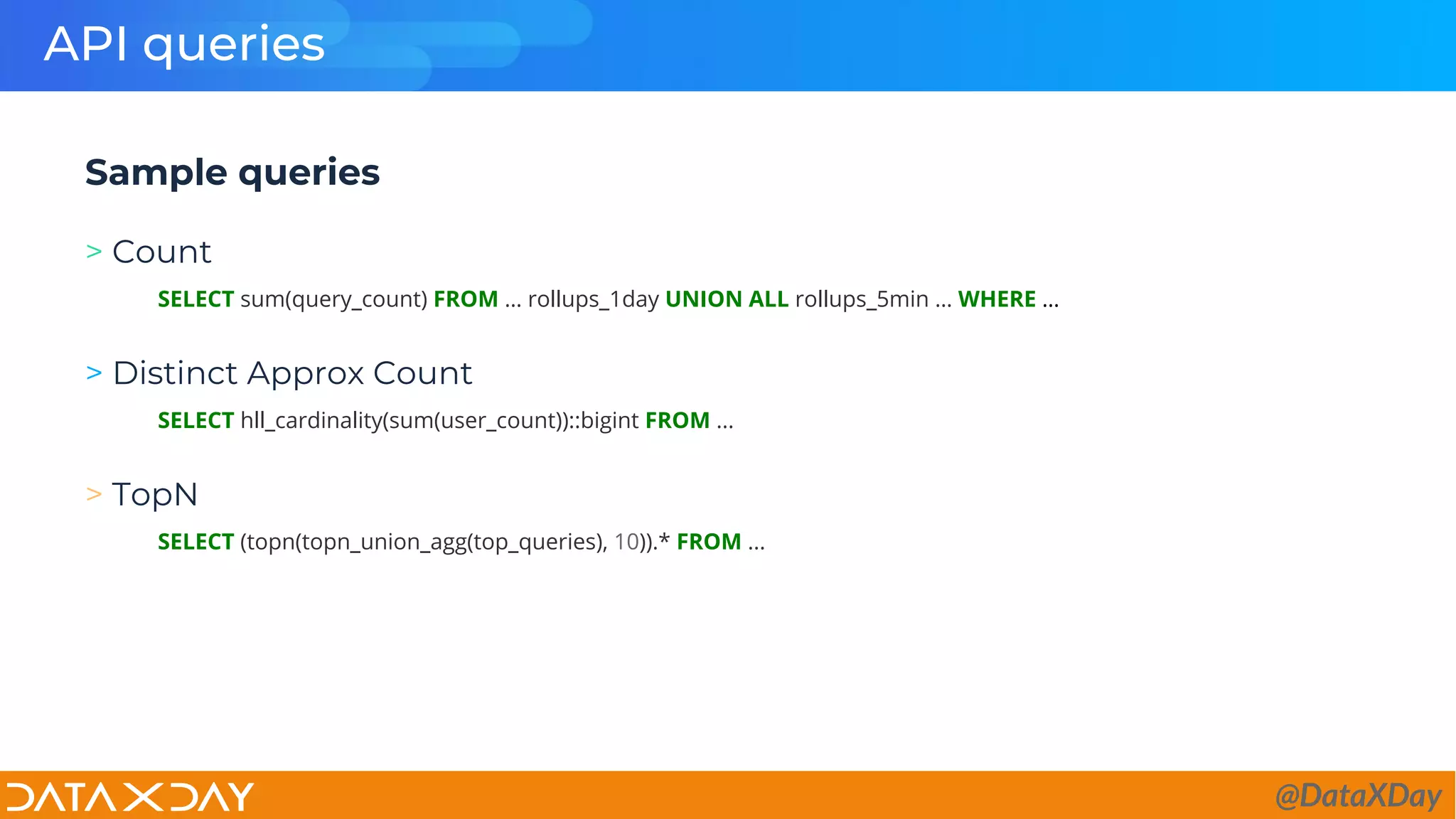

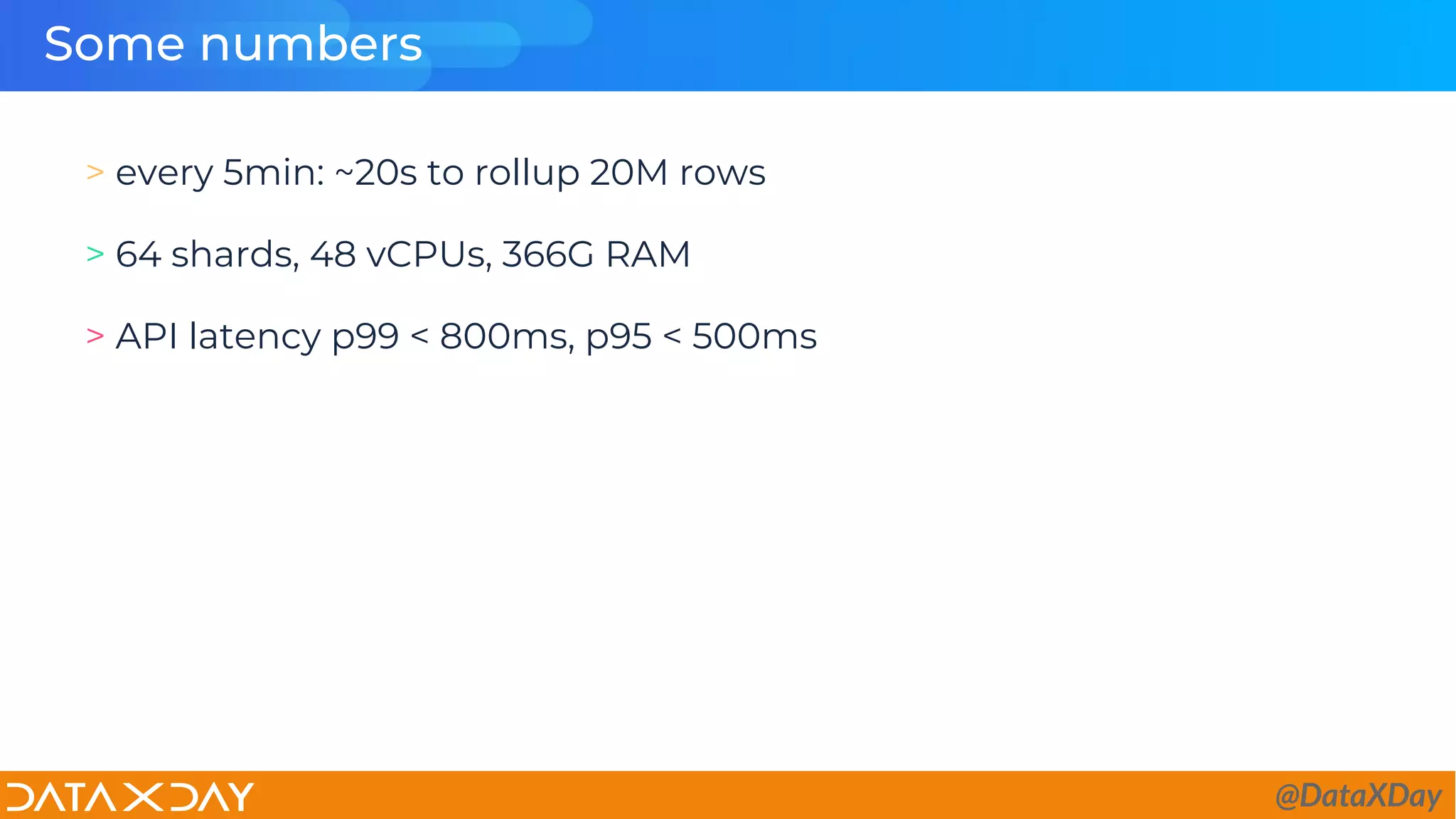

This document summarizes a presentation about building a real-time analytics API at scale using Citus, an open-source PostgreSQL extension. The presentation describes how Algolia moved from Elasticsearch to Citus to serve sub-second analytics queries over billions of events per day. Key points include how Algolia configured Citus to shard and distribute data across clusters, how roll-up tables aggregate raw events into metrics at different time intervals, and how queries against these roll-up tables run with sub-800ms latency at scale. According to the presentation, building on Citus has proven successful for Algolia's analytics needs.
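
The roll-up idea mentioned above can be illustrated with a minimal in-memory sketch. It is not Algolia's implementation: in a Citus deployment the aggregation would normally be expressed in SQL against distributed tables, and the names used here (`app_id`, `query`, `roll_up`, `top_queries`) are hypothetical. The sketch only shows the principle: raw events are counted once into fixed time buckets, and later queries read the much smaller bucketed table instead of the raw events.

```python
from collections import Counter
from datetime import datetime, timezone


def bucket_start(ts: datetime, interval_seconds: int) -> datetime:
    """Round a timestamp down to the start of its time bucket (UTC)."""
    epoch = int(ts.timestamp())
    return datetime.fromtimestamp(epoch - epoch % interval_seconds, tz=timezone.utc)


def roll_up(events, interval_seconds: int) -> Counter:
    """Aggregate raw (app_id, timestamp, query) events into per-bucket counts.

    The key (app_id, bucket, query) plays the role of a roll-up table row;
    app_id stands in for an assumed tenant/distribution column.
    """
    counts = Counter()
    for app_id, ts, query in events:
        counts[(app_id, bucket_start(ts, interval_seconds), query)] += 1
    return counts


def top_queries(rollup: Counter, app_id: str, start: datetime, end: datetime, limit: int = 10):
    """Answer an analytics query from the roll-up only, never from raw events."""
    totals = Counter()
    for (row_app, bucket, query), n in rollup.items():
        if row_app == app_id and start <= bucket < end:
            totals[query] += n
    return totals.most_common(limit)
```

Calling `roll_up(events, 300)` would produce five-minute buckets, and the same events could be rolled up again with a larger interval (for example `86400` for daily buckets) to serve queries over longer time ranges.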