Correlation Analysis Modeling Use Case - IBM Power Systems

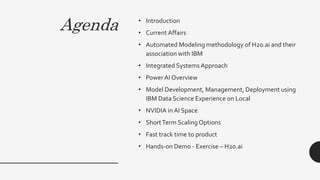

- 1. Agenda • Introduction • Current Affairs • Automated Modeling methodology of H2O.ai and their association with IBM • Integrated Systems Approach • PowerAI Overview • Model Development, Management, Deployment using IBM Data Science Experience on Local • NVIDIA in AI Space • Short Term Scaling Options • Fast track time to product • Hands-on Demo - Exercise – H2O.ai

- 2. Introductions Network with IBM team present at the event Discuss a ‘Use Case’ Extend a LinkedIn Invite Connect on twitter • Technical team Leader • Project head • Speaker • Speaker

- 3. Current Affairs • PowerAI: The World’s Fastest Deep Learning Solution Among Leading Enterprise Servers • Deep Learning Software Distro • Evaluating Julia for Deep Learning on Power + NVIDIA Tesla

- 4. Automated Modeling methodology of H2O.ai and their association with IBM • Technology leader with most completeness of vision • H2O.ai customers gave the highest overall score

- 5. Integrated Systems Approach (On Premises SaaS Platform): H2O Driverless AI Complements IBM PowerAI & Vision • IBM PowerAI is Automatic Deep Learning • H2O Driverless AI is Automatic Machine Learning • Data types shown: Sensor, Log, Transactional, Image

- 6. Model Development, Management, Deployment using IBM Data Science Experience on Local (DSX Local): Winning with Data Science • Key features: • Available for Anaconda 2.7, Anaconda 3.5 and Anaconda plus Spark • Runs on Hortonworks Data Platform Hadoop • SPSS for DSX: Visual Productivity for Data Science • Decision Optimization (DO) for DSX: Transform insights from ML into actions • Model Management and Deployment: Accelerate time to production • Deploy Python, R, & Spark models online or batch • Track model accuracy and schedule evaluations • Load-balancing and auto-scaling support • DSX Local: a platform for enterprise data science teams ... for individuals and for teams (Notebooks, SPSS for DSX, DO for DSX)

- 7. IBM Data Science Experience Local – Leadership Position Forrester BARC

- 8. Fast track time to product: the utility leverages different types of deployments; options for Digital Transformation • Watson & AI • Analytics • IoT • Machine Learning • Multi-Cloud • Hybrid • ___aaS • DevOps • CDM • Agile Workflow • Automation & Orchestration • Modernization & Transformation • Containerization • Private Cloud

- 9. Artificial Intelligence on Power Infrastructure: flattening the deep learning time to value curve • A single binary download of the most ubiquitous open source AI and major deep learning framework packages, all precompiled with all of the supporting software that they require to run • Enterprise Software Distribution: PowerAI S/W • Support Tools for Ease of Development: PowerAI VISION; Apache-Spark Data ETL and Preparation Tool; DL Insight Automation & Modeling • Distributed Deep Learning • Large Model Support • Enterprise Deep Learning Distribution: PowerAI is the “Red Hat” of Deep Learning (simplified deployment, integrated support, excellent performance) • Safe and Secure: On Premises Installation; Easy integration; Supports Several APIs; Embedded Safety & Security features

- 10. GoogLeNet – 1000 epochs (lower is better) • 4x Tesla V100 GPUs over PCIe3: 9709 seconds • 4x Tesla V100 GPUs over NVLink 2.0: 2622 seconds (3.8x faster) • Critical capabilities (regression, nearest neighbor, recommendation systems, +++) operate on more than just the GPU memory • Use server and GPU memory to support higher resolution data by moving large amounts of data between the CPU and GPU • PowerAI automatically enables seamless use of server and GPU memory • NVLink 2.0 and POWER9 significantly cut training times and boost performance (accuracy) of the model with higher resolution data • POWER9 delivers 3.8x reduction in AI training with the same NVIDIA GPU • train more | build more | know more • Benchmark details in speaker notes.
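
As a quick check on the headline figure, the two training times printed on the slide can be turned into a speedup ratio directly. The sketch below uses only those two numbers; the variable names are illustrative, and the benchmark details behind the slide are not reproduced here.

```r
# Training times as printed on the slide, in seconds.
pcie_seconds   <- 9709  # 4x Tesla V100 over PCIe3
nvlink_seconds <- 2622  # 4x Tesla V100 over NVLink 2.0

# Speedup is the ratio of the slower time to the faster time.
speedup <- pcie_seconds / nvlink_seconds

# Share of wall-clock training time saved by the faster system.
time_saved_pct <- 100 * (1 - nvlink_seconds / pcie_seconds)

cat(sprintf("Speedup: %.2fx\n", speedup))
cat(sprintf("Training time saved: %.1f%%\n", time_saved_pct))
```

From these two rounded timings the ratio comes out at about 3.7, which is the figure quoted in the speaker notes and slightly below the 3.8x headline.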

- 11. Benchmark summary: 3.7x • 2.3x • 3.8x (train more | build more | know more)

- 12. Short Term Scaling Options: If you don’t want to pay for on-premises systems, you can scale out using the Nutanix components in the IBM Hyperconverged System. • Nutanix Acropolis - Pro: Turnkey infrastructure platform that converges compute, storage, networking and virtualization to run any application, at any scale. A powerful scale-out data fabric for server, storage, virtualization and networking. (Ultimate Edition available as add-on.) • Nutanix Prism - Starter: Comprehensive application and infrastructure management solution that radically simplifies datacenter operations. Simplify infrastructure management with one-click operations. (Pro Edition available sometime after GA … but soon.)

- 13. NVIDIA, Tech Chip Company: “A giant leap”, taking AI from video games to autonomous cars • Partners with IBM; known for its graphics processing units (GPUs) on desktops; key element: Pascal GPU • Making an impact in the driverless/autonomous driving space • Accelerated computing: NVIDIA GPUs enable NVLink technology on Power, which is a huge edge

- 14. EXAMPLE – BUILDING, IMPLEMENTING AND DEPLOYING MODELS CASE STUDY ON CRIME & ITS IMPACT ON SOCIETY

- 15. Case Study Question: Do people with good financial standing, a higher education level, and a steady job commit fewer crimes, and do uneducated or poor people commit more crime? • Data Source: Communities and Crime Un-normalized Data Set • Website: http://archive.ics.uci.edu/ml/machine-learning-databases/00211/CommViolPredUnnormalizedData.txt • Total Observations: 2215 • Total Variables: 147

- 16. Extracted PctLess9thGrade and PctNotHSGrad, with exterior parameters related to employment and poverty

  | Variable | Format | Min | Max | Description |
  | --- | --- | --- | --- | --- |
  | PctWWage | Num | 31.68 | 96.76 | Percentage of households with wage or salary income in 1989 |
  | PctPopUnderPov | Num | 0.64 | 58 | Percentage of people under the poverty level |
  | PctLess9thGrade | Num | 0.2 | 49.89 | Percentage of people 25 and over with less than a 9th grade education |
  | PctNotHSGrad | Num | 1.46 | 73.66 | Percentage of people 25 and over that are not high school graduates |
  | PctUnemployed | Num | 1.32 | 31.23 | Percentage of people 16 and over, in the labor force, and unemployed |
  | PctEmploy | Num | 24.82 | 84.67 | Percentage of people 16 and over who are employed |

- 17. Quantitative Exploration

  | Variable | Mean | Median | Comments |
  | --- | --- | --- | --- |
  | PctLess9thGrade | 9.186646 | 7.74 | Will be used in the model to calculate the education level |
  | PctNotHSGrad | 22.30512 | 21.38 | Will be used in the model to calculate the education level |
  | PctUnemployed | 6.045242 | 5.45 | Exterior Element |
  | PctEmploy | 62.02161 | 62.44 | Exterior Element |
  | PctPopUnderPov | 11.62054 | 9.33 | Will be used as the dependent variable |
  | Crime | 1081.1729 | 150 | Sum of the Crime |
  | robberies | 237.9521 | 19 | Exterior Element |
  | autoTheft | 516.6926 | 75 | Exterior Element |
  | assaults | 326.5282 | 56 | Exterior Element |
  | Community | 65.58753 | 27 | Removed from the analysis due to data scarcity |
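
A mean/median table like this one can be produced with a few lines of base R. The following is a minimal sketch on a small made-up data frame (the `toy` values and the `summarize_vars` helper are hypothetical, not the deck's data or code); with the real data the same helper would be applied to the selected columns of the crime data frame.

```r
# Hypothetical toy values; the real columns come from the UCI file.
toy <- data.frame(
  PctUnemployed  = c(2.5, 4.0, 5.5, 7.0, 11.0),
  PctPopUnderPov = c(3.0, 6.0, 9.0, 12.0, 30.0)
)

# Build one row per variable with its mean and median, ignoring missing values.
summarize_vars <- function(df) {
  data.frame(
    Variable  = names(df),
    Mean      = vapply(df, mean,   numeric(1), na.rm = TRUE),
    Median    = vapply(df, median, numeric(1), na.rm = TRUE),
    row.names = NULL
  )
}

stats <- summarize_vars(toy)
print(stats)
```

A mean well above the median, as for the crime counts in the table, points to a right-skewed variable, which is one reason to examine normality before modeling.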

- 18. Outliers and Data Transformation • Dropped 9 outliers from population • Dropped 2 outliers with population > 50,000 and communities > 400 • Examined the normality of the data • PctLess9thGrade and PctNotHSGrad were transformed to improve their normality • Normal distribution plots: pctWWage, MedOwnCostPctIncNoMtg, Violent Crimes, PctPopUnderPov
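
The slide does not say which transformation was applied, so the sketch below assumes a log transform purely for illustration. It uses deterministic made-up data (`toy`), a hypothetical `skewness` helper, and the 50,000 population cutoff mentioned on the slide.

```r
# Made-up data: 50 right-skewed education percentages plus one extreme population row.
z <- qnorm(ppoints(50))
toy <- data.frame(
  population      = c(seq(10000, 45000, length.out = 50), 7300000),
  PctLess9thGrade = c(exp(2 + 0.6 * z), 9)
)

# Drop rows above the population cutoff named on the slide.
kept <- toy[toy$population <= 50000, ]

# Assumed transformation: natural log (the deck does not state which one was used).
kept$logPctLess9thGrade <- log(kept$PctLess9thGrade)

# Moment-based sample skewness; values near 0 indicate a symmetric distribution.
skewness <- function(x) {
  d <- x - mean(x)
  mean(d^3) / mean(d^2)^1.5
}

skew <- c(raw    = skewness(kept$PctLess9thGrade),
          logged = skewness(kept$logPctLess9thGrade))
print(round(skew, 3))

# Shapiro-Wilk test as one way to examine normality after the transform.
print(shapiro.test(kept$logPctLess9thGrade))
```

Comparing skewness before and after is a simple way to confirm that a transformation actually moved the variable closer to a normal shape.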

- 19. Correlation Analysis • The only exterior factor highly correlated with crimes such as burglary is the share of people living under the poverty level • The share of people with less than a 9th grade education, and the share who are not high school graduates, also correlate positively, at a low level, with various types of crime • Wages and employment are negatively correlated with crime

  | | population | pctWWage | PctEmploy | PctPopUnderPov | PctLess9thGrade | PctNotHSGrad |
  | --- | --- | --- | --- | --- | --- | --- |
  | population | 1.00000000 | -0.01273487 | -0.02246343 | 0.09537704 | 0.04318251 | 0.05564877 |
  | pctWWage | -0.01273487 | 1.00000000 | 0.87065844 | -0.52248347 | -0.43352533 | -0.54723404 |
  | PctEmploy | -0.02246343 | 0.87065844 | 1.00000000 | -0.70090092 | -0.53131695 | -0.61725106 |
  | PctPopUnderPov | 0.09537704 | -0.52248347 | -0.70090092 | 1.00000000 | 0.64238395 | 0.66442624 |
  | PctLess9thGrade | 0.04318251 | -0.43352533 | -0.53131695 | 0.64238395 | 1.00000000 | 0.92756033 |
  | PctNotHSGrad | 0.05564877 | -0.54723404 | -0.61725106 | 0.66442624 | 0.92756033 | 1.00000000 |
  | robberies | 0.24168197 | -0.25706104 | -0.30391282 | 0.48295302 | 0.32251613 | 0.41247479 |
  | burglaries | 0.10177926 | -0.25440430 | -0.25898625 | 0.41750058 | 0.21035835 | 0.30832998 |
  | autoTheft | 0.95461771 | -0.03632701 | -0.04477308 | 0.09044789 | 0.05807327 | 0.07301789 |
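
A correlation matrix of this kind comes from `cor()` applied to numeric columns only. The sketch below uses a tiny made-up data frame (`toy`) rather than the deck's data; it shows two practical details, dropping non-numeric columns and handling missing values pairwise, that a bare `cor()` call on the full data frame would trip over.

```r
# Made-up example rows; column names mirror the deck, values do not.
toy <- data.frame(
  communityname  = c("A", "B", "C", "D", "E", "F"),  # non-numeric, must be excluded
  PctEmploy      = c(70, 65, 60, 55, 50, 45),
  PctPopUnderPov = c(5, 8, NA, 15, 20, 26),          # one missing value
  burglaries     = c(20, 35, 40, 60, 85, 90)
)

# Keep only numeric columns, since cor() rejects character data.
numeric_cols <- toy[, sapply(toy, is.numeric), drop = FALSE]

# Pearson correlations, using every pair of rows where both values are present.
corr <- cor(numeric_cols, use = "pairwise.complete.obs", method = "pearson")
print(round(corr, 3))
```

With the full data set, `use = "pairwise.complete.obs"` keeps as many observations as possible per pair, at the cost of each cell being based on a slightly different subset of rows.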

- 20. Demographics & Crime Correlation • Positively correlated with the crime rate: people who live under poverty are more likely to be involved in burglaries; the percentage of adults with less than a 9th grade education has a high tendency to commit crime • Negatively correlated with the crime rate: people who are employed; people who make good wages; the percentage of people 65 and older in the population • 1) Correlation plots to visualize the relationship between each independent variable and the dependent variable

- 21. 2) crime rate against each variable group to visualize the correlation violent vs. population NonViolentCrime vs. population • no significant correlation between population and crime rate

- 22. 3) crime rate against each variable group to visualize the correlation violent vs. Education • The more educated, the lower crime rate NonViolentCrime vs. Education

- 23. 4) Crime rate against each variable group to visualize the correlation: violent vs. PctUnderPoverty • Poor people commit more crime

- 24. Data Description • Communities and Crime Un-normalized Dataset • Over 2215 observations and 147 attributes collected between 1995 and 2011 • We selected 13 major independent variables for our project • Dataset cleaning process: plot each independent variable, eliminate outliers, bring each variable close to a normal distribution • Omit “Crime Per Capita” because it has missing data • We eventually obtained 2011 observations for non-violent crime and 1894 observations for violent crime.

- 25. Methods • Principal Component Analysis & Regression: use an orthogonal transformation to convert a set of observations of possibly correlated variables into a set of values of linearly uncorrelated variables • Benefits: 1) Able to evaluate and summarize a dataset before implementing a statistical algorithm to evaluate the results 2) Able to decompose high dimensional data to extract the main feature components of the data • The lm command consumes a lot of processing; Power infrastructure makes sure that your model runs and produces results in time.
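
To make the method concrete, here is a minimal principal component regression sketch in base R: `prcomp()` produces uncorrelated components, and `lm()` then regresses the outcome on the leading one. The data are simulated (`toy`, driven by a made-up `latent` factor) and the choice of a single component is an assumption for illustration; it is not the deck's actual model.

```r
set.seed(42)
n <- 200
latent <- rnorm(n)  # made-up shared driver behind the correlated predictors

# Simulated, strongly correlated predictors plus a simulated outcome.
toy <- data.frame(
  PctPopUnderPov  = 12 + 5 * latent + rnorm(n),
  PctNotHSGrad    = 22 + 8 * latent + rnorm(n, sd = 2),
  PctLess9thGrade = 9  + 4 * latent + rnorm(n)
)
toy$crime <- 500 + 80 * latent + rnorm(n, sd = 30)

# PCA on centered, scaled predictors: an orthogonal transformation
# into linearly uncorrelated components.
predictors <- toy[, c("PctPopUnderPov", "PctNotHSGrad", "PctLess9thGrade")]
pca <- prcomp(predictors, center = TRUE, scale. = TRUE)
importance <- summary(pca)$importance
print(round(importance, 3))

# Regress the outcome on the first principal component only.
pcs <- as.data.frame(pca$x)
pcs$crime <- toy$crime
fit <- lm(crime ~ PC1, data = pcs)
r_squared <- summary(fit)$r.squared
print(r_squared)
```

Because the components are uncorrelated, the regression avoids the multicollinearity that the raw education and poverty variables would introduce (their pairwise correlations in the deck reach 0.93).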

- 26. Conclusion • Effective factors for crime: population, education, age, income, poverty • Certain areas do have a greater rate of crime than the national average • People who are poor commit more crime in certain regions • Crime statistics in these towns differ from those of other cities • Crime influences the legal system through new laws, laws that impact the life of the poor, especially those in vulnerable areas • One must be careful when using crime data to form the basis of new laws • There is further scope for this research, as the variables contributing to the crime attribute are vast

- 27. Brainstorming: Neural Networks and Capsule Networks • Neural Networks: artificial intelligence leverages neural networks to solve subsets of general intelligence problems, including perception, computer vision, prediction analysis, knowledge representation, intelligent agents, NLP, automated reasoning, AI planning (DFS is one approach to solve this), and interfaces for control systems. • Model fit / Optimization / Analytics and Simulation: real-time decision making; autonomous agents that make decisions in less time and provide support to order actions. It is hard to put a box around any particular problem; individuals are needed for the areas that cannot be modeled accurately, and it would be useful to know which areas those are. • Discussions on deployment and continuous learning: how to monitor and manage models that could go to production. Underlying the learning track for modern data platforms, big data analytics and open source. • Illustrating the descriptive and diagnostic approach: the value in data science lies not just in building models, but in supporting decision making and automating it with linear programming type approaches; the initial descriptive and diagnostic effort is tremendously valuable. • Capsule Network: when multiple predictions agree, a higher level capsule becomes active.

- 28. Thank you

Editor's Notes

- Recognized for the mindshare, partner network and status as a quasi-industry standard for machine learning and AI. H2O.ai customers gave the highest overall score among all the vendors for sales relationship and account management, customer support (onboarding, troubleshooting, etc.) and overall service and support.

- Anaconda: what version? What’s inside Data Science Experience? Show all the capabilities; show the model management and deployment stage and its different phases; fed by preliminary engineering, supported by SPSS. More of a cluster integration. Cloudera Data Science Workbench: under evaluation for about a year.

- Why is it valuable? The answer should be in the title. Why is containerization OK? Why private cloud? Because you are a utility. Include Digital Transformation in the title. Automation tends to take the learning curve out of the equation: deploy faster, without wanting to be an expert in everything. How can we enable the transition of work from an experimental, POC-type setting into production? Represent how technologies can help overcome the pain points. Teams won’t necessarily have a lot of skills, so deployment should not require a full skill base. It's important to recognize what's actually driving this need for storage data services. It's well-recognized that organizations today are all looking to build more competitive, profitable businesses. And they can outmaneuver their competition by exploiting the data within their organizations and developing new solutions that drive more competitiveness within their business. Your clients are all on a journey. Every organization wants to be leveraging things like analytics and artificial intelligence. They want to take advantage of Watson, but not a lot of clients are there yet. Some of that may be, in part, due to the fact that they don't have all the right underlying components that are needed. They don't have the right infrastructure components to help them build an agile workflow that, for example, can enable a DevOps environment to build new competitive solutions. Storage Data Services is really about the ability to serve up data to the organization in a way that works for every line of business, such that they can meet their marketing and business demands.

- Put security in the slide. Use numbers and comparisons; more quantifiable information. Enterprise Software Distribution. PowerAI: Available now, a single binary download of the most ubiquitous open source AI and major deep learning framework packages that are all precompiled with all of the supporting software that they require to run. Enterprise Support: Bid now, GA 4Q, L1-L3 support through TSS. Productivity Tools. PowerAI VISION: Tech Eval Aug 7th, GA 2Q 2018. This is a custom application development tool aimed at computer vision workloads. AI Vision enables application developers with little or no experience with DL to build a trained deep learning model for different input data sets. Users can input an image data set, and AI Vision will select the best possible model and which framework to use, and determine the best way to tune it. Apache-Spark Data ETL and Preparation Tool: Tech Eval now, GA 4Q. PowerAI includes IBM Spectrum Conductor with Spark with a GUI-based set of tools that enable the data scientist to create functions that transform an input data set to the format required by frameworks like TensorFlow or Caffe, making it a cinch to match your data set to your framework. DL Insight: Tech Eval Aug 7th, GA 4Q. Model tuning software that automatically tunes hyper-parameters for models based on input data sets using Spark-based distributed computing. We enhanced IBM Spectrum Conductor with Spark (Tech Eval Aug 7th, GA 2Q ’18) so that it automatically launches multiple model training runs with different hyper-parameters using a subset of the data. It then monitors the training progress and searches for and identifies the best hyper-parameters using several different search methods such as random and Bayesian search. Performance. Distributed Deep Learning: Tech Eval Aug 7th, GA 2Q ’18. To accelerate the training time, we are adding methods to scale a single training job across a cluster of servers. We have both a Message Passing Interface (MPI) based scaling approach (a message passing system for parallel computing architectures), inspired by high-performance computing methods, as well as a Spark and HPC converged distributed computing model, for clusters with either an Ethernet or InfiniBand network. Large Model Support: Tech Eval Aug 7th, GA 2Q ’18. One of the challenges our customers are facing is that they are limited by the size of memory available within GPUs. Today, when data scientists develop a deep learning workload, the structure of matrices in the neural model, and the data elements which train the model (in a batch), must sit within the memory on the GPU; today that space is mostly limited to ~16GB. Efficiency: reduced training time allows more iterations and improved models. Improved accuracy: ability to use larger data sets.
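
The hyper-parameter search described for DL Insight can be illustrated in miniature. The sketch below is plain random search over two hyper-parameters of a small polynomial ridge regression on simulated data; it is a generic illustration of the idea under stated assumptions, not DL Insight itself, and every name in it (`fit_ridge`, `predict_ridge`, `val_mse`, `trials`) is hypothetical.

```r
set.seed(7)
n <- 120
x <- runif(n, -3, 3)
y <- sin(x) + rnorm(n, sd = 0.3)  # simulated data
train <- sample(n, 80)            # remaining 40 points form the validation set

# Polynomial ridge regression fitted in closed form.
fit_ridge <- function(x, y, degree, lambda) {
  X <- outer(x, 0:degree, "^")                # columns: x^0, x^1, ..., x^degree
  penalty <- diag(c(0, rep(lambda, degree)))  # intercept left unpenalized
  solve(crossprod(X) + penalty, crossprod(X, y))
}

predict_ridge <- function(beta, x) {
  drop(outer(x, 0:(length(beta) - 1), "^") %*% beta)
}

# Validation error for one hyper-parameter setting.
val_mse <- function(degree, lambda) {
  beta <- fit_ridge(x[train], y[train], degree, lambda)
  mean((y[-train] - predict_ridge(beta, x[-train]))^2)
}

# Random search: sample settings independently, evaluate each, keep the best.
n_trials <- 30
trials <- data.frame(
  degree = sample(1:6, n_trials, replace = TRUE),
  lambda = 10^runif(n_trials, -4, 1)  # log-uniform between 1e-4 and 10
)
trials$mse <- mapply(val_mse, trials$degree, trials$lambda)
best <- trials[which.min(trials$mse), ]
print(best)
```

Each trial is independent of the others, which is what makes this kind of search easy to spread across a cluster; Bayesian search instead uses earlier results to choose the next setting.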

- Now that we know what the software can do, we can talk about hardware: at what scale does this really matter? This slide shows an example of the benefits that software features such as large model support (LMS, detailed on the last slide) can deliver to practitioners building deep neural networks. The goal of this test? Use system memory and GPU memory to support more complex and higher resolution data. From a business perspective, IBM was showcasing how to maximize productivity when training a CNN for medical/satellite image detection with Chainer on POWER9 with NVIDIA V100 GPUs. As you can see, the IBM AC922 infrastructure with POWER9 processing cores and NVLink 2.0 (which you now know enables enhanced server CPU-GPU communications) demonstrated a 3.8x reduction in training times vs. a tested x86 system (across 1000 epochs). Specifically, in this test, IBM ran 1000 epochs (mini-batch size=5) of Google’s GoogLeNet model (the neural network that was used to win the ImageNet 2014 computer vision competition) using the Enlarged ImageNet data set (its resolution was 240 x 240 and it used the RGB color channels). This test moves large amounts of data between the CPU and the GPU, and in the end the IBM POWER9 system reduced the training time for this model by 3.8x. What could you do with those 3.7x faster training times? Perhaps you iterate on the model another ~4 times (or 4000 more epochs for greater performance of the model), or perhaps you deploy 5 models instead of a single one, each trained for 1000 epochs. You decide! For this test, IBM used a Power AC922 server that had 40 POWER9 cores (2 sockets x 20 cores) running at 2.25 GHz; the server comes with NVLink 2.0 supporting 4 Tesla V100 GPUs and 1024 GB memory. The competitive server had 2 Intel Xeon E5-2640 v4 chips for a total of 20 cores (2 sockets x 10 cores) running at 2.4 GHz and dispatching 40 threads; that server uses PCIe 3 because Intel does not support NVLink 2.0 communications between CPUs and GPUs. The server had 1024 GB of RAM and the same 4 Tesla V100 GPUs. Each server was running Ubuntu Linux 16.04 and used the Chainer framework (Chainer v3). The LMS/Out of Core with CUDA 9 / cuDNN 7 patches can be downloaded at: https://github.com/cupy/cupy/pull/694 and https://github.com/chainer/chainer/pull/3762. More details, or things to know: ImageNet is an image database organized according to the WordNet hierarchy (currently only the nouns), in which each node of the hierarchy is depicted by hundreds and thousands of images. Currently it has an average of over 500 images per node. You can learn more about ImageNet at: http://www.image-net.org/. The GoogLeNet project was one of the winning teams in the 2014 ImageNet large-scale visual recognition challenge (ILSVRC), an annual competition to measure improvements in machine vision technology, based on the ImageNet library.

- It’s simple really: enterprise AI requires new infrastructure that enables the cutting-edge AI innovation that data scientists desire, with the dependability IT requires. IBM Power Systems AC922 offers the fastest way to deploy accelerated databases and deep learning frameworks, with enterprise-class support. You will hear IBM talk about systems like “Coral” and “Summit”; they’re expected to be the world’s most powerful supercomputers in 2018 (built on POWER9). But supercomputing deployments like “Summit” represent only 10% of the technical and high performance computing (HPC) opportunities, as commercial clients deploy deep learning techniques as a foundation for embedding AI into business processes. Well, that’s exactly what the AC922 is all about: bringing supercomputer scaling for deep learning to everyone. This slide shows a summary of some of the everyday deep learning projects clients are challenged with and how the AC922 can help them train their neural nets faster. There are slides in this deck that delve into the details of each of these benchmarks; you can choose to use this slide as an introduction to those detailed slides, or remove the detailed slides that follow and just use this as a statement slide.

- There are two foundational management and provisioning products in an IBM Hyperconverged Solution powered by Nutanix: Nutanix Acropolis, and Nutanix Prism. Nutanix Prism (Prism) is the control plane that simplifies datacenter operations by providing a single pane of glass to manage compute, storage and virtualization and offering rich automation and operational insights. Prism simplifies infrastructure management with one-click operations. With its consumer-grade interface, Prism provides an end-to-end management solution for virtualized datacenter environments. It streamlines and automates common workflows and eliminates the need for multiple management solutions across datacenter operations. Powered by advanced machine learning technology, Prism analyzes system data to generate actionable insights, optimizing virtualization and infrastructure management. Prism is available in two editions: Starter and Pro. The IBM Hyperconverged Systems powered by Nutanix comes with the Starter Edition and includes Prism Central for multiple cluster management. Prism Pro (which is not included in the IBM Hyperconverged Systems powered by Nutanix offering at GA 1 but is in the roadmap as an add-on subscription) includes customizable dashboards, as well as one-click capacity planning via capacity behavioral analytics (to enable predictive analysis of capacity usage and trends based on workload behavior, enabling pay-as-you-grow scaling), expansion recommendations, efficiency evaluation, a capacity optimization advisor (infrastructure optimization recommendations to improve efficiency and performance) and just-in-time forecasting (capacity expansion forecast to meet future workload growth). Learn more about the differences between Prism Starter and Prism Pro at: https://www.nutanix.com/products/prism/. Nutanix Acropolis (Acropolis) is a powerful scale-out data fabric for server, storage, virtualization and networking. 
With its ability to run any application at any scale with a turnkey hyperconverged infrastructure solution, it’s become the industry’s leading turnkey infrastructure platform that delivers enterprise-class storage, compute and virtualization services for any application. Acropolis offers IT professionals uncompromising flexibility of where to run their applications, providing a path to freely choose the best virtualization technology for their organization – whether it is traditional hypervisors, emerging hypervisors or containers. For the first time, infrastructure decisions can be made entirely based on the performance, economics, scalability and resiliency requirements of the application, while allowing workloads to move seamlessly without penalty. Acropolis is different from traditional approaches to virtualization because converged storage and virtualization stack eliminates the bloat of legacy standalone hypervisors and makes virtualization invisible. The Acropolis Hypervisor is purpose-built to run on intelligent storage that understands virtualization and provides data services such as snapshots, clones, provisioning, operations and data protection at VM granularity. As a result, the hypervisor can be made leaner and focus on delivering secure virtual computing. IBM Hyperconverged Systems powered by Nutanix ships with Acropolis Pro. The Pro edition offers rich data services and resilience and management features for enterprises running multiple applications on a Nutanix cluster or with large-scale single workload deployments. The Ultimate edition is for enterprise customers looking to unleash the full potential of Nutanix to tackle infrastructure challenges. This edition is ideal for multi-site deployments and for advanced security requirements; for example you can manage multi-site disaster recovery (DR) deployments using Acropolis Ultimate. 
You can see all the differences between the Acropolis editions (Starter, Pro, and Ultimate) by reading the Acropolis data sheet: http://www.webscaleworks.com/datasheets/software-editions-solution-brief_.pdf. Nutanix Acropolis comprises three foundational components: 1. Distributed Storage Fabric: Enterprise data storage delivered as an on-demand service by employing a highly distributed software architecture. Nutanix eliminates the need for traditional SAN and NAS solutions, and delivers a rich set of VM-centric software-defined services, including snapshots, clones, high availability, disaster recovery, deduplication, compression, erasure coding storage optimization and more. 2. App Mobility Fabric: A newly-designed open environment capable of delivering intelligent VM placement, VM migration, and VM conversion across hypervisors and clouds, as well as cross-hypervisor high availability and integrated disaster recovery. Acropolis supports all virtualized applications, and will provide a seamless path to containers and hybrid cloud computing. 3. Acropolis Hypervisor (AHV): Its Distributed Storage Fabric includes a native hypervisor based on the proven Linux KVM hypervisor. With enhanced security, self-healing capabilities based on SaltStack and enterprise-grade VM management, Acropolis Hypervisor delivers the best overall user experience at the lowest TCO and will be the first hypervisor to plug into the App Mobility Fabric. In summary, Nutanix Acropolis combines feature-rich software-defined storage with built-in virtualization in a turnkey hyperconverged infrastructure solution that can be deployed out-of-the-box in 60 minutes or less. Eliminate the need for standalone SAN or NAS-based storage, reduce the complexity of legacy virtualization management and lower virtualization costs by up to 80%. 
You can learn all about the details of Acropolis by reading “Hyperconverged Infrastructure: The Definite Guide” at https://www.nutanix.com/go/what-is-nutanix-hyperconverged-infrastructure.html. The foundational components effectively deliver an integrated management of physical and virtual infrastructure, end-to-end operations for any workload at any scale, with unfettered application mobility and up to 80% lower virtualization costs.

- scatterplot(CrimeData$population~CrimeData$community)

- cor(CrimeData)

-     > cor.test(CrimeData$violentcrimes, CrimeData$population)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$population
      t = 15.292, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.2709899 0.3463393
      sample estimates:
            cor
      0.3091497

      > cor.test(CrimeData$violentcrimes, CrimeData$PctLess9thGrade)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$PctLess9thGrade
      t = 14.175, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.2498661 0.3262414
      sample estimates:
            cor
      0.2885126

      > cor.test(CrimeData$violentcrimes, CrimeData$PctPopUnderPov)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$PctPopUnderPov
      t = 20.401, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.3622210 0.4323523
      sample estimates:
            cor
      0.3978677
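
The numbers in this output are linked by standard formulas, so they can be re-derived from the reported correlation and degrees of freedom alone. The sketch below takes `r` and `df` from the first test (violent crimes vs. population) and recomputes the t statistic, p-value and 95% confidence interval using the usual Pearson t-test and Fisher z-transform; the variable names are illustrative.

```r
r  <- 0.3091497  # sample correlation reported by cor.test
df <- 2213       # degrees of freedom reported by cor.test (n - 2)
n  <- df + 2

# t statistic for testing that the true correlation is zero.
t_stat <- r * sqrt(df / (1 - r^2))

# Two-sided p-value from the t distribution.
p_value <- 2 * pt(-abs(t_stat), df)

# 95% confidence interval via the Fisher z-transform.
z  <- atanh(r)
se <- 1 / sqrt(n - 3)
ci <- tanh(z + c(-1, 1) * qnorm(0.975) * se)

cat(sprintf("t = %.3f\n", t_stat))
cat(sprintf("95%% CI: %.4f to %.4f\n", ci[1], ci[2]))
```

With more than two thousand observations, even a modest correlation of about 0.31 yields a very large t statistic, which is why the p-value is reported as below 2.2e-16; statistical significance here says little about the strength of the relationship.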

-     > scatterplot(CrimeData$population ~ CrimeData$NonViolentCrime)
      > scatterplot(CrimeData$population ~ CrimeData$violentcrimes)
      > cor.test(CrimeData$NonViolentCrime, CrimeData$population)

          Pearson's product-moment correlation

      data:  CrimeData$NonViolentCrime and CrimeData$population
      t = 161.76, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.9568337 0.9633400
      sample estimates:
            cor
      0.9602169

      > cor.test(CrimeData$violentcrimes, CrimeData$population)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$population
      t = 15.292, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.2709899 0.3463393
      sample estimates:
            cor
      0.3091497

-     > scatterplot(CrimeData$violentcrimes ~ CrimeData$PctLess9thGrade)
      > scatterplot(CrimeData$NonViolentCrime ~ CrimeData$PctLess9thGrade)
      > cor.test(CrimeData$NonViolentCrime, CrimeData$PctLess9thGrade)

          Pearson's product-moment correlation

      data:  CrimeData$NonViolentCrime and CrimeData$PctLess9thGrade
      t = 2.9033, df = 2213, p-value = 0.003729
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.02000231 0.10298471
      sample estimates:
             cor
      0.06159995

      > cor.test(CrimeData$violentcrimes, CrimeData$PctLess9thGrade)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$PctLess9thGrade
      t = 14.175, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.2498661 0.3262414
      sample estimates:
            cor
      0.2885126

-     > scatterplot(CrimeData$violentcrimes ~ CrimeData$PctPopUnderPov)
      > scatterplot(CrimeData$NonViolentCrime ~ CrimeData$PctPopUnderPov)
      > cor.test(CrimeData$NonViolentCrime, CrimeData$PctPopUnderPov)

          Pearson's product-moment correlation

      data:  CrimeData$NonViolentCrime and CrimeData$PctPopUnderPov
      t = 4.7422, df = 2213, p-value = 2.249e-06
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.05889555 0.14135697
      sample estimates:
            cor
      0.1002985

      > cor.test(CrimeData$violentcrimes, CrimeData$PctPopUnderPov)

          Pearson's product-moment correlation

      data:  CrimeData$violentcrimes and CrimeData$PctPopUnderPov
      t = 20.401, df = 2213, p-value < 2.2e-16
      alternative hypothesis: true correlation is not equal to 0
      95 percent confidence interval:
       0.3622210 0.4323523
      sample estimates:
            cor
      0.3978677

-     # setInternet2(use = TRUE)  # Windows-only helper in older R releases; not needed elsewhere
      data_url <- "http://archive.ics.uci.edu/ml/machine-learning-databases/00211/CommViolPredUnnormalizedData.txt"

      # Raw read, keeping "?" markers as they appear in the file.
      crimedataraw <- read.csv(data_url, header = FALSE, sep = ",", quote = "\"",
                               dec = ".", fill = TRUE, comment.char = "")

      # Working copy: treat "?" as missing and trim surrounding whitespace.
      # stringsAsFactors is left at the R default, as default.stringsAsFactors() did.
      Crime_Dataset <- read.csv(data_url, header = FALSE, sep = ",", quote = "\"",
                                dec = ".", fill = TRUE, comment.char = "",
                                na.strings = "?", strip.white = TRUE)
      View(Crime_Dataset)

      # Column names for positions 1 to 123, in file order.
      col_names <- c(
        "county", "state", "community", "communityname", "fold",                              # 1-5
        "population", "householdsize", "racepctblack", "racePctWhite", "racePctAsian",        # 6-10
        "racePctHisp", "agePct12t21", "agePct12t29", "agePct16t24", "agePct65up",             # 11-15
        "numbUrban", "pctUrban", "medIncome", "pctWWage", "pctWFarmSelf",                     # 16-20
        "pctWInvInc", "pctWSocSec", "pctWPubAsst", "pctWRetire", "medFamInc",                 # 21-25
        "perCapInc", "whitePerCap", "blackPerCap", "indianPerCap", "AsianPerCap",             # 26-30
        "OtherPerCap", "HispPerCap", "NumUnderPov", "PctPopUnderPov", "PctLess9thGrade",      # 31-35
        "PctNotHSGrad", "PctBSorMore", "PctUnemployed", "PctEmploy", "PctEmplManu",           # 36-40
        "PctEmplProfServ", "PctOccupManu", "PctOccupMgmtProf", "MalePctDivorce", "MalePctNevMarr",  # 41-45
        "FemalePctDiv", "TotalPctDiv", "PersPerFam", "PctFam2Par", "PctKids2Par",             # 46-50
        "PctYoungKids2Par", "PctTeen2Par", "PctWorkMomYoungKids", "PctWorkMom", "NumIlleg",   # 51-55
        "PctIlleg", "NumImmig", "PctImmigRecent", "PctImmigRec5", "PctImmigRec8",             # 56-60
        "PctImmigRec10", "PctRecentImmig", "PctRecImmig5", "PctRecImmig8", "PctRecImmig10",   # 61-65
        "PctSpeakEnglOnly", "PctNotSpeakEnglWell", "PctLargHouseFam", "PctLargHouseOccup", "PersPerOccupHous",  # 66-70
        "PersPerOwnOccHous", "PersPerRentOccHous", "PctPersOwnOccup", "PctPersDenseHous", "PctHousLess3BR",     # 71-75
        "MedNumBR", "HousVacant", "PctHousOccup", "PctHousOwnOcc", "PctVacantBoarded",        # 76-80
        "PctVacMore6Mos", "MedYrHousBuilt", "PctHousNoPhone", "PctWOFullPlumb", "OwnOccLowQuart",  # 81-85
        "OwnOccMedVal", "OwnOccHiQuart", "RentLowQ", "RentMedian", "RentHighQ",               # 86-90
        "MedRent", "MedRentPctHousInc", "MedOwnCostPctInc", "MedOwnCostPctIncNoMtg", "NumInShelters",  # 91-95
        "NumStreet", "PctForeignBorn", "PctBornSameState", "PctSameHouse85", "PctSameCity85", # 96-100
        "PctSameState85", "LemasSwornFT", "LemasSwFTPerPop", "LemasSwFTFieldOps", "LemasSwFTFieldPerPop",  # 101-105
        "LemasTotalReq", "LemasTotReqPerPop", "PolicReqPerOffic", "PolicPerPop", "RacialMatchCommPol",     # 106-110
        "PctPolicWhite", "PctPolicBlack", "PctPolicHisp", "PctPolicAsian", "PctPolicMinor",   # 111-115
        "OfficAssgnDrugUnits", "NumKindsDrugsSeiz", "PolicAveOTWorked", "LandArea", "PopDens",  # 116-120
        "PctUsePubTrans", "PolicCars", "PolicOperBudg"                                        # 121-123
      )
      names(Crime_Dataset)[seq_along(col_names)] <- col_names
      names(Crime_Dataset )[124] <- 
"LemasPctPolicOnPatr"names(Crime_Dataset )[125] <- "LemasGangUnitDeploy"names(Crime_Dataset )[126] <- "LemasPctOfficDrugUn"names(Crime_Dataset )[127] <- "PolicBudgPerPop"names(Crime_Dataset )[128] <- "ViolentCrimesPerPop"names(Crime_Dataset )[130] <- "murders"names(Crime_Dataset )[132] <- "rapes"names(Crime_Dataset )[134] <- "robberies"names(Crime_Dataset )[136] <- "assaults"names(Crime_Dataset )[138] <- "burglaries"names(Crime_Dataset )[142] <- "autoTheft"names(Crime_Dataset )[146] <- "violentcrimes"names(Crime_Dataset)[148] <- "region"Crime_Dataset$region <- NAnames(Crime_Dataset)[148] <- "region"West <- c("AZ","CO","ID","NM","MT","UT","NV","WY","AK","CA","HI","OR","WA")South <- c("DE","DC","FL","GA","MD","NC","SC","VA","WV","AL","KY","MS","TN","AR","LA","OK","TX")Midwest <- c("IN","IL","MI","OH","WI","IO","NE","KS","ND","MN","SD","MO")Northeast <- c("CT","ME","MA","NH","RI","VT","NJ","NY","PA")Crime_Dataset [which(Crime_Dataset$state %in% West),148] <- "West"Crime_Dataset [which(Crime_Dataset$state %in% South),148] <- "South"Crime_Dataset [which(Crime_Dataset$state %in% Midwest),148] <- "MidWest"Crime_Dataset [which(Crime_Dataset$state %in% Northeast),148] <- "NorthEast"View(Crime_Dataset)

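The state-to-region assignments on the previous slide can also be written as a single lookup table. This is only a sketch of one possible alternative, not the presenter's code: `regions`, `region_lookup`, and the sample `states` vector are hypothetical names introduced for illustration, and "IA" is assumed as the abbreviation for Iowa.

```r
# Region membership as listed on the slide ("IA" assumed for Iowa).
regions <- list(
  West      = c("AZ","CO","ID","NM","MT","UT","NV","WY","AK","CA","HI","OR","WA"),
  South     = c("DE","DC","FL","GA","MD","NC","SC","VA","WV","AL","KY","MS","TN",
                "AR","LA","OK","TX"),
  MidWest   = c("IN","IL","MI","OH","WI","IA","NE","KS","ND","MN","SD","MO"),
  NorthEast = c("CT","ME","MA","NH","RI","VT","NJ","NY","PA")
)

# Named character vector: names are state codes, values are region labels.
region_lookup <- setNames(
  rep(names(regions), lengths(regions)),
  unlist(regions, use.names = FALSE)
)

# Hypothetical sample of state codes; "PR" is in none of the lists, so it maps to NA.
states <- c("CA", "NY", "IA", "TX", "PR")
region <- unname(region_lookup[states])
print(region)
```

Applied to the data frame, this would look like `Crime_Dataset$region <- unname(region_lookup[as.character(Crime_Dataset$state)])`. The `as.character()` call matters if `state` was read as a factor, because indexing by a factor uses its integer codes rather than its labels.
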
-     > Model <- lm(CrimeData$burglaries ~ CrimeData$PctPopUnderPov + CrimeData$PctNotHSGrad)
      > summary(Model)

      Call:
      lm(formula = CrimeData$burglaries ~ CrimeData$PctPopUnderPov + CrimeData$PctNotHSGrad)

      Residuals:
          Min      1Q  Median      3Q     Max
      -108.44  -17.45   -5.71   12.04  373.51

      Coefficients:
                               Estimate Std. Error t value Pr(>|t|)
      (Intercept)              11.37595    1.49631   7.603 4.26e-14 ***
      CrimeData$PctPopUnderPov  1.51681    0.10289  14.742  < 2e-16 ***
      CrimeData$PctNotHSGrad    0.17268    0.08052   2.144   0.0321 *
      ---
      Signif. codes:  0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

      Residual standard error: 31.12 on 2212 degrees of freedom
      Multiple R-squared:  0.176,  Adjusted R-squared:  0.1753
      F-statistic: 236.3 on 2 and 2212 DF,  p-value: < 2.2e-16
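
The fit on this slide refers to its columns as `CrimeData$...`, which makes the coefficient labels long and ties the model to one object. Below is a minimal sketch of the same kind of two-predictor model using the `data =` argument of `lm()`. It runs on synthetic data, since the slide's `CrimeData` object is not defined in the deck: the `toy` data frame and every value in it are made up for illustration, so its numbers will not match the output above.

```r
# Synthetic stand-in for the crime data (all values are invented for illustration).
set.seed(42)
n <- 200
toy <- data.frame(
  PctPopUnderPov = runif(n, 0, 50),
  PctNotHSGrad   = runif(n, 0, 60)
)
toy$burglaries <- 10 + 1.5 * toy$PctPopUnderPov +
  0.2 * toy$PctNotHSGrad + rnorm(n, sd = 30)

# Passing the data frame via `data =` keeps the coefficient names short.
fit <- lm(burglaries ~ PctPopUnderPov + PctNotHSGrad, data = toy)
fit_summary <- summary(fit)

# Pull out the pieces that the printed summary shows.
coef_table <- coef(fit_summary)          # Estimate, Std. Error, t value, Pr(>|t|)
r_squared  <- fit_summary$r.squared      # "Multiple R-squared"
adj_r2     <- fit_summary$adj.r.squared  # "Adjusted R-squared"

print(coef_table)
print(c(r_squared = r_squared, adj_r_squared = adj_r2))
```

Extracting `coef_table` and `r_squared` as objects makes it easier to compare several candidate models than reading the printed summaries side by side.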

- Illustrate it: there are a lot of different architectures out there. An illustration makes it easier to grasp why someone would want to combine these different systems. What is the next level of creativity we are gaining with CapsNet?