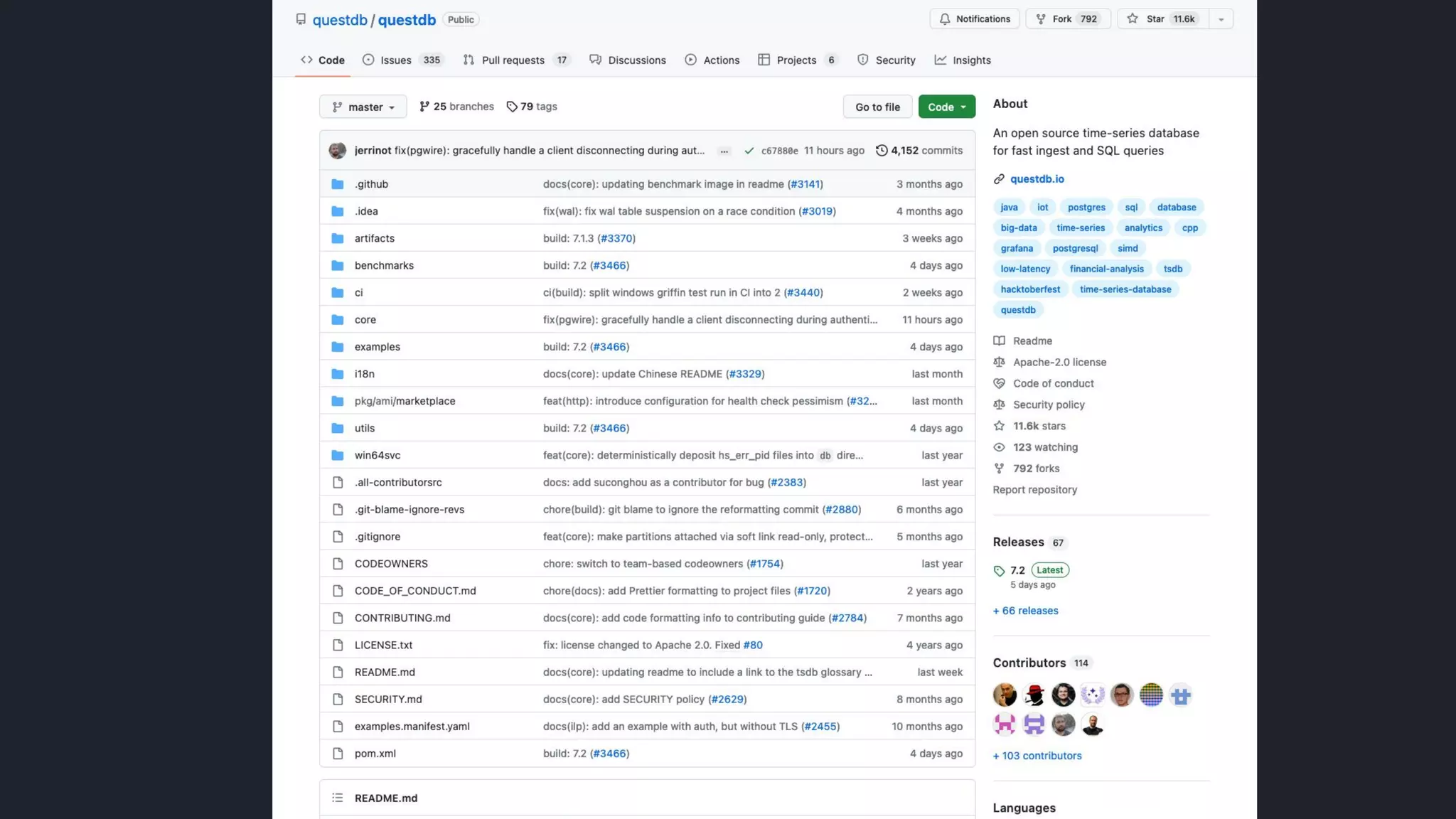

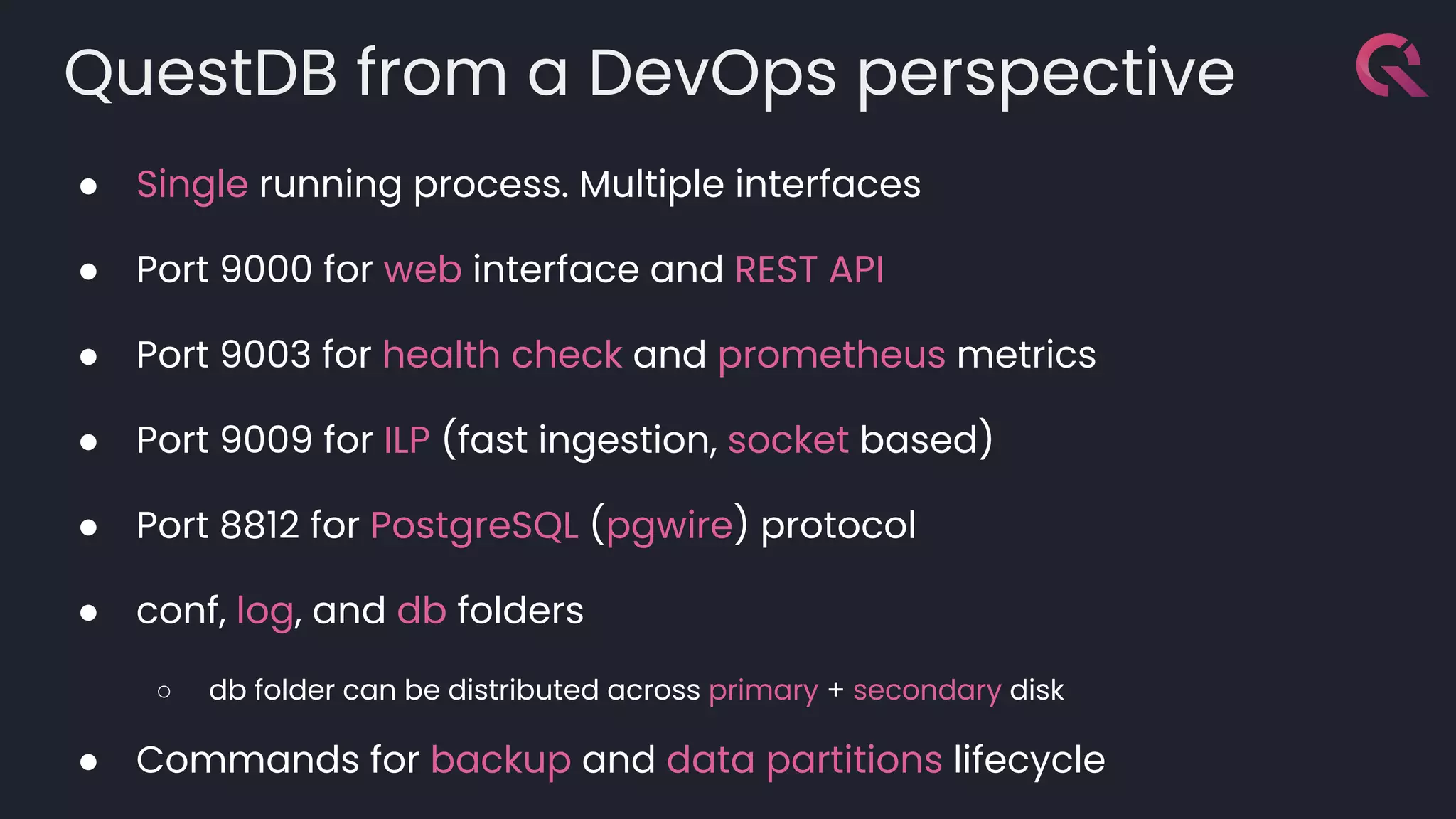

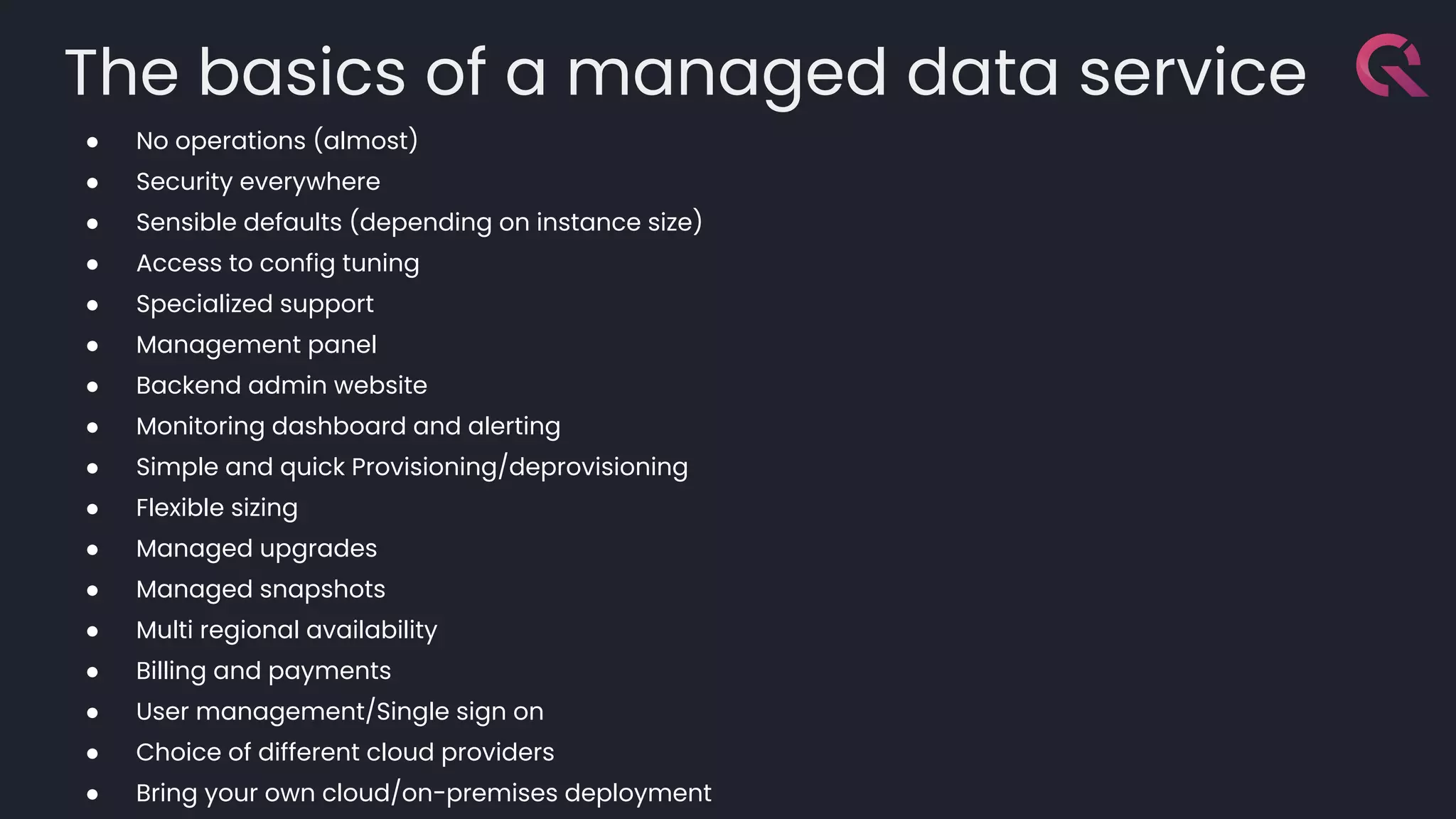

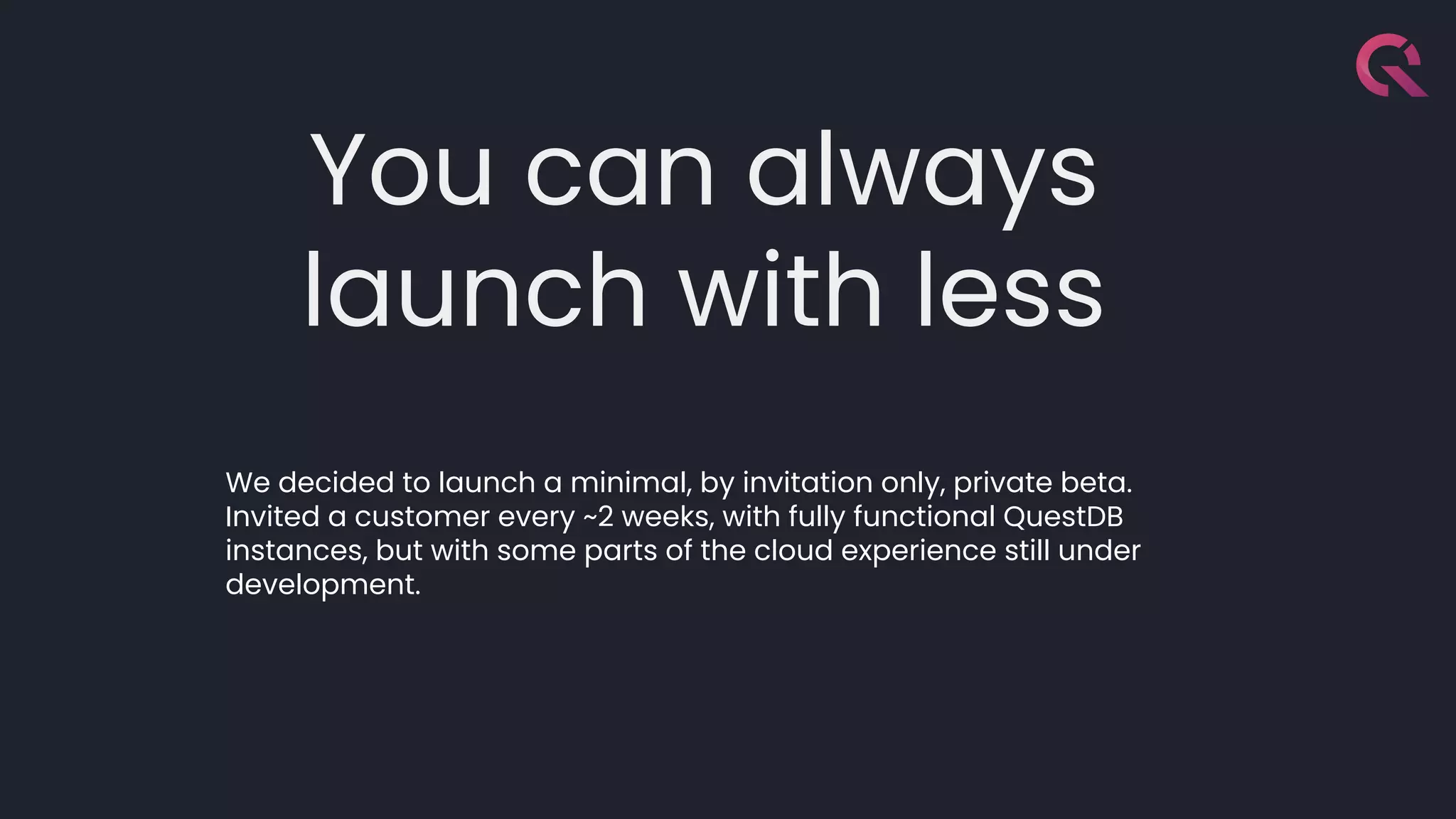

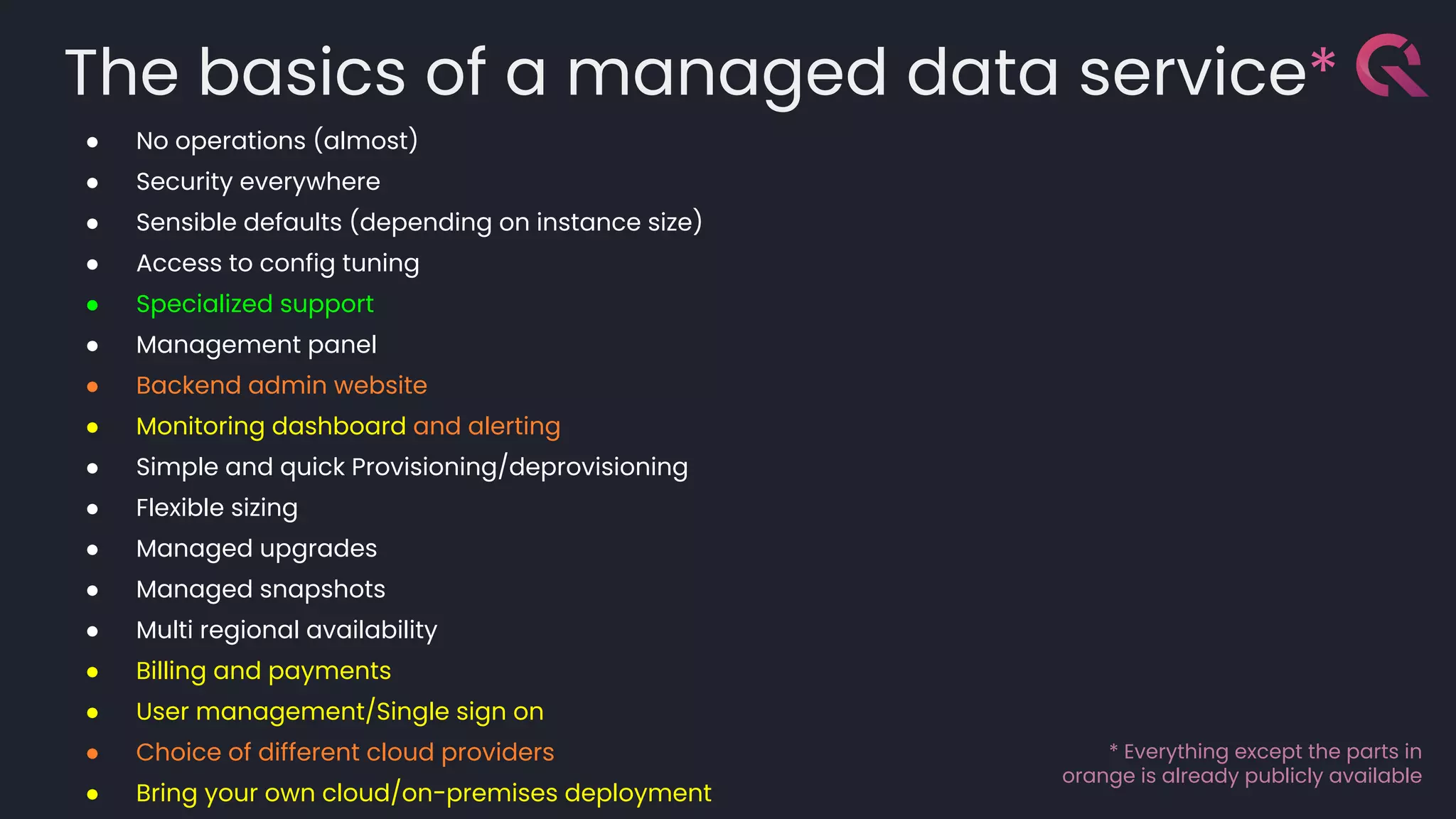

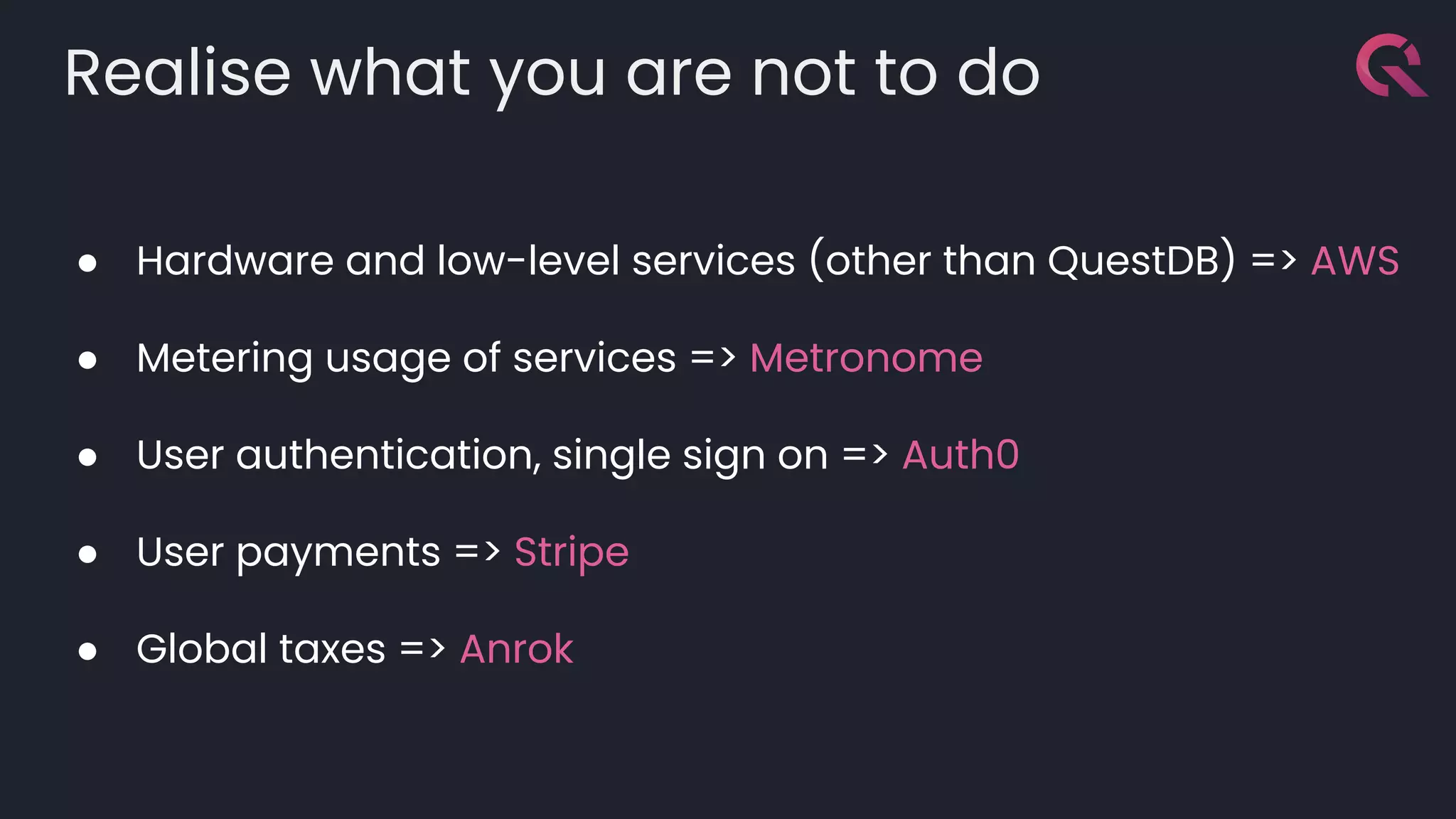

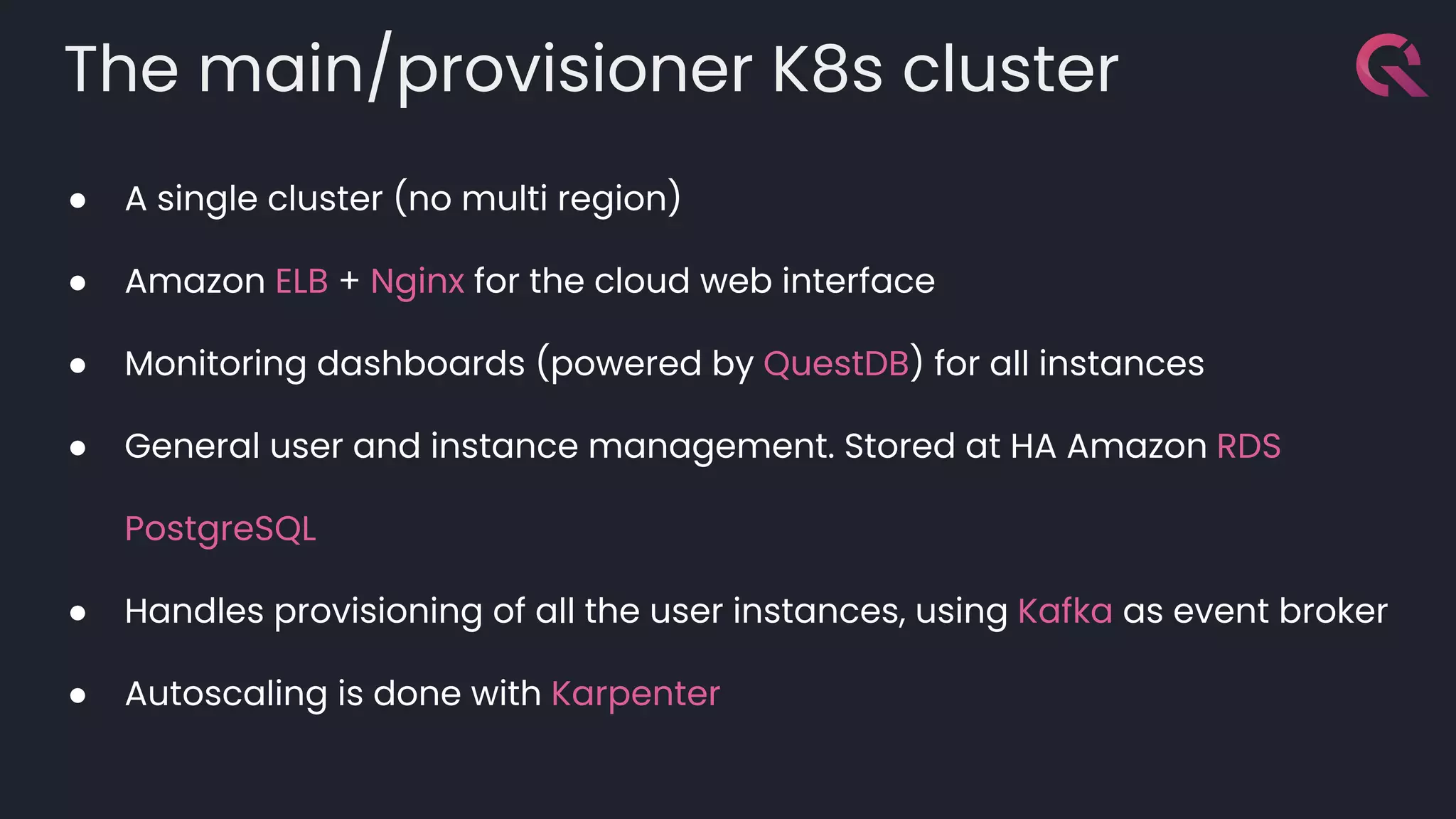

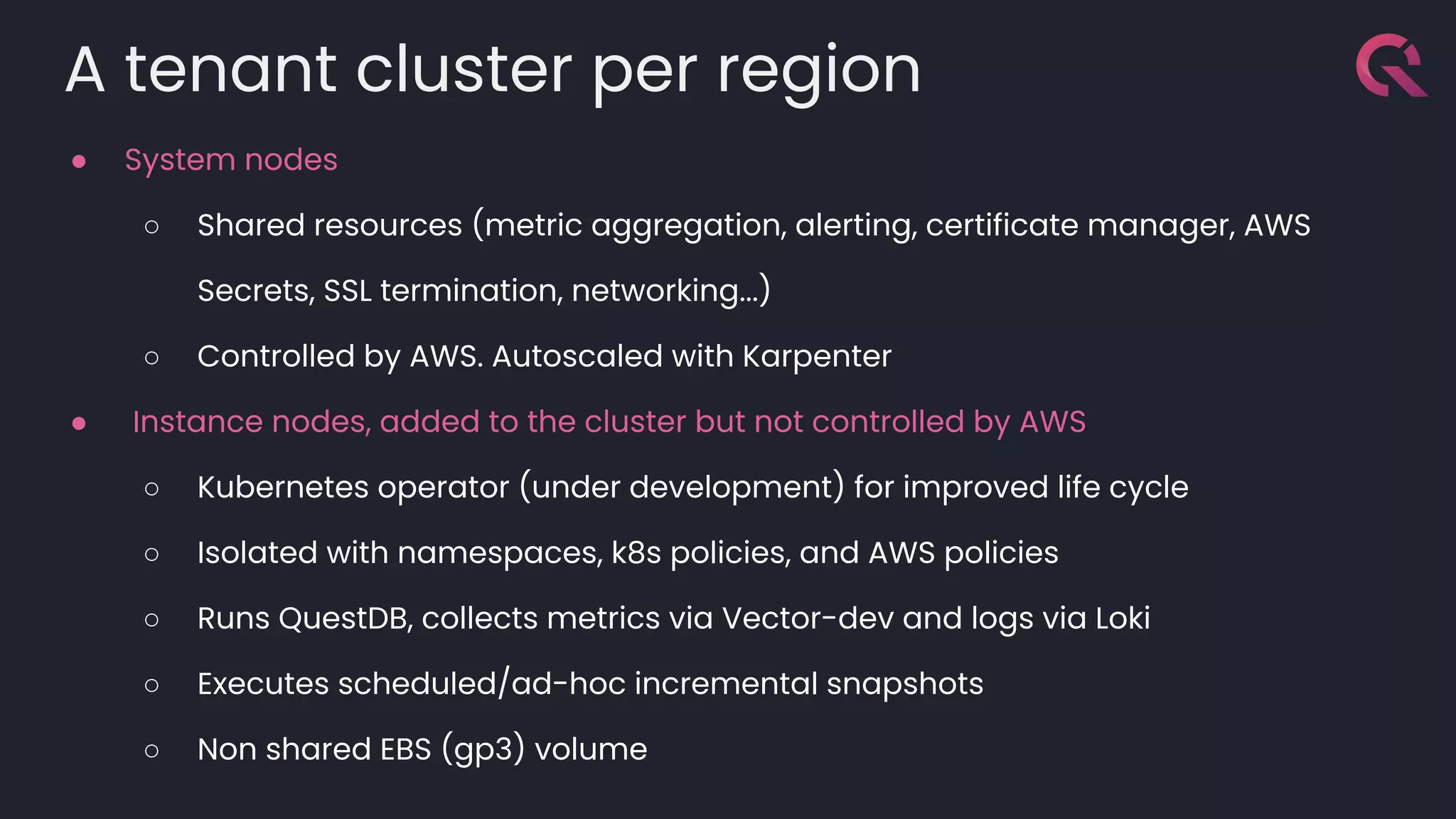

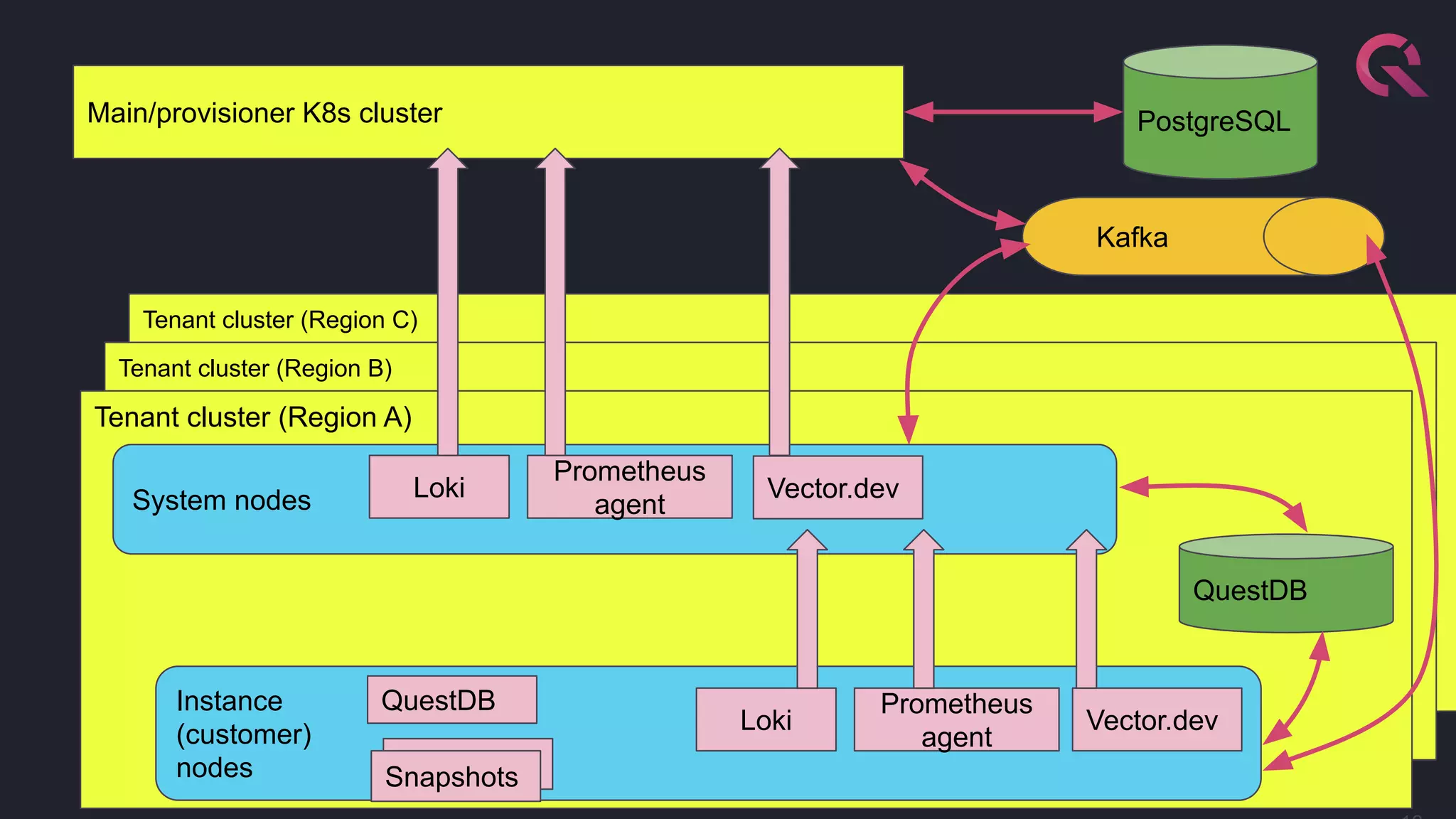

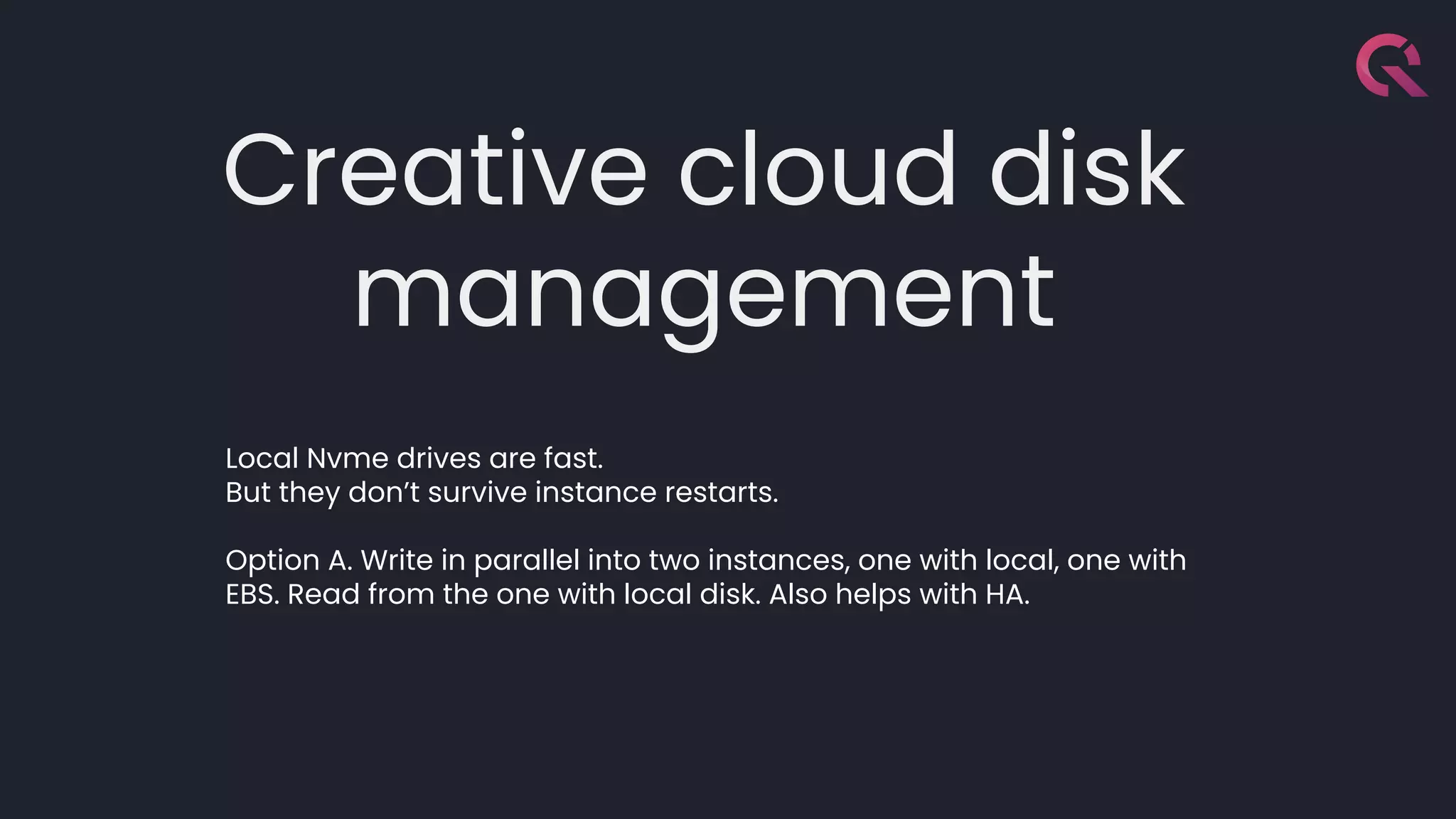

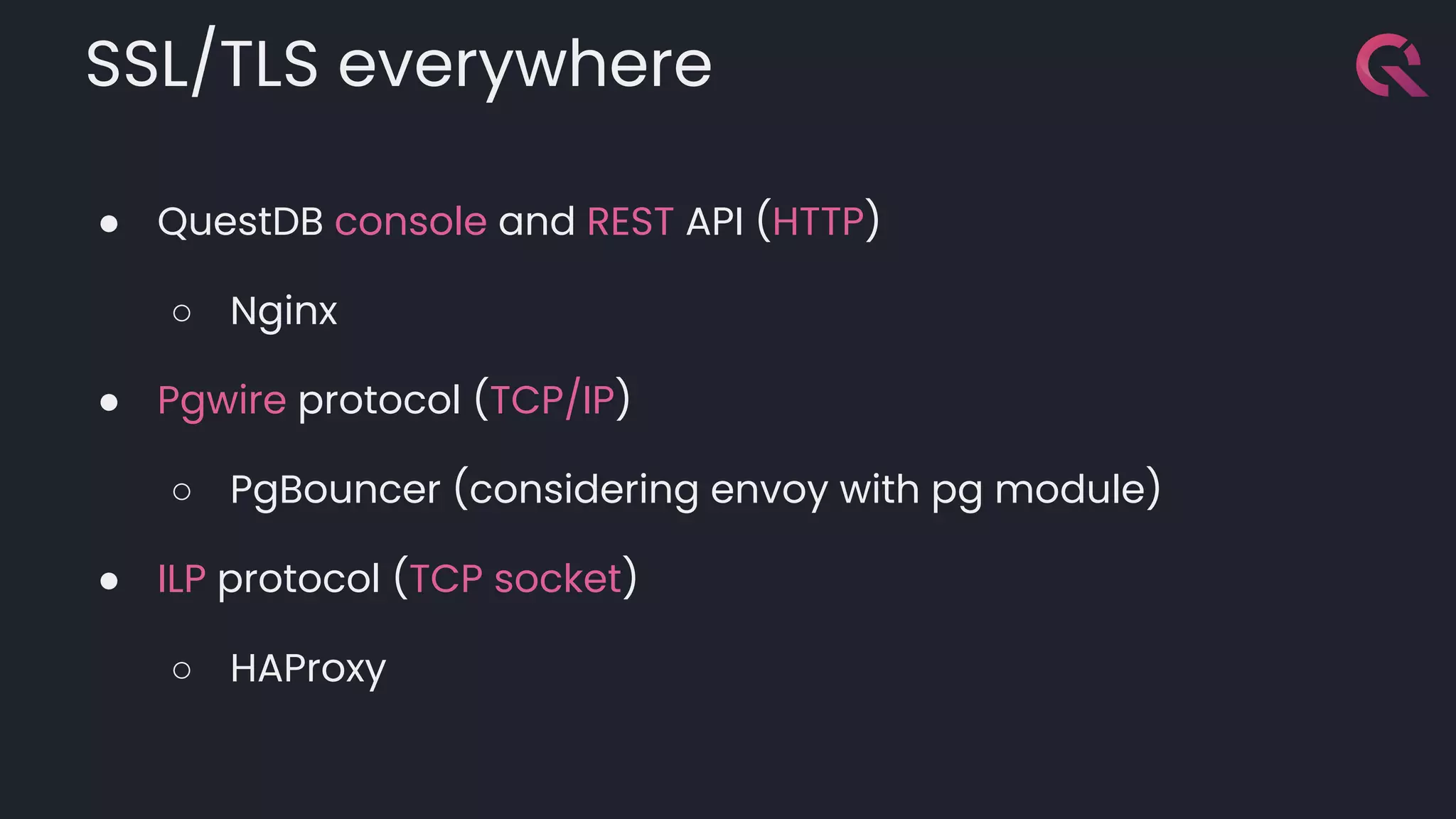

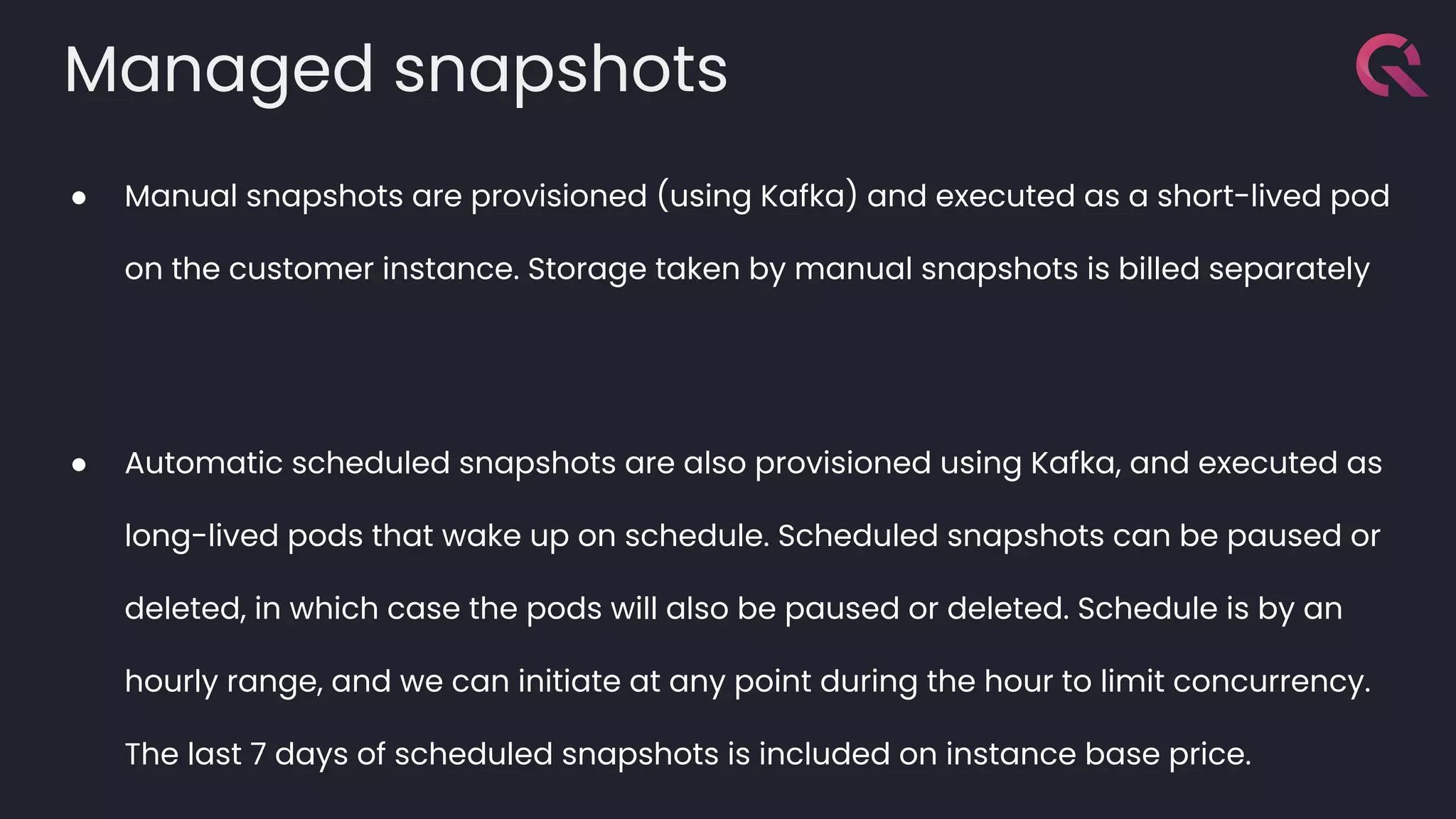

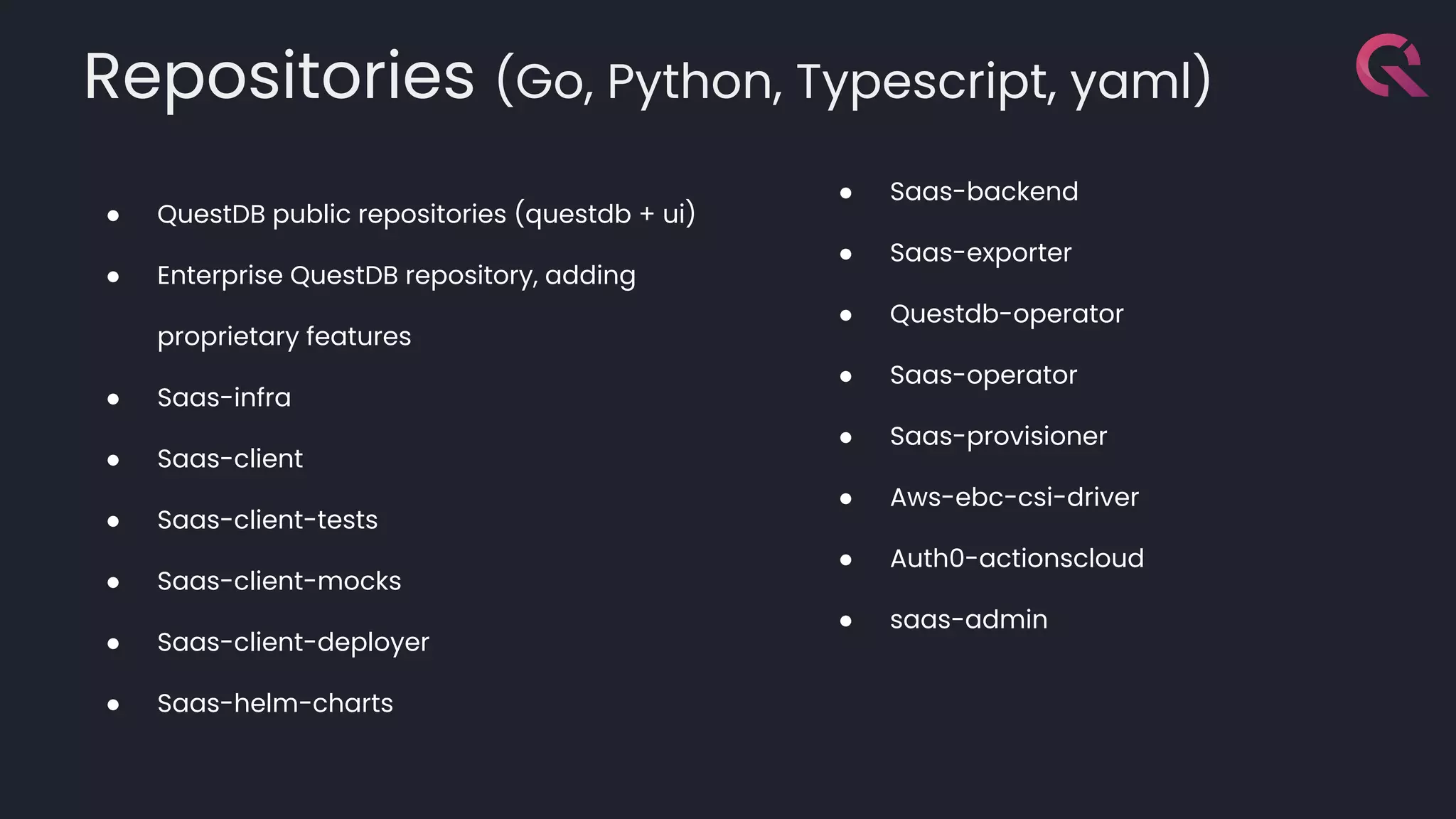

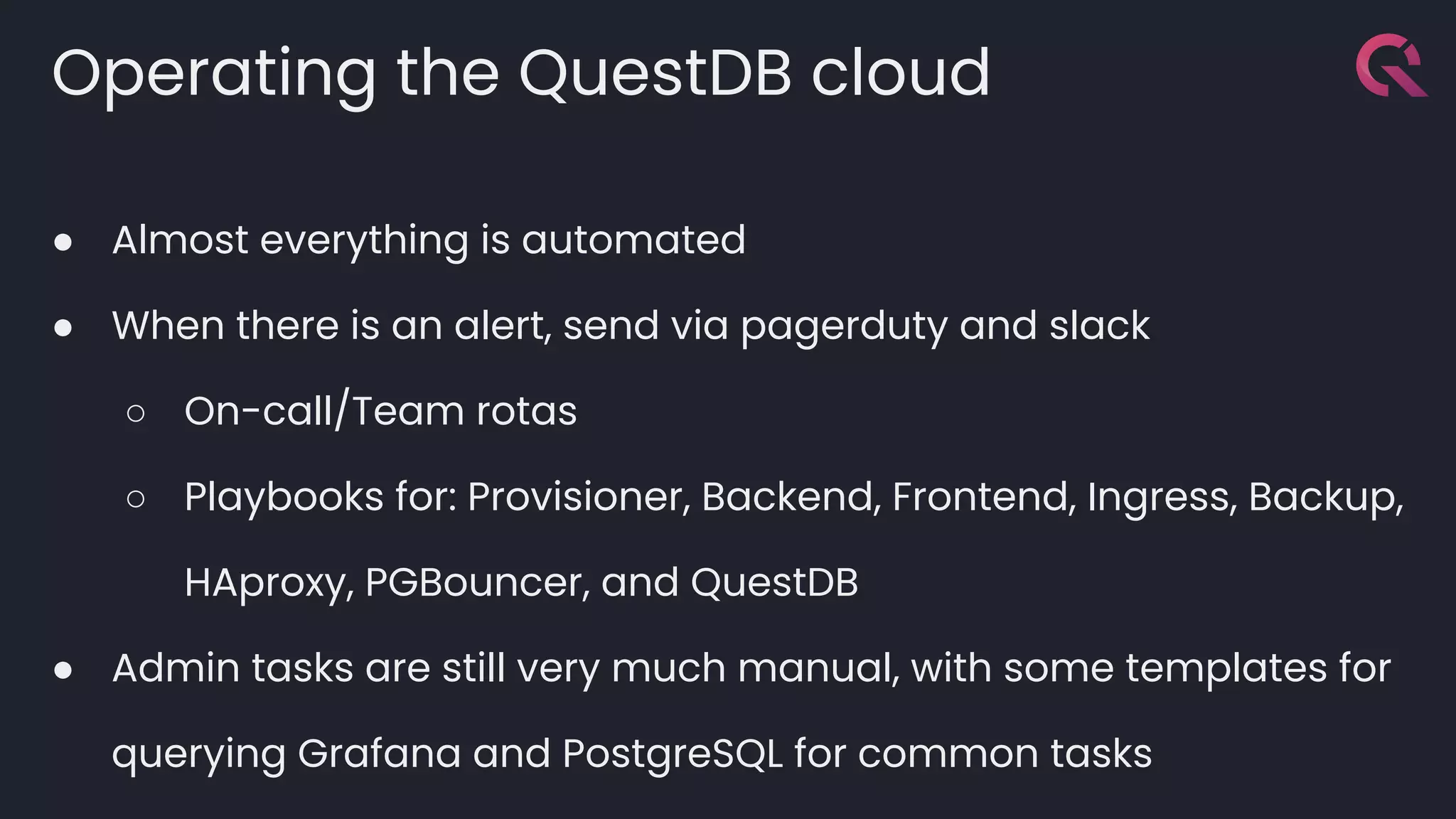

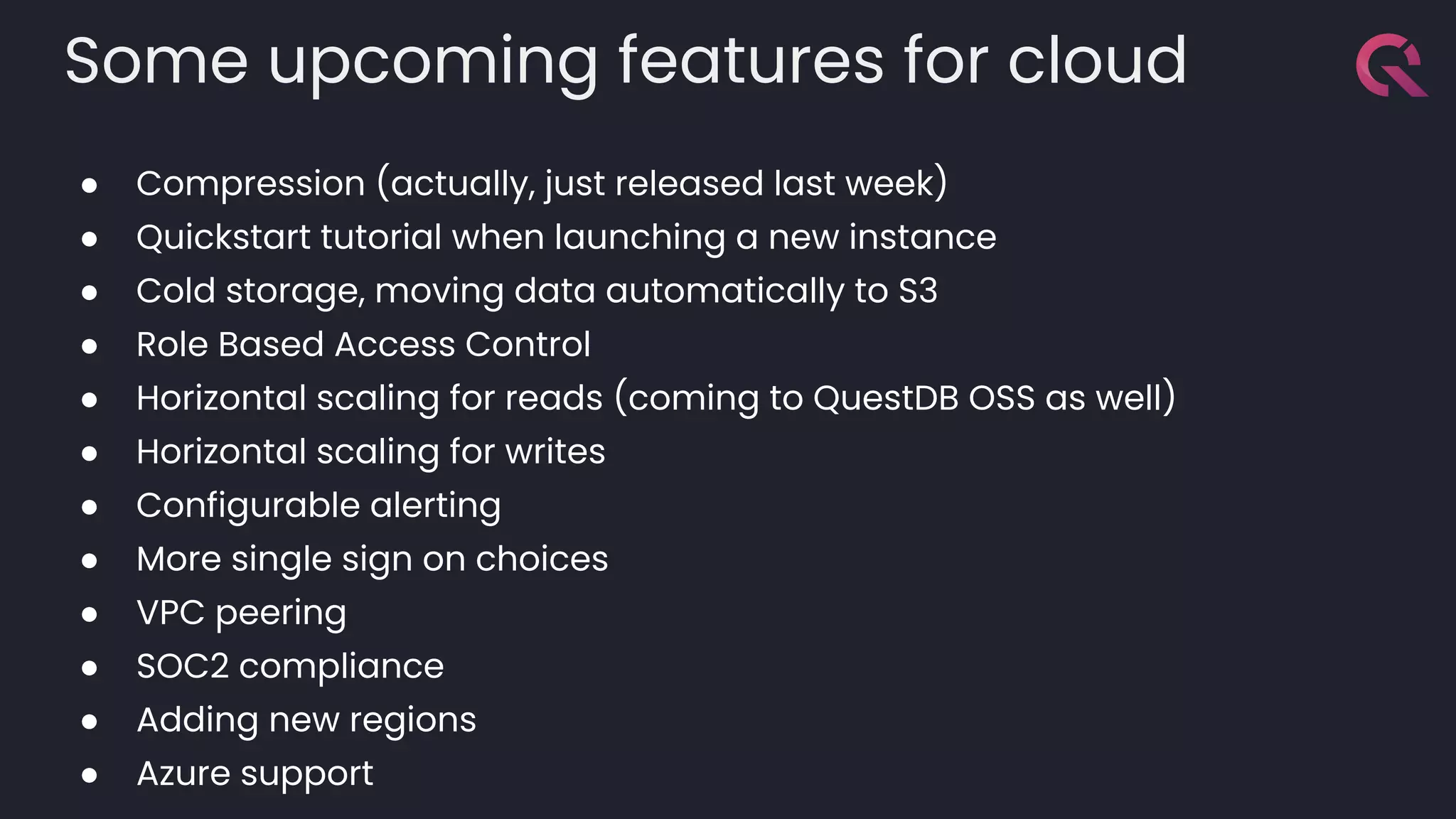

This document details how QuestDB transitioned from an open-source database to a managed, multi-tenant SaaS offering running on Kubernetes, highlighting performance, security, and ease of use as essential features. It outlines the provisioning process, infrastructure requirements, monitoring mechanisms, and upcoming features planned for the cloud service. Throughout the deployment and operational strategies, it emphasizes a strong commitment to developer experience and open-source principles.