CDISC2RDF overview

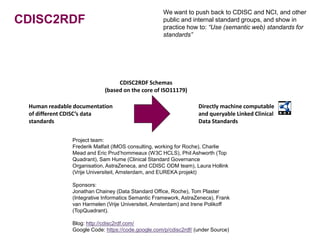

- 1. We want to contribute back to CDISC, NCI, and other public and internal standards groups, and show in practice how to "use (semantic web) standards for standards":
     - CDISC2RDF schemas (based on the core of ISO 11179)
     - Human-readable documentation
     - Directly machine-computable and queryable versions of the different CDISC standards
     - Linked clinical data standards

     Project team: Frederik Malfait (IMOS consulting, working for Roche), Charlie Mead and Eric Prud’hommeaux (W3C HCLS), Phil Ashworth (TopQuadrant), Sam Hume (Clinical Standard Governance Organisation, AstraZeneca, and CDISC ODM team), Laura Hollink (Vrije Universiteit Amsterdam, and EUREKA project).

     Sponsors: Jonathan Chainey (Data Standard Office, Roche), Tom Plaster (Integrative Informatics Semantic Framework, AstraZeneca), Frank van Harmelen (Vrije Universiteit Amsterdam) and Irene Polikoff (TopQuadrant).

     Blog: http://cdisc2rdf.com/

     Google Code: https://code.google.com/p/cdisc2rdf/ (under Source)

- 2. CDISC2RDF: schemas based on the core of ISO 11179, human-readable documentation, directly machine-computable and queryable CDISC standards, and linked clinical data standards. Examples (screenshots from the ontology tool TopBraid Composer):
     - "DRUG INTERRUPTED" in codelist "ACN" (Action Taken with Study Treatment)
     - The SDTM model variable --ACN
     - The AE domain variable AEACN

- 3. CDISC2RDF overview of ontologies: schemas
     - Meta model schema (mms): data definition, the core part of ISO 11179
     - SDTM 1.2 schema (sdtms): classifiers (data element roles and types)
     - Controlled Terminology schema (cts): a few additional properties from the NCI Thesaurus export
     - SDTM IG 3.1.2 schema (sdtmigs): a few additional properties
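
To make "the core part of ISO 11179" concrete, here is a minimal Python sketch of the kind of structure a meta model schema like mms captures: a data element whose values come from an enumerated value domain of permissible values. The class and field names are our own illustrative assumptions, not the published mms vocabulary.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Hypothetical, simplified ISO 11179-style core. Names are illustrative,
# not the actual terms defined in the CDISC2RDF mms schema.


@dataclass(frozen=True)
class PermissibleValue:
    code: str  # e.g. a CDISC submission value such as "DRUG INTERRUPTED"


@dataclass
class EnumeratedValueDomain:
    name: str
    values: list[PermissibleValue] = field(default_factory=list)

    def allows(self, code: str) -> bool:
        # A value is allowed if it equals one of the permissible values.
        return any(v.code == code for v in self.values)


@dataclass
class DataElement:
    name: str
    label: str
    value_domain: Optional[EnumeratedValueDomain] = None

    def validate(self, value: str) -> bool:
        # Elements without an enumerated value domain accept any value here.
        return self.value_domain is None or self.value_domain.allows(value)


acn = EnumeratedValueDomain("ACN", [PermissibleValue("DRUG INTERRUPTED")])
aeacn = DataElement("AEACN", "Action Taken with Study Treatment", acn)
print(aeacn.validate("DRUG INTERRUPTED"))  # True
print(aeacn.validate("XYZ"))  # False
```

The other three schemas then only need to add what is specific to their standard (classifiers, NCI properties, IG properties) on top of this shared core.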

- 4. CDISC2RDF overview of ontologies: schemas and the standards they describe
     - Meta model schema (mms): data definition, the core part of ISO 11179; the base for the other schemas
     - SDTM 1.2 schema (sdtms): classifiers (data element roles and types); describes the SDTM 1.2 model
     - Controlled Terminology schema (cts): a few additional properties from the NCI Thesaurus export; describes the CDASH CT, SDTM CT and ADaM CT value sets
     - SDTM IG 3.1.2 schema (sdtmigs): a few additional properties; describes the SDTM IG 3.1.2 domains
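
The layering of schemas and standards can be read as a dependency graph: each standard builds on a schema, and every schema builds on mms. The sketch below computes the transitive closure of such a graph, much as a tool resolves `owl:imports`. The edges and short names in `IMPORTS` are our assumed reading of the slide, not the actual imports of the published files.

```python
from __future__ import annotations

from collections import deque

# Assumed "X builds on Y" edges, read off the slide layout (hypothetical names).
IMPORTS = {
    "sdtm-1-2": ["sdtms"],
    "sdtm-ig-3-1-2": ["sdtmigs", "sdtms"],
    "sdtm-ct": ["cts"],
    "cdash-ct": ["cts"],
    "adam-ct": ["cts"],
    "sdtms": ["mms"],
    "sdtmigs": ["mms"],
    "cts": ["mms"],
    "mms": [],
}


def import_closure(ontology: str) -> list[str]:
    """Return every ontology reachable from `ontology`, in breadth-first order."""
    seen = {ontology}
    order: list[str] = []
    queue = deque([ontology])
    while queue:
        current = queue.popleft()
        for dep in IMPORTS.get(current, []):
            if dep not in seen:
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order


print(import_closure("sdtm-ig-3-1-2"))  # ['sdtmigs', 'sdtms', 'mms']
print(import_closure("cdash-ct"))  # ['cts', 'mms']
```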

- 5. CDISC2RDF SDTM model 1.2. Example: --ACN (screenshots from the ontology tool TopBraid Composer)
     - Meta model schema (mms): data definition, the core part of ISO 11179
     - SDTM 1.2 schema (sdtms): classifiers (data element compliance, roles and types); describes the SDTM 1.2 model
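
The sdtms classifiers are essentially small controlled vocabularies attached to each model variable. A minimal sketch, assuming Python enums as a stand-in for the OWL classes: the roles and types follow the SDTM model as we understand it, while the class names, the `compliance` field and the helper `variables_with_role` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    # Variable roles used in the SDTM model (our reading; verify against SDTM 1.2).
    IDENTIFIER = "Identifier"
    TOPIC = "Topic"
    TIMING = "Timing"
    GROUPING_QUALIFIER = "Grouping Qualifier"
    RESULT_QUALIFIER = "Result Qualifier"
    SYNONYM_QUALIFIER = "Synonym Qualifier"
    RECORD_QUALIFIER = "Record Qualifier"
    VARIABLE_QUALIFIER = "Variable Qualifier"
    RULE = "Rule"


class DataType(Enum):
    CHAR = "Char"
    NUM = "Num"


class Compliance(Enum):
    REQUIRED = "Req"
    EXPECTED = "Exp"
    PERMISSIBLE = "Perm"


@dataclass(frozen=True)
class ModelVariable:
    name: str
    label: str
    role: Role
    data_type: DataType
    compliance: Optional[Compliance] = None  # left unset for generic model variables


# Two Events-class variables; role and type as we read the SDTM 1.2 model.
ACN = ModelVariable("--ACN", "Action Taken with Study Treatment", Role.RECORD_QUALIFIER, DataType.CHAR)
TERM = ModelVariable("--TERM", "Reported Term", Role.TOPIC, DataType.CHAR)


def variables_with_role(variables: list[ModelVariable], role: Role) -> list[str]:
    """Names of the variables classified with the given role."""
    return [v.name for v in variables if v.role is role]


print(variables_with_role([ACN, TERM], Role.RECORD_QUALIFIER))  # ['--ACN']
```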

- 6. CDISC2RDF SDTM model 1.2 + IG 3.1.2. Examples: --ACN and AEACN (screenshots from the ontology tool TopBraid Composer)
     - Meta model schema (mms): data definition, the core part of ISO 11179
     - SDTM 1.2 schema (sdtms): classifiers (data element compliance, roles and types); describes the SDTM 1.2 model
     - SDTM IG 3.1.2 schema (sdtmigs): a few additional properties; describes the SDTM IG 3.1.2 domains
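
The link between --ACN and AEACN follows the SDTM naming convention: the "--" placeholder of a generic model variable is replaced by the two-letter domain code. A minimal sketch of that mapping in both directions; the function names are hypothetical, and it assumes standard two-letter domain codes.

```python
from __future__ import annotations


def domain_variable(generic_name: str, domain: str) -> str:
    """Expand a generic SDTM model variable (e.g. '--ACN') for one domain (e.g. 'AE')."""
    if not generic_name.startswith("--"):
        raise ValueError(f"not a generic model variable: {generic_name!r}")
    if len(domain) != 2 or not domain.isalpha():
        # Assumption: standard two-letter domain codes only.
        raise ValueError(f"expected a two-letter domain code, got {domain!r}")
    return domain.upper() + generic_name[2:]


def generic_variable(domain_name: str, domain: str) -> str:
    """Inverse mapping: recover the model variable an IG domain variable is based on."""
    prefix = domain.upper()
    if not domain_name.startswith(prefix) or len(domain_name) <= len(prefix):
        raise ValueError(f"{domain_name!r} is not a {prefix} domain variable")
    return "--" + domain_name[len(prefix):]


print(domain_variable("--ACN", "AE"))  # AEACN
print(generic_variable("AEACN", "AE"))  # --ACN
```

Variables shared across domains without a prefix, such as STUDYID or USUBJID, do not follow this pattern and would need an explicit mapping.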

- 7. CDISC2RDF CT schema and CTs. Example: "DRUG INTERRUPTED" in codelist "ACN" (Action Taken with Study Treatment) (screenshots from the ontology tool TopBraid Composer)
     - Meta model schema (mms): data definition, the core part of ISO 11179
     - Controlled Terminology schema (cts): a few additional properties from the NCI Thesaurus export; describes the SDTM CT value sets
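
As RDF, the "DRUG INTERRUPTED" example boils down to a handful of triples: the codelist is a value domain, and the term is a permissible value in it. The sketch below prints them as N-Triples. The `example.org` namespaces, the IRI scheme and the property names `inValueDomain` and `cdiscSubmissionValue` are placeholder assumptions, not the published CDISC2RDF URIs; only the `rdf:type` and `rdfs:label` IRIs are the real W3C ones.

```python
from __future__ import annotations

# Placeholder namespaces (assumptions); the published CDISC2RDF files define their own.
MMS = "http://example.org/mms#"
CTS = "http://example.org/cts#"
SDTMCT = "http://example.org/sdtm-ct#"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"


def lit(text: str) -> str:
    """N-Triples string literal with backslashes and double quotes escaped."""
    return '"' + text.replace("\\", "\\\\").replace('"', '\\"') + '"'


def codelist_to_ntriples(code: str, name: str, terms: list[str]) -> list[str]:
    """Describe a codelist as a value domain and each term as a permissible value in it."""
    codelist = f"<{SDTMCT}{code}>"
    lines = [
        f"{codelist} <{RDF_TYPE}> <{MMS}EnumeratedValueDomain> .",
        f"{codelist} <{RDFS_LABEL}> {lit(name)} .",
    ]
    for term in terms:
        # Hypothetical IRI scheme: codelist code + term with spaces replaced.
        value = f"<{SDTMCT}{code}.{term.replace(' ', '_')}>"
        lines += [
            f"{value} <{RDF_TYPE}> <{MMS}PermissibleValue> .",
            f"{value} <{MMS}inValueDomain> {codelist} .",
            f"{value} <{CTS}cdiscSubmissionValue> {lit(term)} .",
        ]
    return lines


for line in codelist_to_ntriples("ACN", "Action Taken with Study Treatment", ["DRUG INTERRUPTED"]):
    print(line)
```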

- 8. CDISC2RDF annotation of the SDTM CT Excel file using the CDISC2RDF schemas
     - Starting point: SDTM CT in its original format
     - Meta model schema (mms): data definition, the core part of ISO 11179
     - Controlled Terminology schema (cts): structure of CDISC’s value sets drawn from the NCI Thesaurus
     - Import file: SDTM Codelist, annotated to map to the CDISC2RDF schema for Controlled Terminologies
     - Import file: SDTM Codelist Elements, annotated to map to the CDISC2RDF schema for Controlled Terminologies
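
Annotating the spreadsheet amounts to pairing each original column with a schema property. A minimal sketch of such an annotation and a check for columns left unmapped; the column headers follow the usual NCI CT export layout (verify against your file), while the `cts:`/`mms:` property names are hypothetical placeholders. The codes in the example row are placeholders too, not real NCI codes.

```python
from __future__ import annotations

# Hypothetical annotation: original SDTM CT column -> CDISC2RDF-style property.
ANNOTATION = {
    "Code": "cts:nciCode",
    "Codelist Code": "mms:inValueDomain",
    "CDISC Submission Value": "cts:cdiscSubmissionValue",
    "CDISC Synonym(s)": "cts:cdiscSynonyms",
    "CDISC Definition": "cts:cdiscDefinition",
    "NCI Preferred Term": "cts:nciPreferredTerm",
}


def check_annotation(header: list[str], annotation: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Split the header into annotated columns and columns still lacking a property."""
    mapped = {col: annotation[col] for col in header if col in annotation}
    missing = [col for col in header if col not in annotation]
    return mapped, missing


def annotate_row(header: list[str], row: list[str], annotation: dict[str, str]) -> list[tuple[str, str]]:
    """Turn one spreadsheet row into (property, value) pairs, skipping unmapped or empty cells."""
    return [(annotation[col], val) for col, val in zip(header, row) if col in annotation and val]


header = ["Code", "Codelist Code", "Codelist Name", "CDISC Submission Value"]
mapped, missing = check_annotation(header, ANNOTATION)
print(missing)  # ['Codelist Name']
print(annotate_row(header, ["TERM-CODE", "CODELIST-CODE", "Action Taken with Study Treatment", "DRUG INTERRUPTED"], ANNOTATION))
```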

- 9. CDISC2RDF import/transform of SDTM CT from the annotated Excel files into an SDTM CT ontology (screenshots from the ontology tool TopBraid Composer)
     - Input: import file SDTM Codelist, annotated to map to the CDISC2RDF schema for Controlled Terminologies
     - Input: import file SDTM Codelist Elements, annotated to map to the CDISC2RDF schema for Controlled Terminologies
     - Import step: TopBraid Composer
     - Output: SDTM CT value sets
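
The slide does this import in TopBraid Composer. As a tool-neutral illustration, here is a stdlib Python sketch of the same idea: once the header cells of a sheet name schema properties, each row becomes one resource and each non-empty cell becomes one triple. The namespaces, the `TERM-CODE` identifier and the `transform` signature are hypothetical assumptions for the sketch.

```python
from __future__ import annotations

import csv
import io

# Placeholder namespaces; the real CDISC2RDF files define their own URIs.
PREFIXES = {
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "mms": "http://example.org/mms#",
    "cts": "http://example.org/cts#",
}
BASE = "http://example.org/sdtm-ct#"


def expand(curie: str) -> str:
    """Expand a prefixed name such as 'cts:nciCode' into a full IRI."""
    prefix, local = curie.split(":", 1)
    return PREFIXES[prefix] + local


def literal(text: str) -> str:
    escaped = text.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
    return f'"{escaped}"'


def transform(csv_text: str, id_column: str, rdf_class: str, iri_columns: set[str]) -> list[str]:
    """Turn an annotated sheet (header cells = schema properties) into N-Triples lines."""
    triples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        subject = f"<{BASE}{row[id_column]}>"
        triples.append(f"{subject} <{expand('rdf:type')}> <{expand(rdf_class)}> .")
        for prop, value in row.items():
            if not value:
                continue  # empty cells produce no triple
            # Columns listed in iri_columns link to other resources; the rest are literals.
            obj = f"<{BASE}{value}>" if prop in iri_columns else literal(value)
            triples.append(f"{subject} <{expand(prop)}> {obj} .")
    return triples


SHEET = """cts:nciCode,mms:inValueDomain,cts:cdiscSubmissionValue
TERM-CODE,ACN,DRUG INTERRUPTED
"""

for line in transform(SHEET, "cts:nciCode", "mms:PermissibleValue", {"mms:inValueDomain"}):
    print(line)
```

Treating the codelist column as an IRI rather than a literal is what turns the flat spreadsheet into linked data: each element points at its codelist resource.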

- 10. CDISC2RDF: from the SDTM Implementation Guide (IG) in PDF/Excel to OWL/RDF
      - Meta model schema (mms): data definition, the core part of ISO 11179
      - SDTM 1.2 schema (sdtms): classifiers (data element roles and types); describes the SDTM 1.2 model
      - SDTM IG 3.1.2 schema (sdtmigs): a few additional properties (not yet published)
      - Annotations: import file SDTM IG 3.1.2, annotated using the CDISC2RDF SDTM IG schema
      - Import/transform using TopBraid Composer
      - Result: SDTM IG 3.1.2 domains and SDTM CT value sets
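
Once the IG is in OWL/RDF, questions such as "which IG variables are based on a model variable with the Record Qualifier role?" become queries. In practice this would be a SPARQL query; to stay dependency-free, the sketch below swaps in a tiny hand-rolled matcher for basic graph patterns. The triples, the `sdtmigs:basedOn` link and all identifiers are hypothetical.

```python
from __future__ import annotations

from typing import Iterator

# Hypothetical triples; identifiers and properties are illustrative only.
TRIPLES = [
    ("sdtmig:AE.AEACN", "rdf:type", "mms:DataElement"),
    ("sdtmig:AE.AEACN", "sdtmigs:basedOn", "sdtm:--ACN"),
    ("sdtmig:AE.AETERM", "sdtmigs:basedOn", "sdtm:--TERM"),
    ("sdtm:--ACN", "sdtms:role", "Record Qualifier"),
    ("sdtm:--TERM", "sdtms:role", "Topic"),
]


def match(pattern: tuple[str, str, str], triples: list[tuple[str, str, str]]) -> Iterator[dict[str, str]]:
    """Yield variable bindings for one triple pattern; terms starting with '?' are variables."""
    for triple in triples:
        binding: dict[str, str] = {}
        ok = True
        for p, t in zip(pattern, triple):
            if p.startswith("?"):
                if binding.setdefault(p, t) != t:  # same variable must bind consistently
                    ok = False
                    break
            elif p != t:
                ok = False
                break
        if ok:
            yield binding


def query(patterns: list[tuple[str, str, str]], triples: list[tuple[str, str, str]]) -> list[dict[str, str]]:
    """Join patterns left to right, like the WHERE clause of a simple SPARQL query."""
    results: list[dict[str, str]] = [{}]
    for pattern in patterns:
        next_results = []
        for binding in results:
            # Substitute variables already bound by earlier patterns.
            bound = tuple(binding.get(term, term) for term in pattern)
            for extra in match(bound, triples):
                next_results.append({**binding, **extra})
        results = next_results
    return results


print(query([("?igvar", "sdtmigs:basedOn", "?modelvar"),
             ("?modelvar", "sdtms:role", "Record Qualifier")], TRIPLES))
```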