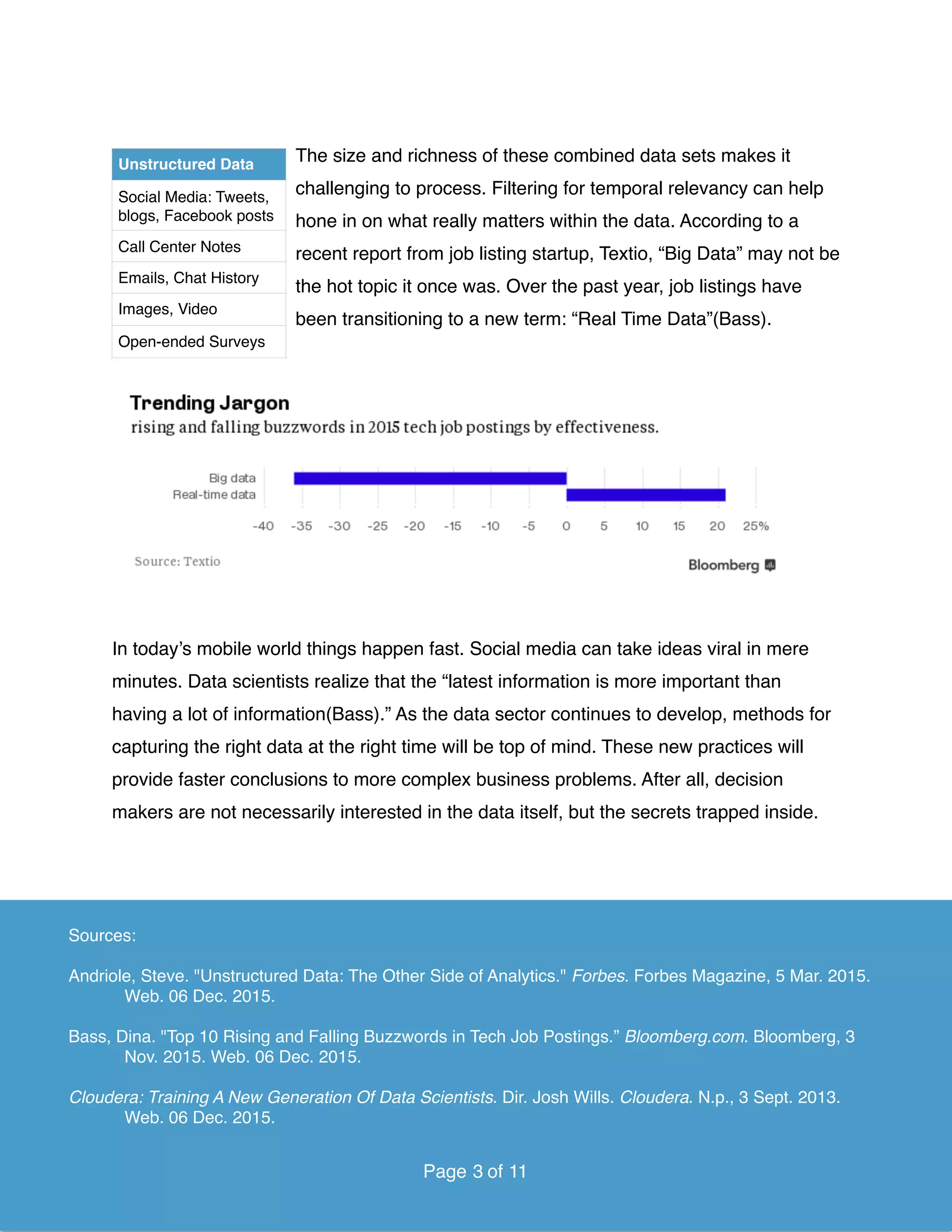

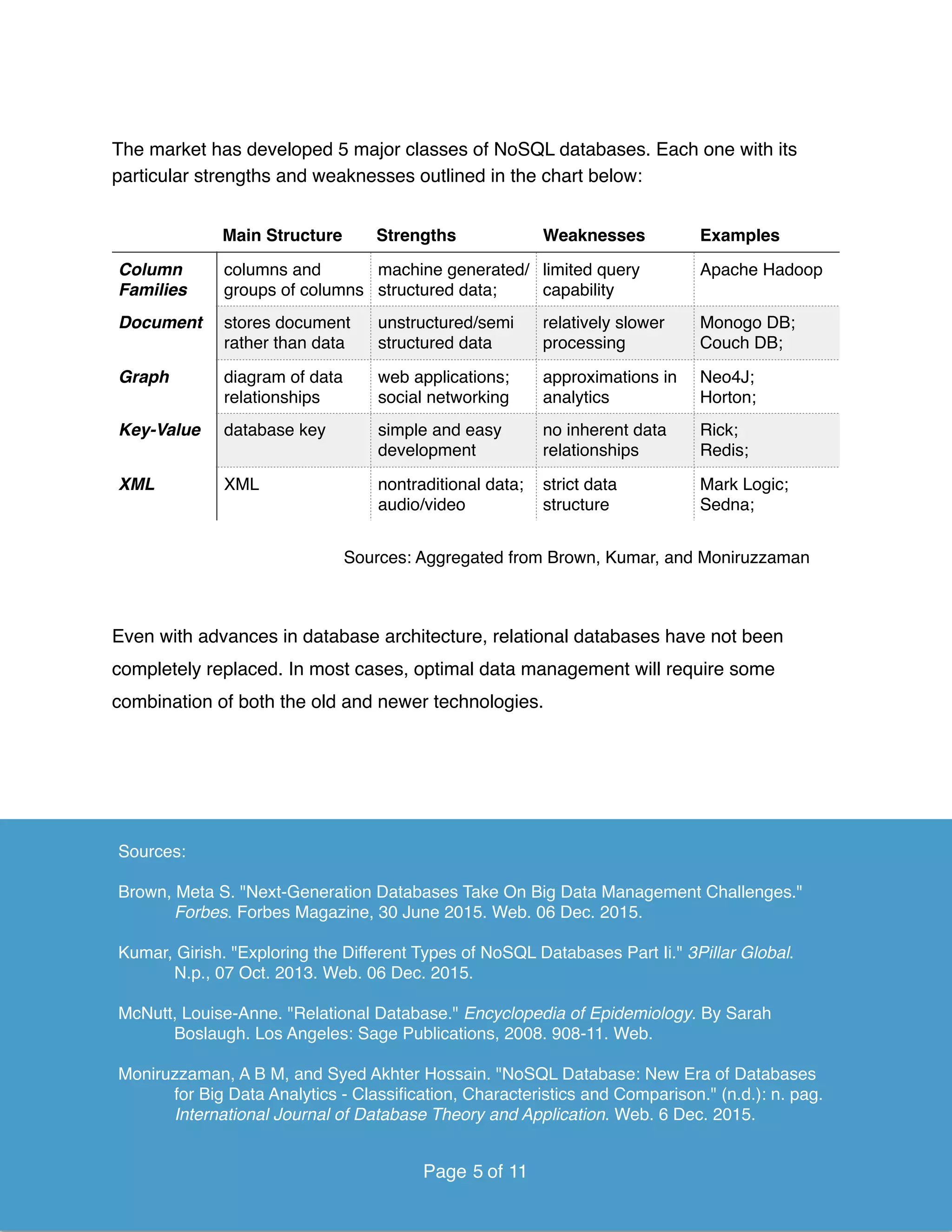

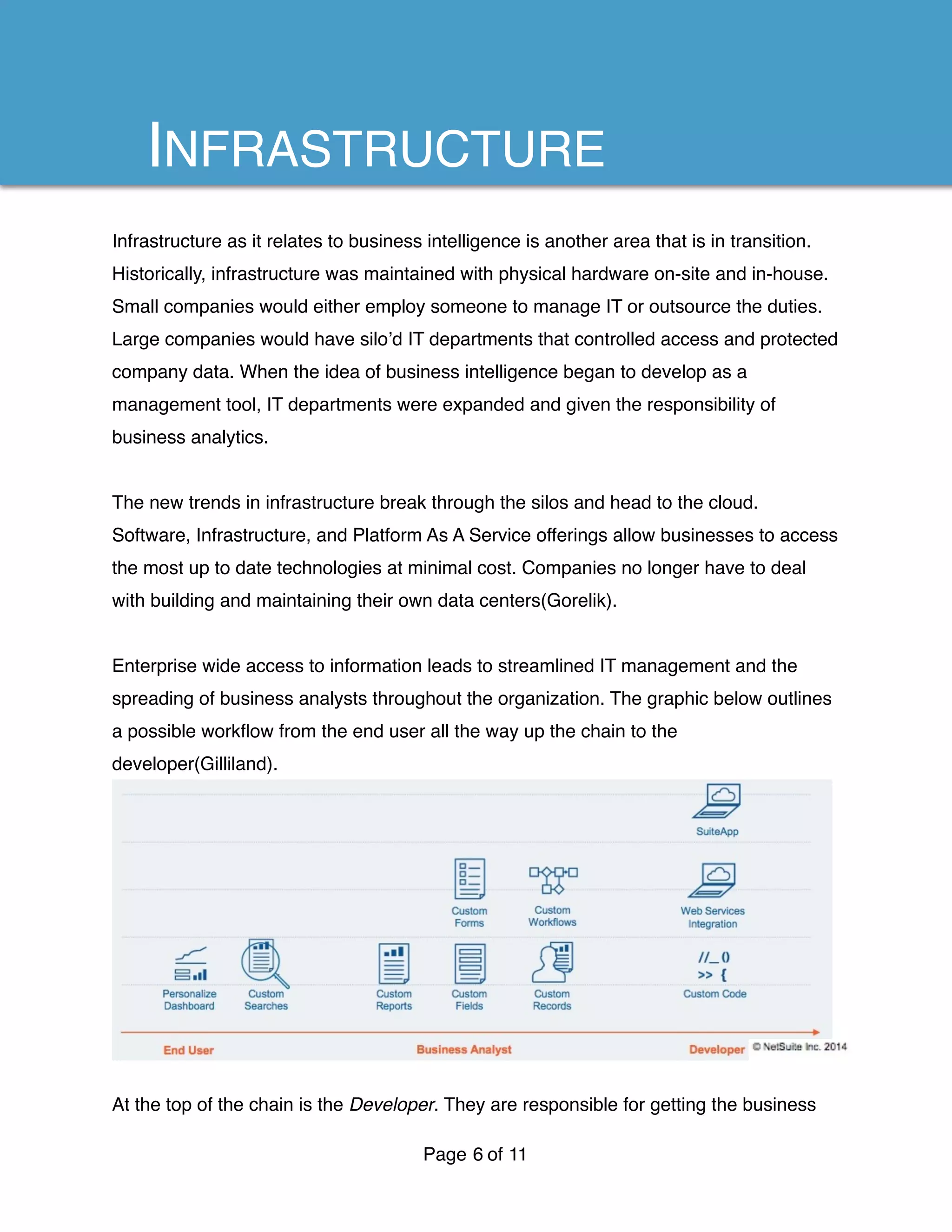

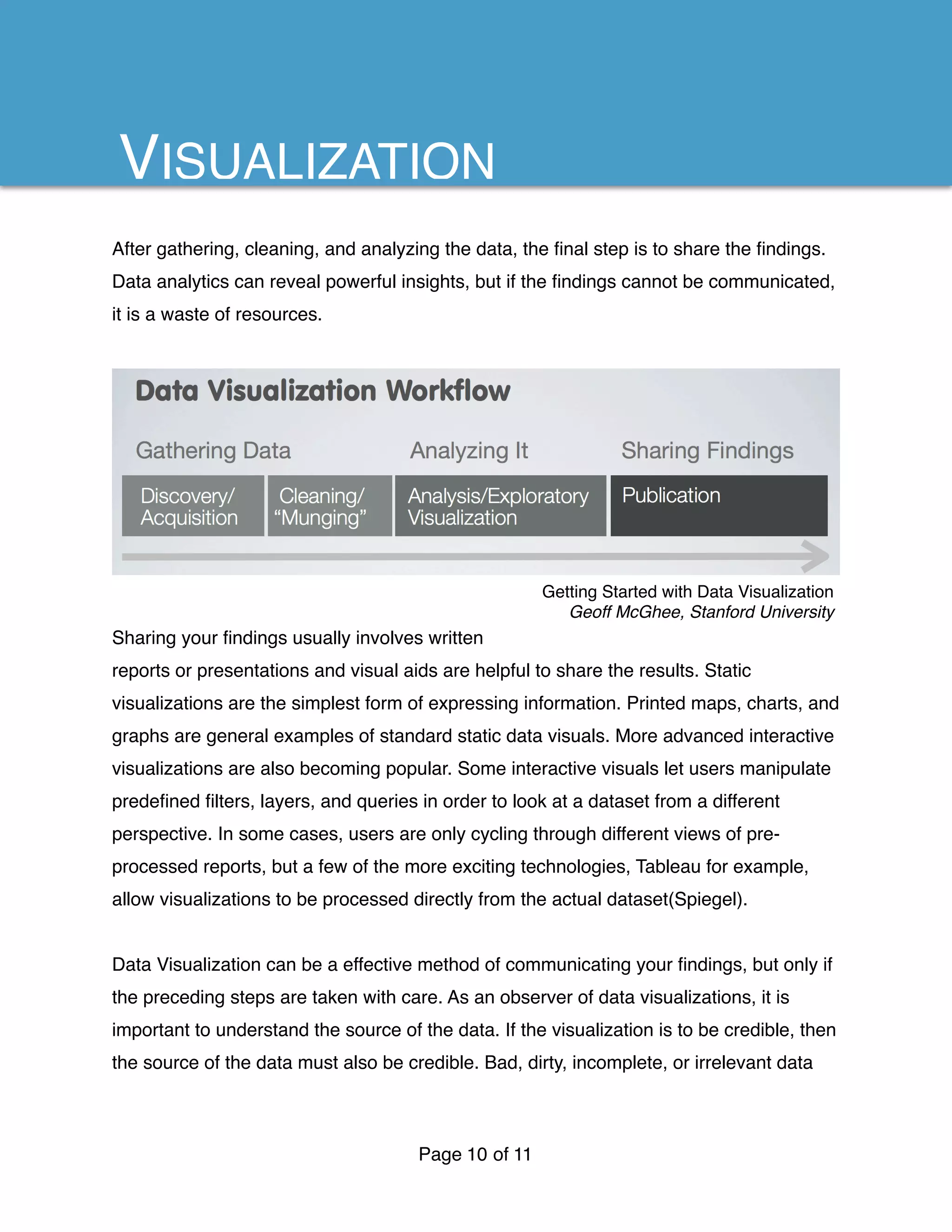

This document discusses business analytics and business intelligence. It covers big data, structured versus unstructured data, databases, infrastructure, the evolution of analytics, and data visualization. Big data delivers value when data sets are massive, although storing and processing them can be expensive. Combining structured and unstructured data enables predictive analytics. NoSQL databases were developed to handle diverse data types at large scale. Cloud infrastructure offers benefits such as streamlined IT management and broad access to business intelligence across an organization. Analytics is evolving from analyzing internal data toward integrating diverse external data sources and building products on predictive insights. Data visualization is an important way to communicate analytical findings, although the quality of the underlying data determines how credible any visualization is.