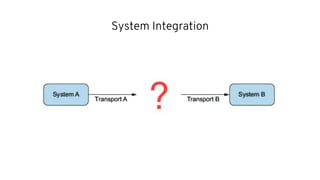

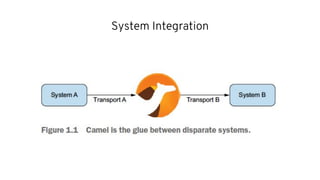

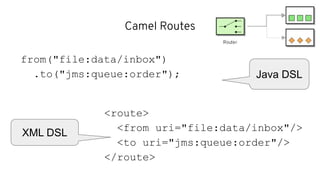

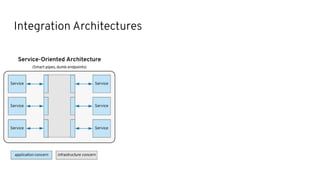

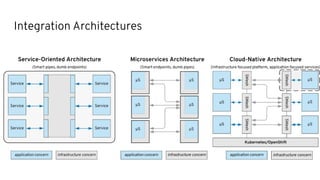

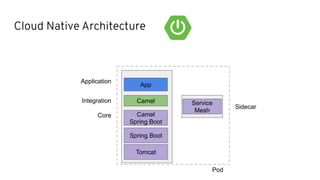

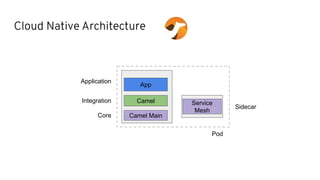

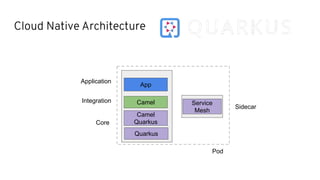

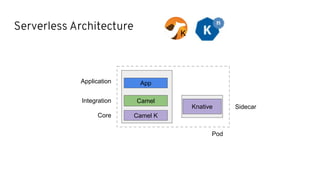

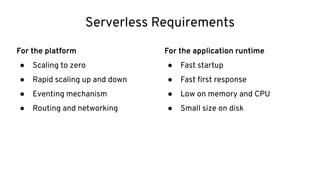

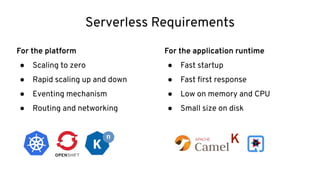

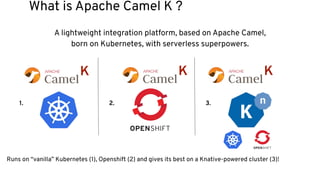

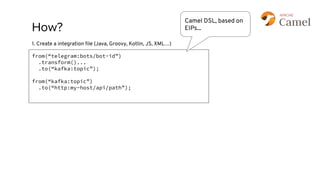

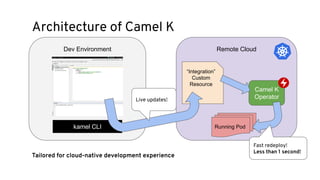

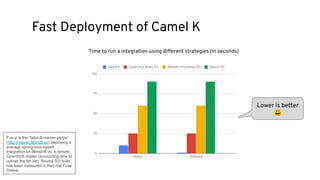

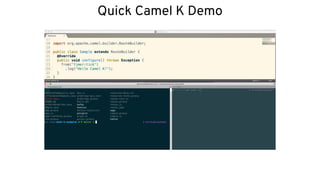

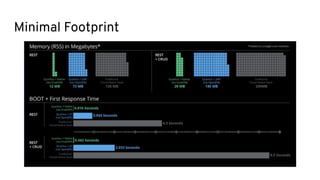

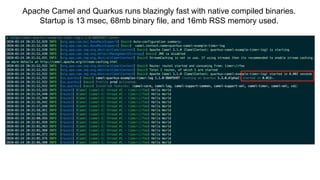

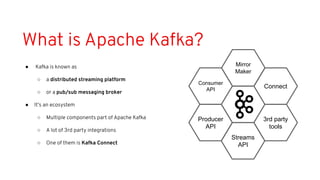

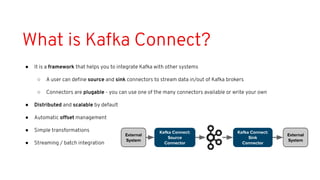

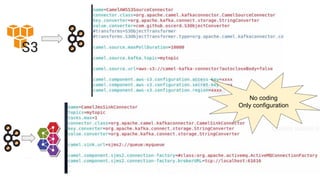

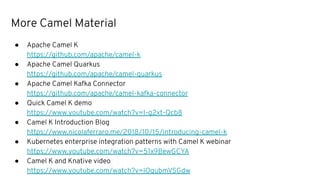

This document discusses best practices for middleware and integration architecture modernization using Apache Camel. It provides an overview of Apache Camel, including what it is, how it works through routes, and the different Camel projects. It then covers trends in integration architecture like microservices, cloud native, and serverless. Key aspects of Camel K and Camel Quarkus are summarized. The document concludes with a brief discussion of the Camel Kafka Connector and pointers to additional resources.