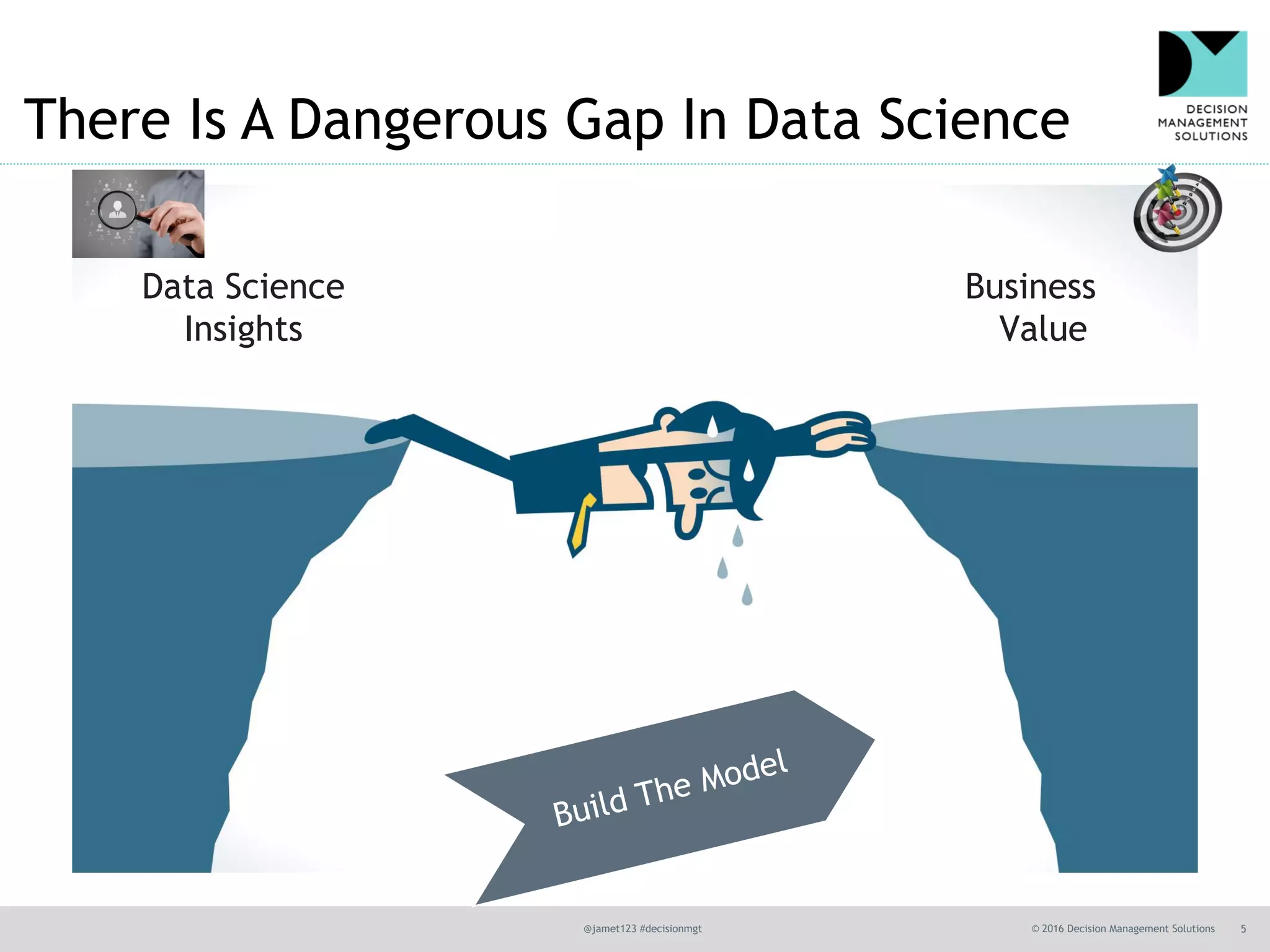

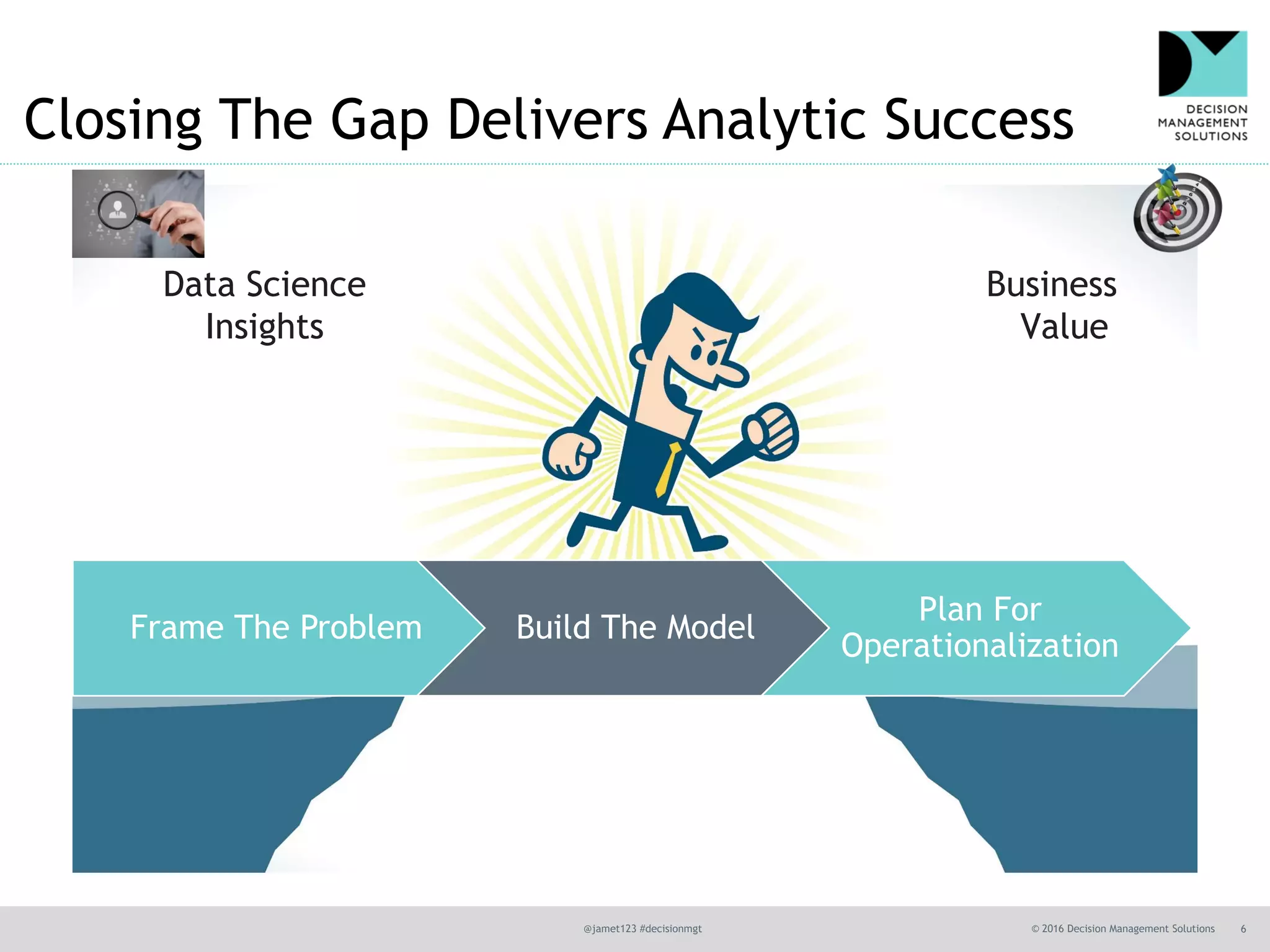

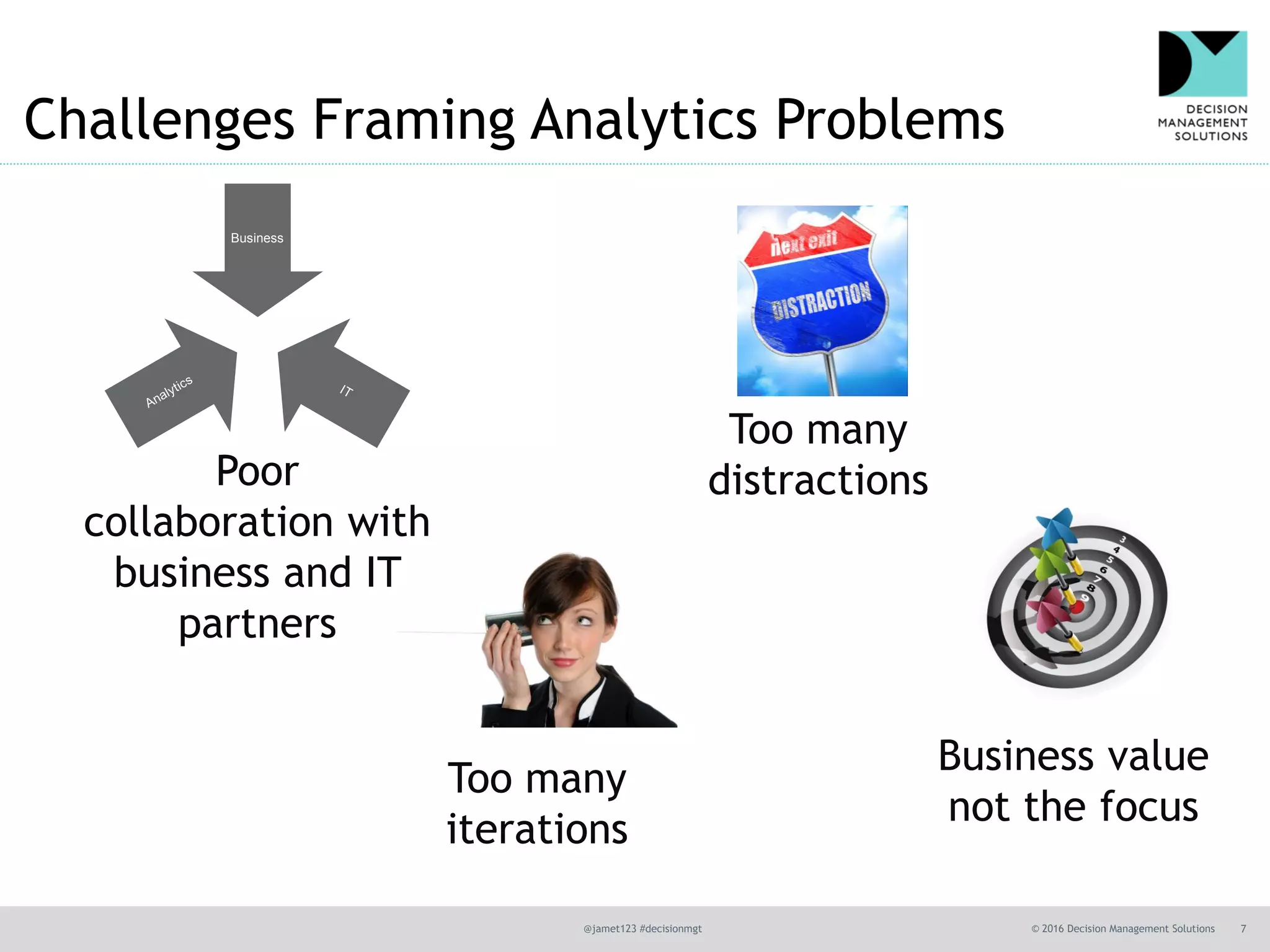

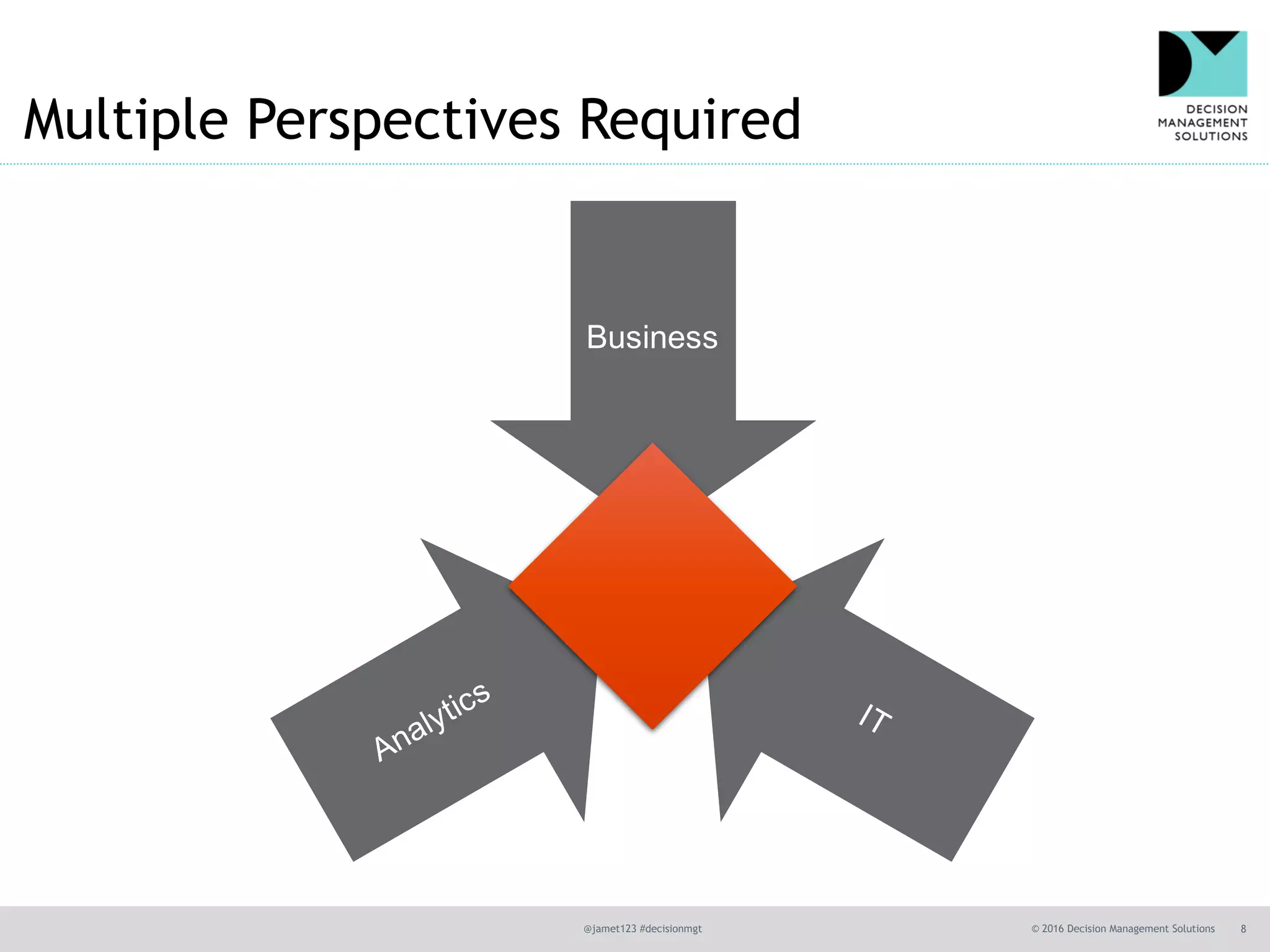

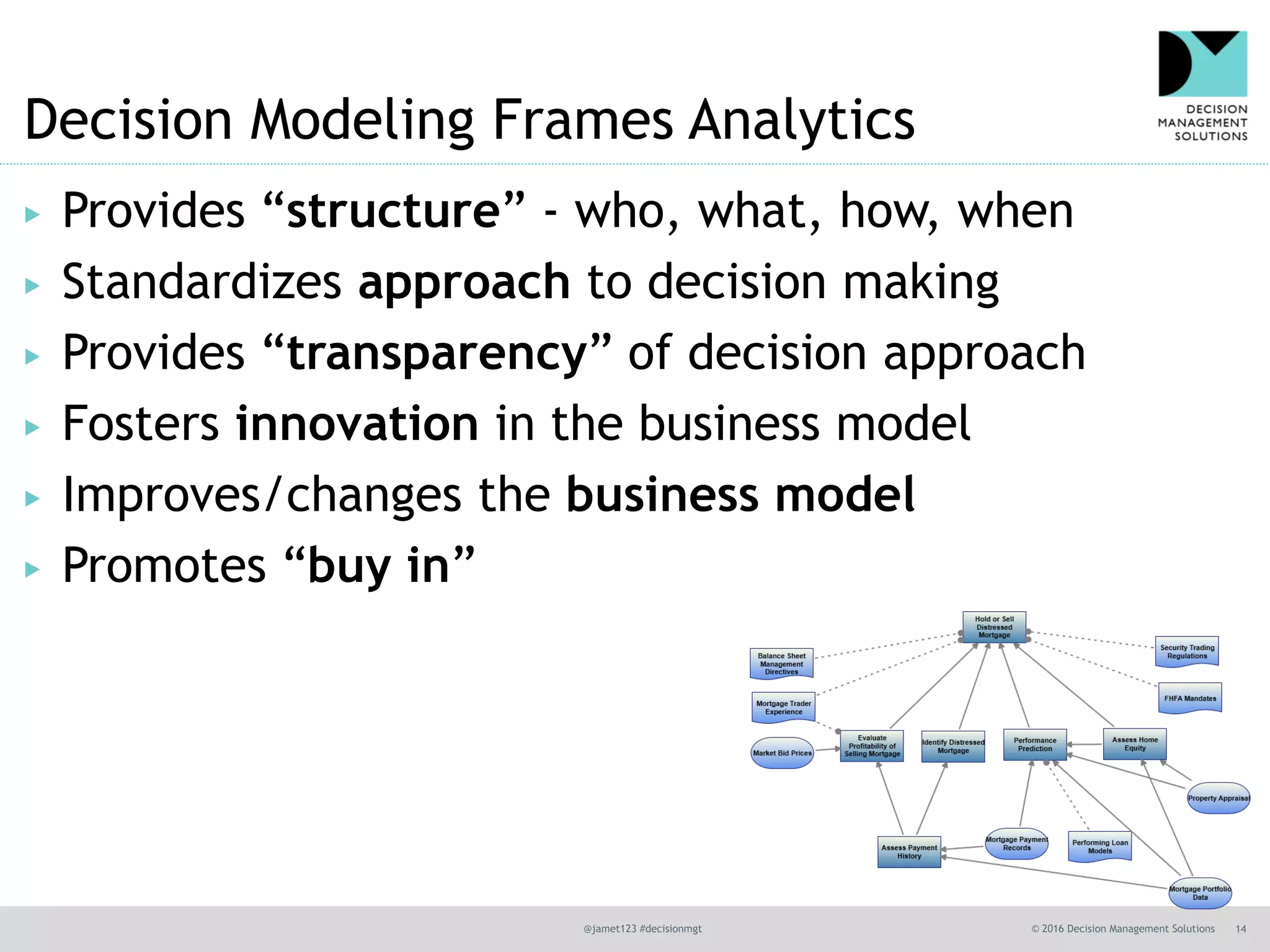

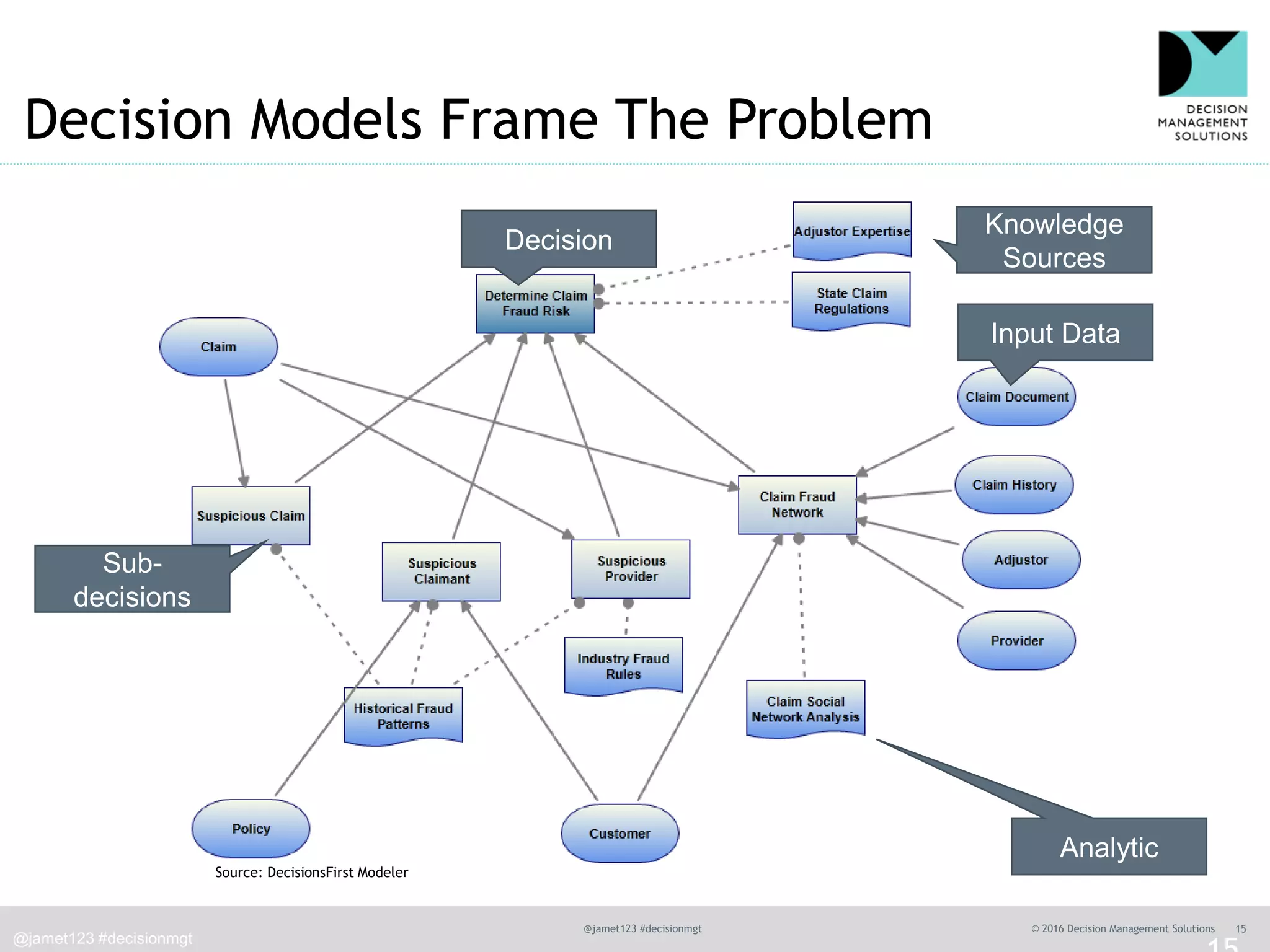

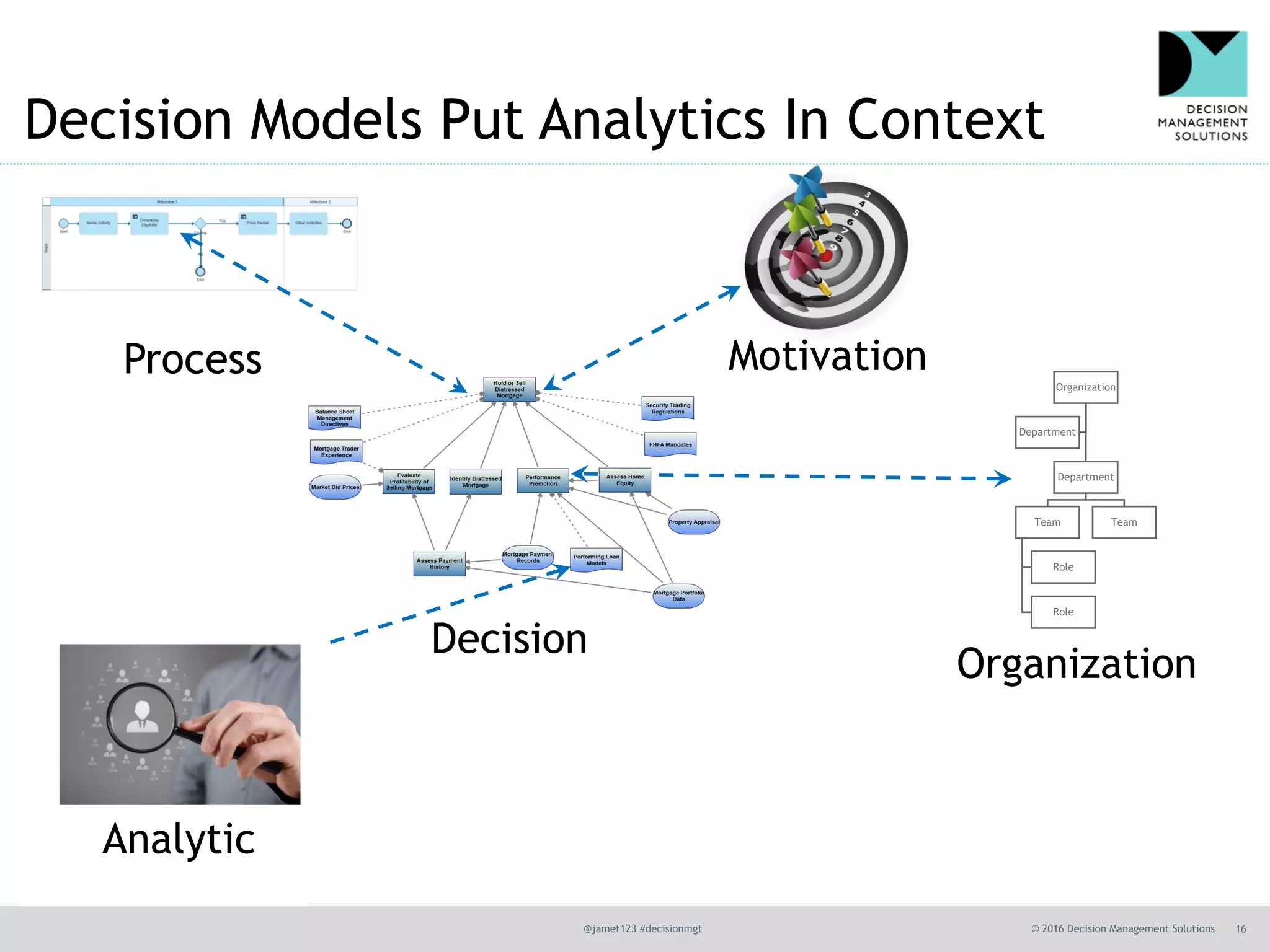

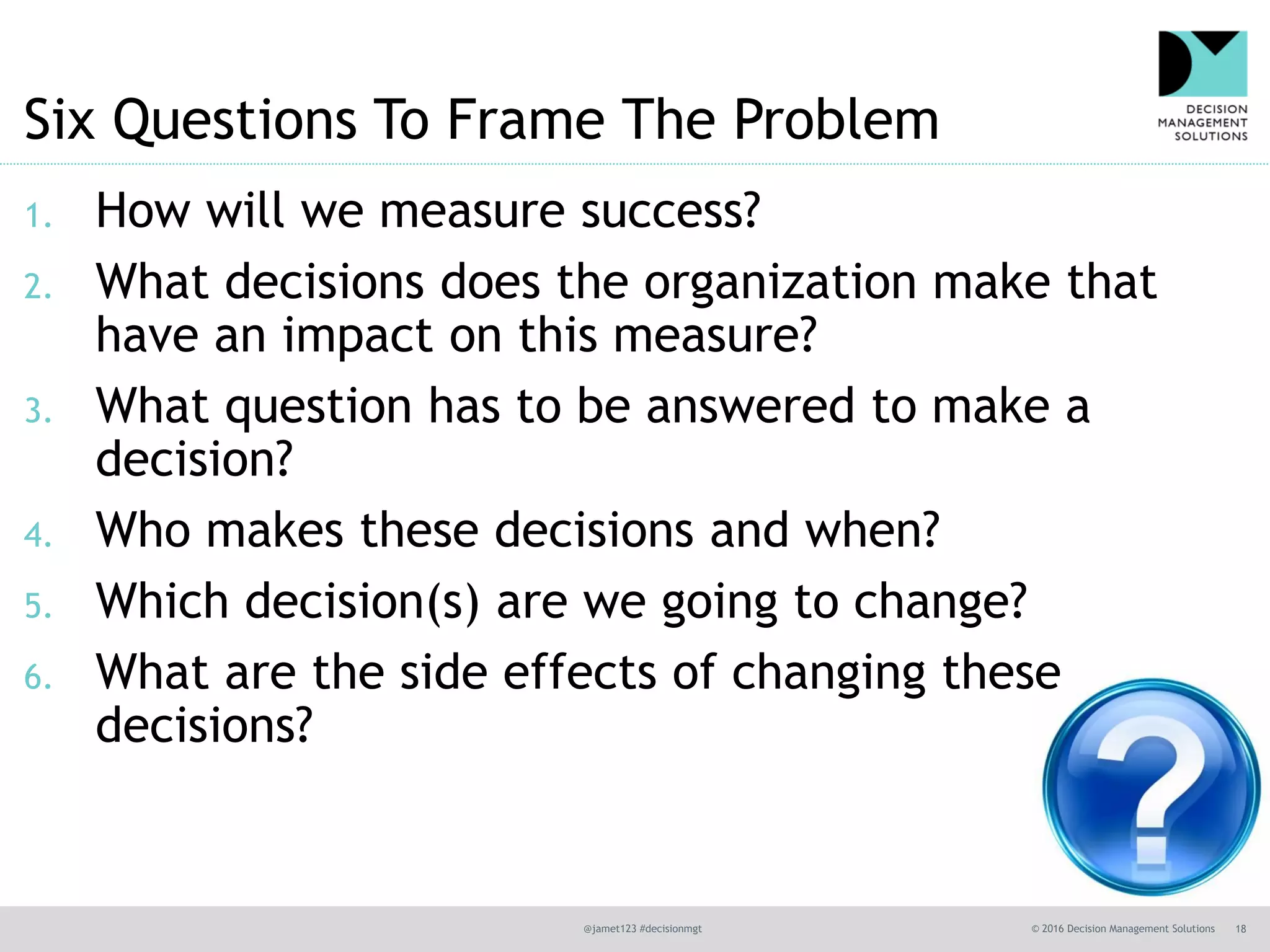

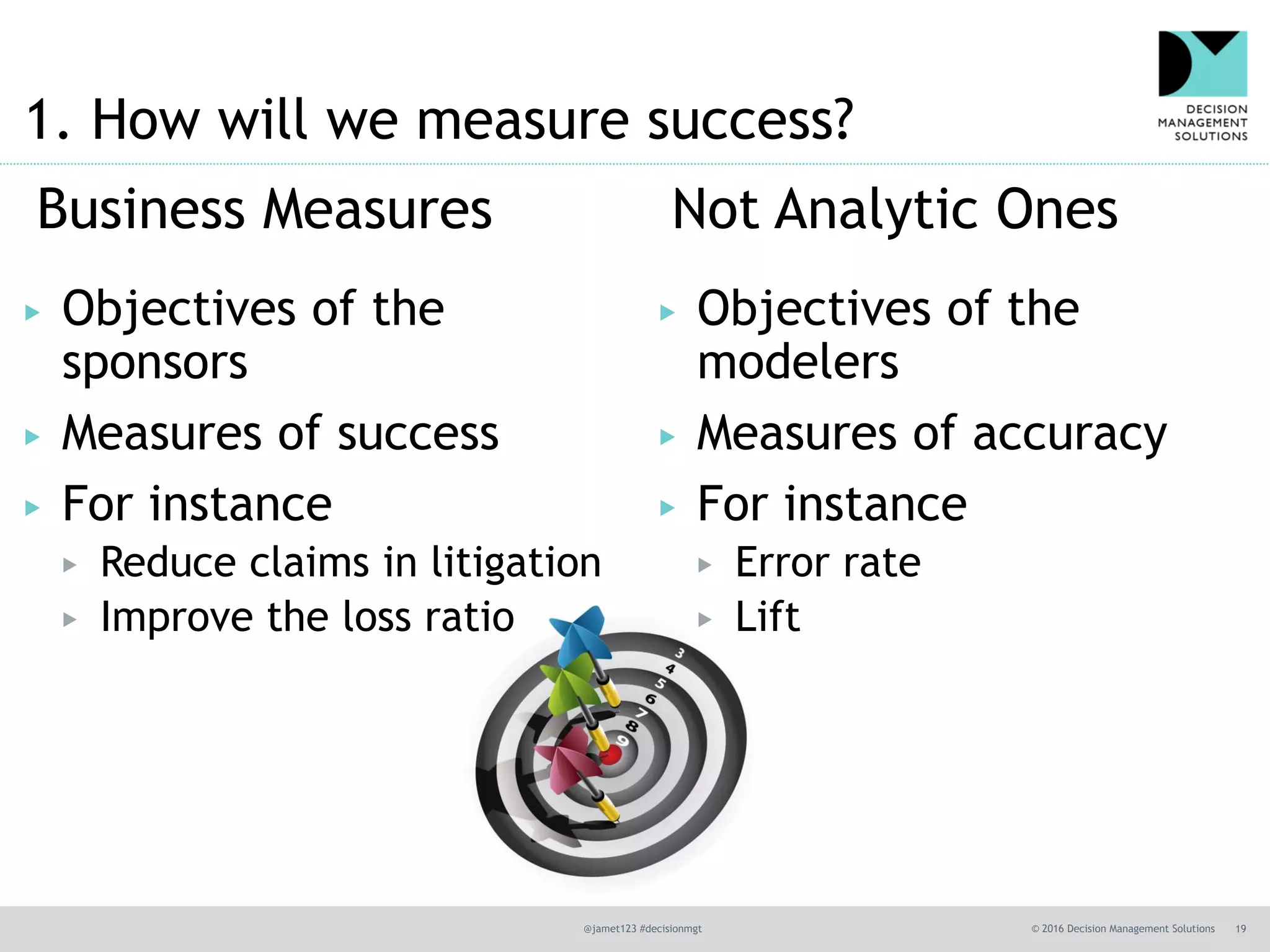

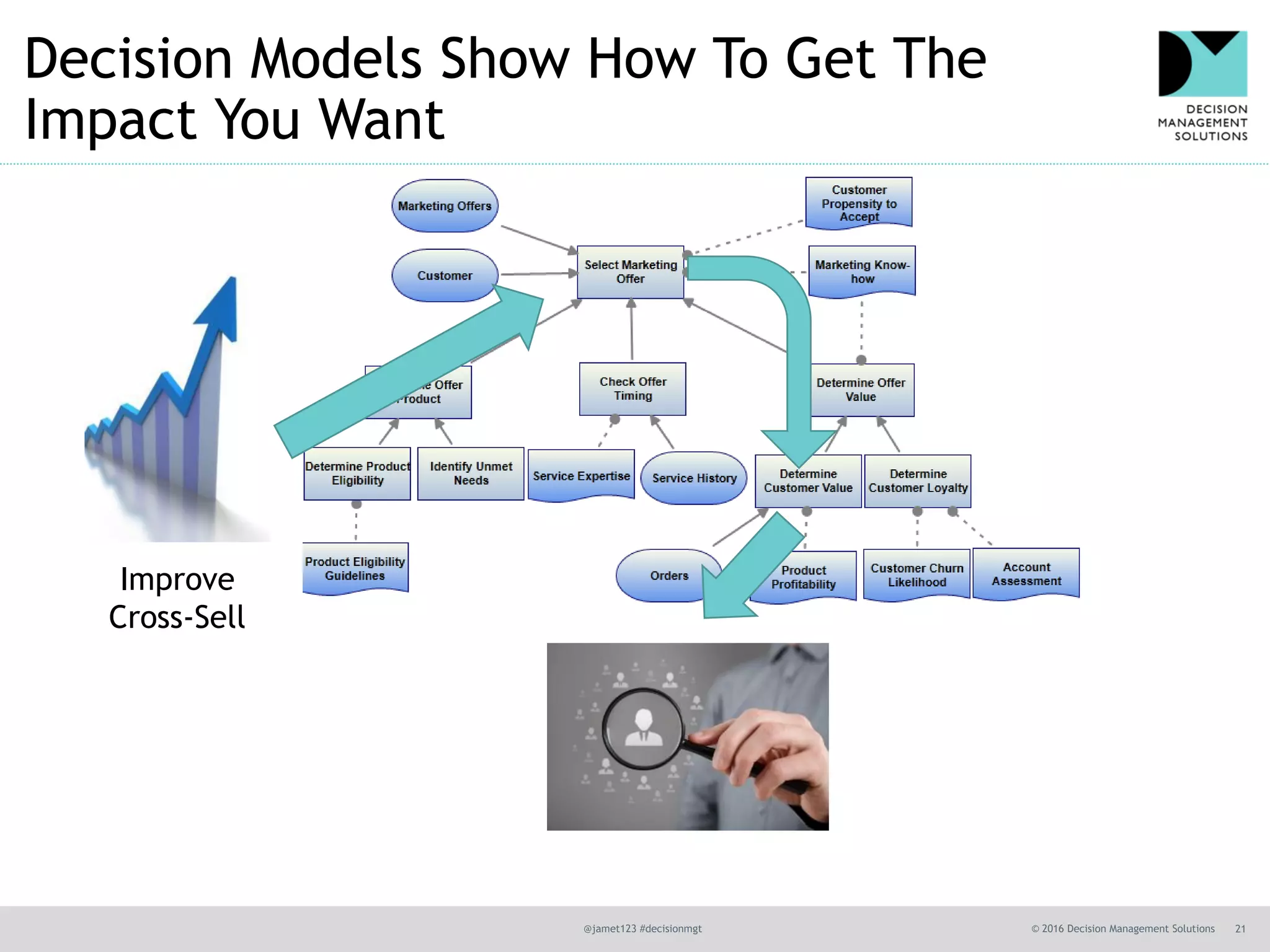

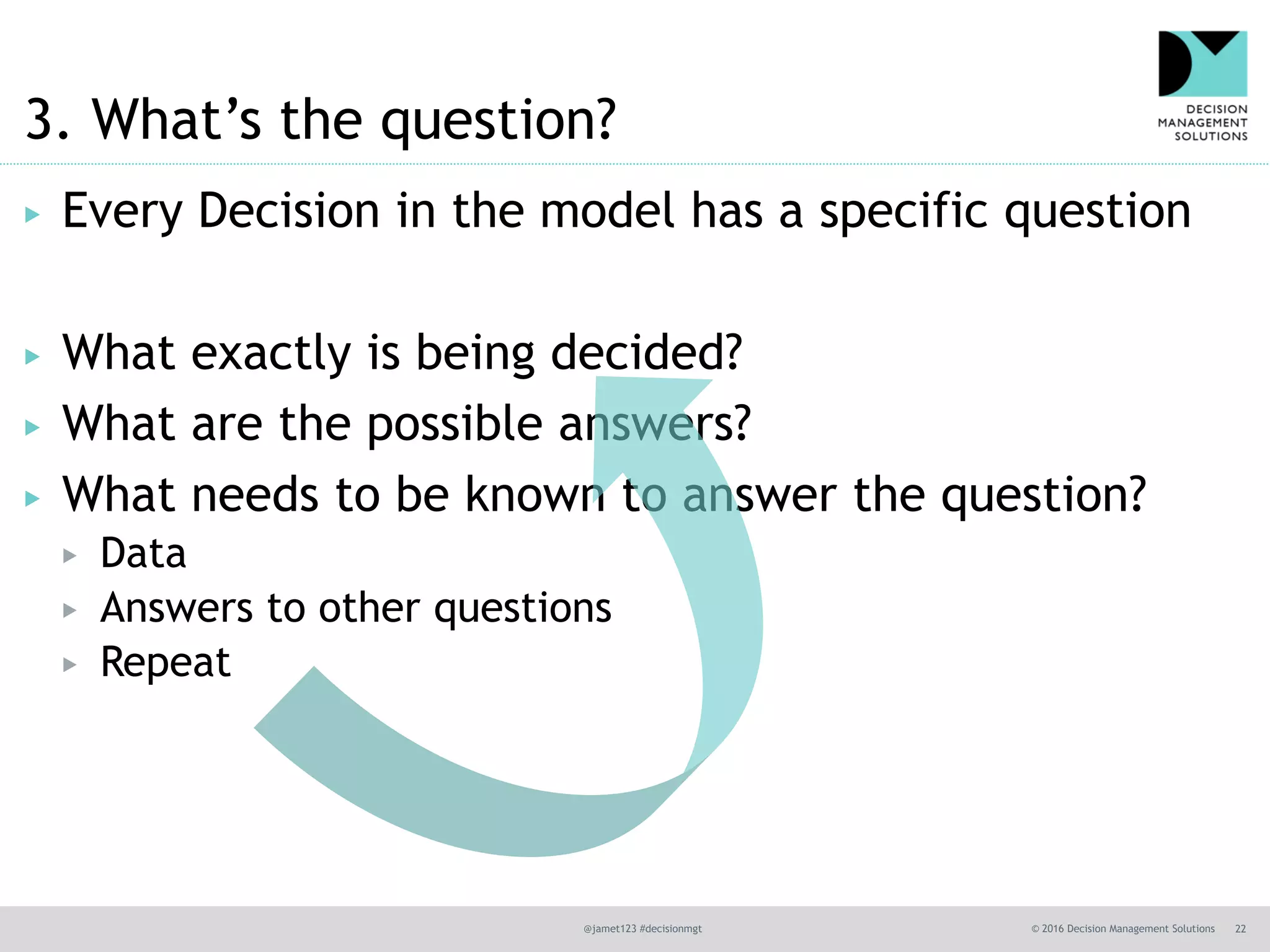

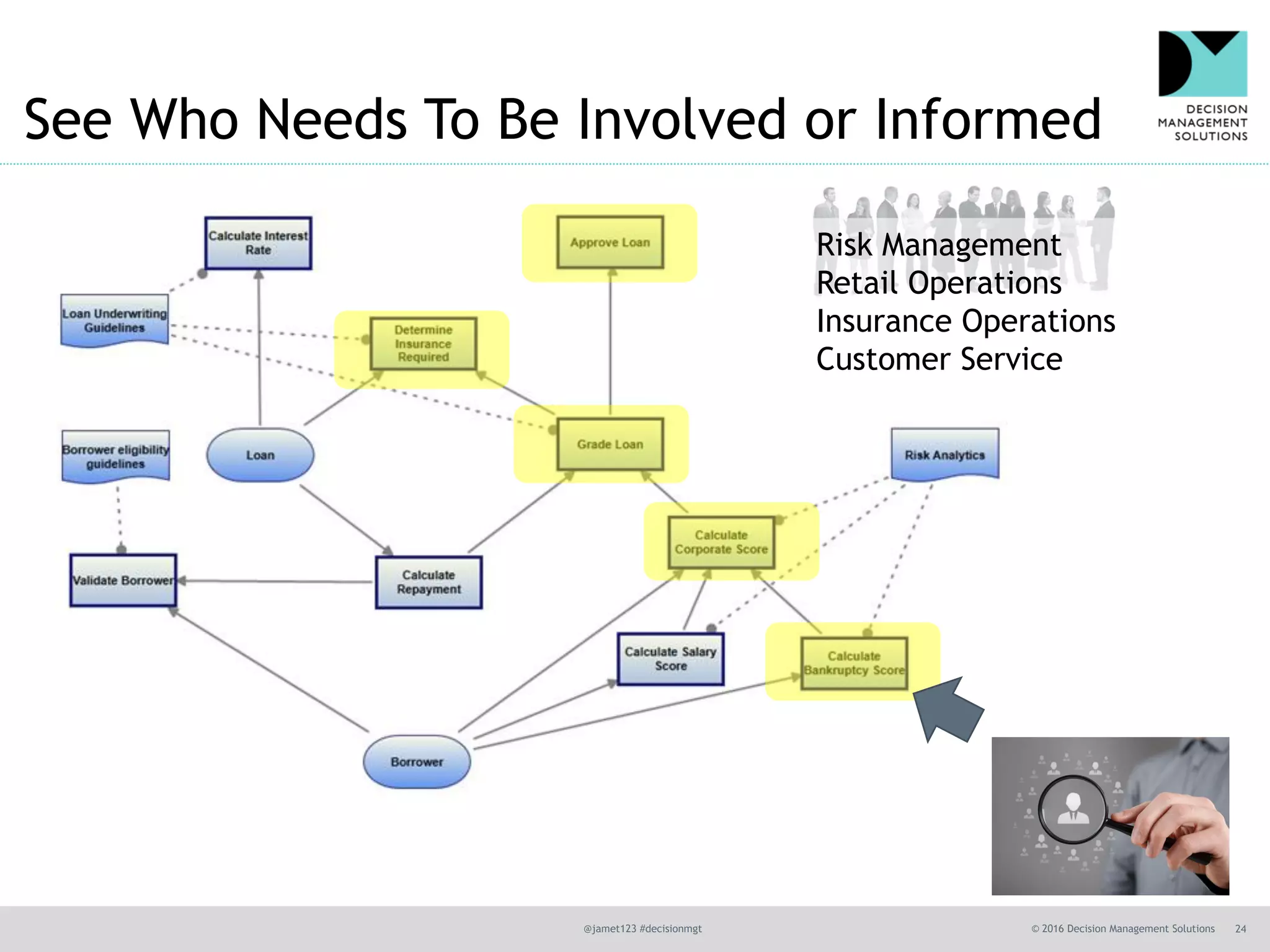

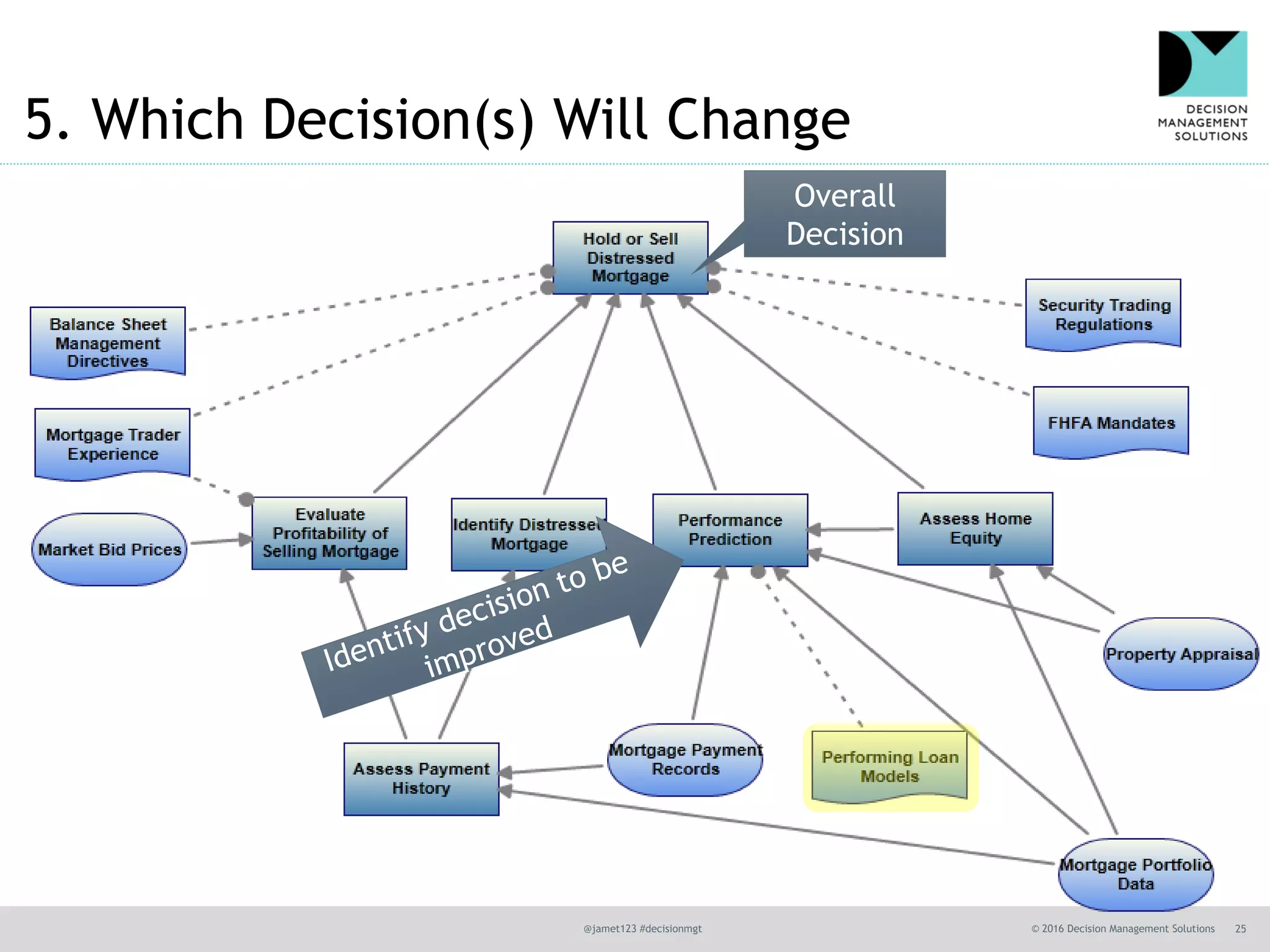

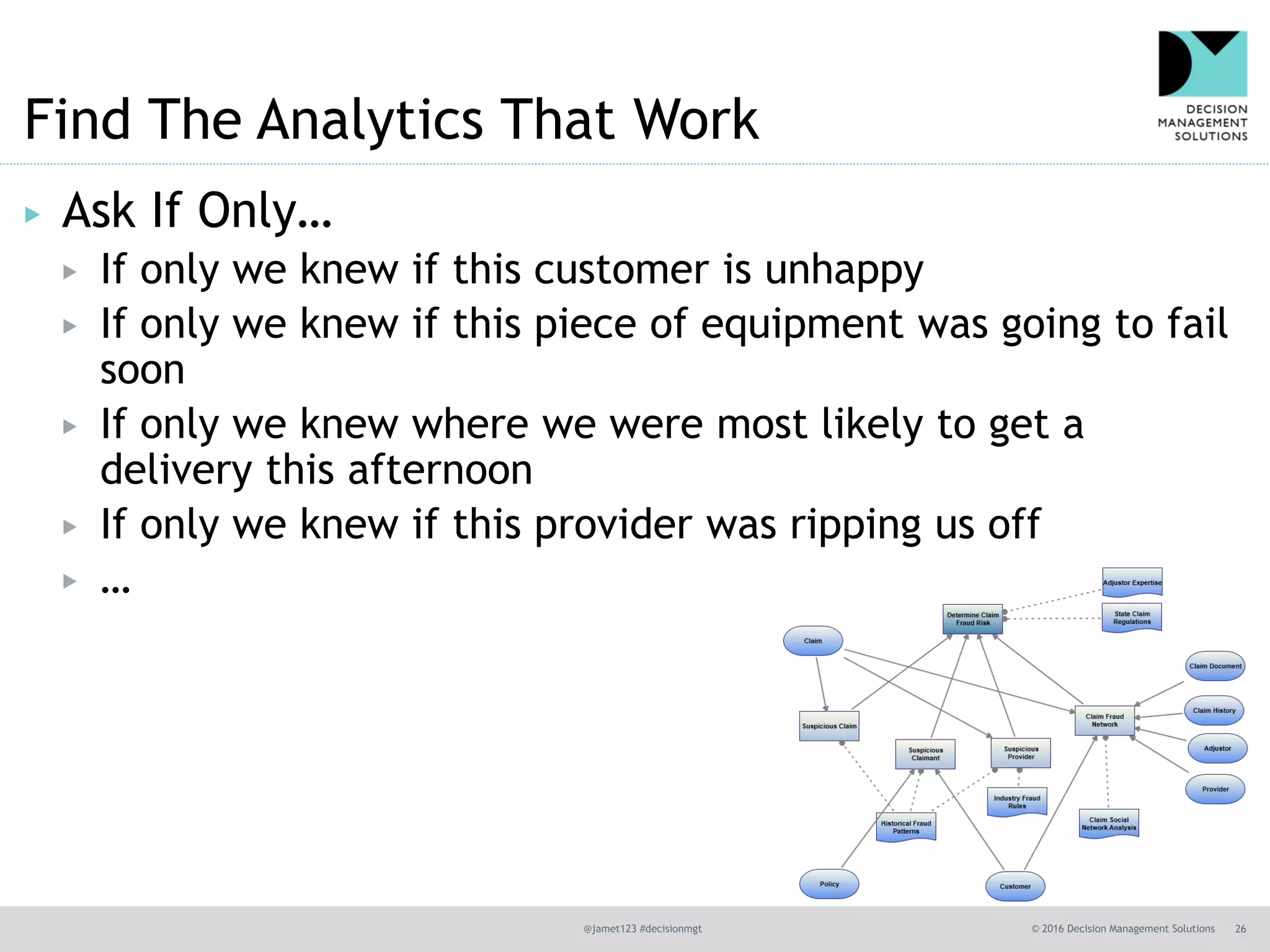

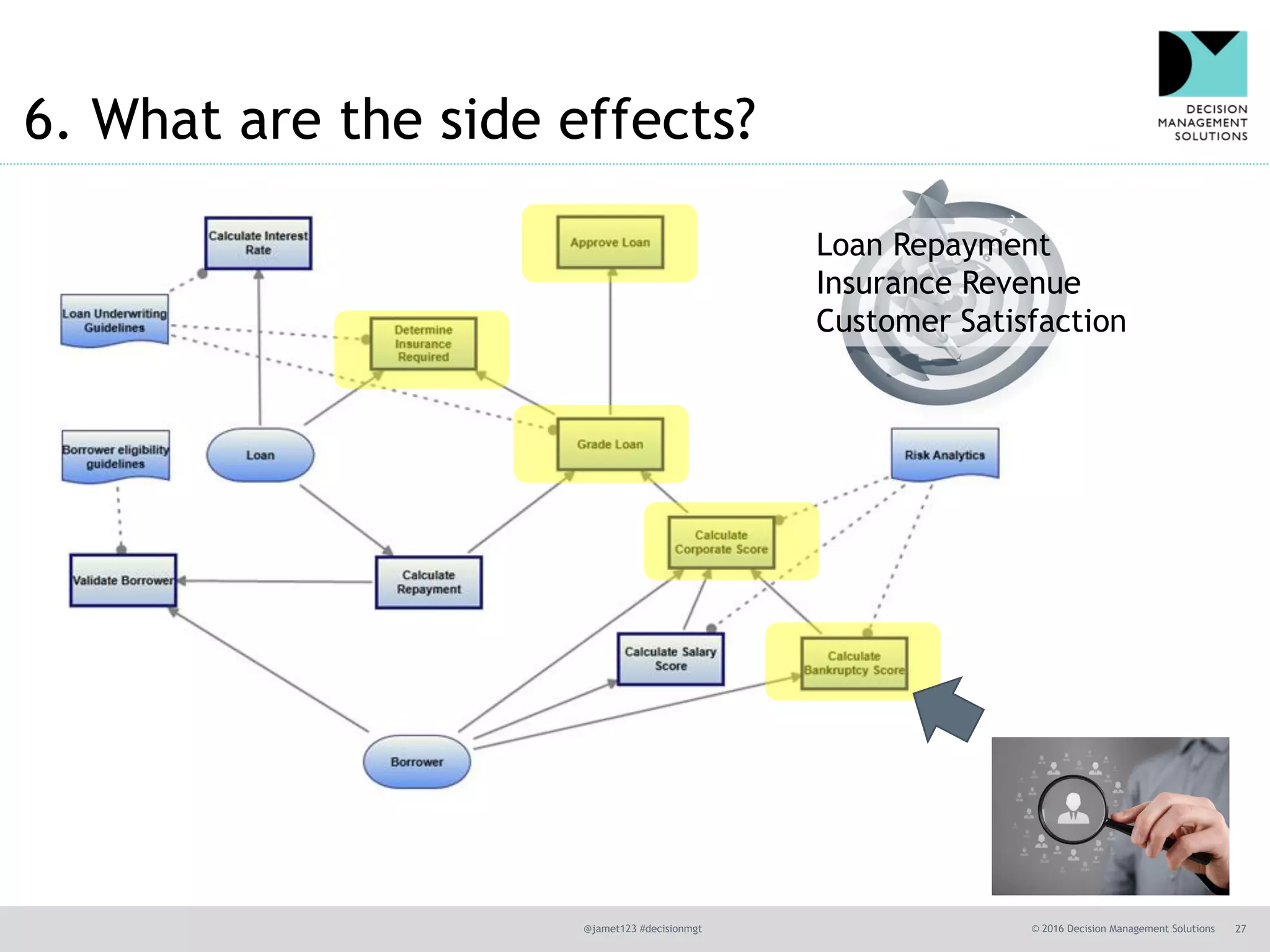

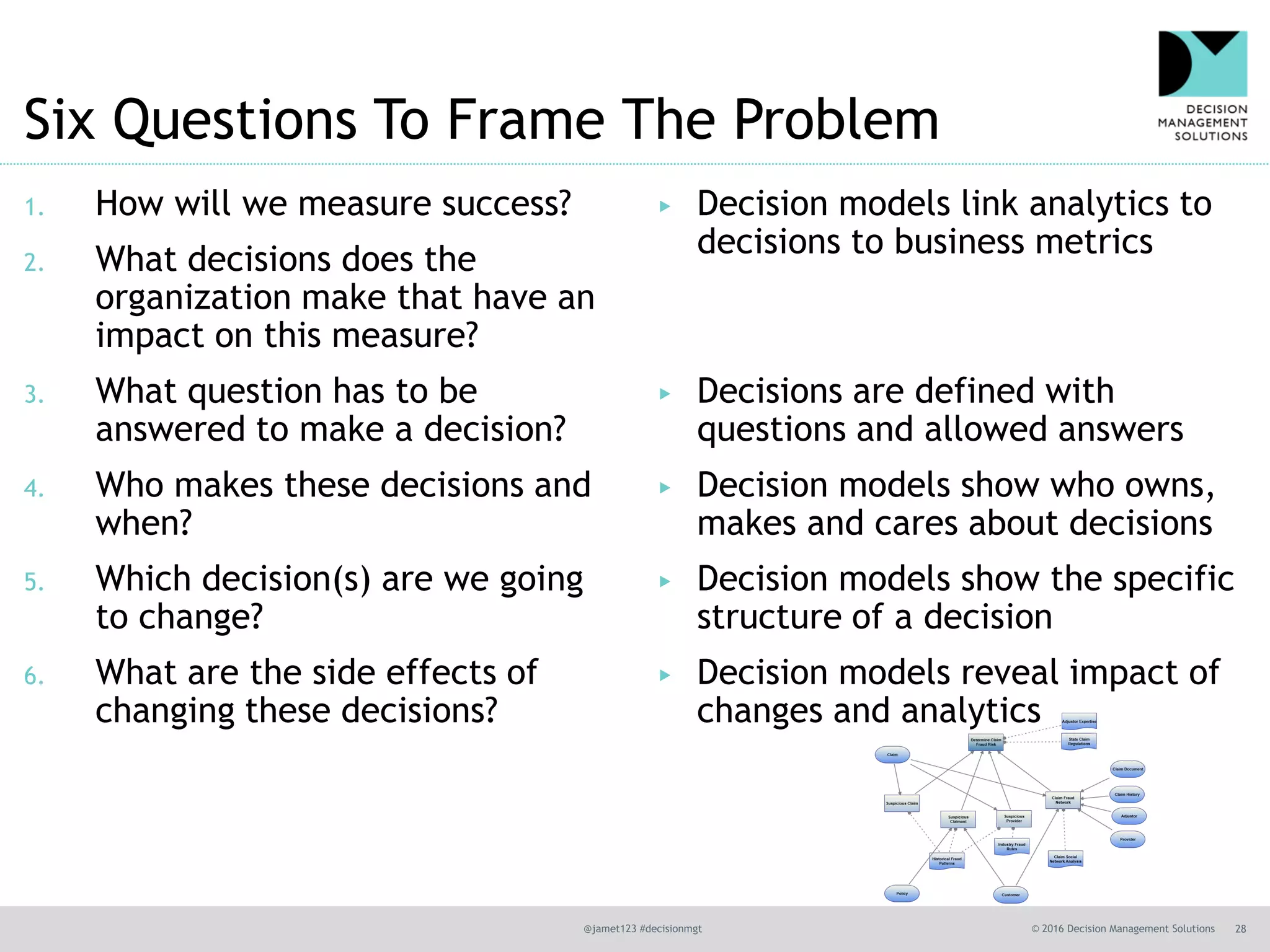

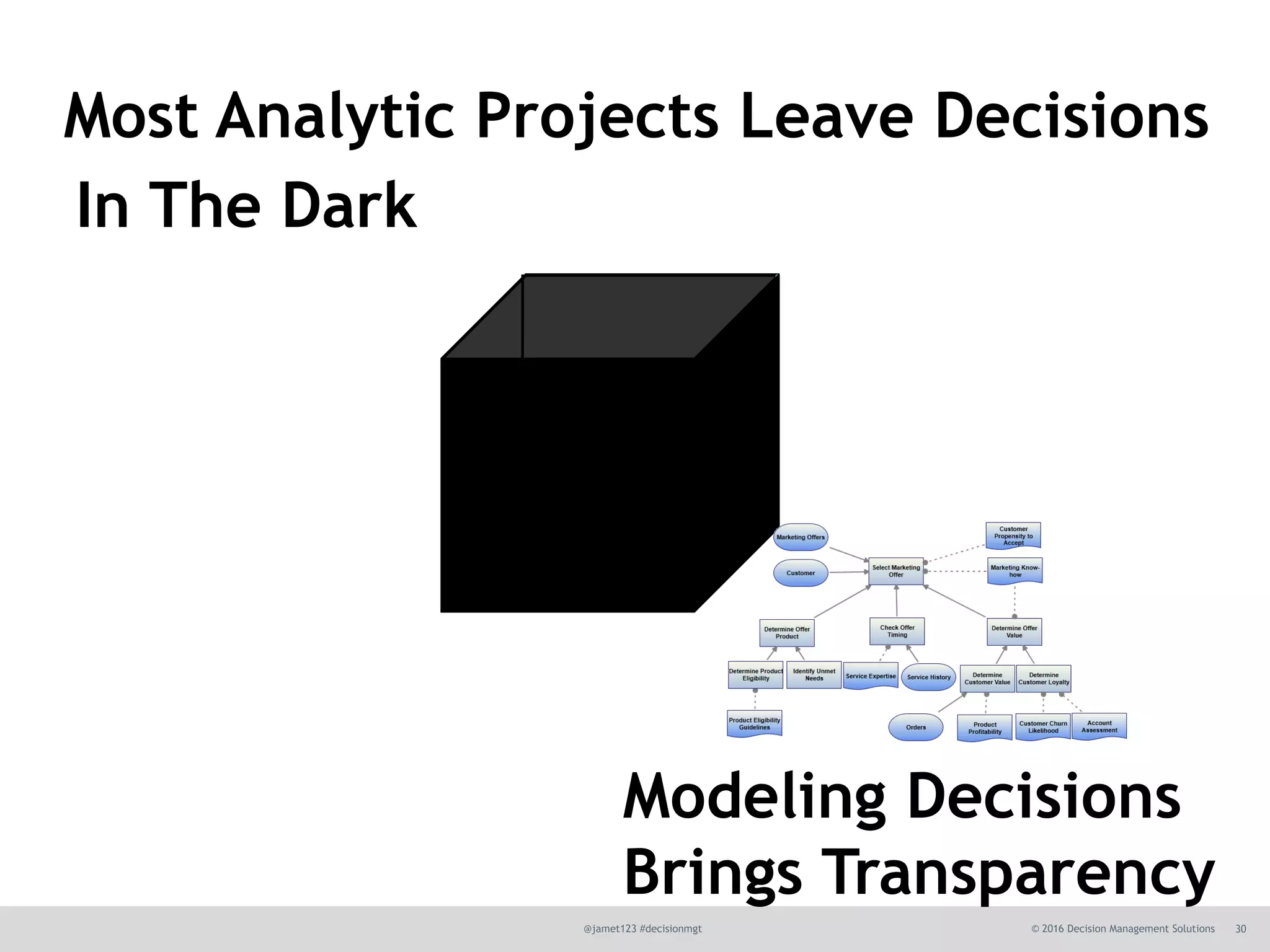

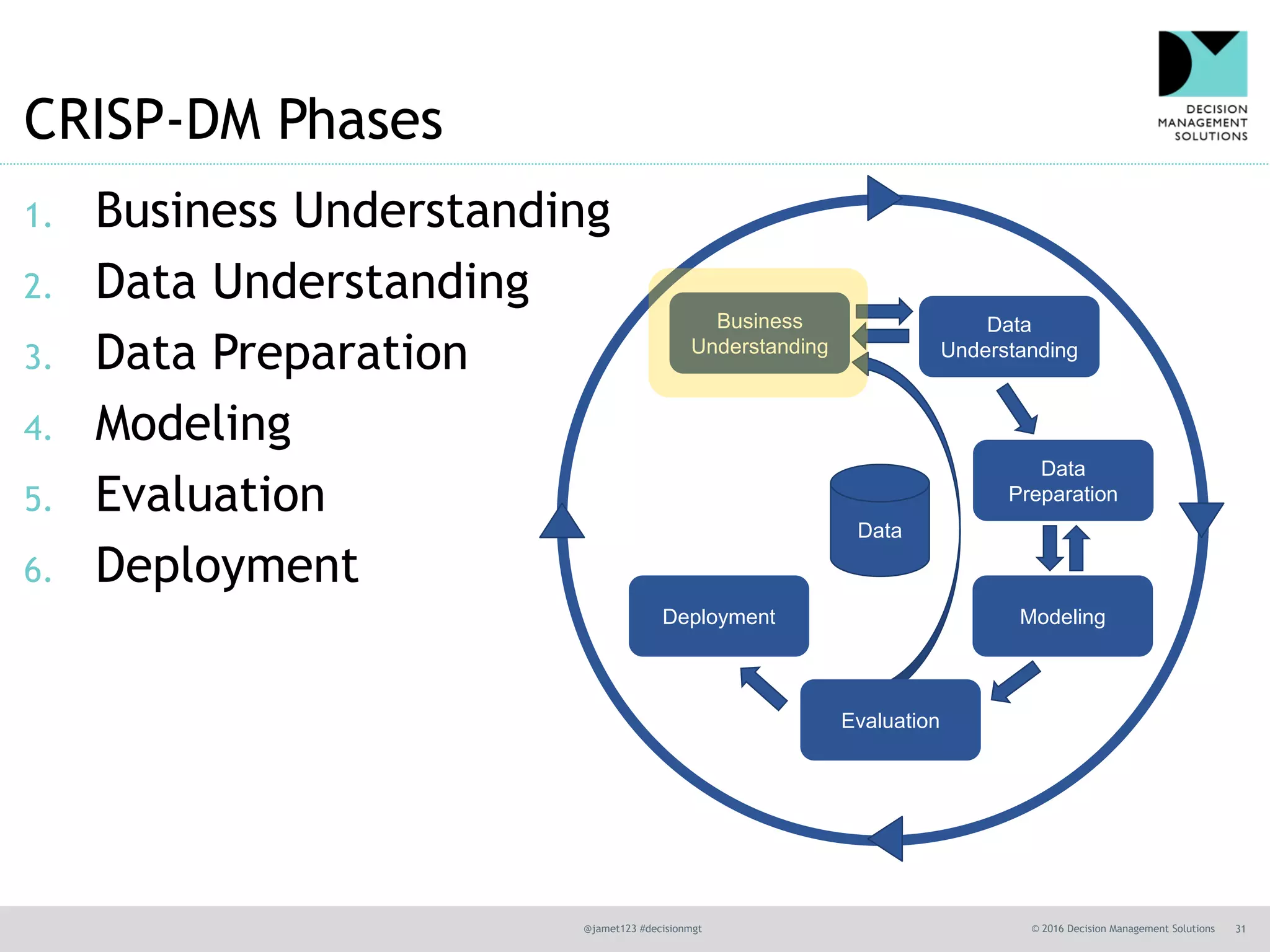

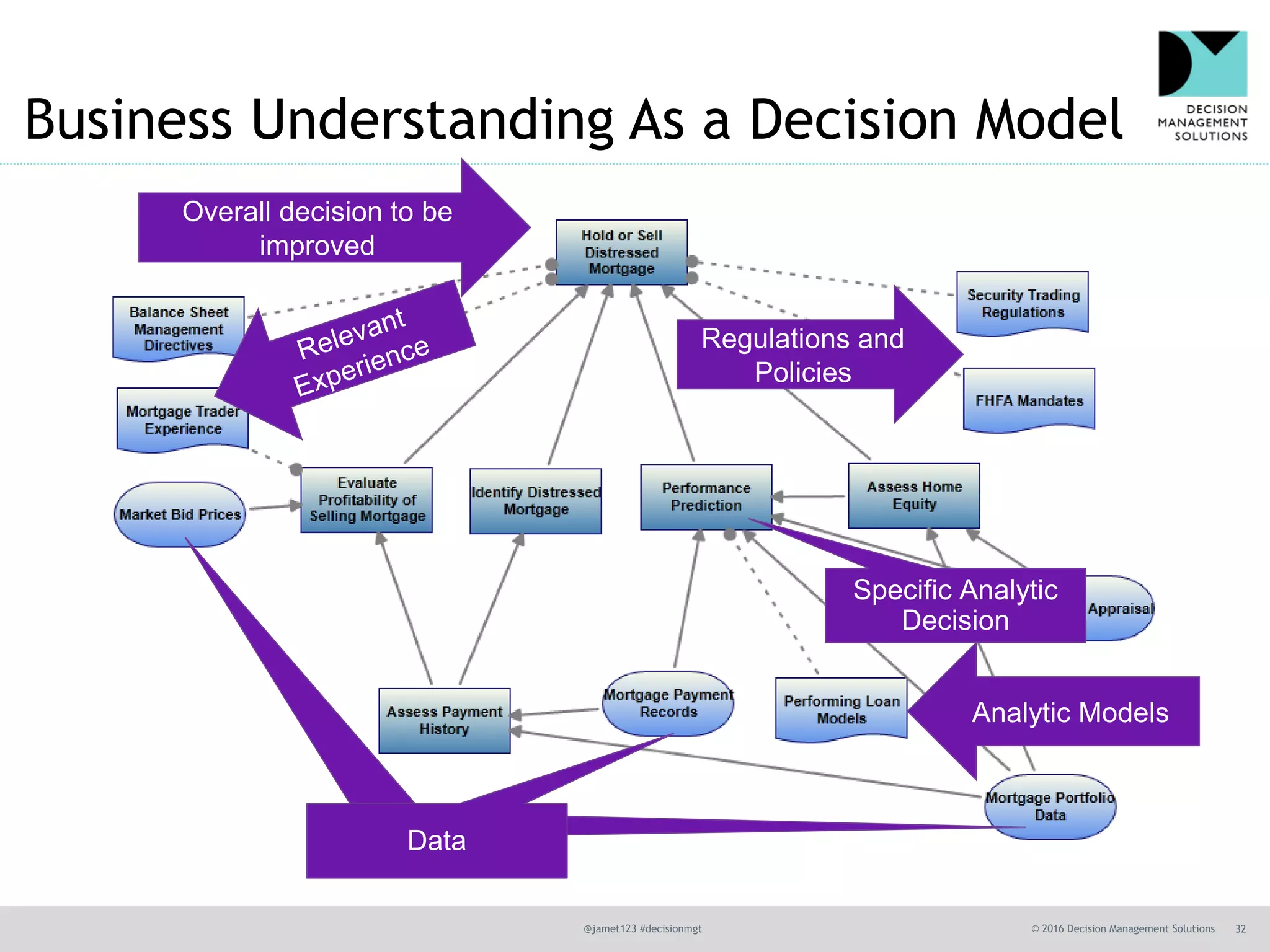

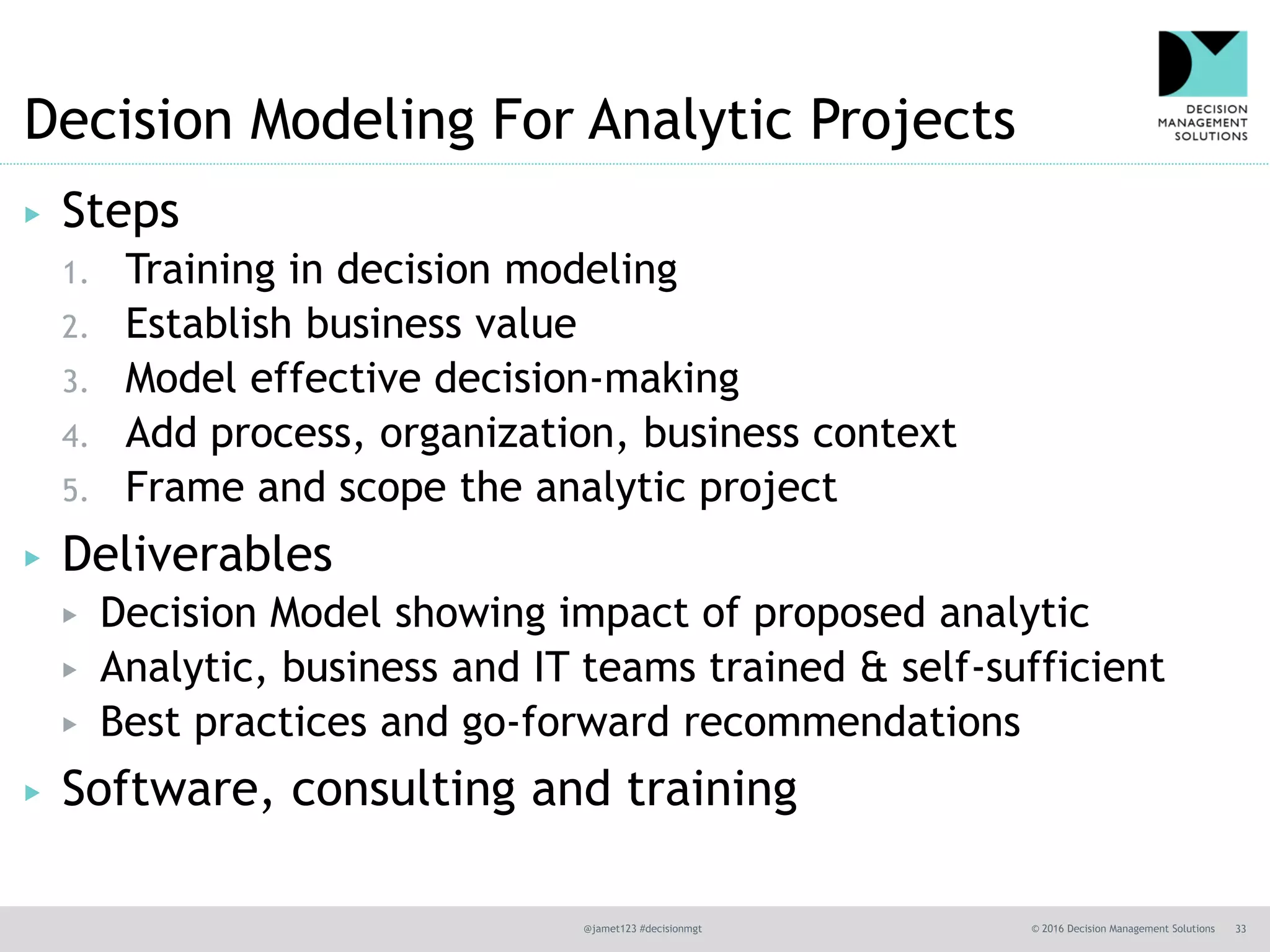

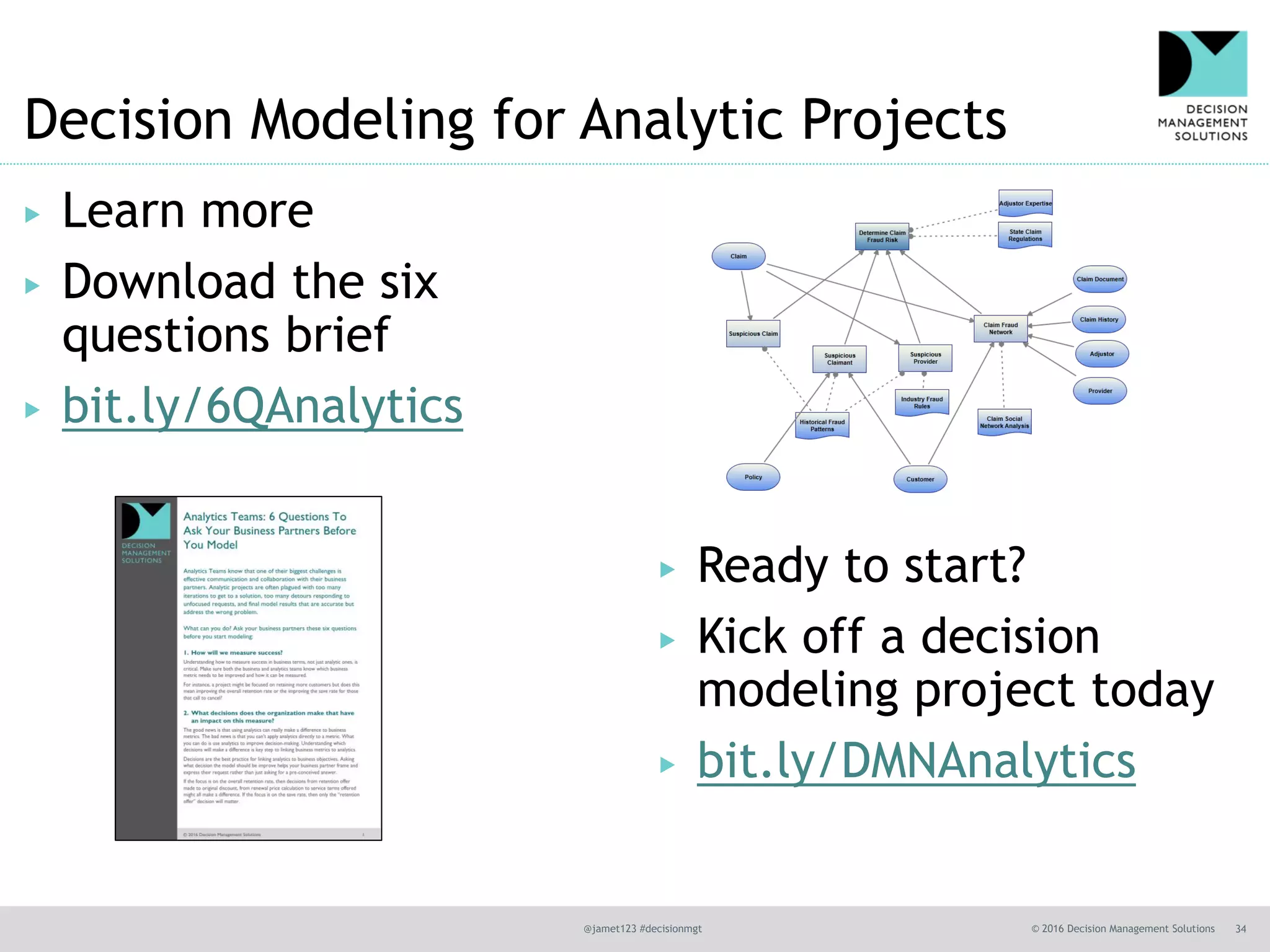

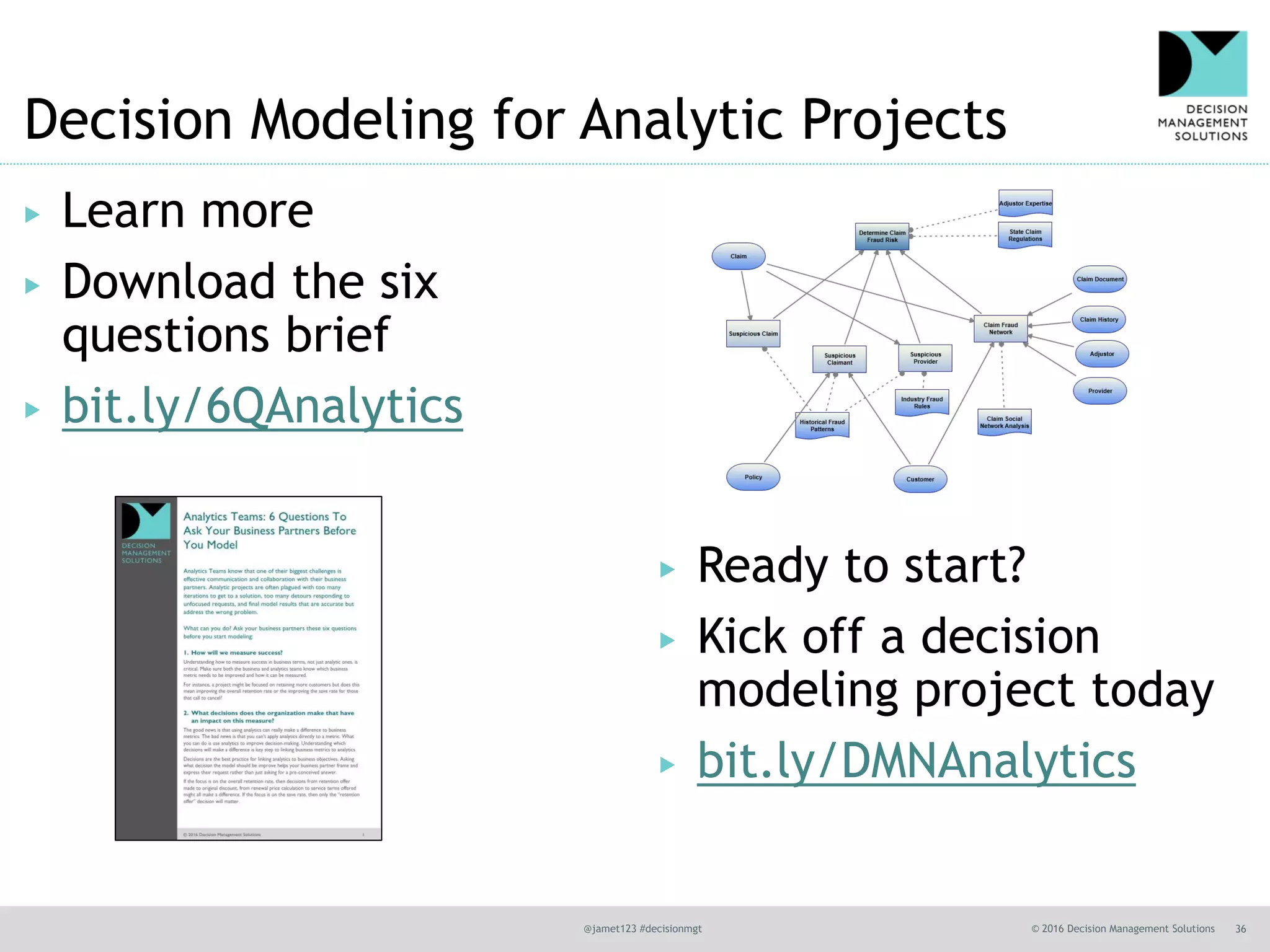

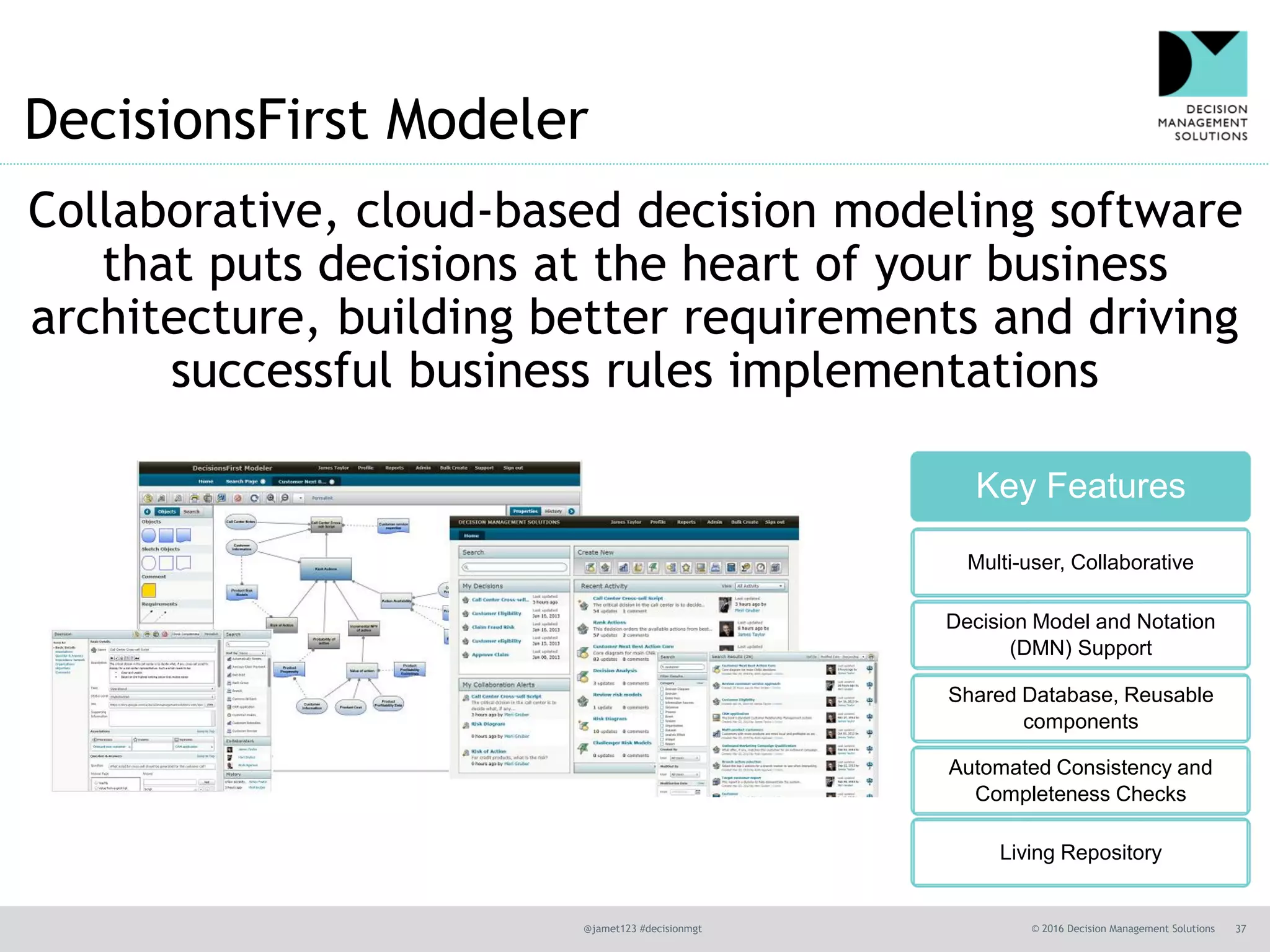

The document outlines the importance of decision modeling in analytics and presents six critical questions that help frame analytic problems and keep the focus on business value. It emphasizes the need for collaboration between analytics teams and business partners to improve both decision-making and the operationalization of analytics. Ultimately, decision models bring transparency, structure, and innovation to business processes, and they link analytics directly to strategic business objectives.