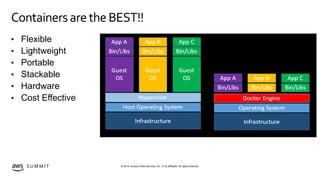

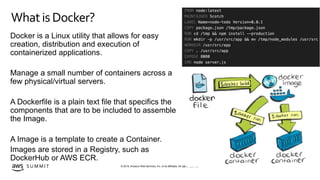

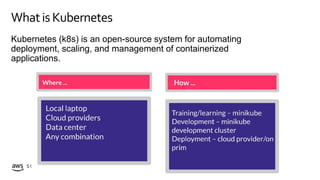

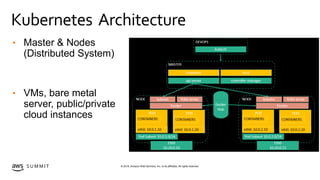

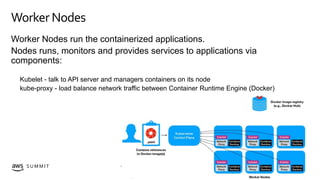

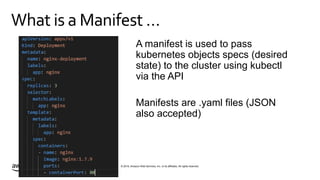

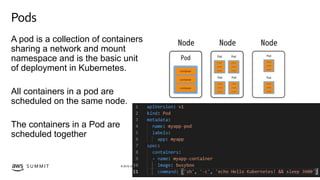

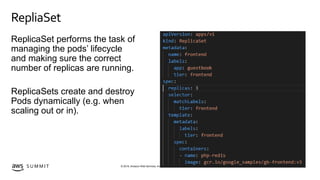

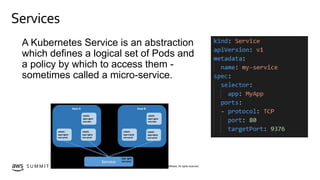

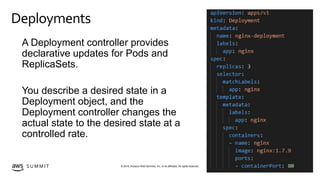

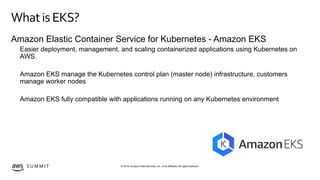

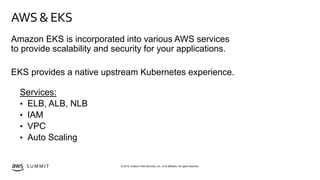

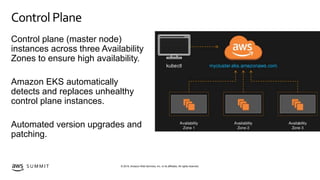

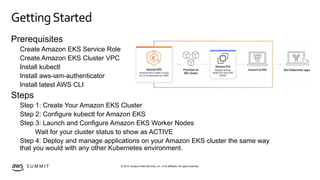

The document gives an overview of Amazon Elastic Kubernetes Service (EKS) and introduces the container and Kubernetes concepts it builds on: the Kubernetes architecture, pods, and services. It covers the benefits of containers, the role Kubernetes plays in managing them, and the steps to get started with EKS on AWS. Its main point is that EKS simplifies deploying and scaling containerized applications, provides a highly available managed control plane, and integrates with other AWS services.
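To make the pod and service concepts concrete, here is a minimal sketch in Python that builds the two manifests as plain dictionaries and checks that the service's label selector matches the pod. The resource names (`web`, `web-svc`), the `nginx:1.25` image, and the helper functions are hypothetical and chosen only for illustration; they do not come from the slides. The field names follow the core Kubernetes `v1` Pod and Service schema.

```python
import json


def make_pod(name, image, labels, port):
    """Build a minimal Pod manifest with one container (illustrative helper)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": port}],
                }
            ]
        },
    }


def make_service(name, selector, port, target_port):
    """Build a minimal Service manifest that routes to pods by label."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "selector": dict(selector),
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


def selects(service, pod):
    """Return True if every selector key/value appears in the pod's labels.

    This mirrors equality-based label selection. An empty selector is treated
    as selecting nothing here, which is a simplification for this sketch.
    """
    selector = service["spec"]["selector"]
    labels = pod["metadata"].get("labels", {})
    return bool(selector) and all(labels.get(k) == v for k, v in selector.items())


# Hypothetical example resources: one pod and the service that fronts it.
pod = make_pod("web", "nginx:1.25", {"app": "web"}, 80)
service = make_service("web-svc", {"app": "web"}, 80, 80)

if __name__ == "__main__":
    # JSON is accepted wherever Kubernetes accepts YAML manifests.
    print(json.dumps(pod, indent=2))
    print(json.dumps(service, indent=2))
    print("service selects pod:", selects(service, pod))
```

The pod carries the label `app: web`, and the service finds its backends through the same label rather than by pod name. That indirection is what lets pods be replaced or scaled without reconfiguring the service.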