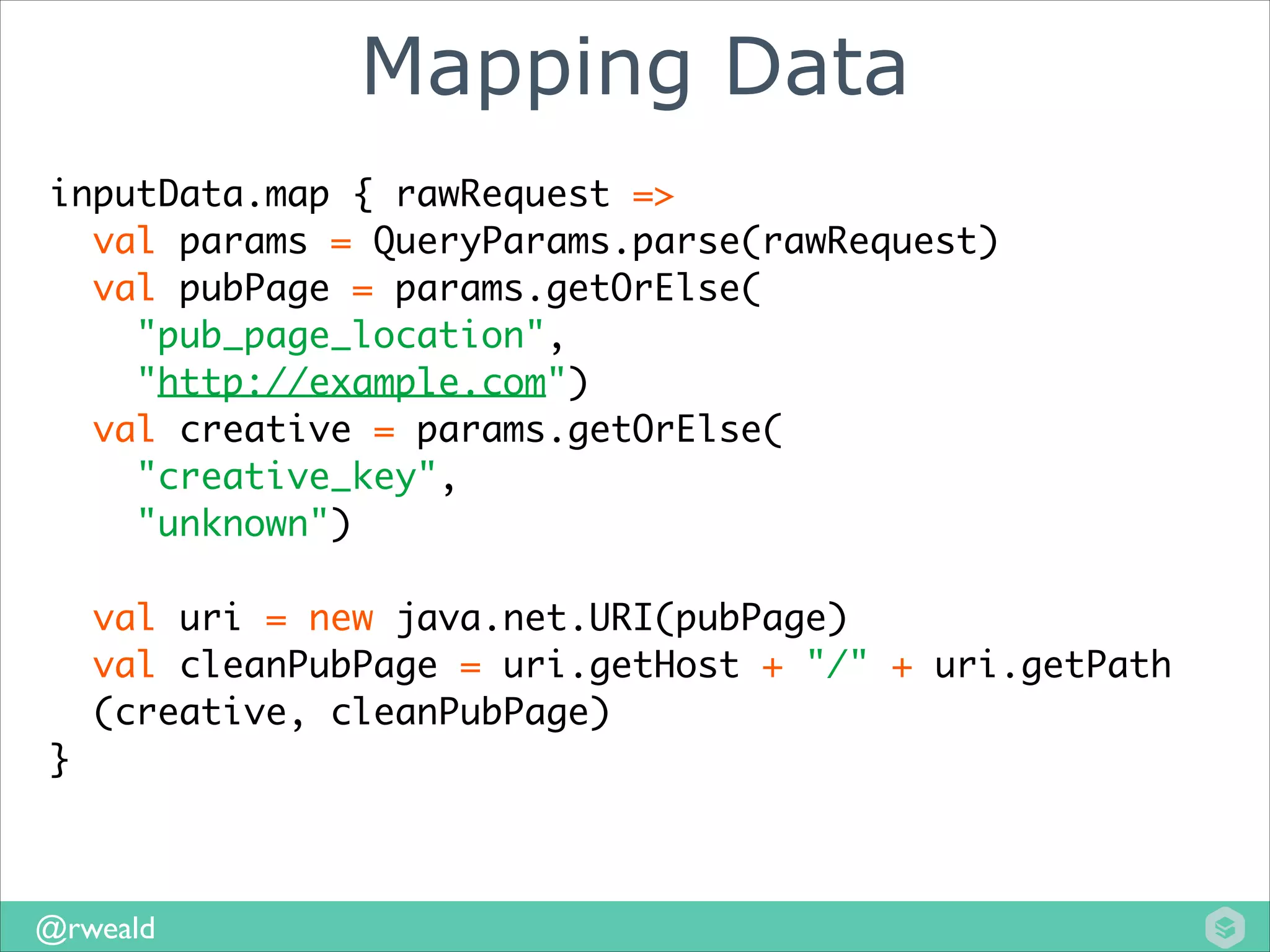

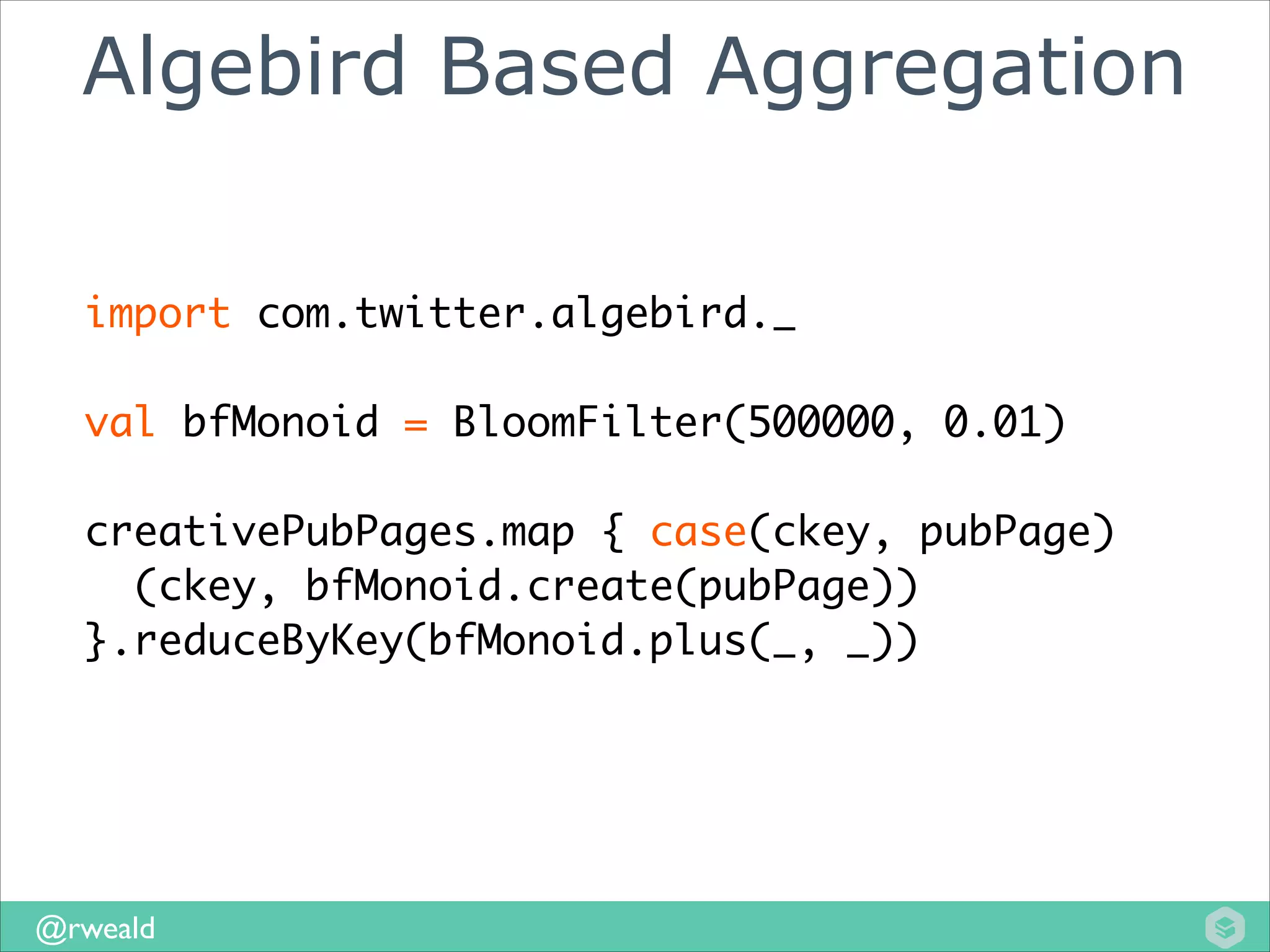

This document discusses common patterns in Spark streaming jobs, including mapping data, aggregating using monoids, and storing results. It describes using monoids to abstract aggregation, allowing different implementations like Bloom filters. It also discusses using dependency injection to make storage pluggable for different environments. The talk suggests potential additions to Spark's API to directly support these patterns.

![Basic Aggregation

val sum: (Set[String], Set[String]) =>

Set[String] = _ ++ _

!

creativePubPages.map { case(ckey, pubPage)

(ckey, Set(pubPage))

}.reduceByKey(sum)

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-14-2048.jpg)

![WTF is a Monoid?

trait Monoid[T] {

def zero: T

def plus(r: T, l: T): T

}

* Just need to make sure plus is associative.

(1+ 5) + 2 == (2 + 1) + 5

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-19-2048.jpg)

![Monoid Example

SetMonoid extends Monoid[Set[String]] {

def zero = Set.empty[String]

def plus(l: Set[String], r: Set[String]) = l ++ r

}

!

SetMonoid.plus(Set("a"), Set("b"))

//returns Set("a", "b")

!

SetMonoid.plus(Set("a"), Set("a"))

//returns Set("a")

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-20-2048.jpg)

![Algebird Based Aggregation

val aggregator = new Monoid[(BF, BF)] {

def zero = (bfMonoid.zero, bfMonoid.zero)

def plus(l: (BF, BF), r: (BF, BF)) = {

(bfMonoid.plus(l._1, r._1),

bfMonoid.plus(l._2, r._2))

}

}

!

creativePubPages.map { case(ckey, pubPage, userId)

(

ckey,

bfMonoid.create(pubPage),

bfMonoid.create(userID)

)

}.reduceByKey(aggregator.plus(_, _))

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-24-2048.jpg)

![Storage API

trait MergeableStore[K, V] {

def get(key: K): V

def put(kv: (K,V)): V

/*

* Should follow same associative property

* as our Monoid from earlier

*/

def merge(kv: (K,V)): V

}

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-30-2048.jpg)

![Storing Spark Results

def saveResults(result: DStream[String, BF],

store: HBaseStore[String, BF]) = {

result.foreach { rdd =>

rdd.foreach { element =>

val (keys, value) = element

store.merge(keys, impressions)

}

}

}

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-32-2048.jpg)

![Generic Storage Method

def saveResults(result: DStream[String, BF],

store: StorageFactory) = {

val store = StorageFactory.create

result.foreach { rdd =>

rdd.foreach { element =>

val (keys, value) = element

store.merge(keys, impressions)

}

}

}

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-36-2048.jpg)

![DI the Store You Need!

trait StorageFactory {

def create: Store[String, BF]

}

!

class DevModule extends ScalaModule {

def configure() {

bind[StorageFactory].to[InMemoryStorageFactory]

}

}

!

class ProdModule extends ScalaModule {

def configure() {

bind[StorageFactory].to[HBaseStorageFactory]

}

}

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-38-2048.jpg)

![Potential API additions?

class PairDStreamFunctions[K, V] {

def aggregateByKey(aggregator: Monoid[V])

def store(store: MergeableStore[K, V])

}

@rweald](https://image.slidesharecdn.com/abstractionsforsparkstreaming-sparkmeetuppresentation-140120102704-phpapp01/75/Monoids-Store-and-Dependency-Injection-Abstractions-for-Spark-Streaming-Jobs-40-2048.jpg)