A Disaster Tolerant Cloud Computing Model as a Disaster Survival Methodology - Chad M Lawler PhD

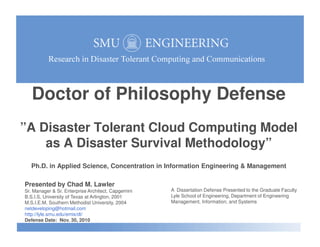

- 1. Doctor of Philosophy Defense: “A Disaster Tolerant Cloud Computing Model as a Disaster Survival Methodology”
  - A Dissertation Defense Presented to the Graduate Faculty, Lyle School of Engineering, Department of Engineering Management, Information, and Systems
  - Ph.D. in Applied Science, Concentration in Information Engineering & Management
  - Presented by Chad M. Lawler, Sr. Manager & Sr. Enterprise Architect, Capgemini
  - B.S.I.S., University of Texas at Arlington, 2001; M.S.I.E.M., Southern Methodist University, 2004
  - netdeveloping@hotmail.com | http://lyle.smu.edu/emis/dt/
  - Defense Date: Nov. 30, 2010

- 2. Doctor of Philosophy Defense Outline
  - Introduction & Research Objectives
  - Overview & Background
  - Problem Definition
  - Approach, Methodology & Model
  - Validation and Verification
  - Summary and Conclusions

- 3. Supervisory Committee
  - Dr. Stephen Szygenda, Ph.D., Professor, Committee Chairman and Dissertation Director
  - Dr. Mitchell Thornton, Ph.D., Professor
  - Dr. James Hinderer, Ph.D., Adjunct Professor
  - Dr. Sukumaran Nair, Ph.D., Professor
  - Dr. Jerrell Stracener, Ph.D., Scholar in Residence

- 4. Chad Lawler: Brief Bio & Background
  - Senior Manager & Enterprise Architect, Capgemini, Dallas, TX
  - Fourteen years of IT experience in consulting, product development, management & outsourcing
  - Technical focus on Internet architecture and cloud computing
  - Masters in Information Engineering & Management, SMU, 2004
  - Engaged in disaster tolerant research from Spring 2005 to Fall 2010
  - Certified Enterprise Architect by The Open Group (TOGAF); ITIL certified by HP; Microsoft Certified Systems Engineer (MCSE)

- 5. Research Objectives (for the commercial business environment)
  - Define challenges with traditional IT Disaster Recovery (DR) approaches
  - Uncover the primary causes of DR failure
  - Address DR challenges with a Disaster Tolerant approach & model
  - Develop a Disaster Tolerant model:
    - Methodology focused on IT service continuity throughout the occurrence of a disaster, not on recovery
    - Disaster Tolerant model & corresponding infrastructure architecture
    - Focused on web (HTTP) applications
  - Validate functionality:
    - Test and validate model functionality
    - Focus not on mathematical or academic testing, but on actual functional implementation testing

- 6. Doctor of Philosophy Defense Outline (same as slide 2)

- 7. Research Overview
  - Research findings:
    - Traditional DR is not an effective approach to mitigating the risk of downtime
    - DR instead has a high failure rate in the event of an actual traumatic disaster
    - Failed recovery efforts in disasters waste business investment in DR, rendering DR ineffective and wasting capital & resources
    - DR fails to meet its primary goal of IT service recovery
  - Proposed Disaster Tolerant Computing model:
    - Provides an updated Disaster Tolerant Computing (DTC) approach
    - Helps address the challenges associated with DR
    - Leverages new cloud computing technologies to reduce cost and complexity
  - Significance of this research:
    - Disaster Recovery ineffectiveness
    - Challenges of achieving high availability
    - Applicability of cloud computing to disaster tolerance
    - Validity of the disaster-tolerant computing approach
    - Verification of the functionality of the proposed Disaster Tolerant Cloud Computing model

- 8. Degree Coursework & Research
  - PhD degree credit hour requirement completed:
    - 54 course credit hours
    - 36 hours of doctoral dissertation research & special studies
    - Coursework and research spanning 2005 to 2010
  - Supporting research publications:
    - 6 conference publications from 2007 to 2010
    - 2 academic journal publications, 2008 & 2009
    - Total of 8 research publications supporting the dissertation
  - Completed prior education:
    - Masters, Information Engineering Management, SMU, 2004
    - B.S. Business Information Systems, University of Texas at Arlington, 2001

- 9. Supporting Research Publications
  1. “Realities of Disaster Recovery: IT Systems & Disaster Preparedness.” CITSA 2007: The 4th International Conference on Cybernetics and Information Technologies, Systems & Applications, jointly with The 5th International Conference on Computing, Communications and Control Technologies (CCCT 2007), July 12-15, 2007, Orlando, Florida. Authors: Chad M. Lawler, Stephen A. Szygenda
  2. “Components of Continuous IT Availability & Disaster Tolerant Computing.” IEEE ConTech 2007 Homeland Security: 2007 IEEE Conference on Technologies for Homeland Security, May 16-17, 2007, Woburn, MA. Authors: Chad M. Lawler, Stephen A. Szygenda
  3. “Disaster Recovery, IT Systems and Disaster Preparedness.” IAMOT 2007: 16th International Conference on Management of Technology, Miami Beach, Florida, USA, May 13-17, 2007. Authors: Chad M. Lawler, Stephen A. Szygenda
  4. “Components and Analysis of Disaster Tolerant Computing.” WIA 2007: The 3rd International Workshop on Information Assurance, New Orleans, LA, USA, April 11-13, 2007. Authors: Chad M. Lawler, Michael Harper, Mitchell A. Thornton
  5. “Techniques for Disaster Tolerant Information Technology Systems.” IEEE SysCon 2007: 1st Annual IEEE Systems Conference, April 9-12, 2007, Waikiki, Honolulu, Hawaii. Authors: Chad M. Lawler, Stephen A. Szygenda, Mitchell A. Thornton
  6. “Disaster Tolerant Computing with a Hybrid Public-Private Cloud Computing Load Balanced Geographical Duplicate & Match Model.” SDPS 2010, Society for Design & Process Science, UT Dallas, Dallas, TX, June 6-11, 2010. Author: Chad M. Lawler

- 10. Supporting Journal Publications
  1. “Components of Disaster Tolerant Computing: Analysis of Disaster Recovery, IT Application Downtime & Executive Visibility.” International Journal of Business Information Systems (IJBIS), ISSN (Online): 1746-0980, ISSN (Print): 1746-0972, Volume 3, Issue 2, 2008. Authors: Chad M. Lawler, Michael A. Harper, Mitchell A. Thornton, Stephen A. Szygenda
  2. “Challenges & Realities of Disaster Recovery: Perceived Business Value, Executive Visibility & Capital Investment.” International Journal of Business Information Systems (IJBIS), ISSN (Online): 1746-0980, ISSN (Print): 1746-0972, Volume 3, Issue 4, 2008. Authors: Chad M. Lawler & Stephen A. Szygenda

- 11. Technology Challenge: Widening Gap (Source: Cocchiara, Richard, IBM 2007)

- 12. Top IT Datacenter Challenges (Source: IDC 2007)

- 13. Mission Critical Business Functions
  - Most ICT operations are mission critical
  - Mission critical business functions represent 60% of all IT service functionality, varying by industry type from 49.8% to 69%
  - More than half of all IT service functions are critical to business availability & continuance!
  - Mission Critical Business Functions by Industry (Source: Gartner 2006)

- 14. Critical Application Downtime Tolerance
  - Most businesses can tolerate only 1-4 hours of critical application downtime
  - Business Tolerance for Critical Application Downtime (Source: Enterprise Strategy Group, 2008)

- 15. Overview: Disaster Recovery
  - Disaster Recovery (DR):
    - Goal: resume business functions and IT service operations after a disaster occurs
    - Fundamentally a risk management discipline
    - Subset of Business Continuity Planning (BCP), intended to reduce losses from an outage
    - Commonly uses IT services along with fault tolerant systems to achieve recovery
    - With traditional DR approaches, there is a delay before IT operations can continue
    - Success depends on the ability to restore, replace, or re-create critical systems
  - Conventional, established DR practices:
    - The enabling approach is storing offsite copies of data in secondary locations
    - Focus on recovery of failed services, systems and applications, based on the Recovery Time Objective (RTO) and Recovery Point Objective (RPO)
    - The process often follows a “Backup-Transport-Restore-Recover” (BTRR) methodology
    - Traditional DR methods include tape backup, expedited hardware replacement, vendor recovery sites, and data vaulting or hot-sites
    - Creation & execution of a DR plan to resume interrupted IT & business functions
    - Requires limiting three key elements of downtime: frequency, duration & scope

- 16. RPO & RTO (DR RPO/RTO Timeline)
  - Recovery Point Objective (RPO): the amount of acceptable data loss, measured in terms of how much data can be lost before the business is too adversely affected. RPO indicates the point in time to which a business is able to recover data after a systems failure, relative to the time of the failure itself. It quantifies how much data loss is acceptable without grossly affecting the business through lost business transaction data.
  - Recovery Time Objective (RTO): the acceptable amount of systems downtime, defining the total time from the disaster until the business can resume operations.
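
The two objectives can be checked against a single incident with simple time arithmetic. The sketch below is a minimal illustration only. The function names and timestamps are hypothetical, and the 4-hour objectives are an assumption chosen to echo the 1-4 hour tolerance on slide 14.

```python
from datetime import datetime, timedelta


def achieved_rpo(last_recoverable_copy: datetime, failure: datetime) -> timedelta:
    """Data-loss window: transactions after the last recoverable copy are lost."""
    return failure - last_recoverable_copy


def achieved_rto(failure: datetime, operations_resumed: datetime) -> timedelta:
    """Downtime: time from the failure until the business can resume operations."""
    return operations_resumed - failure


def meets_objectives(last_copy: datetime, failure: datetime, resumed: datetime,
                     rpo: timedelta, rto: timedelta) -> bool:
    """True only if both the data-loss window and the downtime are within objectives."""
    return (achieved_rpo(last_copy, failure) <= rpo
            and achieved_rto(failure, resumed) <= rto)


if __name__ == "__main__":
    # Hypothetical incident: nightly backup at midnight, failure at 09:30,
    # operations resumed at 13:00.
    last_backup = datetime(2010, 11, 30, 0, 0)
    failure = datetime(2010, 11, 30, 9, 30)
    resumed = datetime(2010, 11, 30, 13, 0)
    print(achieved_rpo(last_backup, failure))   # 9:30:00
    print(achieved_rto(failure, resumed))       # 3:30:00
    print(meets_objectives(last_backup, failure, resumed,
                           rpo=timedelta(hours=4), rto=timedelta(hours=4)))  # False
```

In this example the RTO is met but the nightly backup schedule alone breaks a 4-hour RPO. This is why the two objectives are tracked separately.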

- 17. Cloud Computing
  - A model for enabling convenient, on-demand network access to a shared pool of configurable computing resources (networks, servers, storage, applications, services) that can be rapidly provisioned and released with minimal management effort or service provider interaction
  - Broad concept of accessing IT services on demand on a subscription basis
  - Shared resources provisioned over the Internet with the benefit of economies of scale
  - Metaphor for the Internet, based on the common ‘cloud’ depiction of the Internet in diagrams; abstracts the underlying infrastructure that “the cloud” conceals
  - Enables virtual computing resources
  - Essential characteristics: abstraction of infrastructure; shared/pooled resources leveraged by multiple users; services-oriented architecture; elasticity of resources; utility model of consumption (pay-per-use) and allocation

- 18. Cloud Computing Overview Model (Source: NIST 2007)

- 19. Cloud Computing Service Providers (Source: Gartner Magic Quadrant for Web Hosting and Hosted Cloud System Infrastructure Services (On Demand), Gartner 2009)

- 20. Cloud Computing Models
  - Community cloud: infrastructure shared by several organizations, managed by the organizations or a third party, on or off premise
  - Public cloud: available to the public and owned by an organization selling cloud services, commonly over the Internet
  - Private cloud: operated for a single organization, managed by the organization or a third party, on or off premise
  - Hybrid cloud: two or more clouds integrated by technology that enables cloud-to-cloud connectivity
  - Different cloud service models have also evolved: Software as a Service (SaaS), Platform as a Service (PaaS), and Infrastructure as a Service (IaaS)

- 21. Amazon Web Services
  - Amazon Web Services (AWS):
    - Remote cloud computing services offered over the Internet, launched July 2002
    - Amazon EC2 and related services form an IaaS web service in the cloud
    - Represents the first commercial implementation of cloud computing services
    - Services are not exposed directly; instead, AWS offers virtualized infrastructure resources
  - AWS Elastic Compute Cloud (EC2):
    - Resizable compute capacity, including operating system, services, databases & application platform
    - Components required for web-based applications, with a management console & APIs
    - Reduces the time required to obtain and boot new server instances to minutes
    - Allows for deployment of new server capacity as well as rapid teardown of resources
  - A new economic computing dynamic:
    - EC2 changes the economics of computing, allowing clients to pay only for the capacity they use
    - Avoids upfront capital expenditure & shifts computing costs to operational expense

- 22. Background Summary
  - Virtualization & cloud computing:
    - The current state of virtualized, utility pay-per-use computing spans more than five decades, through the client-server, PC and Internet revolutions
    - The pivotal shift from physical to virtual led to the current cloud computing environment
    - Available to businesses to support mission-critical business IT services & applications
  - Cloud computing & DT computing:
    - Opportunity to apply the DT computing approach to evolving cloud computing capabilities
    - Unique, rapid, elastic infrastructure expansion & contraction capability
    - Appealing low-cost structure with effective ramp-up during a disaster
    - Can be leveraged to increase the reliability & availability of IT systems, with continued, uninterrupted IT service operations throughout disaster occurrence
  - A new approach:
    - Architecture that leverages HA and virtualized server and storage capabilities
    - Integrated private & public cloud hybrid to enable cost-effective disaster tolerance
    - Capable of meeting mission-critical business needs

- 23. Outline (same as slide 2)

- 24. Disaster Recovery & Disaster Tolerance
  - Availability is quantified numerically as the percentage of time that a service is available for use to the end user
  - Availability target: permitted annual downtime
    - 99%: 87 hours 36 minutes
    - 99.9%: 8 hours 46 minutes
    - 99.99%: 52 minutes 34 seconds
    - 99.999%: 5 minutes 15 seconds
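
The downtime figures follow from multiplying the unavailable fraction by the hours in a year. The sketch below reproduces them under one assumption: a 365-day (8,760-hour) year. The slide rounds 8 h 45 m 36 s up to 8 hours 46 minutes.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; a non-leap year is assumed


def annual_downtime_seconds(availability_pct: float) -> float:
    """Permitted downtime per year for a given availability percentage."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR * 3600


def format_hms(seconds: float) -> str:
    """Round to whole seconds and format as hours, minutes, seconds."""
    total = round(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours}h {minutes:02d}m {secs:02d}s"


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99, 99.999):
        print(f"{target}%: {format_hms(annual_downtime_seconds(target))}")
```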

- 25. Downtime Risks & Consequences
  - Chance of surviving major data loss:
    - Only 6% of companies survive more than two years after suffering a major data loss
    - Nearly half of all companies that lose their data in a disaster never reopen
    - 43% of companies that experience a major data loss from a disaster, but have no recovery plan in place, go out of business and never reopen
  - By the sixth day of a major computing system outage, companies experience a 25% loss in daily revenue
  - By day 25, this loss of revenue reaches 40% of prior daily revenue
  - Within two weeks of the loss, 75% of organizations reach critical or total functional loss of all business revenue

- 26. Costs of Major US Disasters (Source: Insurance Services Office, Insurance Information Institute & National Hurricane Service, 2008)

- 27. Costs of Downtime (Source: Network Computing, Meta Group, Contingency Planning Research, 2008)

- 28. Large Scale Disasters (Disaster Radius)

- 29. Traditional Hot-Site DR Process
  - Insurance for ICT: hot-site services & traditional DR processes are tantamount to insurance policies for ICT
  - Hot-site DR = datacenter move:
    - Failover and service recovery at an alternate site
    - Process similar to a datacenter move project
    - Numerous tasks executed in a particular sequence over a specific time frame
    - Complex, with dependencies & interrelationships
  - Traditional investment in hot-site DR:
    - Alternate ‘hot’, ‘warm’ or ‘cold’ failover sites
    - Often done after an IT solution has been designed & implemented, not before, where it could have the most impact
    - Often unsuccessful, because it forces technology to function in a way for which it was not designed
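
The "numerous tasks executed in a particular sequence … with dependencies" point can be illustrated as a dependency graph that must be ordered before execution. The sketch below is hypothetical. The task names are invented for illustration and do not come from the dissertation. It uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+).

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical hot-site recovery tasks, each mapped to the tasks it depends on.
recovery_tasks = {
    "declare_disaster": set(),
    "retrieve_offsite_backups": {"declare_disaster"},
    "provision_hot_site_servers": {"declare_disaster"},
    "restore_operating_systems": {"provision_hot_site_servers"},
    "restore_databases": {"restore_operating_systems", "retrieve_offsite_backups"},
    "restore_applications": {"restore_databases"},
    "redirect_users_to_hot_site": {"restore_applications"},
    "validate_business_functions": {"redirect_users_to_hot_site"},
}

# static_order() yields every task after all of its dependencies;
# a circular dependency in the plan raises graphlib.CycleError.
execution_order = list(TopologicalSorter(recovery_tasks).static_order())

if __name__ == "__main__":
    for step, task in enumerate(execution_order, start=1):
        print(step, task)
```

Even in this eight-task toy plan, one missing or wrong dependency changes the order. In a real plan with hundreds of tasks, such an error may surface only during an actual failover.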

- 30. Disaster Recovery Approach
  - Well-defined, 6-stage “Recovery Timeline” process
  - Various challenges and potential for recovery failure, despite wide acceptance!
  - Challenges: data loss, recovery time, data synchronization, testing, failover, failback
  - Source: EMC Corporation, 2008

- 31. Traditional Hot-Site DR Approach (diagram: business users and business processes served by IT applications, including Intranet, RightFax, AD, SQL and Exchange, at a primary site connected over a WAN to a secondary site)

- 32. Challenges with Traditional DR
  - Recent studies show half of businesses are not prepared for disasters:
    - One in two organizations is not equipped to mitigate the risk of disaster outages
    - 48% of organizations have had to execute disaster recovery plans
    - Only 20% of businesses recover in 24 hours or less from traumatic outages
    - 80% of businesses are unable to retrieve lost data from backup media in disaster scenarios
  - Nearly 50% of actual DR tests fail:
    - While 91% conduct DR testing of their IT DR plans, nearly 50% of actual DR tests fail!
    - Only 35% test their DR plans at least once a year
    - 93% of organizations had to modify their DR plans following testing trials
  - 2003 Northeast American power blackout:
    - 76% of companies stated the blackout significantly impacted business
    - Nearly 1/3 of US data centers were impacted
    - 60% of IT departments did not have plans to deal with the outage
  - 9-11:
    - Debatable effect on the disaster preparedness spending patterns of businesses
    - 45% of businesses had not increased security spending post 9-11, as of 2006
  - Sources: Symantec Corporation & Dynamic Markets, “The Symantec Disaster Recovery Research 2007” report, October 2007; The Conference Board, 2004; Harris Interactive & SunGard Availability, 2005; AT&T & the International Association of Emergency Managers (IAEM), 2005; Gartner

- 33. Challenges with Traditional DR
  - Failure of DR plans within actual disasters is common:
    - When disasters occur, businesses learn that DR doesn’t meet their needs
    - Mismatches between requirements and recovery planning; lack of maintenance & testing
  - Large-scale disasters are often not considered:
    - Most plans do not address the impact of large-scale regional emergencies
    - Plans overlook the impacts of major disasters across industries/communities
  - The majority of US Fortune 1000 business executives state that leadership is not focused on disaster recovery or business continuity:
    - Companies are less prepared to deal with disasters than in the years prior to 2005
    - They view disaster preparedness as a business expense to be minimized
  - Inadequate disaster preparation: “…most IT departments, especially those in mid-sized companies, are still flying by the seat of their pants. DR planning is simply not on their list of priorities.” Jason Livingstone, DR analyst at Info-Tech Research Group

- 34. Complexities & Costs of Redundancy
  - High costs of DR:
    - Costs of provisioning HA infrastructure in duplicate, triplicate or quadruplicate systems
    - Redundancy multiplies the costs of implementing, supporting and maintaining systems
    - Represents a massive financial burden on the organizations implementing them
  - Complexity:
    - The additional complexity increases the number of components, which increases the potential for single and multiple points of failure
    - Detecting errors in these complex infrastructures becomes problematic
  - Barrier to high availability:
    - Presents a significant barrier to investment in DR and disaster-tolerant architectures
    - The cost, complexity and difficulty of managing HA infrastructures increase with the availability level
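
Why more components mean more exposure can be seen with the standard series-availability formula. When every component must be up for the service to be up, availability is the product of the component availabilities. The sketch assumes independent failures and a hypothetical 99.9% availability per component.

```python
from math import prod
from typing import Sequence


def series_availability(component_availabilities: Sequence[float]) -> float:
    """Every component must be up, so A = A1 * A2 * ... * An.

    Assumes component failures are independent.
    """
    return prod(component_availabilities)


if __name__ == "__main__":
    # Hypothetical: each added component is individually 99.9% available.
    for n in (1, 5, 10, 20):
        print(f"{n:>2} components: {series_availability([0.999] * n):.4%}")
```

Each added component multiplies in another factor below 1. Redundancy has to win back that loss, and the redundant components add cost and complexity of their own.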

- 35. DR Research Findings Summary
  - Significance:
    - Highlights the inadequacies of traditional DR as a primary approach to business IT systems availability
    - Evidence of high costs of business interruptions and systems downtime
    - But perceived disaster risk does not tend to outweigh the cost of investment
    - As a result, businesses continue to gamble with stability, profitability and continuity
  - DR does not meet business needs:
    - Demonstrates that a high percentage of DR plan executions fail during actual disasters
    - The chance of successful DR plan execution is low, despite the DR approach being commonplace
    - In the event of an actual disaster, most businesses have a 50% chance of DR plan success
  - Wasted investment:
    - DR is underpinned by significant financial capital and human resource investments
    - A large portion of business capital in DR represents a wasted investment in failed recovery
    - DR does not produce the desired result of ensuring continued business operations in a disaster
  - Highlights DR challenges & the need for disaster tolerance:
    - In actual disasters, DR fails too often to be a reliable means of ensuring critical systems HA
    - Presents a major challenge for businesses and organizations requiring high availability
    - The research highlights the need to move away from DR and the recovery of failed critical IT systems
    - Instead, move toward DT, which ensures availability throughout the occurrence of a disaster

- 36. Outline (same as slide 2)

- 37. Disaster Tolerance
  - Disaster Tolerant (DT) computing & communications:
    - SE-based approach for reliable Information & Communication Technology (ICT)
    - Augments high availability beyond traditional fault tolerance & risk management
    - Goal of maintaining uninterrupted ICT systems operations despite disaster occurrence
  - Continued ICT operations:
    - Extends Disaster Recovery (DR) & Business Continuity Planning (BCP) practices
    - Critical systems continue operations, as opposed to resuming interrupted functions
  - Superset of fault tolerance methods:
    - Addresses massive numbers of individual faults & single points of failure
    - Disasters may cause rapid, almost simultaneous, multiple points of failure, as well as single points of failure that escalate into wide catastrophic system failures
  - DT approach: develop models and methodologies that provide agile infrastructure able to tolerate catastrophe by design and continue operations when a disaster strikes

- 38. Disaster Tolerant Approach
  - Departure from recovery:
    - Helps address the weaknesses and failure-prone nature of DR
    - Establishes a proven process that incorporates DT techniques early in design
    - Distinct from the concept of disaster recovery
  - Focus on operational continuance:
    - Continuing IT service operations as opposed to resuming them
    - Removing recovery from the IT service continuity formula
  - Addressing DR challenges with disaster tolerant techniques:
    - Critical step: design the initial service & infrastructure with disaster tolerance in mind
    - Avoid forcing systems to function in ways for which they were not designed
    - Design focus on capabilities for continued, uninterrupted operations
  - A different direction:
    - Building redundancy into the initial architecture itself is certainly not a new concept
    - Encompassing the DT approach from initial design through implementation & management
    - Establishing a proven process that incorporates DT technologies early in solution design
    - A change in direction from traditional disaster recovery approaches

- 39. Disaster Tolerant Model: Geographical Duplicate & Match (Source: Disaster Tolerant Model with Geographical Duplicate & Match, Harper, M.A., 2009)

- 40. Disaster Tolerant Architecture: Geographical Duplicate & Match (Source: Disaster Tolerant Architecture with Geographical Duplicate & Match, Harper, M.A., 2009)

- 41. Disadvantages & Limitations
  - The Geographical Duplicate & Match (GDM) approach has several disadvantages and limitations.
  - High cost:
    - Hardware/software costs are more than double
    - Communications hardware costs increase considerably due to distance separation
    - The cost of software needed for fault identification and isolation increases
  - Complexity:
    - Complex infrastructure is required
    - If the system is complex, the required diagnostic tests may not complete
    - A faulty system may be unable to identify a fault, or may take considerable time to isolate it
    - Systems are not traditionally designed to operate geographically separated by large distances and/or in widely dispersed network environments
  - Cost & complexity can be addressed by leveraging cloud computing

- 42. Causes of DR Failover Challenges
  1. Testing: lack of testing; unrealistic, inadequate tests
  2. Complexity: high complexity of technologies and failover/failback mechanisms
  3. Readiness: DR failover environments not ready for failover
  4. Human error: manual human error during failover
  5. Unshared critical knowledge: critical knowledge experts unavailable during crises
  6. Scalability: processes and technology unable to scale in a disaster
  7. Invalid assumptions: incorrect assumptions regarding the failover plan

- 43. Causes of DR Challenges
  - Plan maintenance & dependencies:
    - Plans are rarely exercised/maintained at the levels required for real-world use
    - Dependence on key personnel is a major failure point; human availability is not considered
    - Internal/external dependencies are not adequately identified or documented
    - Requires significant investment in continuous DR testing that is iterative and regular
  - Realistic testing is problematic:
    - Each test's results must incorporate changes & lessons learned to stay relevant
    - Most DR problems resulted from inadequate testing & lack of resources/time/budget
    - DR plans were found to be rarely tested due to potential impact on customers and revenues, and were therefore flawed to the point of not functioning when executed
  - An untested DR plan is not a functional DR plan:
    - A firm that has not simulated DR scenarios through stress testing won't uncover its weaknesses until it is in the midst of a crisis!
    - In fact, your firm doesn't have a plan if it's untested
  - Source: “Integrated Business Continuity”, IBM Business Continuity and Resiliency Services, March 2007

- 44. Addressing Weaknesses in DR
  - Addressing failure, cost & complexity:
    - The high failure rate of DR practices limits the traditional approach to recovery
    - DR components were identified as prone to failure in the event of a major traumatic disaster
    - The high cost & complexity associated with multiple-node systems is a challenge
    - Large financial capability is required to implement & test DR systems & processes
  - Cloud computing:
    - Its capabilities help address the key cost challenge of DT
    - Significant application to the disaster-tolerant approach presented in this research
    - Provides a viable and powerful means of meeting business needs for high availability
    - Provides cost-effective alternatives to the high cost barriers of implementing the multiple, geographically separate site nodes required for disaster-tolerant ICT systems

- 45. Disaster Tolerant Model
  - New model proposal:
    - The research proposes an approach, method and model to address these areas of failure
    - Significance: addresses weaknesses in DR by leveraging new technologies
    - Helps address the cost barriers of previous disaster-tolerant approaches
    - Provides a viable disaster-tolerant approach
    - Achieves increased availability & uninterrupted service throughout disaster occurrence
  - Cloud and the cost challenge:
    - Cloud sites house duplicate environments with scalability and rapid provisioning
    - Infrastructure is available without the traditional high capital costs of dedicated physical sites
    - Leveraging the cloud, a small-footprint environment can be deployed to the cloud and scaled up if additional capacity is needed in the event of a disaster
    - Significance: this can dramatically reduce the need for, and cost of, duplicate environments
    - Provides a compelling reason to use the cloud in disaster-tolerant solutions
    - Helps resolve the cost barrier to DTC, a viable alternative to
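
The small-footprint-then-scale-up idea can be expressed as a simple pay-per-use cost model. This is a sketch under loudly hypothetical numbers: the hourly rate, instance counts, disaster duration and per-server cost are illustrative assumptions, not figures from the research. Storage, data transfer and licensing are ignored.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class CloudStandbyNode:
    """Hypothetical pay-per-use S2 node: small footprint normally, scaled up in a disaster."""
    hourly_rate_per_instance: float  # hypothetical on-demand price per instance-hour
    baseline_instances: int          # small footprint kept running in normal operation
    disaster_instances: int          # capacity after scaling up when S1 is lost

    def annual_cost(self, disaster_hours: float) -> float:
        normal_hours = HOURS_PER_YEAR - disaster_hours
        instance_hours = (self.baseline_instances * normal_hours
                          + self.disaster_instances * disaster_hours)
        return self.hourly_rate_per_instance * instance_hours


def duplicate_site_annual_cost(servers: int, annual_cost_per_server: float) -> float:
    """Dedicated physical duplicate site: full capacity is paid for all year."""
    return servers * annual_cost_per_server


if __name__ == "__main__":
    s2 = CloudStandbyNode(hourly_rate_per_instance=0.50, baseline_instances=2,
                          disaster_instances=10)
    print(s2.annual_cost(disaster_hours=72))  # 9048.0
    print(duplicate_site_annual_cost(servers=10, annual_cost_per_server=5000.0))  # 50000.0
```

The structural point does not depend on the specific prices. The dedicated site pays for full capacity every hour, whereas the cloud node pays for full capacity only during the disaster hours.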

- 46. Disaster Tolerant Model: GDM Based
  - A significant portion of DR challenges relate to testing & failover/failback
  - Remove the challenges by operating in a minimum of two locations:
    - Load-balanced systems share traffic
    - Data replication and synchronization
    - Avoids the need for failover/failback, recovery and testing
  - Addressed by leveraging the Geographical Duplicate & Match (GDM) model:
    - Uses a traditional N-tier web application architecture with a clustered database backend
    - Combined with replication, load balancing and HA network access for users, provides redundancy at all tiers of the infrastructure
  - S1 and S2 nodes: load balancing and clustering enable sharing the application load across the geographically duplicate S1 and S2 system nodes
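
Running S1 and S2 side by side turns the two sites into parallel components: the service stays up if at least one site is up. The sketch below applies the standard parallel-availability formula. The key assumption, labeled in the code, is that the two sites fail independently. Geographic separation aims to approximate this, and a disaster large enough to hit both sites breaks it. The 99.9% per-site figure is hypothetical.

```python
from math import prod
from typing import Sequence


def parallel_availability(node_availabilities: Sequence[float]) -> float:
    """The service is up if at least one node is up: A = 1 - (1 - A1)(1 - A2)...

    Assumes node failures are independent, which is what geographic
    separation of S1 and S2 is meant to approximate.
    """
    return 1 - prod(1 - a for a in node_availabilities)


if __name__ == "__main__":
    # Hypothetical: each site on its own is 99.9% available.
    single_site = 0.999
    both_sites = parallel_availability([single_site, single_site])
    print(f"S1 alone: {single_site:.4%}, S1 + S2 load balanced: {both_sites:.6%}")
```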

- 47. Disaster-Tolerant Cloud Computing (DTCC) Model
  - Primary obstacles to implementing disaster tolerant principles: technology complexity and cost, often more than twice the cost of a standalone system
  - Hybrid cloud disaster tolerant model: Hybrid Public-Private Cloud Computing Load Balanced Geographical Duplicate and Match model
  - Cloud computing cost reduction:
    - Uses the cloud as the GDM S2 node
    - Takes advantage of the cost differential associated with cloud computing
    - Helps address the cost barrier to deploying disaster tolerant solutions
  - Cost benefits of cloud computing functionality in the S2 node:
    - On-demand, utility model of consumption
    - Pay-per-use, self-service, rapid elasticity and scalability
    - Ideal characteristics for a DT solution

- 48. Logical Hybrid Public-Private Disaster Tolerant Computing Architecture (DTCC), Based on GDM

- 49. DTCC Model Infrastructure Components
  - Two-site implementation:
    - A primary private datacenter site and a redundant cloud computing environment
    - Located a safe distance apart: at least 100 km
    - Cloud environment located in a different metropolitan area from the datacenter site
    - Primary computing facility and environment as the system S1 node
    - Cloud computing IaaS service and infrastructure as the system S2 node
  - Complete redundancy in facilities:
    - HA dual Internet connections from different telecommunication providers, from the private datacenter site to the Internet
    - Local HA and clustering of all system and server components at each site
    - HA physical redundant network and security hardware infrastructure at S1, including HA routers, HA firewalls & HA switches
    - Virtual HA network infrastructure at S2 providing local virtual machine network load balancing, web farm and HA clustered database
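
The 100 km minimum separation can be sanity-checked from site coordinates with the haversine great-circle formula, which treats the Earth as a sphere. The sketch below uses that standard formula. The site coordinates are approximate and purely illustrative. Distance is only a proxy for the disaster radius on slide 28. The separate requirement that S2 sit in a different metropolitan area is not checked here.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_MEAN_RADIUS_KM = 6371.0
MIN_SEPARATION_KM = 100.0  # the model's minimum S1-to-S2 distance


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    d_phi = radians(lat2 - lat1)
    d_lambda = radians(lon2 - lon1)
    h = sin(d_phi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(d_lambda / 2) ** 2
    return 2 * EARTH_MEAN_RADIUS_KM * asin(sqrt(h))


def sites_far_enough(s1: tuple, s2: tuple, minimum_km: float = MIN_SEPARATION_KM) -> bool:
    """True if the S1 and S2 sites, given as (lat, lon), are at least `minimum_km` apart."""
    return great_circle_km(*s1, *s2) >= minimum_km


if __name__ == "__main__":
    # Approximate, illustrative coordinates (latitude, longitude).
    dallas = (32.78, -96.80)
    fort_worth = (32.76, -97.33)
    ashburn_va = (39.04, -77.49)
    print(round(great_circle_km(*dallas, *fort_worth)), sites_far_enough(dallas, fort_worth))
    print(round(great_circle_km(*dallas, *ashburn_va)), sites_far_enough(dallas, ashburn_va))
```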

- 50. Physical Hybrid Public-Private Disaster Tolerant Computing Architecture (DTCC) Based on GDM

- 51. DTCC Model Infrastructure Components. Full Load Balancing, No Failover Required: multiple Domain Name System (DNS) A records for host name to public Internet Protocol (IP) address resolution; an Internet DNS-based service for HA DNS; Global Server Load Balancing (GSLB) to balance traffic across the separate nodes; Hypertext Transfer Protocol Secure (HTTPS) 128-bit encryption of in-transit data; a Virtual Private Network (VPN) overlay provisioning private IP address space; and an SSL over HTTPS tunnel for private S1-to-S2 node communications.
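
For the HTTPS requirement, the sketch below shows a client-side TLS configuration using Python's standard `ssl` and `http.client` modules: server certificates are verified, host names are checked, and protocol versions older than TLS 1.2 are refused. The TLS 1.2 floor is our assumption rather than something the slide specifies, and `app.example.com` is a placeholder host; the slide's 128-bit figure refers to the key length of the symmetric cipher negotiated for the session.

```python
import http.client
import ssl


def make_client_context() -> ssl.SSLContext:
    """TLS context for client and S1<->S2 traffic: verified certificates, TLS 1.2+."""
    ctx = ssl.create_default_context()            # system CA bundle, CERT_REQUIRED, hostname check
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # assumption: refuse older protocol versions
    return ctx


def https_status(host: str, path: str = "/") -> int:
    """Issue one HTTPS GET and return the HTTP status code."""
    conn = http.client.HTTPSConnection(host, timeout=10, context=make_client_context())
    try:
        conn.request("GET", path)
        return conn.getresponse().status
    finally:
        conn.close()


# Example (needs network access; host is a placeholder):
# print(https_status("app.example.com"))
```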

- 52. DTCC Model Infrastructure Components. Web Farm Load Balancing & Replication: HA network load balanced web server nodes forming a web server farm; local Network Load Balancing (NLB) for web farm HTTP traffic load distribution; S1 physical or virtual server instances with corresponding S2 virtual cloud instances; and asynchronous replication of web server content between the S1 & S2 web farms. Database Clustering & Replication: an S1 HA 2-node clustered database backend housing the application database and content on HA clustered storage; asynchronous database replication from the S1 database to the S2 database; S1 and S2 web farms configured to write all database transactions to the S1 database, with a simple configuration change available on the S2 web farm to redirect its database writes to the S2 database in the event of a disaster at S1. Redundant Authentication and DNS: HA local S1 Lightweight Directory Access Protocol (LDAP) authentication and identity management, plus internal DNS primary and secondary nodes; LDAP & DNS replication over the HTTPS SSL VPN overlay network; and virtual cloud instances of the same at S2.
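
The single-writer arrangement and the "simple configuration change" described above can be sketched as a per-farm setting. The class, field, and host names are hypothetical; in the POC the equivalent setting would live in each web server's application configuration, such as its database connection string.

```python
from dataclasses import dataclass


@dataclass
class WebFarmDbConfig:
    """Database settings shared by every web server in one farm (hypothetical names)."""
    site: str
    write_target: str = "db-s1.internal"   # by default, all writes go to the S1 cluster
    replica: str = "db-s2.internal"        # S2 copy, kept current by asynchronous replication

    def promote_s2_for_writes(self) -> None:
        """The one configuration change applied when S1 is lost in a disaster."""
        self.write_target = self.replica


farms = {"S1": WebFarmDbConfig("S1"), "S2": WebFarmDbConfig("S2")}
assert all(f.write_target == "db-s1.internal" for f in farms.values())

# Disaster at S1: only the surviving S2 farm needs its write target changed.
farms["S2"].promote_s2_for_writes()
print(farms["S2"].write_target)   # db-s2.internal
```

Keeping a single write owner avoids conflicting updates at the two sites; the trade-off is that the switch is a deliberate step, taken only when S1 is actually lost.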

- 53. DTCC Model Logical Traffic Flow

- 54. DTCC Model Summary & Significance. Cloud is Ideal for Disaster Tolerance: it helps address the cost component of the HA architectures that are ideal for disaster-tolerant solutions; allows deployment of HA sites for a move toward IT service continuance; and enables an economical operational-expense model with the financial flexibility to help address the high costs of traditional DR and high-availability approaches. Significance: a Hybrid Public-Private cloud model, combined with a disaster-tolerant architecture, has the potential to become the default standard for all new business IT services moving forward. It departs from the concept of, and potential for, failed services that must be recovered, and applies to business systems designed from their instantiation to tolerate traumatic disasters, supported by multiple, geographically separate sites as defined in a GDM model. The DTCC model and approach can meet business needs for HA and reliability and move permanently away from the concept of systems downtime and data recovery. New Disaster Tolerant Computing Standard: it can establish a new standard business approach to technology implementation moving forward; by leveraging the DTCC model and cloud computing presented here, no critical business ICT systems and services should suffer downtime.

- 55. Outline: Introduction & Research Objectives; Overview & Background; Problem Definition; Approach, Methodology & Model; Validation and Verification; Summary and Conclusions

- 56. DTCC Model Proof of Concept (POC). DTCC POC: to test the proposed DTCC model, a Proof of Concept (POC) was designed to test the functionality of a Hybrid Public-Private Cloud Disaster-Tolerant infrastructure and to demonstrate disaster-tolerant application functionality between a private datacenter and a public cloud, using the Amazon Web Services (AWS) cloud computing service platform. The POC was not exhaustive due to resource and cost constraints, but it demonstrates the functionality of the foundational components. POC Objectives & Scope: demonstrate the technical feasibility of integrating a public cloud with a private datacenter; demonstrate load sharing with a redundant site, balancing traffic among the sites; use the primary computing facility and environment as the System S1 node and the Cloud Computing IaaS service and infrastructure as the System S2 node; duplicate S1 & S2 computing resources residing in the private datacenter & in the AWS cloud; and provide secure integration & connectivity.

- 57. DTCC Full Physical Model with Traffic Flow

- 58. DTCC Model POC Goals. Duplication for S1 & S2: establish duplicate application software installations and images of identified Windows and Linux applications in the Amazon Web Services EC2 cloud infrastructure. Secure Integration & Connectivity: provide a secure HTTPS SSL-encrypted Virtual Private Network (VPN) overlay to enable contiguous network connection and communication between the S1 and S2 nodes of the Hybrid Public-Private Cloud disaster-tolerant infrastructure. Load Balancing: demonstrate disaster-tolerant load balancing between the S1 private datacenter and S2 cloud computing nodes; use primary and secondary Internet Domain Name System (DNS) service to resolve the host name in a Uniform Resource Locator (URL) to a public Internet Protocol (IP) address; and use multiple DNS A records for high availability (HA) of DNS or Global Server Load Balancing (GSLB).

- 59. DTCC Model POC Technical Challenges. AWS EC2 service considerations that restricted functionality at the time of the POC: AWS did not allow hardware-based appliances such as enterprise firewalls or load balancers; AWS limited secure connectivity to new software-based VPNs; there was little ability to connect services to other clouds or datacenters; and cloud connectivity was Internet-based, so data transfer bottlenecks posed a challenge. A few key challenges arose during the POC that required specific commercial technology solutions to address critical functionality of the proposed DTCC model. POC technical challenges included: integration of disparate networks between the private datacenter network infrastructure and the cloud environment network; network load balancing between the private datacenter site and the cloud site; data replication and consistency at the web application tier; and data replication and consistency at the database tier.

- 60. DTCC Model POC Technologies. AWS EC2: Amazon Elastic Compute Cloud (EC2) as the cloud computing platform for virtual cloud resources. VPN-Cubed: Cohesive Flexible Technologies (CohesiveFT, http://www.cohesiveft.com) Virtual Private Network (VPN) Cubed as the Secure Sockets Layer (SSL) / Hypertext Transfer Protocol Secure (HTTPS) overlay network connection to the cloud. Application Use Cases: Windows 2008 Server IIS, covering duplication, installation and operation in the AWS EC2 cloud of an identified Windows 2008 Server IIS HTTP web application; and Linux HTTP web application, covering duplication, installation and operation in the AWS EC2 cloud of an identified Linux HTTP web application. Both application use cases use traditional N-tier web applications with front-end web servers and backend database servers.

- 61. DTCC Model POC: HA Dual Internet Connections. HA dual Internet connections are the first components of an HA configuration for Internet-based applications and one of the first network connectivity requirements for the DTCC model. The datacenter location chosen for the POC had two fully redundant DS3 high-speed Internet connections, each capable of data rates up to 45 Mbps. The DS3 links were provisioned by separate Telco and ISP providers, ensuring no single point of failure (SPOF) for Internet network connectivity.
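
Cloud connectivity over the Internet makes data transfer a bottleneck, and a quick estimate shows why: the sketch computes how long an initial copy or a replication backlog takes over a DS3 link. It uses the nominal DS3 line rate of 44.736 Mbps; the 100 GB data set and the `efficiency` factor (for protocol and VPN overhead) are hypothetical inputs.

```python
DS3_MBPS = 44.736  # nominal DS3 line rate, commonly rounded to 45 Mbps


def transfer_hours(gigabytes: float, link_mbps: float = DS3_MBPS,
                   efficiency: float = 1.0) -> float:
    """Hours to move `gigabytes` (10^9 bytes) over a link of `link_mbps` (10^6 bit/s).

    `efficiency` is a hypothetical fraction of the line rate left after overhead.
    """
    bits = gigabytes * 8e9
    seconds = bits / (link_mbps * 1e6 * efficiency)
    return seconds / 3600


print(f"one DS3, ideal:   {transfer_hours(100):.1f} h")
print(f"two DS3s, ideal:  {transfer_hours(100, link_mbps=2 * DS3_MBPS):.1f} h")
print(f"one DS3, 70% eff: {transfer_hours(100, efficiency=0.7):.1f} h")
```

At the ideal line rate, 100 GB takes about 5 hours on one DS3. The two-link figure assumes traffic can actually be spread across both connections, which is not the case when they are provisioned purely for redundancy.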

- 62. DTCC Model POC: Integration Challenges. Third-Party Controlled Cloud Environment: in public clouds, resources are deployed into third-party controlled, virtualized environments without traditional physical network termination points, routers & firewalls. AWS restrictions prevented deployment of traditional point-to-point network devices such as routers, so a local host server-based software package had to be devised to meet this need. Network Integration Challenge: public cloud environments & private datacenters use separate & different network IP subnets. The cloud network has unique aspects, with differences in network resource utilization & provisioning: public/private IPs, dynamic/static addressing, customer-assignable IPs & firewall restrictions. This non-standardized nature makes integrated network connectivity & communications a challenge. Need for Integrated Network Communications: one of the first steps in proving the feasibility of the DTCC model was to find a means of connecting the public cloud and private datacenter environments, giving the POC infrastructure integrated and unified network communications. This was made possible by establishing VPNs between the cloud and private infrastructure.

- 63. DTCC POC Integrated Public-Private Cloud Networks. Figure: Hybrid Public-Private Cloud DT POC Secure Overlay Network (Source: Cohesive Flexible Technologies, CohesiveFT, 2008)

- 64. DTCC POC Integrated Public-Private Cloud Networks. Network Integration via VPN-Cubed: establishing a unified network is a key step in creating the DTCC infrastructure. The VPN-Cubed product was used during the POC to provide secure, integrated network connectivity: a VPN among private & cloud nodes for secure overlay network integration; TCP/IP connectivity between servers in the private datacenter & public cloud; integration of network subnet space for appropriate routing & server communications; static network IP addressing for cloud devices and private datacenter devices; network topology control using VPN-Cubed managers as virtual routers; use of popular enterprise protocols such as UDP multicast for service discovery; and encrypted communications between devices via HTTPS & SSL (OpenVPN).
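
Routing across the overlay only works if the S1, S2, and VPN manager address spaces do not collide. A pre-deployment check with Python's standard `ipaddress` module might look like the sketch below; the subnet plan is hypothetical, and the check is our own illustration, not a VPN-Cubed feature.

```python
import ipaddress
from itertools import combinations

# Hypothetical address plan: each environment keeps its own, non-overlapping subnet.
SUBNETS = {
    "S1 private datacenter": ipaddress.ip_network("10.10.0.0/16"),
    "S2 EC2 overlay": ipaddress.ip_network("10.20.0.0/16"),
    "VPN-Cubed managers": ipaddress.ip_network("10.30.0.0/24"),
}


def overlapping(plan: dict) -> list:
    """Return every pair of named subnets whose address ranges overlap."""
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(plan.items(), 2)
        if net_a.overlaps(net_b)
    ]


print(overlapping(SUBNETS) or "no overlaps: routes between sites are unambiguous")
```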

- 65. DTCC POC Load Balancing Between Environments. Simulated GSLB via DNS: Global Server Load Balancing (GSLB) addresses the failed-site problem, in which clients must switch to a different IP address. The simulated GSLB uses two DNS A records for the domain host lookup, returning multiple records in an ordered list: the POC site's domain name maps to two distinct IP addresses, with the primary A record resolving to the private datacenter S1 site and the secondary resolving to the AWS cloud S2 site. Load balancing between the S1 & S2 nodes enables the web servers to handle HTTP requests in HA mode. The ideal solution would be a commercial cloud load balancing service using virtual machine appliances.
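
The behavior this approach relies on, a client receiving both A records and using whichever site answers, can be simulated without a network. The addresses come from the reserved documentation ranges, `is_up` is a stand-in probe, and `rotated` mimics round-robin ordering by a resolver; this is a sketch of client behavior under those assumptions, not a GSLB implementation, and real resolvers and browsers differ in how they order and retry multiple A records.

```python
from typing import Callable, List, Optional, Sequence

# Hypothetical A records for the POC host name, in the order DNS returns them:
# primary -> S1 private datacenter, secondary -> S2 AWS cloud.
A_RECORDS = ["198.51.100.10", "203.0.113.20"]


def rotated(records: Sequence[str], lookup_number: int) -> List[str]:
    """Round-robin DNS: successive lookups start the list at a different record."""
    k = lookup_number % len(records)
    return list(records[k:]) + list(records[:k])


def pick_site(records: Sequence[str], is_up: Callable[[str], bool]) -> Optional[str]:
    """Mimic a client walking the returned list: use the first address that answers."""
    for address in records:
        if is_up(address):
            return address
    return None  # neither site reachable


def all_up(address: str) -> bool:
    return True


def s1_down(address: str) -> bool:
    """Simulate losing the S1 site in a disaster."""
    return address != "198.51.100.10"


print(pick_site(A_RECORDS, all_up))               # normal: S1 listed first
print(pick_site(rotated(A_RECORDS, 1), all_up))   # next lookup: S2 first, load shared
print(pick_site(A_RECORDS, s1_down))              # S1 lost: client lands on S2
```

In practice `is_up` could attempt a TCP connection with `socket.create_connection((address, 443), timeout=2)` and treat an `OSError` as down. A commercial GSLB goes further by health-checking the sites and withdrawing a failed site's record from DNS responses.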

- 66. DTCC POC Model Database Clustering. Local HA MSCS database clusters run at both the S1 & S2 nodes, with S1 as the primary database. Web servers in both the S1 and S2 nodes have an optimized, centralized configuration, local to each server in the web farm, that directs all database traffic to the S1 database. The S2 database remains active and available, but no web servers are configured to communicate with it except in the event of an outage at S1. Asynchronous replication copies data changes from the S1 database to the S2 database, allowing a duplicate database at each site with consistency & single ownership of the data.
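
The single-owner, asynchronously replicated database can be modelled in a few lines. This is a toy in-memory sketch, not MSCS or SQL Server replication: writes commit on the S1 primary and are queued, and the S2 replica applies them later in commit order.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Primary:
    """S1 database: the only node that accepts writes; each commit is queued for S2."""
    data: dict = field(default_factory=dict)
    log: deque = field(default_factory=deque)   # committed changes not yet shipped to S2

    def write(self, key: str, value: str) -> None:
        self.data[key] = value
        self.log.append((key, value))   # commit completes without waiting for S2 (asynchronous)


@dataclass
class Replica:
    """S2 database: active and readable, updated only by replication."""
    data: dict = field(default_factory=dict)

    def apply_from(self, primary: Primary, max_changes: int) -> None:
        """Ship up to max_changes queued changes, oldest first."""
        for _ in range(min(max_changes, len(primary.log))):
            key, value = primary.log.popleft()
            self.data[key] = value


s1, s2 = Primary(), Replica()
for i in range(5):
    s1.write(f"order-{i}", "paid")
s2.apply_from(s1, max_changes=3)     # replication runs behind the primary
print(len(s1.log), sorted(s2.data))  # 2 ['order-0', 'order-1', 'order-2']
```

The changes still queued when S1 fails are exactly the data an asynchronous design can lose, so replication lag is the quantity to monitor; in exchange, S1 writes never wait on the Internet link to S2.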

- 67. DTCC POC Model Database Clustering

- 68. DTCC POC Logical End User Traffic Flow

- 69. DTCC POC Physical Infrastructure Implementation. Diagram: S1, the private datacenter environment, and S2, the EC2 Elastic Compute Cloud environment (an EC2 account container), joined by a server-based SSL VPN (the VPN-Cubed overlay network) across the Internet DMZ. Each site shows a Windows 2008 IIS web server NLB farm, a Linux HTTP server NLB web farm, a local Active Directory & DNS server, MS SQL Servers in a local MSCS cluster, OpenVPN agents, and VPN-Cubed manager servers & gateway.

- 70. DTCC Model POC Findings Summary. The DTCC model was implemented and tested in a 2-node hybrid public cloud / private datacenter configuration, and the POC successfully demonstrated and validated its feasibility: it showed the technical feasibility of integrating a public cloud environment with a private datacenter, and functional load sharing between redundant sites, removing failover requirements. POC goals were met through successful implementation of the application use cases. Because traffic is load balanced among the S1 and S2 nodes, operations do not have to fail over to a secondary site; the system is designed to distribute load. There is no requirement to bring up hot or cold secondary systems in the event of an outage, because these systems are already active and functional. No downtime is suffered: traffic is automatically distributed in the event of a site outage, with current data available via replication. The cloud is leveraged for a low-cost virtual server implementation that can be expanded as needed.
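
The reasoning behind removing failover can be quantified with the standard availability formula for redundant components in parallel. If each site can carry the full load and the sites fail independently, the service is down only when both are down, so A = 1 - (1 - A1)(1 - A2). The 99% per-site availability below is a hypothetical input, not a POC measurement.

```python
HOURS_PER_YEAR = 24 * 365


def parallel_availability(*site_availability: float) -> float:
    """Service is up if any site is up; assumes independent site failures."""
    all_down = 1.0
    for a in site_availability:
        all_down *= 1.0 - a
    return 1.0 - all_down


def downtime_hours_per_year(availability: float) -> float:
    """Expected unplanned downtime per year at a given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


single = 0.99   # hypothetical availability of one site
both = parallel_availability(single, single)
print(f"{downtime_hours_per_year(single):.1f} h vs {downtime_hours_per_year(both):.2f} h per year")
```

With two 99% sites, expected downtime falls from about 87.6 hours to under an hour per year. The independence assumption is what the geographic separation is meant to protect: a shared disaster zone, a shared provider, or a replication fault correlates the failures and erodes the gain.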

- 71. DTCC Model Proof of Concept. POC Implementation: to test the proposed DTCC model, a Proof of Concept (POC) was designed to test the functionality of a Hybrid Public-Private Cloud Disaster-Tolerant infrastructure and to demonstrate disaster-tolerant application functionality between a private datacenter and a public cloud. POC Objectives & Goals: demonstrate the technical feasibility of integrating a public cloud with a private datacenter; demonstrate load sharing with a redundant site, balancing traffic among the sites; use the primary computing facility and environment as the System S1 node and the Cloud Computing IaaS service and infrastructure as the System S2 node; duplicate S1 & S2 computing resources residing in the private datacenter & in the AWS cloud; and provide secure integration & connectivity.

- 72. Outline: Introduction & Research Objectives; Overview & Background; Problem Definition; Approach, Methodology & Model; Validation and Verification; Summary and Conclusions

- 73. Summary & Conclusions. Research Findings: traditional DR is not an effective approach to mitigating the risk of downtime; instead, DR has a high failure rate in the event of an actual traumatic disaster. Businesses waste their DR investment when recovery efforts fail in a disaster, which renders DR ineffective, wastes capital & resource investment, and fails to meet the primary goal of IT service recovery. Proposed Disaster Tolerant Computing Model: the proposed DTCC model provides a Disaster Tolerant Computing approach that helps address the challenges associated with DR, leveraging new cloud computing technologies to reduce cost and complexity. Significance of this Research: Disaster Recovery ineffectiveness; challenges of achieving high availability; applicability of Cloud Computing to Disaster Tolerance; validity of the Disaster-Tolerant Computing approach; and verification of the functionality of the proposed Disaster Tolerant Cloud Computing Model.

- 74. Summary & Conclusions. Crucial Business Continuance: data protection & ICT service availability are crucial to continued business operations in the event of a disaster. Difficulty in Meeting Needs: the ongoing paradigm shift from physical to virtualized servers and ICT infrastructure, combined with escalating data protection requirements, makes it exceedingly difficult for many ICT organizations to meet business continuance needs. DR Does Not Work Well: traditional DR practices are not sufficient to protect against disasters and do not enable continued operations throughout a disaster; failure of Disaster Recovery processes in an actual disaster is common, occurring 50% of the time. Wasted Investment: the result is a large DR investment wasted in failed recovery, and lost capital expenditure on traditional Disaster Recovery and Business Continuity Planning approaches. These practices do not produce the desired result: continued organizational and business IT service operations.

- 75. Summary & Conclusions. Disaster Tolerant Computing to Achieve Business Needs: leveraging the Disaster Tolerant Geographical Duplicate & Match (GDM) model, combined with the rapidly and elastically scalable, virtualized nature of Cloud Computing in a hybrid public-private cloud, presents a feasible and robust Disaster Tolerant solution with low cost potential. Cloud Computing & DT Computing: there is an opportunity to apply the DT computing approach to evolving Cloud Computing capabilities, with their unique, rapid, elastic infrastructure expansion & contraction and an appealing low-cost structure with effective ramp-up during a disaster. These capabilities can be leveraged to achieve increased reliability & availability of IT systems, with continued, uninterrupted IT service operations throughout a disaster. Viable New Approach: organizations can leverage this approach to achieve increased reliability & availability of IT systems and continued, uninterrupted IT service operations throughout a disaster, using an architecture that leverages HA and virtualized server and storage capabilities and an integrated private & public cloud hybrid to enable cost-effective disaster tolerance capable of meeting mission-critical business needs.

- 76. Summary & Conclusions. Disaster Tolerant Approach: continuing IT service operations, as opposed to resuming them, is required to address DR failures; removing failover & recovery shifts the focus to continued service availability throughout a disaster and addresses the weaknesses and failure-prone nature of traditional DR. Beginning with Disaster Tolerance in Mind: a critical step in achieving DT is to begin with DT in mind in the initial design. Building redundancy into the initial architecture itself is certainly not a new concept; the step in a new and positive direction is including a disaster-tolerant architecture approach from initial design through implementation, and establishing a process that incorporates disaster-tolerant technologies early in IT solution design. Practical Benefits: the proposed DTCC model provides a viable alternative to traditional DR approaches that better meets businesses' availability needs, helping to mitigate the risk of downtime by migrating away from a recovery approach toward a DT approach; leveraging the GDM-based DTCC model presents a feasible and robust disaster-tolerant solution.

- 77. Ongoing & Future Research Areas. Opportunities for future work in this research area are abundant and include: Load Balancing: further testing of GSLB and network load balancing capabilities. Large Scale Model: a large-scale public-private hybrid cloud disaster-tolerant model with 3- and 4-node expansions. Cloud Only Model: a cloud-only DTCC variation using multiple cloud vendors with no private datacenter site. Shared-Nothing Database Clustering: implementation of a shared-nothing backend database. Cost & ROI Model: the capability to accurately calculate the cost of downtime and the value of uptime. 2/7/2011
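
As a starting point for the cost-of-downtime item, the sketch below prices an hour of outage as lost revenue plus idle staff time, then nets the avoided downtime cost against the added yearly cost of a disaster-tolerant design. The structure and every input are hypothetical simplifications, not the model this future research would produce.

```python
from dataclasses import dataclass


@dataclass
class OutageCostModel:
    """First-cut cost of downtime; every input is an estimate supplied by the business."""
    lost_revenue_per_hour: float
    affected_staff: int
    loaded_hourly_rate: float   # fully loaded cost of one idle employee-hour

    def cost_per_hour(self) -> float:
        return self.lost_revenue_per_hour + self.affected_staff * self.loaded_hourly_rate

    def value_of_uptime(self, downtime_before_h: float, downtime_after_h: float,
                        added_annual_cost: float) -> float:
        """Net yearly benefit of cutting annual downtime from before to after."""
        avoided = (downtime_before_h - downtime_after_h) * self.cost_per_hour()
        return avoided - added_annual_cost


model = OutageCostModel(lost_revenue_per_hour=8_000, affected_staff=40, loaded_hourly_rate=50)
print(model.cost_per_hour())
print(round(model.value_of_uptime(87.6, 0.876, added_annual_cost=50_000)))
```

With these inputs an outage hour costs 10,000, and cutting annual downtime from 87.6 to 0.876 hours is worth roughly 817,240 a year after the added 50,000. A fuller model would add reputational and regulatory costs and weight outages by when they occur.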

- 78. Summary & Conclusions: Plan Forward. Finalize & Submit Dissertation in Dec. 2010: numerous comments provided; modifications incorporated; formal editing; electronic submission date: Wednesday, Dec. 8, 2010; graduation date: Saturday, Dec. 18, 2010. Post-Doctoral Work: Sr. Enterprise Architect, Capgemini, focused on cloud computing, developing an internal cloud computing platform, and considering options for commercial services centered around Disaster Tolerance and the cloud. Planned Future Publications over the Next Two Years: focused on the future research areas.

- 79. SMU Disaster Tolerant Web Site (http://lyle.smu.edu/emis/dt/): a centralized portal for research collaboration, with a research paper & document management system, conference & journal updates, researcher bios, news & blogs, and contact information.

- 80. “A Disaster Tolerant Cloud Computing Model as a Disaster Survival Methodology”: Questions & Answers Session. Presented by Chad M. Lawler, Sr. Manager & Sr. Enterprise Architect, Capgemini; B.S.I.S., University of Texas at Arlington, 2001; M.S.I.E.M., Southern Methodist University, 2004; netdeveloping@hotmail.com; http://lyle.smu.edu/emis/dt/; Defense Date: Nov. 30, 2010