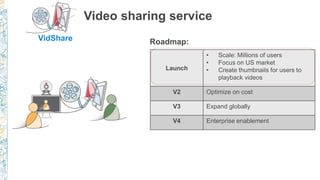

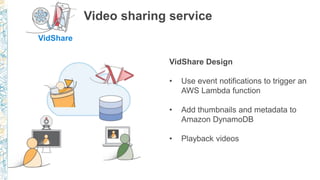

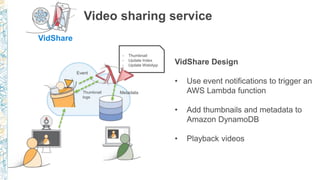

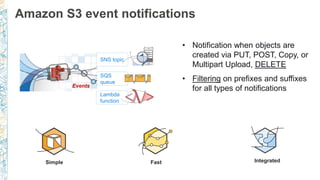

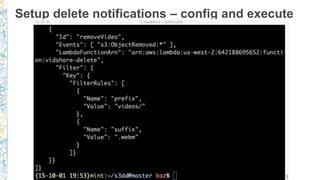

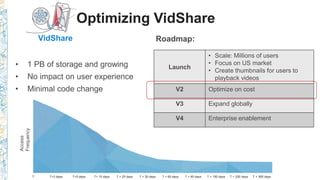

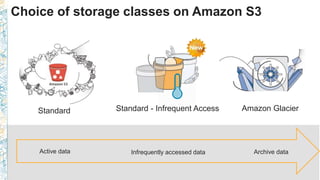

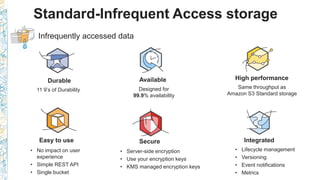

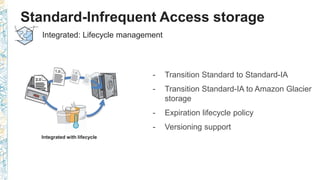

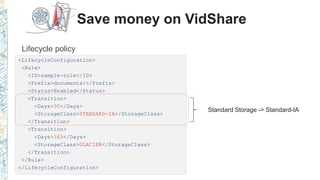

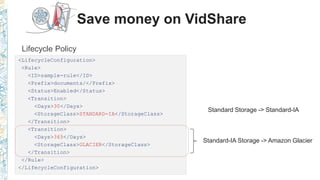

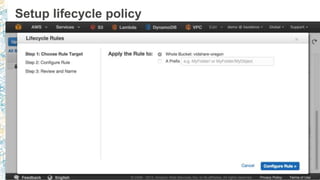

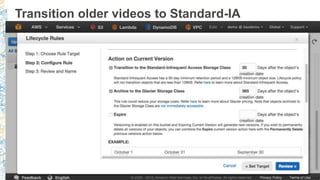

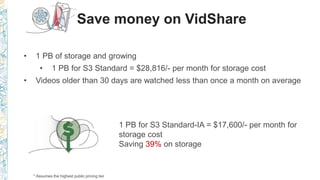

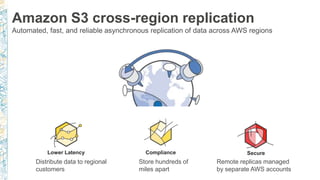

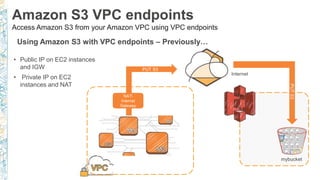

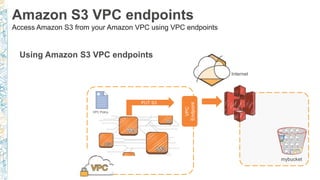

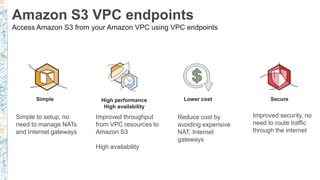

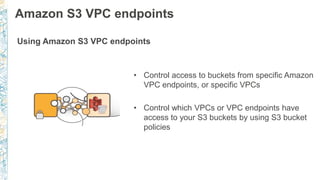

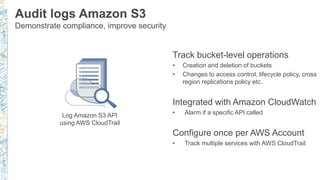

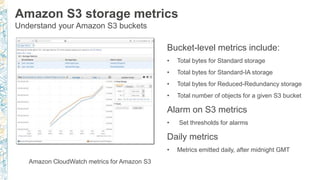

This document covers Amazon S3 enhancements and features introduced in 2015, including cross-region replication, event notifications, and storage classes designed for cost optimization. It walks through the configuration of the VidShare video sharing service, including the lifecycle policies that help it manage data efficiently, and the use of VPC endpoints for improved security, along with the associated cost considerations. It also highlights the integration of CloudTrail and CloudWatch for compliance tracking and performance metrics.
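As a rough illustration of the kind of lifecycle policy mentioned above, the following minimal sketch builds a rule that moves objects to cheaper storage classes as they age and eventually expires them. The summary does not give VidShare's actual settings, so the function name `build_lifecycle_configuration`, the `uploads/` prefix, and the day thresholds are all hypothetical. The dictionary follows the shape accepted by boto3's `put_bucket_lifecycle_configuration`, but the sketch only constructs it and does not call AWS.

```python
def build_lifecycle_configuration(prefix, ia_after_days=30,
                                  glacier_after_days=90,
                                  expire_after_days=365):
    """Build an S3 lifecycle configuration dict (hypothetical values).

    Objects under `prefix` transition to STANDARD_IA, then to GLACIER,
    and are finally expired. All thresholds are illustrative assumptions,
    not settings taken from the VidShare example.
    """
    # Transitions must happen in increasing order of object age.
    if not (0 < ia_after_days < glacier_after_days < expire_after_days):
        raise ValueError("thresholds must be positive and strictly increasing")

    return {
        "Rules": [
            {
                "ID": "tier-and-expire-" + prefix.strip("/"),
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


# Hypothetical prefix; a real deployment would use its own key layout.
config = build_lifecycle_configuration("uploads/")

# With boto3 installed and credentials configured, the dict could be applied
# roughly like this (bucket name is a placeholder assumption):
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-bucket", LifecycleConfiguration=config)
```

Keeping the policy as plain data makes it easy to review and validate the thresholds before anything is sent to S3.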