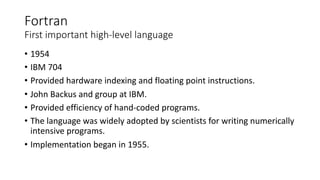

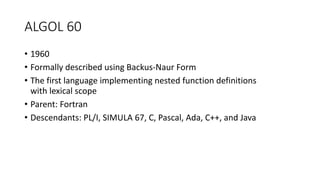

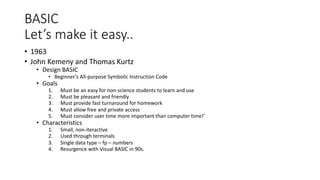

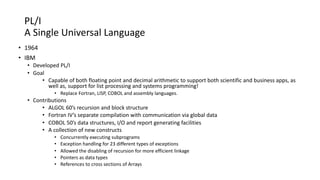

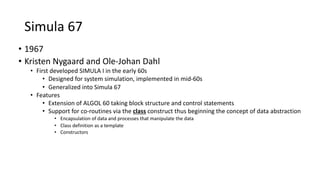

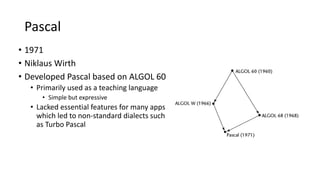

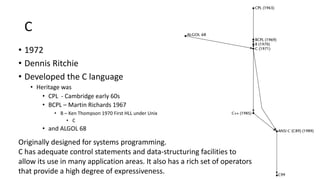

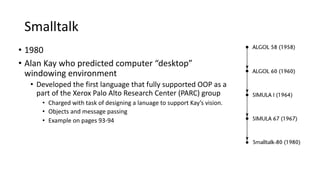

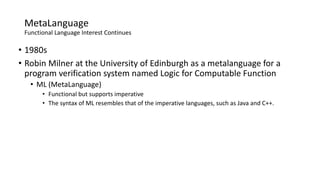

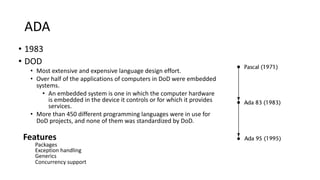

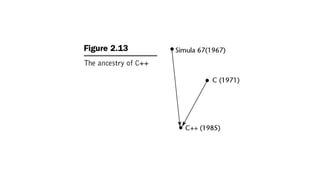

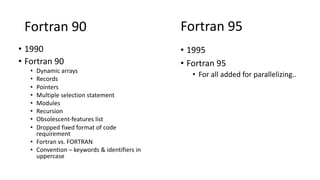

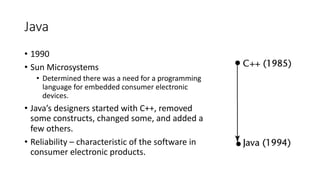

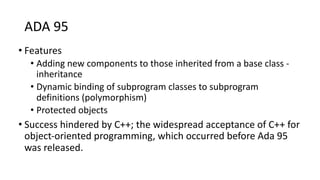

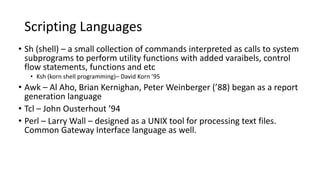

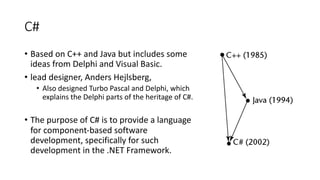

The major programming languages have evolved over several decades. The story begins in the 1940s and 1950s with assembly languages and with high-level languages such as Fortran (1957) for scientific computing. The late 1950s also produced ALGOL, which introduced block structure; LISP, for artificial intelligence research; and COBOL, for business data processing. BASIC followed in the 1960s as a language for teaching. Object-oriented concepts emerged in the 1960s with Simula and became widespread in the 1980s and 1990s through languages such as Smalltalk, C++, and Java. Functional programming ideas, first prominent in LISP, were developed further in languages such as ML and Haskell. Later, scripting languages became popular for automating system administration tasks. Each new generation of languages incorporated ideas from its predecessors.