For any business today, more than 70% of email carries critical information such as agreements, contract negotiations, commitments, issues, invoices, reports, notifications, and contacts. Our customer, a leading financial services company, understands this; for them, safely storing email for the long term is critical to managing risk and ensuring compliance.

This Mithi customer is one of India's leading NBFC brands, offering a diverse range of financial products and services across the rural, housing, and infrastructure finance sectors. It also offers mutual fund products and investment management services.

What was the use case at this customer?

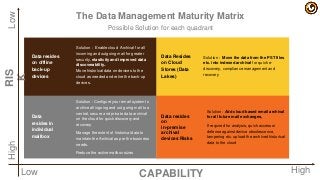

The customer has been accumulating a high volume of email data belonging to employees who exit the company. This data was being downloaded from the primary mail platform and stored in a data lake before the email account was disabled. The legacy email data of these inactive users then has to be retained for extended periods for compliance purposes.

What were the challenges faced by this customer?

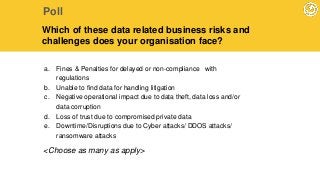

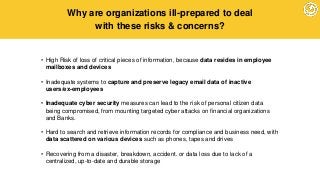

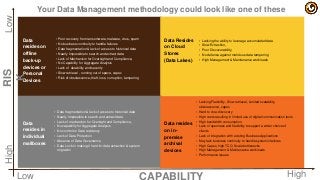

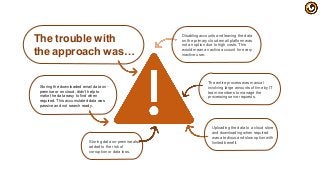

1. Leaving email data of inactive users on the primary mail platform in a disabled state was expensive, since the customer still had to pay for a live mailbox.

2. Traditional methods of preserving data on tapes, drives, and other media were risky and "passive", with no easy access when required.

3. Downloading and preserving email of a large number of exiting employees was a manual and tedious process, prone to errors.

4. Email preserved on the cloud in a dark/passive email data lake was hard to search and retrieve from.

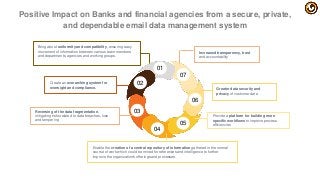

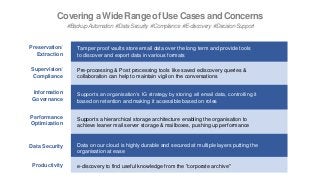

Join Mithi and Amazon to learn how this customer leveraged Vaultastic HOLD to:

1. optimize the cost and reliability of storing legacy email data

2. automate the migration of data from the primary platform to the archive, thereby improving the IT team's productivity in maintaining this data

3. stay compliance-ready at all times with on-demand eDiscovery

4. benefit from the pay-per-use billing model.

![AWS and Compliance Standards: Certifications & Attestations; Laws, Regulations and Privacy; Alignments & Frameworks (source: https://aws.amazon.com/compliance/)](https://image.slidesharecdn.com/asset-webinar-financialserviceslegacyemaildatav7-190522092435/85/How-a-financial-services-company-preserved-legacy-email-data-on-the-cloud-and-gained-from-ediscovery-43-320.jpg?cb=1558517169)