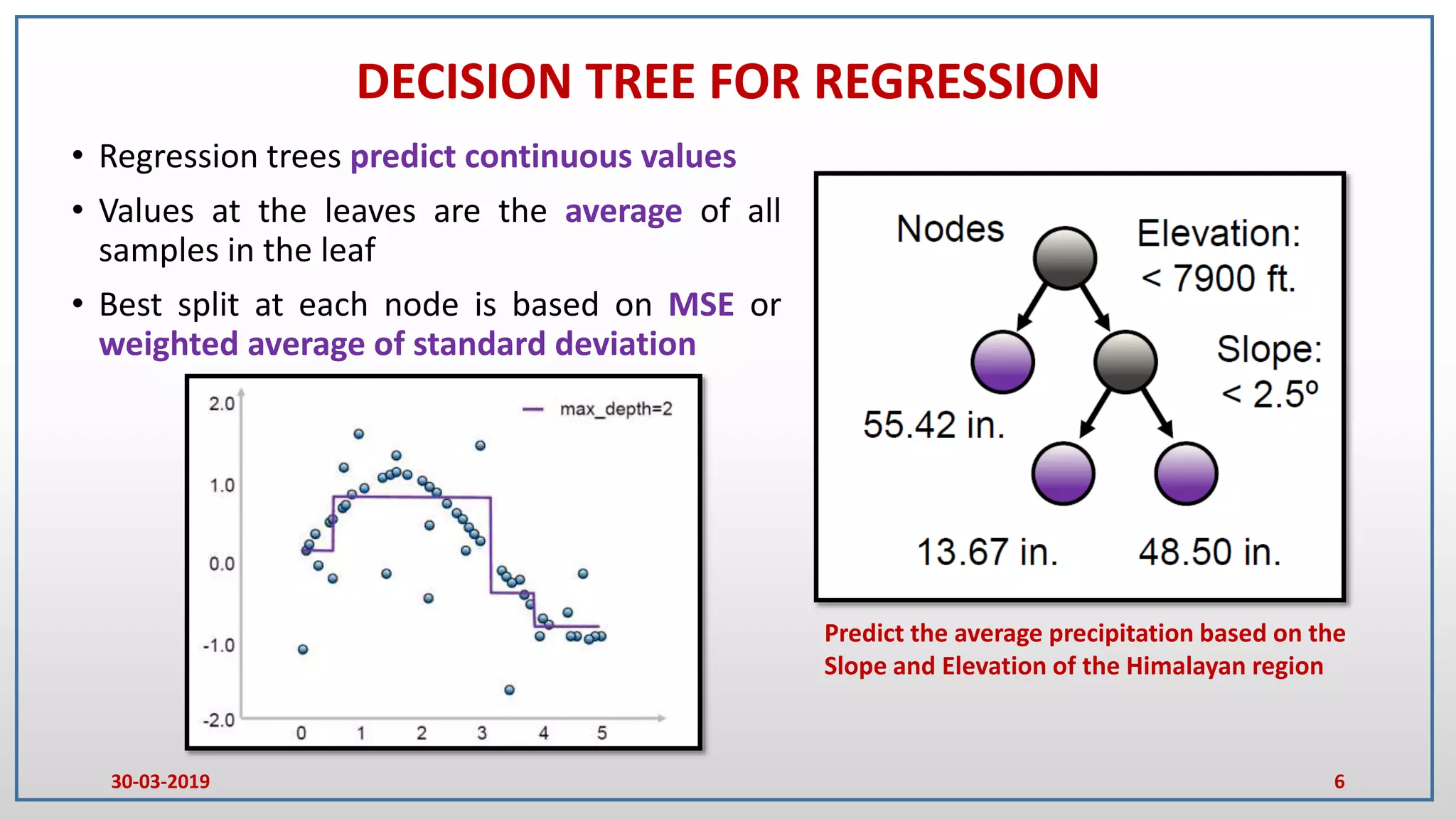

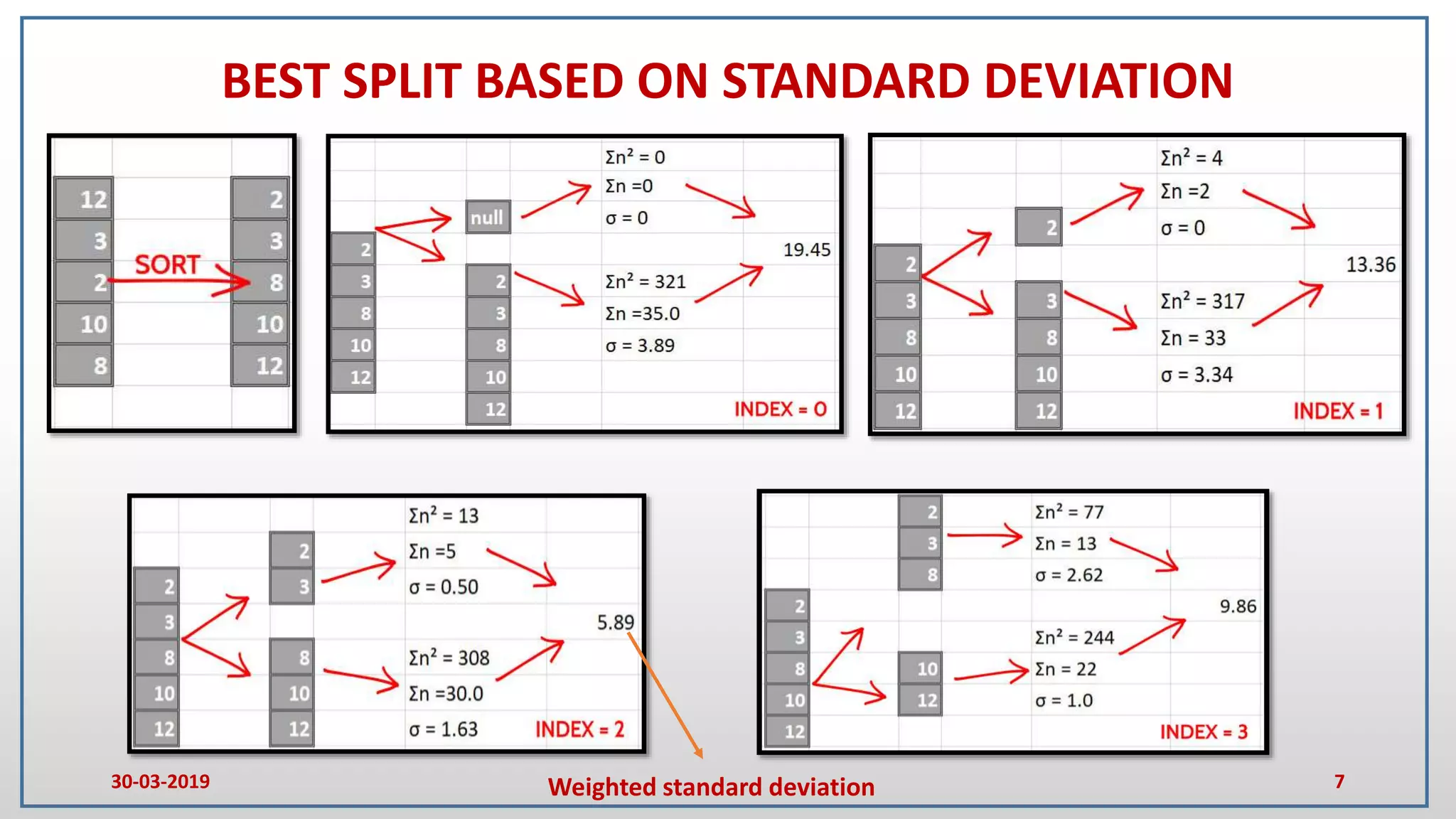

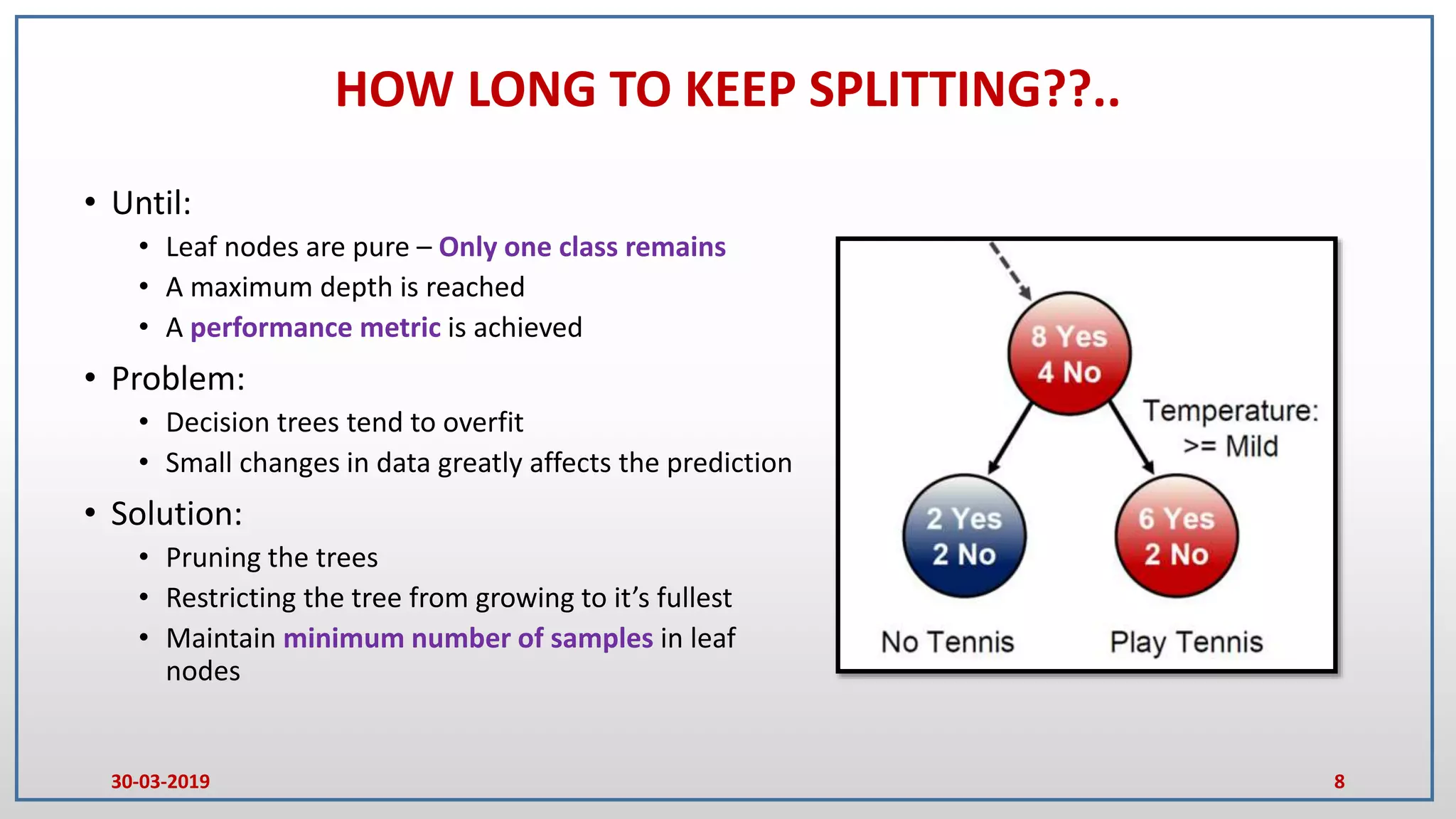

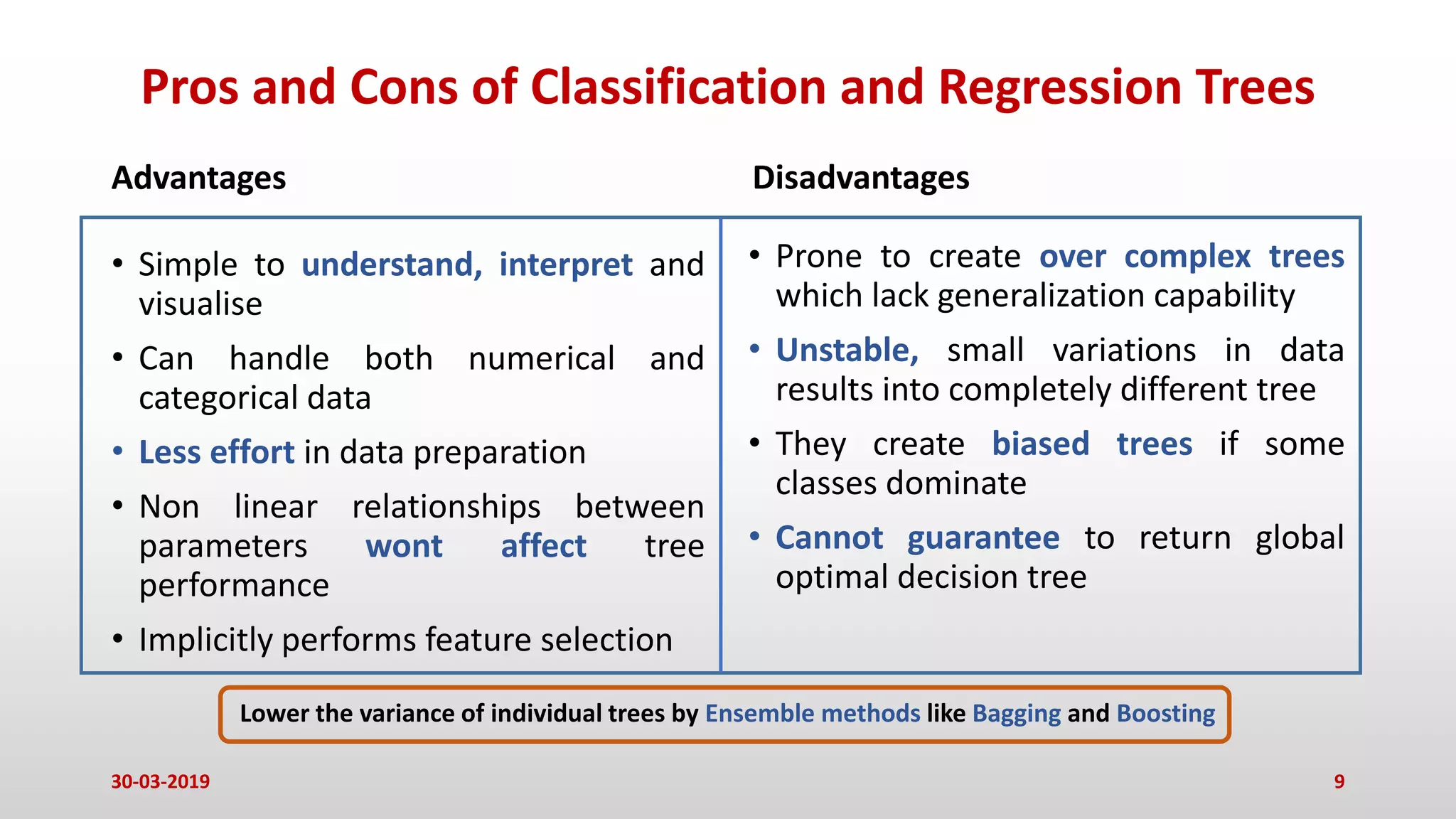

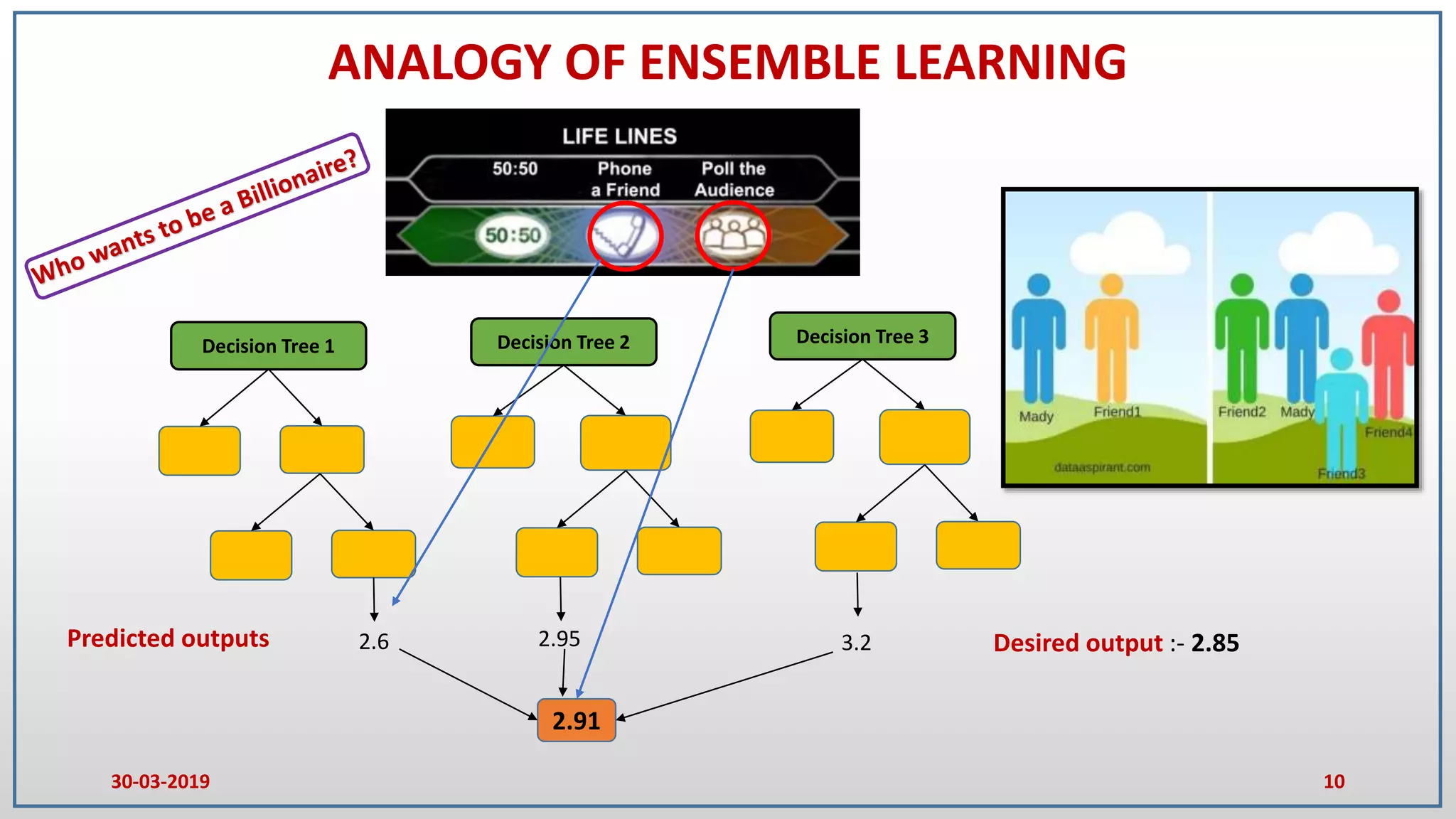

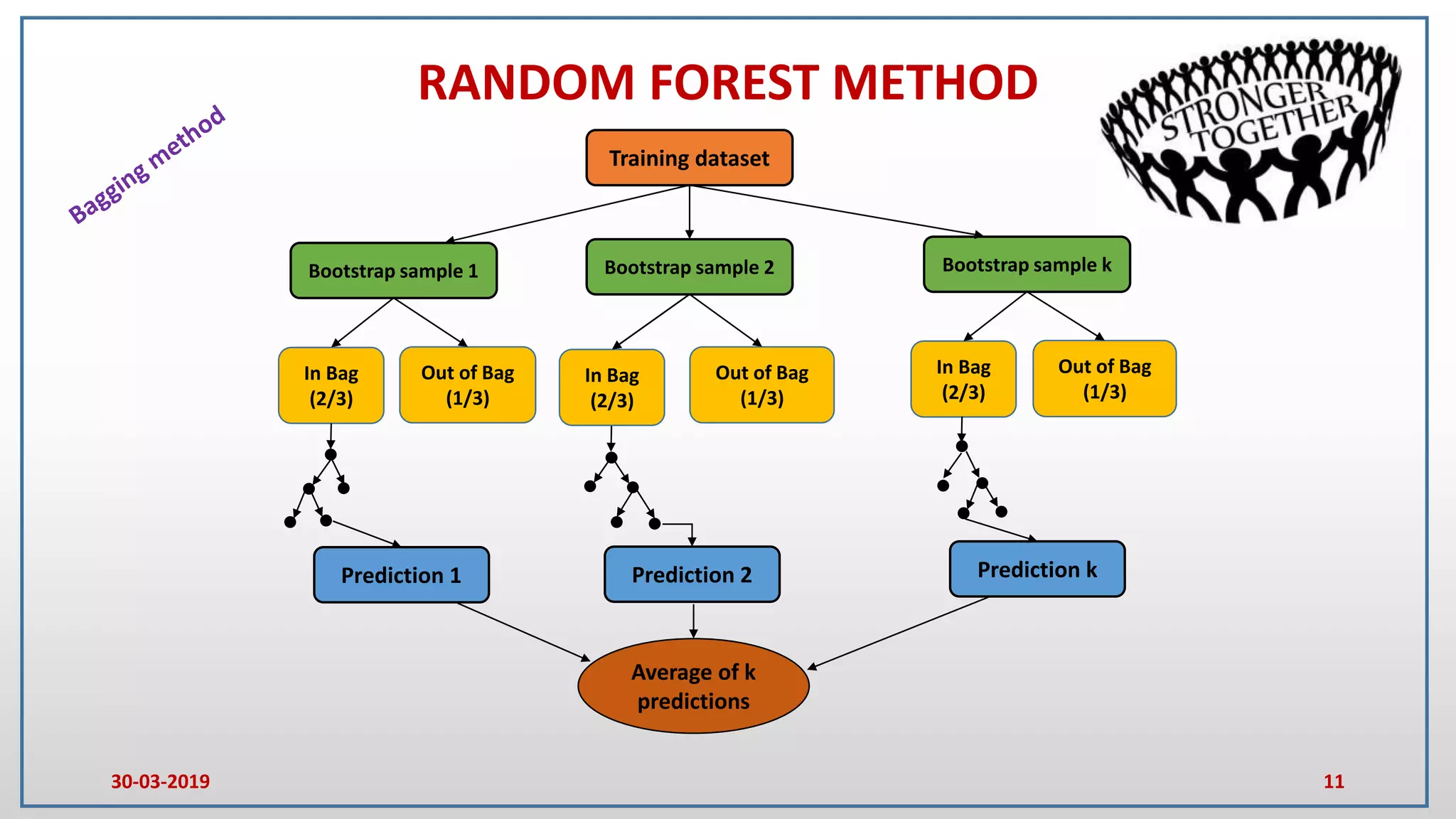

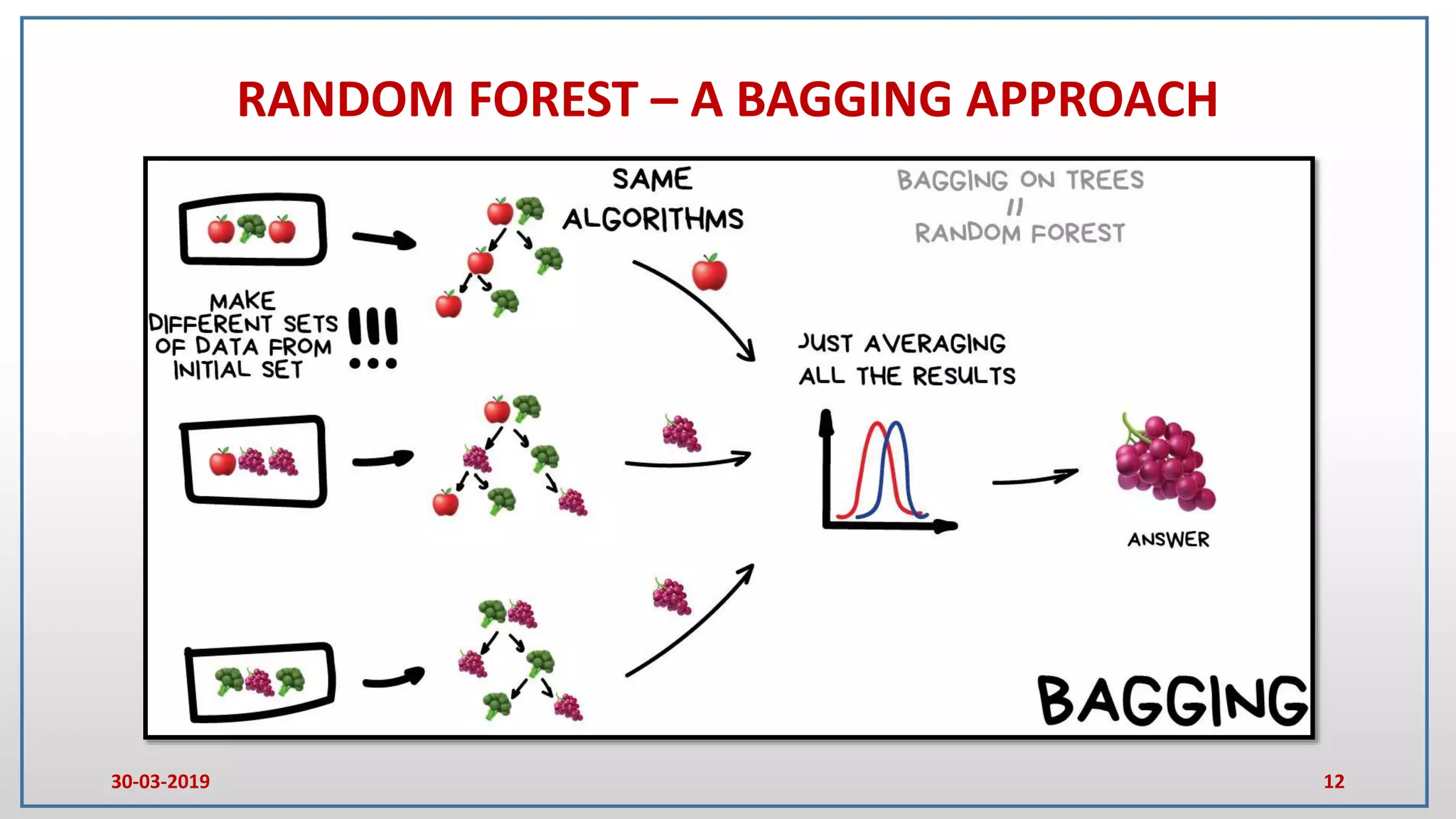

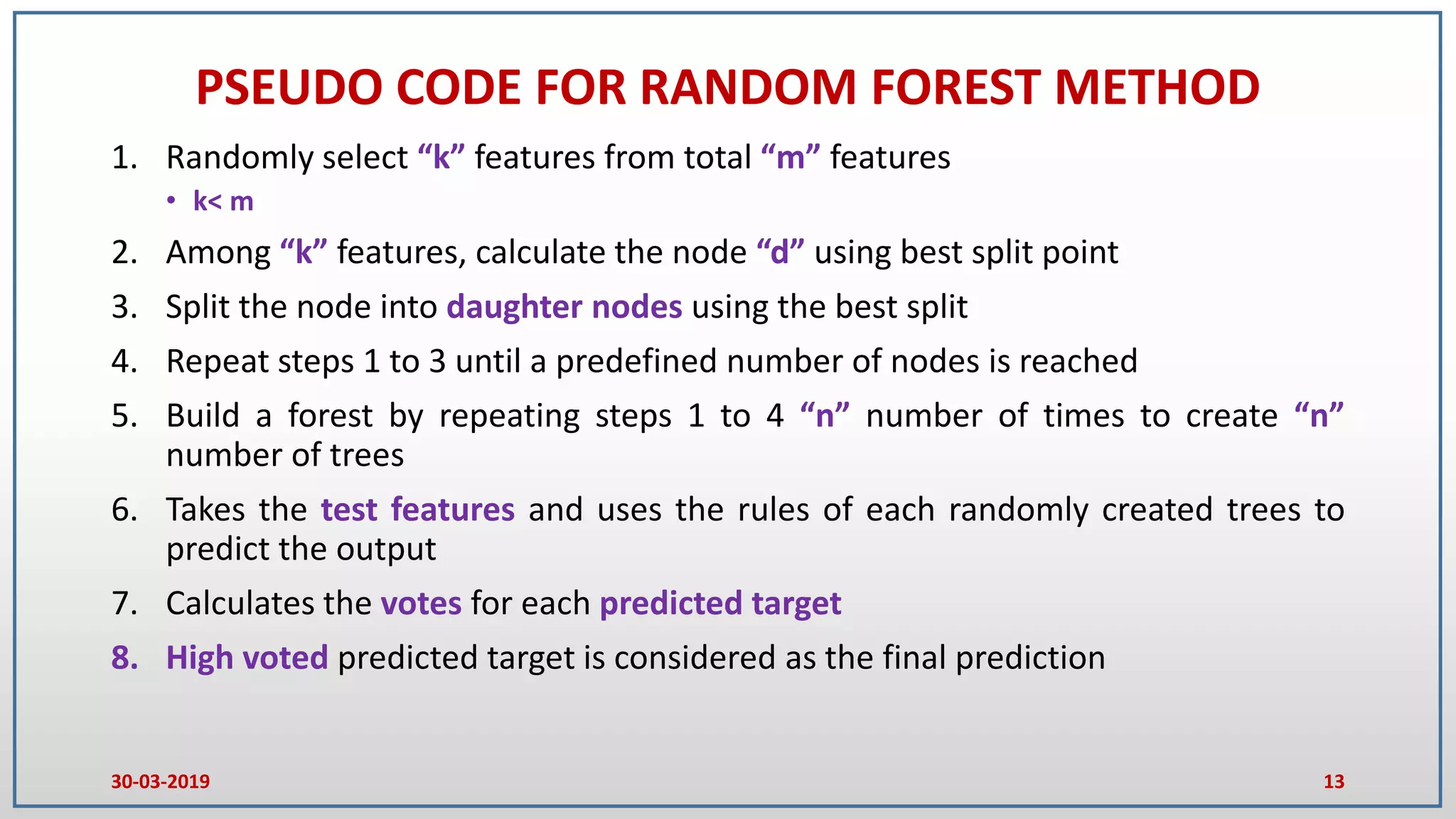

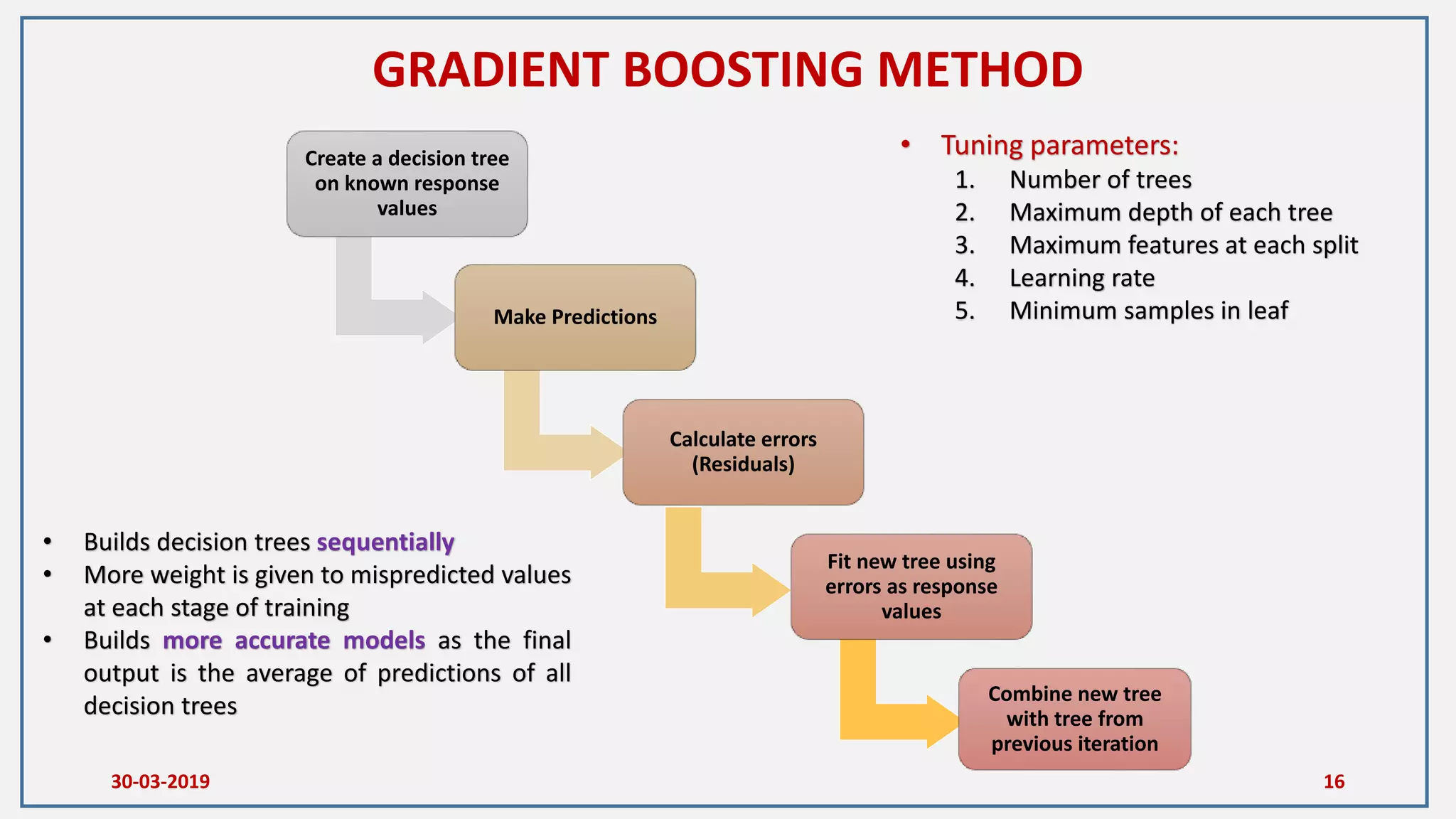

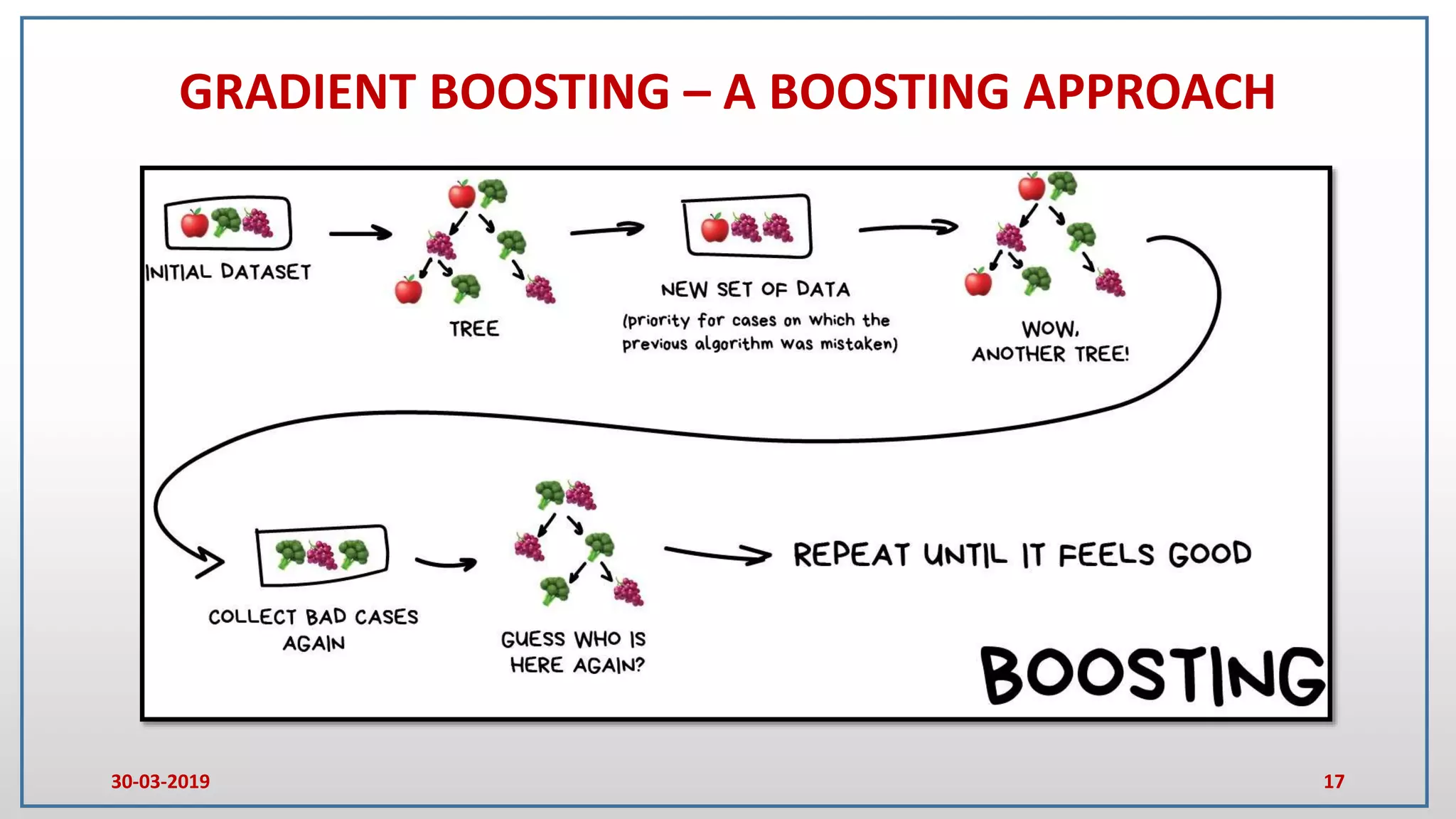

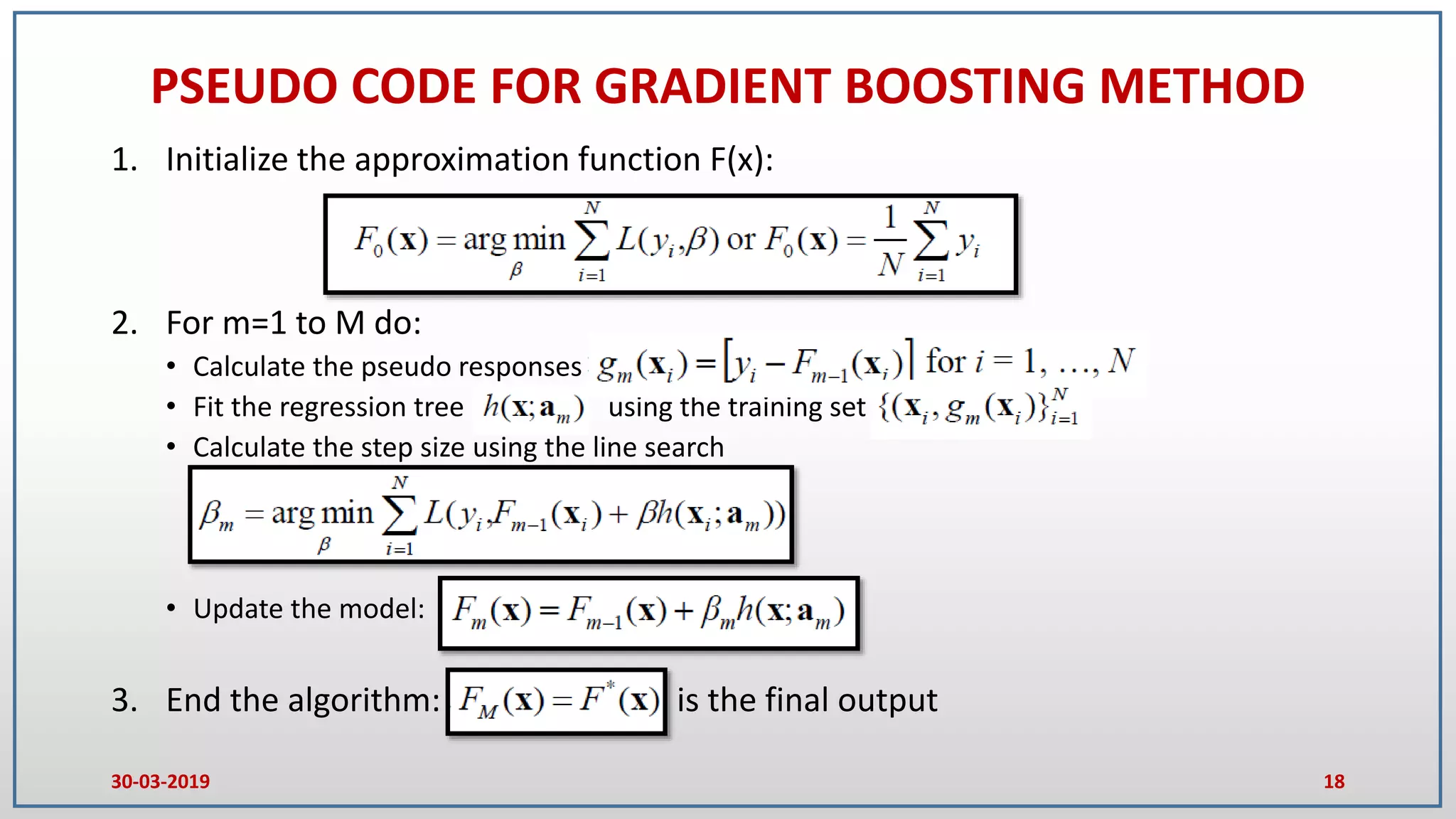

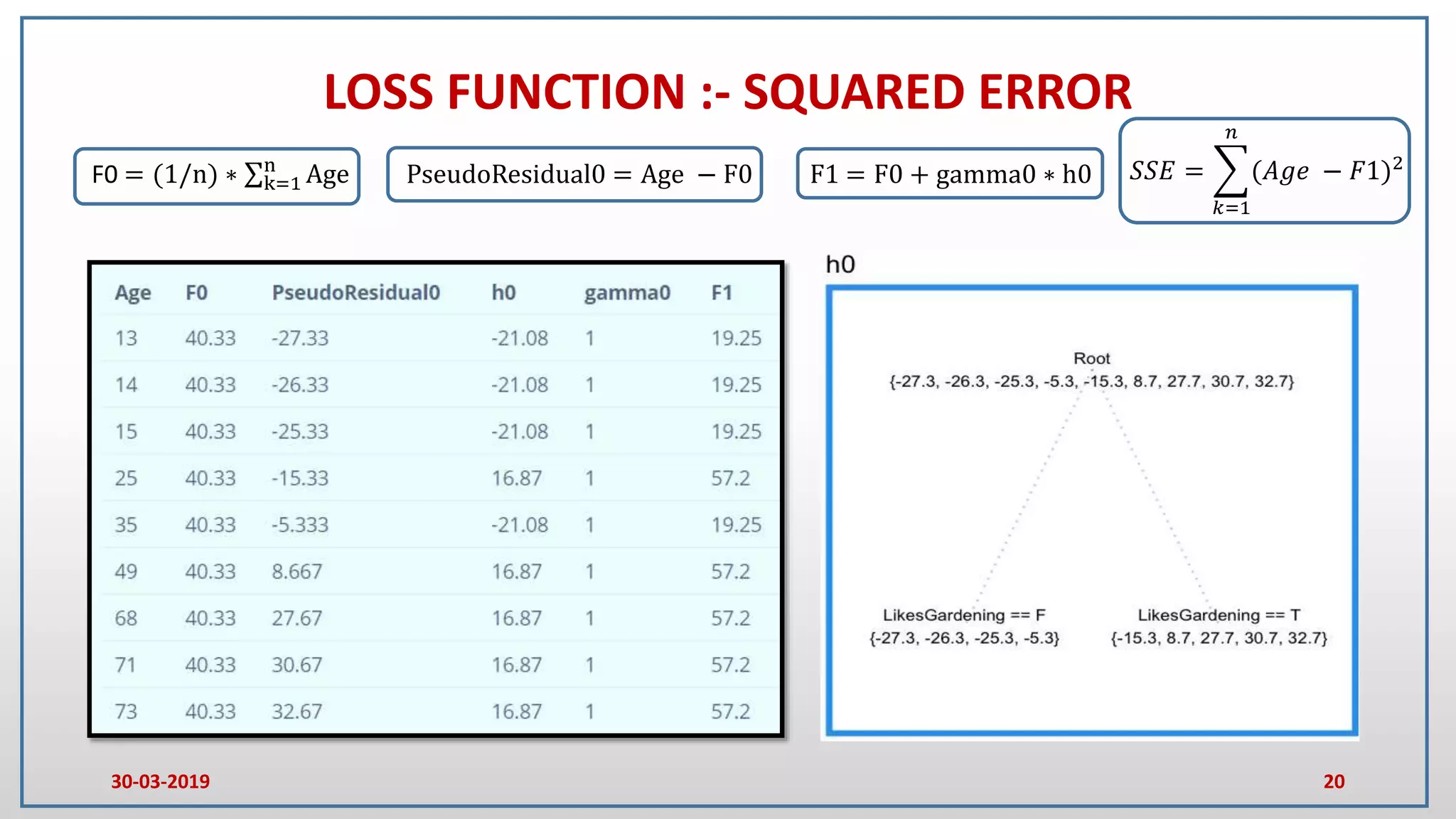

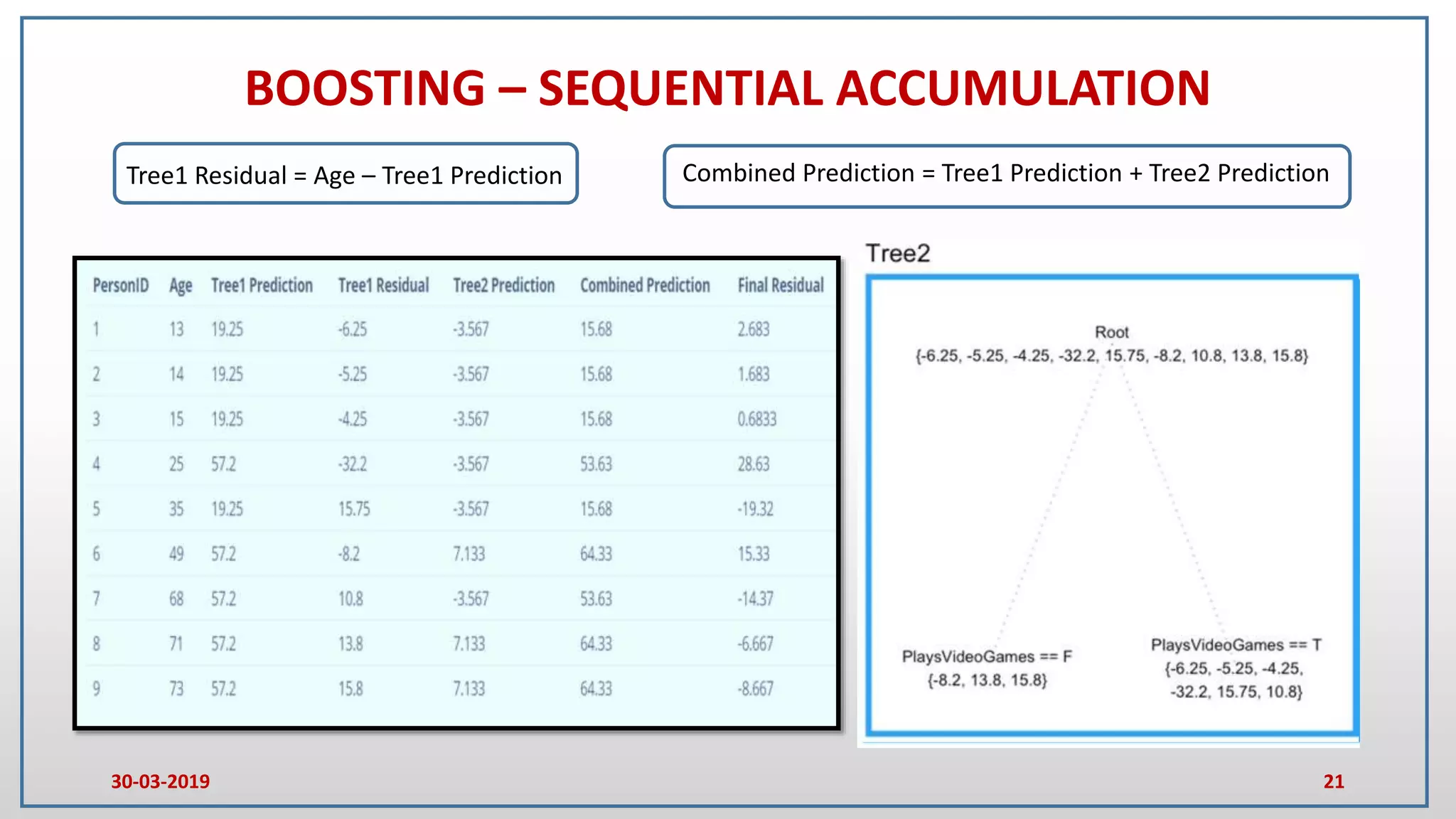

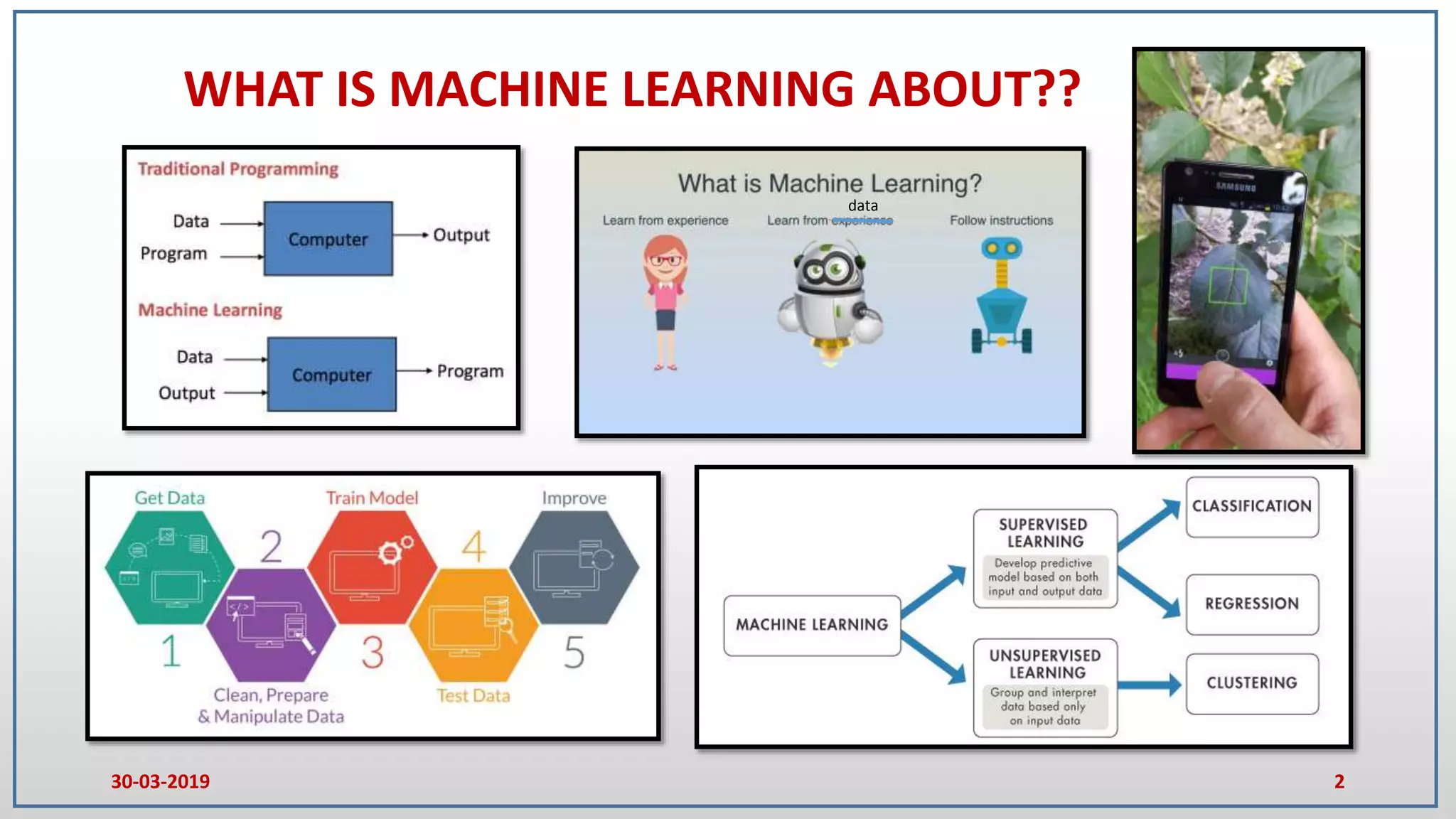

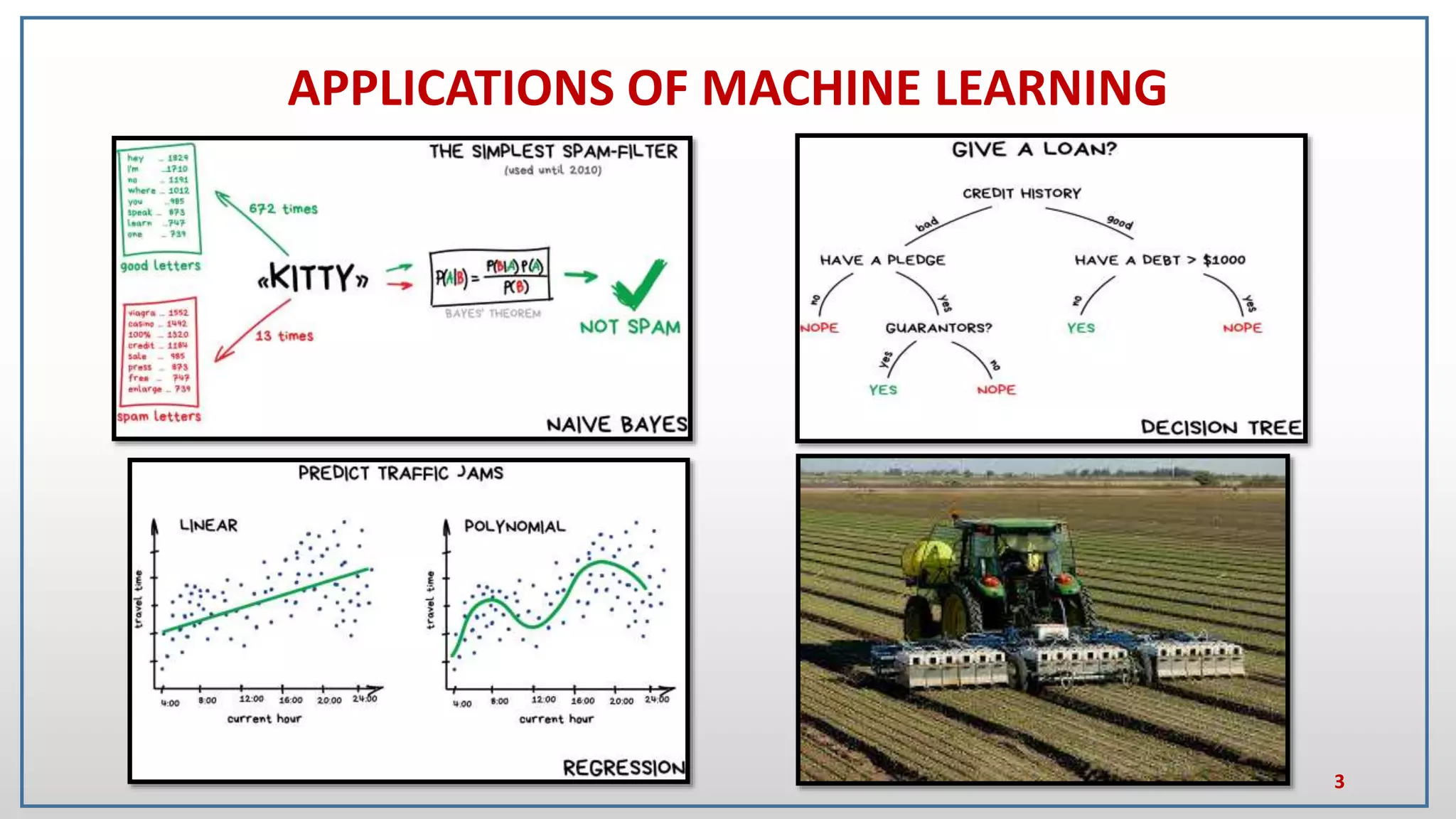

This document provides an introduction to random forest and gradient boosting methods. It explains the anatomy and functioning of decision trees, including their use in classification and regression tasks, and discusses the advantages and disadvantages of decision trees. It then describes ensemble learning through bagging and boosting, and the practical applications of these methods in fields such as banking and medicine. It also presents pseudocode for both the random forest and gradient boosting approaches.

![ANATOMY OF DECISION TREE

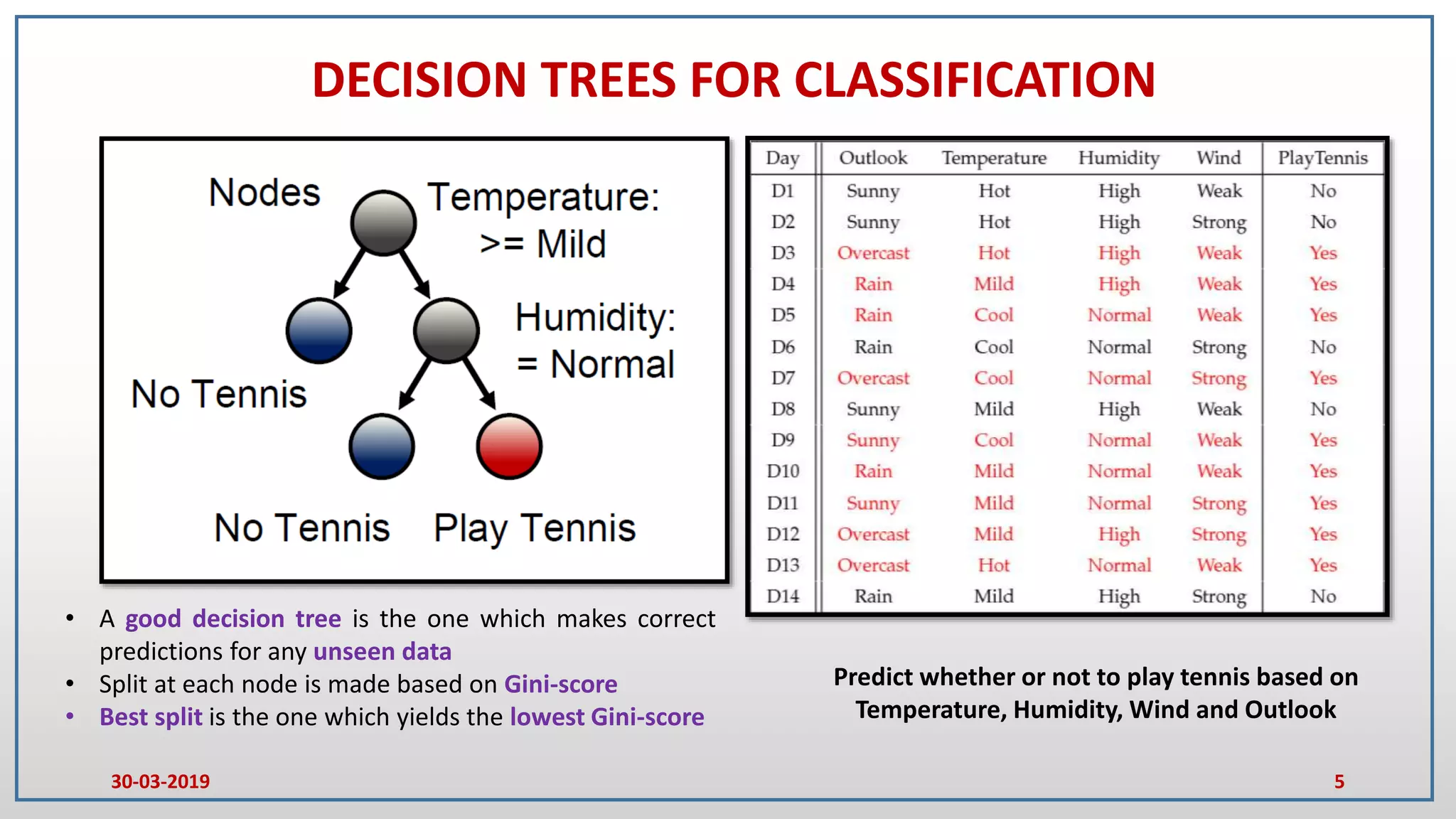

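• Decision trees that predict categorical results are called classification trees; those that predict continuous values are called regression trees

• At each internal node, a rule (a condition on the input variables) is tested, and a sample follows the branch whose condition it satisfies

• For a binary split, the rule at a node evaluates to a Boolean (True/False), for example X[i] <= threshold, which determines the child node a sample moves to

• Splitting is the process of dividing a node into two or more sub-nodes

• The root node represents the entire population (all samples in the dataset)

• A sub-node that splits into further sub-nodes is called a decision node

• Nodes that do not split are called terminal nodes or leaf nodes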
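The node anatomy described above can be sketched in Python. This is a minimal illustration, not the lecture's own code: the `Node` class, its field names and the `predict` helper are hypothetical, and the sketch assumes binary splits whose rule has the form X[i] <= threshold.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Node:
    """One node of a binary decision tree (hypothetical structure for illustration)."""
    feature: Optional[int] = None      # index i of the input variable X[i] tested here
    threshold: Optional[float] = None  # rule tested at this node: X[i] <= threshold
    left: Optional["Node"] = None      # child followed when the rule is True
    right: Optional["Node"] = None     # child followed when the rule is False
    label: Optional[str] = None        # prediction stored at a leaf node

    def is_leaf(self) -> bool:
        # A node that does not split is a terminal (leaf) node.
        return self.left is None and self.right is None


def predict(root: Node, x: Sequence[float]) -> str:
    """Walk from the root node down to a leaf, following the Boolean test at each node."""
    node = root
    while not node.is_leaf():
        goes_left = x[node.feature] <= node.threshold  # Boolean (True/False) outcome
        node = node.left if goes_left else node.right
    return node.label


# The root node splits on X[0]; its right child is a decision node that splits again on X[1].
tree = Node(
    feature=0, threshold=2.5,
    left=Node(label="class A"),
    right=Node(
        feature=1, threshold=1.0,
        left=Node(label="class B"),
        right=Node(label="class C"),
    ),
)

print(predict(tree, [1.0, 5.0]))  # class A
print(predict(tree, [3.0, 0.5]))  # class B
print(predict(tree, [3.0, 4.0]))  # class C
```

Every prediction starts at the root (the whole population) and ends at a leaf; the decision node in between is simply a sub-node that has its own rule.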

(Figure labels: root node, decision node, leaf node)

Decision tree for a regression dataset, legend:

• X[i]: input variables in the dataset

• MSE: mean squared error of all samples in a node, measured around the node's value

• Samples: total number of samples in a node

• Value: average of the output variable over all samples in a node
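To make these regression-tree annotations concrete, the sketch below computes samples, value and MSE for the target values in one node, and then picks a split threshold on a single input variable by minimising the sample-weighted MSE of the two child nodes (the usual CART-style least-squares criterion). The helper names `node_stats` and `best_split` are hypothetical, the toy data are made up for illustration, and only rules of the form X[i] <= t over observed values of X[i] are searched.

```python
from typing import Sequence, Tuple


def node_stats(y: Sequence[float]) -> Tuple[int, float, float]:
    """Return (samples, value, mse) for the target values that reach one node."""
    samples = len(y)
    value = sum(y) / samples                          # average output value in the node
    mse = sum((v - value) ** 2 for v in y) / samples  # mean squared error around that average
    return samples, value, mse


def best_split(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Exhaustively search thresholds t on one input variable X[i] and return the
    (t, weighted_mse) pair whose rule X[i] <= t gives the lowest sample-weighted
    MSE over the two child nodes."""
    n = len(y)
    best_t, best_score = float("nan"), float("inf")
    # Skip the largest observed value: it would send every sample to the left child.
    for t in sorted(set(x))[:-1]:
        left = [yv for xv, yv in zip(x, y) if xv <= t]
        right = [yv for xv, yv in zip(x, y) if xv > t]
        score = (len(left) * node_stats(left)[2] + len(right) * node_stats(right)[2]) / n
        if score < best_score:
            best_t, best_score = t, score
    return best_t, best_score


# Made-up toy data: one input variable X[0] and a continuous target.
x0 = [1, 2, 3, 4, 5, 6]
y = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]

samples, value, mse = node_stats(y)
print(f"root: samples = {samples}, value = {value:.3f}, mse = {mse:.3f}")
# root: samples = 6, value = 3.000, mse = 4.027
t, score = best_split(x0, y)
print(f"best rule: X[0] <= {t}, weighted child MSE = {score:.4f}")
# best rule: X[0] <= 3, weighted child MSE = 0.0267
```

Repeating this search over every input variable, and recursing into each child until a stopping rule is met, is one way to grow the full tree whose nodes carry the annotations listed above.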

30-03-2019](https://image.slidesharecdn.com/introductiontorandomforestandgradientboostingmethods-alecture-190402015955/75/Introduction-to-random-forest-and-gradient-boosting-methods-a-lecture-4-2048.jpg)