Providing Choice and Control In a 360 Degree Environment For Those with Low or No Hearing

- 1. PROVIDING CHOICE AND CONTROL IN A 360 DEGREE VIDEO ENVIRONMENT FOR THOSE WITH LOW OR NO HEARING Max Evjen (@cantus94) #MW19-CC

- 2. War and Speech Exhibition. Virginia Tech: content creators. Digital Scholarship Lab: 360 video and VR. Evaluation plan. World War One in Vauquois Project (@cantus94) #MW19-CC

- 3. DESIGN CHALLENGE: Accessibility in an immersive content area. Desire for self-directed participants. Content shows up in different places; narration has no visual cues. No captioning, and the video doesn't allow for it in any significant way. Where to put audio content: in different places? (@cantus94) #MW19-CC

- 4. PROPOSED SOLUTION: Let participants control where they look. Put copy in timed slides coordinated with the 360 video, on a technology-agnostic platform. Easy to create. AT (Appropriate Technology). We had the copy (though incomplete). (@cantus94) #MW19-CC

- 5. IMPLEMENTING THE SOLUTION: Needed to complete copy edits. Block copy into slides. Quality testing. Set timing (issue with slide timing). Export to video editing software (Kaltura). Embed on video hosting site (MediaSpace), accessed by a link. (@cantus94) #MW19-CC

- 6. EVALUATION QUESTIONS: How do visitors use the tablets? Are visitors satisfied or not with the experience? What else might visitors have to say about the experience? (@cantus94) #MW19-CC

- 7. METHODOLOGIES: Survey. Behavior sampling (video observation). Target n=10. (@cantus94) #MW19-CC

- 8. EARLY RESULTS (SURVEYS, N=2): Helpful, yes, but with issues. Learned about underground warfare; timing of slides is crucial. One would recommend it; the other recommends the video. Looking at the slides takes attention away from some of the video; text appearing in the video complicates reading the slides. (@cantus94) #MW19-CC

- 9. EARLY RESULTS (BEHAVIOR SAMPLING, N=2): Participants spent all of the time in one place; read the tablet most of the time; looked up periodically to see visuals; did not speak during the experience. (@cantus94) #MW19-CC

- 10. PROVIDING CHOICE AND CONTROL IN A 360 DEGREE VIDEO ENVIRONMENT FOR THOSE WITH LOW OR NO HEARING Max Evjen (@cantus94) #MW19-CC

Editor's Notes

- Hello everyone, I'm Max Evjen, and I'm here to talk about Providing Choice and Control in a 360 Degree Video Environment for Those with Low or No Hearing. I'd appreciate it if you would all use the hashtag #MW19-CC when tweeting about this talk.

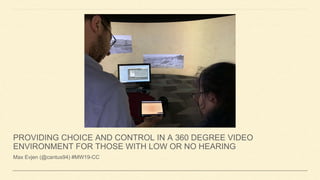

- This project originated with the War and Speech: Propaganda, Patriotism, and Dissent in the Great War exhibition at the Michigan State University Museum, where I am the Performance and Digital Engagement Specialist. The curator of that exhibition discovered that people at Virginia Tech had developed a 360 degree video and VR experience about World War One in Vauquois, a town in northeastern France where underground warfare between the French and the Germans obliterated the town. Virginia Tech was offering these experiences to museums free of charge. The MSU Museum does not have a 360 degree room or VR equipment, but a new Digital Scholarship Lab was being built in the MSU Main Library, across the street from the Museum, so we brought the experiences there as the lab's inaugural offerings, along with an evaluation plan. In time, Terence O'Neil, the Director of the Digital Scholarship Lab, and I set out to make the 20-minute, 360 degree video accessible to those with low or no hearing, since it depends heavily on audio narration.

- Our design challenges were as follows. We wanted an equitable experience for those with low or no hearing, and we wanted everyone to be self-directed. Content shows up in various places on the 360 degree screen, and the narration follows almost no visual cues. There is no captioning, and the video doesn't allow for it in any significant way. Besides, where would we put captions, when the content pops up all over the screen?

- In the course of one meeting, Terence and I proposed a fairly easy solution. We wanted participants to keep control over where they looked, even with captions. So we decided to put the copy of the narration into slides that would be coordinated with the narration and that could be accessed on any digital platform. We realized we already had the technology to do this, and we had the copy of the narration from the Virginia Tech team. Remediation efforts are often time-consuming and costly, but in this instance we quickly realized we could do the remediation quite easily.

- To implement the solution, we first needed to make copy edits, since some of the copy didn't match the narration as it was finally recorded. Then we had to separate the copy into slides; a student, Jacob Harrison, did this work. Terence and I did quality testing to ensure all of the copy matched. Next we tried to set the timing in slide platforms, but we couldn't find an easy way to make the slides advance exactly when the video's narration needed them to. Instead, we recorded ourselves advancing the slides in concert with the video in Kaltura, which is available to MSU students, staff, and faculty. We then embedded that recording in MediaSpace, MSU's video hosting site, where it can be accessed on any platform via a link.

- We sought to test this initiative with individuals with low or no hearing, guided by the following evaluation questions: How do visitors use the tablets? Are visitors satisfied or not with the experience? What else might visitors have to say about the experience?

- To address those questions, we chose two methodologies: a survey questionnaire and behavior sampling through video observation. We set a target n of 10 because we wanted a small, quick round of feedback.

- I was only able to get two participants with low hearing to test the initiative, on the day before I left for this conference. We are still collecting data, so these are early results.

- The behavior sampling video still has to be coded, but so far it primarily shows the following. These participants spent all of their time in one place, but this might be because one of our two testing devices failed that day, so both participants had to share one tablet. They spent most of the time looking at the tablet and looked up at the video periodically. The participants did not speak during the entire 20-minute experience.

- We are still collecting data, and look forward to future results for the project. Thank you!