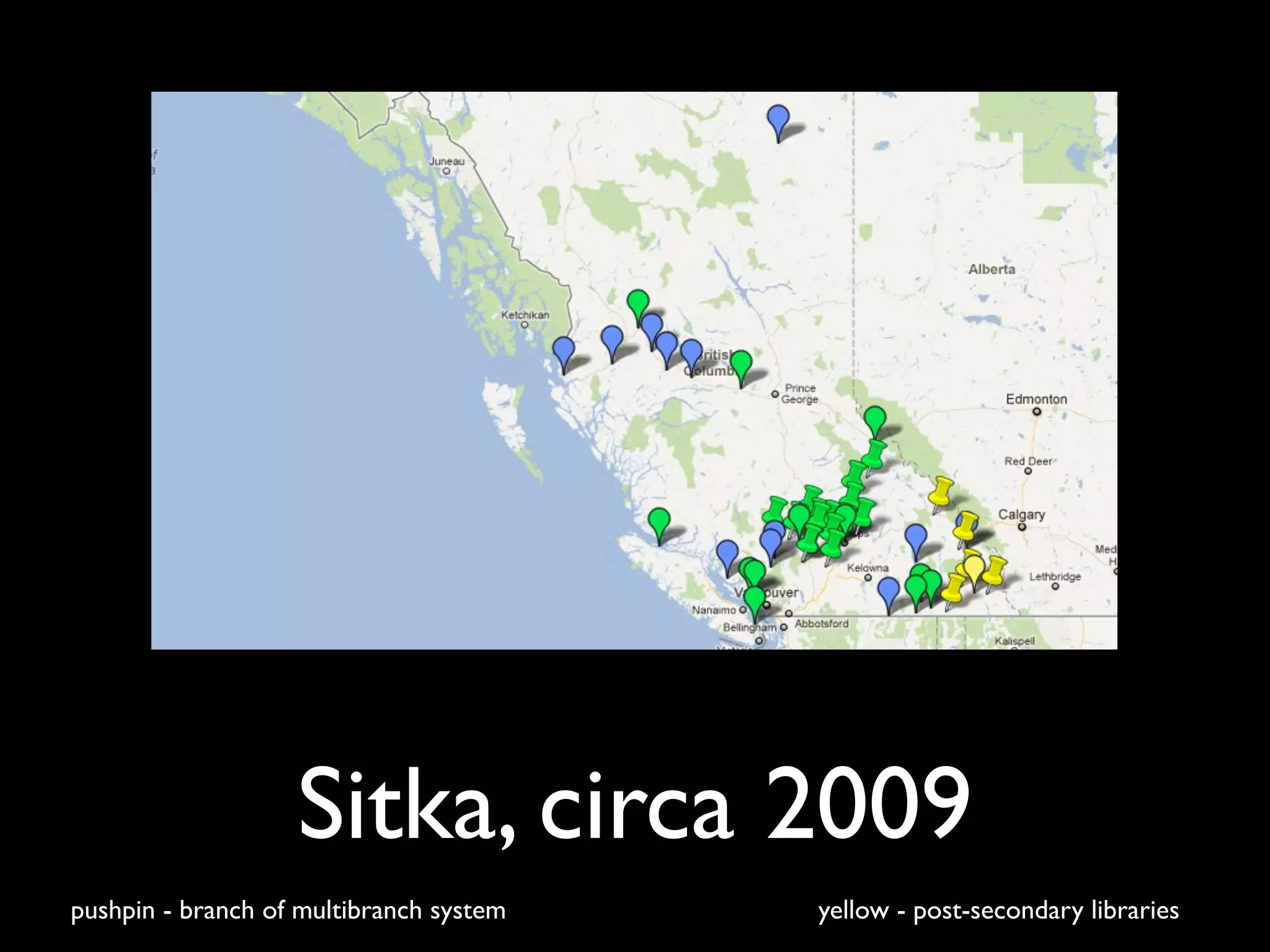

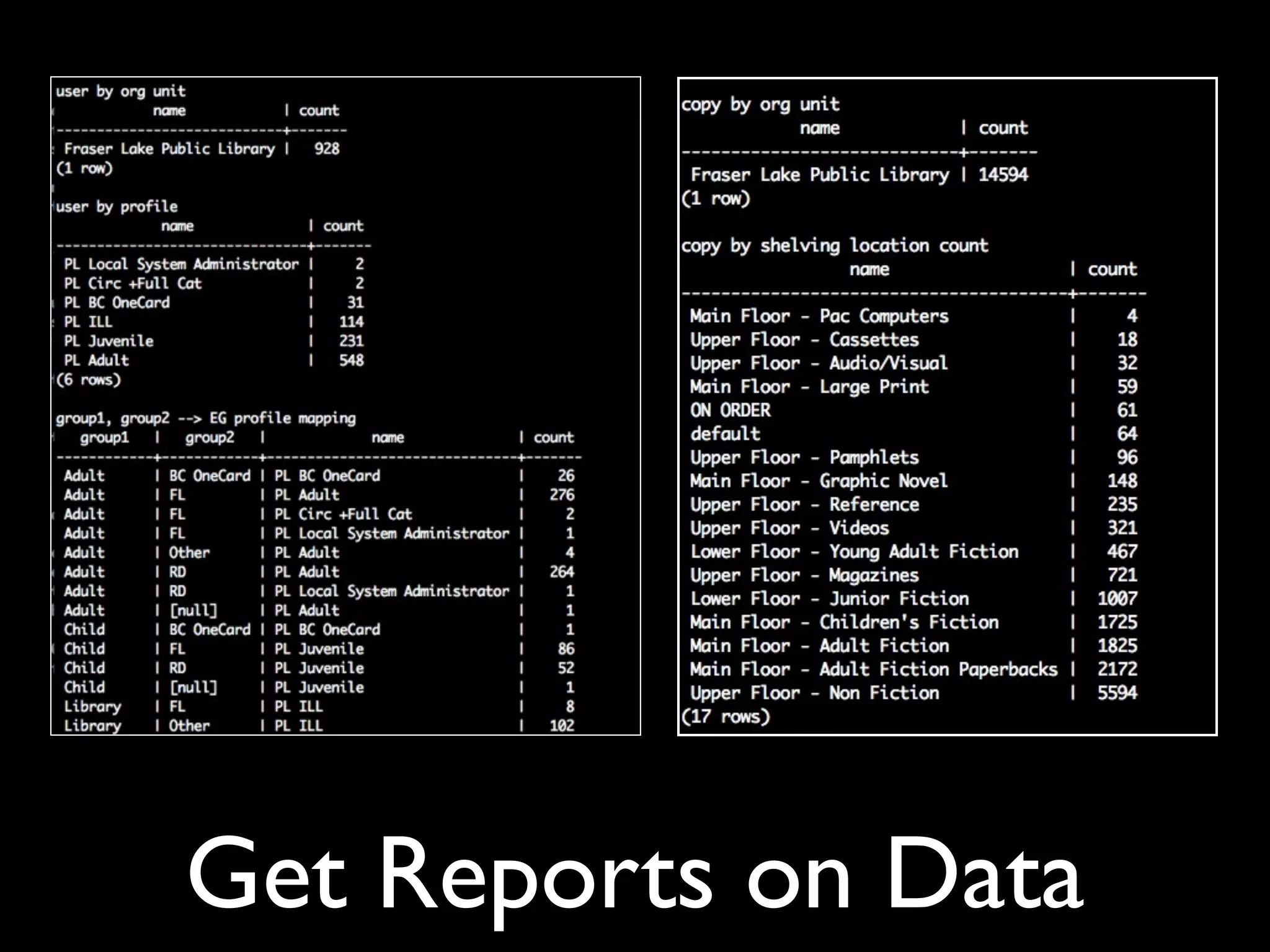

This document discusses lessons learned from library data migration projects. It offers tips for libraries undertaking a migration: clean up data before migrating, develop a clear project plan, test early and often, standardize scripts, and use staging tables. It also emphasizes staff training, managing expectations, and securing management support. The document is illustrated with photos related to libraries, data migration, and technology.