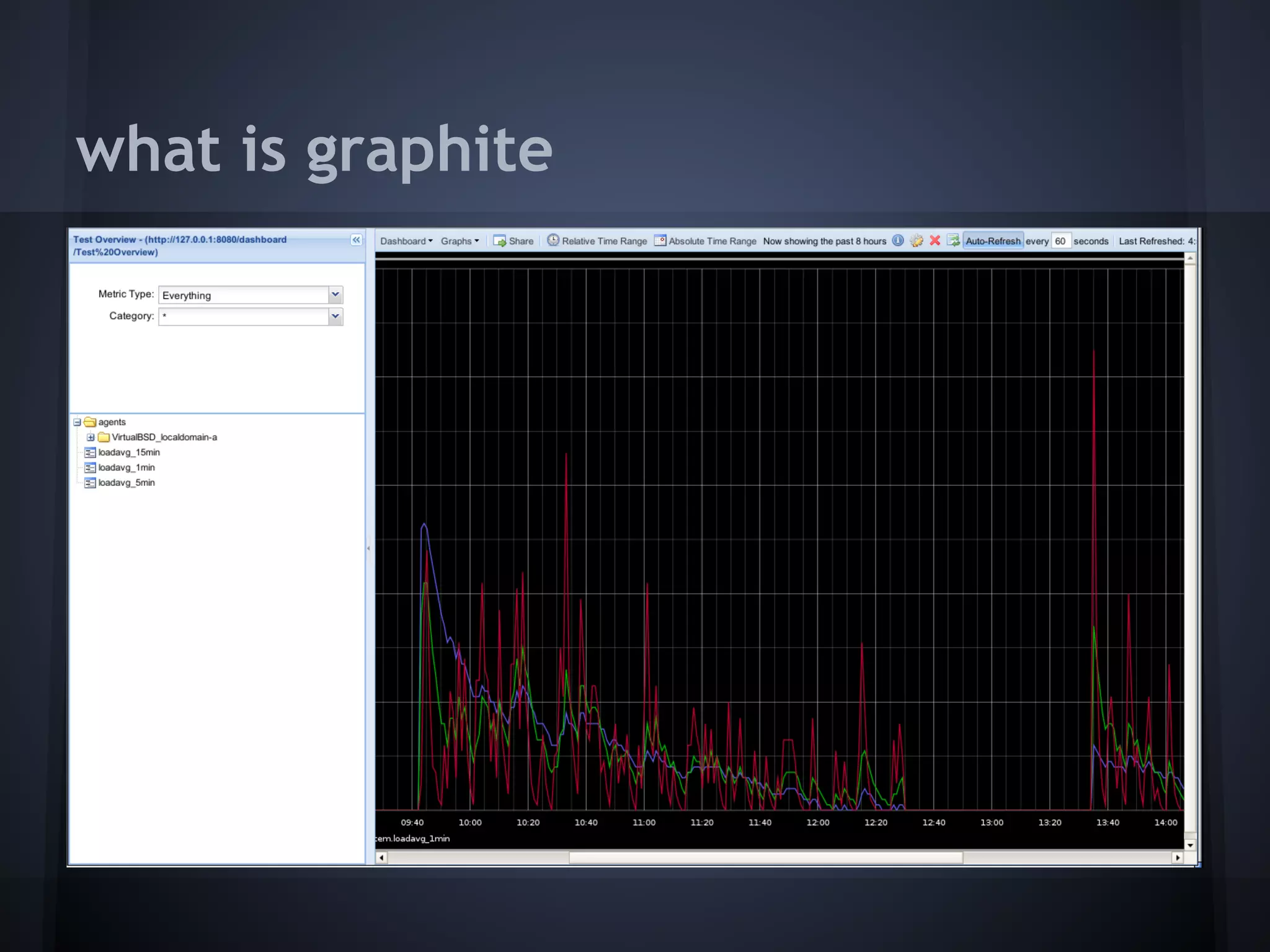

This document discusses Graphite, an open-source tool for monitoring and visualizing real-time performance data. It begins by introducing Graphite and its three components: a web front end (graphite-web), a processing backend (Carbon), and a time-series database (Whisper). It then covers which kinds of data are worth capturing from applications and systems, such as function execution times, database queries, memory usage, and network traffic. Finally, it explains how to read Graphite charts to spot deviations, jitter, and other anomalies that can help debug performance problems. A demo of using Graphite to monitor a WordPress site is also mentioned.
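To make "capturing data" concrete, here is a minimal sketch of timing a block of application code and shipping the result to Graphite. It assumes a Carbon daemon listening for Graphite's plaintext protocol (one `<metric.path> <value> <unix_timestamp>` line per data point) on TCP port 2003, its conventional default. The host, the metric name `myapp.render_page.ms`, and the helper names `format_metric`, `send_metrics`, and `timed` are hypothetical, chosen only for illustration.

```python
import socket
import time
from contextlib import contextmanager
from typing import List, Optional

# Assumption: a Carbon plaintext listener on localhost at its conventional port 2003.
CARBON_HOST = "localhost"
CARBON_PORT = 2003


def format_metric(path: str, value: float, timestamp: Optional[int] = None) -> str:
    """Build one plaintext-protocol line: '<path> <value> <timestamp>\\n'."""
    if timestamp is None:
        timestamp = int(time.time())  # Carbon expects Unix epoch seconds
    return f"{path} {value} {timestamp}\n"


def send_metrics(lines: List[str], host: str = CARBON_HOST,
                 port: int = CARBON_PORT, timeout: float = 2.0) -> None:
    """Send a batch of pre-formatted lines to Carbon over a single TCP connection."""
    payload = "".join(lines).encode("ascii")
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(payload)


@contextmanager
def timed(path: str, sink: List[str]):
    """Measure a block's wall-clock time in milliseconds and append a metric line to sink."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        sink.append(format_metric(path, round(elapsed_ms, 3)))


if __name__ == "__main__":
    batch: List[str] = []
    with timed("myapp.render_page.ms", batch):  # hypothetical metric name
        sum(range(100_000))  # stand-in for the function being measured
    try:
        send_metrics(batch)
    except OSError as exc:  # no Carbon daemon reachable
        print(f"could not reach Carbon: {exc}")
```

Batching lines and sending them over one connection keeps per-measurement overhead low; in a busy application you would typically flush the batch periodically rather than after every timed call.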