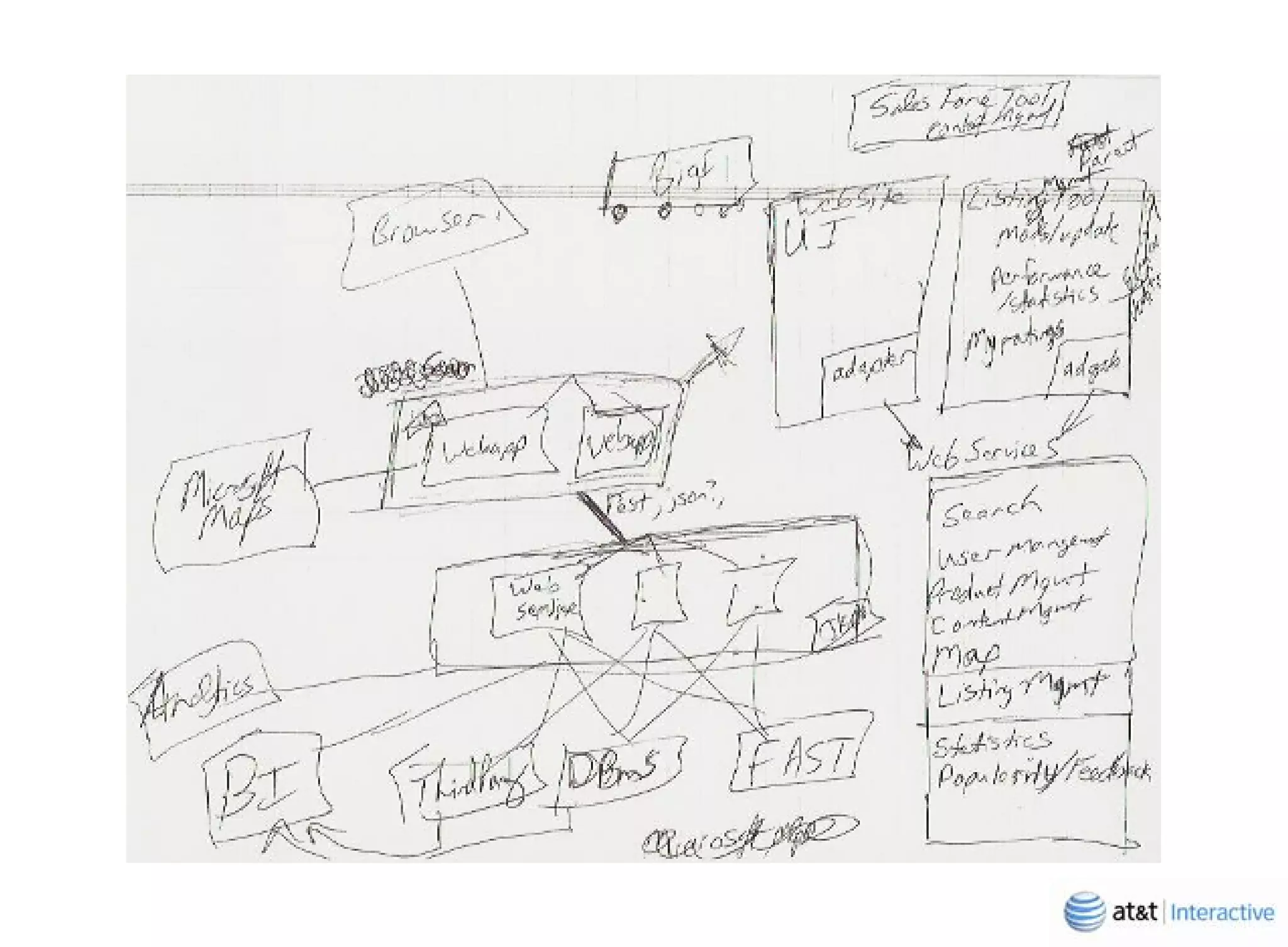

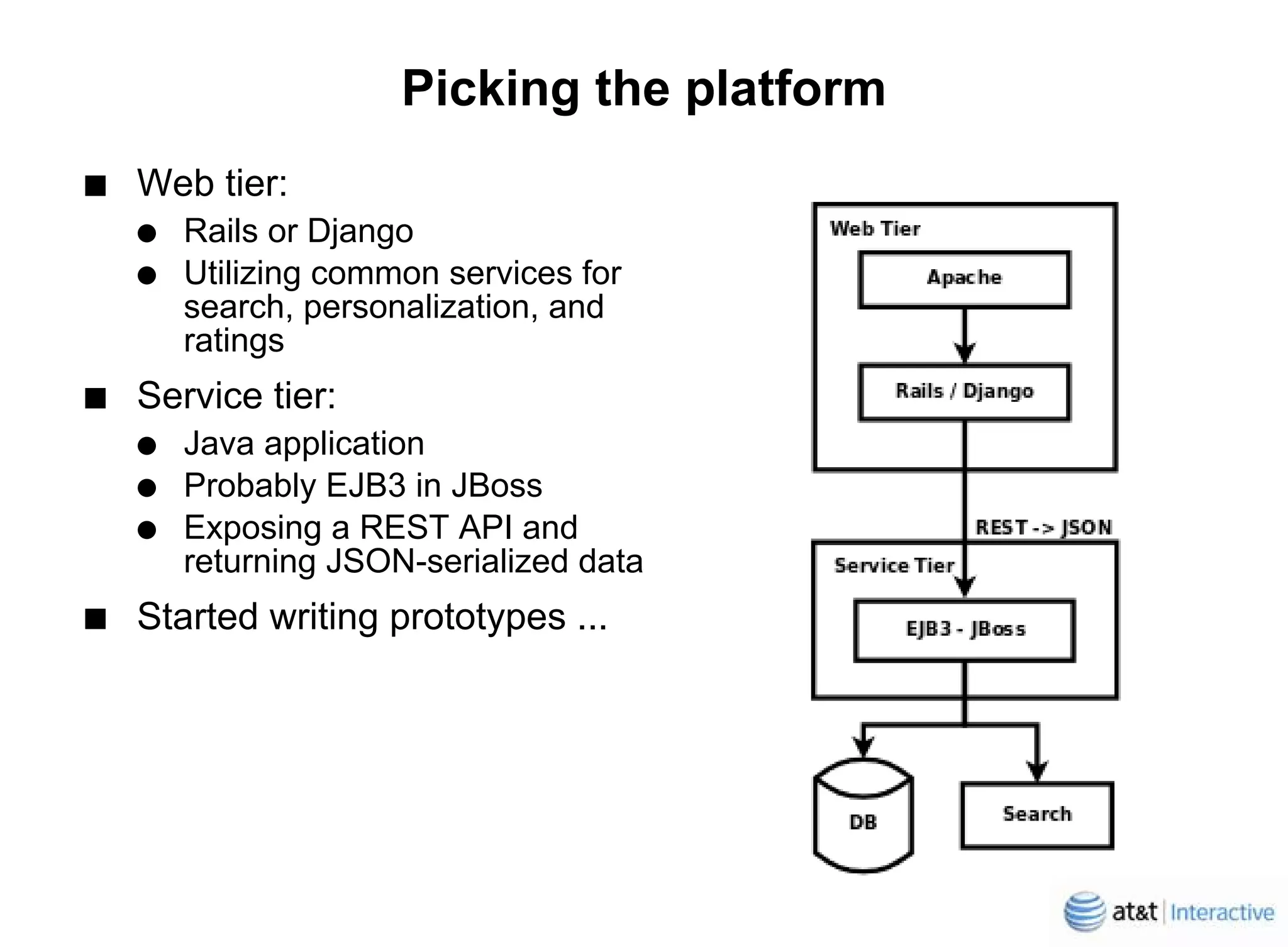

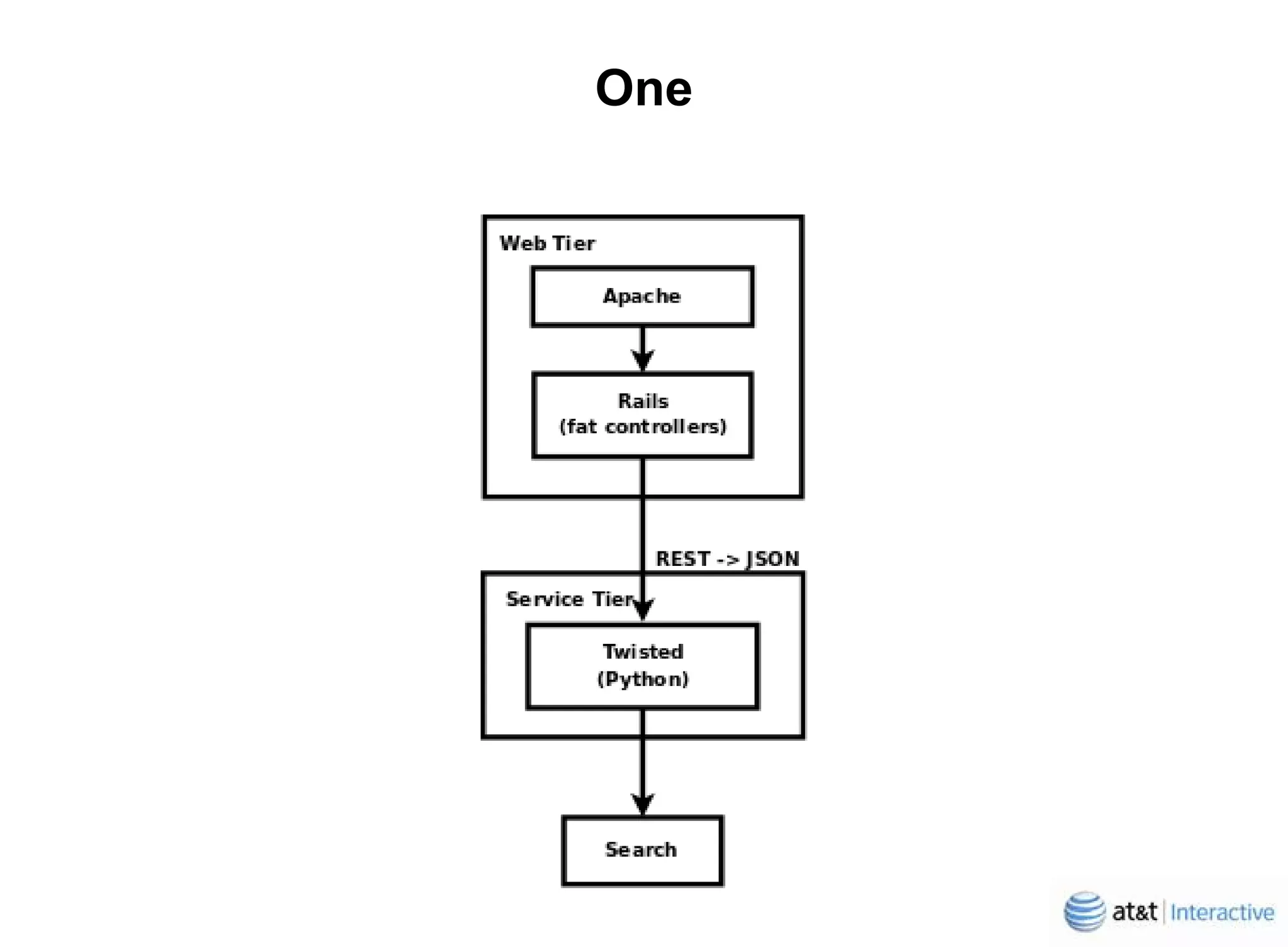

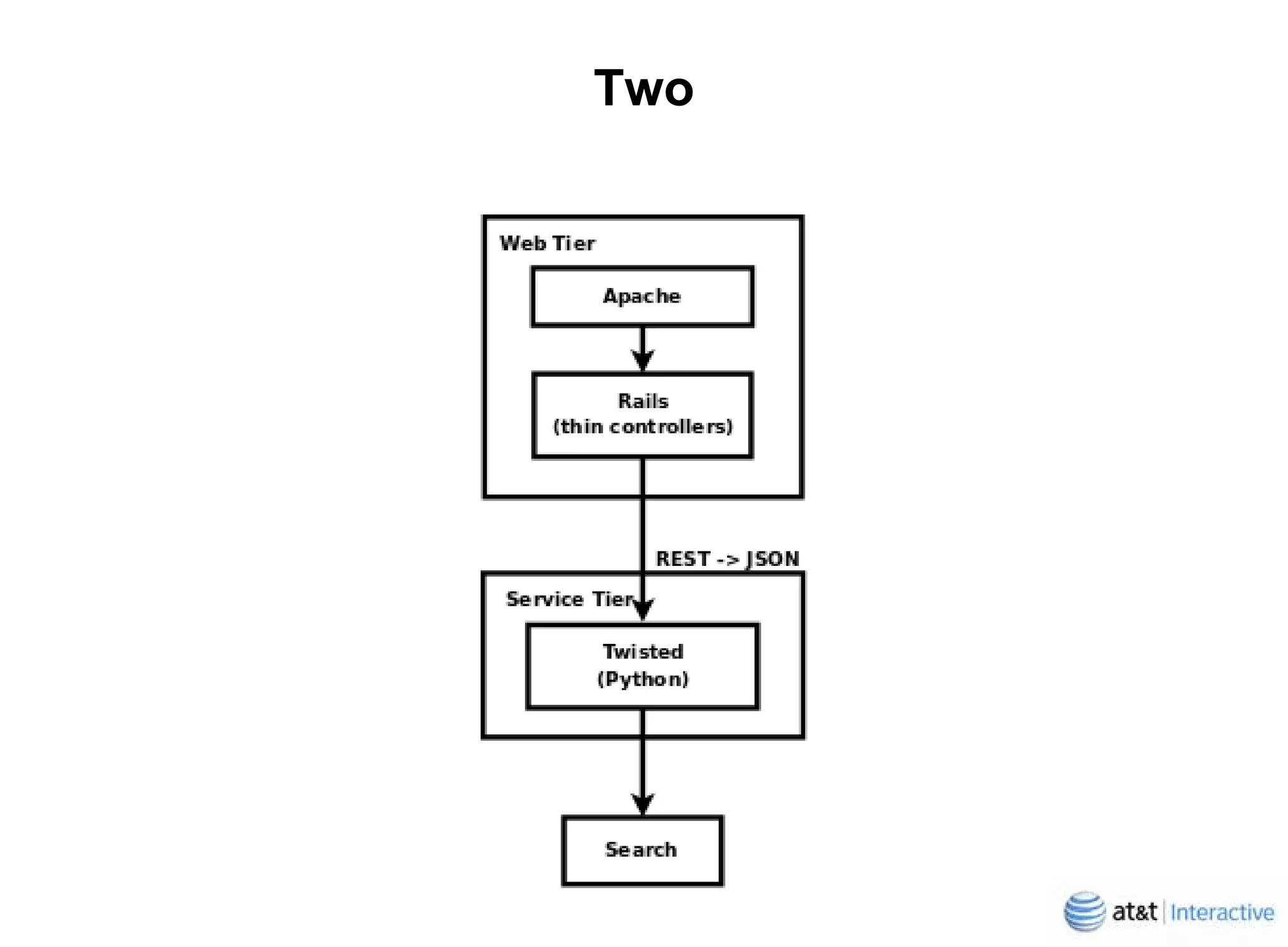

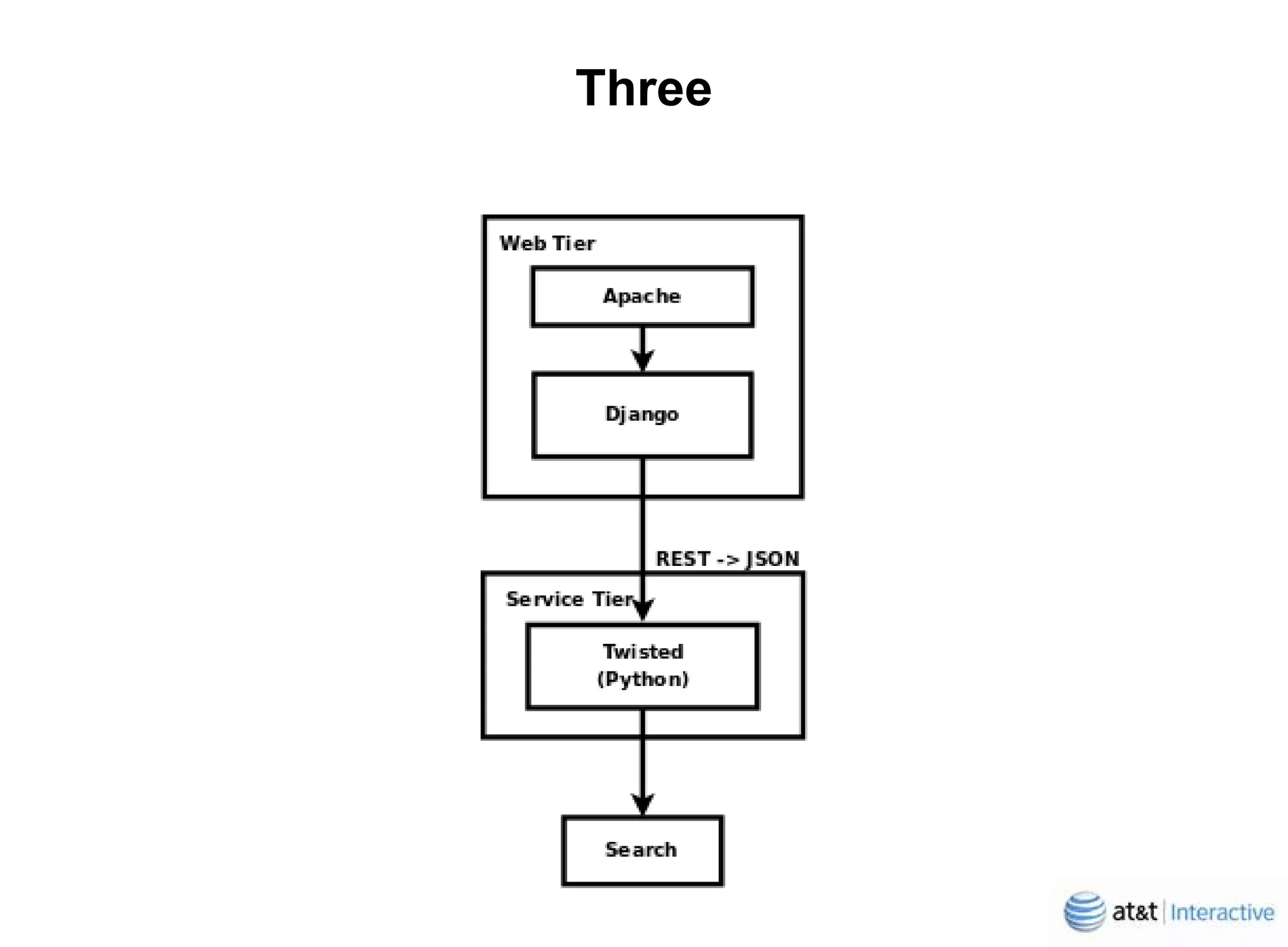

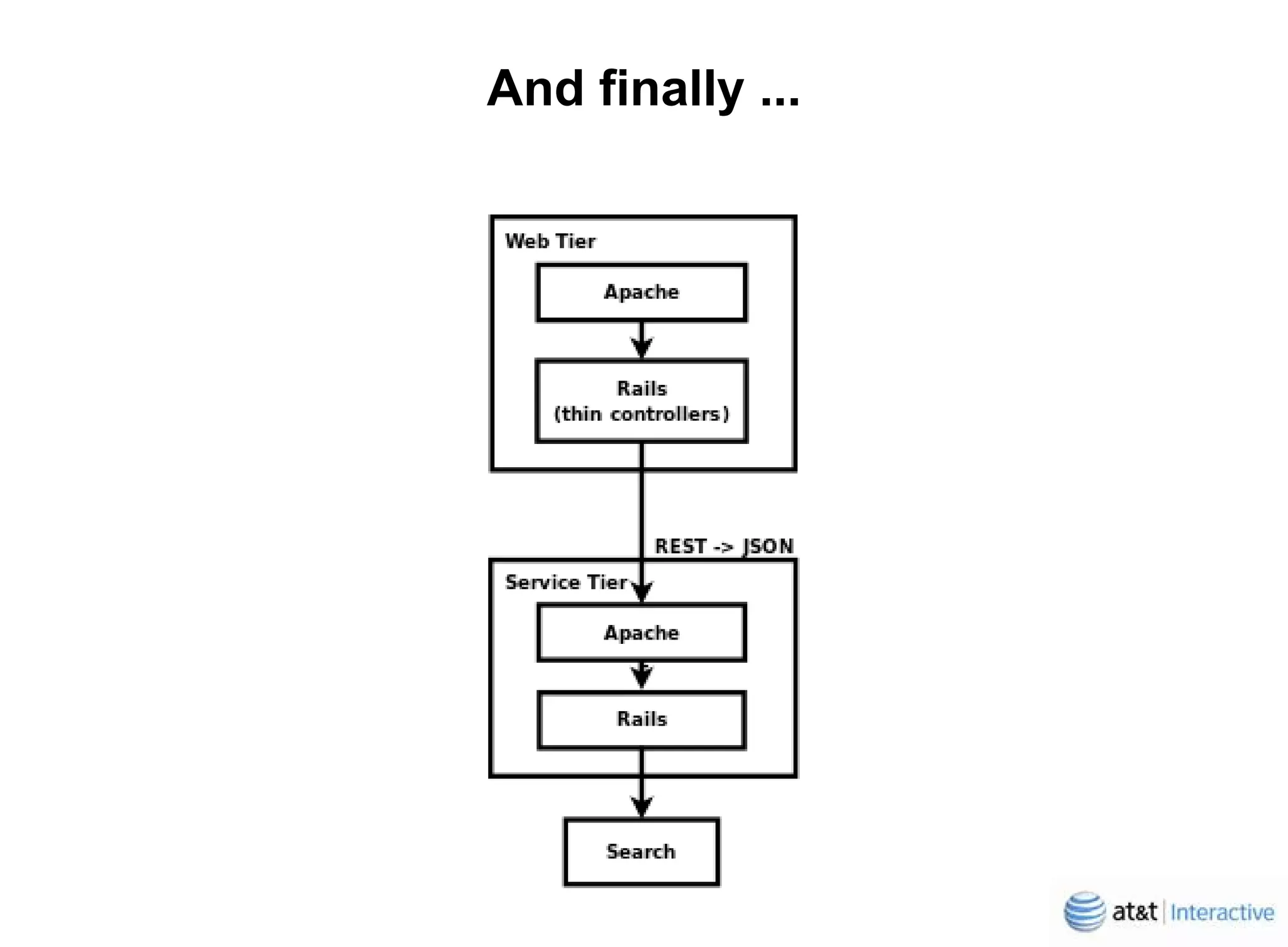

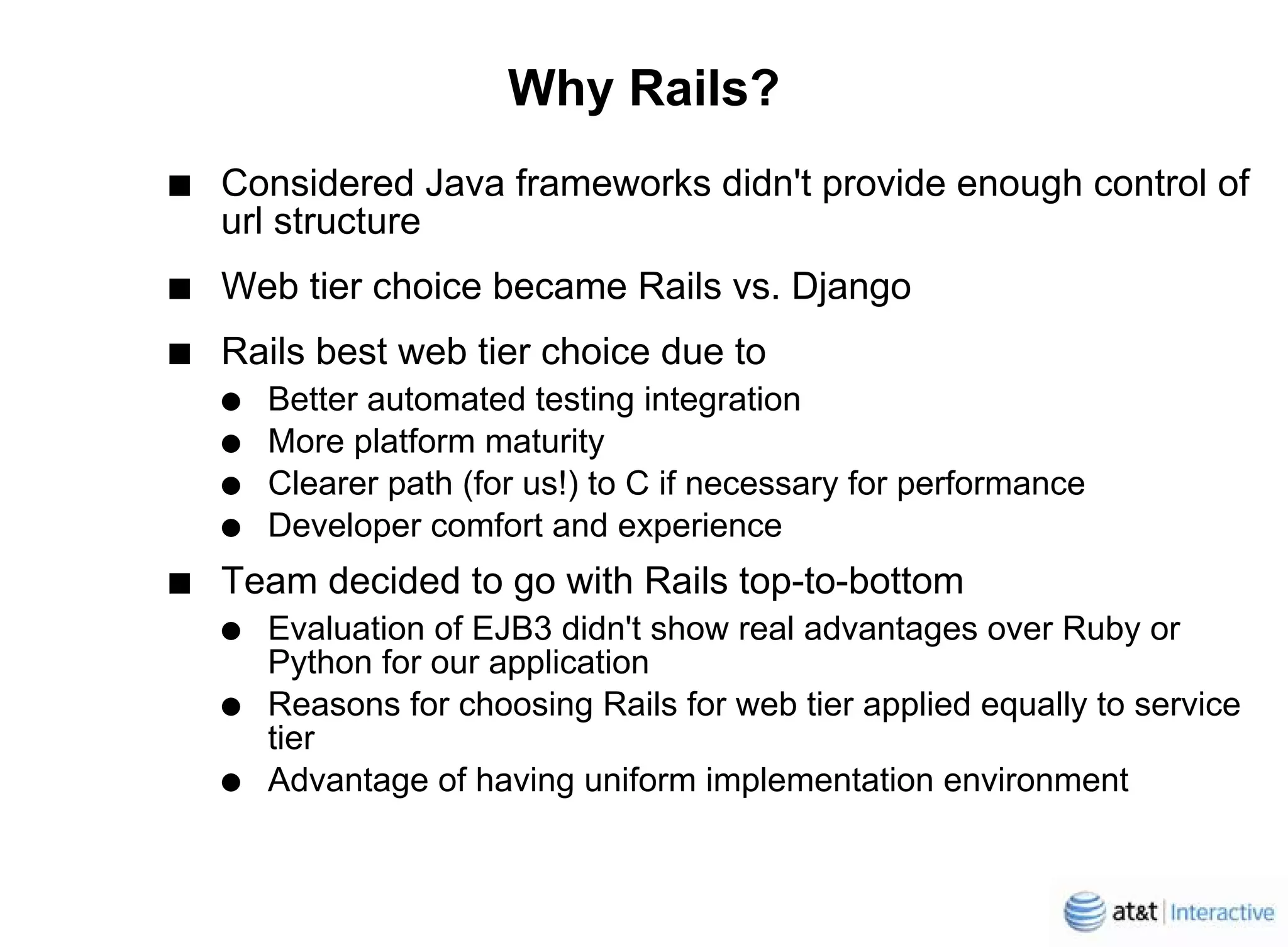

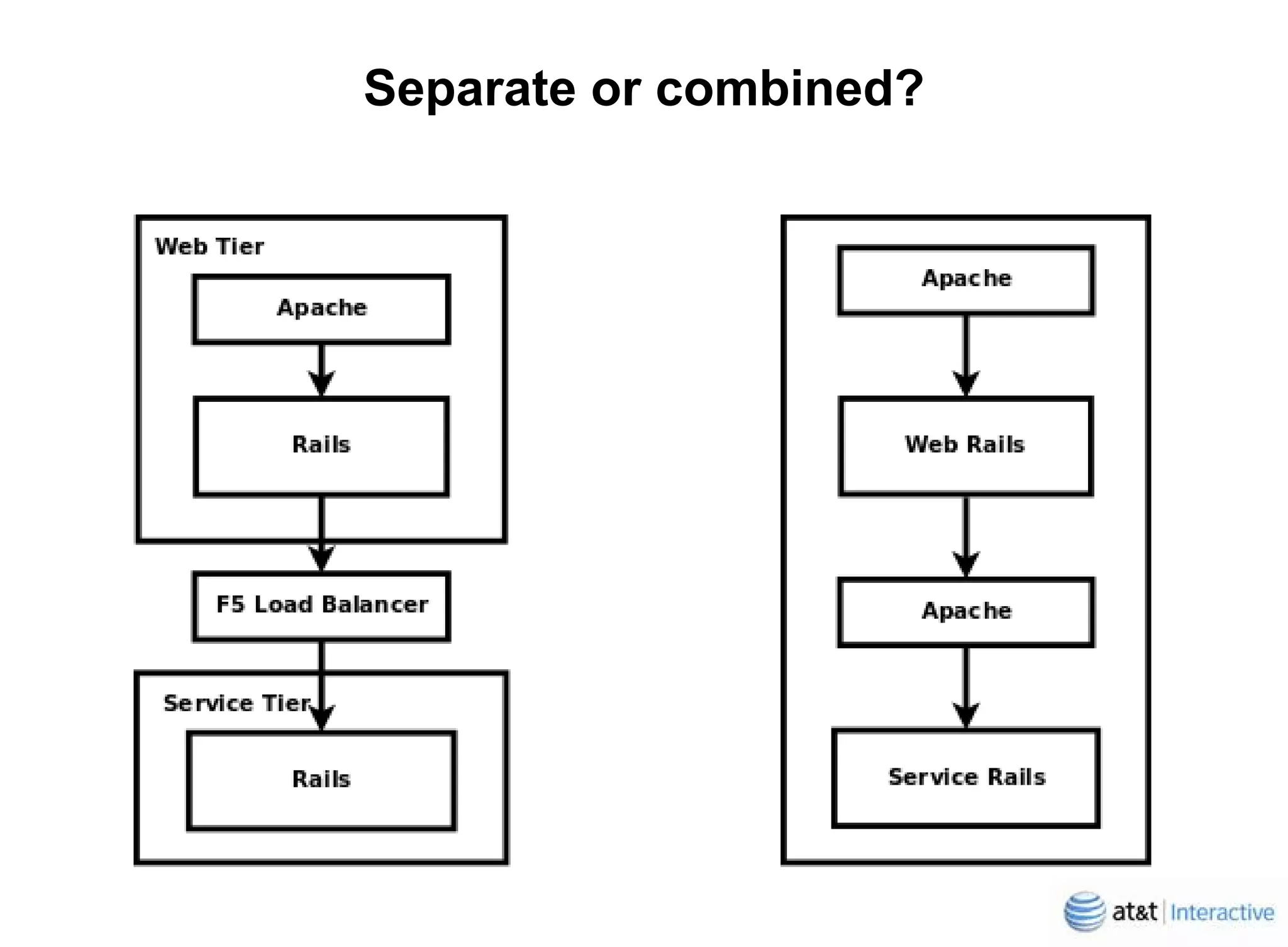

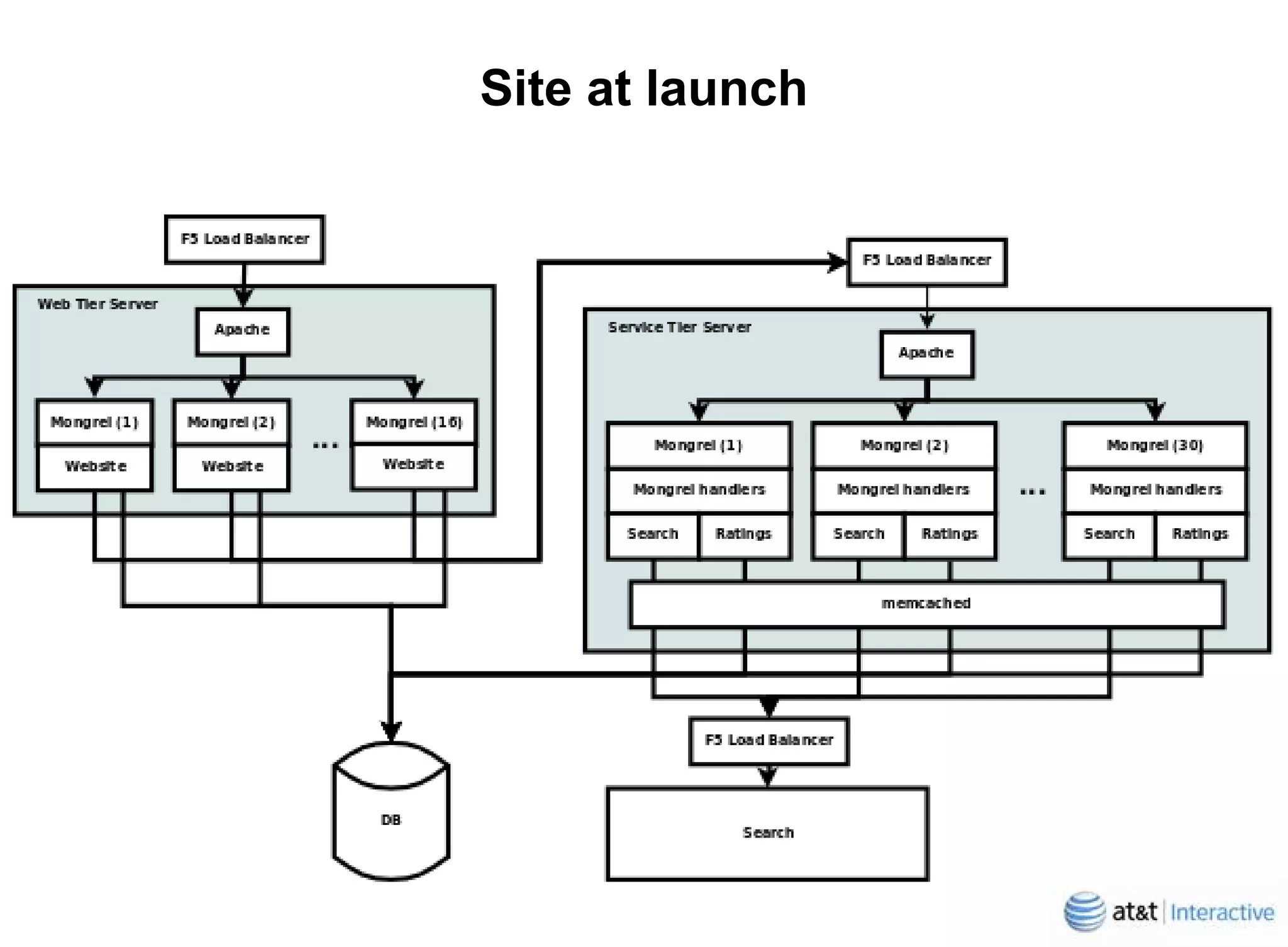

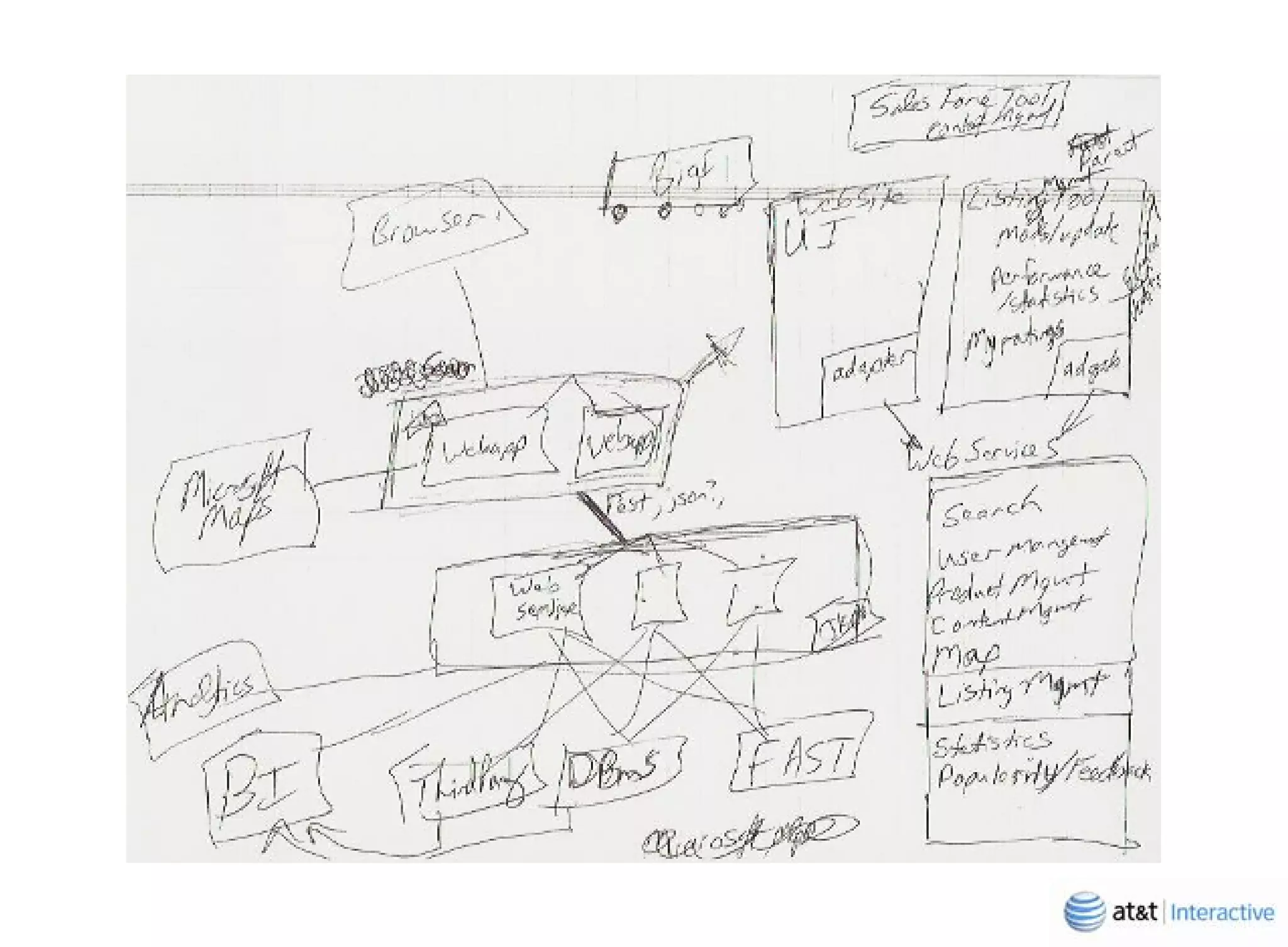

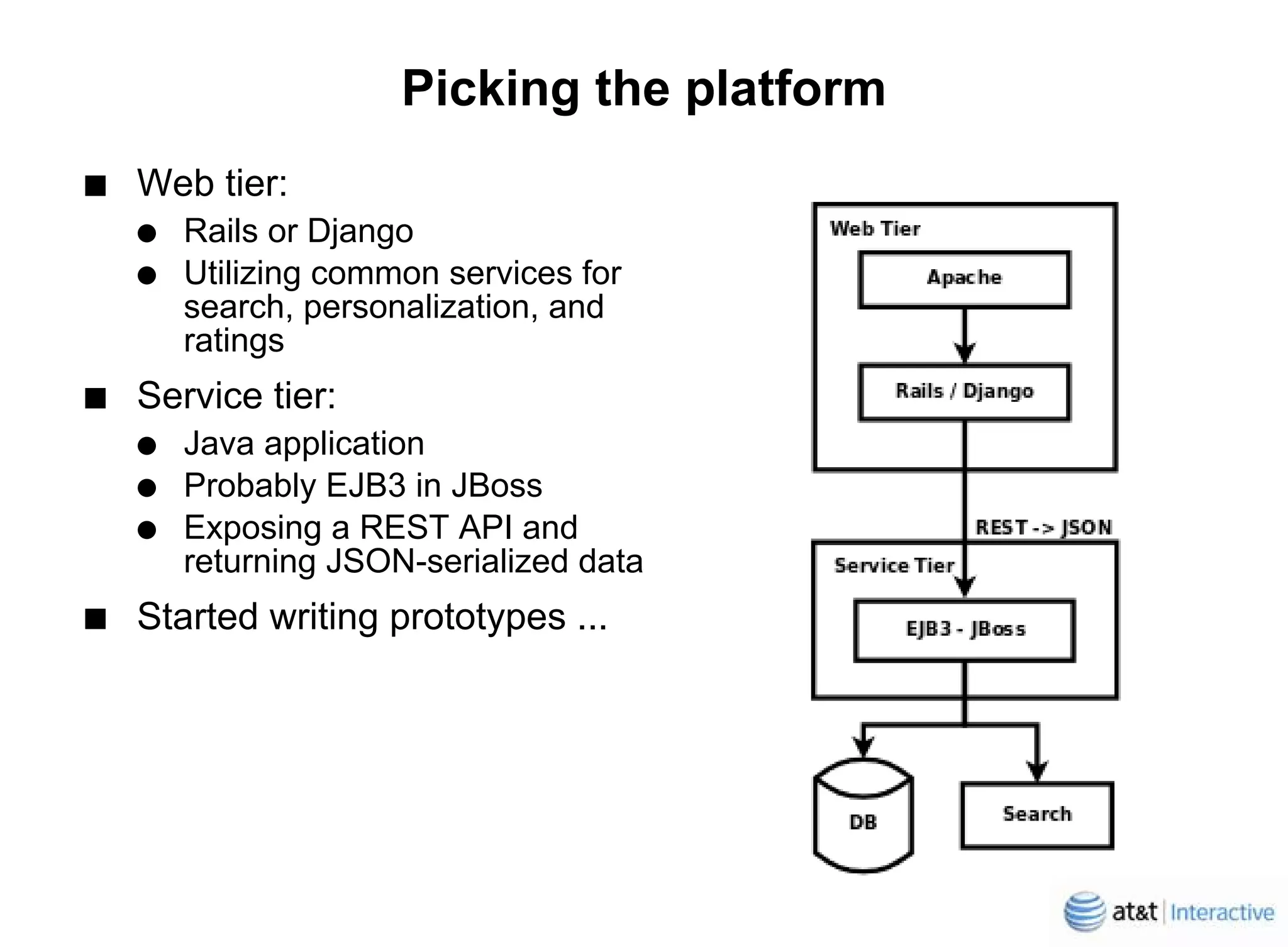

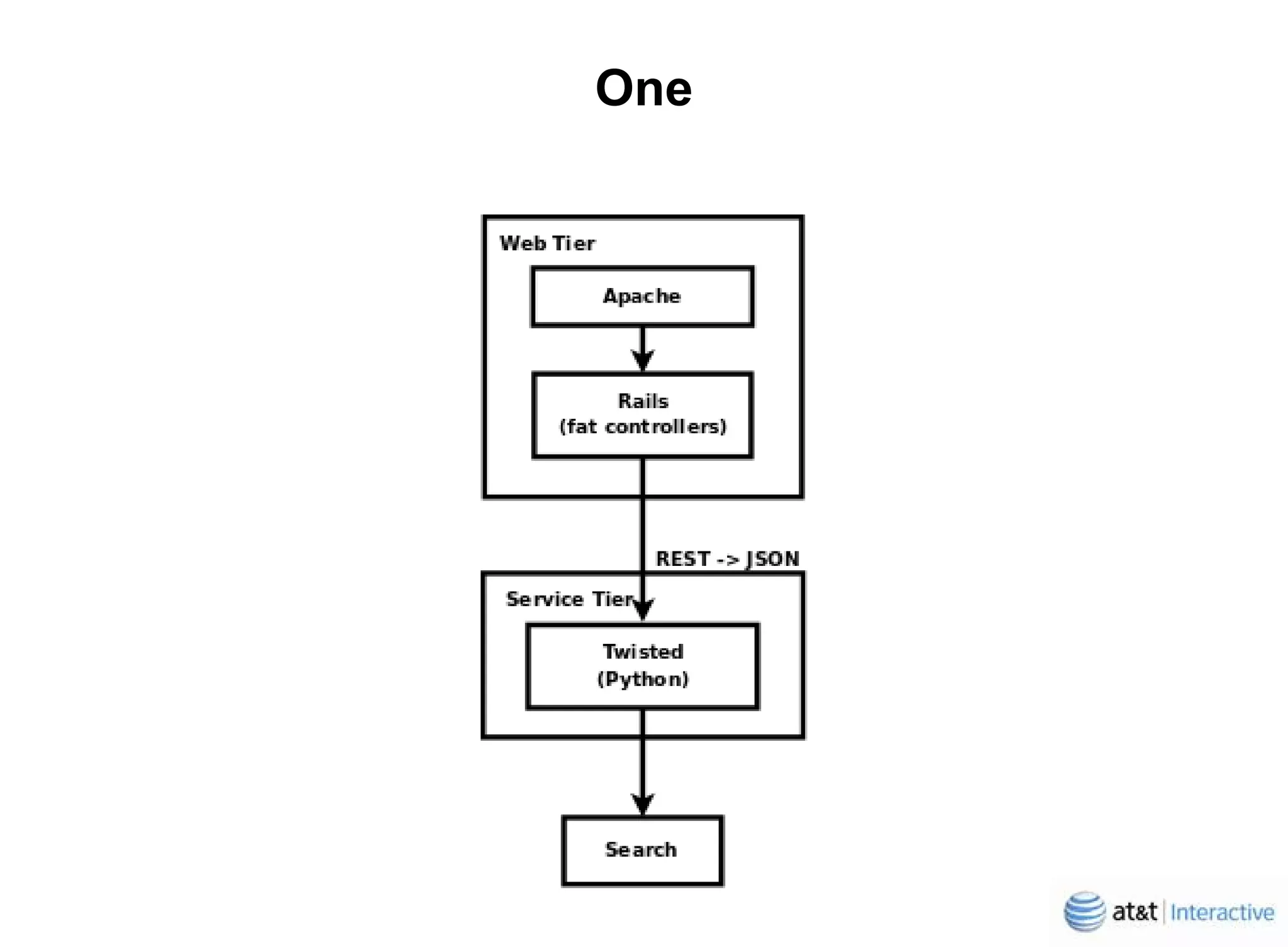

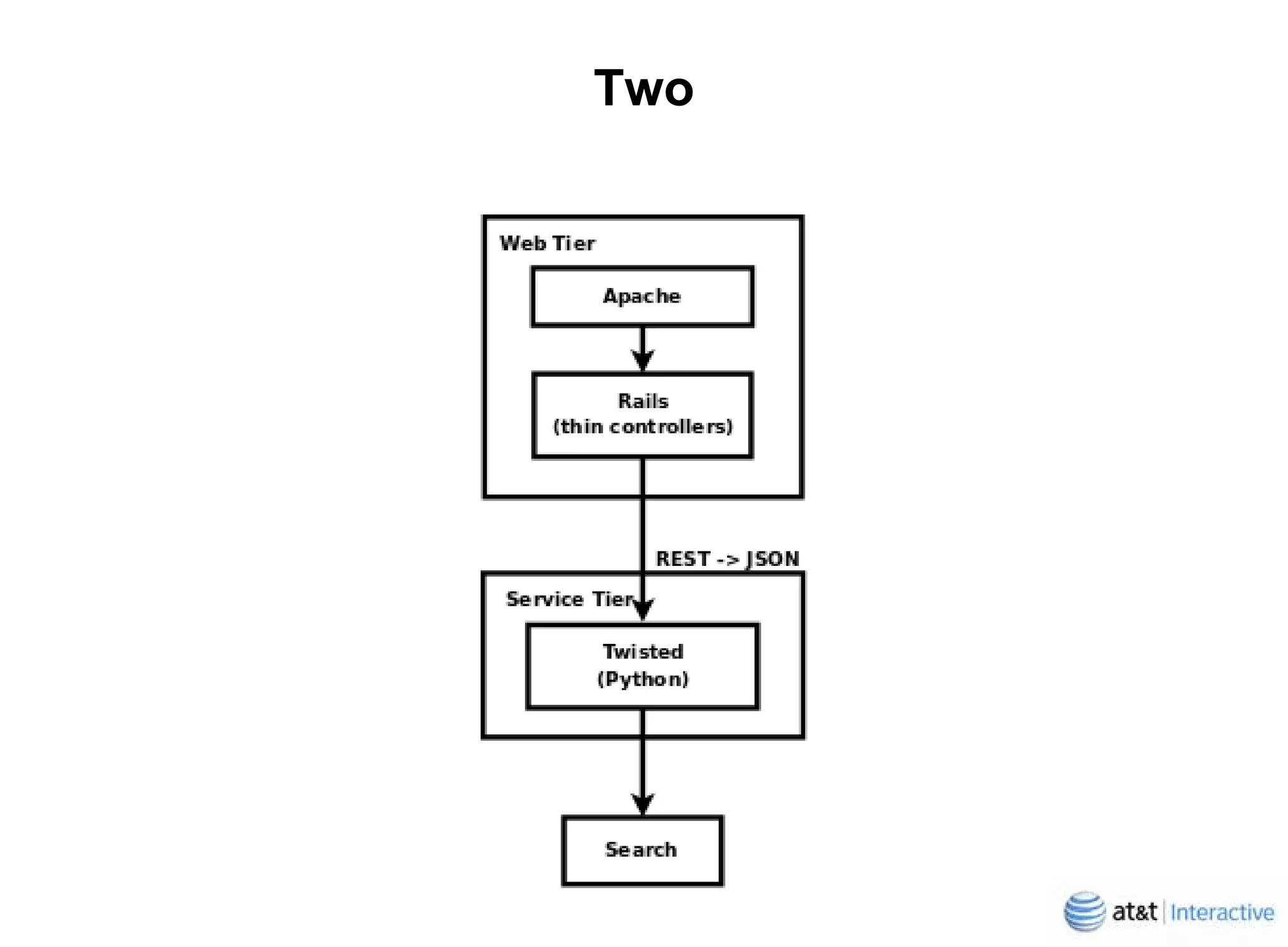

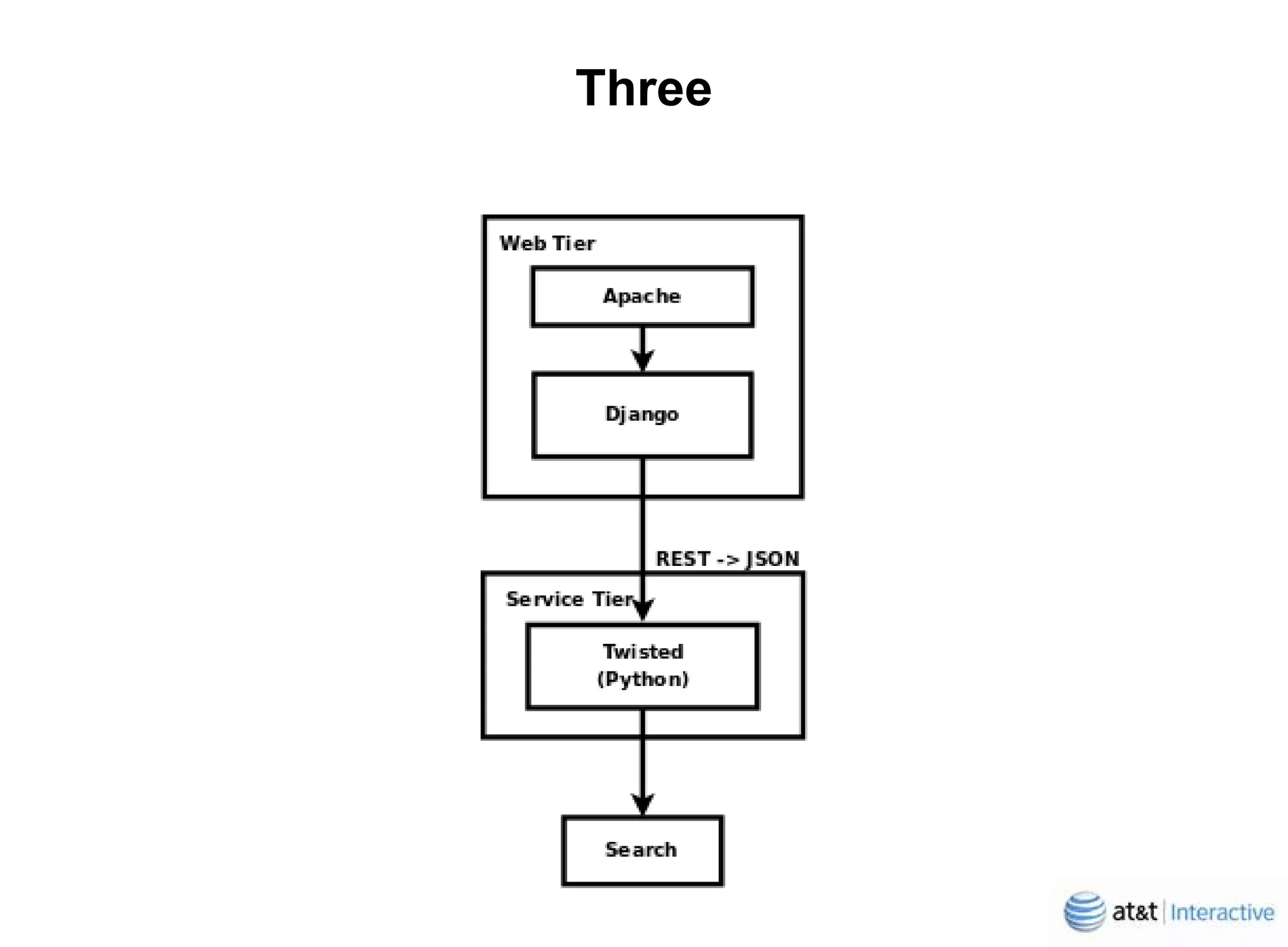

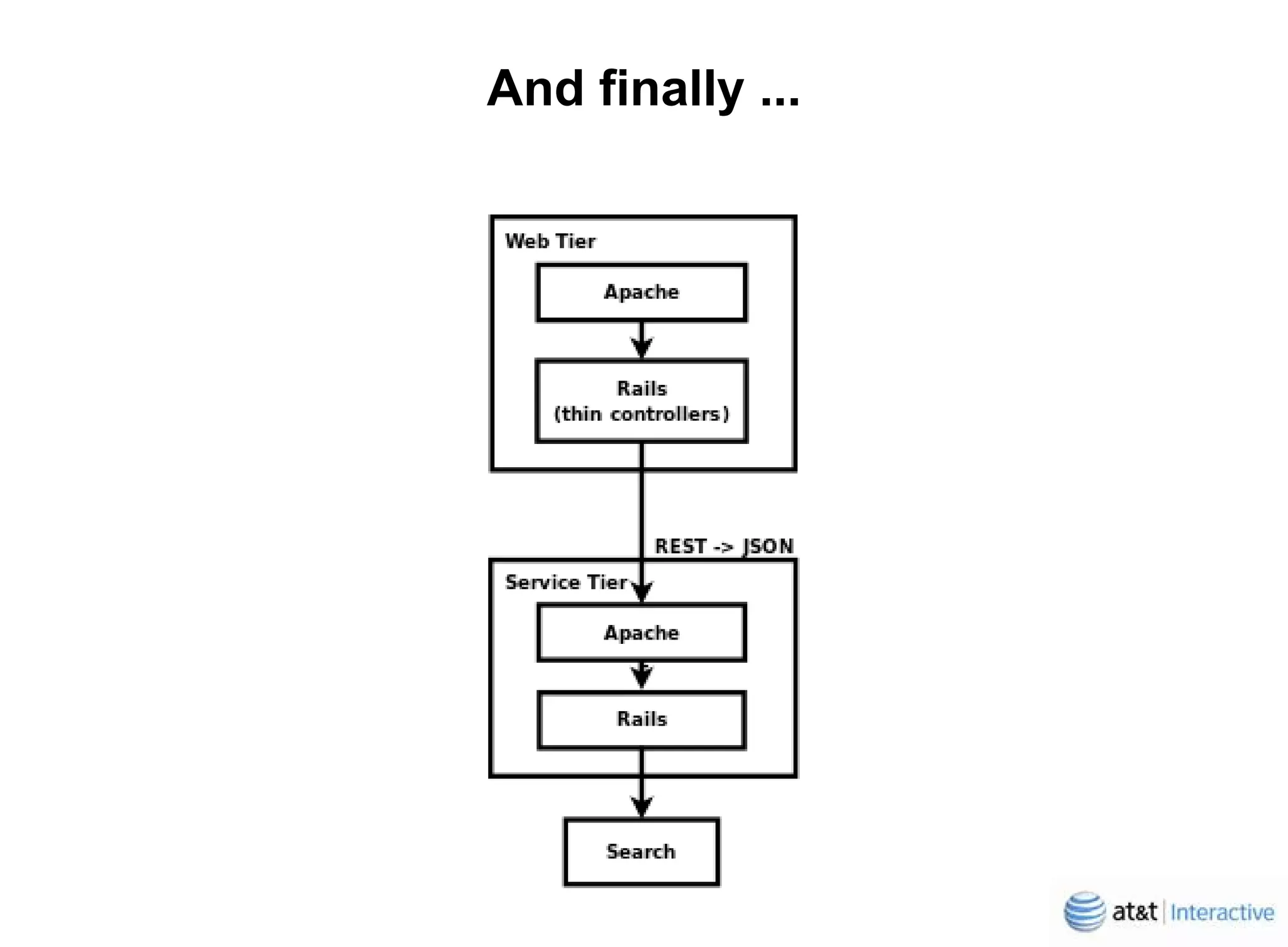

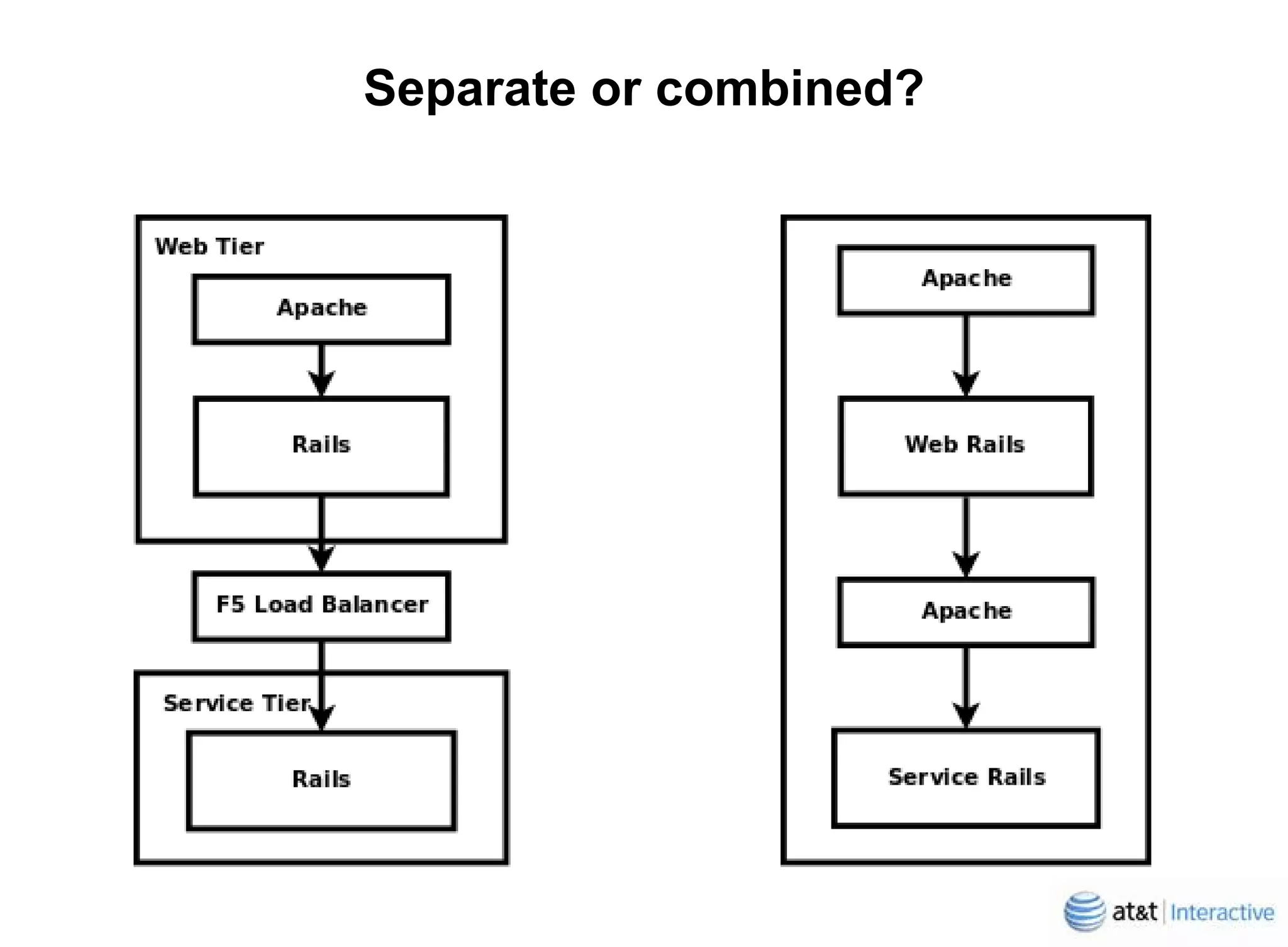

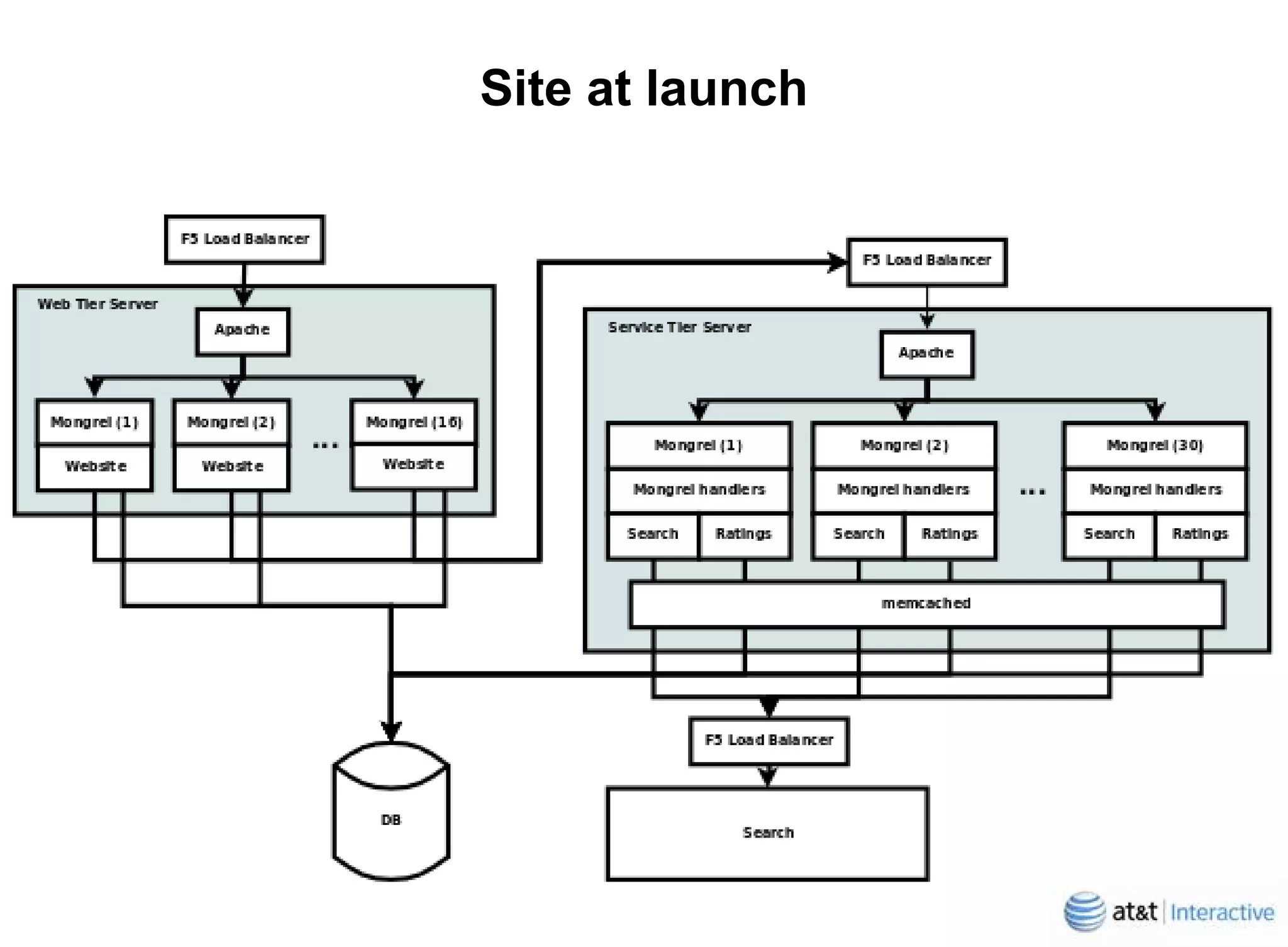

1. Yellowpages.com completely rewrote its website in Ruby on Rails, replacing the Java implementation, to improve performance, scalability, and agility.
2. The 20-person rewrite team adopted a service-oriented architecture: a web tier built in Rails, and a service tier holding the common search, personalization, and business review logic.
3. Rails was chosen for both tiers over alternatives such as Java frameworks and Django because of its better testing support, maturity, and developer experience.
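The web tier / service tier split described in the summary can be illustrated with a minimal Ruby sketch. Everything here is an assumption made for illustration: the class names `SearchService` and `ListingsPage`, the sample listings, and the use of JSON as the exchange format are hypothetical and do not come from the presentation. The point is only that shared search logic lives in the service tier, while the web tier calls it and renders the result.

```ruby
require "json"

# Hypothetical service tier: owns the shared search logic.
# The listings below are made-up sample data.
class SearchService
  LISTINGS = [
    { name: "Joe's Pizza", category: "pizza", city: "Glendale" },
    { name: "Main St Plumbing", category: "plumbers", city: "Glendale" },
    { name: "Slice House", category: "pizza", city: "Pasadena" }
  ].freeze

  # Returns a JSON string, standing in for a response body sent between tiers.
  def search(category:, city:)
    matches = LISTINGS.select { |l| l[:category] == category && l[:city] == city }
    JSON.generate({ results: matches })
  end
end

# Hypothetical web tier: holds no search logic, only calls the service
# and turns the response into something displayable.
class ListingsPage
  def initialize(service)
    @service = service
  end

  def render(category:, city:)
    payload = JSON.parse(@service.search(category: category, city: city))
    names = payload["results"].map { |r| r["name"] }
    names.empty? ? "No results" : names.join(", ")
  end
end

page = ListingsPage.new(SearchService.new)
puts page.render(category: "pizza", city: "Glendale")
```

Because the page object receives its service through the constructor, the in-process `SearchService` could be swapped for a client that talks to a remote service without changing the web-tier code.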