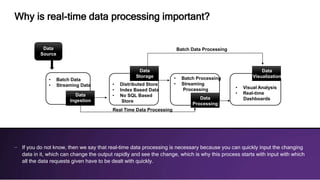

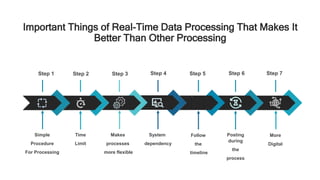

Real-time data processing lets businesses ingest and analyze data as it arrives, which directly affects decision-making and customer relationships. Unlike traditional batch processing, which handles accumulated data at scheduled intervals, it offers timely responses and the flexibility to collect and manage data that changes continuously. Businesses that adopt real-time data processing can improve efficiency and stay competitive in an increasingly data-driven market.