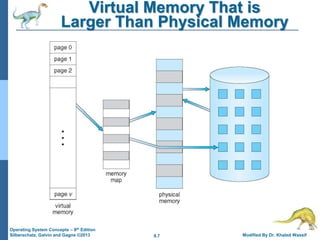

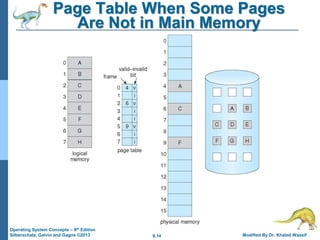

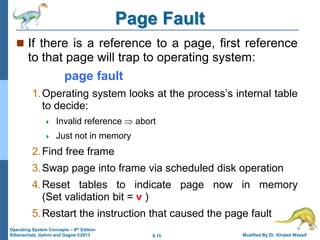

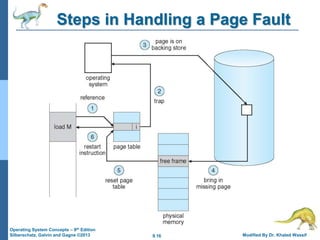

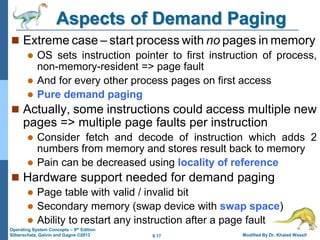

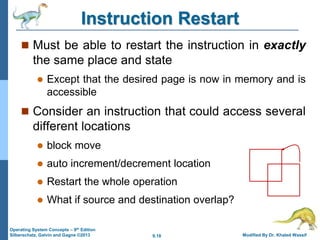

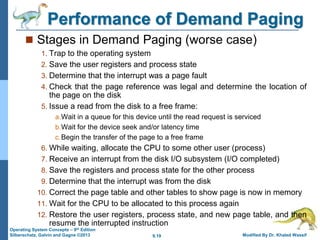

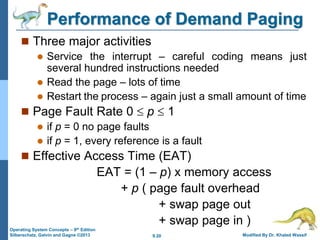

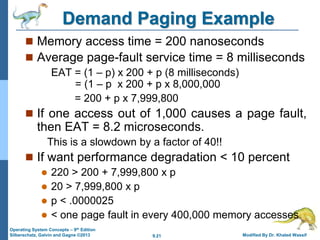

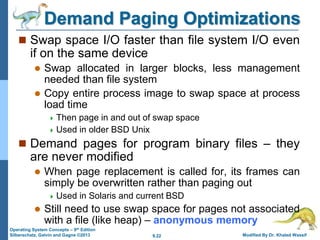

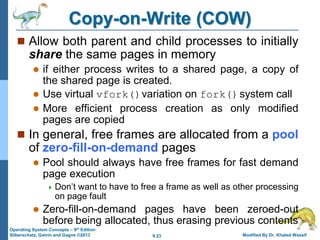

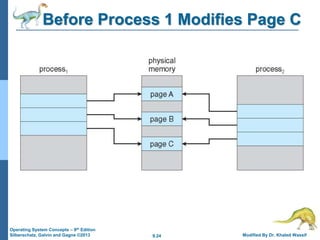

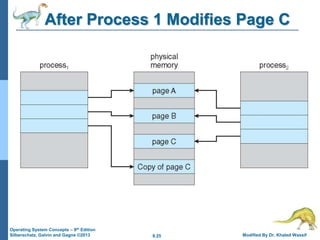

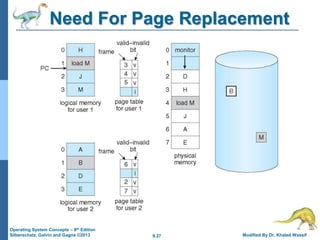

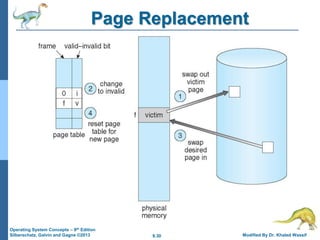

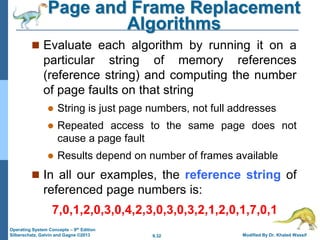

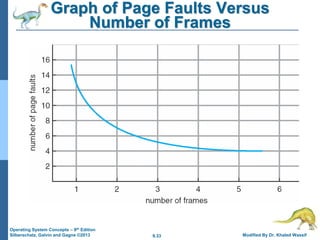

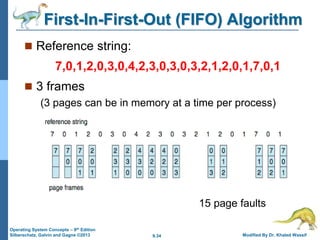

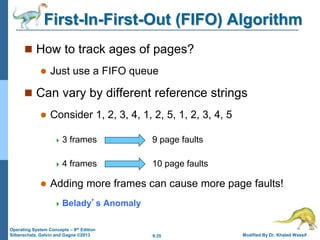

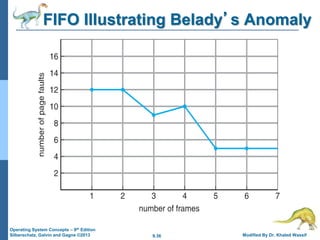

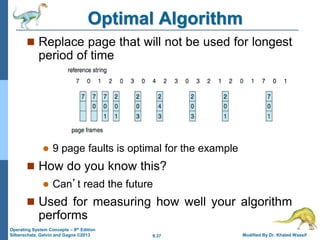

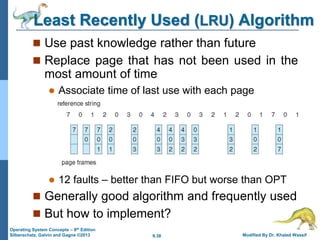

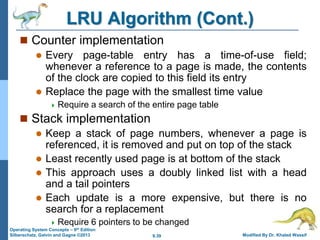

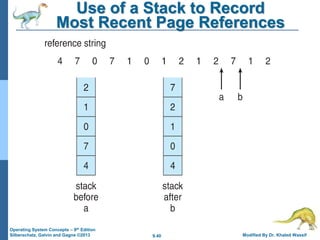

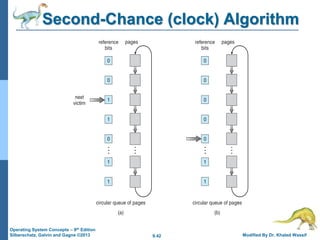

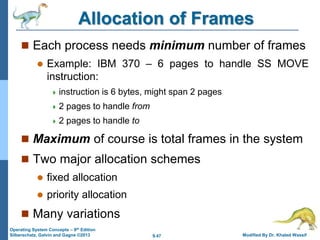

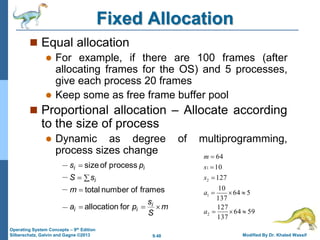

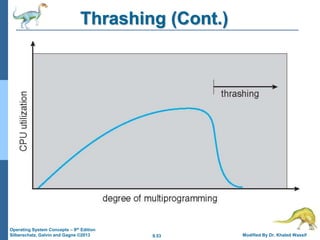

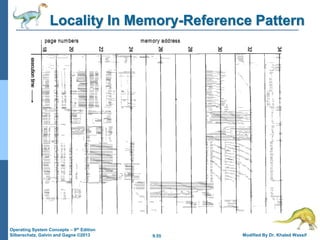

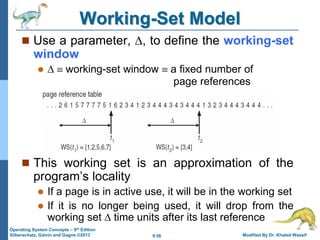

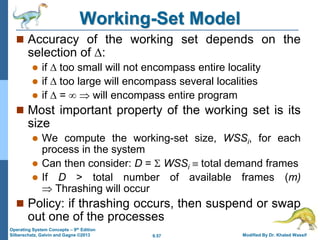

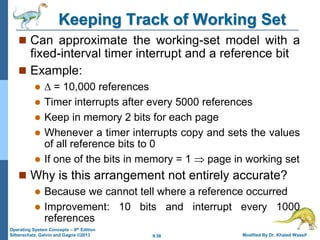

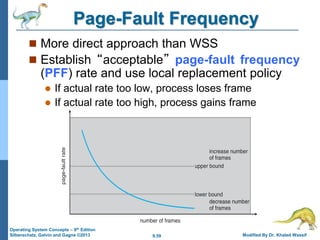

Chapter 9 of 'Operating System Concepts' covers virtual memory: its benefits and the two mechanisms that make it work, demand paging and page replacement. It explains how virtual memory lets a program run without being fully loaded into physical memory, which improves CPU utilization and throughput. The chapter also covers memory management techniques, page-replacement algorithms, and the handling of page faults.
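
To make the idea of page replacement concrete, here is a minimal sketch in Python that counts page faults for two common policies, FIFO and LRU. It is an illustration under stated assumptions, not code from the chapter: the function names `fifo_faults` and `lru_faults`, the sample reference string, and the frame count are all chosen here for the example, and the model ignores details such as dirty bits and disk I/O.

```python
from collections import OrderedDict, deque


def fifo_faults(refs, num_frames):
    """Count page faults under FIFO: evict the page that was loaded earliest."""
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    resident = set()      # pages currently held in frames
    load_order = deque()  # oldest loaded page sits at the left end
    faults = 0
    for page in refs:
        if page in resident:
            continue  # hit: FIFO does not update anything on a hit
        faults += 1
        if len(resident) == num_frames:
            victim = load_order.popleft()
            resident.remove(victim)
        resident.add(page)
        load_order.append(page)
    return faults


def lru_faults(refs, num_frames):
    """Count page faults under LRU: evict the least recently used page."""
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    resident = OrderedDict()  # least recently used page sits at the front
    faults = 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)  # hit: mark as most recently used
            continue
        faults += 1
        if len(resident) == num_frames:
            resident.popitem(last=False)  # drop the least recently used page
        resident[page] = True
    return faults


# Hypothetical reference string and frame count, chosen for illustration.
refs = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
print(fifo_faults(refs, 3))  # 15
print(lru_faults(refs, 3))   # 12
```

With three frames, LRU takes fewer faults than FIFO on this string because it keeps pages that were touched recently, while FIFO can evict a page that is still in active use simply because it was loaded long ago.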