1) The document outlines the tasks, tools, and topics explored by Vipul Divyanshu during a summer internship at India Innovation Labs, including data analytics on a medium-sized database and building a recommender engine.

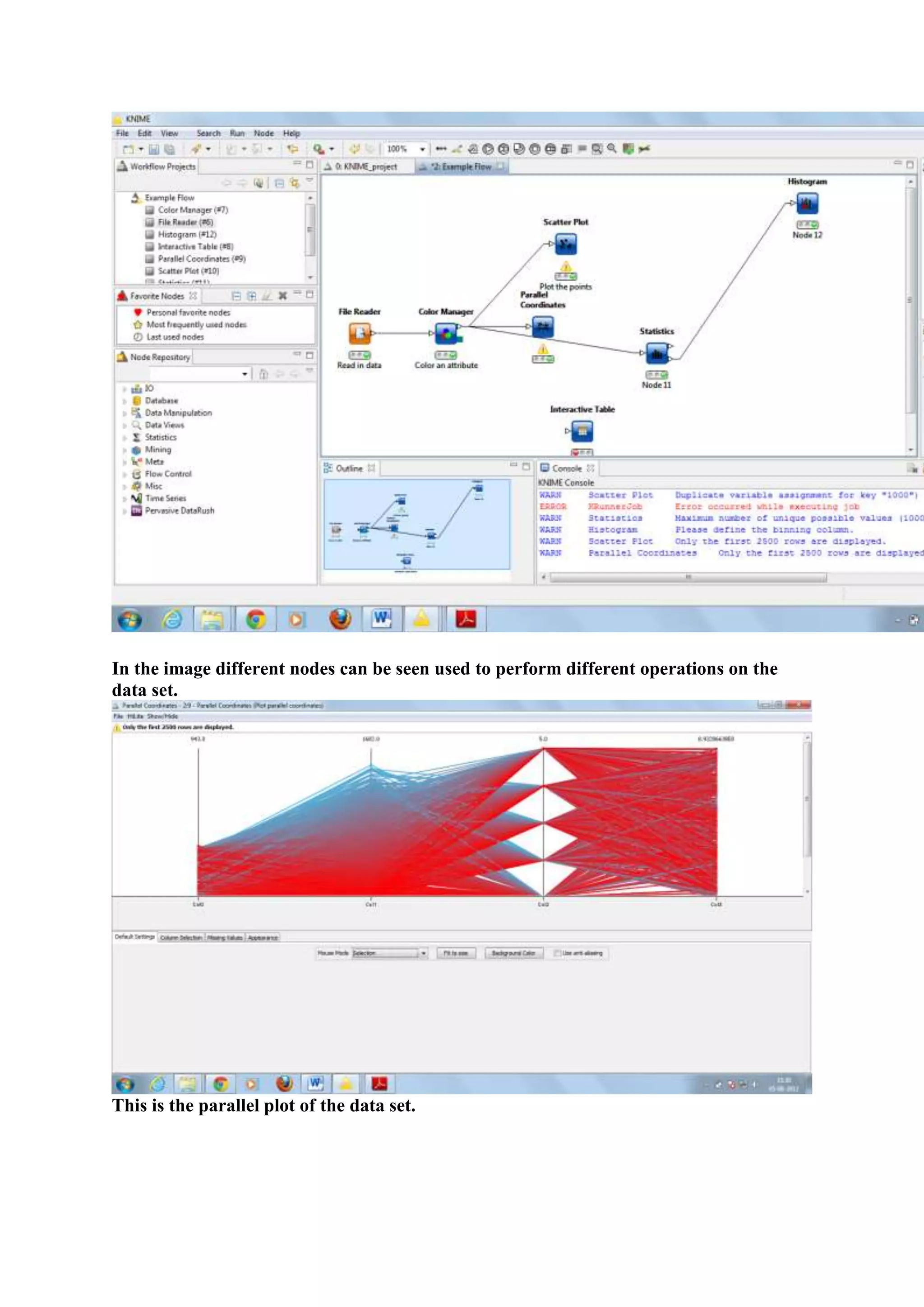

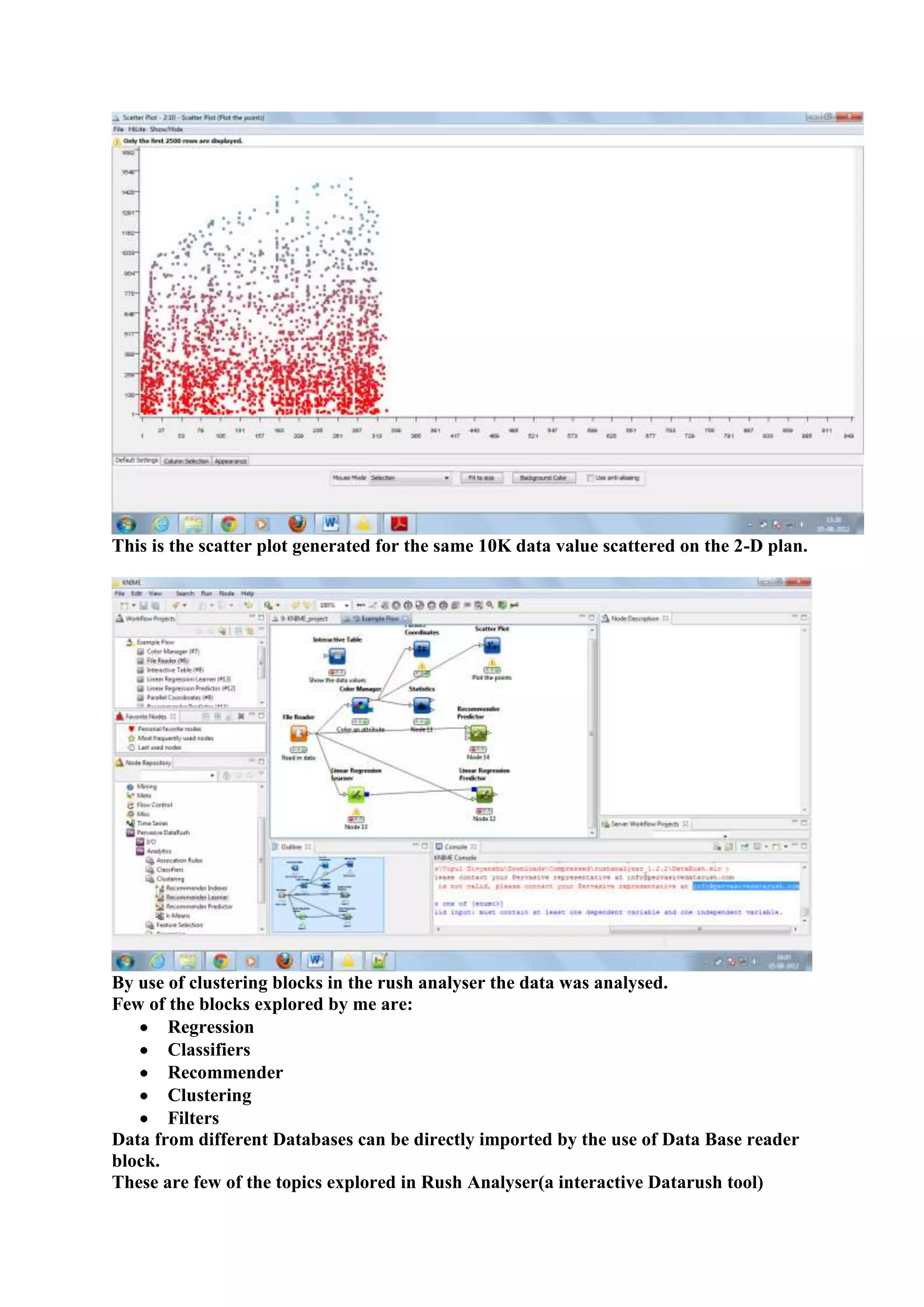

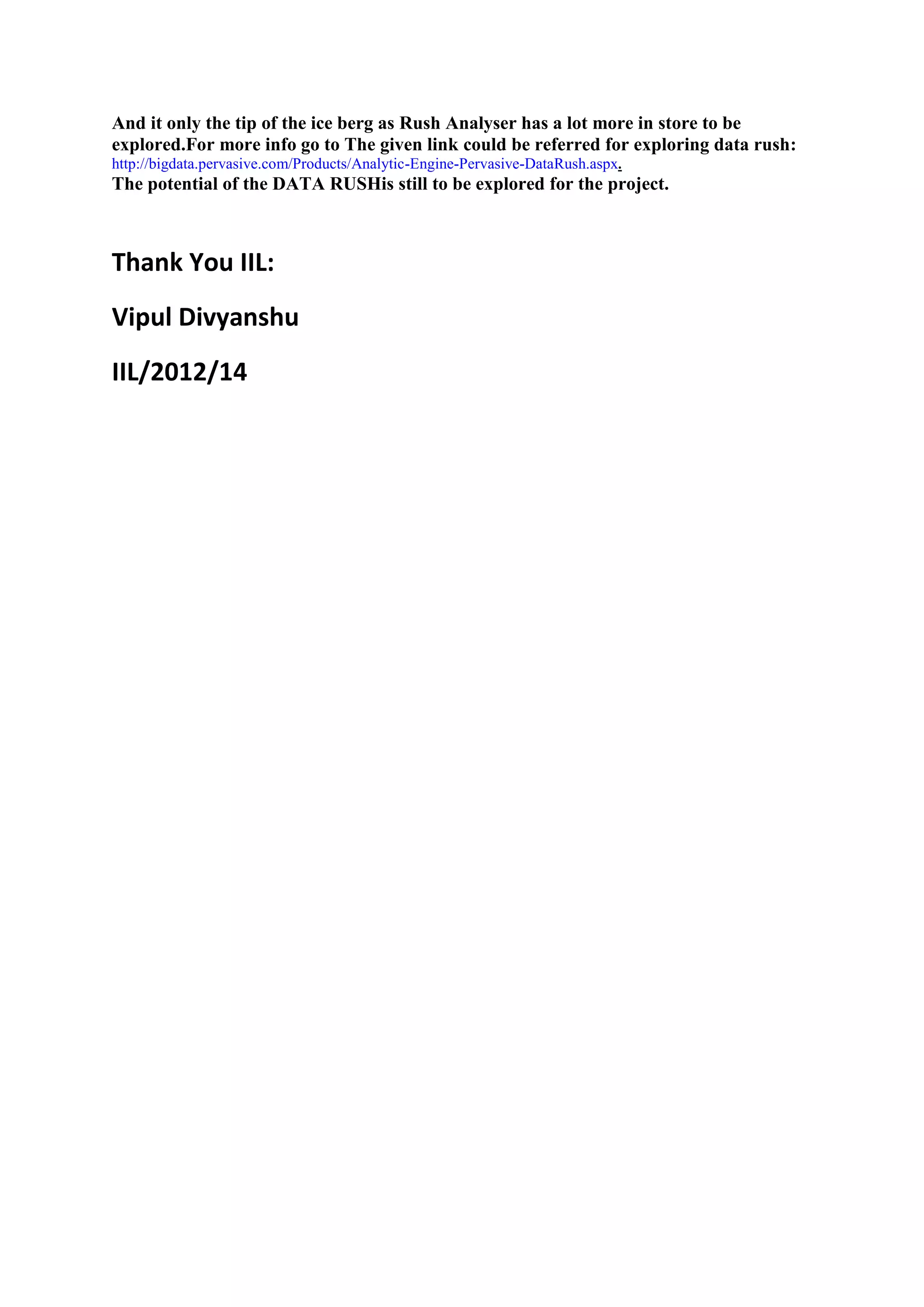

2) Key tools explored include Mahout for machine learning algorithms, Hadoop for distributed processing, and Rush Analyzer (with KNIME) for data visualization and analytics.

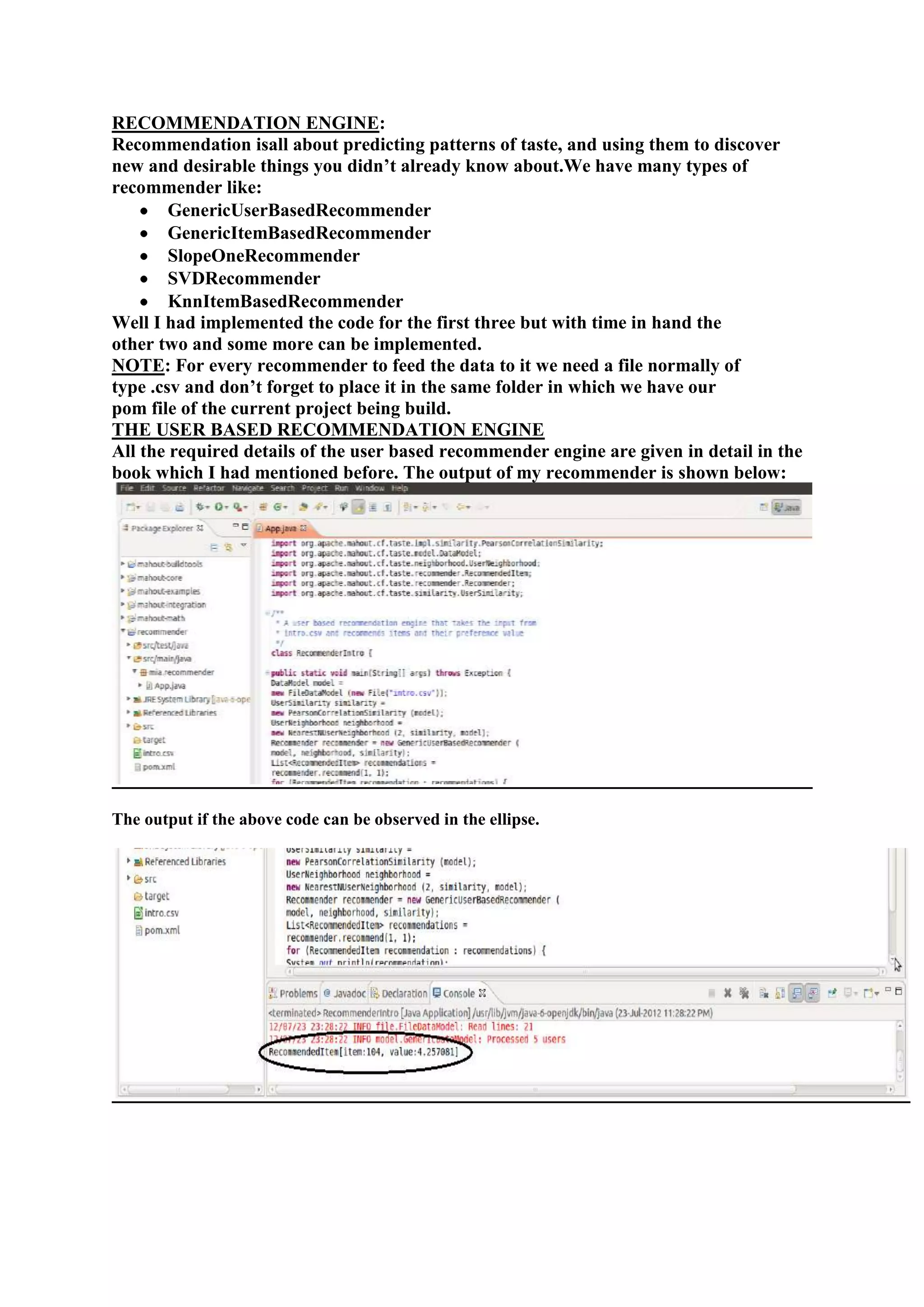

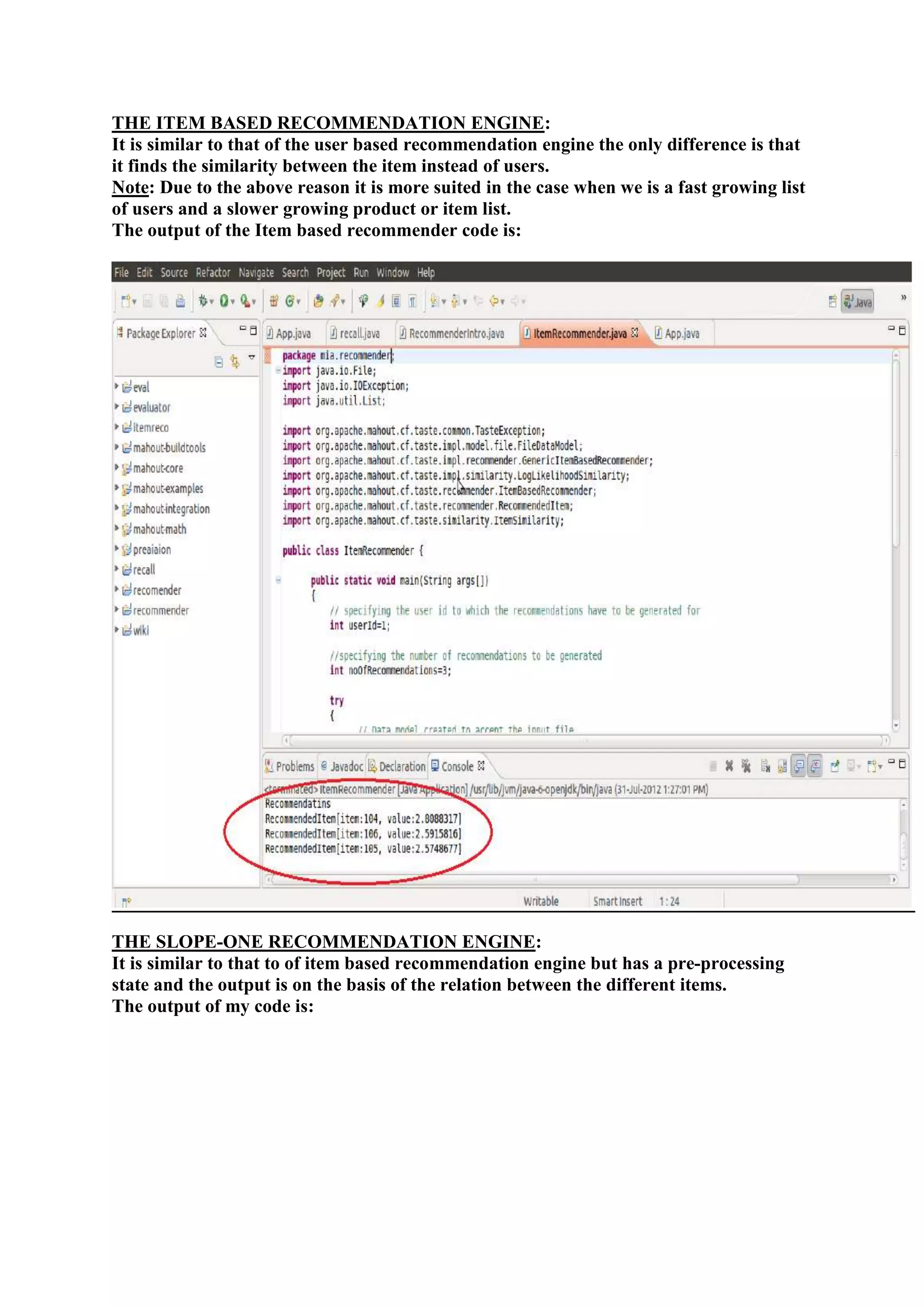

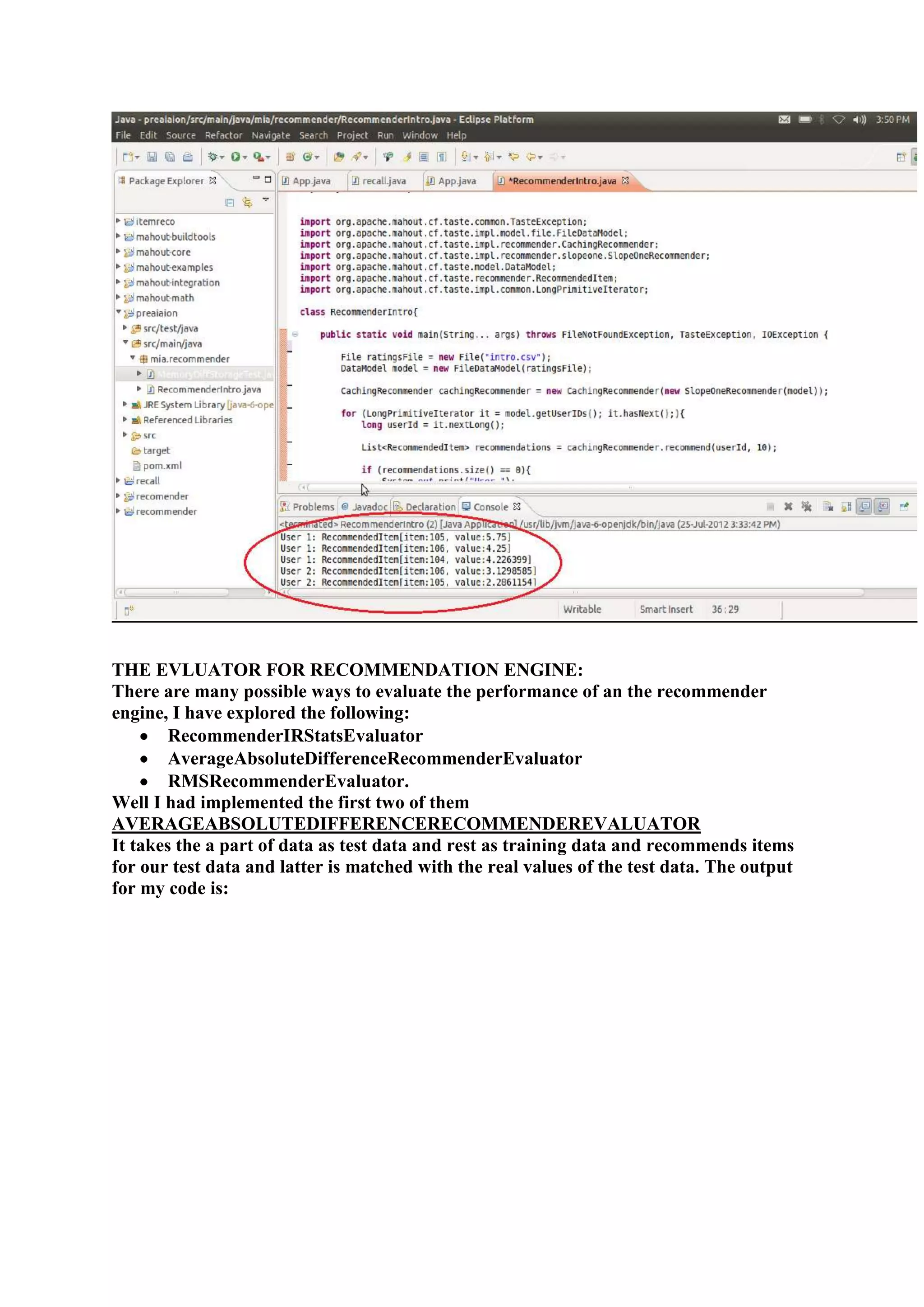

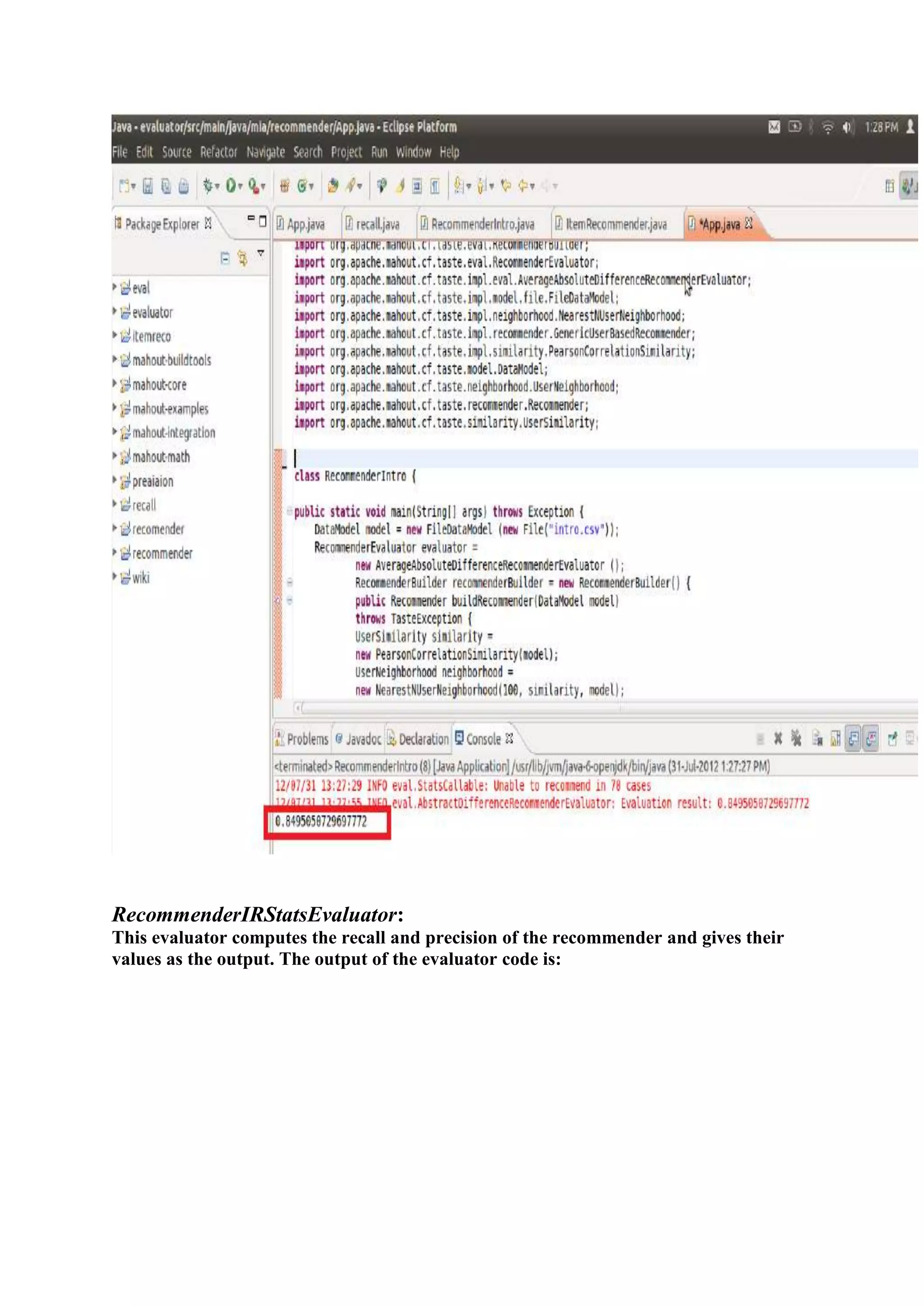

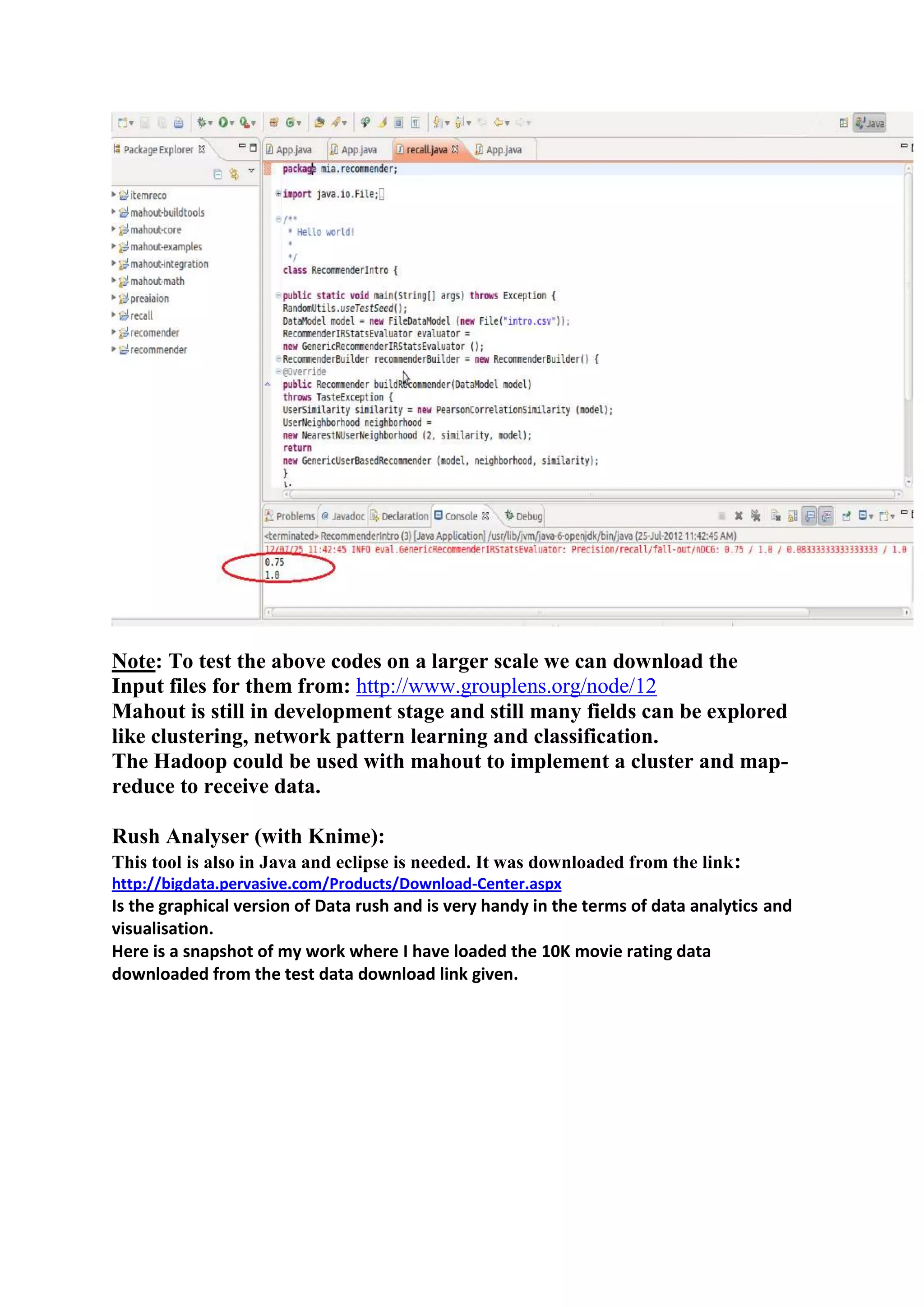

3) Vipul implemented user-based, item-based, and SlopeOne recommendation engines and evaluated their performance using recommender evaluators.
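To make the SlopeOne idea and the evaluation step concrete, here is a minimal plain-Python sketch. It is not Mahout's API and not the code from the internship: the function names (`slope_one_predict`, `mean_absolute_error`), the `ratings` data, and the held-out rating are all hypothetical and chosen only for illustration. The evaluation function is a simple average-absolute-difference measure, assumed here as one reasonable way to score a recommender.

```python
# Weighted Slope One on a tiny, hypothetical ratings table.
# ratings maps user -> {item: rating}; all names and values are illustrative.
ratings = {
    "john": {"A": 5.0, "B": 3.0, "C": 2.0},
    "mark": {"A": 3.0, "B": 4.0},
    "lucy": {"B": 2.0, "C": 5.0},
}


def slope_one_predict(ratings, user, target):
    """Estimate `user`'s rating of `target`, or None if no estimate exists."""
    numerator = 0.0
    denominator = 0
    for item, user_rating in ratings[user].items():
        if item == target:
            continue
        # Differences (target - item) over every user who rated both items.
        diffs = [r[target] - r[item] for r in ratings.values()
                 if target in r and item in r]
        if not diffs:
            continue
        deviation = sum(diffs) / len(diffs)
        # Weight each item's vote by the number of co-rating users.
        numerator += (user_rating + deviation) * len(diffs)
        denominator += len(diffs)
    return numerator / denominator if denominator else None


def mean_absolute_error(ratings, held_out):
    """Average |predicted - actual| over held-out (user, item, rating) triples."""
    errors = []
    for user, item, actual in held_out:
        predicted = slope_one_predict(ratings, user, item)
        if predicted is not None:
            errors.append(abs(predicted - actual))
    return sum(errors) / len(errors) if errors else float("nan")


prediction = slope_one_predict(ratings, "lucy", "A")
score = mean_absolute_error(ratings, [("lucy", "A", 4.0)])
print(prediction, score)
```

A lower score means the predictions sit closer to the held-out ratings, which gives a simple basis for comparing different recommenders on the same data.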

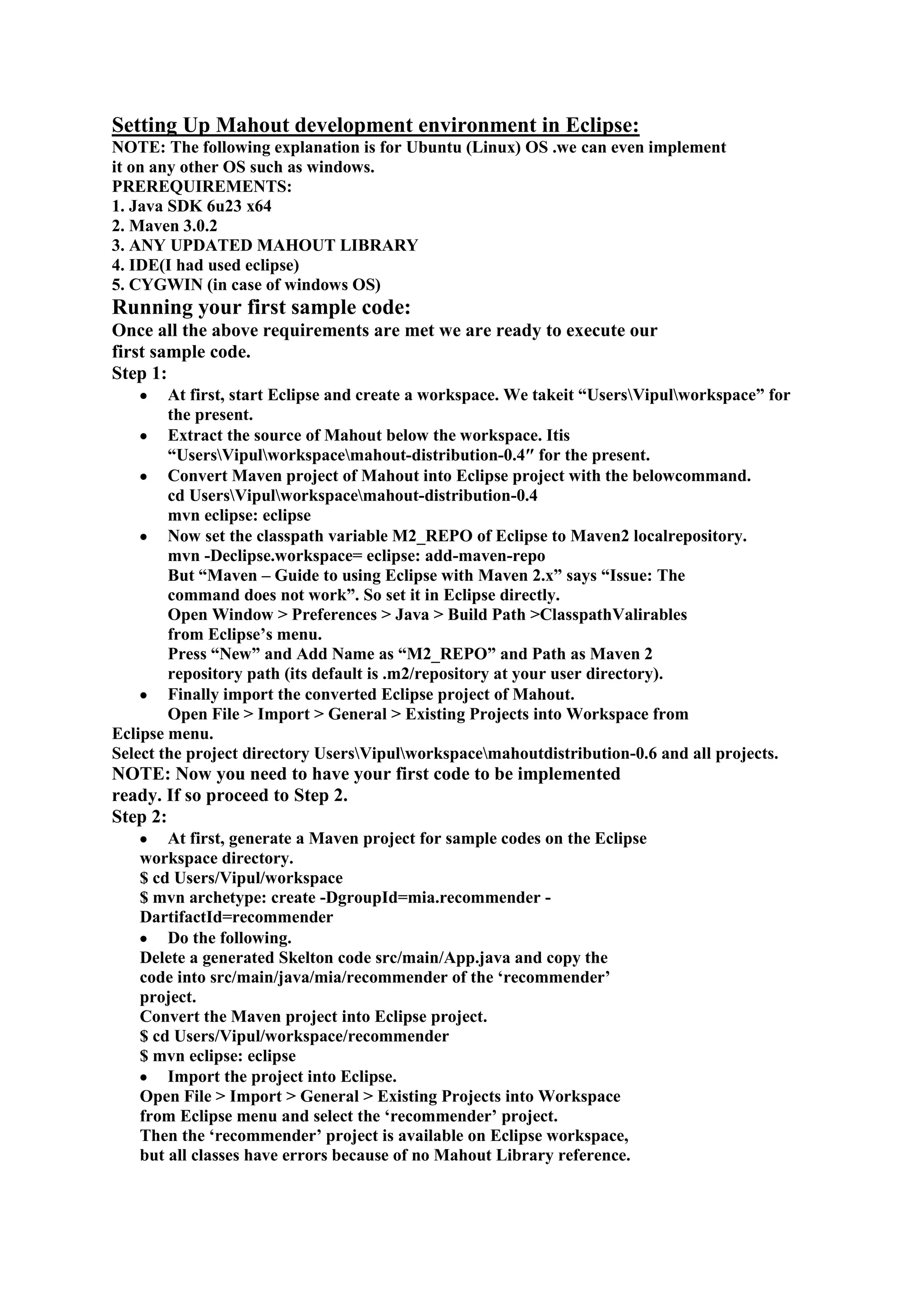

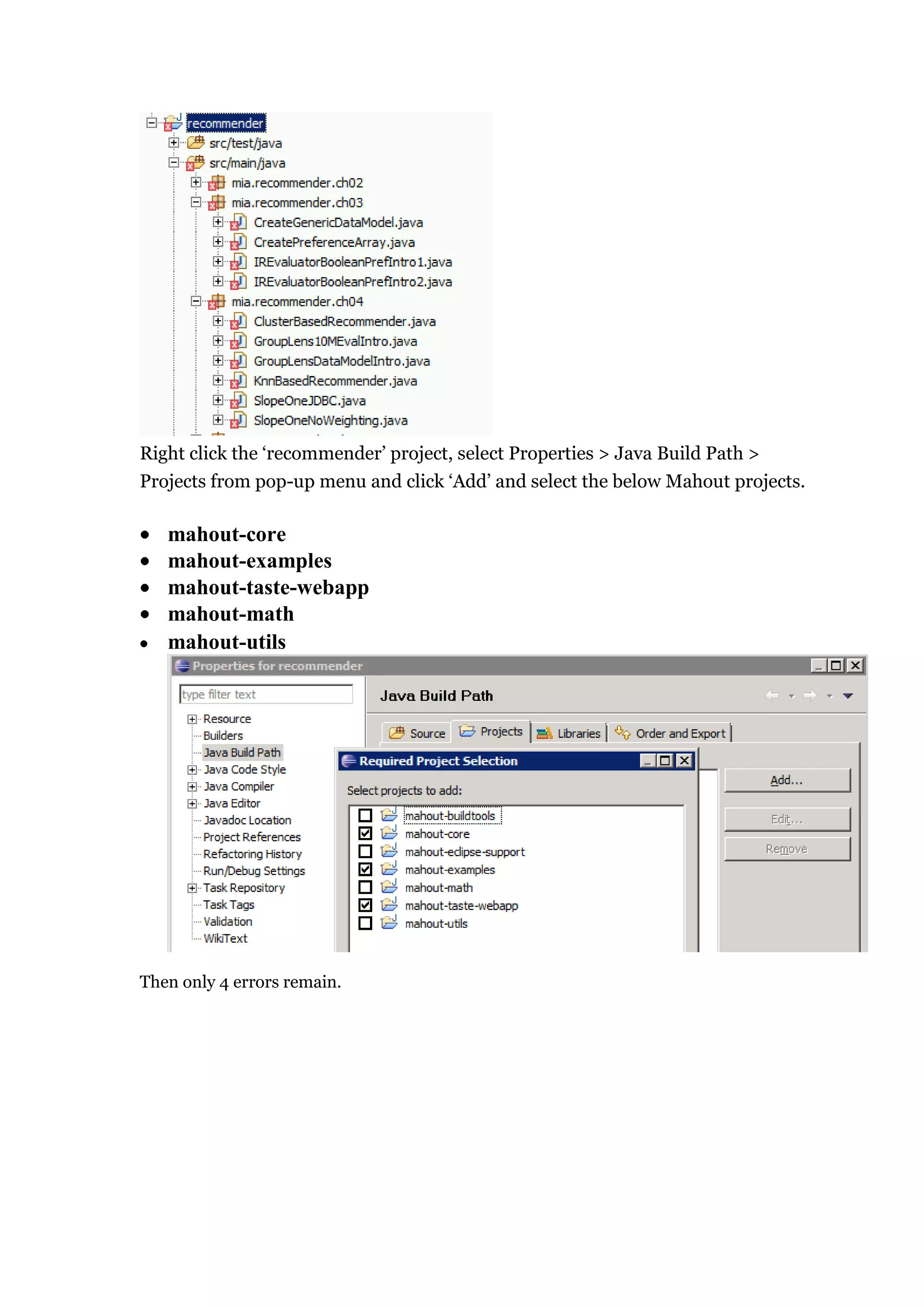

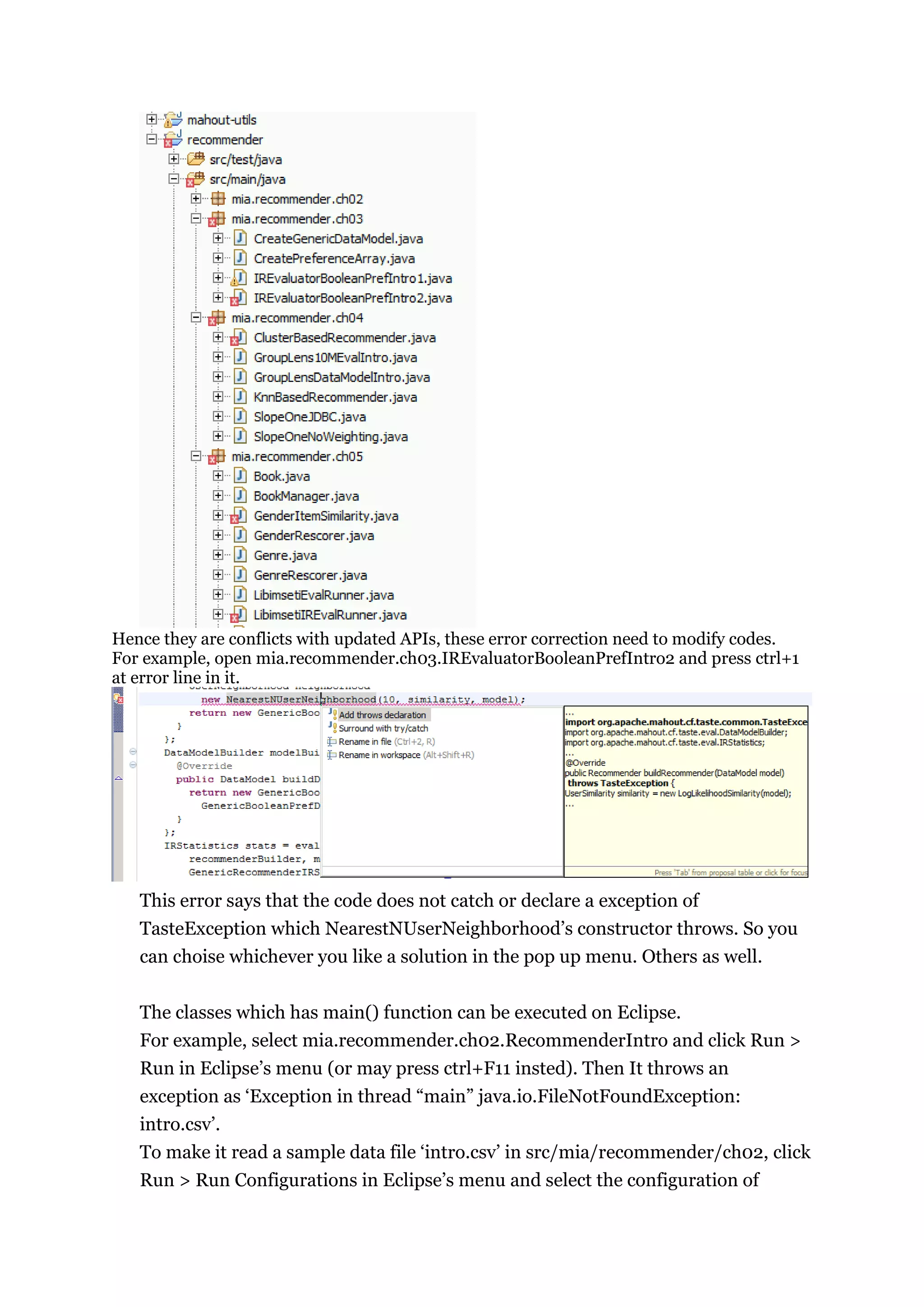

RecommenderIntro which is created by the above execution. Then set the working directory to `mia/recommender/ch02` in the Arguments tab (see the figure below): click the “Workspace…” button and select the directory.

The program then outputs a result like `RecommendedItem[item:104, value:4.257081]`.

If you want to make another project, repeat from the Maven project creation step.

![Vipul Divyanshu Mahout documentation slide](https://image.slidesharecdn.com/vipuldivyanshumahoutdocumentation-130131133634-phpapp01/75/Vipul-divyanshu-mahout_documentation-7-2048.jpg)
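The output quoted above pairs an item ID with an estimated preference value. As a rough illustration of how a user-based recommender can arrive at such a pair, the following plain-Python sketch uses Pearson similarity and a nearest-neighbour weighted average. It is an assumption-laden sketch, not the RecommenderIntro program and not Mahout's API: the `pearson` and `recommend` functions, the user and item names, and every rating are hypothetical.

```python
from math import sqrt

# Hypothetical preference data: user -> {item: rating}.
ratings = {
    "u1": {"A": 5.0, "B": 3.0, "C": 1.0},
    "u2": {"A": 5.0, "B": 3.0, "C": 1.0, "D": 4.0},
    "u3": {"A": 1.0, "B": 3.0, "C": 5.0, "D": 2.0},
    "u4": {"A": 4.0, "B": 3.0, "C": 2.0, "E": 5.0},
}


def pearson(a, b):
    """Pearson correlation over the items both users have rated."""
    common = sorted(set(a) & set(b))
    if len(common) < 2:
        return 0.0
    xs = [a[i] for i in common]
    ys = [b[i] for i in common]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def recommend(ratings, user, n_neighbors=2, how_many=1):
    """Return up to `how_many` (item, estimate) pairs for `user`."""
    sims = {other: pearson(ratings[user], prefs)
            for other, prefs in ratings.items() if other != user}
    # Keep the most similar users, ignoring non-positive correlations.
    neighbors = sorted((o for o in sims if sims[o] > 0),
                       key=lambda o: sims[o], reverse=True)[:n_neighbors]
    totals, weights = {}, {}
    for other in neighbors:
        for item, value in ratings[other].items():
            if item in ratings[user]:
                continue  # only recommend items the user has not rated
            totals[item] = totals.get(item, 0.0) + sims[other] * value
            weights[item] = weights.get(item, 0.0) + sims[other]
    # Similarity-weighted average of the neighbours' ratings per item.
    estimates = {item: totals[item] / weights[item] for item in totals}
    ranked = sorted(estimates.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:how_many]


for item, value in recommend(ratings, "u1", n_neighbors=2, how_many=1):
    print(f"item: {item}, value: {value:.6f}")
```

The neighbourhood size and the number of recommendations are parameters here, so the effect of each can be explored by changing `n_neighbors` and `how_many`.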