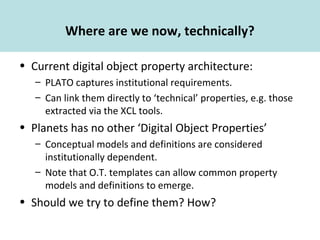

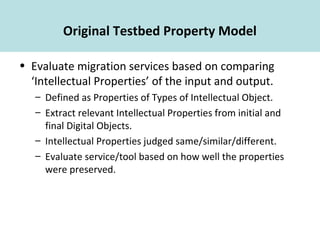

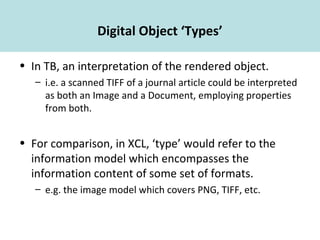

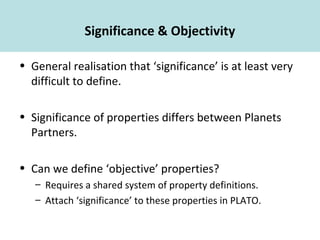

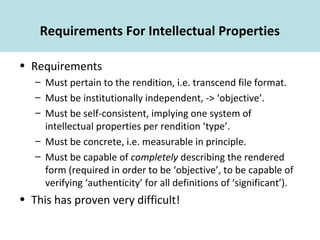

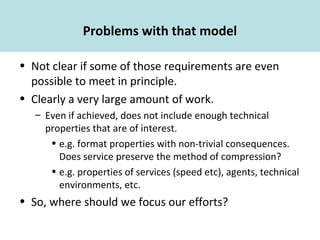

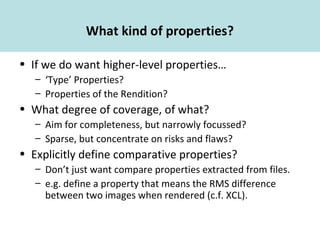

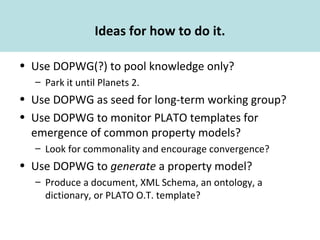

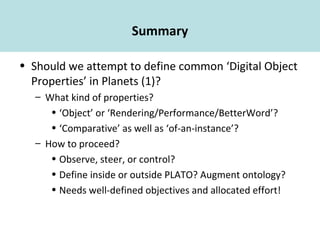

This document discusses defining common digital object properties for Planets. It considers what kinds of properties could be defined (e.g. type properties, rendition properties), the requirements and challenges of defining objective, measurable properties that fully describe digital objects, and the approaches that could be taken to define them (e.g. using a working group, or monitoring the property models that emerge from PLATO templates). It recommends clearly defining objectives and allocating effort before attempting to define common digital object properties in Planets.