Suresh hadoop 3 years t

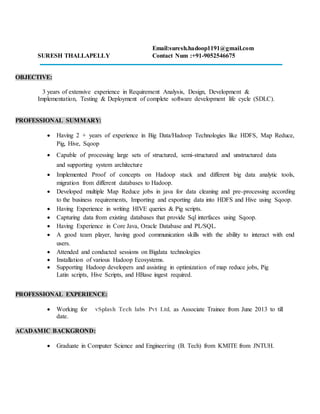

- 1. SURESH THALLAPELLY

     - Email: suresh.hadoop1191@gmail.com
     - Contact Num: +91-9052546675

     OBJECTIVE:

     3 years of experience across the complete software development life cycle (SDLC): requirement analysis, design, development, implementation, testing, and deployment.

     PROFESSIONAL SUMMARY:

     - 2+ years of experience in Big Data/Hadoop technologies such as HDFS, MapReduce, Pig, Hive, and Sqoop.
     - Capable of processing large sets of structured, semi-structured, and unstructured data and of supporting system architecture.
     - Implemented proofs of concept on the Hadoop stack and various big data analytics tools, including migration from different databases to Hadoop.
     - Developed multiple MapReduce jobs in Java for data cleaning and pre-processing according to business requirements.
     - Imported and exported data into HDFS and Hive using Sqoop.
     - Experience in writing Hive queries and Pig scripts.
     - Captured data from existing databases that provide SQL interfaces using Sqoop.
     - Experience in Core Java, Oracle Database, and PL/SQL.
     - Good team player with good communication skills and the ability to interact with end users.
     - Attended and conducted sessions on big data technologies.
     - Installed various Hadoop ecosystem components.
     - Supported Hadoop developers and assisted in optimizing MapReduce jobs, Pig Latin scripts, Hive scripts, and HBase ingest as required.

     PROFESSIONAL EXPERIENCE:

     Working for vSplash Tech labs Pvt Ltd as an Associate Trainee from June 2013 to date.

     ACADEMIC BACKGROUND:

     Graduate in Computer Science and Engineering (B.Tech) from KMITE, JNTUH.

- 2. PROJECT#1:

     - PROJECT: AT
     - CLIENT: Humana Inc
     - Domain: Insurance
     - Duration: April 2014 - Feb 2015

     PROJECT DESCRIPTION

     The main aim of this project is to let end users (testers, ETL/warehouse developers) transfer data from different RDBMS databases to HDFS/Hive and vice versa without needing any knowledge of Sqoop or the Hadoop ecosystem. AT stands for archival tool. The AT tool archives RDBMS data into HDFS efficiently. It lets the user choose which type of compression and serialization to use in HDFS. If the user does not provide a compression technique, partitioning, bucketing, or number of mappers, AT automatically sets optimized values and moves the data to HDFS.

     ENVIRONMENT

     HDFS, MapReduce, Pig, Flume, Java MVC.

     ROLE

     Developer

     CONTRIBUTIONS

     - Involved in environment setup and cluster configuration using Cloudera Manager.
     - Involved in configuration, development, and code review.
     - Wrote Pig scripts for parsing the data.
     - Prepared the functional and technical documents in all phases of the POC.

     PROJECT#2:

     - PROJECT: Web Log Analysis
     - CLIENT: Forties Health Care
     - Domain: Health Care
     - Duration: March 2015 – April 2016

     PROJECT DESCRIPTION

     Web log analysis software (also called a web log analyzer) is a kind of web analytics software that parses a server log file from a web server and, based on the values contained in the log file, derives indicators about when, how, and by whom a web server is visited. Analyzing this data helps predict visitor behavior and modify the website design and/or marketing campaigns for improved success and to acquire new customers. Over time, the logs keep growing until it becomes very difficult to manually extract any important information from them, particularly for busy websites. The Hadoop framework handles this challenge in a timely, reliable, and cost-efficient manner. The task of web log analysis can be performed using the Hadoop framework, which is well suited to

- 3. handle large amounts of unstructured data.

     ENVIRONMENT

     HDFS, MapReduce, Pig, Flume, Java MVC.

     ROLE

     Developer

     CONTRIBUTIONS

     - Exported the result set from Hive to MySQL using Sqoop after processing the data.
     - Used Hive to partition and bucket the data.
     - Analyzed the partitioned and bucketed data and computed various metrics for reporting.
     - Wrote Pig scripts to perform ETL procedures on the data in HDFS.
     - Analyzed the data by performing Hive queries and running Pig scripts to study customer behaviour.
     - Worked on improving the performance of existing Pig scripts and Hive queries.

     TECHNICAL EXPERTISE:

     - Primary Skills: Hadoop MapReduce programming, Pig, Hive, Sqoop, Java, Shell Scripting, Oracle PL/SQL
     - Operating Systems: Windows XP, Windows 7, Linux (Ubuntu, CentOS)
     - Languages: Java, C#
     - Scripts: Shell script, JavaScript, basics of Python scripting
     - Databases: Oracle
     - Domain Knowledge: Healthcare, Insurance
     - Testing Tools: MRUnit
     - Documentation: MS Office, OpenOffice
     - Version Control: SVN

     PERSONAL DETAILS:

     - Name: Suresh Thallapelly
     - Date of Birth: 11 Mar, 1991
     - Gender: Male
     - Nationality: Indian
     - Languages known: English, Hindi, Telugu
     - Mail: sureshthallapelly1191@gmail.com
     - Mobile: 9052546675
     - PAN card No: ATGPT7270A
     - Permanent Address: H.no 16-9-569/10/1 Ayodhyanagar Malakpet, Hyderabad 500032

- 4. DECLARATION:

     I hereby state that all the above furnished information is true and correct to the best of my knowledge and belief.

     - Place: Hyderabad
     - Date:

     Yours truly,

     Suresh Thallapelly