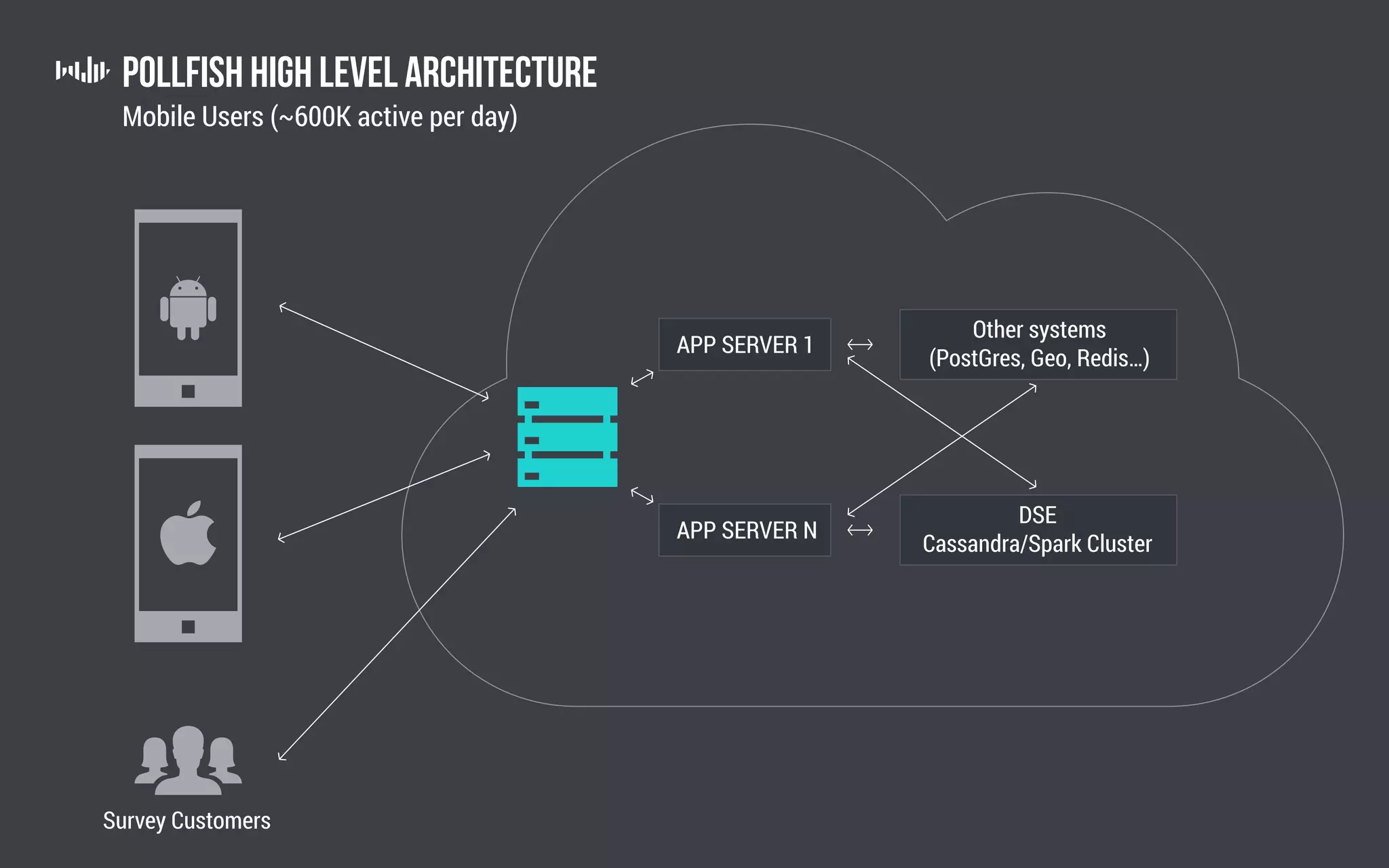

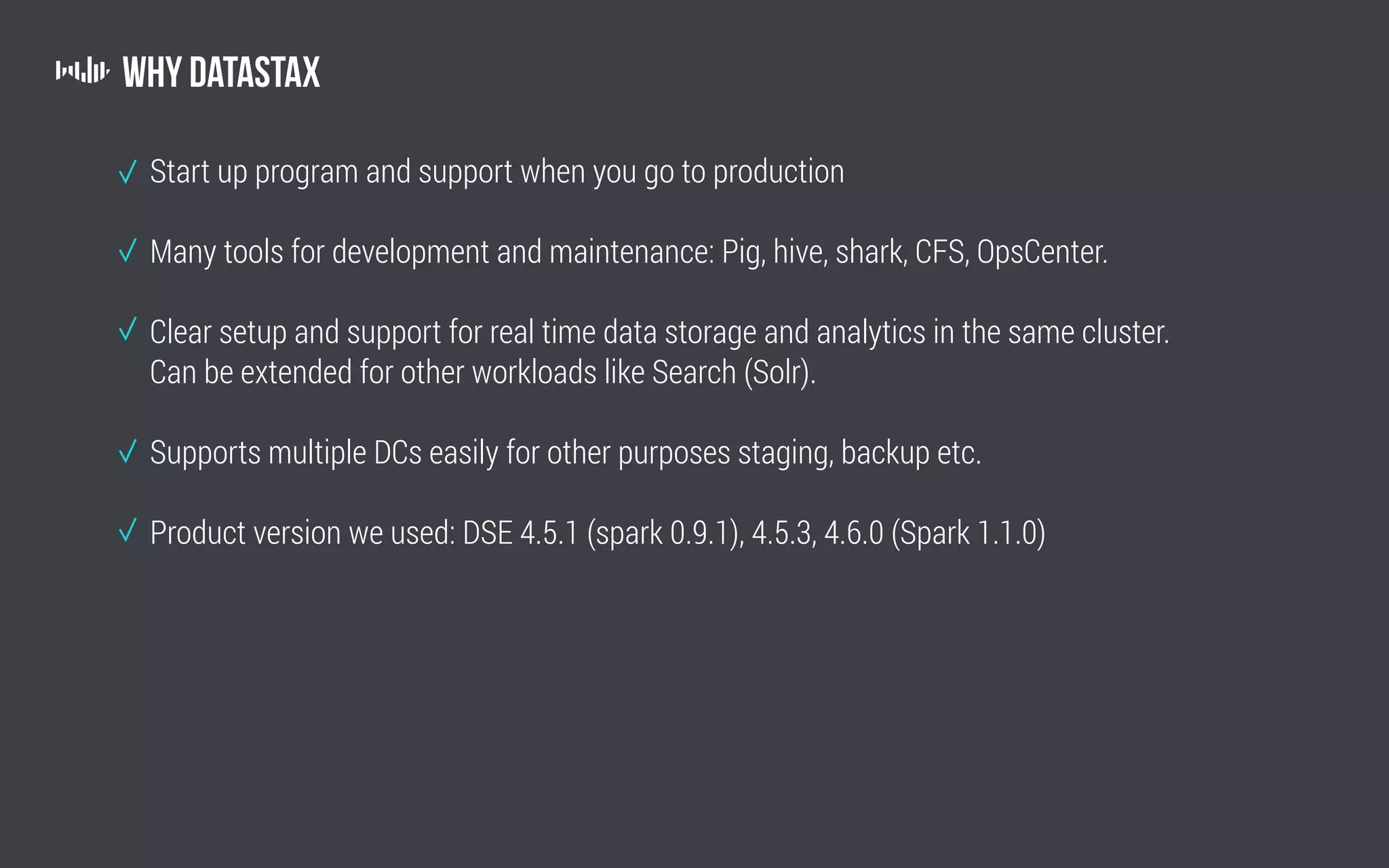

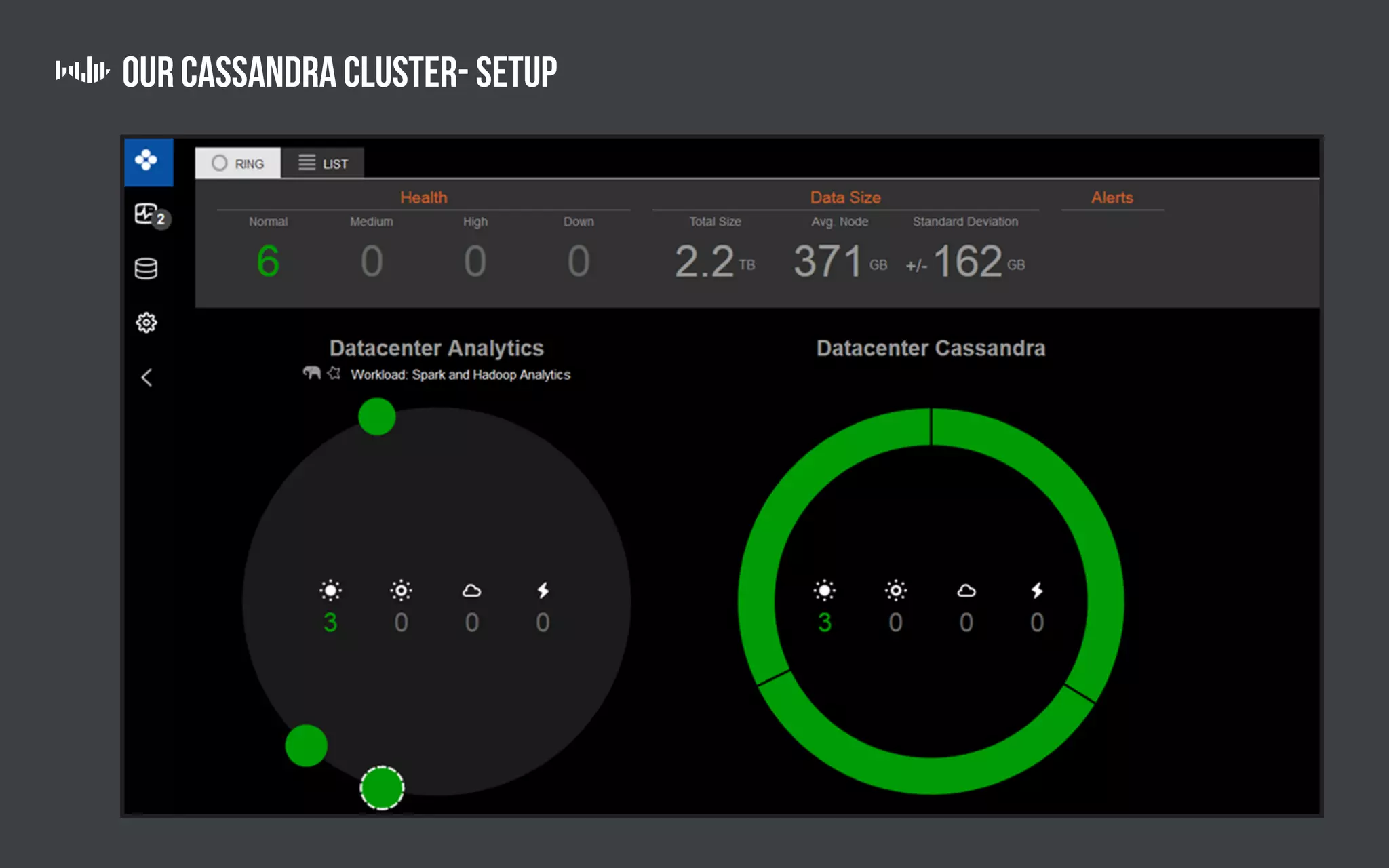

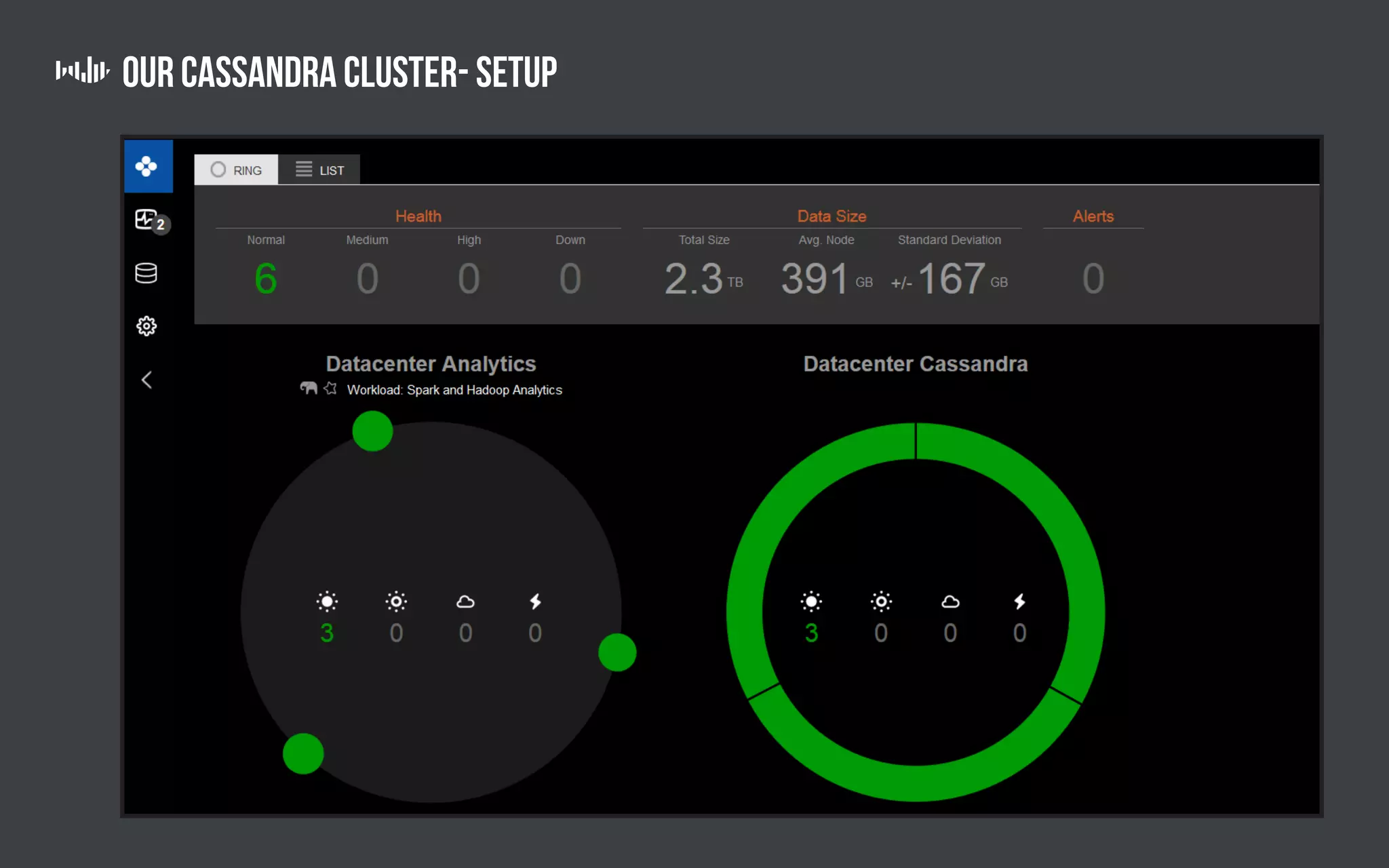

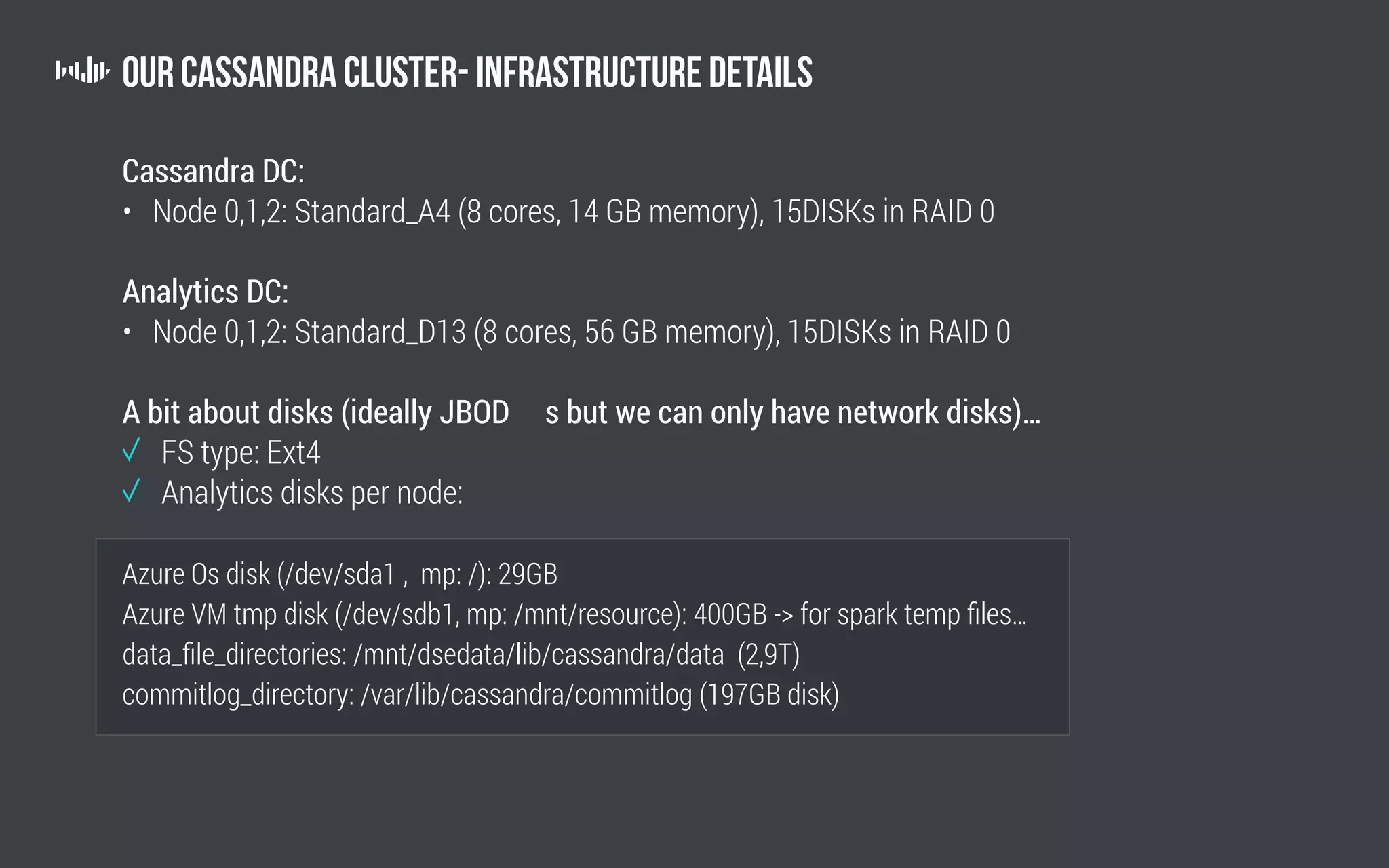

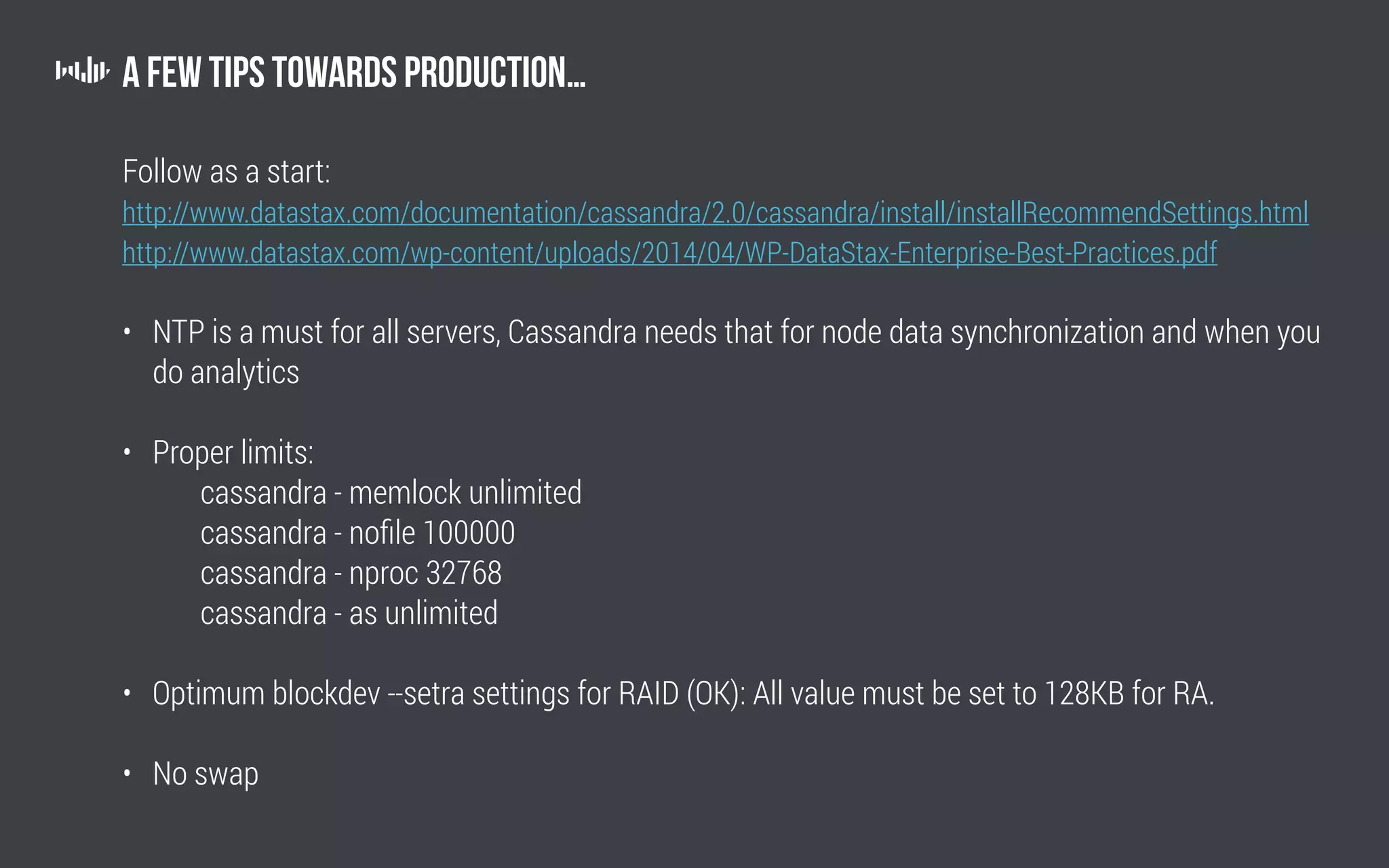

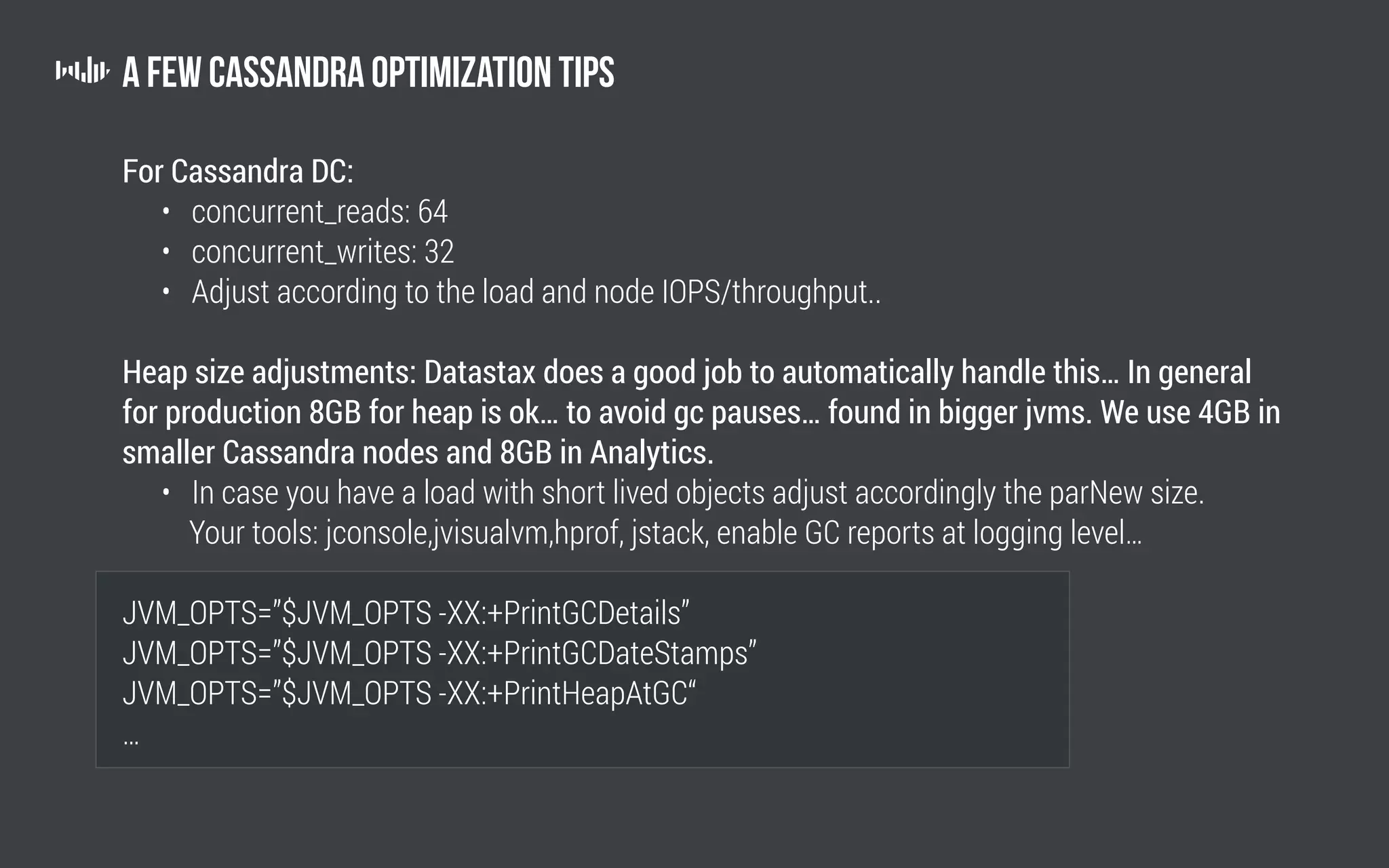

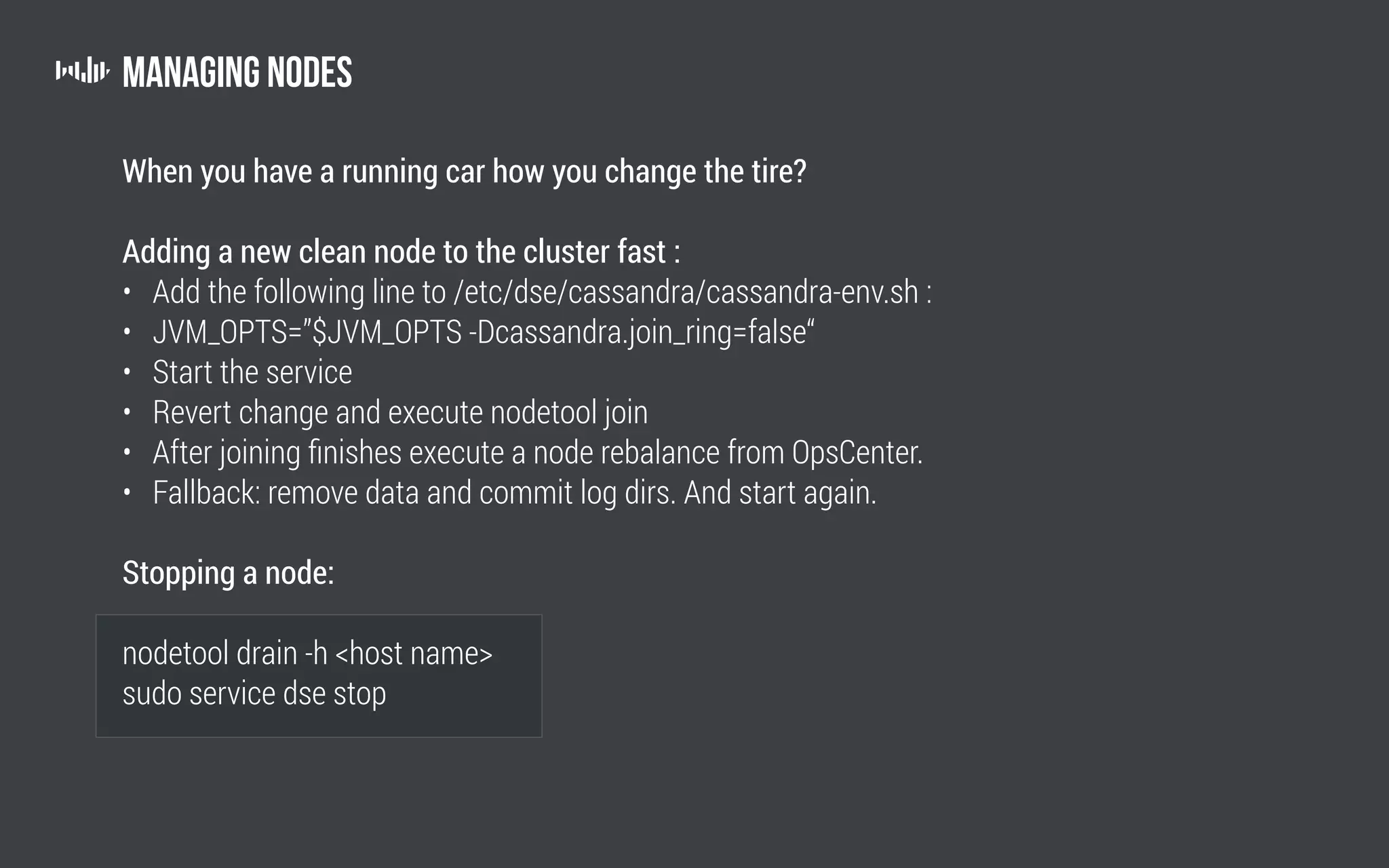

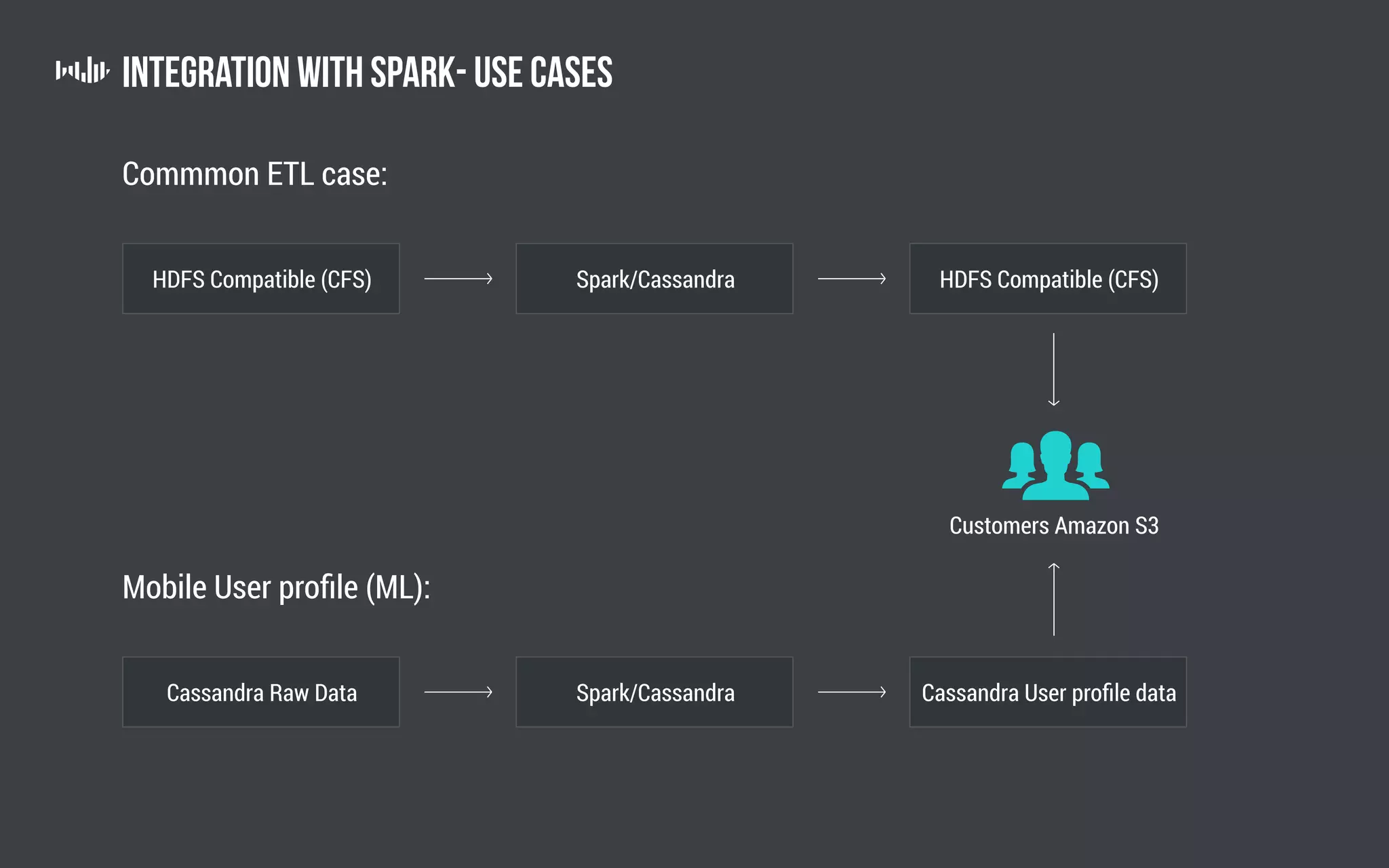

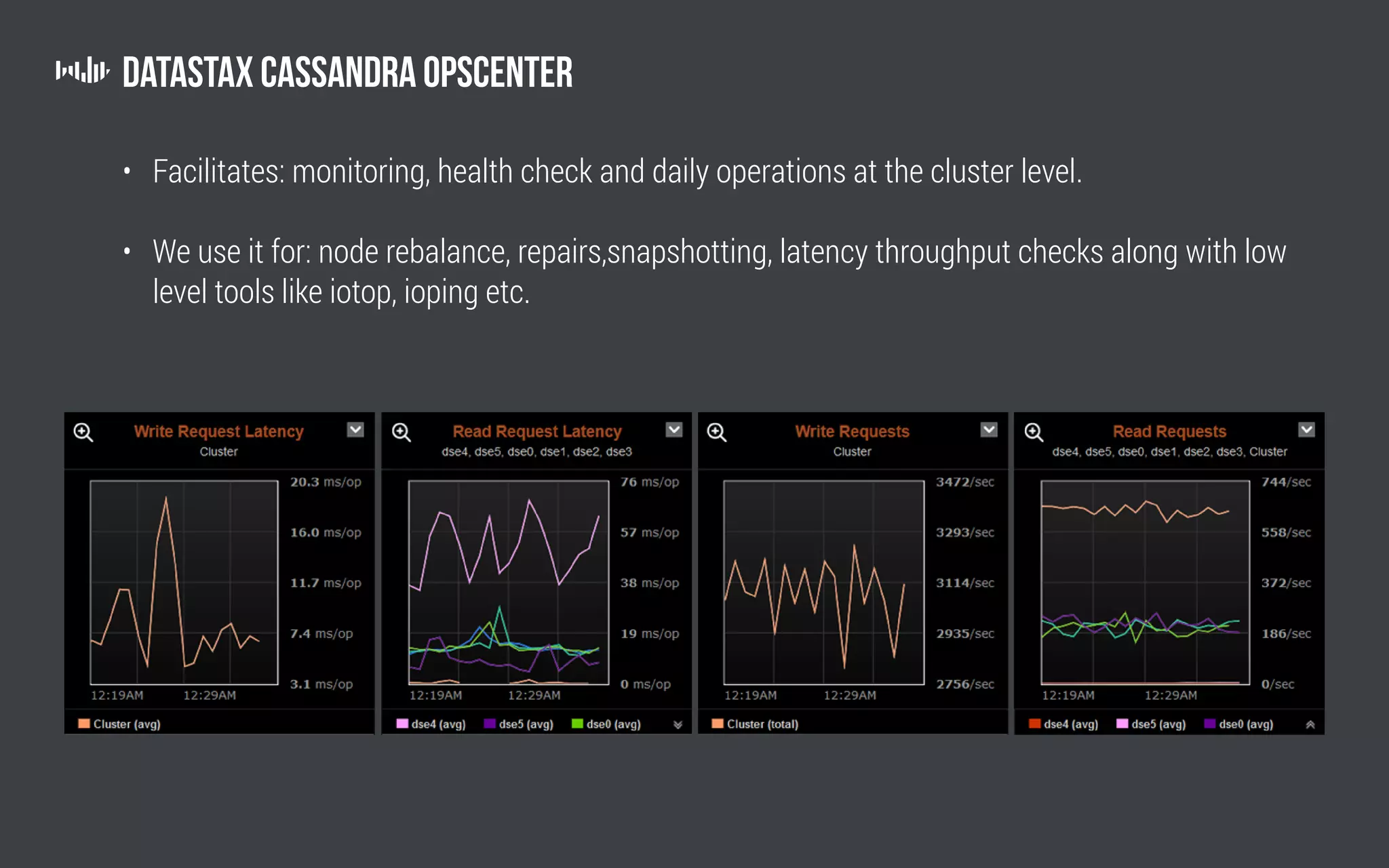

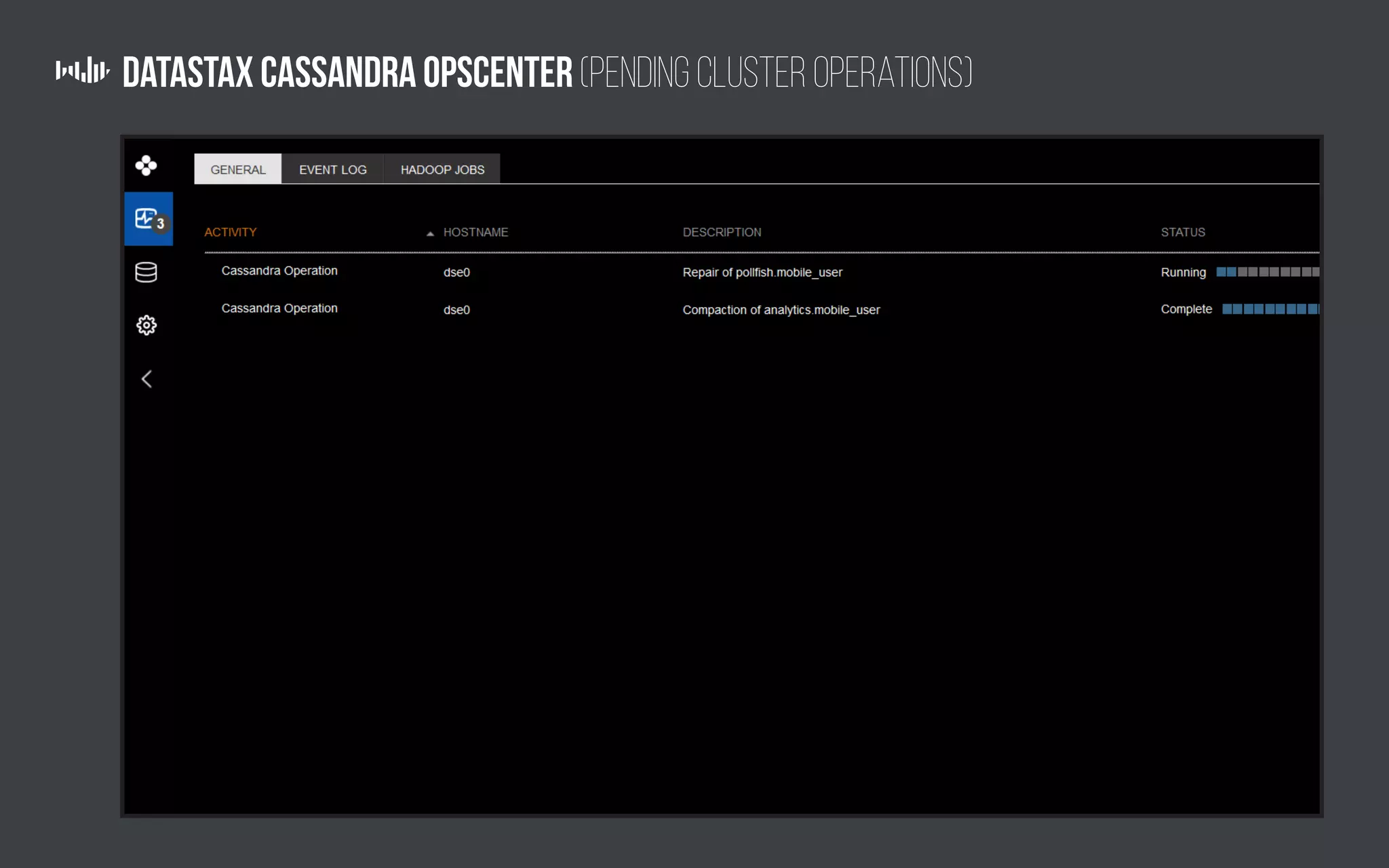

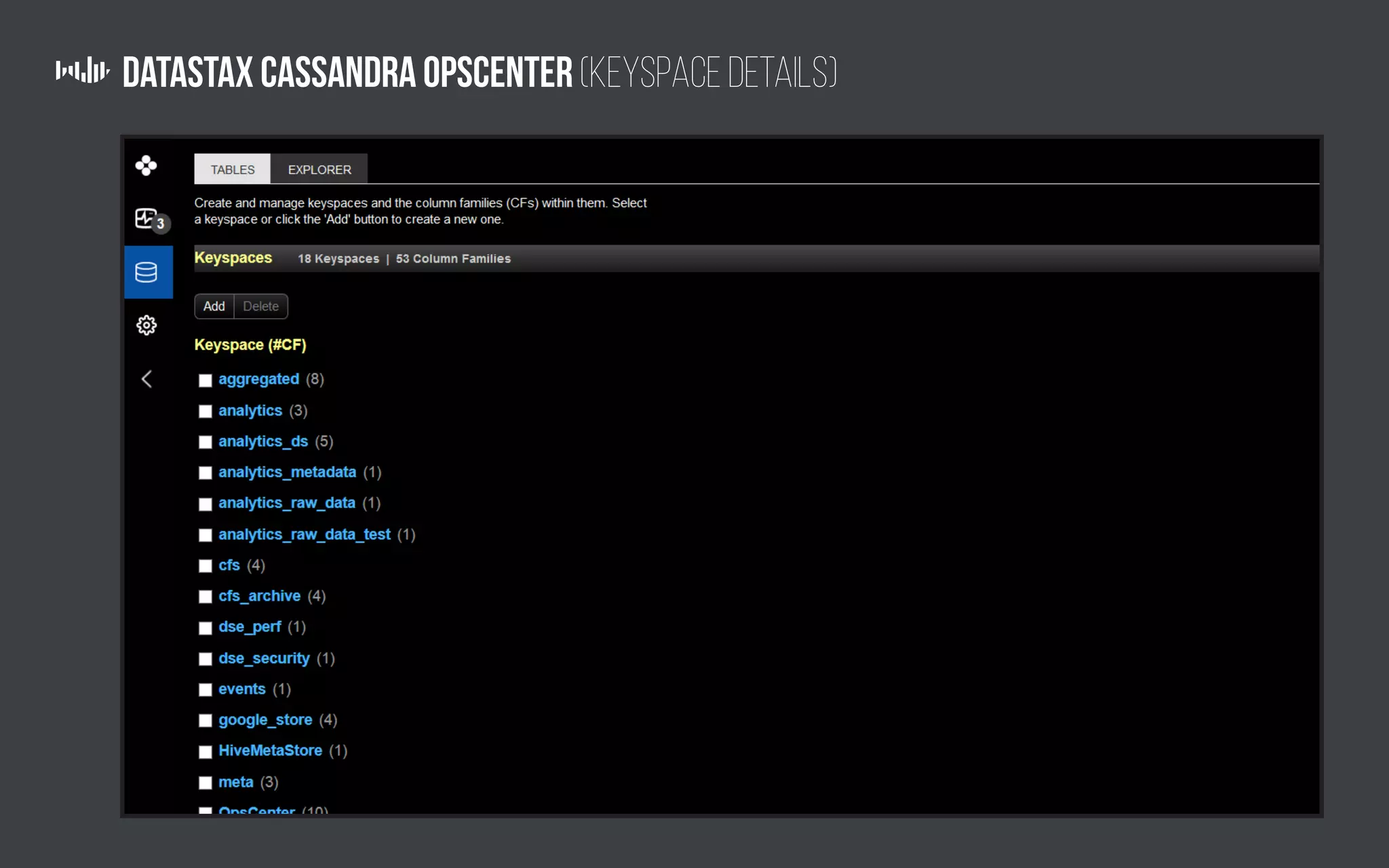

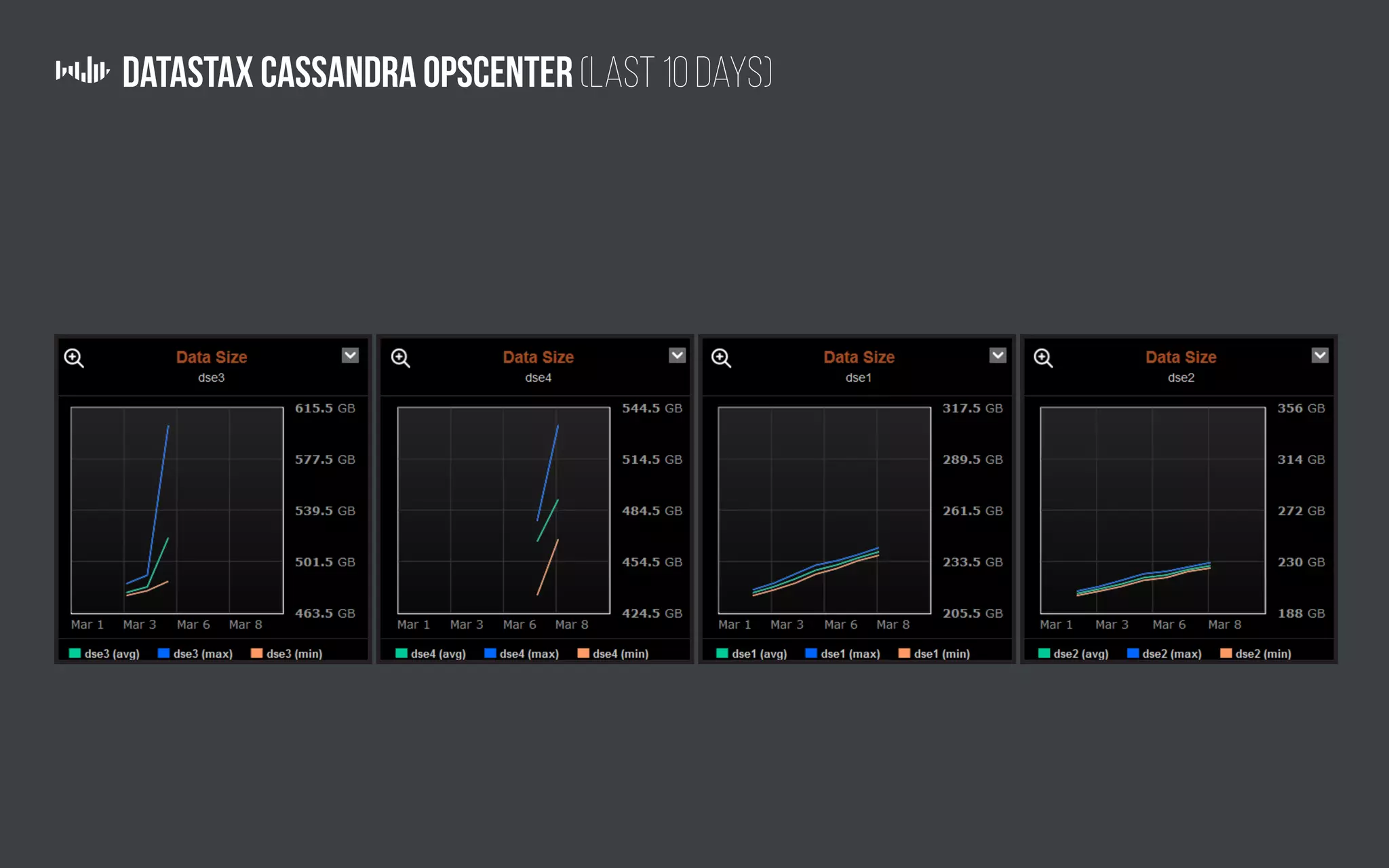

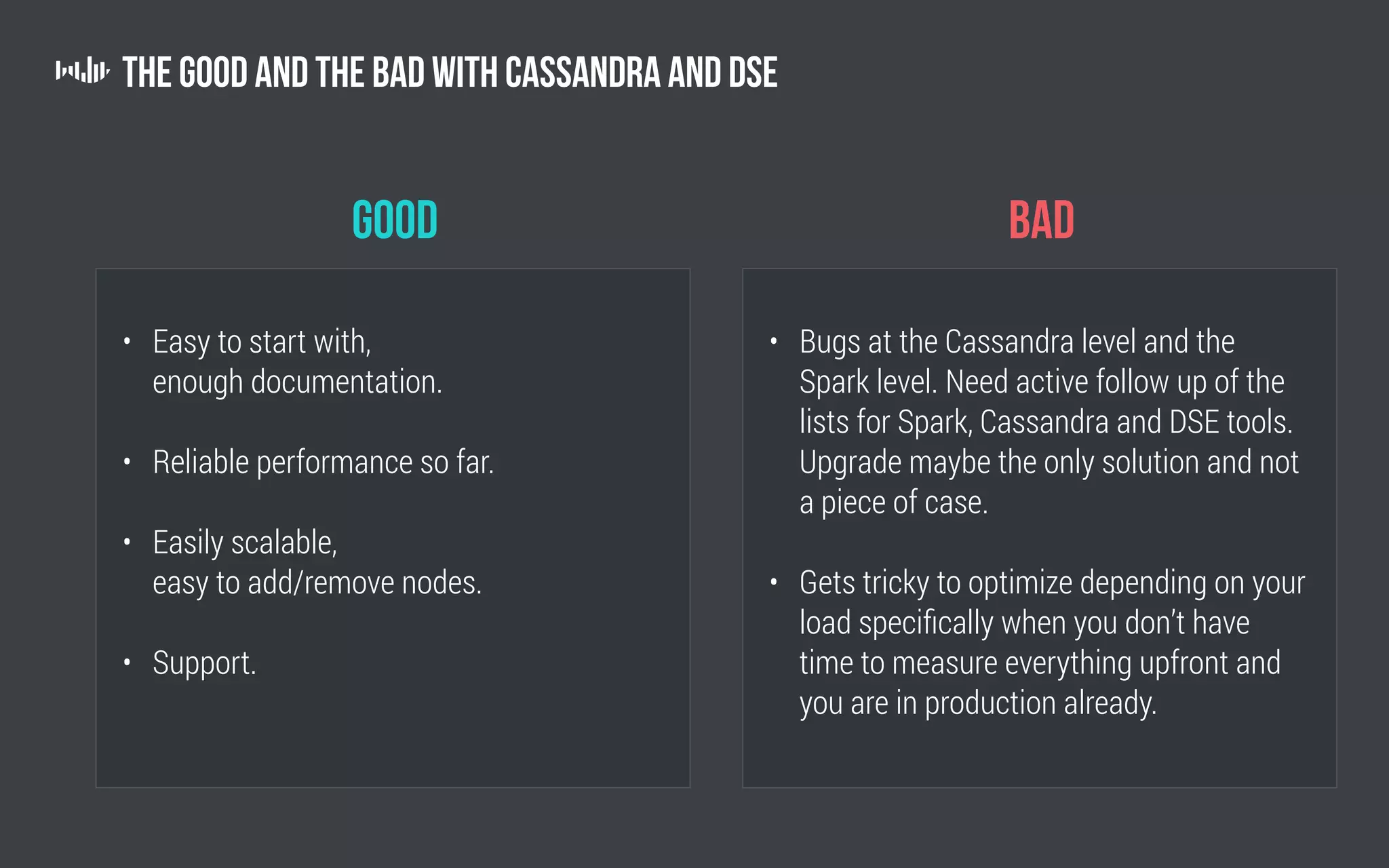

The document outlines the technology and architecture Pollfish uses to serve surveys to mobile users, focusing on its use of Apache Cassandra and Apache Spark. It covers the cluster setup, best practices for performance optimization, and integration with analytics frameworks. It also weighs the advantages and disadvantages of Cassandra and DataStax Enterprise (DSE) and offers tips for managing and scaling the cluster.