Report

Share

Download to read offline

Recommended

Introduction to Web Mining and Spatial Data Mining

Data Ware Housing And Mining subject offer in Gujarat Technological University in Branch of Information and Technology.

This Topic is from chapter 8 named Advance Topics.

PhD Research Topics in Data Mining Tutorials

Newest Subjects in Data Mining Projects

Intellectual Datasets in Data Mining Projects

Identical Concepts in in Data Mining Projects

Web Mining Projects Topics

MODERN CONCEPTS IN WEB MINING

FOREMOST TECHNOLOGIES IN WEB MINING

TOPMOST APPLICATIONS IN WEB MINING

Future Of Metadata –

Describes what metadata is, how it is created, and the impact that content creators ultimately have on it.

The Modern Palimpsest

A talk given at the Ingenta Publisher Forum, in November 2008.

See: http://www.ldodds.com/blog/archives/000264.html

For a detailed description of the talk.

Recommended

Introduction to Web Mining and Spatial Data Mining

Data Ware Housing And Mining subject offer in Gujarat Technological University in Branch of Information and Technology.

This Topic is from chapter 8 named Advance Topics.

PhD Research Topics in Data Mining Tutorials

Newest Subjects in Data Mining Projects

Intellectual Datasets in Data Mining Projects

Identical Concepts in in Data Mining Projects

Web Mining Projects Topics

MODERN CONCEPTS IN WEB MINING

FOREMOST TECHNOLOGIES IN WEB MINING

TOPMOST APPLICATIONS IN WEB MINING

Future Of Metadata –

Describes what metadata is, how it is created, and the impact that content creators ultimately have on it.

The Modern Palimpsest

A talk given at the Ingenta Publisher Forum, in November 2008.

See: http://www.ldodds.com/blog/archives/000264.html

For a detailed description of the talk.

Sensordaten analysieren mit Docker, CrateDB und Grafana

Predictive analytics, Internet of Things, Industrie 4.0: Begriffe, die in aller Munde sind. Wie aber sehen echte Installationen aus? Wie können containerbasierte Microservices den Deploymentprozess vereinfachen und gleichzeitig die Produktivität erhöhen? Claus Matzinger von Crate.io wird in diesem Vortrag all diese Fragen beantworten und mittels Raspberry Pis, Grafana und Rust einige Best Practices aus der "echten Welt" vorstellen.

Harvard Law School Justice Hackathon 2018 | Hauser Hall by Jacob Khan

Developers, project managers, tech enthusiasts, law enthusiasts, and justice-minded citizens from the Harvard and MIT communities focus on developing (both technical and non-technical) solutions to problems that HLS Clinics face. HLS Clinics provide free legal services to people who could not otherwise afford or access legal services. The hackathon is co-sponsored by Developing Justice, the Harvard Law and Technology Society, and the Legal Services Center. The hackathon will be judged by MIT professor and MacArthur Fellow Eric Demaine.

Clariah WP4 dataLegend data stories

Lunch lecture on a Clariah WP4 use case on the spread of the "spanish" flu epidemic in The Netherlands in 1918

K-NEAREST NEIGHBOR CLASSIFICATION OVER SEMANTICALLY SECURE ENCRYPTED RELATION...

I3E Technologies,

#23/A, 2nd Floor SKS Complex,

Opp. Bus Stand, Karur-639 001.

IEEE PROJECTS AVAILABLE,

NON-IEEE PROJECTS AVAILABLE,

APPLICATION BASED PROJECTS AVAILABLE,

MINI-PROJECTS AVAILABLE.

Mobile : 99436 99916, 99436 99926, 99436 99936

Mail ID: i3eprojectskarur@gmail.com

Web: www.i3etechnologies.com, www.i3eprojects.com

K nearest neighbor classification over semantically secure encrypted relation...

K nearest neighbor classification over semantically secure encrypted relational data

+91-9994232214,8144199666, ieeeprojectchennai@gmail.com,

www.projectsieee.com, www.ieee-projects-chennai.com

IEEE PROJECTS 2015-2016

-----------------------------------

Contact:+91-9994232214,+91-8144199666

Email:ieeeprojectchennai@gmail.com

ieee projects chennai, ieee projects bangalore

Web Mining & Text Mining

Web mining : How we mine the web data?

Text Mining : How we use text to search things over web?

Linked Open Data Utrecht University Library

Presentation on Linked Open Data for researchers and libraries: introduction, some SPARQL, and demo's.

Date: December 7, 2021

Linked Data media experiment

"A Norwegian Linked Open Data infrastructure experiment" - presentation by MediArena CEO Anders Waage Nilsen at Media 3.0 seminar in Bergen May 2011.

Big Data to SMART Data : Process Scenario

Big Data to SMART Data : Process scenario

Scenario of an implementation of a transformation process of the Data towards exploitable data and representative with treatments of the streaming, the distributed systems, the messages, the storage in an NoSQL environment, a management with an ecosystem Big Data graphic visualization of the data with the technologies:

Apache Storm, Apache Zookeeper, Apache Kafka, Apache Cassandra, Apache Spark and Data-Driven Document.

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUD

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUDInternational Journal of Technical Research & Application

Big data is the term that characterized by its increasing

volume, velocity, variety and veracity. All these characteristics

make processing on this big data a complex task. So, for

processing such data we need to do it differently like map reduce

framework. When an organization exchanges data for mining

useful information from this big data then privacy of the data

becomes an important problem. In the past, several privacy

preserving algorithms have been proposed. Of all those

anonymizing the data has been the most efficient one.

Anonymizing the dataset can be done on several operations like

generalization, suppression, anatomy, specialization, permutation

and perturbation. These algorithms are all suitable for dataset

that does not have the characteristics of the big data. To preserve

the privacy of the large dataset an algorithm was proposed

recently. It applies the top down specialization approach for

anonymizing the dataset and the scalability is increasing my

applying the map reduce frame work. In this paper we survey the

growth of big data, characteristics, map-reduce framework and

all the privacy preserving mechanisms and propose future

directions of our research. SURVEY OF UNITED STATES RELATED DOMAINS: SECURE NETWORK PROTOCOL ANALYSIS

Over time, the HTTP Protocol has undergone significant evolution. HTTP was the internet's foundation for data communication. When network security threats became prevalent, HTTPS became a widely accepted technology for assisting in a domain defense. HTTPS supported two security protocols: secure socket layer (SSL) and transport layer security (TLS). Additionally, the HTTP Strict Transport Security (HSTS) protocol was included to strengthen the HTTPS protocol. Numerous cyber-attacks occurred in the United States, and many of these attacks could have been avoided simply by implementing domains with the most up-to-date HTTP security mechanisms. This study seeks to accomplish two objectives: 1. Determine the degree to which US-related domains are configured optimally for HTTP security protocol setup; 2. Create a generic scoring system for a domain's network security based on the following factors: SSL version, TLS version, and presence of HSTS to easily determine where a domain stands. We found through our analysis and scoring system incorporation that US-related domains showed a positive trend for secure network protocol setup, but there is still room for improvement. In order to safeguard unwanted cyber-attacks, current HTTPS domains need to be extensively investigated to identify if they possess lower version protocol support. Due to the infrequent occurrence of HSTS in the evaluated domains, the computer science community necessitates further HSTS education.

Meliorating usable document density for online event detection

Online event detection (OED) has seen a rise in the research community as it can provide quick identification of possible events happening at times in the world. Through these systems, potential events can be indicated well before they are reported by the news media, by grouping similar documents shared over social media by users. Most OED systems use textual similarities for this purpose. Similar documents, that may indicate a potential event, are further strengthened by the replies made by other users, thereby improving the potentiality of the group. However, these documents are at times unusable as independent documents, as they may replace previously appeared noun phrases with pronouns, leading OED systems to fail while grouping these replies to their suitable clusters. In this paper, a pronoun resolution system that tries to replace pronouns with relevant nouns over social media data is proposed. Results show significant improvement in performance using the proposed system.

Network Forensic Investigation of HTTPS Protocol

International Journal of Modern Engineering Research (IJMER) is Peer reviewed, online Journal. It serves as an international archival forum of scholarly research related to engineering and science education.

Cert Overview

I created this presentation for a presentation on CERT (Community Emergency Response Teams) given to the Mid-State Amateur Radio Club.

More Related Content

What's hot

Sensordaten analysieren mit Docker, CrateDB und Grafana

Predictive analytics, Internet of Things, Industrie 4.0: Begriffe, die in aller Munde sind. Wie aber sehen echte Installationen aus? Wie können containerbasierte Microservices den Deploymentprozess vereinfachen und gleichzeitig die Produktivität erhöhen? Claus Matzinger von Crate.io wird in diesem Vortrag all diese Fragen beantworten und mittels Raspberry Pis, Grafana und Rust einige Best Practices aus der "echten Welt" vorstellen.

Harvard Law School Justice Hackathon 2018 | Hauser Hall by Jacob Khan

Developers, project managers, tech enthusiasts, law enthusiasts, and justice-minded citizens from the Harvard and MIT communities focus on developing (both technical and non-technical) solutions to problems that HLS Clinics face. HLS Clinics provide free legal services to people who could not otherwise afford or access legal services. The hackathon is co-sponsored by Developing Justice, the Harvard Law and Technology Society, and the Legal Services Center. The hackathon will be judged by MIT professor and MacArthur Fellow Eric Demaine.

Clariah WP4 dataLegend data stories

Lunch lecture on a Clariah WP4 use case on the spread of the "spanish" flu epidemic in The Netherlands in 1918

K-NEAREST NEIGHBOR CLASSIFICATION OVER SEMANTICALLY SECURE ENCRYPTED RELATION...

I3E Technologies,

#23/A, 2nd Floor SKS Complex,

Opp. Bus Stand, Karur-639 001.

IEEE PROJECTS AVAILABLE,

NON-IEEE PROJECTS AVAILABLE,

APPLICATION BASED PROJECTS AVAILABLE,

MINI-PROJECTS AVAILABLE.

Mobile : 99436 99916, 99436 99926, 99436 99936

Mail ID: i3eprojectskarur@gmail.com

Web: www.i3etechnologies.com, www.i3eprojects.com

K nearest neighbor classification over semantically secure encrypted relation...

K nearest neighbor classification over semantically secure encrypted relational data

+91-9994232214,8144199666, ieeeprojectchennai@gmail.com,

www.projectsieee.com, www.ieee-projects-chennai.com

IEEE PROJECTS 2015-2016

-----------------------------------

Contact:+91-9994232214,+91-8144199666

Email:ieeeprojectchennai@gmail.com

ieee projects chennai, ieee projects bangalore

Web Mining & Text Mining

Web mining : How we mine the web data?

Text Mining : How we use text to search things over web?

Linked Open Data Utrecht University Library

Presentation on Linked Open Data for researchers and libraries: introduction, some SPARQL, and demo's.

Date: December 7, 2021

Linked Data media experiment

"A Norwegian Linked Open Data infrastructure experiment" - presentation by MediArena CEO Anders Waage Nilsen at Media 3.0 seminar in Bergen May 2011.

What's hot (13)

Sensordaten analysieren mit Docker, CrateDB und Grafana

Sensordaten analysieren mit Docker, CrateDB und Grafana

Harvard Law School Justice Hackathon 2018 | Hauser Hall by Jacob Khan

Harvard Law School Justice Hackathon 2018 | Hauser Hall by Jacob Khan

K-NEAREST NEIGHBOR CLASSIFICATION OVER SEMANTICALLY SECURE ENCRYPTED RELATION...

K-NEAREST NEIGHBOR CLASSIFICATION OVER SEMANTICALLY SECURE ENCRYPTED RELATION...

K nearest neighbor classification over semantically secure encrypted relation...

K nearest neighbor classification over semantically secure encrypted relation...

WestlawNext - Research at the Next Level - The Recorder 2011

WestlawNext - Research at the Next Level - The Recorder 2011

Barcelona 2014: CrossRef System and Support Update by Chuck Koscher

Barcelona 2014: CrossRef System and Support Update by Chuck Koscher

Similar to PanamaPapers

Big Data to SMART Data : Process Scenario

Big Data to SMART Data : Process scenario

Scenario of an implementation of a transformation process of the Data towards exploitable data and representative with treatments of the streaming, the distributed systems, the messages, the storage in an NoSQL environment, a management with an ecosystem Big Data graphic visualization of the data with the technologies:

Apache Storm, Apache Zookeeper, Apache Kafka, Apache Cassandra, Apache Spark and Data-Driven Document.

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUD

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUDInternational Journal of Technical Research & Application

Big data is the term that characterized by its increasing

volume, velocity, variety and veracity. All these characteristics

make processing on this big data a complex task. So, for

processing such data we need to do it differently like map reduce

framework. When an organization exchanges data for mining

useful information from this big data then privacy of the data

becomes an important problem. In the past, several privacy

preserving algorithms have been proposed. Of all those

anonymizing the data has been the most efficient one.

Anonymizing the dataset can be done on several operations like

generalization, suppression, anatomy, specialization, permutation

and perturbation. These algorithms are all suitable for dataset

that does not have the characteristics of the big data. To preserve

the privacy of the large dataset an algorithm was proposed

recently. It applies the top down specialization approach for

anonymizing the dataset and the scalability is increasing my

applying the map reduce frame work. In this paper we survey the

growth of big data, characteristics, map-reduce framework and

all the privacy preserving mechanisms and propose future

directions of our research. SURVEY OF UNITED STATES RELATED DOMAINS: SECURE NETWORK PROTOCOL ANALYSIS

Over time, the HTTP Protocol has undergone significant evolution. HTTP was the internet's foundation for data communication. When network security threats became prevalent, HTTPS became a widely accepted technology for assisting in a domain defense. HTTPS supported two security protocols: secure socket layer (SSL) and transport layer security (TLS). Additionally, the HTTP Strict Transport Security (HSTS) protocol was included to strengthen the HTTPS protocol. Numerous cyber-attacks occurred in the United States, and many of these attacks could have been avoided simply by implementing domains with the most up-to-date HTTP security mechanisms. This study seeks to accomplish two objectives: 1. Determine the degree to which US-related domains are configured optimally for HTTP security protocol setup; 2. Create a generic scoring system for a domain's network security based on the following factors: SSL version, TLS version, and presence of HSTS to easily determine where a domain stands. We found through our analysis and scoring system incorporation that US-related domains showed a positive trend for secure network protocol setup, but there is still room for improvement. In order to safeguard unwanted cyber-attacks, current HTTPS domains need to be extensively investigated to identify if they possess lower version protocol support. Due to the infrequent occurrence of HSTS in the evaluated domains, the computer science community necessitates further HSTS education.

Meliorating usable document density for online event detection

Online event detection (OED) has seen a rise in the research community as it can provide quick identification of possible events happening at times in the world. Through these systems, potential events can be indicated well before they are reported by the news media, by grouping similar documents shared over social media by users. Most OED systems use textual similarities for this purpose. Similar documents, that may indicate a potential event, are further strengthened by the replies made by other users, thereby improving the potentiality of the group. However, these documents are at times unusable as independent documents, as they may replace previously appeared noun phrases with pronouns, leading OED systems to fail while grouping these replies to their suitable clusters. In this paper, a pronoun resolution system that tries to replace pronouns with relevant nouns over social media data is proposed. Results show significant improvement in performance using the proposed system.

Network Forensic Investigation of HTTPS Protocol

International Journal of Modern Engineering Research (IJMER) is Peer reviewed, online Journal. It serves as an international archival forum of scholarly research related to engineering and science education.

Cert Overview

I created this presentation for a presentation on CERT (Community Emergency Response Teams) given to the Mid-State Amateur Radio Club.

Exposing the Hidden Web

The purpose of this article is to provide a quantitative analysis of privacy-compromising mechanisms on the top 1 million websites as determined by Alexa. It is demonstrated that nearly 9 in 10 websites leak user data to parties of which the user is likely unaware; more than 6 in 10 websites spawn third-party cookies; and more than 8 in 10 websites load Javascript code. Sites that leak user data contact an average of nine external domains. Most importantly, by tracing the flows of personal browsing histories on the Web, it is possible to discover the corporations that profit from tracking users. Although many companies track users online, the overall landscape is highly consolidated, with the top corporation, Google, tracking users on nearly 8 of 10 sites in the Alexa top 1 million. Finally, by consulting internal NSA documents leaked by Edward Snowden, it has been determined that roughly one in five websites are potentially vulnerable to known NSA spying techniques at the time of analysis.

A Systems Approach To Qualitative Data Management And Analysis

Essay Writing Service

http://StudyHub.vip/A-Systems-Approach-To-Qualitative-Data- 👈

An introduction to Data Mining by Kurt Thearling

An Introduction to Data Mining Discovering hidden value in your data warehouse By Kurt Thearling Overview Data mining, the extraction of hidden predictive information from large databases, is a powerful new technology with great potential to help companies focus on the most important information in their data warehouses. Data mining tools predict future trends and behaviors, allowing businesses to make proactive, knowledge-driven decisions. The automated, prospective analyses offered by data mining move beyond the analyses of past events provided by retrospective tools typical of decision support systems. Data mining tools can answer business questions that traditionally were too time consuming to resolve. They scour databases for hidden patterns, finding predictive information that experts may miss because it lies outside their expectations. Most companies already collect and refine massive quantities of data. Data mining techniques can be implemented rapidly on existing software and hardware platforms to enhance the value of existing information resources, and can be integrated with new products and systems as they are brought on-line. When implemented on high performance client/server or parallel processing computers, data mining tools can analyze massive databases to deliver answers to questions such as, "Which clients are most likely to respond to my next promotional mailing, and why?" This white paper provides an introduction to the basic technologies of data mining. Examples of profitable applications illustrate its relevance to today’s business environment as well as a basic description of how data warehouse architectures can evolve to deliver the value of data mining to end users.

An introduction to Data Mining

Data Mining is a powerful new technology with great potential to help companies focus on the most important information in their data warehouses. Data mining tools predict future trends and behaviors, allowing businesses to make proactive, knowledge-driven decisions. It is very important to understand the importance and need of data mining in todays situation.

Understanding The World Wide Web

Article on business issues, protocol, and best practices for The Monitor

Competitive Intelligence Made easy

To automate the process of obtaining information for competitor research

the darknet and the future of content distribution

the darknet and the future of content distribution

Similar to PanamaPapers (20)

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUD

LITERATURE SURVEY ON BIG DATA AND PRESERVING PRIVACY FOR THE BIG DATA IN CLOUD

SURVEY OF UNITED STATES RELATED DOMAINS: SECURE NETWORK PROTOCOL ANALYSIS

SURVEY OF UNITED STATES RELATED DOMAINS: SECURE NETWORK PROTOCOL ANALYSIS

Meliorating usable document density for online event detection

Meliorating usable document density for online event detection

Christopher furton-darpa-project-memex-erodes-internet-privacy

Christopher furton-darpa-project-memex-erodes-internet-privacy

Demystifying analytics in e discovery white paper 06-30-14

Demystifying analytics in e discovery white paper 06-30-14

A Systems Approach To Qualitative Data Management And Analysis

A Systems Approach To Qualitative Data Management And Analysis

Additional Data Session Statistical Data Distinguish between full.docx

Additional Data Session Statistical Data Distinguish between full.docx

the darknet and the future of content distribution

the darknet and the future of content distribution

PanamaPapers

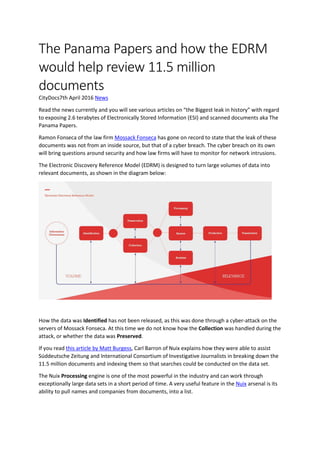

- 1. The Panama Papers and how the EDRM would help review 11.5 million documents CityDocs7th April 2016 News Read the news currently and you will see various articles on “the Biggest leak in history” with regard to exposing 2.6 terabytes of Electronically Stored Information (ESI) and scanned documents aka The Panama Papers. Ramon Fonseca of the law firm Mossack Fonseca has gone on record to state that the leak of these documents was not from an inside source, but that of a cyber breach. The cyber breach on its own will bring questions around security and how law firms will have to monitor for network intrusions. The Electronic Discovery Reference Model (EDRM) is designed to turn large volumes of data into relevant documents, as shown in the diagram below: How the data was Identified has not been released, as this was done through a cyber-attack on the servers of Mossack Fonseca. At this time we do not know how the Collection was handled during the attack, or whether the data was Preserved. If you read this article by Matt Burgess, Carl Barron of Nuix explains how they were able to assist Süddeutsche Zeitung and International Consortium of Investigative Journalists in breaking down the 11.5 million documents and indexing them so that searches could be conducted on the data set. The Nuix Processing engine is one of the most powerful in the industry and can work through exceptionally large data sets in a short period of time. A very useful feature in the Nuix arsenal is its ability to pull names and companies from documents, into a list.

- 2. The document set being dealt with contained both electronic data and scanned hard copy documents. In order to search the hard copy documents, traditionally a company would scan, unitise and code certain metadata fields, such as “To”, “From”, “CC”, “BCC”, “Document Title/Subject” and “Date”. The hard copy documents would then be loaded into a Review platform, such as kCura’s Relativity, and the electronic documents would follow a traditional processing route through Nuix and then loaded into the same review platform. Using the names and companies that can be identified using Nuix, journalists would be able to put together searches to identify the documents that could be relevant for their investigation. If a review tool such as Relativity was to be used, those search terms could be highlighted across the document set to help Analyse what is there. Relativity has some very powerful analytics features of its own for dealing with large data sets, such as email threading and near duplicate analysis. Email threading helps cut down the number of documents requiring full review by showing only the inclusive email. The last email in a thread: The last email in a particular thread will be marked inclusive, because any text added in this last email (even just a “forwarded” indication) will be unique to this email and this one alone. If nobody used attachments, and nobody ever changed the subject line, or went “below the line” to change text, this would be the only type of inclusiveness (Source). Trying to manually review the 11.5 million Panama Papers would be costly and prohibitive. It would also take a lot of time to go through the data set and require many people to review accordingly. Technological advances from review platforms allow for an assisted option in reviewing a vast quantity of documents. Relativity, for example, features an assisted review module that Identifies relevant and irrelevant documents, saving huge amounts of time and money. For data sets such as the Panama Papers, the EDRM has undoubtedly helped to streamline this process and speed up the legal proceedings. Similarly, any project that contains data to process and review would benefit from eDisclosure solutions over paper disclosure. Written by James Merritt, Director at CityDocs Forensic Technology & eDisclosure.