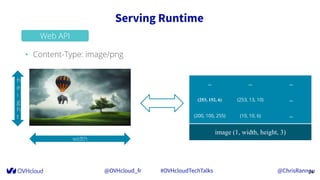

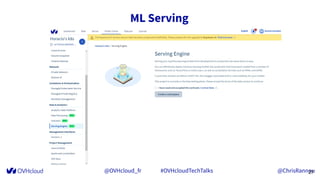

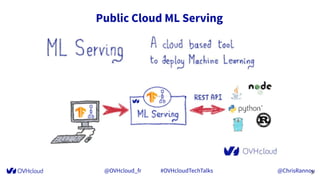

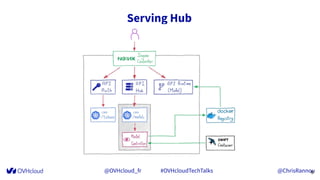

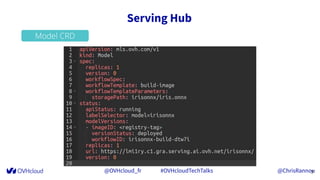

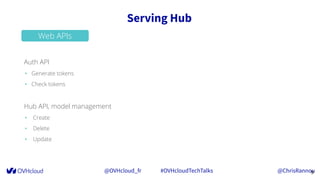

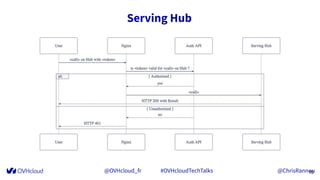

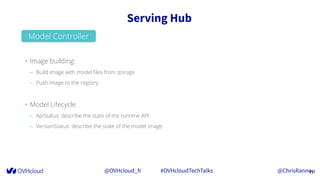

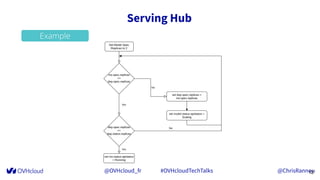

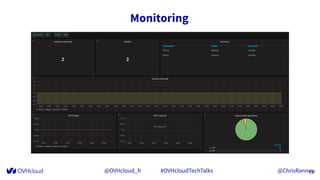

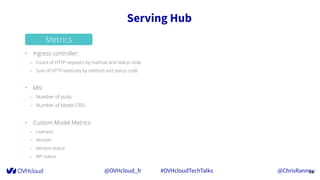

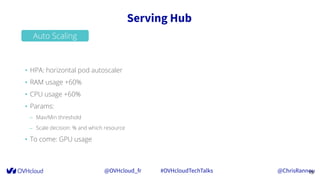

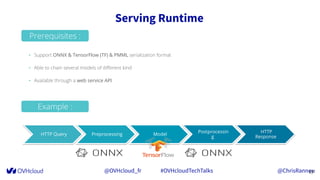

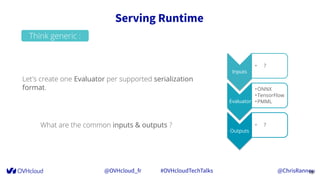

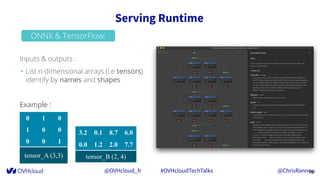

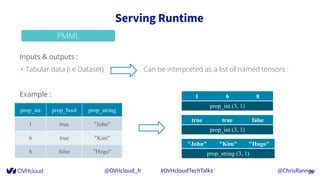

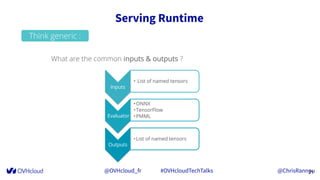

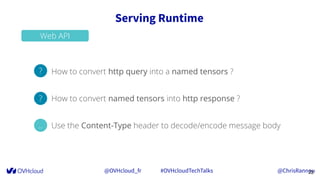

This document presents OVHcloud's machine learning (ML) serving offering, which simplifies the deployment of ML models by automating the process through APIs and thereby shortens time-to-production. It covers model lifecycle management, scaling, and monitoring, and supports several model formats, including ONNX, TensorFlow, and PMML. It also emphasizes ease of integration: the web API converts input and output data types and handles content types.

![OVHcloud TechTalks: ML serving, slide 23](https://image.slidesharecdn.com/2020-04-16-mlserving-200417153759/85/OVHcloud-TechTalks-ML-serving-23-320.jpg)

@OVHcloud_fr #OVHcloudTechTalks @ChrisRannou

Serving Runtime: Web API

• Content-Type: application/json

tensor_A (3,3): `[[0, 1, 0], [1, 0, 0], [0, 0, 1]]`

tensor_B (2, 3): `[[3.2, 0.1, 8.7, 6.0], [0.0, 1.2, 2.0, 7.7]]`

As JSON: `{"tensor_A": [[0, 1, 0], [1, 0, 0], [0, 0, 1]], "tensor_B": [[3.2, 0.1, 8.7, 6.0], [0.0, 1.2, 2.0, 7.7]]}`
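The mapping on this slide, where named tensors become nested JSON arrays sent with `Content-Type: application/json`, can be sketched in a few lines of Python. This is only an illustration under assumptions: the helper names `tensor_shape` and `build_request` are hypothetical, and the slide shows no endpoint URL, so none is used here. The values are copied from the slide as transcribed, where `tensor_B` has two rows of four values.

```python
import json


def tensor_shape(tensor):
    """Return the shape of a rectangular nested list, outermost dimension first."""
    shape = []
    level = tensor
    while isinstance(level, list):
        shape.append(len(level))
        if not level:
            break
        level = level[0]
    return tuple(shape)


def build_request(tensors):
    """Serialize named tensors into a JSON body plus the matching header (hypothetical helper)."""
    body = json.dumps(tensors).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    return body, headers


# Tensor values as they appear on the slide.
tensors = {
    "tensor_A": [[0, 1, 0], [1, 0, 0], [0, 0, 1]],
    "tensor_B": [[3.2, 0.1, 8.7, 6.0], [0.0, 1.2, 2.0, 7.7]],
}

body, headers = build_request(tensors)

for name, value in tensors.items():
    print(name, tensor_shape(value))
print(headers["Content-Type"], len(body), "bytes")
```

The resulting `body` and `headers` could then be handed to any HTTP client, for example `urllib.request.Request(url, data=body, headers=headers, method="POST")`, where `url` stands in for the model's serving endpoint, which is not given in the source.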