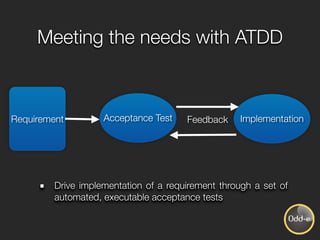

The document discusses Acceptance Test Driven Development (ATDD), a practice in which automated acceptance tests drive the implementation of requirements. The key points are:

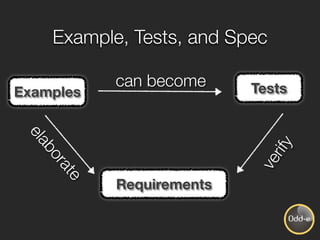

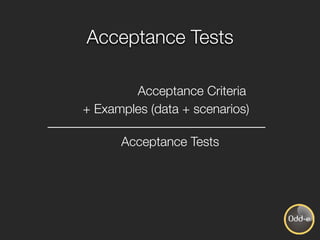

- ATDD uses examples selected from real-world scenarios to build a shared understanding and act as both specifications and an acceptance test suite.

- Acceptance tests are written before implementation, and development focuses on making them pass. Tests are automated and run in parallel with coding and other activities.

- ATDD involves collaboration between developers, testers, and product owners to clarify requirements and implement features together based on the tests.

- Benefits include comprehensible examples in place of complex requirements, close collaboration, a clear definition of done, and testing at the system level to prevent defects rather