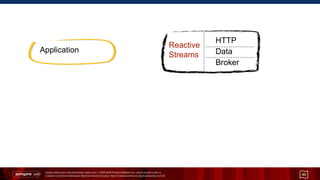

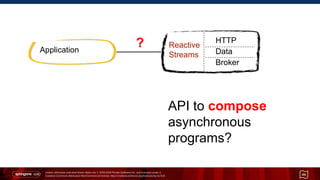

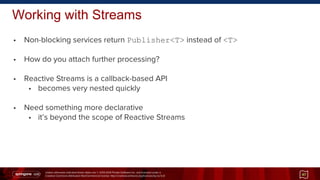

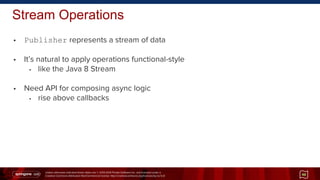

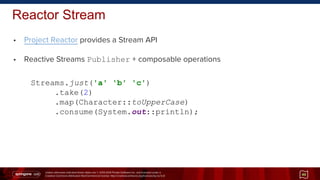

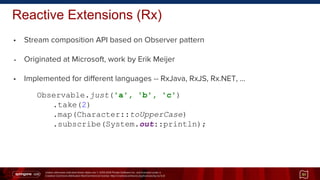

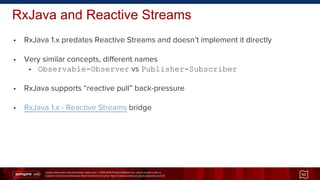

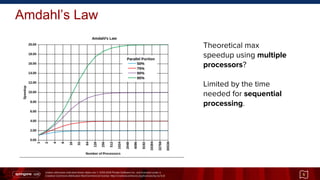

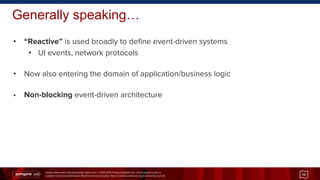

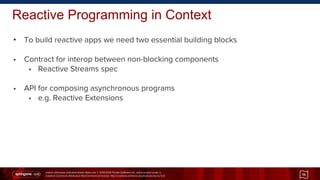

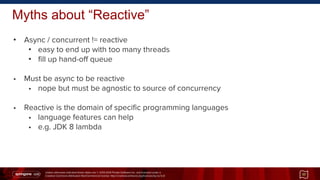

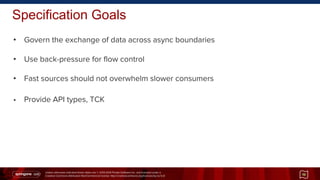

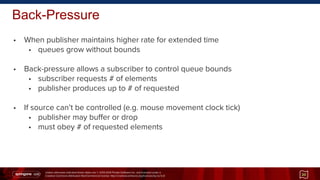

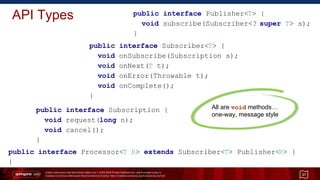

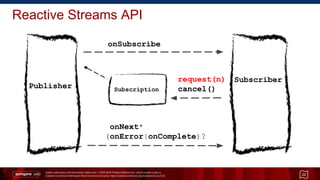

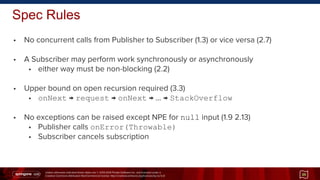

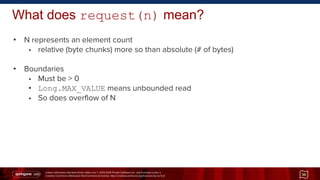

The document introduces reactive programming and explains why it matters for building scalable, non-blocking applications as modern workloads demand more concurrency. It motivates the need for reactive programming tools, focusing on the Reactive Streams specification, which governs the exchange of data across asynchronous boundaries and uses back-pressure for flow control. It also addresses common myths about reactive systems and outlines the architecture and API needed to implement reactive programming effectively.

![Unless otherwise indicated these slides are © 2013-2014 Pivotal Software Inc. and licensed under a

Creative Commons Attribution-NonCommercial license: http://creativecommons.org/licenses/by-nc/3.0/

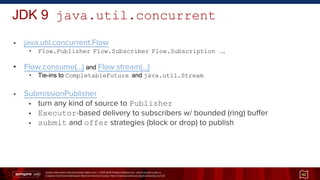

Reactive Streams → JDK 9 Flow.java

• No single best fluent async/parallel API in Java [1]

• CompletableFuture/CompletionStage

• continuation-style programming on futures

• java.util.stream

• multi-stage/parallel, “pull”-style operations on collections

• No "push"-style API for items as they become available from active source

Java “concurrency-interest” list:

[1] initial announcement [2] why in the JDK? [3] recent update

41](https://image.slidesharecdn.com/s2gx2015-intro-to-reactive-programming-150915194713-lva1-app6891/85/Intro-To-Reactive-Programming-41-320.jpg)
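The three styles named on this slide can be contrasted in a short sketch. This is only an illustration under stated assumptions, not code from the deck: the class name `AsyncStyles` and its method names are hypothetical, the doubling step stands in for any transformation, and `SubmissionPublisher` is used as a convenient JDK 9+ publisher for the `java.util.concurrent.Flow` interfaces. The subscriber requests one item at a time, which is one simple way to exercise the back-pressure signal.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;
import java.util.stream.Collectors;

// Hypothetical class for illustration; requires JDK 9 or later.
public class AsyncStyles {

    // Continuation style: one future value, transformed once it completes.
    static int continuationStyle(int input) {
        return CompletableFuture.supplyAsync(() -> input)
                .thenApply(x -> x * 2)
                .join();
    }

    // Pull style: the terminal operation pulls items out of an existing collection.
    static List<Integer> pullStyle(List<Integer> items) {
        return items.stream().map(x -> x * 2).collect(Collectors.toList());
    }

    // Push style: the publisher pushes items as they are submitted, but only
    // as many as the subscriber has requested (back-pressure).
    static List<Integer> pushStyle(List<Integer> items) {
        List<Integer> received = new CopyOnWriteArrayList<>();
        CountDownLatch done = new CountDownLatch(1);

        Flow.Subscriber<Integer> subscriber = new Flow.Subscriber<>() {
            private Flow.Subscription subscription;

            @Override
            public void onSubscribe(Flow.Subscription s) {
                subscription = s;
                s.request(1); // signal demand for the first item
            }

            @Override
            public void onNext(Integer item) {
                received.add(item * 2);
                subscription.request(1); // ask for the next item only when ready
            }

            @Override
            public void onError(Throwable t) {
                done.countDown();
            }

            @Override
            public void onComplete() {
                done.countDown();
            }
        };

        // close() (via try-with-resources) signals onComplete after buffered items.
        try (SubmissionPublisher<Integer> publisher = new SubmissionPublisher<>()) {
            publisher.subscribe(subscriber);
            items.forEach(publisher::submit);
        }

        try {
            done.await(); // wait until the subscriber has seen onComplete or onError
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for completion", e);
        }
        return received;
    }

    public static void main(String[] args) {
        System.out.println(continuationStyle(21));
        System.out.println(pullStyle(List.of(1, 2, 3)));
        System.out.println(pushStyle(List.of(1, 2, 3)));
    }
}
```

In the first two methods the caller decides when work happens, either by joining a single future or by running a terminal stream operation. In the third, items arrive through `onNext` as the source emits them, and `request(1)` limits how far the source can run ahead of the consumer.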