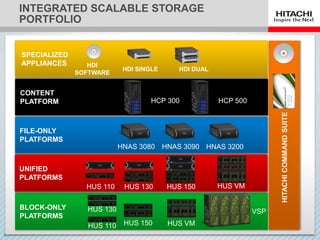

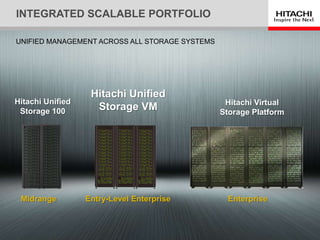

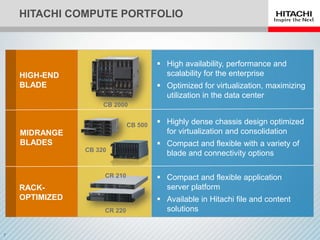

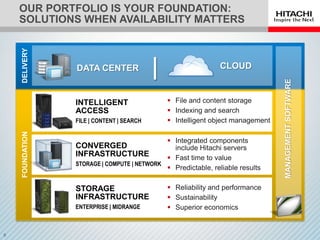

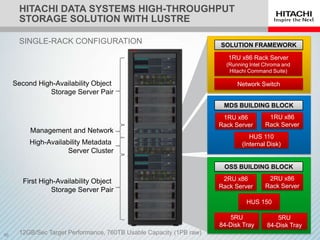

This document discusses the scalability and availability challenges of high-throughput storage in production environments. It presents Hitachi's storage portfolio and the solutions it offers for these challenges. These include unified storage platforms, file and content solutions, and a high-throughput storage solution based on Lustre. The Lustre solution combines Hitachi's high availability with Lustre's scalability. It is built from pre-architected building blocks that ease design and deployment, and it provides simplified management and support from a single vendor.