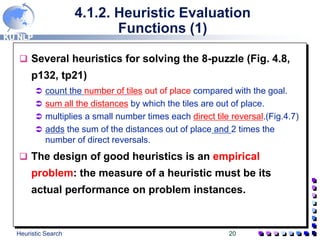

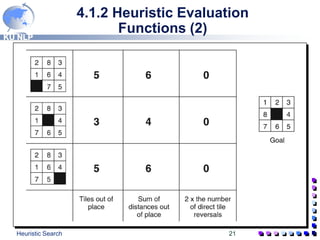

The document discusses heuristic search techniques. It describes best-first search, which tracks states in two lists: OPEN (states generated but not yet expanded) and CLOSED (states already expanded). OPEN is kept ordered by a heuristic estimate of how close each state is to the goal, and each iteration removes the most promising state from OPEN and expands it. Heuristic evaluation functions guide the search by assigning each state a value, such as the number of misplaced tiles. Combining the depth g(n) with the heuristic estimate h(n) in f(n) = g(n) + h(n), together with careful design of the heuristic, helps best-first search examine fewer states before it finds a solution.
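The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the slides' own best_first_search procedure: the function signature, the `GRAPH` and `H` tables, and the use of a heap for OPEN are all assumptions made for the example.

```python
import heapq

def best_first_search(start, goal, neighbors, h):
    """Return a path from start to goal, or None if OPEN runs empty."""
    # OPEN is a heap of (estimate, insertion order, state); the insertion
    # order breaks ties so that earlier-generated states come out first.
    open_heap = [(h(start), 0, start)]
    parent = {start: None}   # every state generated so far, with its parent
    closed = []              # states already expanded, newest first
    counter = 1
    while open_heap:
        _, _, state = heapq.heappop(open_heap)   # most promising state
        if state == goal:
            path = []
            while state is not None:             # walk parents back to start
                path.append(state)
                state = parent[state]
            return path[::-1]
        closed.insert(0, state)
        for child in neighbors(state):
            if child not in parent:              # skip states seen before
                parent[child] = state
                heapq.heappush(open_heap, (h(child), counter, child))
                counter += 1
    return None

# Hypothetical graph and heuristic values, invented for this example only.
GRAPH = {"S": ["A", "B"], "A": ["C"], "B": ["G"], "C": [], "G": []}
H = {"S": 3, "A": 1, "B": 2, "C": 4, "G": 0}

result = best_first_search("S", "G", lambda s: GRAPH[s], lambda s: H[s])
```

In this toy graph the heuristic first favors A, whose only child C looks worse than B, so the search moves on to B and reaches G from there.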

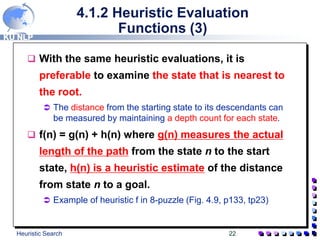

![KU NLP

Heuristic Search 18

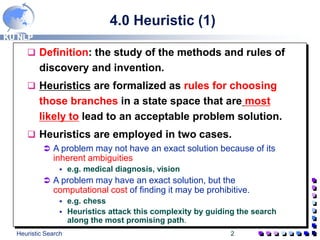

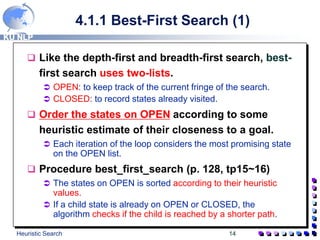

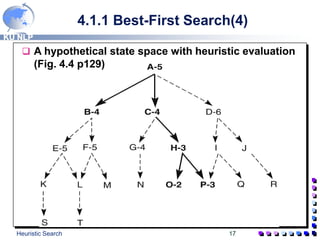

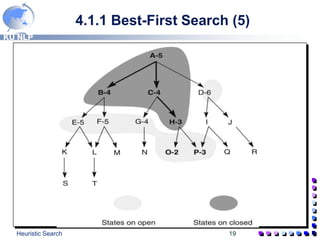

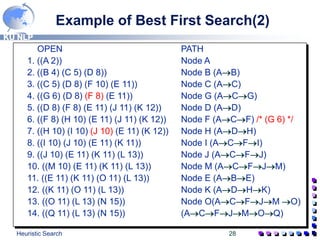

4.1.1 Best-First Search(4)

A trace of the execution of best_first_search

1. OPEN=[A5]; CLOSED=[ ]

2. evaluate A5; OPEN=[B4,C4,D6]; CLOSED=[A5]

3. evaluate B4; OPEN=[C4,E5,F5,D6]; CLOSED=[B4,A5]

4. evaluate C4; OPEN=[H3,G4,E5,F5,D6]; CLOSED=[C4,B4,A5]

5. Evaluate H3; OPEN=[O2,P3,G4,E5,F5,D6];

CLOSED=[H3,C4,B4,A5] …………

In the event a heuristic leads the search down a path that

proves incorrect, the algorithm shifts its focus to another part of

the space. A B … (E, F) C

Shift the focus from B to C, but the children of B (E and F) are

kept on OPEN in case the algorithm returns to them later.

The goal of best-first search is to find the goal state

by looking at as few states as possible. (Fig. 4.5,

p130, tp19)](https://image.slidesharecdn.com/3249989slideplayer-240416050944-5517fe9f/85/Heuristics-Search-3249989_slideplayer-ppt-18-320.jpg)
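The first five steps of the trace on this slide can be reproduced with a short Python sketch. The child lists in `CHILDREN` are inferred from which states appear on OPEN after each evaluation, the numbers in `H` are the values attached to the letters in the trace, and the function names are hypothetical. The sketch assumes a tree (no state is generated twice) and does not test for a goal, since the slide text does not name one.

```python
# Children inferred from the trace: evaluating A puts B, C, D on OPEN, etc.
CHILDREN = {"A": ["B", "C", "D"], "B": ["E", "F"],
            "C": ["G", "H"], "H": ["O", "P"]}
# Heuristic value of each state, as written next to its letter in the trace.
H = {"A": 5, "B": 4, "C": 4, "D": 6, "E": 5,
     "F": 5, "G": 4, "H": 3, "O": 2, "P": 3}

def show(states):
    """Format a list of states the way the slide does, e.g. [B4,C4,D6]."""
    return "[" + ",".join(f"{s}{H[s]}" for s in states) + "]"

def trace(start, steps):
    open_list, closed = [start], []
    lines = [f"OPEN={show(open_list)}; CLOSED={show(closed)}"]
    for _ in range(steps):
        state = open_list.pop(0)             # best state is first on OPEN
        closed.insert(0, state)              # newest CLOSED entry goes first
        open_list.extend(CHILDREN.get(state, []))
        open_list.sort(key=H.get)            # stable sort keeps older ties first
        lines.append(f"OPEN={show(open_list)}; CLOSED={show(closed)}")
    return lines

lines = trace("A", 4)
for line in lines:
    print(line)
```

Because Python's sort is stable, states with equal values stay in the order in which they were generated, which is why C4 stays ahead of G4 and E5 ahead of F5.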

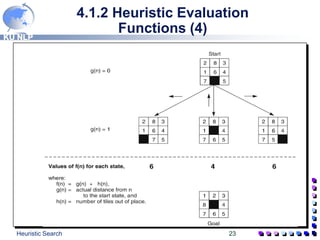

![KU NLP

Heuristic Search 24

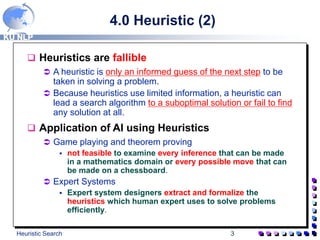

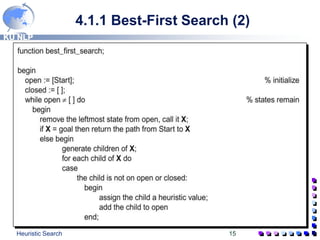

4.1.2 Heuristic Evaluation

Function (5)

State space generated in heuristic search (Fig 4.10, p135, tp25)

Each state is labeled with a letter and its heuristic weight, f(n) =

g(n) + h(n) where g(n)=actual distance from n to the start state,

and h(n)=number of tiles out of place.

The successive stages of OPEN and CLOSED are:

1. OPEN=[a4]; CLOSED=[ ];

2. OPEN=[c4,b6,d6]; CLOSED=[a4];

3. OPEN=[e5,f5,g6,b6,d6]; CLOSED=[a4, c4];

4. OPEN=[f5,h6,g6,b6,d6,i7]; CLOSED=[a4,c4,e5]; ………….

Although the state h, the immediate child of e, has the same

number of tiles out of place as f, it is one level deeper in the state

space. The depth measure, g(n), causes the algorithm to select f

for evaluation.](https://image.slidesharecdn.com/3249989slideplayer-240416050944-5517fe9f/85/Heuristics-Search-3249989_slideplayer-ppt-24-320.jpg)
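As a sketch of how f(n) = g(n) + h(n) might be computed for a sliding-tile puzzle, the Python below counts tiles out of place and adds the depth. The `START` and `GOAL` boards are assumptions chosen for illustration (the slide text does not list the boards), as are the function names; 0 stands for the blank, which is not counted as a tile.

```python
# Assumed 3x3 boards, read row by row; 0 is the blank.
START = (2, 8, 3,
         1, 6, 4,
         7, 0, 5)
GOAL = (1, 2, 3,
        8, 0, 4,
        7, 6, 5)

def misplaced_tiles(state, goal=GOAL):
    """h(n): number of tiles (blank excluded) not in their goal position."""
    return sum(1 for s, g in zip(state, goal) if s != 0 and s != g)

def f(state, depth, goal=GOAL):
    """f(n) = g(n) + h(n), with g(n) taken as the depth of the state."""
    return depth + misplaced_tiles(state, goal)

def successors(state):
    """All boards reachable by sliding one tile into the blank."""
    blank = state.index(0)
    row, col = divmod(blank, 3)
    result = []
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        r, c = row + dr, col + dc
        if 0 <= r < 3 and 0 <= c < 3:
            board = list(state)
            target = r * 3 + c
            board[blank], board[target] = board[target], board[blank]
            result.append(tuple(board))
    return result

start_score = f(START, 0)
child_scores = sorted(f(child, 1) for child in successors(START))
```

With these assumed boards the start state scores 4 and its three children score 4, 6 and 6, the same pattern as a4 followed by c4, b6 and d6 in the stages above. Two states with equal h(n) at different depths get different f(n), so the shallower one is expanded first.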

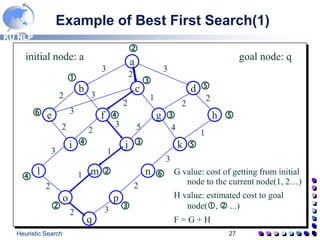

![KU NLP

Heuristic Search 29

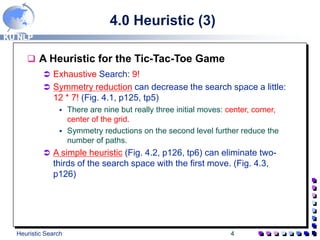

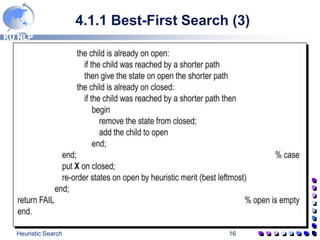

Example of Best First Search(3)

OPEN CLOSED PATH

[A2] [ ] A

[B4, C5, D8] [A2] (AB)

[C5, D8, F10, E11] [A2, B4] (AC)

[G6, D8, F8, E11] [A2, B4, C5] (ACG)

[D8, F8, E11, J11, K12] [A2, B4, C5, G6] (AD)

[F8, H10, E11, J11, K12] [A2, B4, C5, G6, D8] (ACF)

[H10, I10, J10, E11, K12] [A2,B4.C5.G6,D8,F8] (ADH)

[I10, J10, E11, K11] [A2,B4,C5,G6,D8,F8,H10] (ACFI)

[J10,E11,K11,L13] [A2,B4,C5,G6,D8,F8,H10,I10] (ACFJ)

[M10,E11,K11,L13] [A2,B4,C5,G6,D8,F8,H10,I10,J10] (ACFJM)

[E11,K11,O11,L13] [A2,B4,C5,G6,D8,F8,H10,I10,J10,M10] (ABE)

[K11,O11,L13] [A2,B4,C5,G6,D8,F8,H10,I10,J10,M10,E11] (ADHK)

[O11,L13,N15] [A2,B4,C5,G6,D8,F8,H10,I10,J10,M10,E11,K11]

(ACFJM O)

[Q11,L13,N15] [A2,B4,C5,G6,D8,F8,H10,I10,J10,M10,E11,K11,O11]

(ACFJMOQ)](https://image.slidesharecdn.com/3249989slideplayer-240416050944-5517fe9f/85/Heuristics-Search-3249989_slideplayer-ppt-29-320.jpg)