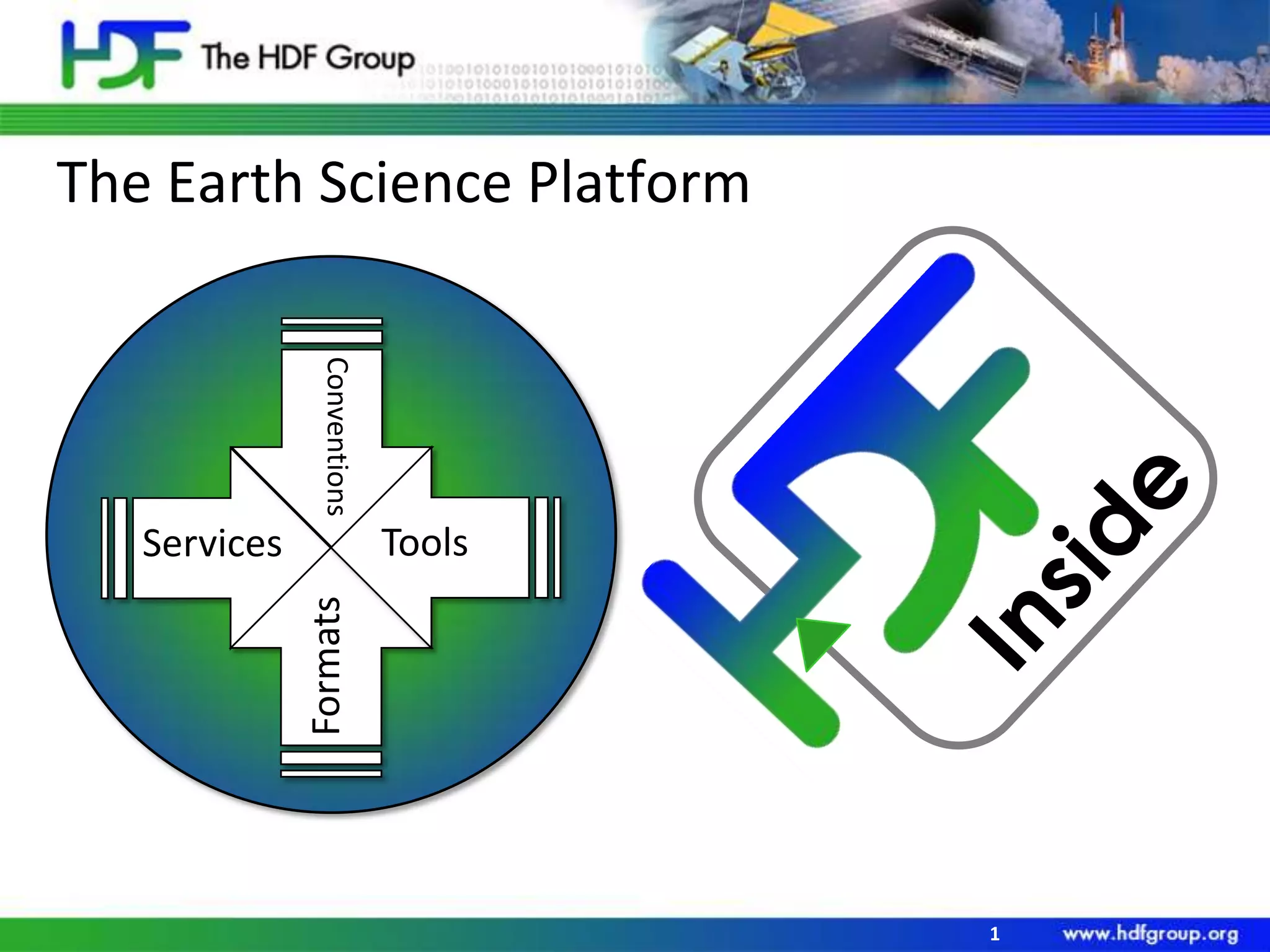

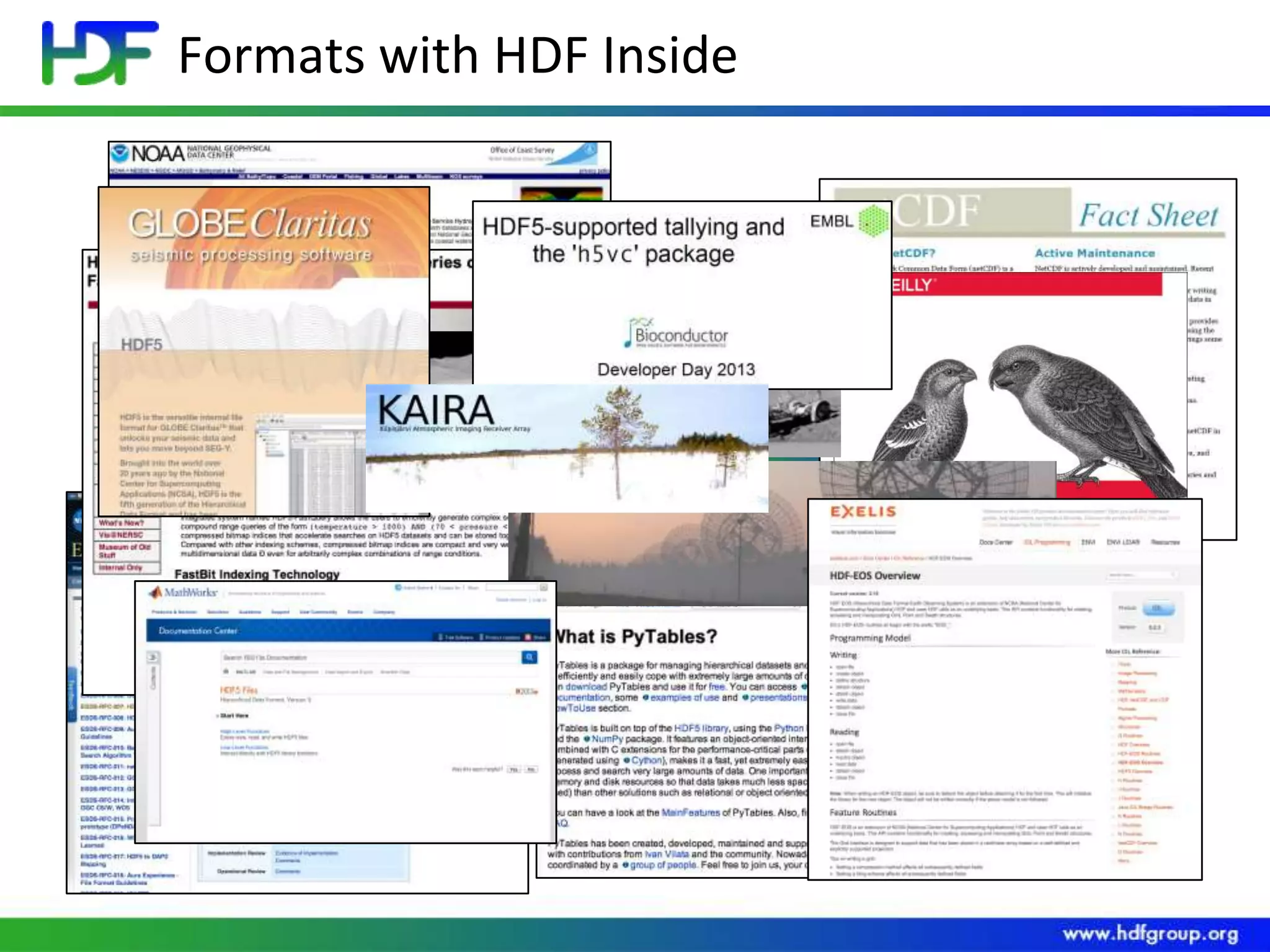

This document discusses why data organization and storage matter for communication within the earth science community. It emphasizes that standardized formats such as HDF5 simplify sharing data worldwide, and it highlights Python as a tool for working with these formats.
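As a rough illustration of how Python can handle HDF5, the sketch below writes a small array together with descriptive attributes and then reads it back. It assumes the third-party `h5py` and `numpy` packages are installed; the dataset name `surface_temperature`, the attribute names, the file name, and the values are all hypothetical and chosen only for this example.

```python
import os
import tempfile

import h5py
import numpy as np


def write_and_read_example(path):
    """Write a small self-describing dataset to HDF5, then read it back."""
    # Made-up sample values, used only for illustration.
    temperatures = np.array([[271.3, 272.1], [273.4, 274.0]])

    # Store the array and attach metadata describing it.
    with h5py.File(path, "w") as f:
        dset = f.create_dataset("surface_temperature", data=temperatures)
        dset.attrs["units"] = "K"
        dset.attrs["description"] = "illustrative sample grid"

    # Reopen the file read-only and recover both the data and its metadata.
    with h5py.File(path, "r") as f:
        dset = f["surface_temperature"]
        return dset[...], dset.attrs["units"]


# Use a temporary directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp:
    values, units = write_and_read_example(os.path.join(tmp, "example.h5"))
    print(values.shape, units)
```

Because the units and description are stored alongside the array, a recipient can interpret the file without a separate explanation, which is the kind of simplification a standardized format offers for data sharing.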