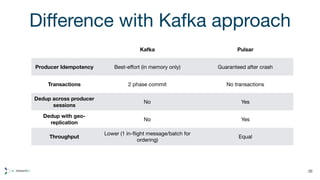

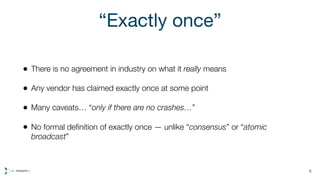

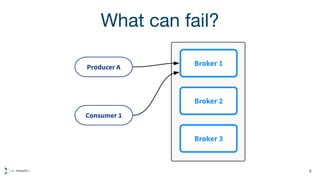

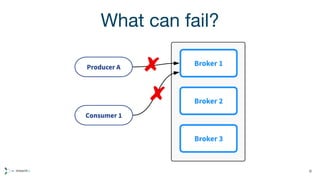

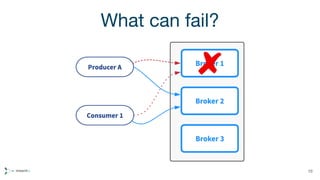

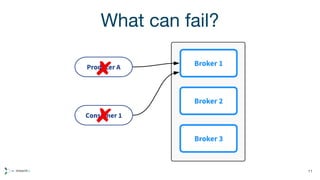

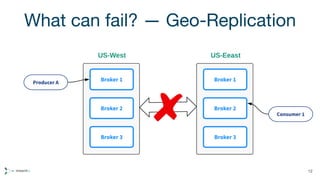

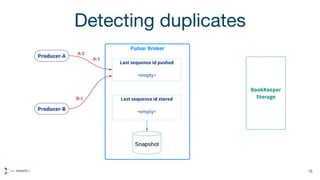

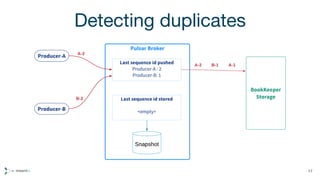

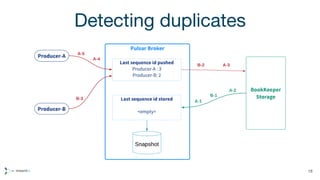

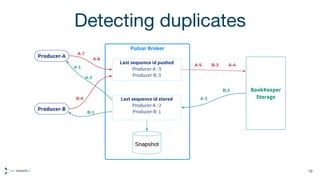

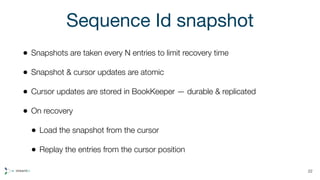

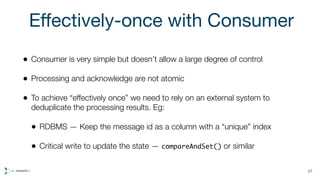

Apache Pulsar is a distributed pub/sub messaging system that offers low latency, multi-tenancy, and geo-replication, and uses Apache BookKeeper for scalable log storage. The document describes Pulsar's "effectively once" messaging semantics: how duplicate messages are detected and discarded so that data integrity is preserved despite failures. It also contrasts Pulsar's approach to deduplication with Kafka's, highlighting producer idempotency and higher throughput in failure scenarios.
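To make the idea of detecting and discarding duplicates concrete, here is a minimal in-memory sketch of deduplication keyed on a producer name and a monotonically increasing sequence ID. The class `DedupTracker` and its `accept` method are hypothetical names chosen for illustration. This is a simplified model of the technique, not Pulsar's broker code, and it ignores persistence of the tracking state.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical, simplified illustration of sequence-ID-based deduplication.
// Not Pulsar's implementation: state is kept in memory only.
class DedupTracker {
    // Highest sequence ID accepted so far, per producer name
    private final Map<String, Long> lastSequenceId = new HashMap<>();

    /** Returns true if the message is new, false if it is a duplicate. */
    boolean accept(String producerName, long sequenceId) {
        Long last = lastSequenceId.get(producerName);
        if (last != null && sequenceId <= last) {
            // Already seen: typically a producer retry after a timeout or reconnect
            return false;
        }
        lastSequenceId.put(producerName, sequenceId);
        return true;
    }
}
```

With this model, a producer that resends a message with the same sequence ID after a failure has the resend dropped, which is what makes retries safe (idempotent) from the producer's point of view.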

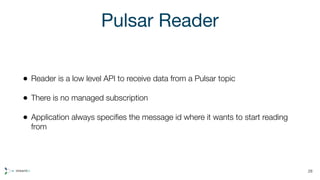

![Reader example (slide 29)](https://image.slidesharecdn.com/apachepulsar-effectivelyonce-180309170757/85/Effectively-once-semantics-in-Apache-Pulsar-29-320.jpg)

Reader example

```java
// `client` is an existing PulsarClient; recoverLastMessageIdFromDB() is an
// application-defined helper that returns the last stored message ID.
MessageId lastMessageId = recoverLastMessageIdFromDB();
Reader reader = client.createReader(MY_TOPIC, lastMessageId,
        new ReaderConfiguration());

while (true) {
    Message msg = reader.readNext();
    byte[] msgId = msg.getMessageId().toByteArray();

    // Process the message and store msgId atomically
}
```
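The step "process the message and store msgId atomically" is what lets the reader resume without applying a message twice. One way to picture it is the sketch below, which keeps the application state and the last message ID in a single immutable object and swaps them together. `Checkpoint` and `CheckpointStore` are hypothetical names, and the running total is an assumed stand-in for real processing. A production system would more likely write both values in one database transaction; this in-memory version only illustrates that the two must never be updated separately.

```java
import java.util.concurrent.atomic.AtomicReference;

// Hypothetical immutable snapshot: application state plus the ID of the
// last message that was applied to it.
final class Checkpoint {
    final long total;
    final byte[] lastMsgId; // null until the first message is applied

    Checkpoint(long total, byte[] lastMsgId) {
        this.total = total;
        this.lastMsgId = lastMsgId;
    }
}

// Hypothetical in-memory stand-in for a transactional store.
class CheckpointStore {
    private final AtomicReference<Checkpoint> current =
            new AtomicReference<>(new Checkpoint(0L, null));

    // Replaces state and message ID in one atomic swap, so no observer
    // can see the new total paired with the old message ID or vice versa.
    void apply(long value, byte[] msgId) {
        byte[] idCopy = msgId.clone();
        current.updateAndGet(c -> new Checkpoint(c.total + value, idCopy));
    }

    Checkpoint get() {
        return current.get();
    }
}
```

On restart, the stored `lastMsgId` would play the role of the value returned by `recoverLastMessageIdFromDB()` in the Reader example.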