DPACK_Intro


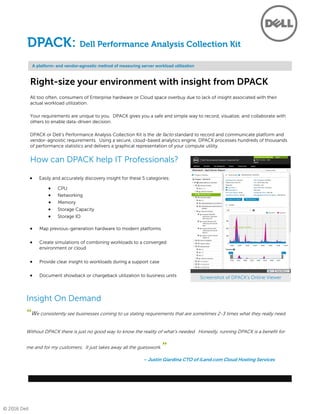

DPACK (Dell's Performance Analysis Collection Kit) provides a platform-agnostic way to record, visualize, and collaborate on server workload utilization insights. It non-invasively collects hundreds of thousands of performance statistics over several days. It then analyzes them to deliver graphical representations of compute resource usage. This helps right-size hardware environments by revealing actual workload needs, so businesses can avoid overbuying servers and cloud capacity for lack of utilization data.


Recommended

Data Fabric: NetApp's Vision for the Future of Data Management

Gain the freedom to manage, protect & leverage data across the resources that are best for your business.

informatica data replication (IDR)

understanding of what Data Replication is and how it is defined in the industry.

Introducing Elevate Capacity Management

It is no longer efficient, nor even possible, to properly manage your infrastructure with manual processes performed in an ad hoc, incident-based manner. You must be able to continuously monitor, assess, adjust and restructure every part of your multiplatform, distributed, interconnected and internet-dependent cyber-multiverse to respond to constantly changing business requirements.

Elevate Capacity Management (formerly Athene) provides leading companies with the cross-platform capacity management solution they need to meet their capacity management challenges. The new release of Elevate Capacity Management adds new features to ensure data integrity, improve data filtering, and provide more flexibility in customizing the most important thresholds in your IT environment.

View this webinar on-demand and learn about these new features including:

• Performance enhancement for large scale data ingestion and reporting

• The ability to use virtually any metric as a threshold for monitoring and alerting

• A faster and more scalable multi-threaded data management architecture

10 Good Reasons: NetApp for Finance

Here are 10 things you should know about NetApp and the financial industry.

'Software-Defined Everything' Includes Storage and Data

Is your data stuck where it started? Join us and industry analyst Jason Bloomberg this Tuesday, July 26 to discover how you can automate data mobility across your software-defined datacenter.

If you’re like most enterprises, you’ve likely added the benefits of flash and cloud storage to your traditional infrastructure. This storage diversity delivers more choice in meeting performance, protection and cost requirements to support the different data needs of applications, but without a way to converge data across your different storage investments, it’s nearly impossible to align the right data to the right storage at the right time. Data virtualization is a software-defined solution that finally unites different storage systems into a global pool of resources so that even data can be part of your SDDC architecture from on-premise and into the cloud.

In Tuesday’s webinar, Jason will provide insight on how the principle of Software-Defined Everything supports the business agility needs of today’s enterprises. He will also discuss the software-defined approach to championing agility by automatically aligning storage resources to evolving data demands through data virtualization and orchestration, even as business needs change.

Following Jason’s talk, Primary Data Senior Systems Engineer Brett Arnott will cover how data orchestration ensures that data is automatically aligned to the right storage resource to deliver breakthrough agility and efficiency. Attendees will learn how data virtualization and orchestration helps enterprises not only develop a roadmap for their transition to software-defined storage and data, but also execute the move to automated, Objective-driven storage efficiency.

Transform Your Mainframe with Microsoft Azure

Moving mainframe application data to cloud data warehouses helps to enhance downstream analytics, business insights and next wave technologies such as machine learning. However, integrating mainframe data to cloud data warehouses often need tedious data transformations and highly skilled resources. Learn how the Syncsort Connect product family is helping businesses transform their mainframe to Microsoft Azure ecosystem. Key takeaways from this webinar are:

• How Syncsort Connect builds links between the mainframe and the Microsoft Azure ecosystem

• Value gained by taking mainframe data and bringing it into the Microsoft Azure ecosystem

• The importance of mainframe data when it comes to building out new data driven services and applications in Microsoft Azure

Transform 2014: Kofax Altosoft™ Insight - Deep Dive

Take an in-depth look at Kofax Altosoft Insight by watching an analytics solution built from scratch, everything from defining data connections to multiple data sources to building dashboards and reports.

Kofax Analytics presentation

Learn about how FLCC can assist in implementation of Kofax Analytics. Previously implemented for Wells Fargo's 200M page capture system.

Recommended

Data Fabric: NetApp's Vision for the Future of Data Management

Gain the freedom to manage, protect & leverage data across the resources that are best for your business.

informatica data replication (IDR)

understanding of what Data Replication is and how it is defined in the industry.

Introducing Elevate Capacity Management

It is no longer efficient, nor even possible, to properly manage your infrastructure with manual processes performed in an ad hoc, incident-based manner. You must be able to continuously monitor, assess, adjust and restructure every part of your multiplatform, distributed, interconnected and internet-dependent cyber-multiverse to respond to constantly changing business requirements.

Elevate Capacity Management (formerly Athene) provides leading companies with the cross-platform capacity management solution they need to meet their capacity management challenges. The new release of Elevate Capacity Management adds new features to ensure data integrity, improve data filtering, and provide more flexibility in customizing the most important thresholds in your IT environment.

View this webinar on-demand and learn about these new features including:

• Performance enhancement for large scale data ingestion and reporting

• The ability to use virtually any metric as a threshold for monitoring and alerting

• A faster and more scalable multi-threaded data management architecture

10 Good Reasons: NetApp for Finance

Here are 10 things you should know about NetApp and the financial industry.

'Software-Defined Everything' Includes Storage and Data

Is your data stuck where it started? Join us and industry analyst Jason Bloomberg this Tuesday, July 26 to discover how you can automate data mobility across your software-defined datacenter.

If you’re like most enterprises, you’ve likely added the benefits of flash and cloud storage to your traditional infrastructure. This storage diversity delivers more choice in meeting performance, protection and cost requirements to support the different data needs of applications, but without a way to converge data across your different storage investments, it’s nearly impossible to align the right data to the right storage at the right time. Data virtualization is a software-defined solution that finally unites different storage systems into a global pool of resources so that even data can be part of your SDDC architecture from on-premise and into the cloud.

In Tuesday’s webinar, Jason will provide insight on how the principle of Software-Defined Everything supports the business agility needs of today’s enterprises. He will also discuss the software-defined approach to championing agility by automatically aligning storage resources to evolving data demands through data virtualization and orchestration, even as business needs change.

Following Jason’s talk, Primary Data Senior Systems Engineer Brett Arnott will cover how data orchestration ensures that data is automatically aligned to the right storage resource to deliver breakthrough agility and efficiency. Attendees will learn how data virtualization and orchestration helps enterprises not only develop a roadmap for their transition to software-defined storage and data, but also execute the move to automated, Objective-driven storage efficiency.

Transform Your Mainframe with Microsoft Azure

Moving mainframe application data to cloud data warehouses helps to enhance downstream analytics, business insights and next wave technologies such as machine learning. However, integrating mainframe data to cloud data warehouses often need tedious data transformations and highly skilled resources. Learn how the Syncsort Connect product family is helping businesses transform their mainframe to Microsoft Azure ecosystem. Key takeaways from this webinar are:

• How Syncsort Connect builds links between the mainframe and the Microsoft Azure ecosystem

• Value gained by taking mainframe data and bringing it into the Microsoft Azure ecosystem

• The importance of mainframe data when it comes to building out new data driven services and applications in Microsoft Azure

Transform 2014: Kofax Altosoft™ Insight - Deep Dive

Take an in-depth look at Kofax Altosoft Insight by watching an analytics solution built from scratch, everything from defining data connections to multiple data sources to building dashboards and reports.

Kofax Analytics presentation

Learn about how FLCC can assist in implementation of Kofax Analytics. Previously implemented for Wells Fargo's 200M page capture system.

Data center convergence

Unifying the management of a data center’s software and hardware components can help organizations deliver the technology infrastructures necessary to capitalize on the promises of cloud computing, big data, and the Internet of Things (IoT).

Zero Downtime, Zero Touch Stretch Clusters from Software-Defined Storage

Business continuity, especially across data centers in nearby locations often depends on complicated scripts, manual intervention and numerous checklists. Those error-prone processes are exponentially more difficult when the data storage equipment differs between sites.

Such difficulties force many organizations to settle for partial disaster recovery measures, conceding data loss and hours of downtime during occasional facility outages.

In this webcast and live demo, you’ll learn about:

• Software-defined storage services capable of continuously mirroring data in

real-time between unlike storage devices.

• Non-disruptive failover between stretched cluster requiring zero touch.

• Rapid restoration of normal conditions when the facilities come back up.

EMEA TechTalk – The NetApp Flash Optimized Portfolio

EMEA TechTalk – October 7th, 2014 - Learn how NetApp Flash Optimized Storage improves application performance, reduces storage capacity, costs and complexity in the data centre.

OpenStack at the speed of business with SolidFire & Red Hat

When it comes to OpenStack® and the enterprise, it’s critical that you can rapidly deploy a plug-and-play solution that delivers mixed workload capabilities on a shared infrastructure. Join Red Hat and SolidFire to see how Agile Infrastructure for OpenStack can help your cloud move at the speed of business.

Software-Defined Data Center Case Study – Financial Institution and VMware

In this case study, a large financial institution engaged the VMware software-defined data center team to create a three-to-five year forward-looking strategy document for its IT department. The overriding business driver for the institution was the need for a drastic reduction in IT OpEx Costs, at least a 50% OpEx annualized cost reduction over a three-year period. This presentation explains how VMware Accelerate Advisory Services established the necessary strategy, including a look at the “cloud reference architecture,” which addressed the: application plane, control plane, infrastructure layer, and management plan.

Slides: Get Breakthrough Efficiency in Virtual and Private Cloud Environments

Slides from the on-demand webcast (showcasing customer Logicalis.) Learn how NetApp® clustered Data ONTAP® 8.2 enables infrastructure and operational efficiencies with the right shared virtualized infrastructure platform that allow IT to store more data using less storage, and simplify and automate service management across virtual and private cloud environments.

Core Concept: Software Defined Everything

Software Defined anything (SDx) is a movement toward promoting a greater role for software systems in controlling different kinds of hardware - more specifically, making software more "in command" of multi-piece hardware systems and allowing for software control of a greater range of devices.

Software Defined Everything (SDx) includes

Software Defined Networks (SDN)

Software Defined Computing (SDC)

Software Defined Storage (SDS)

Software Defined Data Centers (SDDC)

Disaster Recovery: Understanding Trend, Methodology, Solution, and Standard

Disaster Recovery (DR)

Provides the technical ability to maintain critical services in the event of any unplanned incident that threatens these services or the technical infrastructure required to maintain them.

How to Avoid Disasters via Software-Defined Storage Replication & Site Recovery

Shifting weather patterns across the globe force us to re-evaluate data protection practices in locations we once thought immune from hurricanes, flooding and other natural disasters.

Offsite data replication combined with advanced site recovery methods should top your list.

In this webcast and live demo, you’ll learn about:

• Software-defined storage services that continuously replicate data, containers and virtual machine images over long distances

• Differences between secondary sites you own or rent vs. virtual destinations in public Clouds

• Techniques that help you test and fine tune recovery measures without disrupting production workloads

• Transferring responsibilities to the remote site

• Rapid restoration of normal operations at the primary facilities when conditions permit

10 Good Reasons: NetApp OnCommand Insight

Here are 10 things you should know about OnCommand Insight.

NetApp FlashAdvantage 3-4-5

Discover how you can make the all-flash data center a reality with the FlashAdvantage 3-4-5.

3X GUARANTEED PERFORMANCE

Increase performance by at least 3x with NetApp all-flash storage.

4:1 GUARANTEED REDUCTION

Increase your

effective storage capacity by at least 4x with NetApp all-flash storage. Guaranteed.

5 WAYS TO GET STARTED

NetApp all-flash, with our industry-leading capacity reduction technology, lowers your TCO. Now you’ve got the perfect workload consolidation platform for all of your infrastructure needs. Make the move to the all-flash data center today.

Software-Defined Storage: Data ONTAP

Today, CIOs are moving from being builders of apps and operators of data centers to becoming brokers of information services to the business. They're embracing new technologies and new service models that allow them to make IT faster, cheaper, and smarter, and make their companies more responsive and more competitive. Joel Kaufman, Senior Manager, VMware Technical Marketing at NetApp, explains how NetApp's clustered Data ONTAP fits into the software-defined storage discussion.

Slides: Maintain 24/7 Availability for Your Enterprise Applications Environment

Slides from the on-demand webcast (showcasing customer Bigelow Lab.) Learn how NetApp clustered Data ONTAP enables nondisruptive operations and eliminates IT downtime with a scalable, unified clustered infrastructure for business-critical applications such as Oracle database, SAP, and Microsoft® applications.

NetApp Clustered Data ONTAP Operating System and OnCommand Insight - Deliver

How NetApp IT uses clustered Data ONTAP and OCI to deliver storage as a service.

Learn more at www.NetAppIT.com

Whitepaper - Choosing the right cloud provider for your business

As cloud computing becomes an increasingly important part of any IT organization’s delivery model, assessing and selecting the right cloud provider also becomes one of the most strategic decisions that business leaders undertake. The accumulation of the necessary data to base cloud buying decisions is often achieved in production, or reproduction models mainly as paid customer engagements or trial engagements – which often occurs AFTER the major decisions have been made in the sales process.

This white paper will deliver data that provides valuable information based on real compute scenarios to assist buyers of cloud services in understanding how their workloads might perform and what costs are associated with those environments across multiple cloud computing platforms BEFORE they invest in the selection of a cloud computing provider.

Uncovering New Opportunities With HP Public Cloud - RightScale Compute 2013

Speaker: Dan Baigent - Sr. Director, HP Cloud Services

HP’s Converged Cloud strategy promises a revolution in how customers deliver and deploy applications in the cloud leveraging open standards like OpenStack and a rich ecosystem of partners like RightScale. In this session you will become versed in how HP’s public cloud and its ecosystem address a variety of customer needs/use cases.

More Related Content

What's hot

Data center convergence

Unifying the management of a data center’s software and hardware components can help organizations deliver the technology infrastructures necessary to capitalize on the promises of cloud computing, big data, and the Internet of Things (IoT).

Zero Downtime, Zero Touch Stretch Clusters from Software-Defined Storage

Business continuity, especially across data centers in nearby locations often depends on complicated scripts, manual intervention and numerous checklists. Those error-prone processes are exponentially more difficult when the data storage equipment differs between sites.

Such difficulties force many organizations to settle for partial disaster recovery measures, conceding data loss and hours of downtime during occasional facility outages.

In this webcast and live demo, you’ll learn about:

• Software-defined storage services capable of continuously mirroring data in

real-time between unlike storage devices.

• Non-disruptive failover between stretched cluster requiring zero touch.

• Rapid restoration of normal conditions when the facilities come back up.

EMEA TechTalk – The NetApp Flash Optimized Portfolio

EMEA TechTalk – October 7th, 2014 - Learn how NetApp Flash Optimized Storage improves application performance, reduces storage capacity, costs and complexity in the data centre.

OpenStack at the speed of business with SolidFire & Red Hat

When it comes to OpenStack® and the enterprise, it’s critical that you can rapidly deploy a plug-and-play solution that delivers mixed workload capabilities on a shared infrastructure. Join Red Hat and SolidFire to see how Agile Infrastructure for OpenStack can help your cloud move at the speed of business.

Software-Defined Data Center Case Study – Financial Institution and VMware

In this case study, a large financial institution engaged the VMware software-defined data center team to create a three-to-five year forward-looking strategy document for its IT department. The overriding business driver for the institution was the need for a drastic reduction in IT OpEx Costs, at least a 50% OpEx annualized cost reduction over a three-year period. This presentation explains how VMware Accelerate Advisory Services established the necessary strategy, including a look at the “cloud reference architecture,” which addressed the: application plane, control plane, infrastructure layer, and management plan.

Slides: Get Breakthrough Efficiency in Virtual and Private Cloud Environments

Slides from the on-demand webcast (showcasing customer Logicalis.) Learn how NetApp® clustered Data ONTAP® 8.2 enables infrastructure and operational efficiencies with the right shared virtualized infrastructure platform that allow IT to store more data using less storage, and simplify and automate service management across virtual and private cloud environments.

Core Concept: Software Defined Everything

Software Defined anything (SDx) is a movement toward promoting a greater role for software systems in controlling different kinds of hardware - more specifically, making software more "in command" of multi-piece hardware systems and allowing for software control of a greater range of devices.

Software Defined Everything (SDx) includes

Software Defined Networks (SDN)

Software Defined Computing (SDC)

Software Defined Storage (SDS)

Software Defined Data Centers (SDDC)

Disaster Recovery: Understanding Trend, Methodology, Solution, and Standard

Disaster Recovery (DR)

Provides the technical ability to maintain critical services in the event of any unplanned incident that threatens these services or the technical infrastructure required to maintain them.

How to Avoid Disasters via Software-Defined Storage Replication & Site Recovery

Shifting weather patterns across the globe force us to re-evaluate data protection practices in locations we once thought immune from hurricanes, flooding and other natural disasters.

Offsite data replication combined with advanced site recovery methods should top your list.

In this webcast and live demo, you’ll learn about:

• Software-defined storage services that continuously replicate data, containers and virtual machine images over long distances

• Differences between secondary sites you own or rent vs. virtual destinations in public Clouds

• Techniques that help you test and fine tune recovery measures without disrupting production workloads

• Transferring responsibilities to the remote site

• Rapid restoration of normal operations at the primary facilities when conditions permit

10 Good Reasons: NetApp OnCommand Insight

Here are 10 things you should know about OnCommand Insight.

NetApp FlashAdvantage 3-4-5

Discover how you can make the all-flash data center a reality with the FlashAdvantage 3-4-5.

3X GUARANTEED PERFORMANCE

Increase performance by at least 3x with NetApp all-flash storage.

4:1 GUARANTEED REDUCTION

Increase your

effective storage capacity by at least 4x with NetApp all-flash storage. Guaranteed.

5 WAYS TO GET STARTED

NetApp all-flash, with our industry-leading capacity reduction technology, lowers your TCO. Now you’ve got the perfect workload consolidation platform for all of your infrastructure needs. Make the move to the all-flash data center today.

Software-Defined Storage: Data ONTAP

Today, CIOs are moving from being builders of apps and operators of data centers to becoming brokers of information services to the business. They're embracing new technologies and new service models that allow them to make IT faster, cheaper, and smarter, and make their companies more responsive and more competitive. Joel Kaufman, Senior Manager, VMware Technical Marketing at NetApp, explains how NetApp's clustered Data ONTAP fits into the software-defined storage discussion.

Slides: Maintain 24/7 Availability for Your Enterprise Applications Environment

Slides from the on-demand webcast (showcasing customer Bigelow Lab.) Learn how NetApp clustered Data ONTAP enables nondisruptive operations and eliminates IT downtime with a scalable, unified clustered infrastructure for business-critical applications such as Oracle database, SAP, and Microsoft® applications.

NetApp Clustered Data ONTAP Operating System and OnCommand Insight - Deliver

How NetApp IT uses clustered Data ONTAP and OCI to deliver storage as a service.

Learn more at www.NetAppIT.com

What's hot (20)

Zero Downtime, Zero Touch Stretch Clusters from Software-Defined Storage

Zero Downtime, Zero Touch Stretch Clusters from Software-Defined Storage

EMEA TechTalk – The NetApp Flash Optimized Portfolio

EMEA TechTalk – The NetApp Flash Optimized Portfolio

OpenStack at the speed of business with SolidFire & Red Hat

OpenStack at the speed of business with SolidFire & Red Hat

SplunkLive! Nutanix Session - Turnkey and scalable infrastructure for Splunk ...

SplunkLive! Nutanix Session - Turnkey and scalable infrastructure for Splunk ...

Software-Defined Data Center Case Study – Financial Institution and VMware

Software-Defined Data Center Case Study – Financial Institution and VMware

Slides: Get Breakthrough Efficiency in Virtual and Private Cloud Environments

Slides: Get Breakthrough Efficiency in Virtual and Private Cloud Environments

Disaster Recovery: Understanding Trend, Methodology, Solution, and Standard

Disaster Recovery: Understanding Trend, Methodology, Solution, and Standard

How to Avoid Disasters via Software-Defined Storage Replication & Site Recovery

How to Avoid Disasters via Software-Defined Storage Replication & Site Recovery

Slides: Maintain 24/7 Availability for Your Enterprise Applications Environment

Slides: Maintain 24/7 Availability for Your Enterprise Applications Environment

NetApp Clustered Data ONTAP Operating System and OnCommand Insight - Deliver

NetApp Clustered Data ONTAP Operating System and OnCommand Insight - Deliver

Similar to DPACK_Intro

Whitepaper - Choosing the right cloud provider for your business

As cloud computing becomes an increasingly important part of any IT organization’s delivery model, assessing and selecting the right cloud provider also becomes one of the most strategic decisions that business leaders undertake. The accumulation of the necessary data to base cloud buying decisions is often achieved in production, or reproduction models mainly as paid customer engagements or trial engagements – which often occurs AFTER the major decisions have been made in the sales process.

This white paper will deliver data that provides valuable information based on real compute scenarios to assist buyers of cloud services in understanding how their workloads might perform and what costs are associated with those environments across multiple cloud computing platforms BEFORE they invest in the selection of a cloud computing provider.

Uncovering New Opportunities With HP Public Cloud - RightScale Compute 2013

Speaker: Dan Baigent - Sr. Director, HP Cloud Services

HP’s Converged Cloud strategy promises a revolution in how customers deliver and deploy applications in the cloud leveraging open standards like OpenStack and a rich ecosystem of partners like RightScale. In this session you will become versed in how HP’s public cloud and its ecosystem address a variety of customer needs/use cases.

Why Cloud-Native Kafka Matters: 4 Reasons to Stop Managing it Yourself

With your most talented teams bogged down managing a massive Kafka deployment, it can be challenging to move the dial on projects that drive real value for your business. For example, launching your next major feature, fueling more best-in-breed services like AI/ML on your cloud provider platform, or developing your first use cases for real-time data movement across clouds. By shifting to a fully managed, cloud-native service for Kafka you can unlock your teams to work on the projects that make the best use of your data in motion.

In this webinar you will learn about:

• The increasing value of data in motion to your business

• Challenges and costs of self-managing a large-scale Kafka deployment

• Benefits of managed cloud services for non-core activities like data storage, data warehousing, and messaging

• Optimizing time usage for value-generating activity like new product launches

• Potential cost savings for your business with a cloud-native service for Kafka

Run more applications without expanding your datacenter

By upgrading from the legacy solution we tested to the new Intel processor-based Dell and VMware solution, you could do 18 times the work in the same amount of space. Imagine what that performance could mean to your business: Consolidate workloads from across your company, lower your power and cooling bills, and limit datacenter expansion in the future, all while maintaining a consistent user experience—the list of potential benefits is huge.

Try running DPACK, which can help you identify bottlenecks in your environment and inform you about your current performance needs. Then consider how the consolidation ratio we proved could be helpful for your company. The Intel processor-powered Dell PowerEdge R730 solution with VMware vSphere and Dell Storage SC4020, also powered by Intel, could be the right destination for your upgrade journey.

Conquering Disaster Recovery Challenges and Out-of-Control Data with the Hybr...

More and more companies are leveraging the cloud for disaster recovery. After all, the limitless compute resources of the cloud are perfectly suited for disaster recovery. Learn how to easily leverage the cloud for DR.

Cloud Computing

Basics of cloud computing including examples of SaaS, PaaS and Iaas. The advantages and disadvantages are reviewed as well as a plan to migrate to the cloud.

Cloud Computing for Small & Medium Businesses

I presented this topic at the Greater Binghamton Business Expo in Upstate New York. It is meant to shed light on utilizing Cloud Computing for Small and Medium size businesses. It should help decision makers consider Software-as-a-Service offerings for their business as a way to save on IT cost and to deliver on better efficiency for their organizations.

Data center terminology photostory

Read how IBM and NC State created a “cloud computing” model for provisioning technology that offered a quantum improvement in access, efficiency and convenience over traditional computer labs.

Cloud 101: The Basics of Cloud Computing

Curious about the cloud? We've got answers. Join HOSTING for an overview of cloud hosting and computing basics. From the history of the cloud to the projected future, we'll investigate the foundation of this $2.1 billion industry.

ACIC Rome & Veritas: High-Availability and Disaster Recovery Scenarios

A white paper to illustrate High-Availability and Disaster Recovery Scenarios and use-cases developed by Accenture and Veritas in the Accenture Cloud Innovation Center of Rome.

GxP Data Integrity for Cloud Apps – Part 1

GxP is a general abbreviation for the "Good Practice" quality guidelines and regulations.

The “G” stands for Good "x" stands for various fields, including the pharmaceutical, life sciences, agricultural, clinical, laboratory, manufacturing and food industries.

DataCore Executive Brief

10 REASONS TO ADOPT DATACORE SOFTWARE

Over 10,000 satisfied clients and more than 30,000 installations worldwide, clients in every industry sector and of every size,

testify to DataCore’s innovative spirit. It’s no wonder that we know precisely what it takes to deal with the challenges our clients face. We are there to assist you with a range of solutions aimed at dealing with increasing volumes of data and complex management of a disparate variety of infrastructures. This is why we are in the top ranking in the market of software-defined storage and hyper- converged infrastructure. Whether to boost the performance of mission-critical applications, increase efficiency, structure for enhanced availability, ensure high availability or business continuity - with DataCore you are always in control.

Netscaler for mobility and secure remote access

This session describes practical approaches to utilizing provisioning services for Citrix XenDesktop and Citrix XenApp, taken from actual customer deployments in the 25- to 500-device range. We will discuss how to use provisioning services correctly, including best practices for vDisks and cache placement. Other topics will include high availability and load balancing. Live demos will illustrate some of the best practices of a provisioning services deployment.

Similar to DPACK_Intro (20)

Whitepaper - Choosing the right cloud provider for your business

Uncovering New Opportunities With HP Public Cloud - RightScale Compute 2013

Why Cloud-Native Kafka Matters: 4 Reasons to Stop Managing it Yourself

Run more applications without expanding your datacenter

Conquering Disaster Recovery Challenges and Out-of-Control Data with the Hybr...

ACIC Rome & Veritas: High-Availability and Disaster Recovery Scenarios

Continuous Integration and Continuous Deployment Pipeline with Apprenda on ON...

DPACK_Intro

- 1. © 2016 Dell

  Right-size your environment with insight from DPACK

  All too often, consumers of enterprise hardware or cloud space overbuy because they lack insight into their actual workload utilization. Your requirements are unique to you. DPACK gives you a safe and simple way to record, visualize, and collaborate with others to enable data-driven decisions.

  DPACK, or Dell’s Performance Analysis Collection Kit, is the de facto standard for recording and communicating platform- and vendor-agnostic requirements. Using a secure, cloud-based analytics engine, DPACK processes hundreds of thousands of performance statistics and delivers a graphical representation of your compute utilization.

  DPACK: Dell Performance Analysis Collection Kit. Insight on demand: a platform- and vendor-agnostic method of measuring server workload utilization.

  Easily and accurately discover insight for these 5 categories:
  - Networking
  - CPU
  - Memory
  - Storage IO
  - Storage Capacity

  How can DPACK help IT professionals?
  - Map previous-generation hardware to modern platforms
  - Create simulations of combining workloads into a converged environment or cloud
  - Provide clear insight into workloads during a support case
  - Document showback or chargeback utilization for business units

  “We consistently see businesses coming to us stating requirements that are sometimes 2-3 times what they really need. Without DPACK there is just no good way to know the reality of what’s needed. Honestly, running DPACK is a benefit for me and for my customers. It just takes away all the guesswork.” – Justin Giardina, CTO of iLand.com Cloud Hosting Services

  Screenshot of DPACK’s Online Viewer

- 2. © 2016 Dell

  How DPACK works

  DPACK’s Collector streams performance data to the Online Analytics Engine, which crunches and blends workload characteristics from the most popular operating systems and virtualization environments. The results are then delivered as an easy-to-read, browsable summary you can use to drive decisions.

  DPACK works in 3 easy steps:

  1. Collect: Use DPACK’s Collector to begin a point-in-time capture of one or more servers. The Collector can remotely record multiple servers running on different platforms for anywhere from 4 hours to 7 days. It is agentless, runs only in memory, and makes no modifications to servers. It gathers data with near-zero overhead using common administrative protocols that generally require no “Change Control” or firewall modifications.
  2. View: The data is streamed or uploaded and can be explored visually in near real time through the online Viewer. The Viewer is an HTML5 navigation console that supports phones, tablets, and browsers and gives quick access to the graphs and calculations supplied by the DPACK analytics engine.
  3. Collaborate: Most businesses make decisions in teams, with consultants, or in coordination with manufacturers or their partners. The Viewer platform allows secure sharing of performance insight among everyone involved in a decision cycle or in resolving a support case, as long as they also have a DPACK account. The good news is that DPACK accounts are free, and you can get one today: http://dpack2.dell.com/register/today

  Below is a sample of DPACK’s Online Viewer. The information is broken down into three major categories: server navigation, analytics summary, and insight graphs.
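The right-sizing argument in these slides comes down to comparing the capacity that was provisioned with the utilization actually observed during a collection window. The sketch below is a generic illustration of that comparison under stated assumptions. It is not DPACK’s analytics engine or any DPACK API. The sample values, the choice of a nearest-rank 95th percentile as the sizing target, and the helper names `percentile` and `sizing_summary` are all hypothetical.

```python
import math
from statistics import mean

# Hypothetical helpers for illustration only; they are not part of DPACK.


def percentile(samples, pct):
    """Return the nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Nearest-rank method: smallest value with at least pct% of samples at or below it.
    # Multiply before dividing to limit floating-point error at exact ranks.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def sizing_summary(samples, provisioned, pct=95):
    """Compare provisioned capacity with observed demand in the same unit (e.g. CPU cores)."""
    target = percentile(samples, pct)
    return {
        "average": mean(samples),
        "peak": max(samples),
        f"p{pct}": target,
        # Ratios well above 1.0 suggest the environment is oversized for this workload.
        "provisioned_to_target": provisioned / target,
    }


if __name__ == "__main__":
    # Made-up hourly CPU-core usage samples from an assumed collection window.
    cores_used = [6, 7, 8, 9, 10, 9, 8, 12, 11, 10]
    print(sizing_summary(cores_used, provisioned=32))
```

With these made-up samples, the 95th percentile is 12 cores against 32 provisioned, a ratio of roughly 2.7. Sizing to a high percentile rather than the average is one common way to leave headroom for peaks. Other targets, such as the absolute peak or a different percentile, are equally valid choices depending on the workload.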