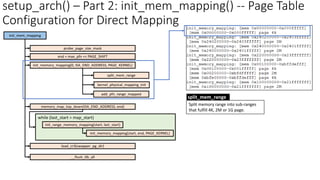

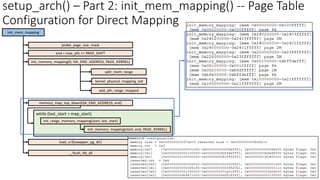

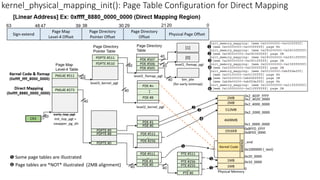

This document explores Linux kernel initialization from the perspective of page table configuration, using kernel 5.11 on x86_64. It follows the boot flow of the decompressed vmlinux through setup_arch() and start_kernel(), and covers the x86_64 virtual memory layout, fixed-mapped addresses, early I/O remapping (early_ioremap), and the vsyscall and vDSO mechanisms. It also examines per-CPU variables, the CPU entry area used by page table isolation (PTI), how mm and active_mm are handled across context switches, and how secondary CPUs configure CR3 when they are brought up.

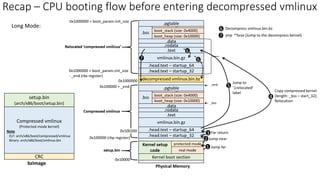

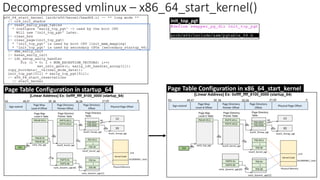

![Recap - Compressed vmlinux: Page table before entering decompressed

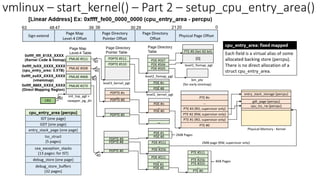

vmlinux

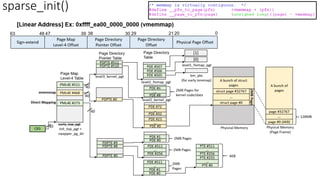

Sign-extend

Page Map

Level-4 Offset

Page Directory

Pointer Offset

Page Directory

Offset

Physical Page Offset

0

30 21

39 20

38 29

47

48

63

PML4E #0

PDPTE #3

Data

Page Map

Level-4 Table

Page Directory

Pointer Table

Page Directory

Table

40

9 9 9

Linear Address

CR3

PDPTE #2

PDPTE #1

PDPTE #0

PDE #1535

PDE #1024

.

.

PDE #2047

PDE #1536

.

.

PDE #511

PDE #0

.

.

PDE #1023

PDE #512

.

.

2MBbyte

Physical

Page

40

40

31

21

[Paging] Identity mapping for 0-4GB memory space](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-4-320.jpg)
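The index split in the recap slide can be checked with a little arithmetic. The Python sketch below is an illustration, not kernel code: it extracts the PML4, PDPT and PD indices and the 21-bit page offset from a linear address, and numbers the PDEs 0 to 2047 across the four page directory tables the way the diagram does. For any address below 4GB the PML4 index is 0, and the PDE number times 2MB plus the offset gives back the same address, which is what an identity mapping means.

```python
# Illustration only: split an x86_64 linear address the way 4-level paging
# does when a PDE maps a 2MB page (as in the 0-4GB identity map above).

def split_2mb(addr: int) -> dict:
    """Return the table indices and the offset inside the 2MB page."""
    return {
        "pml4": (addr >> 39) & 0x1FF,   # bits 47:39 select the PML4E
        "pdpt": (addr >> 30) & 0x1FF,   # bits 38:30 select the PDPTE
        "pd": (addr >> 21) & 0x1FF,     # bits 29:21 select the PDE
        "offset": addr & 0x1FFFFF,      # bits 20:0 are the offset in the 2MB page
    }


def flat_pde_number(addr: int) -> int:
    """Number the PDEs 0..2047 across the four page directory tables."""
    idx = split_2mb(addr)
    return idx["pdpt"] * 512 + idx["pd"]


def identity_phys(addr: int) -> int:
    """Physical address reached when PDE #n maps physical [n * 2MB, (n + 1) * 2MB)."""
    return (flat_pde_number(addr) << 21) | split_2mb(addr)["offset"]


if __name__ == "__main__":
    for a in (0x0, 0x4000_0000, 0xC000_0000, 0xFFFF_FFFF):
        print(f"{a:#011x} -> PDE #{flat_pde_number(a)}, {split_2mb(a)}")
```

The loop prints PDE #0, #512, #1536 and #2047, matching the first and last entries of the four page directory tables in the diagram.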

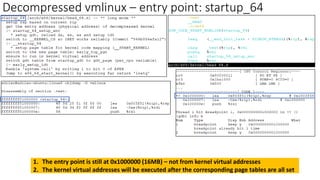

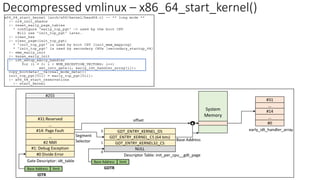

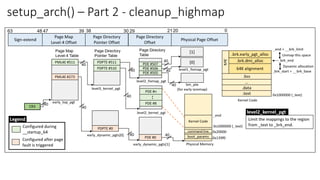

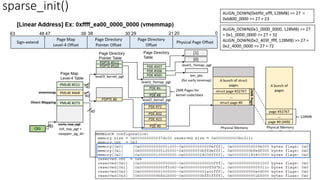

![64-bit virtual address layout (x86_64, 4-level paging, default configuration). User space: 0 to 0x0000_7FFF_FFFF_FFFF (128TB), followed by an empty space. Kernel space from 0xFFFF_8000_0000_0000 (128TB): guard hole (8TB); LDT remap for PTI (0.5TB); page frame direct mapping (64TB) of physical memory (ZONE_DMA from 0 to 16MB, ZONE_DMA32, ZONE_NORMAL) at page_offset_base = 0xFFFF_8880_0000_0000; unused hole (0.5TB); vmalloc/ioremap (32TB) at vmalloc_base = 0xFFFF_C900_0000_0000; unused hole (1TB); virtual memory map (1TB, stores the struct page frame descriptors) at vmemmap_base = 0xFFFF_EA00_0000_0000; …; kernel text mapping from physical address 0 (512MB or 1GB) at __START_KERNEL_map = 0xFFFF_FFFF_8000_0000, with kernel code [.text, .data…] at __START_KERNEL = 0xFFFF_FFFF_8100_0000; modules (1.5GB or 1GB) at MODULES_VADDR; fix-mapped address space from FIXADDR_START to FIXADDR_TOP = 0xFFFF_FFFF_FF7F_F000 (expanded to 4MB: 05ab1d8a4b36); unused hole (2MB) at 0xFFFF_FFFF_FFE0_0000 up to 0xFFFF_FFFF_FFFF_FFFF. page_offset_base, vmalloc_base and vmemmap_base can be dynamically configured by KASLR (Kernel Address Space Layout Randomization, "arch/x86/mm/kaslr.c"). Reference: Documentation/x86/x86_64/mm.rst](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-5-320.jpg)
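The default bases in the layout figure follow directly from the region sizes. This Python sketch (an illustration only) starts at 0xFFFF_8000_0000_0000, accumulates the sizes listed in the figure, and lets you check that the running sum lands on page_offset_base, vmalloc_base and vmemmap_base. It assumes the non-KASLR defaults; with KASLR the three bases move and these exact values no longer hold. The direct-map helper shows the "physical address plus page_offset_base" rule that the page frame direct mapping implies.

```python
TB = 1 << 40

# Default (non-KASLR) values taken from the layout figure.
KERNEL_SPACE_START = 0xFFFF_8000_0000_0000
PAGE_OFFSET_BASE = 0xFFFF_8880_0000_0000
VMALLOC_BASE = 0xFFFF_C900_0000_0000
VMEMMAP_BASE = 0xFFFF_EA00_0000_0000

# Regions in ascending address order with their sizes (from the figure).
REGIONS = [
    ("guard hole", 8 * TB),
    ("LDT remap for PTI", TB // 2),
    ("direct mapping", 64 * TB),
    ("unused hole", TB // 2),
    ("vmalloc/ioremap", 32 * TB),
    ("unused hole", TB),
    ("vmemmap", TB),
]


def layout(start: int = KERNEL_SPACE_START):
    """Return (name, start, end) tuples by accumulating the region sizes."""
    out = []
    for name, size in REGIONS:
        out.append((name, start, start + size))
        start += size
    return out


def phys_to_direct_map(phys: int, base: int = PAGE_OFFSET_BASE) -> int:
    """Direct mapping: kernel virtual address = physical address + page_offset_base."""
    return base + phys


if __name__ == "__main__":
    for name, s, e in layout():
        print(f"{name:20s} {s:#x} - {e:#x}")
```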

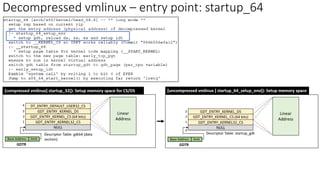

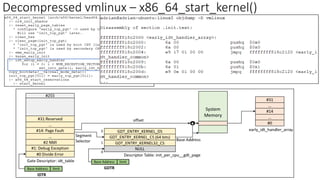

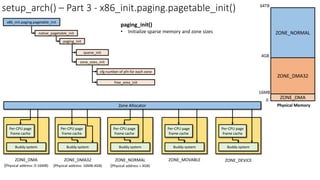

![setup_arch() – Part 1. memblock: boot time memory management. Memblock: • Memory allocation during the boot stage • Set up in setup_arch() • Torn down in mem_init(), which releases the free pages to the buddy allocator. [memblock] Reserve page 0 • Security: mitigate the L1TF (L1 Terminal Fault) vulnerability](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-22-320.jpg)
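To make the memblock life cycle concrete, here is a deliberately tiny Python model. `ToyMemblock` and its methods are hypothetical and only mimic the idea (a list of RAM ranges, a list of reserved ranges, boot-time allocations, and a final hand-over of the unreserved pages to the buddy allocator); they are not memblock's real API or allocation policy. The demo reserves page 0, as the slide describes for L1TF.

```python
# Toy model for illustration; ToyMemblock and its methods are hypothetical
# and only mimic the idea of memblock, not its real API or algorithm.
PAGE_SIZE = 4096


class ToyMemblock:
    def __init__(self):
        self.memory = []    # (base, size) ranges of usable RAM
        self.reserved = []  # (base, size) ranges that are in use

    def add(self, base, size):
        self.memory.append((base, size))

    def reserve(self, base, size):
        self.reserved.append((base, size))

    def _is_reserved(self, base, size):
        return any(base < rb + rs and rb < base + size for rb, rs in self.reserved)

    def alloc(self, size, align=PAGE_SIZE):
        """Top-down search: take the highest aligned free range that fits."""
        for mbase, msize in sorted(self.memory, reverse=True):
            cand = (mbase + msize - size) // align * align
            while cand >= mbase:
                if not self._is_reserved(cand, size):
                    self.reserve(cand, size)
                    return cand
                cand -= align
        raise MemoryError("no free range")

    def free_pages(self):
        """Pages that would be released to the buddy allocator in mem_init()."""
        return [
            addr
            for mbase, msize in self.memory
            for addr in range(mbase, mbase + msize, PAGE_SIZE)
            if not self._is_reserved(addr, PAGE_SIZE)
        ]


if __name__ == "__main__":
    mb = ToyMemblock()
    mb.add(0, 16 * PAGE_SIZE)
    mb.reserve(0, PAGE_SIZE)          # page 0, kept away from the page allocator
    print(hex(mb.alloc(2 * PAGE_SIZE)), len(mb.free_pages()))
```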

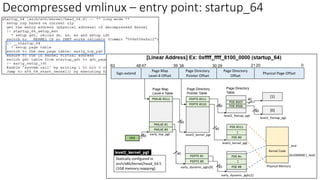

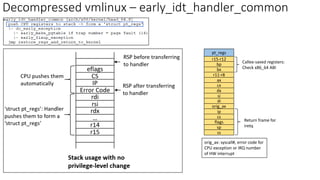

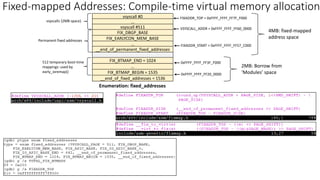

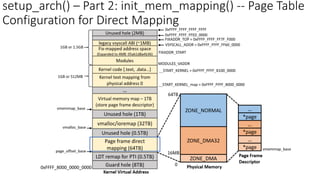

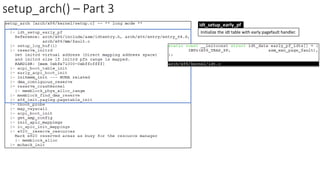

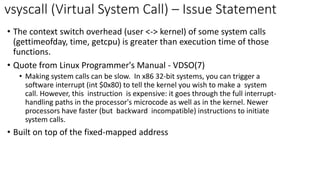

![Early ioremap: based on fixed-mapped addresses. Fixed-mapped addresses: PDE #505 at 0xFFFF_FFFF_FF20_0000, PDE #506 at 0xFFFF_FFFF_FF40_0000, PDE #507 at 0xFFFF_FFFF_FF60_0000. early_ioremap_setup() sets slot_virt[0] to slot_virt[7] within fixmap indices FIX_BTMAP_BEGIN = 1535 down to FIX_BTMAP_END = 1024, giving slot_virt[0] = 0xFFFF_FFFF_FF20_0000 and slot_virt[7] = 0xFFFF_FFFF_FF3C_0000. Early ioremap: • Maps/unmaps I/O physical addresses to virtual addresses before the ioremap mechanism is ready • early_ioremap() & early_iounmap()](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-26-320.jpg)
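The slot addresses in the early ioremap slide can be reproduced from the fixmap arithmetic. In this Python sketch, FIXADDR_TOP and FIX_BTMAP_END come from the figures; NR_FIX_BTMAPS = 64 and FIX_BTMAPS_SLOTS = 8 are the values from the x86 fixmap header, stated here as an assumption about the 5.11 configuration. It also confirms that all eight slots fall under a single 2MB PDE (#505), which is why one page table is enough to back early_ioremap.

```python
# Constants: FIXADDR_TOP and FIX_BTMAP_END come from the figures above;
# NR_FIX_BTMAPS and FIX_BTMAPS_SLOTS mirror the x86 fixmap header values.
PAGE_SHIFT = 12
FIXADDR_TOP = 0xFFFF_FFFF_FF7F_F000
NR_FIX_BTMAPS = 64        # pages per early_ioremap slot
FIX_BTMAPS_SLOTS = 8      # number of slots
FIX_BTMAP_END = 1024
FIX_BTMAP_BEGIN = FIX_BTMAP_END + NR_FIX_BTMAPS * FIX_BTMAPS_SLOTS - 1  # 1535


def fix_to_virt(idx: int) -> int:
    """Fixmap index -> virtual address; a higher index means a lower address."""
    return FIXADDR_TOP - (idx << PAGE_SHIFT)


def slot_virt(slot: int) -> int:
    """Start address of early_ioremap slot `slot`, as early_ioremap_setup() computes it."""
    return fix_to_virt(FIX_BTMAP_BEGIN - NR_FIX_BTMAPS * slot)


def pde_index(vaddr: int) -> int:
    """Bits 29:21: which PDE of its page directory maps this address."""
    return (vaddr >> 21) & 0x1FF


if __name__ == "__main__":
    for i in range(FIX_BTMAPS_SLOTS):
        print(f"slot_virt[{i}] = {slot_virt(i):#x} (PDE #{pde_index(slot_virt(i))})")
```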

![setup_arch() – Part 1. [Linux x86 Boot Protocol] setup_data: 64-bit physical pointer to a linked list of struct setup_data](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-28-320.jpg)
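Walking the setup_data list is a plain singly linked list traversal over physical memory. The boot protocol defines each node as `struct setup_data { __u64 next; __u32 type; __u32 len; __u8 data[]; }`; in 5.11, parse_setup_data() starts from boot_params.hdr.setup_data and temporarily maps each node with early_memremap(), since the pointers are physical. This Python sketch replaces physical memory with a bytearray; `put_node`, the node addresses and the type values 1 and 2 are hypothetical.

```python
import struct

# struct setup_data { __u64 next; __u32 type; __u32 len; __u8 data[]; };
SETUP_DATA_HDR = struct.Struct("<QII")  # little-endian next, type, len (16 bytes)


def put_node(mem: bytearray, addr: int, nxt: int, typ: int, payload: bytes) -> None:
    """Hypothetical helper: write one node into the simulated physical memory."""
    SETUP_DATA_HDR.pack_into(mem, addr, nxt, typ, len(payload))
    start = addr + SETUP_DATA_HDR.size
    mem[start:start + len(payload)] = payload


def walk_setup_data(mem: bytes, pa_data: int):
    """Yield (address, type, payload) for each node until next == 0."""
    seen = set()
    while pa_data:
        if pa_data in seen:
            raise ValueError("setup_data list contains a cycle")
        seen.add(pa_data)
        nxt, typ, length = SETUP_DATA_HDR.unpack_from(mem, pa_data)
        start = pa_data + SETUP_DATA_HDR.size
        yield pa_data, typ, bytes(mem[start:start + length])
        pa_data = nxt


if __name__ == "__main__":
    mem = bytearray(0x200)
    put_node(mem, 0x40, 0x100, 1, b"\x01\x02\x03\x04")
    put_node(mem, 0x100, 0, 2, b"dtb")
    for node in walk_setup_data(mem, 0x40):
        print(node)
```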

![vsyscall – Implementation (Emulate). [PTE] Bit 63: Execute Disable (XD) • If IA32_EFER.NXE = 1 and XD = 1, instruction fetches are not allowed from the page mapped by this PTE; an attempted fetch generates a #PF exception.](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-46-320.jpg)
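The XD rule on the slide is a small decision table. This Python sketch (an illustration of the Intel SDM rule, not kernel code) reports what an instruction fetch from a 4KB page would do given the PTE and IA32_EFER.NXE. It also encodes a detail the slide does not spell out: with NXE = 0, bit 63 is a reserved bit, so setting it causes a reserved-bit page fault rather than being ignored. It only looks at the PTE; XD set in a higher-level entry, the U/S bit and SMEP are ignored for brevity.

```python
# Illustration of the SDM rule quoted above; not kernel code. Only the PTE is
# checked: XD in higher-level entries, U/S and SMEP are ignored here.
PRESENT = 1 << 0
XD = 1 << 63


def fetch_result(pte: int, efer_nxe: bool) -> str:
    """Outcome of an instruction fetch from the 4KB page mapped by `pte`."""
    if not pte & PRESENT:
        return "#PF: not present"
    if pte & XD:
        if efer_nxe:
            return "#PF: execute-disable"   # XD = 1 and NXE = 1
        return "#PF: reserved bit"          # with NXE = 0, bit 63 is reserved
    return "ok"


if __name__ == "__main__":
    print(fetch_result(XD | PRESENT, efer_nxe=True))
```

In emulate mode this fault is the point: a call into the vsyscall page traps, and the page-fault path can then emulate the requested vsyscall.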

![Replacement of vsyscall: vDSO (virtual Dynamic Shared Object). • vsyscall limitation: security concern, since it sits at a fixed virtual address (0xFFFF_FFFF_FF60_0000) • vDSO: benefits from ASLR (Address Space Layout Randomization), which can be enabled/disabled via /proc/sys/kernel/randomize_va_space ([Enable] echo 1 > /proc/sys/kernel/randomize_va_space; [Disable] echo 0 > /proc/sys/kernel/randomize_va_space) • Mapped at a user space address • Security enhancement](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-51-320.jpg)
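A quick way to see the vDSO move under ASLR is to look at the `[vdso]` line of /proc/self/maps. The Python sketch below parses that line and reads the current randomize_va_space value; the value meanings follow the kernel's sysctl documentation (2, the usual default, additionally randomizes the heap). The parser is tested on a sample line; `current_vdso()` needs a Linux system and is not called automatically.

```python
# Meanings per the kernel's sysctl documentation for randomize_va_space.
RANDOMIZE_VA_SPACE = {
    0: "randomization off",
    1: "randomize mmap base, stack and vDSO page",
    2: "as 1, plus heap (brk) randomization",
}


def vdso_range(maps_text: str):
    """Return (start, end) of the [vdso] line in /proc/<pid>/maps text, or None."""
    for line in maps_text.splitlines():
        fields = line.split()
        if fields and fields[-1] == "[vdso]":
            start, end = fields[0].split("-")
            return int(start, 16), int(end, 16)
    return None


def current_vdso():
    """Linux only: the live setting and this process's vDSO range."""
    with open("/proc/sys/kernel/randomize_va_space") as f:
        setting = int(f.read())
    with open("/proc/self/maps") as f:
        return RANDOMIZE_VA_SPACE.get(setting, "unknown"), vdso_range(f.read())
```

Calling `current_vdso()` from two separate processes should normally show different vDSO addresses when the setting is 1 or 2, and the same address when it is 0.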

![[Recap] Page Table Configuration after finishing setup_arch()](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-53-320.jpg)

![[Recap] Page Table Configuration after finishing setup_arch()](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-54-320.jpg)

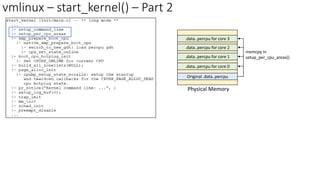

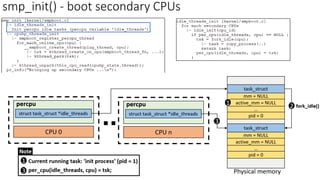

![percpu variable access option #1: __per_cpu_offset. APIs (include/linux/percpu-defs.h): per_cpu_ptr(ptr, cpu), via __per_cpu_offset. In setup_per_cpu_areas(), the original .data..percpu is copied (memcpy with source address __per_cpu_load) into a separate .data..percpu copy in physical memory for each of cores 0 to 3; __per_cpu_offset[0] to __per_cpu_offset[3] locate the copies.](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-58-320.jpg)

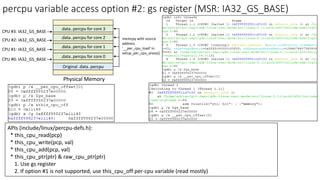

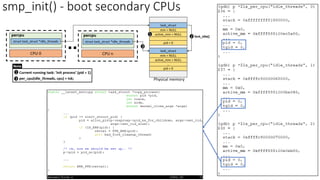

![percpu variable access option #1: __per_cpu_offset. The .data..percpu section runs from __per_cpu_start = 0 to __per_cpu_end and contains, in order, *(.data..percpu..first), *(.data..percpu..page_aligned), *(.data..percpu..read_mostly), *(.data..percpu) and *(.data..percpu..shared_aligned); its contents are loaded at __per_cpu_load (a kernel virtual address). [Example] gdt_page = 0xb000. In setup_per_cpu_areas(), the original .data..percpu is copied (memcpy with source address __per_cpu_load) into a per-core copy for cores 0 to 3, located by __per_cpu_offset[0] to __per_cpu_offset[3].](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-59-320.jpg)
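The __per_cpu_offset scheme reduces to "copy a template, remember where each copy went, add that offset on access". This Python toy model assumes x86_64 SMP, where .data..percpu is linked at 0 (__per_cpu_start = 0), so a per-CPU symbol's link-time value is simply its offset, as with gdt_page = 0xb000 on the slide. The memory array, the area addresses and the one-byte "variable" at offset 0x10 are hypothetical; in the kernel each offset is the area's address minus __per_cpu_start.

```python
# Toy model for illustration (not kernel code).
PER_CPU_START = 0   # __per_cpu_start: .data..percpu is linked at address 0
GDT_PAGE = 0xB000   # link-time address of gdt_page, from the slide's example


def setup_per_cpu_areas(memory: bytearray, template: bytes, area_bases):
    """Copy the template into each CPU's area and return __per_cpu_offset[]."""
    offsets = []
    for base in area_bases:
        memory[base:base + len(template)] = template  # memcpy from __per_cpu_load
        offsets.append(base - PER_CPU_START)
    return offsets


def per_cpu_ptr(sym_addr: int, cpu: int, offsets) -> int:
    """per_cpu_ptr(): link-time address plus that CPU's offset."""
    return sym_addr + offsets[cpu]


if __name__ == "__main__":
    hypothetical_offsets = [0x20_0000 * (cpu + 1) for cpu in range(4)]
    for cpu in range(4):
        print(cpu, hex(per_cpu_ptr(GDT_PAGE, cpu, hypothetical_offsets)))
```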

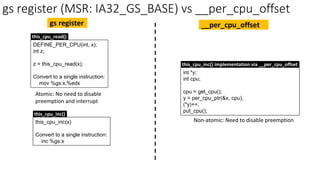

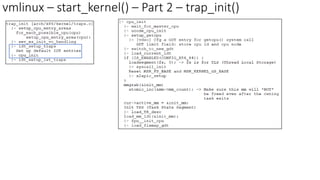

![vmlinux – start_kernel() – Part 2 – trap_init(): CPU Entry Area (percpu). • Page Table Isolation (PTI) ◦ Mitigates Meltdown ◦ Isolates user space and kernel space memory ◦ When the kernel is entered via syscalls, interrupts or exceptions, the page tables are switched to the full "kernel" copy. ▪ The entry/exit functions and the IDT (Interrupt Descriptor Table) must also be mapped in the userspace page table. PTI: Concept: without PTI, a single page table maps both user space and kernel space in user mode and kernel mode; with PTI, the kernel-mode page table maps both, while the user-mode page table maps user space plus only a minimal part of kernel space. PTI: High-level implementation: [User mode] the user page table maps user space plus the percpu TSS and entry code; on a syscall, [Kernel Mode] the entry code starts on the user page table and then switches to the kernel page table, which maps all of kernel space.](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-63-320.jpg)
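How can the entry code switch to the "kernel copy" cheaply? In Linux's PTI implementation, the kernel and user PGDs are allocated as an 8KB-aligned pair, so the user copy sits exactly 4KB after the kernel copy, and switching page tables amounts to setting or clearing bit 12 (PAGE_SHIFT) of CR3 (see SWITCH_TO_KERNEL_CR3 in arch/x86/entry/calling.h). This Python sketch models only that bit and ignores the PCID bits that the real code also adjusts; the CR3 value used in the test is hypothetical.

```python
# Illustration only: models the PGD-selection bit of CR3 and ignores PCID bits.
PAGE_SHIFT = 12
USER_PGTABLE_BIT = 1 << PAGE_SHIFT   # the user PGD sits 4KB after the kernel PGD


def to_user_cr3(cr3: int) -> int:
    """Exit to user mode: select the user copy of the page tables."""
    return cr3 | USER_PGTABLE_BIT


def to_kernel_cr3(cr3: int) -> int:
    """Entry via syscall/interrupt/exception: select the full kernel copy."""
    return cr3 & ~USER_PGTABLE_BIT


if __name__ == "__main__":
    kernel_cr3 = 0x0123_4000          # hypothetical, 8KB aligned
    print(hex(to_user_cr3(kernel_cr3)), hex(to_kernel_cr3(to_user_cr3(kernel_cr3))))
```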

![Context Switch – Kernel Thread. Kernel stack of a newly created kernel thread, from task.stack (STACK_END_MAGIC = 0x57AC6E9D at the lowest address) up to task.stack + THREAD_SIZE: the kernel stack usage space; the saved frame that rsp points to, holding bp, ret_addr = ret_from_fork, bx (kernel thread function), r12 (kernel thread argument), r13, r14 and r15; and struct pt_regs at the top (saves/restores CPU registers for userspace tasks). [Prev task] Execution resumes at the instruction after the call to switch_to() when the previous task is rescheduled (`return prev_p`).](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-82-320.jpg)
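The trick on the kernel-thread slide is that a brand-new thread has never called switch_to(), so its saved frame is prepared with ret_addr = ret_from_fork; the "return" at the end of the switch lands there, and ret_from_fork calls the function in bx with the argument in r12. A previously running task instead has the address just after its own switch_to() call saved. This Python toy model captures both cases; the dict frame and these functions are illustrative stand-ins, not kernel code.

```python
# Toy model for illustration; register names follow the slide, but the dict
# layout and these Python functions are not kernel code.


def ret_from_fork(regs: dict):
    """The kernel version also calls schedule_tail() first (omitted here)."""
    return regs["bx"](regs["r12"])      # call the thread function with its argument


def new_kthread_frame(fn, arg) -> dict:
    """Saved frame a new kernel thread starts with, per the slide."""
    return {"bp": 0, "bx": fn, "r12": arg, "r13": 0, "r14": 0, "r15": 0,
            "ret_addr": ret_from_fork}


def switch_to_asm(next_frame: dict):
    """Restore callee-saved registers from next's stack, then 'ret' to ret_addr."""
    regs = {k: v for k, v in next_frame.items() if k != "ret_addr"}
    return next_frame["ret_addr"](regs)


if __name__ == "__main__":
    print(switch_to_asm(new_kthread_frame(lambda x: x * 2, 21)))
```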

![Context Switch – init_task is rescheduled. [Prev task] Execution resumes at the instruction after the call to switch_to() when the previous task is rescheduled. Backtrace when init_task (pid = 0) is rescheduled because the kernel_init thread (pid = 1) is scheduled out](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-85-320.jpg)

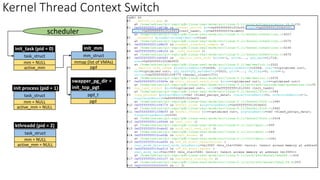

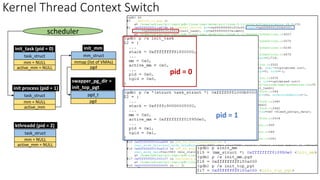

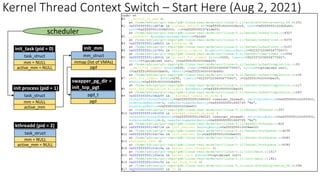

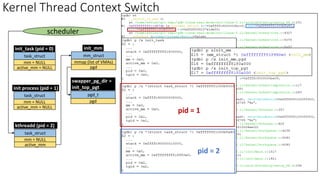

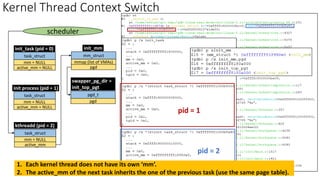

![Context Switch: Kernel Thread <-> User Space Task. The `sleep` userspace task (pid = 40, cpu = 2) has a task_struct whose mm and active_mm both point to its mm_struct (mmap: list of VMAs; pgd: pgd_t). When `sleep` is scheduled out, the scheduler runs the kernel thread ksoftirqd/2 (pid = 20, cpu = 2, mm = NULL), which inherits the active_mm of the previous task (no TLB flush is needed because CR3 is not changed).](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-95-320.jpg)
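The mm/active_mm rule from the slide can be expressed as a few lines of bookkeeping. This Python sketch is a simplification of the mm handling in the scheduler's context_switch(): a task with `mm is None` (a kernel thread) borrows the previous task's active_mm and CR3 stays as is, while a user task loads its own pgd. Reference counting (mmgrab/mmdrop), lazy-TLB details and the "same mm, skip the reload" optimization are omitted, and the pgd value is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MM:
    pgd: int                          # physical address that would be loaded into CR3


@dataclass
class Task:
    name: str
    mm: Optional[MM]                  # None for kernel threads
    active_mm: Optional[MM] = None


def context_switch_mm(prev: Task, nxt: Task, cr3: int) -> int:
    """Return CR3 after switching from prev to nxt (simplified model)."""
    if nxt.mm is None:
        nxt.active_mm = prev.active_mm   # kernel thread borrows the previous mm
        new_cr3 = cr3                    # CR3 unchanged, so no TLB flush
    else:
        new_cr3 = nxt.mm.pgd             # user task: switch to its own page table
    if prev.mm is None:
        prev.active_mm = None            # a kernel thread gives back the borrowed mm
    return new_cr3


if __name__ == "__main__":
    sleep_mm = MM(pgd=0x1234_5000)
    sleep = Task("sleep", sleep_mm, sleep_mm)
    ksoftirqd = Task("ksoftirqd/2", None)
    print(hex(context_switch_mm(sleep, ksoftirqd, sleep_mm.pgd)), ksoftirqd.active_mm)
```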

![[pid = 1 – init process] When are mm & active_mm allocated?](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-97-320.jpg)

![[pid = 1 – init process] When are mm & active_mm allocated?](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-98-320.jpg)

![[pid = 1 – init process] When are mm & active_mm allocated? clone_pgd_range()](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-99-320.jpg)

![[pid = 1 – init process] When are mm & active_mm allocated? [pid = 1] Before running run_init_process(); [pid = 1] after finishing run_init_process(), when the kernel thread becomes a user process; clone_pgd_range(): mm.pgd verification; [pid = 1] mm_struct](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-100-320.jpg)
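The clone_pgd_range() step is a copy of the kernel half of the top-level table. In the 5.11 sources, pgd_ctor() copies PGD entries from swapper_pg_dir into a new mm's PGD starting at KERNEL_PGD_BOUNDARY = pgd_index(PAGE_OFFSET), for KERNEL_PGD_PTRS = PTRS_PER_PGD - KERNEL_PGD_BOUNDARY entries; treat that as a sketch of the source rather than a quote. This Python model assumes 4-level paging and the default, non-randomized page_offset_base, which puts the boundary at index 273. `new_mm_pgd` is a hypothetical name, and unlike the kernel helper (which takes already-offset pointers), this `clone_pgd_range` takes a start index.

```python
PTRS_PER_PGD = 512
PAGE_OFFSET = 0xFFFF_8880_0000_0000   # default page_offset_base (no KASLR)


def pgd_index(addr: int) -> int:
    return (addr >> 39) & (PTRS_PER_PGD - 1)


KERNEL_PGD_BOUNDARY = pgd_index(PAGE_OFFSET)          # 273 with the default base
KERNEL_PGD_PTRS = PTRS_PER_PGD - KERNEL_PGD_BOUNDARY  # 239 entries


def clone_pgd_range(dst: list, src: list, start: int, count: int) -> None:
    """Copy `count` PGD entries starting at index `start` (memcpy in the kernel)."""
    dst[start:start + count] = src[start:start + count]


def new_mm_pgd(swapper_pg_dir: list) -> list:
    """Hypothetical helper: empty user half, kernel half shared with swapper_pg_dir."""
    pgd = [0] * PTRS_PER_PGD
    clone_pgd_range(pgd, swapper_pg_dir, KERNEL_PGD_BOUNDARY, KERNEL_PGD_PTRS)
    return pgd
```

Because only the top-level entries are copied, every process's page tables point at the same lower-level kernel tables, so later kernel mappings below those entries are visible everywhere.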

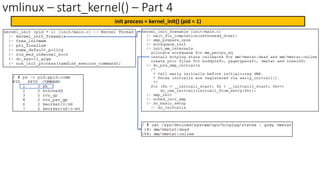

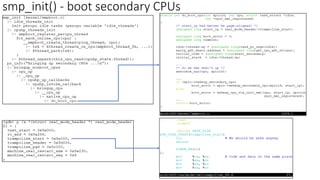

![smp_init() - boot secondary CPUs – Boot Flow. [Secondary CPUs] CR3 Register Configuration: startup_32 sets CR3 to trampoline_pgd; secondary_startup_64 sets CR3 to init_top_pgt](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-105-320.jpg)
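When secondary_startup_64 loads init_top_pgt into CR3, it needs that table's physical address, and init_top_pgt is a symbol in the kernel image. Kernel-image addresses translate as in __pa_symbol(): subtract __START_KERNEL_map and add phys_base, the difference between where the kernel was actually loaded and where it was linked to run (0 when it runs at the default 16MB). This Python sketch shows only that arithmetic and ignores the SME encryption mask and PCID bits; the init_top_pgt address and the phys_base value in the test are hypothetical.

```python
# Illustration only; the example addresses below are hypothetical.
START_KERNEL_MAP = 0xFFFF_FFFF_8000_0000   # __START_KERNEL_map


def pa_symbol(vaddr: int, phys_base: int = 0) -> int:
    """Kernel-image virtual address -> physical address (__pa_symbol() arithmetic)."""
    return vaddr - START_KERNEL_MAP + phys_base


def secondary_cr3(init_top_pgt_vaddr: int, phys_base: int = 0) -> int:
    """CR3 value for init_top_pgt (SME mask and PCID bits ignored)."""
    return pa_symbol(init_top_pgt_vaddr, phys_base)


if __name__ == "__main__":
    HYPOTHETICAL_INIT_TOP_PGT = 0xFFFF_FFFF_8260_A000
    print(hex(secondary_cr3(HYPOTHETICAL_INIT_TOP_PGT)))
```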

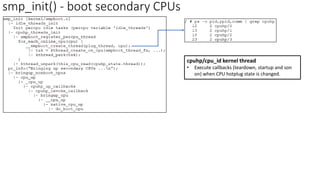

![startup_32() - boot secondary CPUs – Page Table Configuration](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-106-320.jpg)

![startup_32() - boot secondary CPUs – Page Table Configuration](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-107-320.jpg)

![secondary_startup_64() - boot secondary CPUs – Page Table](https://image.slidesharecdn.com/decompressedvmlinux-linuxkernelinitializationfrompagetableconfigurationperspective-210802071137/85/Decompressed-vmlinux-linux-kernel-initialization-from-page-table-configuration-perspective-108-320.jpg)