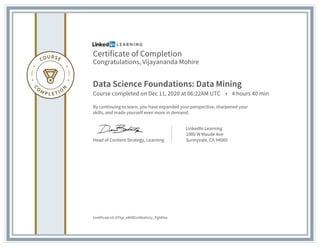

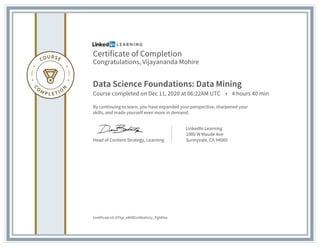

Vijayananda Mohire completed the 4-hour, 40-minute course Data Science Foundations: Data Mining on December 11, 2020. This achievement reflects ongoing learning and skill enhancement. The certificate was issued by LinkedIn Learning, 1000 W Maude Ave, Sunnyvale, CA.