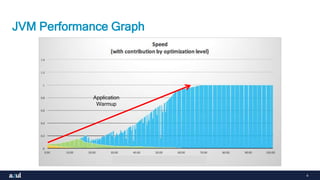

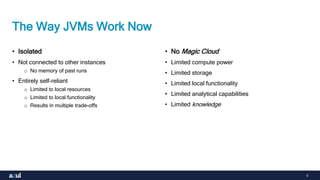

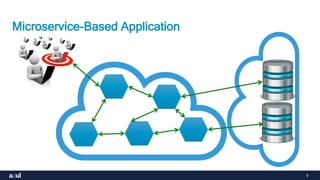

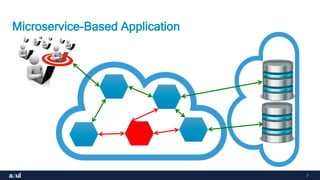

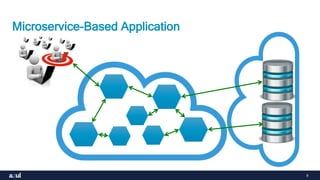

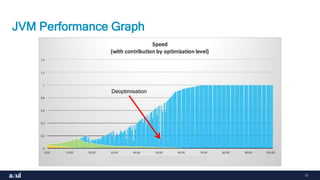

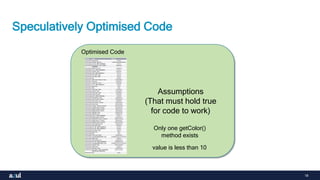

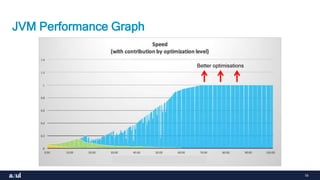

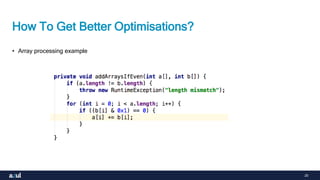

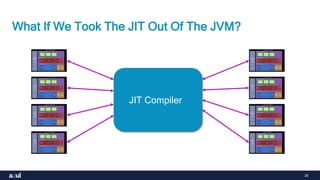

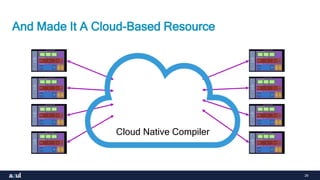

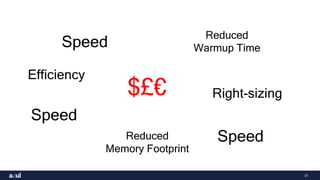

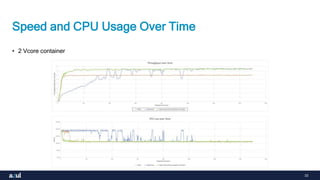

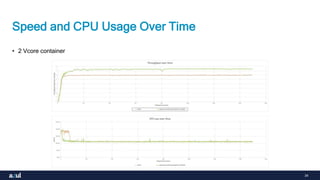

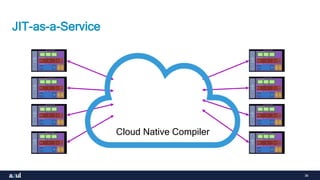

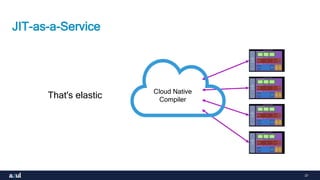

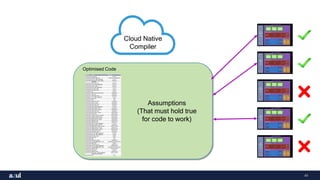

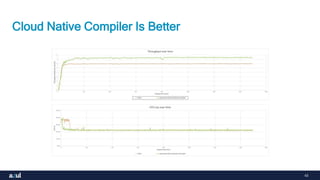

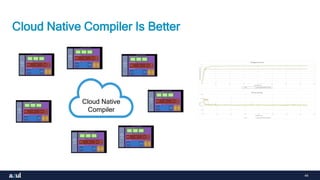

The document discusses the benefits of moving JIT compilation out of individual JVMs and into a shared, cloud-based compiler service. In this "JIT-as-a-Service" approach, compilation resources are shared among JVMs and scale elastically with demand, so they are used more efficiently. Code that has been optimized once can also be reused when the same application runs on multiple devices. Moving JIT compilation to the cloud reduces warmup time, memory footprint, and CPU usage in each JVM, while allowing a higher level of optimization to be performed.
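
The reuse idea can be illustrated with a toy model. The sketch below is not the API of any real JVM or compiler service; `SharedCompileService`, `compile`, and the cache key layout are hypothetical names chosen for illustration. It assumes compiled code can be keyed by a hash of the method's bytecode plus the target CPU architecture, so that a second JVM requesting the same method gets the cached result instead of triggering a new compilation.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Base64;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

/** Toy model of a shared compile service (hypothetical; not a real JVM API). */
final class SharedCompileService {
    // Cache shared by all client JVMs: key -> "compiled code" (a placeholder string here).
    private final Map<String, String> cache = new ConcurrentHashMap<>();
    private final AtomicInteger compilations = new AtomicInteger();

    /** Returns compiled code for the method, compiling only on a cache miss. */
    String compile(byte[] bytecode, String targetCpu) {
        // Assumption: bytecode hash + target architecture identifies reusable code.
        String key = targetCpu + ":" + sha256(bytecode);
        return cache.computeIfAbsent(key, k -> {
            compilations.incrementAndGet();   // count real compilations
            return "native-code-for-" + k;    // stand-in for the optimizer's output
        });
    }

    /** Number of times the (simulated) optimizer actually ran. */
    int compilationCount() {
        return compilations.get();
    }

    private static String sha256(byte[] data) {
        try {
            MessageDigest md = MessageDigest.getInstance("SHA-256");
            return Base64.getEncoder().encodeToString(md.digest(data));
        } catch (NoSuchAlgorithmException e) {
            // SHA-256 is required on every Java platform, so this should not happen.
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        SharedCompileService service = new SharedCompileService();
        byte[] method = "hot-method-bytecode".getBytes(StandardCharsets.UTF_8);

        // Two simulated JVMs on the same architecture request the same method.
        service.compile(method, "x86_64");
        service.compile(method.clone(), "x86_64");
        // A third JVM on a different architecture needs its own compilation.
        service.compile(method, "aarch64");

        System.out.println("compilations performed: " + service.compilationCount());
        // prints "compilations performed: 2"
    }
}
```

In this model the client JVMs spend no CPU or memory on optimization; they only send a request and receive code, which is the effect the summary describes. A real service would also have to account for profile data, JVM version, and class-loading state when deciding whether cached code is safe to reuse, none of which is modeled here.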