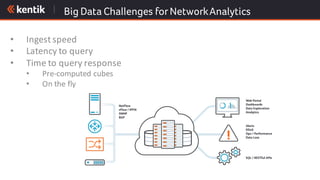

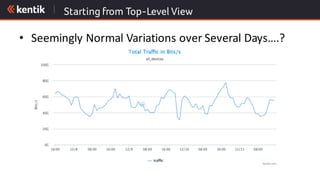

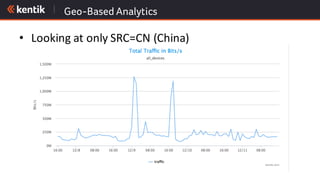

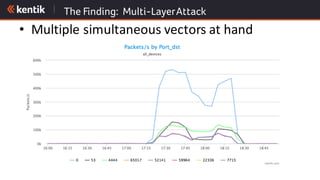

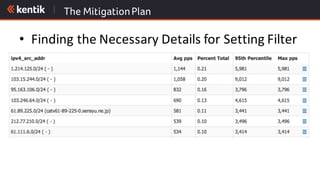

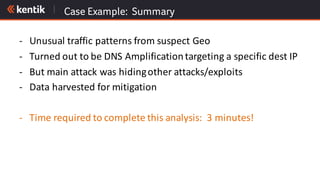

The document explains why cloud-aware network management is important for maintaining high performance and a good user experience as organizations rely increasingly on cloud services. It outlines key strategies and tactics for managing networks efficiently, such as collecting detailed traffic flow data and applying advanced analytics to detect anomalies. It argues that traditional approaches cannot cope with the large volumes of data that modern networks generate, and it advocates big data analytics as a way to improve operational response and extend visibility across hybrid environments.