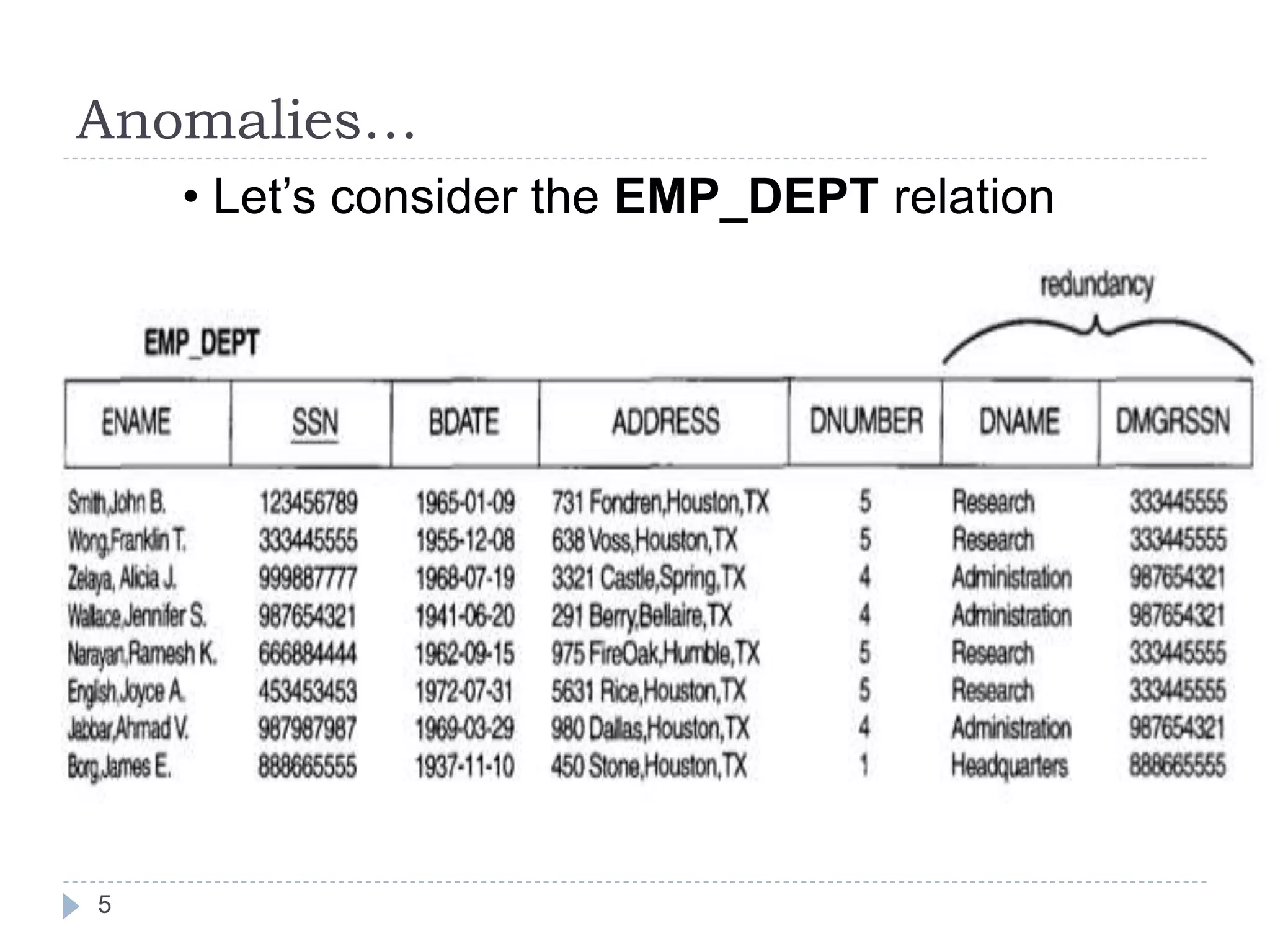

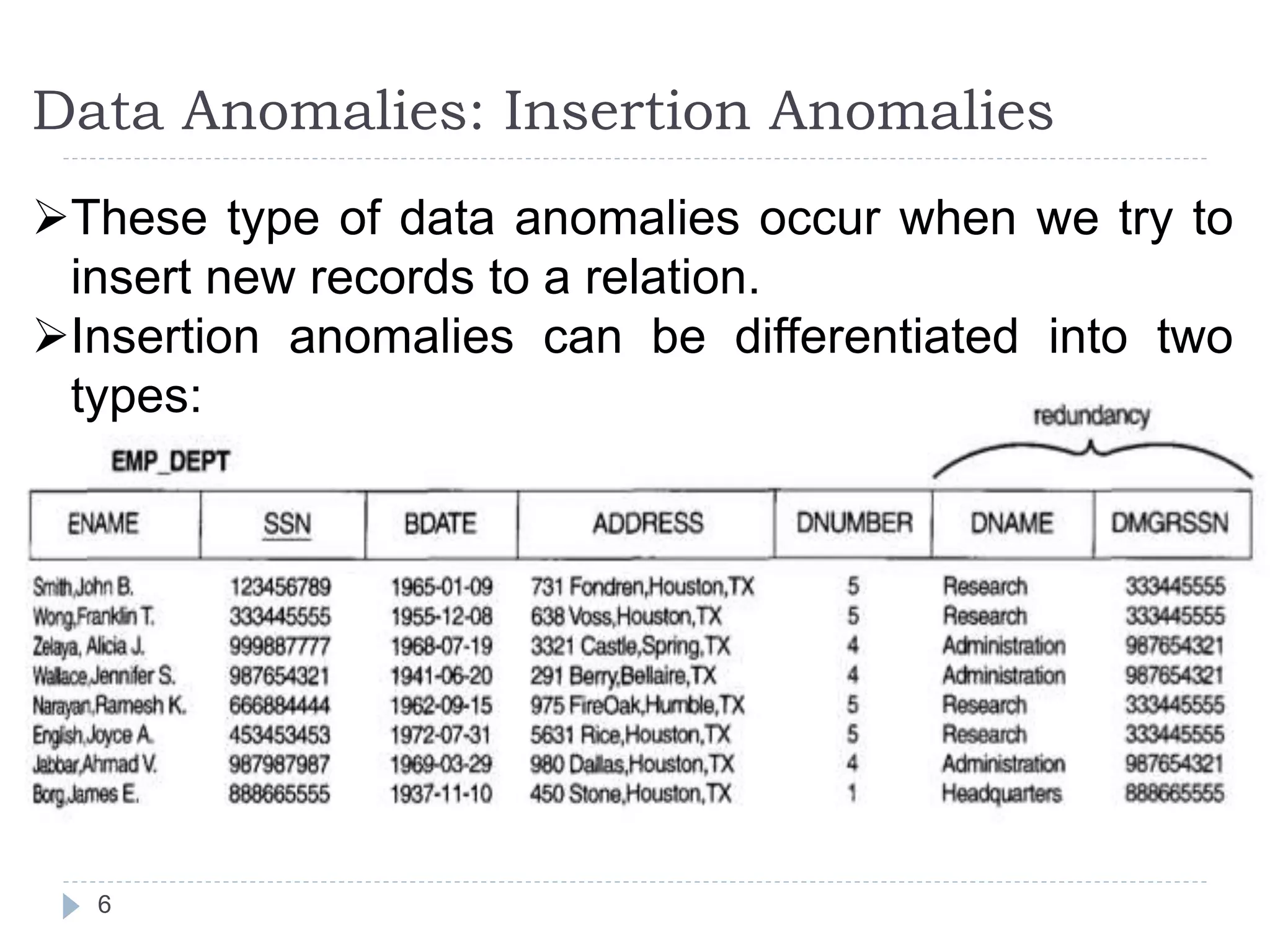

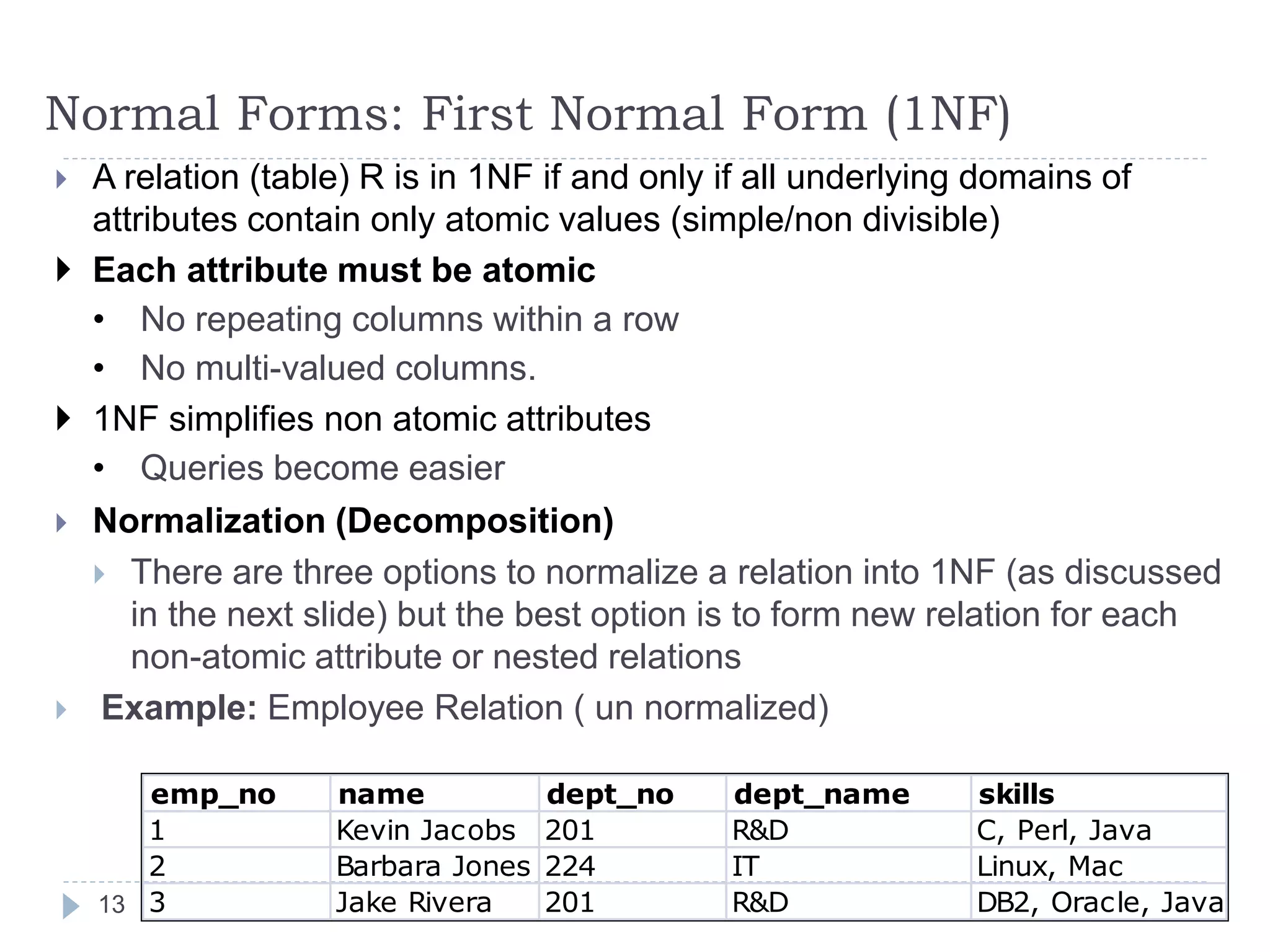

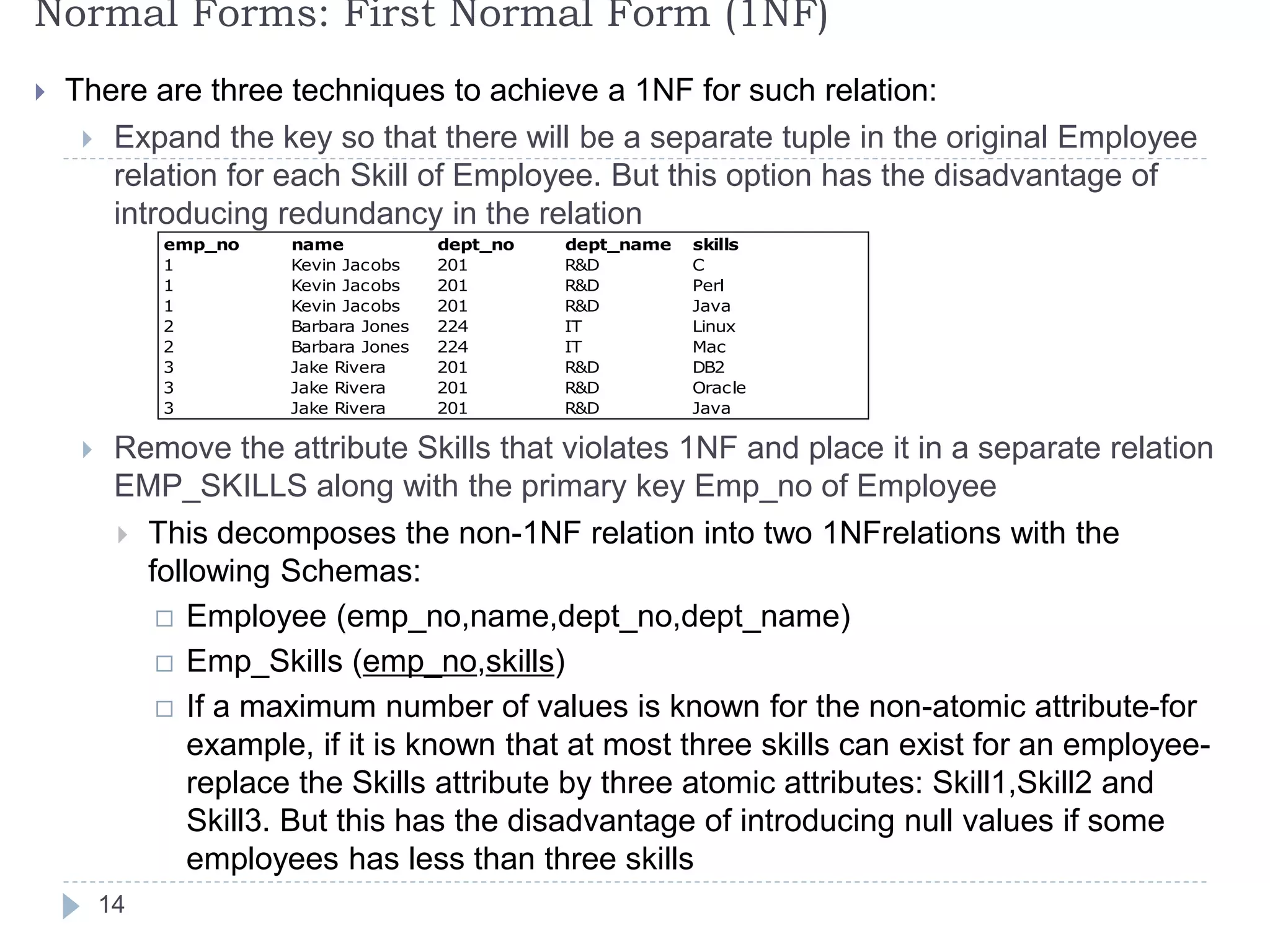

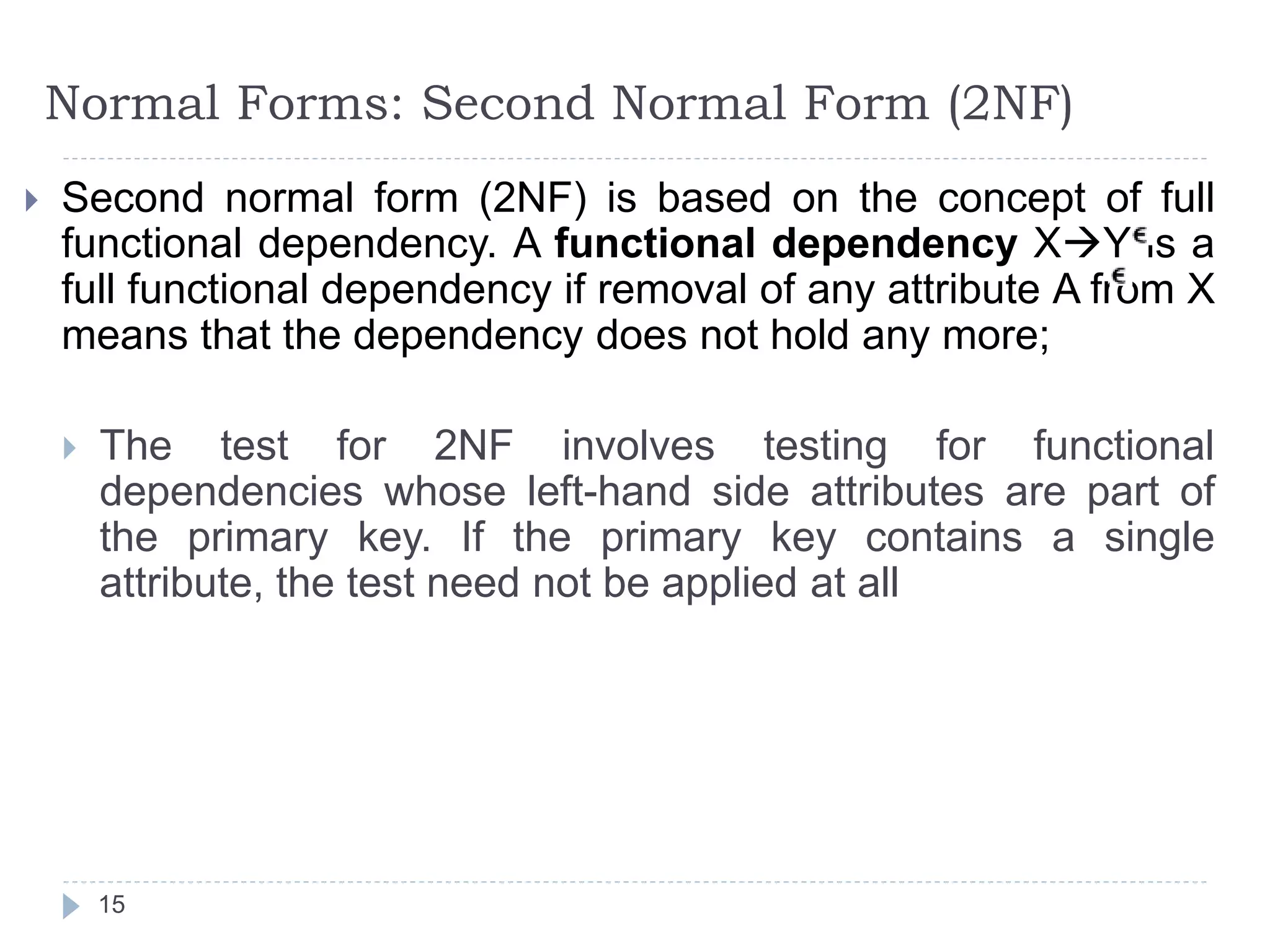

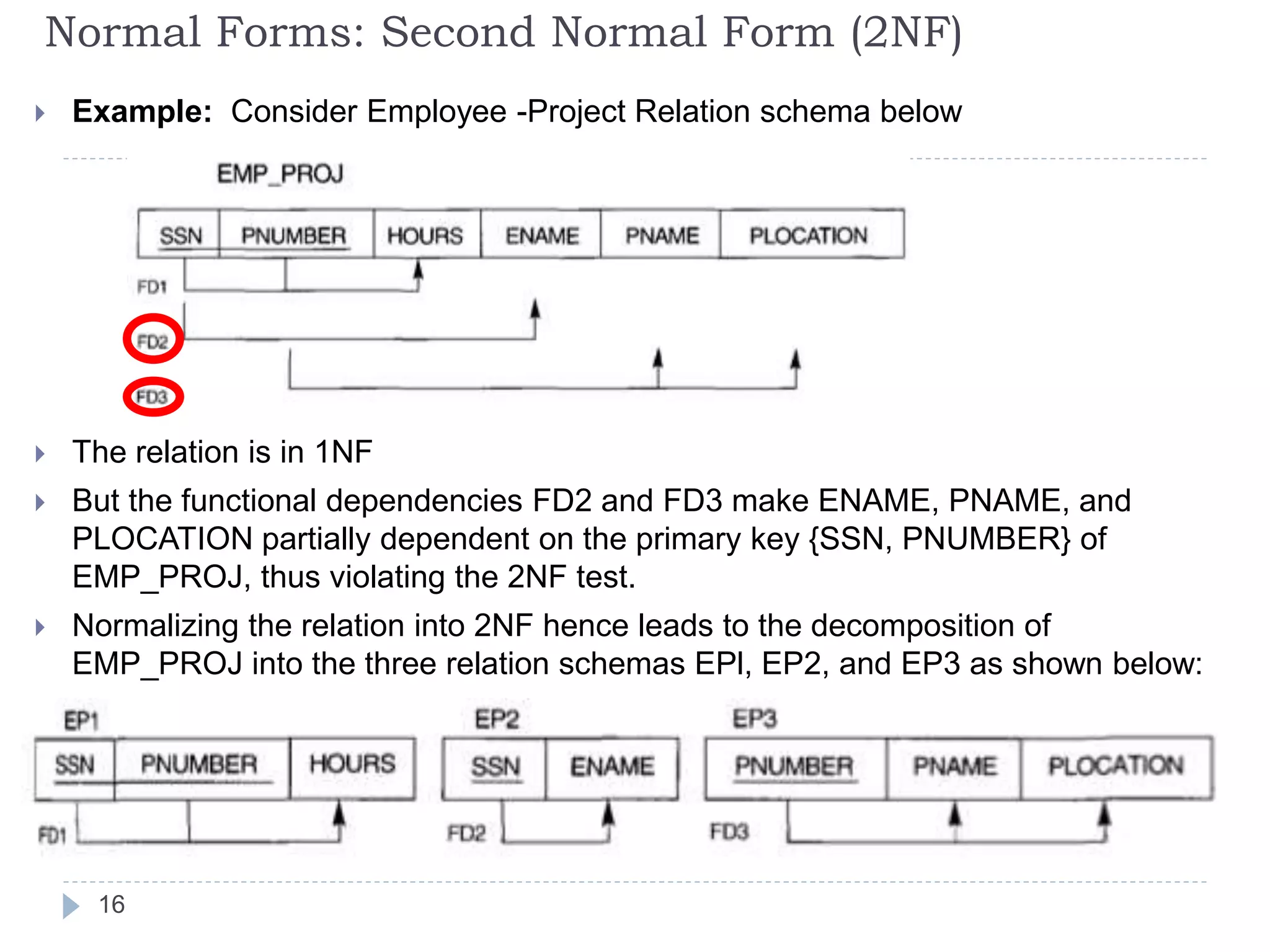

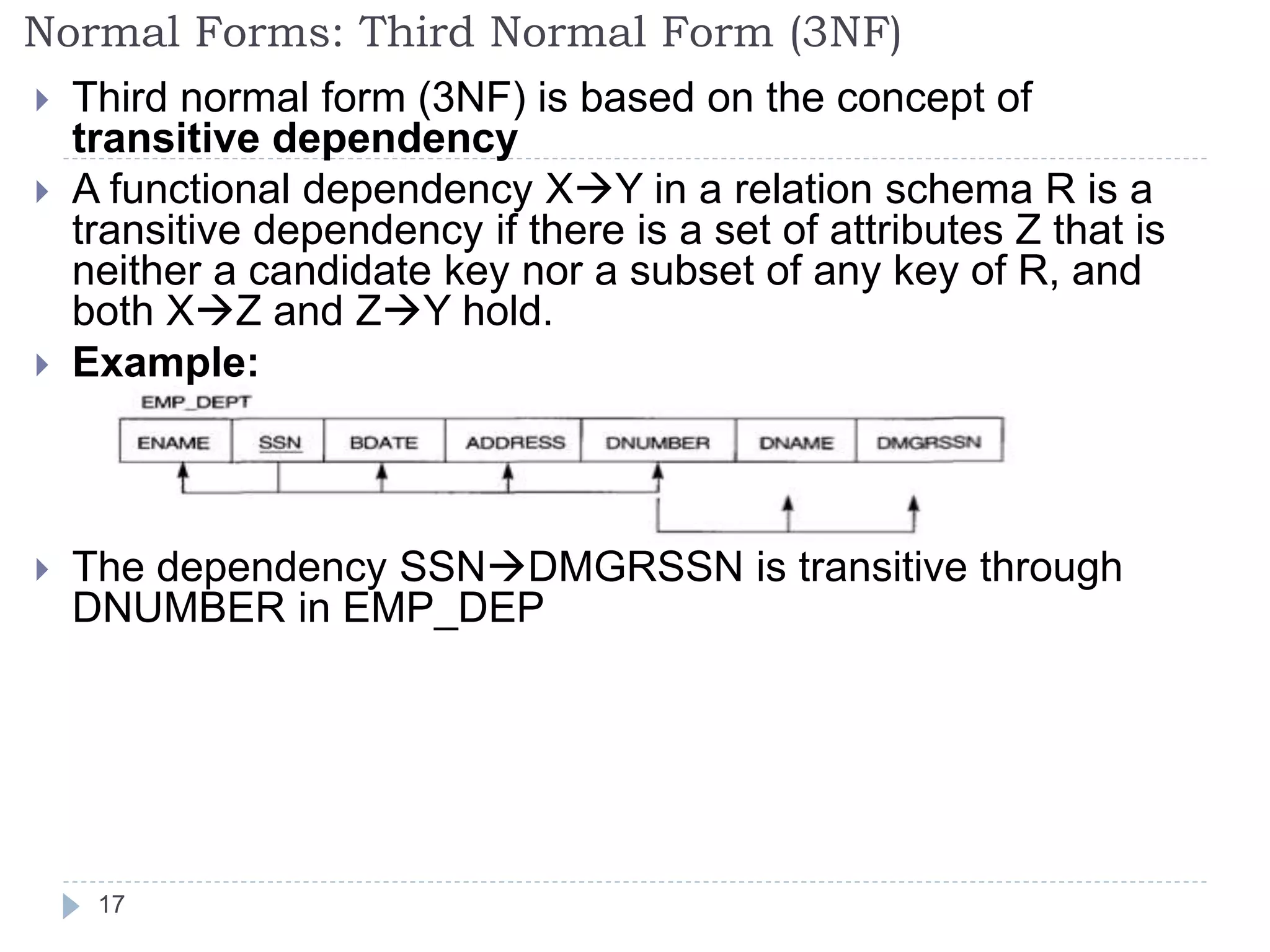

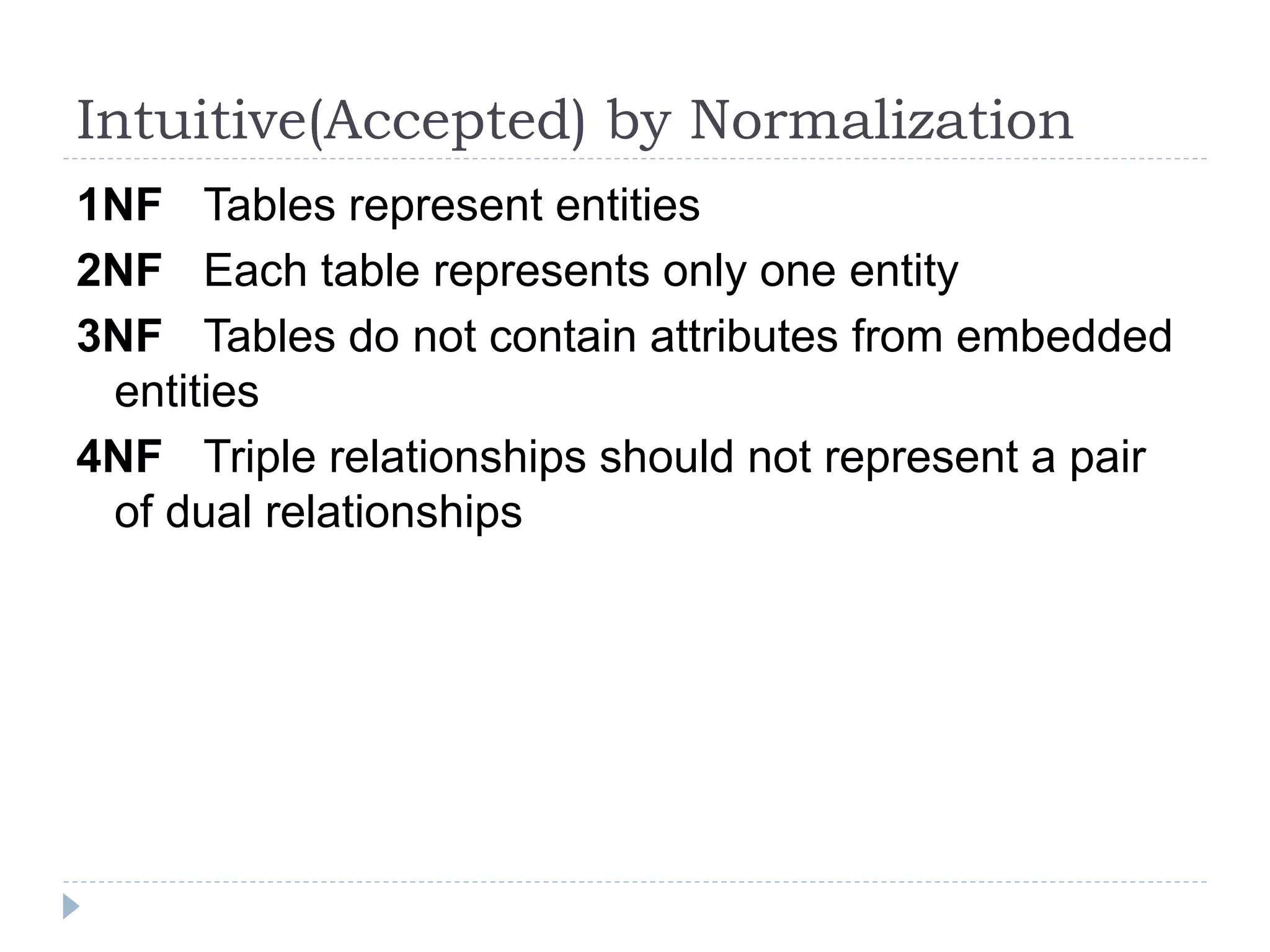

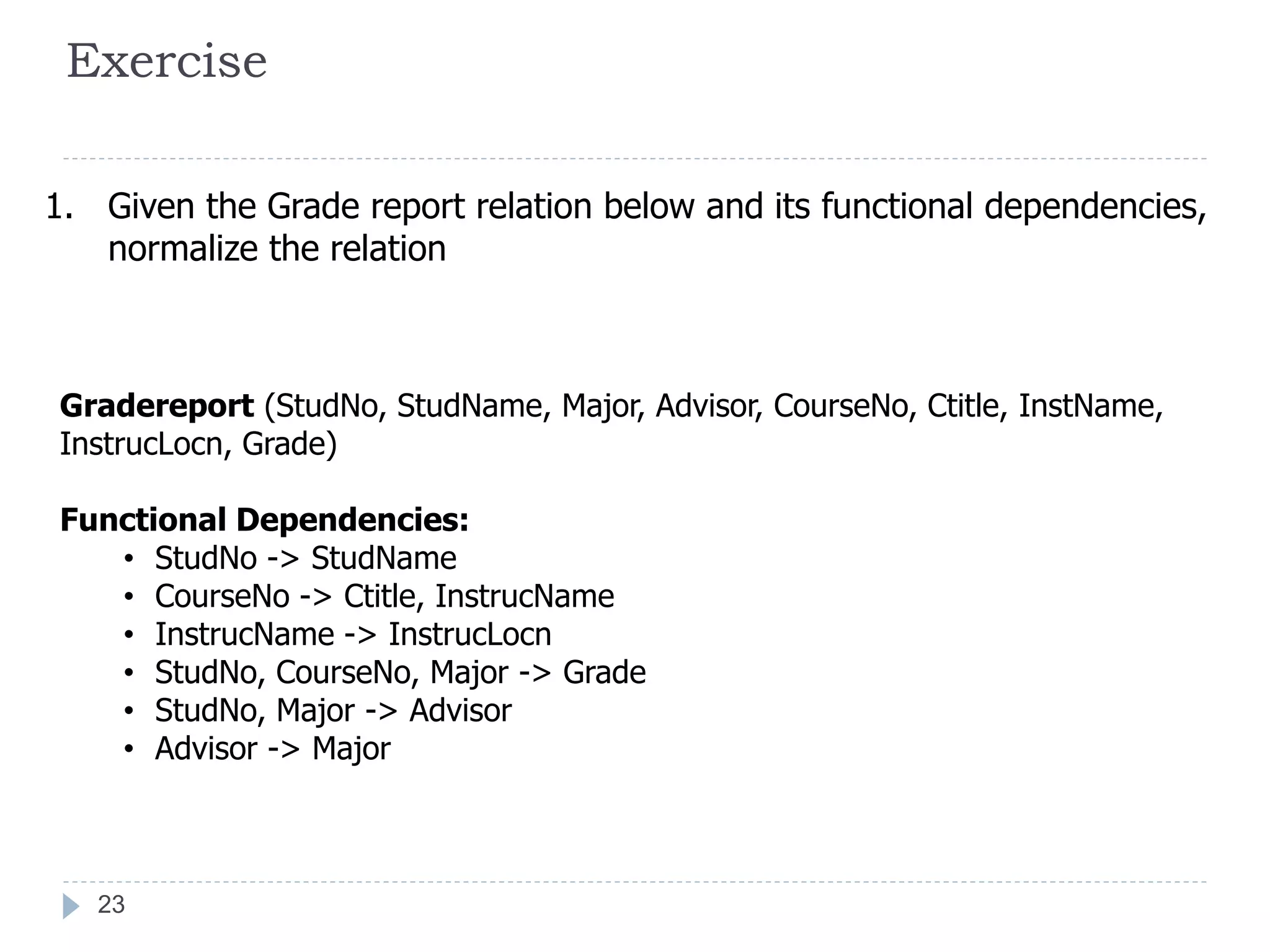

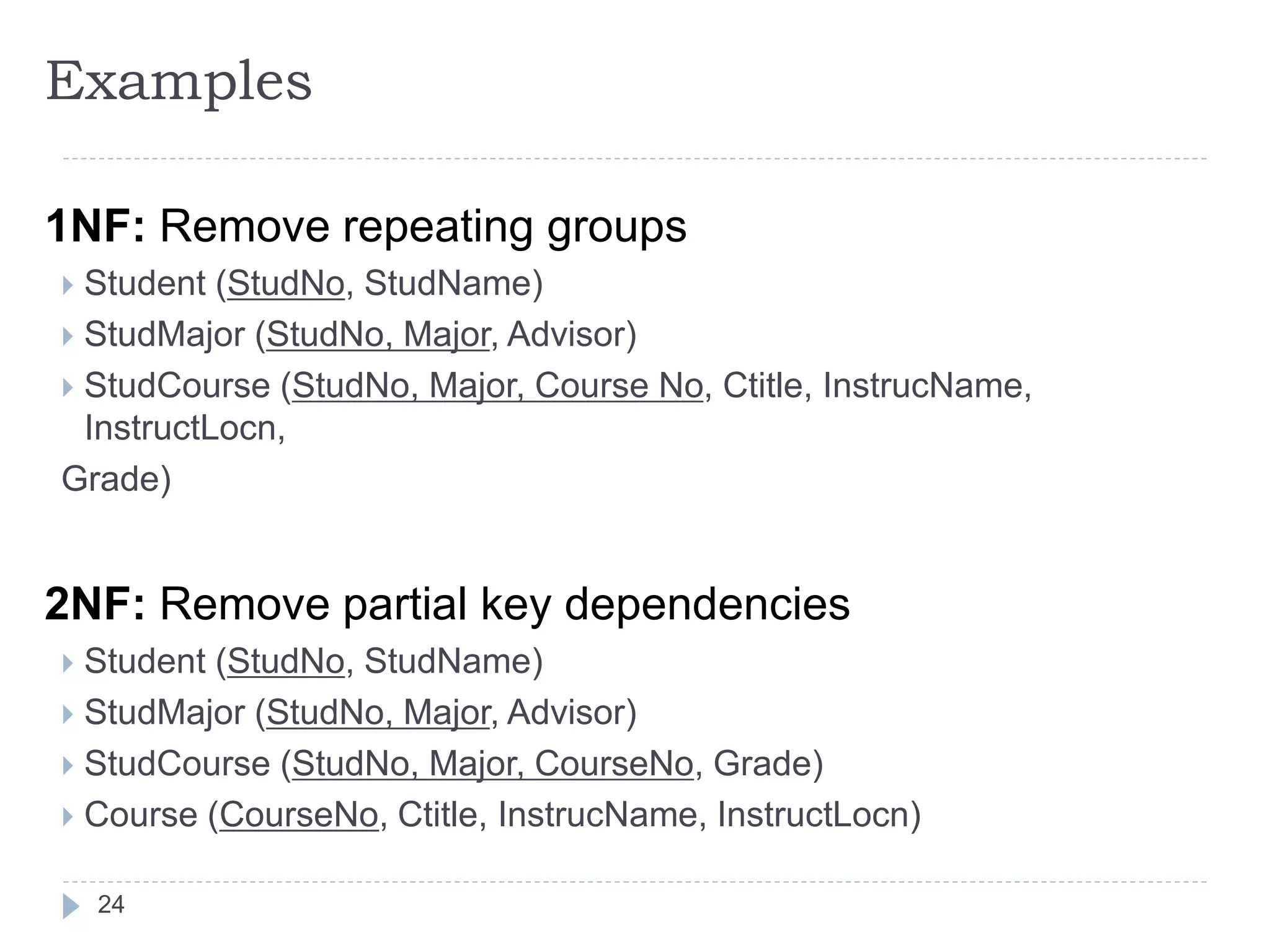

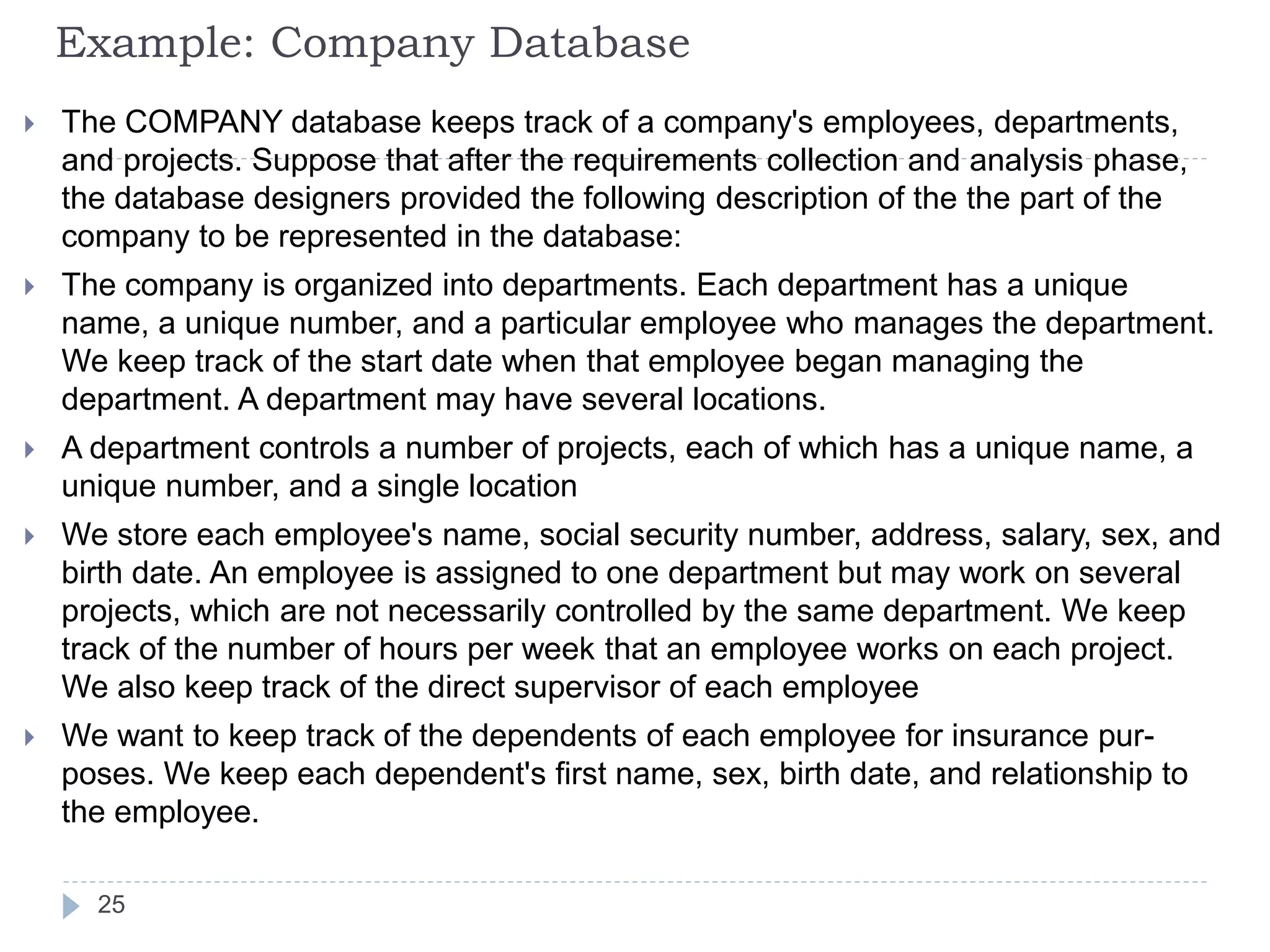

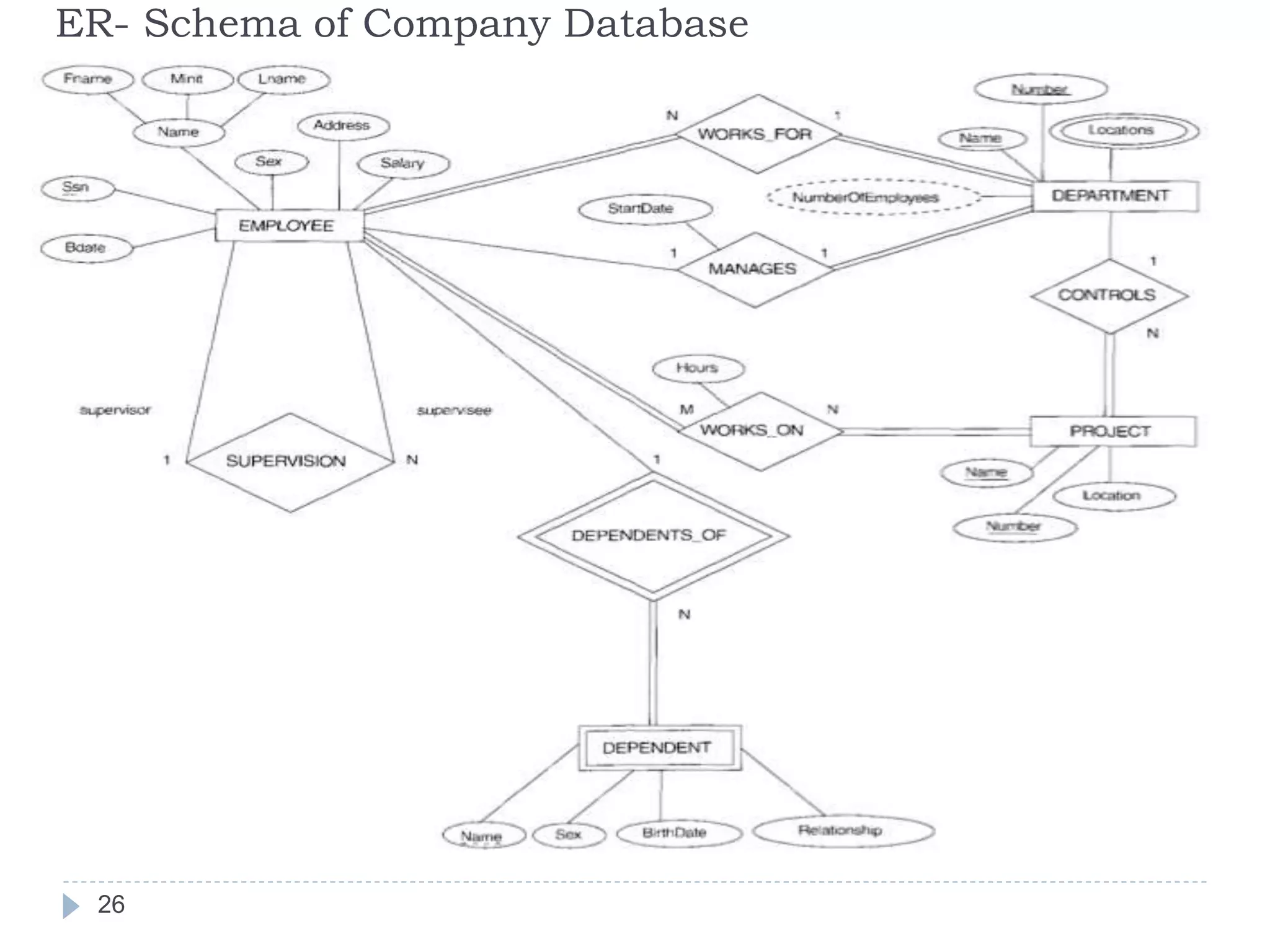

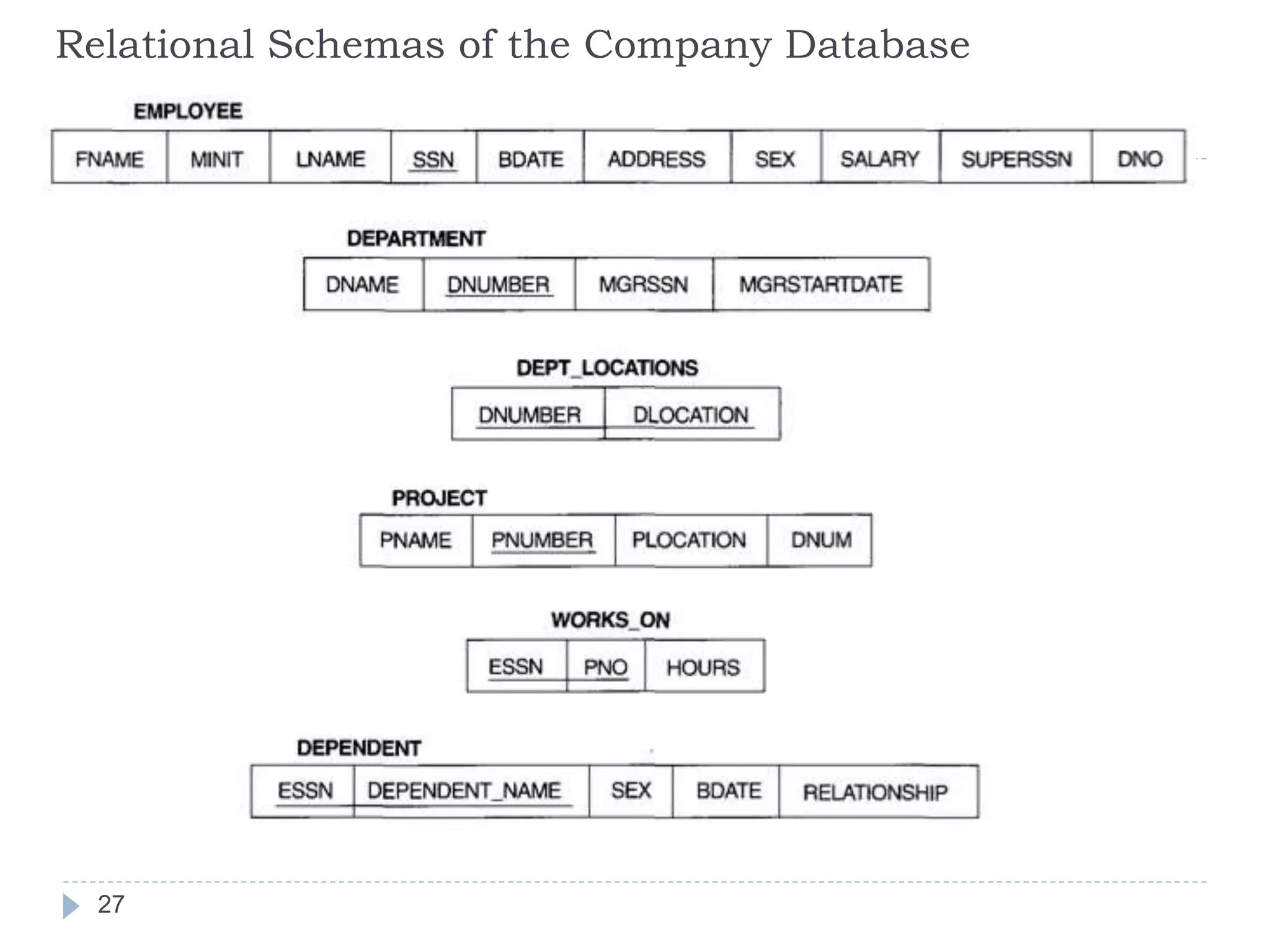

The document covers database normalization, which it defines as decomposing relations to eliminate redundancy and anomalies. It lists the goals of normalization as eliminating redundancy, organizing data efficiently, and reducing anomalies, and it describes three common data anomalies: insertion, deletion, and modification. It then explains the normal forms 1NF, 2NF, 3NF, and BCNF, with examples showing how to normalize relations to each form. The document emphasizes that normalization improves data quality by reducing redundancy and inconsistencies.
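
As a rough illustration of the decomposition idea summarized above, the following Python sketch splits one redundant relation into three smaller ones and checks that joining them recovers the original rows. The enrollment data, the column names, and the helper functions `project`, `decompose`, and `rejoin` are all hypothetical and are not taken from the document. The sketch assumes that `student_id` determines `student_name` and that `course_id` determines `course_title`.

```python
# Hypothetical denormalized relation: one row per (student, course) pair.
# Student names and course titles are repeated, which invites
# modification anomalies (e.g. renaming a course in only one row).
ENROLLMENTS = [
    {"student_id": 1, "student_name": "Ada", "course_id": "DB101", "course_title": "Databases"},
    {"student_id": 1, "student_name": "Ada", "course_id": "OS201", "course_title": "Operating Systems"},
    {"student_id": 2, "student_name": "Lin", "course_id": "DB101", "course_title": "Databases"},
]


def project(rows, columns):
    """Relational projection: keep only `columns` and drop duplicate tuples."""
    seen = []
    for row in rows:
        values = tuple(row[c] for c in columns)
        if values not in seen:
            seen.append(values)
    return [dict(zip(columns, values)) for values in seen]


def decompose(rows):
    """Split the relation so each non-key attribute depends on a whole key.

    Assumed dependencies (illustrative only):
      student_id -> student_name
      course_id  -> course_title
    """
    students = project(rows, ["student_id", "student_name"])
    courses = project(rows, ["course_id", "course_title"])
    enrolled = project(rows, ["student_id", "course_id"])
    return students, courses, enrolled


def rejoin(students, courses, enrolled):
    """Join the three relations back together to show no information was lost."""
    name_of = {s["student_id"]: s["student_name"] for s in students}
    title_of = {c["course_id"]: c["course_title"] for c in courses}
    return [
        {
            "student_id": e["student_id"],
            "student_name": name_of[e["student_id"]],
            "course_id": e["course_id"],
            "course_title": title_of[e["course_id"]],
        }
        for e in enrolled
    ]


students, courses, enrolled = decompose(ENROLLMENTS)
print(students)
print(courses)
print(enrolled)
```

After the split, each student name and course title is stored once, so a rename touches a single row. A new course can also be added before any student enrolls in it, which the single-table layout would not allow without placeholder values.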