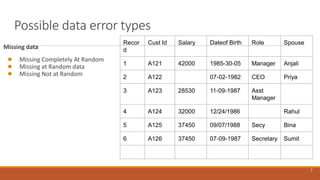

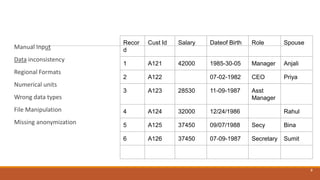

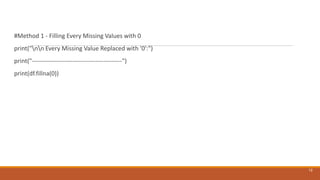

The document discusses data preprocessing in data science, outlining its objectives, phases, types of data, and common errors. It explains data types including categorical and numerical, as well as common data preprocessing operations like data cleaning and data reduction techniques. Additionally, it covers specific methods for handling missing data, smoothing noisy data, and the importance of maintaining data integrity during preprocessing.

![#Method 3 - Replacing missing values with the Median

Valuemedian = df['C01'].median()

df['C01'].fillna(median, inplace=True)

print("nn Missing Values for Column 1 Replaced with Median

Value:")

print("--------------------------------------------------")

print(df)

14](https://image.slidesharecdn.com/chapter3datapreprocessing-240403234054-f7489d12/85/Chapter-3-Data-Preprocessing-techniques-pptx-14-320.jpg)

![One hot encoding

Label encoding assigns a numeric value to each categorical value .This will be ok for categorical

labels

But nominal features do not have any order

Eg : Color of car values do not have any order among themselves

To prevent this one hot encoding is used for nominal attributes

It splits the column which contain the nominal categorical data to many columns depending on the

number of categories present in that column.Each column may contain 0 or 2 corresponding to

which column it has been placed.

EG Color_of _cars =[white’,’red’,’black’]

The one hot encoding matrix will be

16

white red black

1 0 0

0 1 0

0 0 1](https://image.slidesharecdn.com/chapter3datapreprocessing-240403234054-f7489d12/85/Chapter-3-Data-Preprocessing-techniques-pptx-16-320.jpg)