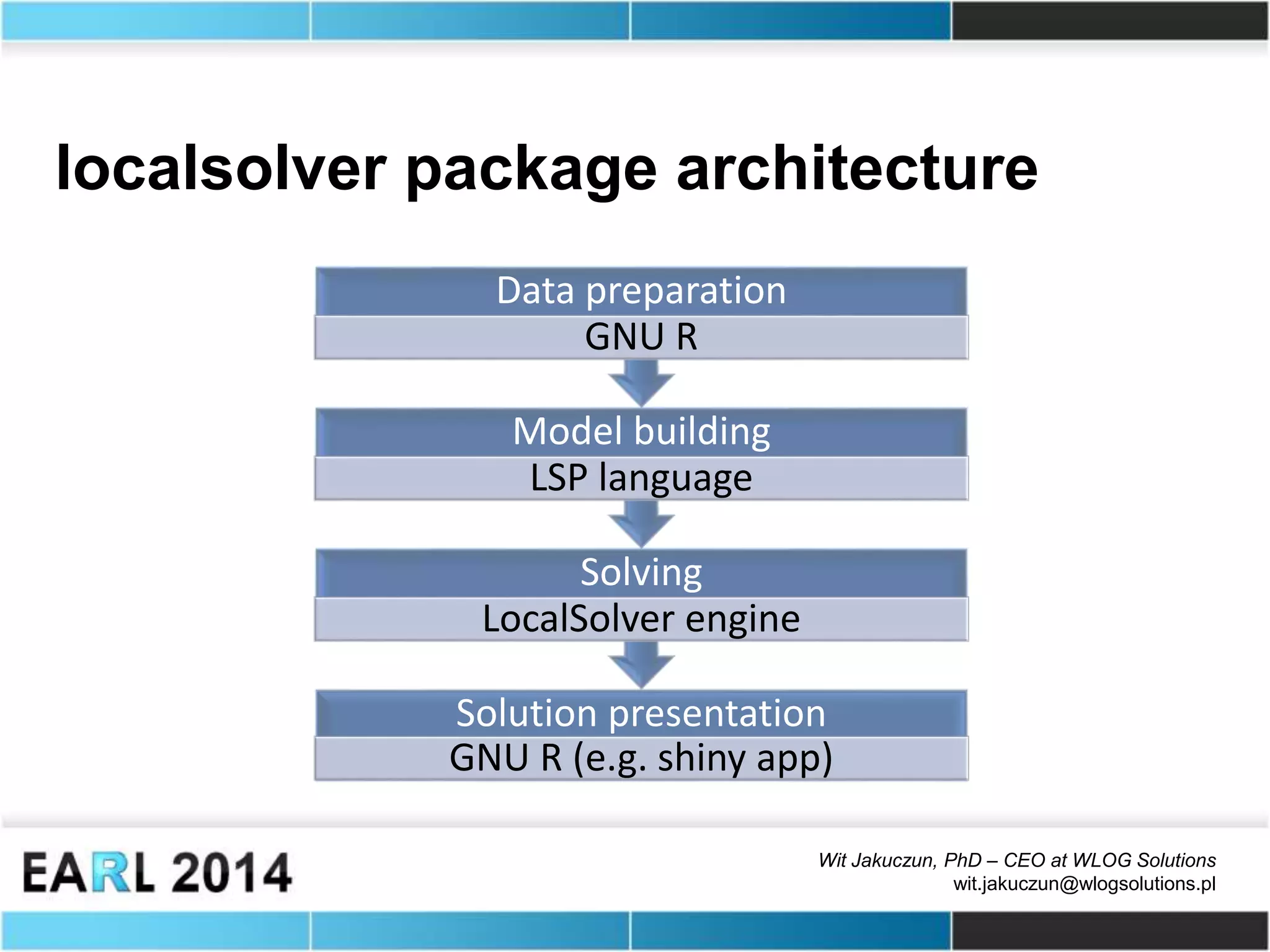

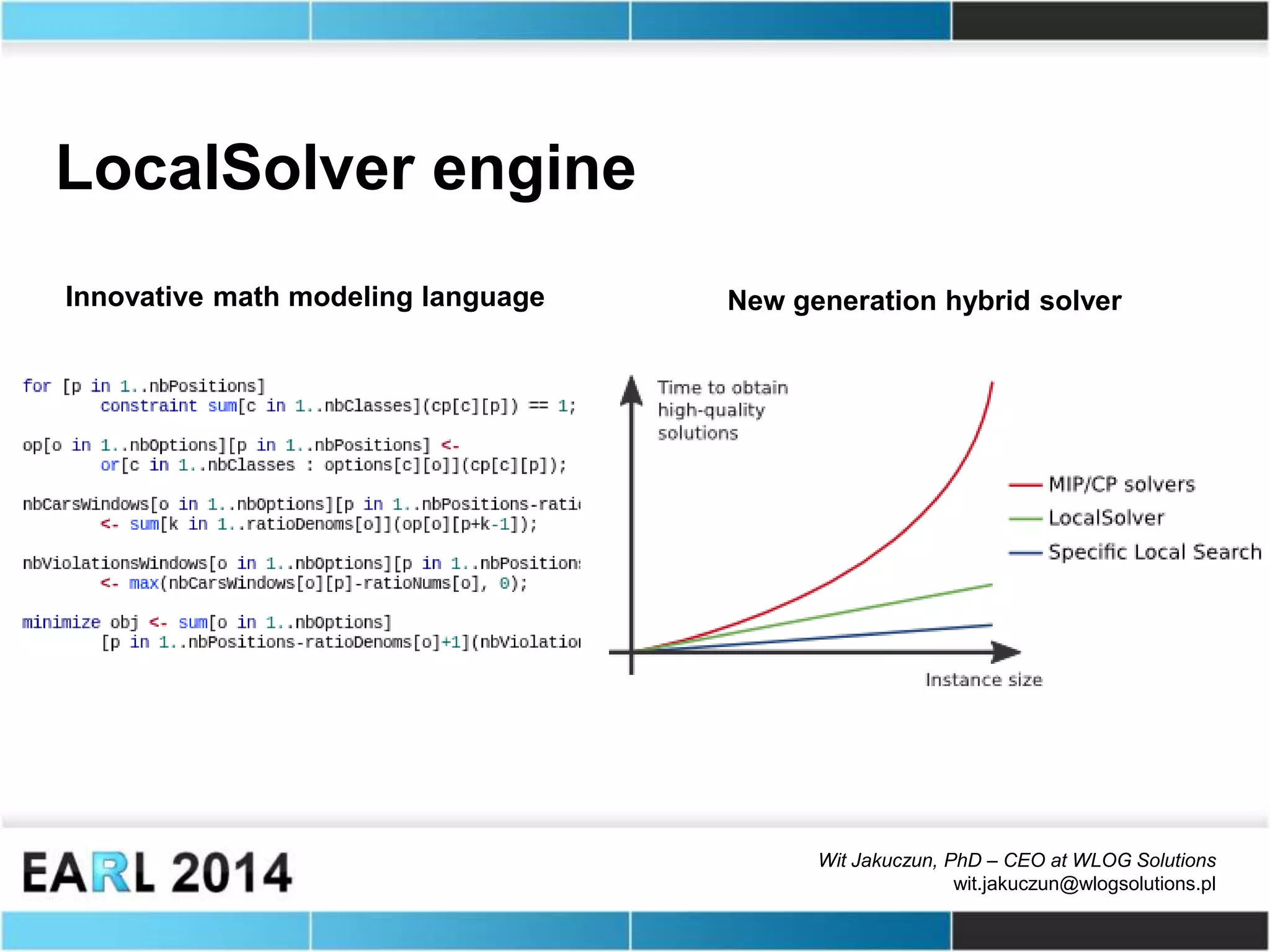

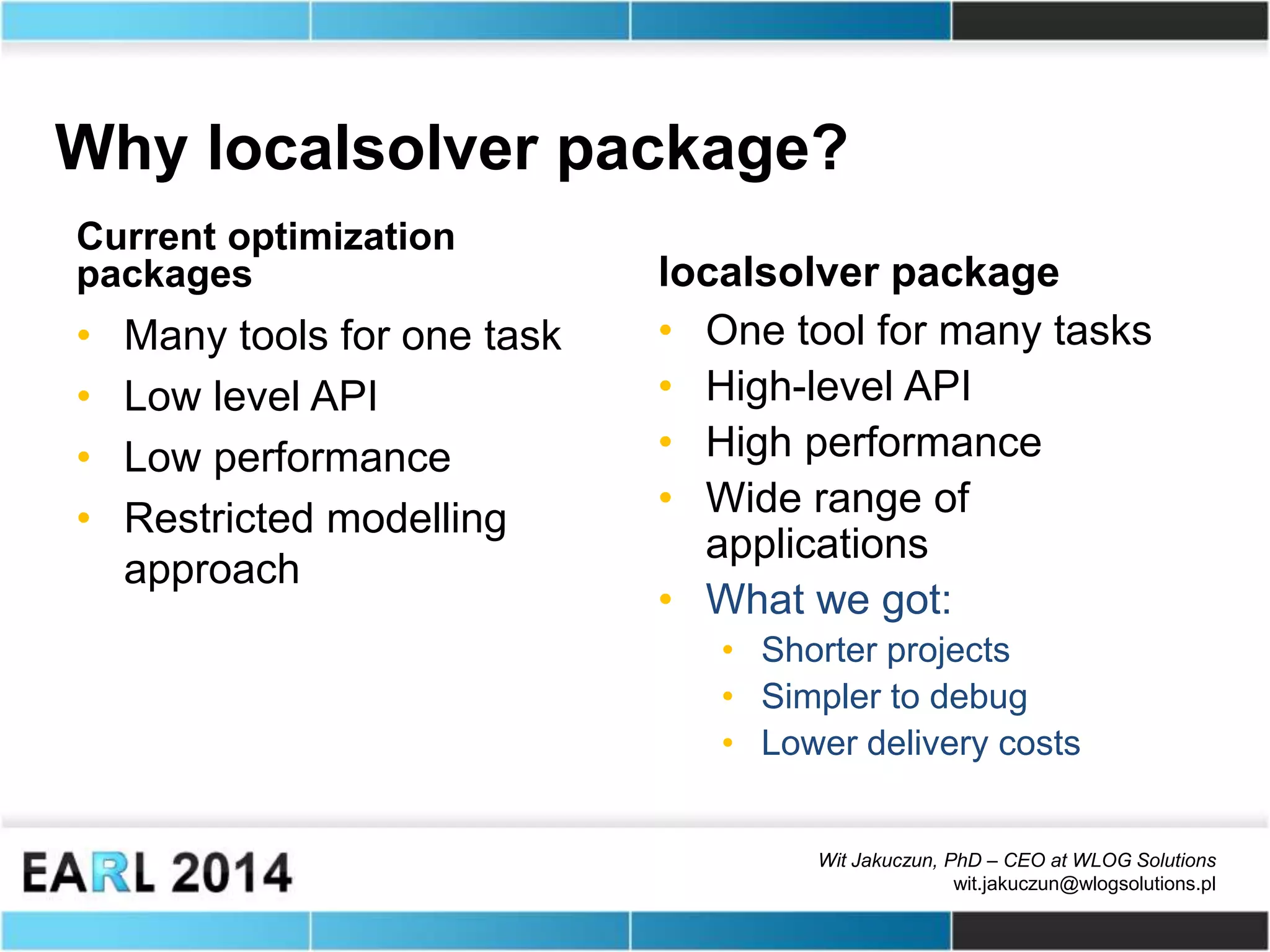

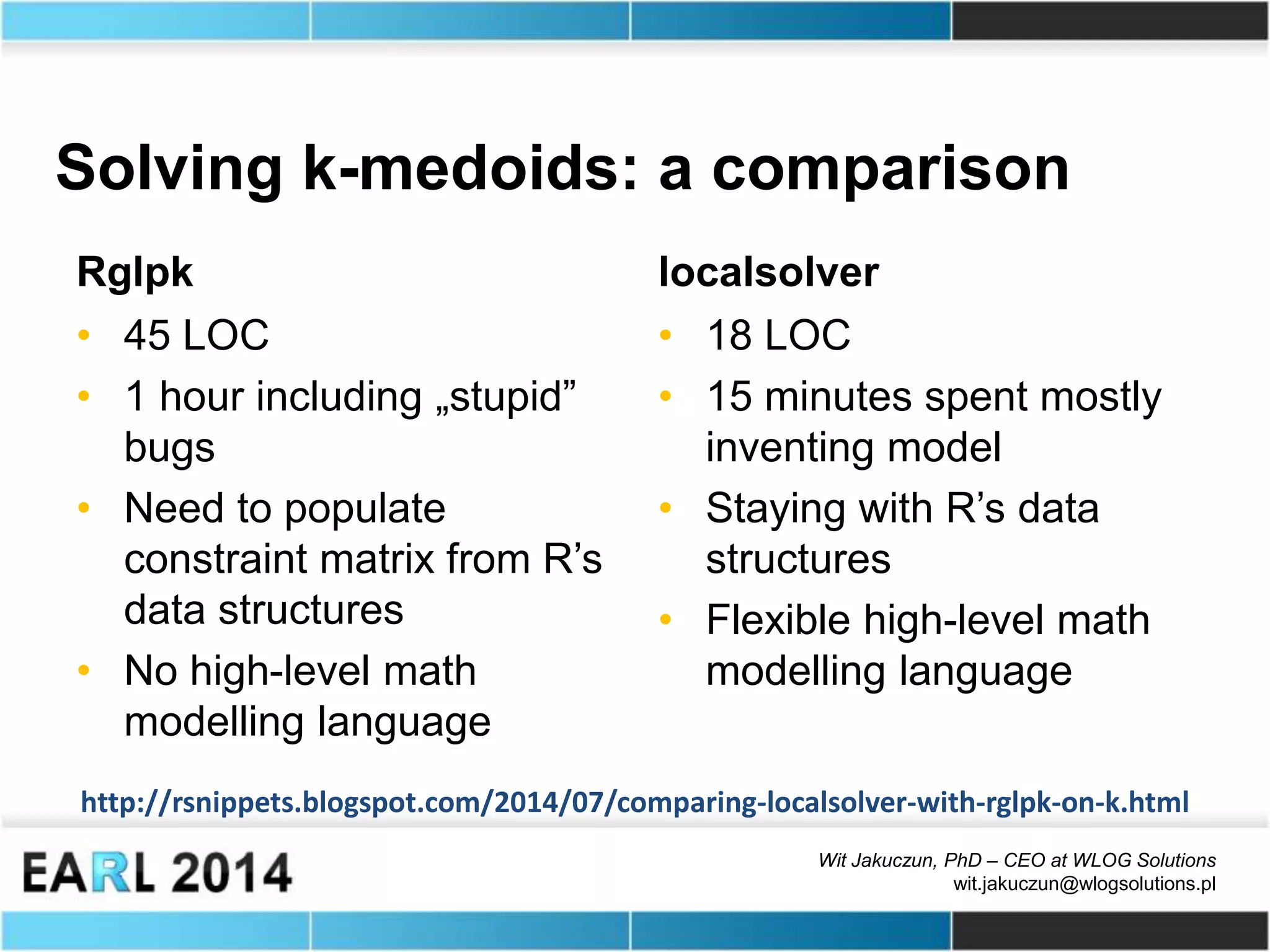

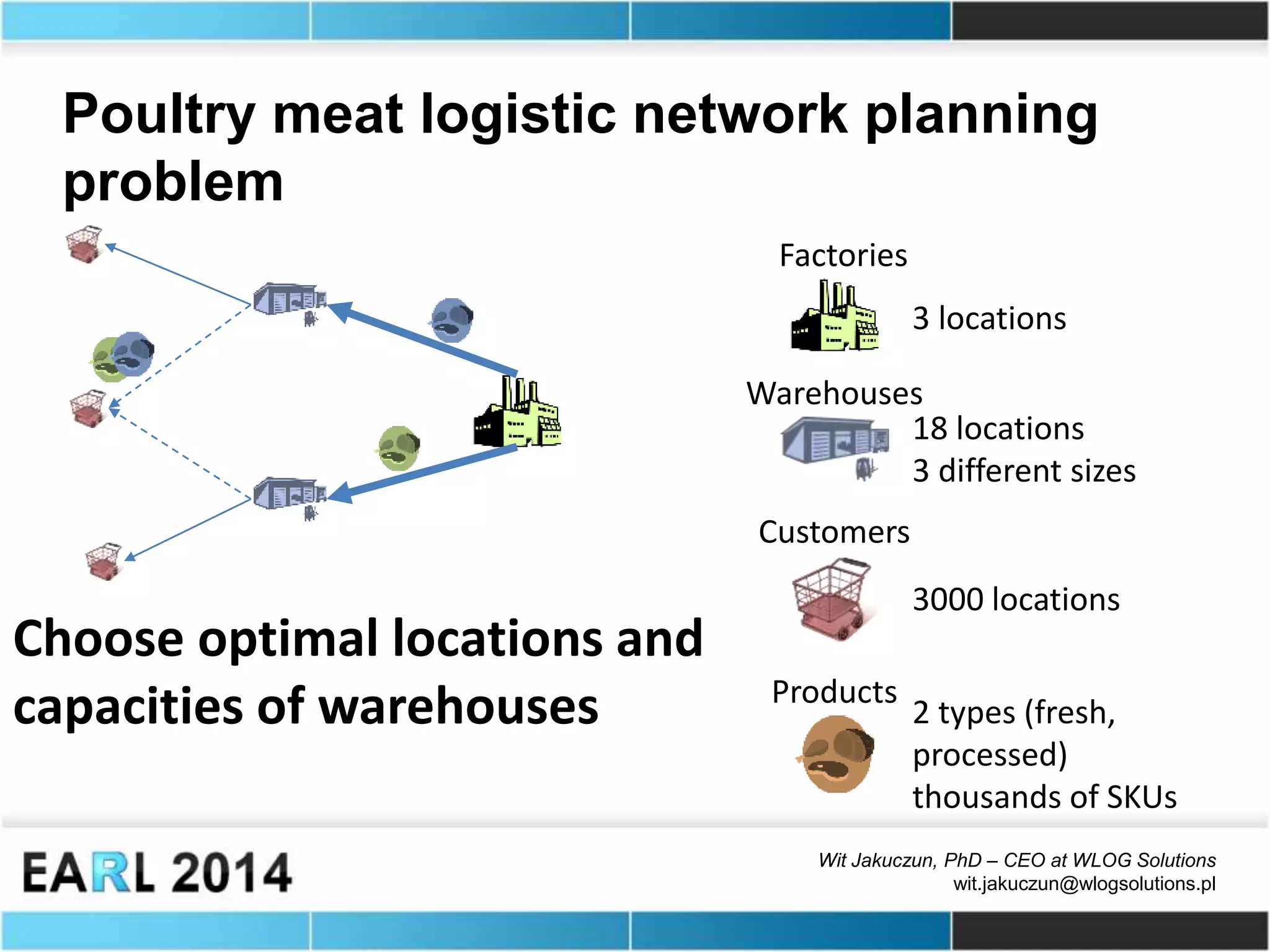

The document discusses optimization tools for R and presents a case study using the LocalSolver package. It gives an overview of the package's architecture, highlighting its high-level API, performance, and flexible modeling capabilities. It then compares LocalSolver with Rglpk on a k-medoids problem, reporting that LocalSolver requires less code and less time. Finally, it briefly describes a logistics network planning problem for a poultry producer that was solved with LocalSolver.
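
For readers unfamiliar with the k-medoids problem used in the comparison, the following is a sketch of its standard integer programming formulation. This is the textbook model, not necessarily the one used in the document. The symbols are assumptions introduced here for illustration: $n$ is the number of observations, $d_{ij}$ is the dissimilarity between observations $i$ and $j$, $y_j = 1$ if observation $j$ is chosen as a medoid, and $x_{ij} = 1$ if observation $i$ is assigned to medoid $j$.

$$
\begin{aligned}
\min_{x,\,y} \quad & \sum_{i=1}^{n} \sum_{j=1}^{n} d_{ij}\, x_{ij} \\
\text{s.t.} \quad & \sum_{j=1}^{n} x_{ij} = 1, && i = 1, \dots, n, \\
& x_{ij} \le y_j, && i, j = 1, \dots, n, \\
& \sum_{j=1}^{n} y_j = k, \\
& x_{ij},\, y_j \in \{0, 1\}, && i, j = 1, \dots, n.
\end{aligned}
$$

A linear solver interface such as Rglpk typically expects a model like this to be supplied as an explicit objective vector and constraint matrix over all $n^2 + n$ variables, which is one plausible reason a higher-level modeling API would need less code for the same problem.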