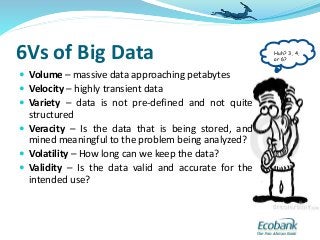

This document provides an overview of big data concepts and technologies. It discusses the 3 Vs, 4 Vs, and 6 Vs frameworks used to characterize big data. Key technologies covered include MapReduce, Hadoop, HDFS, YARN, and NoSQL databases such as MongoDB, Cassandra, HBase, and Dynamo. The Lambda architecture and the CAP theorem are also covered. Large internet companies such as Google, Amazon, and eBay are discussed as examples of organizations that pioneered big data solutions for handling massive volumes of dynamic data at high velocity.