Ps602 notes part1

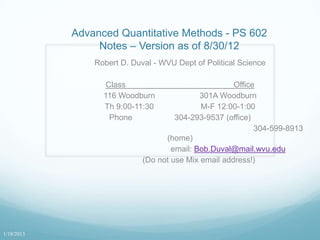

- 1. Advanced Quantitative Methods - PS 602 Notes - Version as of 8/30/12. Robert D. Duval, WVU Dept of Political Science. Class: 116 Woodburn, Th 9:00-11:30. Office: 301A Woodburn, M-F 12:00-1:00. Phone: 304-293-9537 (office), 304-599-8913 (home). Email: Bob.Duval@mail.wvu.edu (Do not use Mix email address!). 1/18/2013

- 2. Syllabus
  - Required texts
  - Additional readings
  - Computer exercises
  - Course requirements: Midterm, in class, open book (30%); Final, in class, open book (30%); Research paper (30%); Participation (10%)
  - http://www.polsci.wvu.edu/duval/ps602/602syl.htm

- 3. Prerequisites: a fundamental understanding of calculus; an informal but intuitive understanding of the mathematics of probability; a sense of humor.

- 4. Statistics is an innate cognitive skill. We all possess the ability to do rudimentary statistical analysis in our heads, intuitively. The cognitive machinery for stats is built into us, just like it is for calculus. This is part of how we process information about the world. It is not simply mysterious arcane jargon; it is simply the mysterious arcane way you already think. Much of it formalizes simple intuition. Why do we set alpha (α) to .05?

- 5. Introduction. This course is about regression analysis, the principal method in the social sciences. There are three basic parts to the course: (1) an introduction to the general model; (2) the formal assumptions and what they mean; (3) selected special topics that relate to regression and linear models.

- 6. Introduction: The General Linear Model. The General Linear Model (GLM) is a phrase used to indicate a class of statistical models which includes simple linear regression analysis. Regression is the predominant statistical tool used in the social sciences due to its simplicity and versatility. Also called: Linear Regression Analysis, Multiple Regression, Ordinary Least Squares.

- 7. Simple Linear Regression: The Basic Mathematical Model. Regression is based on the concept of the simple proportional relationship, also known as the straight line. We can express this idea mathematically! Theoretical aside: all theoretical statements of relationship imply a mathematical theoretical structure. Just because it isn't explicitly stated doesn't mean that the math isn't implicit in the language itself!

- 8. Math and language. When we speak about the world, we often have embedded implicit relationships that we are referring to. For instance: "Increasing taxes will send us into recession." "Decreasing taxes will spur economic growth."

- 9. From Language to models. The idea that reducing taxes on the wealthy will spur economic growth (or increasing taxes will harm economic growth) suggests that there is a proportional relationship between tax rates and growth in domestic product. So let's look! Disclaimer: the "models" that follow are meant to be examples. They are not "good" models, only useful ones to talk about!

- 10. GDP and Average Tax Rates: 1986-2009 Sources: (1) US Bureau of Economic Analysis, http://www.bea.gov/iTable/iTable.cfm?ReqID=9&step=1 (2) US Internal Revenue Service, http://www.irs.gov/pub/irs-soi/09in05tr.xls

- 11. The Stats: `regress gdp avetaxrate`

  | Source | SS | df | MS |
  |---|---|---|---|
  | Model | 44236671 | 1 | 44236671 |
  | Residual | 190356376 | 22 | 8652562.57 |
  | Total | 234593048 | 23 | 10199697.7 |

  Number of obs = 24; F(1, 22) = 5.11; Prob > F = 0.0340; R-squared = 0.1886; Adj R-squared = 0.1517; Root MSE = 2941.5

  | gdp | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. Interval] |
  |---|---|---|---|---|---|
  | avetaxrate | -1341.942 | 593.4921 | -2.26 | 0.034 | -2572.769 to -111.1148 |
  | _cons | 26815.88 | 7910.084 | 3.39 | 0.003 | 10411.37 to 43220.39 |
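The summary statistics in this output can be recomputed from the sums of squares and the coefficient row. The sketch below is only an illustrative check, not part of the original slides; the variable names are made up, and the inputs are copied from the rounded output, so the results agree only to the printed precision.

```python
import math

# Values copied from the `regress gdp avetaxrate` output on slide 11.
model_ss, model_df = 44236671.0, 1
resid_ss, resid_df = 190356376.0, 22
total_ss, total_df = 234593048.0, 23
coef, std_err = -1341.942, 593.4921

r_squared = model_ss / total_ss                                    # explained / total
adj_r_squared = 1 - (resid_ss / resid_df) / (total_ss / total_df)  # df-adjusted version
f_stat = (model_ss / model_df) / (resid_ss / resid_df)             # MS model / MS residual
root_mse = math.sqrt(resid_ss / resid_df)                          # sqrt of MS residual
t_stat = coef / std_err                                            # coefficient / std. error

print(round(r_squared, 4), round(adj_r_squared, 4))
print(round(f_stat, 2), round(root_mse, 1), round(t_stat, 2))
```

With a single regressor, the squared t statistic on the slope equals the model F statistic, which is why both report the same p-value (0.034).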

- 12. The Stats (cont.): the same `regress gdp avetaxrate` output as on slide 11.

- 13. But wait, there's more… What is the model? There is a directly proportional relationship between tax rates and economic growth. How about an equation? We will get back to this… Can you critique the "model"? Can we look at it differently? If higher

- 14. Effective Tax Rate and Growth in GDP: 1986-2009 Sources: (1) US Bureau of Economic Analysis, http://www.bea.gov/iTable/iTable.cfm?ReqID=9&step=1 (2) US Internal Revenue Service, http://www.irs.gov/pub/irs-soi/09in05tr.xls

- 15. `regress gdpchange avetaxrate`

  | Source | SS | df | MS |
  |---|---|---|---|
  | Model | 22.4307279 | 1 | 22.4307279 |
  | Residual | 76.5815075 | 21 | 3.64673845 |
  | Total | 99.0122354 | 22 | 4.50055615 |

  Number of obs = 23; F(1, 21) = 6.15; Prob > F = 0.0217; R-squared = 0.2265; Adj R-squared = 0.1897; Root MSE = 1.9096

  | gdpchange | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. Interval] |
  |---|---|---|---|---|---|
  | avetaxrate | .9889805 | .3987662 | 2.48 | 0.022 | .1597008 to 1.81826 |
  | _cons | -7.977765 | 5.292757 | -1.51 | 0.147 | -18.98466 to 3.029126 |

- 16. Finally…sort of: `regress gdpchange taxratechange`

  | Source | SS | df | MS |
  |---|---|---|---|
  | Model | 34.0305253 | 1 | 34.0305253 |
  | Residual | 64.9817101 | 21 | 3.09436715 |
  | Total | 99.0122354 | 22 | 4.50055615 |

  Number of obs = 23; F(1, 21) = 11.00; Prob > F = 0.0033; R-squared = 0.3437; Adj R-squared = 0.3124; Root MSE = 1.7591

  | gdpchange | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. Interval] |
  |---|---|---|---|---|---|
  | taxratechange | .2803104 | .0845261 | 3.32 | 0.003 | .1045288 to .456092 |
  | _cons | 5.414708 | .3780098 | 14.32 | 0.000 | 4.628593 to 6.200822 |

- 17. Alternate Mathematical Notation for the Line. Alternate mathematical notation for the straight line (don't ask why!):
  - 10th Grade Geometry: $y = mx + b$
  - Statistics Literature: $Y_i = a + bX_i + e_i$
  - Econometrics Literature: $Y_i = B_0 + B_1 X_i + e_i$
  - Or (like your textbook): $Y_i = B_1 + B_2 X_i + u_i$

- 18. Alternate Mathematical Notation for the Line (cont.). These are all equivalent. We simply have to live with this inconsistency. We won't use the geometric tradition, and so you just need to remember that $B_0$ and $a$ are both the same thing.

- 19. Linear Regression: the Linguistic Interpretation. In general terms, the linear model states that the dependent variable is directly proportional to the value of the independent variable. Thus if we state that some variable Y increases in direct proportion to some increase in X, we are stating a specific mathematical model of behavior: the linear model. Hence, if we say that the crime rate goes up as unemployment goes up, we are stating a simple linear model.

- 20. Linear Regression: A Graphic Interpretation. [Figure: "The Straight Line", Y plotted against X.]

- 21. The linear model is represented by a simple picture. [Figure: "Simple Linear Regression", Y plotted against X.]

- 22. The Mathematical Interpretation: The Meaning of the Regression Parameters
  - a = the intercept: the point where the line crosses the Y-axis (the value of the dependent variable when all of the independent variables = 0).
  - b = the slope: the increase in the dependent variable per unit change in the independent variable (also known as the 'rise over the run').

- 23. The Error Term. Such models do not predict behavior perfectly, so we must add a component to adjust or compensate for the errors in prediction. Having fully described the linear model, the rest of the semester (as well as several more) will be spent on the error.

- 24. The Nature of Least Squares Estimation. There is 1 essential goal and there are 4 important concerns with any OLS model.

- 25. The 'Goal' of Ordinary Least Squares. Ordinary Least Squares (OLS) is a method of finding the linear model which minimizes the sum of the squared errors. Such a model provides the best explanation/prediction of the data.

- 26. Why least squared error? Why not simply minimum error? The errors about the line sum to 0.0! Minimum absolute deviation (error) models now exist, but they are mathematically cumbersome. Try algebra with | Absolute Value | signs!

- 27. Other models are possible...
  - Best parabola...? (i.e. nonlinear or curvilinear relationships)
  - Best maximum likelihood model...?
  - Best expert system...?
  - Complex systems…? Chaos/non-linear systems models, catastrophe models, others

- 28. The Simple Linear Virtue. I think we overemphasize the linear model. It does, however, embody this rather important notion that Y is proportional to X. As noted, we can state such relationships in simple English: "As unemployment increases, so does the crime rate." "As domestic conflict increases, national leaders will seek to distract their populations by initiating foreign disputes."

- 29. The Notion of Linear Change. The linear aspect means that the same amount of increase in unemployment will have the same effect on crime at both low and high unemployment. A nonlinear change would mean that as unemployment increases, its impact upon the crime rate might increase at higher unemployment levels.

- 30. Why squared error? Because: (1) the sum of the errors expressed as deviations would be zero, just as deviations about a mean are when computing a standard deviation, and (2) some feel that big errors should be more influential than small errors. Therefore, we wish to find the values of a and b that produce the smallest sum of squared errors.

- 31. The Parameter estimates. In order to do this, we must find parameter estimates which accomplish this minimization. In calculus, if you wish to know when a function is at its minimum, you set the first derivative equal to zero. In this case we must take partial derivatives since we have two parameters (a & b) to worry about. We will look closer at this and it's not a pretty sight!

- 32. Decomposition of the error in LS

- 33. Goodness of Fit. Since we are interested in how well the model performs at reducing error, we need to develop a means of assessing that error reduction. Since the mean of the dependent variable represents a good benchmark for comparing predictions, we calculate the improvement in the prediction of $Y_i$ relative to the mean of Y (the best guess of Y with no other information).

- 34. Sum of Squares Terminology. In mathematical jargon we seek to minimize the Unexplained Sum of Squares (USS), where:

  $$USS = \sum (Y_i - \hat{Y}_i)^2 = \sum e_i^2$$

- 35. Sums of Squares. This gives us the following 'sum-of-squares' measures: Total Variation = Explained Variation + Unexplained Variation.

  $$TSS = \text{Total Sum of Squares} = \sum (Y_i - \bar{Y})^2$$

  $$ESS = \text{Explained Sum of Squares} = \sum (\hat{Y}_i - \bar{Y})^2$$

  $$USS = \text{Unexplained Sum of Squares} = \sum (Y_i - \hat{Y}_i)^2$$
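A small numerical check can make the decomposition concrete. The sketch below uses hypothetical toy data (not from the course) and the least-squares slope and intercept formulas that these notes derive on slide 42; it simply confirms that TSS = ESS + USS for a fitted line.

```python
# Hypothetical toy data, chosen only so the arithmetic is easy to follow.
X = [1, 2, 3, 4, 5]
Y = [2, 4, 5, 4, 5]
n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n

# Least-squares slope and intercept (deviation form).
b = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sum((x - x_bar) ** 2 for x in X)
a = y_bar - b * x_bar
Y_hat = [a + b * x for x in X]  # fitted values

TSS = sum((y - y_bar) ** 2 for y in Y)               # total variation about the mean
ESS = sum((yh - y_bar) ** 2 for yh in Y_hat)         # variation explained by the line
USS = sum((y - yh) ** 2 for y, yh in zip(Y, Y_hat))  # squared residuals

print(TSS, ESS, USS, ESS + USS)
```

The identity holds only when the line is the least-squares line with an intercept; for an arbitrary line the cross-product term does not vanish.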

- 36. Sums of Squares Confusion. Note: occasionally you will run across ESS and RSS, which generate confusion since they can be used interchangeably. ESS can be the error sums-of-squares, or alternatively, the estimated or explained SSQ. Likewise RSS can be the residual SSQ, or the regression SSQ. Hence the use of USS for Unexplained SSQ in this treatment. Gujarati uses ESS (Explained sum of squares) and RSS (Residual sum of squares).

- 37. The Parameter estimates. In order to find the 'best' parameters, we must find parameter estimates which accomplish this minimization, that is, the parameters that have the smallest sum of squared errors. In calculus, if you wish to know when a function is at its minimum, you take the first derivative and set it equal to 0.0. The second derivative must be positive as well, but we do not need to go there. In this case we must take partial derivatives since we have two parameters to worry about.

- 38. Deriving the Parameter Estimates. Since

  $$USS = \sum (Y_i - \hat{Y}_i)^2 = \sum e_i^2 = \sum (Y_i - a - bX_i)^2$$

  we can take the partial derivative of USS with respect to both a and b:

  $$\frac{\partial USS}{\partial a} = 2\sum (Y_i - a - bX_i)(-1), \qquad \frac{\partial USS}{\partial b} = 2\sum (Y_i - a - bX_i)(-X_i)$$

- 39. Deriving the Parameter Estimates (cont.). Which simplifies to

  $$\frac{\partial USS}{\partial a} = -2\sum (Y_i - a - bX_i) = 0, \qquad \frac{\partial USS}{\partial b} = -2\sum X_i (Y_i - a - bX_i) = 0$$

  We also set these derivatives to 0 to indicate that we are at a minimum.

- 40. Deriving the Parameter Estimates (cont.). We now add a "hat" to the parameters to indicate that the results are estimators.

  $$\frac{\partial USS}{\partial a} = -2\sum (Y_i - \hat{a} - \hat{b}X_i) = 0, \qquad \frac{\partial USS}{\partial b} = -2\sum X_i (Y_i - \hat{a} - \hat{b}X_i) = 0$$

  We also set these derivatives equal to zero.

- 41. Deriving the Parameter Estimates (cont.). Dividing through by -2 and rearranging terms, we get

  $$\sum Y_i = \hat{a}n + \hat{b}\sum X_i, \qquad \sum X_i Y_i = \hat{a}\sum X_i + \hat{b}\sum X_i^2$$

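- 42. Deriving the Parameter Estimates (cont.). We can solve these equations simultaneously to get our estimators.

  $$\hat{b}_1 = \frac{n\sum X_i Y_i - \sum X_i \sum Y_i}{n\sum X_i^2 - \left(\sum X_i\right)^2} = \frac{\sum (X_i - \bar{X})(Y_i - \bar{Y})}{\sum (X_i - \bar{X})^2}$$

  $$\hat{a} = \bar{Y} - \hat{b}_1 \bar{X}$$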

- 43. Deriving the Parameter Estimates (cont.). The estimator for a also shows that the regression line always goes through the point which is the intersection of the two means. This formula is quite manageable for bivariate regression. If there are two or more independent variables, the formula for $b_2$, etc. becomes unmanageable! See matrix algebra in POLS603!
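The derivation above can be checked numerically. The following is a minimal sketch on hypothetical toy data: it computes the slope with both the raw-sum and the deviation form of the formula, then confirms the two first-order conditions (residuals sum to zero, and residuals weighted by X sum to zero) and that the fitted line passes through the point of means.

```python
# Hypothetical toy data for illustration only.
X = [1, 2, 3, 4, 5]
Y = [2, 4, 5, 4, 5]
n = len(X)

sum_x, sum_y = sum(X), sum(Y)
sum_xy = sum(x * y for x, y in zip(X, Y))
sum_xx = sum(x * x for x in X)
x_bar, y_bar = sum_x / n, sum_y / n

# Slope from the raw-sum form of the solved normal equations.
b_raw = (n * sum_xy - sum_x * sum_y) / (n * sum_xx - sum_x ** 2)
# Slope from the equivalent deviation-from-means form.
b_dev = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sum((x - x_bar) ** 2 for x in X)
# Intercept: forces the line through (x_bar, y_bar).
a = y_bar - b_raw * x_bar

residuals = [y - a - b_raw * x for x, y in zip(X, Y)]
foc_a = sum(residuals)                              # first-order condition for a
foc_b = sum(x * e for x, e in zip(X, residuals))    # first-order condition for b

print(b_raw, b_dev, a, foc_a, foc_b)
```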

- 44. Tests of Inference: t-tests for coefficients; F-test for the entire model. Since we are interested in how well the model performs at reducing error, we need to develop a means of assessing that error reduction. Since the mean of the dependent variable represents a good benchmark for comparing predictions, we calculate the improvement in the prediction of $Y_i$ relative to the mean of Y. Remember that the mean of Y is your best guess of Y with no other information. Well, often, assuming the data is normally distributed!

- 45. T-Tests. Since we wish to make probability statements about our model, we must do tests of inference. Fortunately,

  $$\frac{B}{se_B} \sim t_{n-2}$$

  OK, so what is the $se_B$?

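- 46. Standard Errors of Estimates. These estimates have variation associated with them:

  $$se_a = \sqrt{\frac{\sum X_i^2}{n\sum x_i^2}}\; s, \qquad se_B = \frac{s}{\sqrt{\sum x_i^2}}$$

  where $x_i = X_i - \bar{X}$ is the deviation of $X_i$ from its mean and $s$ is the standard error of the regression.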
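As a rough illustration of these standard errors, the sketch below fits the bivariate line on hypothetical toy data and computes $se_a$, $se_B$, and the resulting t ratios. It assumes the usual estimate $s = \sqrt{USS/(n-2)}$ for the standard error of the regression, which the slide does not spell out.

```python
import math

# Hypothetical toy data for illustration only.
X = [1, 2, 3, 4, 5]
Y = [2, 4, 5, 4, 5]
n = len(X)
x_bar, y_bar = sum(X) / n, sum(Y) / n

sum_dev_sq = sum((x - x_bar) ** 2 for x in X)   # sum of squared deviations of X
b = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sum_dev_sq
a = y_bar - b * x_bar

USS = sum((y - a - b * x) ** 2 for x, y in zip(X, Y))
s = math.sqrt(USS / (n - 2))                    # assumed: standard error of the regression

se_b = s / math.sqrt(sum_dev_sq)
se_a = s * math.sqrt(sum(x * x for x in X) / (n * sum_dev_sq))

t_b = b / se_b   # compare with a t distribution on n - 2 degrees of freedom
t_a = a / se_a

print(se_a, se_b, t_a, t_b)
```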

- 47. This gives us the F test. The F-test tells us whether the full model is significant:

  $$F_{k-1,\,n-k} = \frac{ESS/(k-1)}{USS/(n-k)}$$

  Note that F statistics have 2 different degrees of freedom: k-1 and n-k, where k is the number of regressors in the model (counting the intercept).

- 48. More on the F-test. In addition, the F test for the entire model must be adjusted to compensate for the changed degrees of freedom. Note that F increases as n or $R^2$ increases, and decreases as k (the number of independent variables) increases.
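The overall F statistic can also be written in terms of $R^2$, since $R^2 = ESS/TSS$. The sketch below is an illustration under assumed toy values (ESS = 3.6, USS = 2.4); the function names are hypothetical. It shows the two forms agreeing, and F rising with n and falling with k when $R^2$ is held fixed.

```python
def f_from_sums_of_squares(ess, uss, n, k):
    """F statistic with k-1 and n-k degrees of freedom; k counts the intercept."""
    return (ess / (k - 1)) / (uss / (n - k))


def f_from_r2(r2, n, k):
    """The same statistic written in terms of R-squared."""
    return (r2 / (k - 1)) / ((1 - r2) / (n - k))


# Hypothetical toy values: ESS = 3.6, USS = 2.4, so R-squared = 3.6 / 6.0 = 0.6.
f_ss = f_from_sums_of_squares(3.6, 2.4, n=5, k=2)
f_r2 = f_from_r2(0.6, n=5, k=2)

# Holding R-squared fixed: more observations raise F, more regressors lower it.
f_more_obs = f_from_r2(0.6, n=10, k=2)
f_more_vars = f_from_r2(0.6, n=10, k=3)

print(f_ss, f_r2, f_more_obs, f_more_vars)
```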

- 49. F test for different Models. The F test can tell us whether two different models of the same dependent variable are significantly different, i.e. whether adding a new variable will significantly improve estimation.

  $$F = \frac{\left(R^2_{new} - R^2_{old}\right) / \text{number of new regressors}}{\left(1 - R^2_{new}\right) / df}$$

  where df = n minus the number of parameters in the new model.
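A minimal sketch of this comparison follows. The function name and the example numbers are hypothetical, invented only to show how the pieces of the formula fit together.

```python
def nested_f(r2_old, r2_new, n, params_new, new_regressors):
    """F statistic for comparing a new model against a nested old model.

    params_new is the number of parameters in the new model (including the
    intercept); new_regressors is how many variables were added.
    Returns the statistic with its numerator and denominator degrees of freedom.
    """
    df = n - params_new
    f = ((r2_new - r2_old) / new_regressors) / ((1 - r2_new) / df)
    return f, new_regressors, df


# Hypothetical example: adding one regressor raises R-squared from 0.50 to 0.60
# with n = 30 observations and 4 parameters in the new model.
f_stat, df_num, df_den = nested_f(0.50, 0.60, n=30, params_new=4, new_regressors=1)
print(f_stat, df_num, df_den)
```

The resulting value would be compared with an F distribution on (df_num, df_den) degrees of freedom.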

- 50. The correlation coefficient. A measure of how close the data points are to the regression line. It ranges between -1.0 and +1.0. It is closely related to the slope.

- 51. $R^2$ (r-square, $r^2$). The $r^2$ (or R-square) is also called the coefficient of determination.

  $$r^2 = \frac{ESS}{TSS} = 1 - \frac{USS}{TSS}$$

  It is the percent of the variation in Y explained by X. It must range between 0.0 and 1.0. An $r^2$ of .95 means that 95% of the variation about the mean of Y is "caused" (or at least explained) by the variation in X.

- 52. Observations on $r^2$. $R^2$ always increases as you add independent variables. The $r^2$ will go up even if $X_2$, or any new variable, is a completely random variable. $r^2$ is an important statistic, but it should never be seen as the focus of your analysis. The coefficient values, their interpretation, and the tests of inference are really more important. Beware of $r^2$ = 1.0!!!

- 53. The Adjusted $R^2$. Since $R^2$ always increases with the addition of a new variable, the adjusted $R^2$ compensates for added explanatory variables.

  $$\bar{R}^2 = 1 - \frac{USS/(n-k-1)}{TSS/(n-1)}$$

  Here k counts the independent variables only. Note that it may fall below 0.0 (though, since USS cannot be negative, never above 1.0)!!! Negative values indicate poorly formed models.

- 54. Comments on the adjusted R-squared. R-squared will always go up with the addition of a new variable. Adjusted r-squared will go down if the variable contributes nothing new to the explanation of the model. As a rule of thumb, if the new variable has a t-value greater than 1.0 in absolute value, it increases the adjusted r-square.
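Because $USS/TSS = 1 - R^2$, the adjusted $R^2$ can be computed from $R^2$, n, and k alone. The sketch below (the function name is hypothetical) reproduces the adjusted values printed in the three Stata outputs earlier in these notes, and shows a made-up case in which a weak model with several regressors gets a negative adjusted $R^2$.

```python
def adjusted_r2(r2, n, k):
    """Adjusted R-squared; k is the number of independent variables (no intercept)."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


# R-squared, n, and k taken from the three regressions shown on slides 11, 15, and 16.
adj_gdp = adjusted_r2(0.1886, n=24, k=1)
adj_growth = adjusted_r2(0.2265, n=23, k=1)
adj_change = adjusted_r2(0.3437, n=23, k=1)

# Hypothetical weak model: tiny R-squared, few observations, three regressors.
adj_weak = adjusted_r2(0.01, n=10, k=3)

print(round(adj_gdp, 4), round(adj_growth, 4), round(adj_change, 4), round(adj_weak, 3))
```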

- 55. The assumptions of the model. We will spend the next 7 weeks on this!

- 56. Model: The Scalar Version. The basic multiple regression model is a simple extension of the bivariate equation. By adding extra independent variables, we are creating a multiple-dimensioned space, where the model fit is some appropriate space. For instance, if there are two independent variables, we are fitting the points to a 'plane in space'. Visualizing this in more dimensions is a good trick.

- 57. The Scalar Equation. The basic linear model:

  $$Y_i = a + b_1 X_{1i} + b_2 X_{2i} + \dots + b_k X_{ki} + e_i$$

  If bivariate regression can be described as a line on a plane, multiple regression represents a k-dimensional object in a k+1 dimensional space.

- 58. The Matrix Model. We can use a different type of mathematical structure to describe the regression model, frequently called matrix or linear algebra. The multiple regression model may be easily represented in matrix terms:

  $$Y = XB + e$$

  where Y, X, B and e are all matrices of data, coefficients, or residuals.

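- 59. The Matrix Model (cont.). The matrices in $Y = XB + e$ are represented by

  $$Y = \begin{bmatrix} Y_1 \\ Y_2 \\ \vdots \\ Y_n \end{bmatrix}, \quad X = \begin{bmatrix} X_{11} & X_{12} & \dots & X_{1k} \\ X_{21} & X_{22} & \dots & X_{2k} \\ \vdots & \vdots & & \vdots \\ X_{n1} & X_{n2} & \dots & X_{nk} \end{bmatrix}, \quad B = \begin{bmatrix} B_1 \\ B_2 \\ \vdots \\ B_k \end{bmatrix}, \quad e = \begin{bmatrix} e_1 \\ e_2 \\ \vdots \\ e_n \end{bmatrix}$$

  Note that we postmultiply X by B since this order makes them conformable. Also note that $X_1$ is a column of 1's to obtain an intercept term.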
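To make the matrix form concrete, the sketch below builds X with a leading column of 1's for hypothetical toy data and solves the normal equations $(X'X)B = X'Y$. That solution formula is the standard least-squares result and is an assumption here, since the slide itself only states the model. The helper functions are illustrative plain-Python stand-ins for a linear algebra library.

```python
def transpose(m):
    return [list(row) for row in zip(*m)]


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def solve(a, rhs):
    """Solve a @ x = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    aug = [list(a[i]) + [rhs[i]] for i in range(n)]
    for col in range(n):
        # Move the row with the largest entry in this column into pivot position.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


# Hypothetical toy data; the first column of X is all 1's for the intercept.
x_values = [1, 2, 3, 4, 5]
Y = [[2], [4], [5], [4], [5]]
X = [[1, x] for x in x_values]

XtX = matmul(transpose(X), X)                   # [[n, sum X], [sum X, sum X^2]]
XtY = [row[0] for row in matmul(transpose(X), Y)]
B = solve(XtX, XtY)                             # [intercept, slope]

print(XtX, XtY, B)
```

For this bivariate case the result matches the scalar formulas for the intercept and slope; the same code path extends to more columns in X.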

- 60. Assumptions of the model: Scalar Version. The OLS model has seven fundamental assumptions. This count varies from author to author, based upon what each author thinks is a separate assumption! These assumptions form the foundation for all regression analysis. Failure of a model to conform to these assumptions frequently presents problems for estimation and inference. The "problem" may range from minor to severe! These problems, or violations of the assumptions, almost invariably arise out of substantive or theoretical problems!

- 61. Model: Scalar Version (cont.)
  1. The $e_i$'s are normally distributed.
  2. $E(e_i) = 0$
  3. $E(e_i^2) = \sigma^2$
  4. $E(e_i e_j) = 0$ for $i \neq j$
  5. The X's are nonstochastic with values fixed in repeated samples, and $\sum (X_{ik} - \bar{X}_k)^2 / n$ is a finite nonzero number.
  6. The number of observations is greater than the number of coefficients estimated.
  7. No exact linear relationship exists between any of the explanatory variables.

- 62. Model: The English Version
  - The errors have a normal distribution.
  - The residuals are homoskedastic. (The variation in the errors doesn't change across values of the independent or dependent variables.)
  - There is no serial correlation in the errors. (The errors are unrelated to their neighbors.)
  - There is no multicollinearity. (No variable is a perfect function of another variable.)
  - The X's are fixed (non-stochastic) and have some variation, but no infinite values.
  - There are more data points than unknowns.
  - The model is linear in its parameters. All modeled relationships are directly proportional.
  - OK…so it's not really English….

- 63. Model: The Matrix Version. These same assumptions expressed in matrix format are:
  1. $e \sim N(0, \Sigma)$
  2. $\Sigma = \sigma^2 I$
  3. The elements of X are fixed in repeated samples, and $(1/n)X'X$ is nonsingular and its elements are finite.

- 64. Properties of Estimators ($\hat{\theta}$). Since we are concerned with error, we will be concerned with those properties of estimators which have to do with the errors produced by the estimates. We use the symbol $\theta$ to denote a general parameter. It could represent a regression slope (B), a sample mean ($\bar{X}$), a standard deviation (s), and many other statistics or estimators based on some sample.

- 65. Types of estimator error. Estimators are seldom exactly correct due to any number of reasons, most notably sampling error and biased selection. There are several important concepts that we need to understand in examining how well estimators do their job.

- 66. Sampling error. Sampling error is simply the difference between the true value of a parameter and its estimate in any given sample:

  $$\text{Sampling Error} = \hat{\theta} - \theta$$

  This sampling error means that an estimator will vary from sample to sample, and therefore estimators have variance:

  $$Var(\hat{\theta}) = E[\hat{\theta} - E(\hat{\theta})]^2 = E(\hat{\theta}^2) - [E(\hat{\theta})]^2$$

- 67. Bias. The bias of an estimate is the difference between its expected value and its true value. If the estimator is always low (or high) then the estimator is biased. An estimator is unbiased if $E(\hat{\theta}) = \theta$, and

  $$\text{Bias} = E(\hat{\theta}) - \theta$$

- 68. Mean Squared Error. The mean square error (MSE) is different from the estimator's variance in that the variance measures dispersion about the estimated parameter while mean squared error measures the dispersion about the true parameter.

  $$\text{Mean square error} = E(\hat{\theta} - \theta)^2$$

  If the estimator is unbiased then the variance and MSE are the same.

- 69. Mean Squared Error (cont.). The MSE is important for time series and forecasting since it allows for both bias and efficiency. For instance:

  $$MSE = \text{variance} + (\text{bias})^2$$

  These concepts lead us to look at the properties of estimators. Estimators may behave differently in large and small samples, so we look at both small sample and large (asymptotic) sample properties.
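The decomposition of MSE into variance plus squared bias can be checked on any set of estimates. The sketch below uses a handful of made-up estimates of a parameter whose true value is assumed to be 5.0, treating them as the full set of equally likely outcomes of the estimator.

```python
# Hypothetical: the true parameter and five estimates from repeated samples.
true_theta = 5.0
estimates = [4.8, 5.1, 5.3, 4.9, 5.4]
m = len(estimates)

mean_est = sum(estimates) / m                             # stands in for E(theta-hat)
bias = mean_est - true_theta                              # E(theta-hat) - theta
variance = sum((t - mean_est) ** 2 for t in estimates) / m    # dispersion about the estimator's mean
mse = sum((t - true_theta) ** 2 for t in estimates) / m       # dispersion about the true value

print(bias, variance, mse, variance + bias ** 2)
```

If the bias were zero, the last two printed values would both equal the variance.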

- 70. Small Sample Properties. These are the ideal properties. We desire these to hold: unbiasedness; efficiency; Best Linear Unbiased Estimator. If the small sample properties hold, then by extension, the large sample properties hold.

- 71. Bias. An estimator is unbiased if $E(\hat{\theta}) = \theta$. In other words, the average value of the estimator in repeated sampling equals the true parameter. Note that whether an estimator is biased or not implies nothing about its dispersion.

- 72. Bias. [Figure: probability (Prob) plotted against X for two curves, labeled "Normal (s=1.0)" and "Series3".]

- 73. Efficiency. An estimator is efficient if it is unbiased and its variance is less than that of any other unbiased estimator of the parameter:
  - $\hat{\theta}$ is unbiased;
  - $Var(\hat{\theta}) \le Var(\tilde{\theta})$, where $\tilde{\theta}$ is any other unbiased estimator of $\theta$.

  There might be instances in which we might choose a biased estimator, if it has a smaller variance.

- 74. Efficiency. [Figure: probability (Prob) plotted against X for two curves, labeled "Normal (s=1.0)" and "Normal (s=2.0)".] Series 1 (s=1.0) is more efficient than Series 2 (s=2.0).

- 75. BLUE (Best Linear Unbiased Estimate). An estimator $\hat{\theta}$ is described as a BLUE estimator if it:
  - is a linear function;
  - is unbiased;
  - satisfies $Var(\hat{\theta}) \le Var(\tilde{\theta})$, where $\tilde{\theta}$ is any other linear unbiased estimator of $\theta$.

- 76. What is a linear estimator? A linear estimator looks like the formula for a straight line:

  $$\hat{\theta} = a_1 x_1 + a_2 x_2 + a_3 x_3 + \dots + a_n x_n$$

  The linearity referred to is the linearity in the parameters. Note that the sample mean is an example of a linear estimator:

  $$\bar{X} = \frac{1}{n}X_1 + \frac{1}{n}X_2 + \frac{1}{n}X_3 + \dots + \frac{1}{n}X_n$$

- 77. BLUE is Bueno. If your estimator (e.g. the regression B) is the BLUE estimator, then you have a very good estimator relative to other regression-style estimators. The problem is that if certain assumptions are violated, then OLS may no longer be the "best" estimator. There might be a better one! You can still hope that the large sample properties hold though!

- 78. Asymptotic (Large Sample) Properties: asymptotic unbiasedness; consistency; asymptotic efficiency.

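- 79. Asymptotic bias. An estimator is asymptotically unbiased if

  $$\lim_{n \to \infty} E(\hat{\theta}_n) = \theta$$

  As the sample size gets larger, the expected value of the estimator gets closer to the true value. For instance:

  $$S^2 = \frac{\sum (X_i - \bar{X})^2}{n} \quad \text{and} \quad E(S^2) = \sigma^2\left(1 - \frac{1}{n}\right)$$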
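The expectation of $S^2$ can be verified exactly for a small discrete population by enumerating every possible sample. The sketch below assumes a hypothetical population (the six faces of a fair die) sampled with replacement; it is meant only to illustrate how the bias factor $(1 - 1/n)$ shrinks as n grows.

```python
from itertools import product

# Hypothetical population: faces of a fair die, each equally likely.
population = [1, 2, 3, 4, 5, 6]
mu = sum(population) / len(population)
sigma2 = sum((v - mu) ** 2 for v in population) / len(population)  # true variance


def expected_s2(n):
    """Exact E(S^2) over all equally likely samples of size n drawn with replacement."""
    total = 0.0
    count = 0
    for sample in product(population, repeat=n):
        x_bar = sum(sample) / n
        total += sum((v - x_bar) ** 2 for v in sample) / n  # S^2 with divisor n
        count += 1
    return total / count


for n in (2, 3, 4):
    # The two printed values should agree: E(S^2) and sigma^2 * (1 - 1/n).
    print(n, expected_s2(n), sigma2 * (1 - 1 / n))
```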

- 80. Consistency. The point at which a distribution collapses is called the probability limit (plim). If the bias and variance both decrease toward zero as the sample size gets larger, the estimator is consistent.

  $$\lim_{n \to \infty} P\left(|\hat{\theta}_n - \theta| < \delta\right) = 1, \qquad \delta > 0$$

  Usually noted by $\text{plim}\ \hat{\theta}_n = \theta$.

- 81. Asymptotic efficiency. An estimator is asymptotically efficient if:
  - it has an asymptotic distribution with finite mean and variance;
  - it is consistent;
  - no other estimator has smaller asymptotic variance.

- 82. Rifle and Target Analogy. Small sample properties:
  - Bias: the shots cluster around some spot other than the bull's-eye.
  - Efficient: when one rifle's cluster is smaller than another's.
  - BLUE: smallest scatter for rifles of a particular type of simple construction.

- 83. Rifle and Target Analogy (cont.). Asymptotic properties: think of increased sample size as getting closer to the target. Asymptotic unbiasedness means that as the sample size gets larger, the center of the point cluster moves closer to the target center. With consistency, the point cluster moves closer to the target center and the cluster shrinks in size. If it is asymptotically efficient, then no other rifle has a smaller cluster that is closer to the true center. When all of the assumptions of the OLS model hold, its estimators are unbiased, minimum variance, and BLUE.

- 84. How we will approach the question: Definition; Implications; Causes; Tests; Remedies.

- 85. Non-zero Mean for the residuals (Definition). Definition: the residuals have a mean other than 0.0. Note that this refers to the true residuals. Hence the estimated residuals have a mean of 0.0, while the true residuals have a non-zero mean.

- 86. Non-zero Mean for the residuals (Implications). The true regression line is

  $$Y_i = a + bX_i + e_i$$

  Therefore the intercept is biased. The slope, b, is unbiased. There is also no way of separating out a and the mean of the errors.

- 87. Non-zero Mean for the residuals (Causes, Tests, Remedies). Causes: non-zero means result from some form of specification error. Something has been omitted from the model which accounts for that mean in the estimation. We will discuss Tests and Remedies when we look closely at specification errors.

- 88. Non-normally distributed errors: Definition. The residuals are not NID(0, $\sigma^2$).

  Normality Tests Section

  | Assumption | Value | Probability | Decision (5%) |
  |---|---|---|---|
  | Skewness | 5.1766 | 0.000000 | Rejected |
  | Kurtosis | 4.6390 | 0.000004 | Rejected |
  | Omnibus | 48.3172 | 0.000000 | Rejected |

  [Figure: "Histogram of Residuals of rate90", Count plotted against Residuals of rate90.]

- 89. Non-normally distributed errors: Implications. The existence of residuals which are not normally distributed has several implications. First, it implies that the model is to some degree misspecified. A collection of truly stochastic disturbances should have a normal distribution. The central limit theorem states that as the number of random variables increases, the distribution of their sum tends to be a normal distribution. (Distribution theory is beyond the scope of this course.)

- 90. Non-normally distributed errors: Implications (cont.). If the residuals are not normally distributed, then the estimators of a and b are also not normally distributed. Estimates are, however, still BLUE: they are unbiased and have minimum variance among linear unbiased estimators. They are no longer efficient among all unbiased estimators, even though they are asymptotically unbiased and consistent. It is only our hypothesis tests which are suspect.

- 91. Non-normally distributed errors: Causes. Generally caused by a misspecification error, usually an omitted variable. Can also result from outliers in the data or the wrong functional form.

- 92. Non-normally distributed errors: Tests for non-normality. Chi-square goodness of fit: since the cumulative normal frequency distribution has a chi-square distribution, we can test for the normality of the error terms using a standard chi-square statistic. We take our residuals, group them, and count how many occur in each group, along with how many we would expect in each group.

- 93. Non-normally distributed errors: Tests for non-normality (cont.). We then calculate the simple $\chi^2$ statistic:

  $$\chi^2 = \sum_{i=1}^{k} \frac{(O_i - E_i)^2}{E_i}$$

  This statistic has (N-1) degrees of freedom, where N is the number of classes (k in the sum above).

- 94. Non-normally distributed errors: Tests for non-normality (cont.). Jarque-Bera test: this test examines both the skewness and kurtosis of a distribution to test for normality.

  $$JB = n\left[\frac{S^2}{6} + \frac{(K-3)^2}{24}\right]$$

  where S is the skewness and K is the kurtosis of the residuals. JB has a $\chi^2$ distribution with 2 df.
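A small sketch of the Jarque-Bera calculation follows. It assumes the usual moment-based definitions of skewness ($m_3 / m_2^{3/2}$) and kurtosis ($m_4 / m_2^2$), which the slide does not spell out; the function name and the example residuals are hypothetical.

```python
def jarque_bera(residuals):
    """Return (skewness, kurtosis, JB) using moment-based definitions (an assumption)."""
    n = len(residuals)
    mean = sum(residuals) / n
    m2 = sum((e - mean) ** 2 for e in residuals) / n   # second central moment
    m3 = sum((e - mean) ** 3 for e in residuals) / n   # third central moment
    m4 = sum((e - mean) ** 4 for e in residuals) / n   # fourth central moment
    skew = m3 / m2 ** 1.5
    kurt = m4 / m2 ** 2          # equals 3 for a normal distribution
    jb = n * (skew ** 2 / 6 + (kurt - 3) ** 2 / 24)
    return skew, kurt, jb


# Hypothetical residuals: symmetric, so the skewness term contributes nothing.
skew, kurt, jb = jarque_bera([-2, -1, 0, 1, 2])
print(skew, kurt, jb)
```

The statistic would be compared with a chi-square critical value on 2 degrees of freedom (about 5.99 at the 5% level); with only five observations the asymptotic approximation is of course very rough.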

- 95. Non-normally distributed errors: Remedies. Try to modify your theory. Omitted variable? Outlier needing specification? Modify your functional form by taking some variance-transforming step such as square root, exponentiation, logs, etc. Be mindful that you are changing the nature of the model. Bootstrap it! (From the shameless commercial division!)

- 96. Multicollinearity: Definition. Multicollinearity is the condition where the independent variables are related to each other. Causation is not implied by multicollinearity. As any two (or more) variables become more and more closely correlated, the condition worsens, and 'approaches singularity'. Since the X's are fixed (or they are supposed to be anyway), this is a sample problem. Since multicollinearity is almost always present, it is a problem of degree, not merely existence.

- 97. Multicollinearity: Implications. Consider the following cases. A. No multicollinearity: the regression would appear to be identical to separate bivariate regressions. This produces variances which are biased upward (too large), making t-tests too small. The coefficients are unbiased. For multiple regression this satisfies the assumption.

- 98. Multicollinearity: Implications (cont.) B. Perfect multicollinearity: Some variable $X_i$ is a perfect linear combination of one or more other variables $X_j$; therefore $X'X$ is singular, and $|X'X| = 0$. This is matrix algebra notation. It means that one variable is a perfect linear function of another (e.g., $X_2 = X_1 + 3.2$). The effects of $X_1$ and $X_2$ cannot be separated. The standard errors for the B's are infinite. A model cannot be estimated under such circumstances. The computer dies. And takes your model down with it…

- 99. Multicollinearity: Implications (cont.) C. A high degree of multicollinearity: When the independent variables are highly correlated, the variances and covariances of the $B_i$'s are inflated (t-ratios are lower) and $R^2$ tends to be high as well. The B's are unbiased (but perhaps useless, because their large variances make them imprecise). In fact they are still BLUE. OLS estimates tend to be sensitive to small changes in the data. Relevant variables may be discarded.

- 100. Multicollinearity: Causes Sampling mechanism: a poorly constructed design and measurement scheme, or a limited range (too small a sample range). Constrained theory ($X_1$ does affect $X_2$), e.g., electricity consumption = wealth + house size. Statistical model specification: adding polynomial terms or trend indicators. Too many variables in the model: the model is over-determined. Theoretical specification is wrong: inappropriate construction of theory or even measurement, as when your dependent variable is constructed using an independent variable.

- 101. Multicollinearity: Tests/Indicators $|X'X|$ approaches 0.0. When the variance-covariance matrix is singular, its determinant is 0.0. Since the determinant is a function of variable scale, this measure doesn't help a whole lot. We could, however, use the determinant of the correlation matrix and therefore bound the range from 0.0 to 1.0.

- 102. Multicollinearity: Tests/Indicators (cont.) Tolerance: If the tolerance equals 1, the variables are unrelated. If $TOL_j = 0$, then they are perfectly correlated. To calculate, regress each independent variable on all the other independent variables and take the $R_j^2$ from that regression. Variance Inflation Factors (VIFs): $VIF_j = \frac{1}{1 - R_j^2}$. Tolerance: $TOL_j = 1 - R_j^2 = \frac{1}{VIF_j}$.

- 103. Interpreting VIFs No multicollinearity produces VIFs = 1.0. If the VIF is greater than 10.0, then multicollinearity is probably severe: a VIF of 10 means that 90% of the variance of $X_j$ is explained by the other X's. In small samples, a VIF of about 5.0 may indicate problems.
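
As a rough illustration of the tolerance and VIF arithmetic, the sketch below uses only two hypothetical regressors. With exactly two X's, the auxiliary $R_j^2$ equals their squared correlation, so no multiple regression is needed. The data and function names are illustrative assumptions.

```python
def pearson_r(x, y):
    """Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def r2_from_vif(vif):
    """Share of X_j's variance explained by the other X's, given its VIF."""
    return 1 - 1 / vif

# Two hypothetical, fairly collinear regressors.
x1 = [1, 2, 3, 4, 5, 6]
x2 = [2, 1, 4, 3, 6, 5]

r = pearson_r(x1, x2)
tol = 1 - r ** 2      # tolerance: TOL_j = 1 - R_j^2
vif = 1 / tol         # VIF_j = 1 / (1 - R_j^2)
print(f"r = {r:.3f}, tolerance = {tol:.3f}, VIF = {vif:.2f}")
print(f"A VIF of 10 corresponds to R_j^2 = {r2_from_vif(10):.2f}")
```

With more than two regressors, $R_j^2$ has to come from regressing $X_j$ on all the other X's, as the slide describes.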

- 104. Multicollinearity: Tests/Indicators (cont.) $R^2$ deletes: try all possible models of the X's, and include or exclude variables based on small changes in $R^2$ with the inclusion/omission of the variables (taken one at a time). F is significant, but no t value is. Adjusted $R^2$ declines with a new variable. Multicollinearity is of concern when either $r_{X_1 X_2} > r_{X_1 Y}$ or $r_{X_1 X_2} > r_{X_2 Y}$.

- 105. Multicollinearity: Tests/Indicators (cont.) I would avoid the rule of thumb $r_{X_1 X_2} > .6$. Betas are > 1.0 or < -1.0. Sign changes occur with the introduction of a new variable. The $R^2$ is high, but few t-ratios are. Eigenvalues and Condition Index: if this topic is beyond Gujarati, it's beyond me.

- 106. Multicollinearity: Remedies Increase sample size Pooled cross-sectional time series Thereby introducing all sorts of new problems! Omit Variables Scale Construction/Transformation Factor Analysis Constrain the estimation. Such as the case where you can set the value of one coefficient relative to another. January 18, 2013 Slide 106

- 107. Multicollinearity: Remedies (cont.) Change design (LISREL maybe, or pooled cross-sectional time series), thereby introducing all sorts of new problems! Ridge regression: this technique introduces a small amount of bias into the coefficients to reduce their variance. Ignore it: report adjusted $R^2$ and claim it warrants retention in the model.

- 108. Heteroskedasticity: Definition Heteroskedasticity is a problem where the error terms do not have a constant variance: $E(e_i^2) = \sigma_i^2$. That is, they may have a larger variance when values of some $X_i$ (or the $Y_i$'s themselves) are large (or small).

- 109. Heteroskedasticity: Definition This often gives the plots of the residuals by the dependent variable or appropriate independent variables a characteristic fan or funnel shape. [Figure: example scatterplot; axis tick labels omitted]

- 110. Heteroskedasticity: Implications The regression B's are unbiased. But they are no longer the best estimator. They are not BLUE (not minimum variance - hence not efficient). They are, however, consistent. January 18, 2013 Slide 110

- 111. Heteroskedasticity: Implications (cont.) The estimators are not asymptotically efficient, and their estimated variances are biased. So confidence intervals are invalid. What do we know about the bias of the variance? If $Y_i$ is positively correlated with $e_i$, the bias is negative (hence t values will be too large). With positive bias, many t values will be too small.

- 112. Heteroskedasticity: Implications (cont.) Types of Heteroskedasticity There are a number of types of heteroskedasticity. Additive Multiplicative ARCH (Autoregressive conditional heteroskedastic) - a time series problem. January 18, 2013 Slide 112

- 113. Heteroskedasticity: Causes It may be caused by: Model misspecification - omitted variable or improper functional form. Learning behaviors across time Changes in data collection or definitions. Outliers or breakdown in model. Frequently observed in cross sectional data sets where demographics are involved (population, GNP, etc). January 18, 2013 Slide 113

- 114. Heteroskedasticity: Tests Informal Methods Plot the data and look for patterns! Plot the residuals by the predicted dependent variable (Resids on the Y-axis) Plotting the squared residuals actually makes more sense, since that is what the assumption refers to! Homoskedasticity will be a random scatter horizontally across the plot. January 18, 2013 Slide 114

- 115. Heteroskedasticity: Tests (cont.) Park test: As an exploratory test, log the squared residuals and regress them on the logged values of the suspected independent variable: $\ln u_i^2 = \ln \sigma^2 + B \ln X_i + v_i = a + B \ln X_i + v_i$. If the B is significant, then heteroskedasticity may be a problem.

- 116. Heteroskedasticity: Tests (cont.) Glejser test: This test is quite similar to the Park test, except that it uses the absolute values of the residuals and a variety of transformed X's: $|u_i| = B_1 + B_2 X_i + v_i$; $|u_i| = B_1 + B_2 \sqrt{X_i} + v_i$; $|u_i| = B_1 + B_2 \frac{1}{X_i} + v_i$; $|u_i| = B_1 + B_2 \frac{1}{\sqrt{X_i}} + v_i$; $|u_i| = \sqrt{B_1 + B_2 X_i} + v_i$; $|u_i| = \sqrt{B_1 + B_2 X_i^2} + v_i$. A significant $B_2$ indicates heteroskedasticity. Easy test, but has problems.

- 117. Heteroskedasticity: Tests (cont.) Goldfeld-Quandt test: Order the n cases by the X that you think is correlated with $e_i^2$. Drop a section of c cases out of the middle (one-fifth is a reasonable number). Run separate regressions on both upper and lower samples.

- 118. Heteroskedasticity: Tests (cont.) Goldfeld-Quandt test (cont.) Do an F-test for the difference in error variances: $F_{\left(\frac{n-c-2k}{2},\ \frac{n-c-2k}{2}\right)} = \frac{s_{ee1}}{s_{ee2}}$. F has $(n - c - 2k)/2$ degrees of freedom for each.
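
The Goldfeld-Quandt steps can be sketched for a bivariate model as follows. This is a minimal illustration under stated assumptions: the data are made up (the error spread grows with X), $n - c$ is taken to be even, and the residual variance from the high-X group is placed in the numerator. The notes do not say which group is $s_{ee1}$, so that choice is an assumption.

```python
def ols_rss(x, y):
    """Residual sum of squares from a bivariate OLS fit of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    a = my - b * mx
    return sum((yi - a - b * xi) ** 2 for xi, yi in zip(x, y))

def goldfeld_quandt(x, y, c, k=2):
    """Return (F, df) after ordering by x and dropping c middle cases."""
    pairs = sorted(zip(x, y))            # order the cases by X
    n = len(pairs)
    m = (n - c) // 2                     # cases kept in each outer group
    low, high = pairs[:m], pairs[n - m:]
    rss_low = ols_rss(*zip(*low))
    rss_high = ols_rss(*zip(*high))
    df = m - k                           # equals (n - c - 2k) / 2
    return (rss_high / df) / (rss_low / df), df

# Hypothetical data: errors alternate in sign and grow with x.
x = list(range(1, 21))
y = [2 + 3 * xi + 0.1 * xi * (1 if xi % 2 else -1) for xi in x]

F, df = goldfeld_quandt(x, y, c=4)
print(f"F = {F:.2f} with ({df}, {df}) degrees of freedom")
```

An F well above the critical value (about 4.28 for (6, 6) df at the .05 level) points toward heteroskedasticity related to the ordering variable.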

- 119. Heteroskedasticity: Tests (cont.) Breusch-Pagan-Godfrey test (Lagrange multiplier test): Estimate the model with OLS. Obtain $\tilde{\sigma}^2 = \sum \hat{u}_i^2 / n$. Construct the variables $p_i = \hat{u}_i^2 / \tilde{\sigma}^2$.

- 120. Heteroskedasticity: Tests (cont.) Breusch-Pagan-Godfrey test (cont.) Regress $p_i$ on the X (and other?!) variables: $p_i = \alpha_1 + \alpha_2 Z_{2i} + \alpha_3 Z_{3i} + \dots + \alpha_m Z_{mi} + v_i$. Calculate $\Theta = \frac{1}{2}(ESS)$. Note that $\Theta \sim \chi^2_{m-1}$.
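
The Breusch-Pagan-Godfrey steps might look like this for the simplest case, where the only Z variable is the single regressor X (so the statistic has 1 df). The data and function names are hypothetical.

```python
def ols(x, y):
    """Bivariate OLS; returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b

def bpg_statistic(x, y):
    """One-half the explained sum of squares from regressing p_i on x."""
    n = len(x)
    a, b = ols(x, y)                                       # estimate model with OLS
    u2 = [(yi - a - b * xi) ** 2 for xi, yi in zip(x, y)]  # squared residuals
    sigma2 = sum(u2) / n                                   # sum of u_i^2 over n
    p = [v / sigma2 for v in u2]                           # p_i = u_i^2 / sigma^2
    g0, g1 = ols(x, p)                                     # regress p_i on Z (= X here)
    p_bar = sum(p) / n
    ess = sum((g0 + g1 * xi - p_bar) ** 2 for xi in x)     # explained sum of squares
    return ess / 2

# Hypothetical data: errors alternate in sign and grow with x.
x = list(range(1, 21))
y = [2 + 3 * xi + 0.1 * xi * (1 if xi % 2 else -1) for xi in x]

theta = bpg_statistic(x, y)
print(f"BPG statistic = {theta:.2f} (compare with chi-square, 1 df)")
```

With more Z variables the auxiliary regression becomes a multiple regression, and the degrees of freedom rise to $m - 1$.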

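- 121. Heteroskedasticity: Tests (cont.) White's generalized heteroskedasticity test: Estimate the model with OLS and obtain the residuals. Run the following auxiliary regression of the squared residuals on the X's, their squares, and their cross-products; for two regressors, $\hat{u}_i^2 = \alpha_1 + \alpha_2 X_{2i} + \alpha_3 X_{3i} + \alpha_4 X_{2i}^2 + \alpha_5 X_{3i}^2 + \alpha_6 X_{2i} X_{3i} + v_i$. Higher powers may also be used, along with more X's.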

- 122. Heteroskedasticity: Tests (cont.) White's generalized heteroskedasticity test (cont.) Note that $n R^2 \sim \chi^2$, where $R^2$ comes from the auxiliary regression. The degrees of freedom is the number of slope coefficients estimated in the auxiliary regression (the constant is excluded).

- 123. Heteroskedasticity: Remedies GLS: We will cover this after autocorrelation. Weighted Least Squares: $s_i^2$ is a consistent estimator of $\sigma_i^2$; use the same formula (BLUE) to get $a$ and $\beta$.

- 124. Iteratively weighted least squares (IWLS) 1. Obtain estimates of $e_i^2$ using OLS: $Y_i = a + bX_i + e_i$. 2. Use these to get "1st round" estimates of $\sigma_i$. 3. Using the formula above, replace $w_i$ with $1/s_i$ and obtain new estimates for $a$ and $\beta$. 4. Adjust the data: $Y_i^* = Y_i / s_i$, $X_i^* = X_i / s_i$. 5. Use these to re-estimate $Y_i^* = \alpha^* + \beta^* X_i^* + e_i^*$. 6. Repeat steps 3-5 until $a$ and $\beta$ converge.

- 125. White's corrected standard errors For normal OLS, $Var(\hat{B}_1) = \frac{\sigma^2}{TSS_x}$. We can restate this as $Var(\hat{B}_1) = \frac{\sum_{i=1}^{n} (x_i - \bar{x})^2 \sigma_i^2}{TSS_x^2}$. Since $TSS_x = \sum_{i=1}^{n} (x_i - \bar{x})^2$, this is the same when $\sigma_i^2 = \sigma^2$.

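- 126. White's corrected standard errors (cont.) White's solution is to use the robust estimator $\widehat{Var}(\hat{B}_j) = \frac{\sum_{i=1}^{n} \hat{r}_{ij}^2 \hat{u}_i^2}{RSS_j^2}$, where $\hat{r}_{ij}$ is the $i$th residual from regressing $X_j$ on the other independent variables and $RSS_j$ is the residual sum of squares from that regression. When you see robust standard errors, it usually refers to this estimator.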
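
For a single regressor the two variance formulas are easy to compare by hand, because $\hat{r}_{ij}$ reduces to $x_i - \bar{x}$ and $RSS_j$ to $TSS_x$. The sketch below assumes that bivariate case and uses hypothetical residuals whose spread grows with x. The conventional estimate uses $\hat{\sigma}^2 = \sum \hat{u}_i^2 / (n-2)$.

```python
def slope_variances(x, resid):
    """Return (conventional, robust) variance estimates for the slope."""
    n = len(x)
    mx = sum(x) / n
    dev2 = [(xi - mx) ** 2 for xi in x]           # (x_i - x_bar)^2
    tss_x = sum(dev2)
    sigma2 = sum(u * u for u in resid) / (n - 2)  # usual error variance estimate
    conventional = sigma2 / tss_x
    robust = sum(d * u * u for d, u in zip(dev2, resid)) / tss_x ** 2
    return conventional, robust

# Hypothetical residuals that fan out as x increases.
x = [1, 2, 3, 4, 5, 6]
resid = [0.1, -0.2, 0.3, -0.6, 0.9, -1.2]

conv, rob = slope_variances(x, resid)
print(f"conventional SE = {conv ** 0.5:.4f}, robust SE = {rob ** 0.5:.4f}")
```

Statistical packages often apply small-sample adjustments to the robust estimator, so their output may differ slightly from this plain version.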

- 127. Obtaining robust errors In Stata, just add `, r` to the regress command: `regress approval unemrate` becomes `regress approval unemrate, r`.

- 128. Autocorrelation: Definition Autocorrelation is simply the presence of correlation between adjacent (successive) residuals. If a residual is negative (or positive), then its neighbors tend to also be negative (or positive). Most often autocorrelation is between adjacent observations; however, lagged or seasonal patterns can also occur. Autocorrelation is usually a function of ordering by time, but it can occur for other orderings as well, such as firm or state size.

- 129. Autocorrelation: Definition (cont.) The assumption violated is $E(e_i e_j) = 0$ for $i \neq j$. This means that the Pearson's r (correlation coefficient) between the residuals from OLS and the same residuals lagged one period (or more) is non-zero: $E(e_i e_j) \neq 0$.

- 130. Autocorrelation: Definition (cont.) Most autocorrelation is what we call 1st order autocorrelation, meaning that the residuals are related to their contiguous values. Autocorrelation can be rather complex, producing counterintuitive patterns and correlations. January 18, 2013 Slide 130

- 131. Autocorrelation: Definition (cont.) Types of Autocorrelation Autoregressive processes Moving Averages January 18, 2013 Slide 131

- 132. Autocorrelation: Definition (cont.) Autoregressive processes AR(p): The residuals are related to their preceding values: $e_t = \phi e_{t-1} + u_t$. This is classic 1st order autocorrelation.

- 133. Autocorrelation: Definition (cont.) Autoregressive processes (cont.) In 2nd order autocorrelation the residuals are related to their t-2 values as well: $e_t = \phi_1 e_{t-1} + \phi_2 e_{t-2} + u_t$. Larger order processes may occur as well: $e_t = \phi_1 e_{t-1} + \phi_2 e_{t-2} + \dots + \phi_p e_{t-p} + u_t$.

- 134. Autocorrelation: Definition (cont.) Moving average processes MA(q): $e_t = u_t + \theta u_{t-1}$. The error term is a function of some random error plus a portion of the previous random error.

- 135. Autocorrelation: Definition (cont.) Moving average processes (cont.) Higher order processes for MA(q) also exist: $e_t = u_t + \theta_1 u_{t-1} + \theta_2 u_{t-2} + \dots + \theta_q u_{t-q}$. The error term is a function of some random error plus portions of the previous random errors.

- 136. Autocorrelation: Definition (cont.) Mixed processes ARMA(p,q): $e_t = \phi_1 e_{t-1} + \phi_2 e_{t-2} + \dots + \phi_p e_{t-p} + u_t + \theta_1 u_{t-1} + \theta_2 u_{t-2} + \dots + \theta_q u_{t-q}$. The error term is a complex function of both autoregressive and moving average processes.

- 137. Autocorrelation: Definition (cont.) There are substantive interpretations that can be placed on these processes. AR processes represent shocks to systems that have long-term memory. MA processes are quick shocks to a system that can handle the process 'efficiently,' having only short-term memory.

- 138. Autocorrelation: Implications Coefficient estimates are unbiased, but the estimates are not BLUE The variances are often greatly underestimated (biased small) Hence hypothesis tests are exceptionally suspect. In fact, strongly significant t-tests (P < .001) may well be insignificant once the effects of autocorrelation are removed. January 18, 2013 Slide 138

- 139. Autocorrelation: Causes Specification error. Omitted variable, e.g., inflation. Wrong functional form. Lagged effects. Data transformations: interpolation of missing data, differencing.

- 140. Autocorrelation: Tests Observation of residuals Graph/plot them! Runs of signs Geary test January 18, 2013 Slide 140

- 141. Autocorrelation: Tests (cont.) Durbin-Watson d: $d = \frac{\sum_{t=2}^{n} (\hat{u}_t - \hat{u}_{t-1})^2}{\sum_{t=1}^{n} \hat{u}_t^2}$. Criteria for the hypothesis of no (positive) autocorrelation: reject if $d < d_L$; do not reject if $d > d_U$; the test is inconclusive if $d_L \le d \le d_U$.

- 142. Autocorrelation: Tests (cont.) Durbin-Watson d (cont.) Note that the d is symmetric about 2.0, so that negative autocorrelation will be indicated by a d > 2.0. Use the same distances above 2.0 as upper and lower bounds. Analysis of Time Series. http://cnx.org/content/m34544/latest/ January 18, 2013 Slide 142
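
A short sketch of the Durbin-Watson calculation on two hypothetical residual series, one with long runs of the same sign and one that alternates, shows how d falls below or rises above 2.0.

```python
def durbin_watson(resid):
    """Sum of squared successive differences over the sum of squared residuals."""
    num = sum((resid[t] - resid[t - 1]) ** 2 for t in range(1, len(resid)))
    den = sum(u * u for u in resid)
    return num / den

# Hypothetical residuals (illustrative only).
runs = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]         # positive autocorrelation
alternating = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]  # negative autocorrelation

d_runs = durbin_watson(runs)
d_alt = durbin_watson(alternating)
print(f"runs: d = {d_runs:.3f}; alternating: d = {d_alt:.3f}")
```

A useful approximation is $d \approx 2(1 - \hat{\rho})$, so d near 0 suggests strong positive autocorrelation and d near 4 strong negative autocorrelation. The bounds $d_L$ and $d_U$ still have to come from a Durbin-Watson table.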

- 143. Autocorrelation: Tests (cont.) Durbin's h: You cannot use the DW d if there is a lagged endogenous variable in the model. $h = \left(1 - \frac{d}{2}\right)\sqrt{\frac{T}{1 - T s_c^2}}$, where $s_c^2$ is the estimated variance of the coefficient on the $Y_{t-1}$ term. h has a standard normal distribution.

- 144. Autocorrelation: Tests (cont.) Tests for higher order autocorrelation: Ljung-Box Q ($\chi^2$ statistic): $Q' = T(T+2)\sum_{j=1}^{L} \frac{r_j^2}{T-j}$. Also called the Portmanteau test. Breusch-Godfrey.
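
The Ljung-Box statistic can be sketched directly from the formula, assuming $r_j$ is the usual lag-j sample autocorrelation of the residuals. The residual series is hypothetical.

```python
def ljung_box_q(resid, max_lag):
    """Q' = T(T+2) * sum_{j=1..L} r_j^2 / (T - j)."""
    T = len(resid)
    mean = sum(resid) / T
    dev = [u - mean for u in resid]
    c0 = sum(d * d for d in dev)                    # lag-0 sum of squares
    total = 0.0
    for j in range(1, max_lag + 1):
        r_j = sum(dev[t] * dev[t - j] for t in range(j, T)) / c0
        total += r_j ** 2 / (T - j)
    return T * (T + 2) * total

# Hypothetical alternating residuals (strong negative lag-1 autocorrelation).
resid = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]
Q = ljung_box_q(resid, max_lag=1)
print(f"Q' = {Q:.3f} (compare with chi-square, L = 1 df)")
```

In practice L is set larger than 1 so that several lags are tested jointly, and Q' is compared with the $\chi^2$ critical value for L degrees of freedom.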

- 145. Autocorrelation: Remedies Generalized Least Squares Later! First difference method Take 1st differences of your Xs and Y Regress Δ Y on ΔX Assumes that Φ = 1! This changes your model from one that explains rates to one that explains changes. Generalized differences Requires that Φ be known. January 18, 2013 Slide 145

- 146. Autocorrelation: Remedies Cochrane-Orcutt method: (1) Estimate the model using OLS and obtain the residuals, $\hat{u}_t$. (2) Using the residuals from the OLS, run the following regression: $\hat{u}_t = \hat{\rho}\hat{u}_{t-1} + v_t$.

- 147. Autocorrelation: Cochrane-Orcutt method (cont.) (3) Using the $\hat{\rho}$ obtained, perform the regression on the generalized differences $Y_t^* = B_1^* + B_2^* X_t^* + e_t^*$, where $B_1^* = B_1(1 - \hat{\rho})$, $Y_t^* = Y_t - \hat{\rho} Y_{t-1}$, $X_t^* = X_t - \hat{\rho} X_{t-1}$, and $B_2^* = B_2$. (4) Substitute the values of $B_1$ and $B_2$ into the original regression to obtain new estimates of the residuals. (5) Return to step 2 and repeat until $\hat{\rho}$ no longer changes. "No longer changes" means (approximately) that it is stable to 3 significant digits or, for instance, to the 3rd decimal place.
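
The five Cochrane-Orcutt steps can be sketched for a bivariate model as below. The data, the function names, the tolerance of 0.001 (the "3rd decimal place" rule), and the cap of 50 iterations are all illustrative assumptions.

```python
def ols(x, y):
    """Bivariate OLS; returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b

def gen_diff(series, rho):
    """Generalized differences z_t - rho * z_{t-1} for t = 2..n."""
    return [series[t] - rho * series[t - 1] for t in range(1, len(series))]

def cochrane_orcutt(x, y, tol=1e-3, max_iter=50):
    b1, b2 = ols(x, y)                                    # step 1: OLS
    rho = 0.0
    for iteration in range(1, max_iter + 1):
        # residuals from the original equation (step 4 on later passes)
        u = [yi - b1 - b2 * xi for xi, yi in zip(x, y)]
        # step 2: regress u_t on u_{t-1} (no intercept)
        new_rho = (sum(u[t] * u[t - 1] for t in range(1, len(u)))
                   / sum(v * v for v in u[:-1]))
        # step 3: regression on the generalized differences
        b1_star, b2 = ols(gen_diff(x, new_rho), gen_diff(y, new_rho))
        b1 = b1_star / (1 - new_rho)                      # from B1* = B1 (1 - rho)
        converged = abs(new_rho - rho) < tol              # step 5: stop when rho settles
        rho = new_rho
        if converged:
            break
    return b1, b2, rho, iteration

# Hypothetical data with smoothly drifting (autocorrelated) errors.
x = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3, 5, 8]
e = [0.5, 0.6, 0.4, 0.1, -0.2, -0.5, -0.4, -0.1, 0.2, 0.4, 0.5, 0.3]
y = [1 + 2 * xi + ei for xi, ei in zip(x, e)]

b1, b2, rho, iterations = cochrane_orcutt(x, y)
print(f"B1 = {b1:.3f}, B2 = {b2:.3f}, rho = {rho:.3f}, iterations = {iterations}")
```

The first observation is lost when the generalized differences are formed, which is the usual behavior of this method.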

- 148. Autocorrelation with lagged dependent variables The presence of a lagged dependent variable causes special estimation problems. Essentially you must purge the lagged error term of its autocorrelation by using a two-stage IV solution. Be careful with lagged dependent variable models: the lagged dependent variable may simply scoop up all the variance to be explained. A variety of models use lagged dependent variables: adaptive expectations, partial adjustment, rational expectations.

- 149. Model Specification: Definition The analyst should understand one fundamental “truth” about statistical models. They are all misspecified. We exist in a world of incomplete information at best. Hence model misspecification is an ever-present danger. We do, however, need to come to terms with the problems associated with misspecification so we can develop a feeling for the quality of information, description, and prediction produced by our models. January 18, 2013 Slide 149

- 150. Criteria for a “Good Model” Hendry & Richard criteria: Be data admissible (predictions must be logically possible). Be consistent with theory. Have weakly exogenous regressors (errors and X's uncorrelated). Exhibit parameter stability (the relationship cannot vary over time, unless modeled in that way). Exhibit data coherency (random residuals). Be encompassing (contain or explain the results of other models).

- 151. Model Specification: Definition (cont.) There are basically 4 types of misspecification we need to examine: functional form inclusion of an irrelevant variable exclusion of a relevant variable measurement error and misspecified error term January 18, 2013 Slide 151

- 152. Model Specification: Implications If an omitted variable is correlated with the included variables, the estimates are biased as well as inconsistent. In addition, the error variance is incorrect, and usually overestimated. If the omitted variable is uncorrelated with the included variables, the estimated error variance is still biased, even though the B's are not.

- 153. Model Specification: Implications Incorrect functional form can result in autocorrelation or heteroskedasticity. See the notes for these problems for the implications of each. January 18, 2013 Slide 153

- 154. Model Specification: Causes This one is easy - theoretical design. something is omitted, irrelevantly included, mismeasured or non-linear. This problem is explicitly theoretical. January 18, 2013 Slide 154

- 155. Data Mining There are techniques out there that look for variables to add. These are often atheoretical, but can they work? Note that data mining may alter the 'true' level of significance. With c candidates for variables in the model, and k actually chosen with an α = .05, the true level of significance is $\alpha^* = 1 - (1 - \alpha)^{c/k}$. Note the similarity to the Bonferroni correction (strictly, the Šidák form), $\alpha' = 1 - (1 - \alpha)^{1/k}$.
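
The effect of data mining on the significance level is easy to see numerically. The sketch below evaluates both formulas from the slide. The numbers of candidate and chosen variables are hypothetical, and the function names are not from the notes.

```python
def true_alpha(alpha, c, k):
    """True significance level with c candidate variables and k chosen."""
    return 1 - (1 - alpha) ** (c / k)

def per_test_alpha(alpha, k):
    """Per-test level that keeps the overall level at alpha across k tests."""
    return 1 - (1 - alpha) ** (1 / k)

# Hypothetical search: 15 candidate variables, 5 retained at the nominal .05 level.
nominal = 0.05
print(f"true alpha = {true_alpha(nominal, c=15, k=5):.4f}")
print(f"per-test alpha for 5 tests = {per_test_alpha(nominal, k=5):.4f}")
```

Retaining 5 of 15 candidates at a nominal .05 level therefore corresponds to a true level of about .14, nearly three times what is reported.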

- 156. Model Specification: Tests Actual Specification Tests No test can reveal poor theoretical construction per se. The best indicator that your model is misspecified is the discovery that the model has some undesirable statistical property; e.g. a misspecified functional form will often be indicated by a significant test for autocorrelation. Sometimes time-series models will have negative autocorrelation as a result of poor design. January 18, 2013 Slide 156

- 157. Ramsey RESET Test The Ramsey RESET test is a “Regression Specification Error Test.” You add powers of the predicted values of Y (such as $\hat{Y}^2$ and $\hat{Y}^3$) to the regression model. If they have a significant coefficient, then the errors are related to the predicted values, indicating that there is a specification error. This is based on demonstrating that there is some non-random behavior left in the residuals.

- 158. Model Specification: Tests Specification Criteria for lagged designs Most useful for comparing time series models with same set of variables, but differing number of parameters January 18, 2013 Slide 158