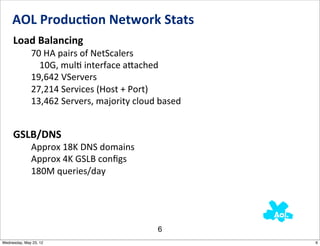

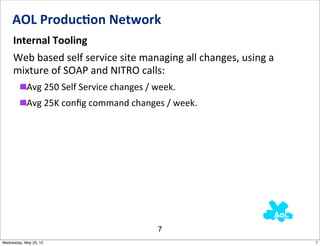

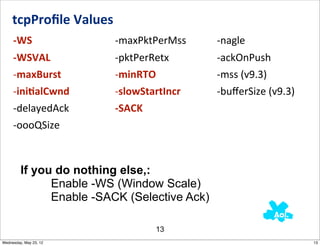

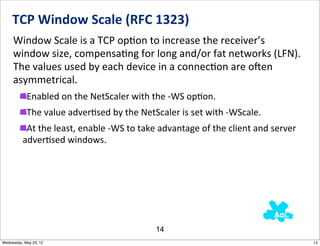

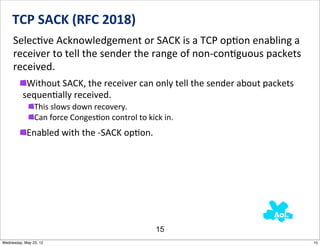

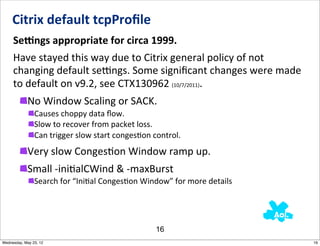

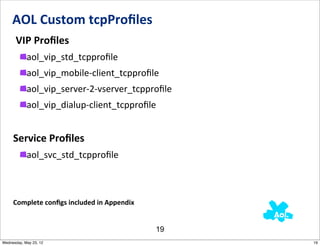

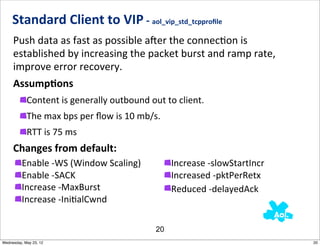

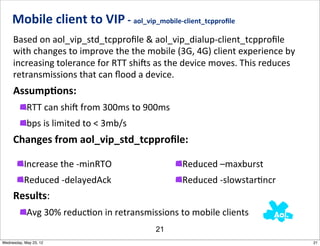

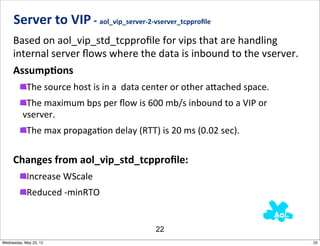

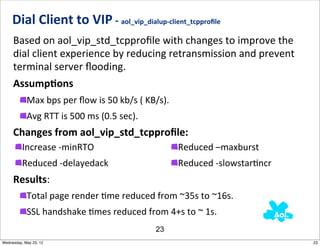

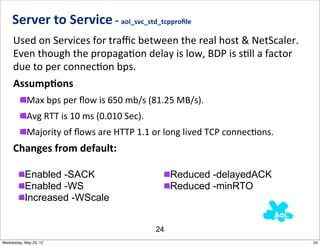

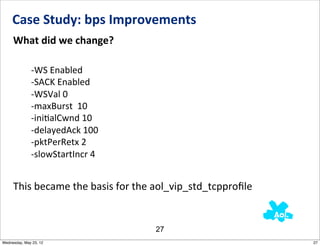

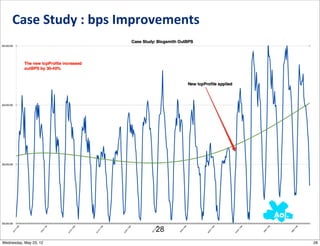

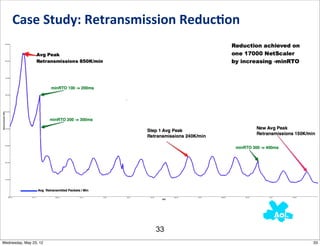

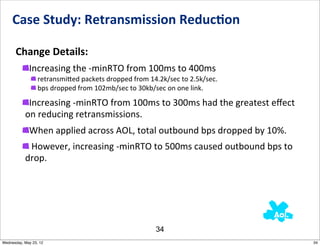

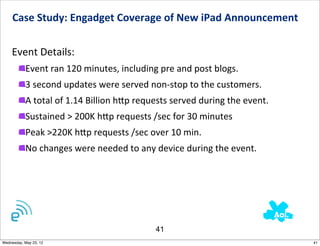

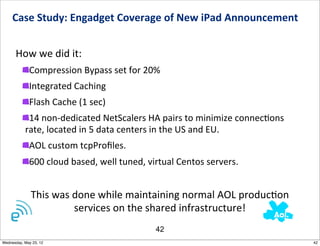

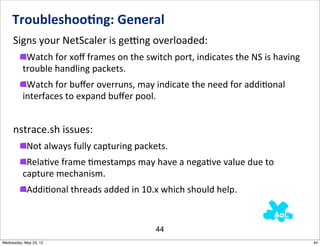

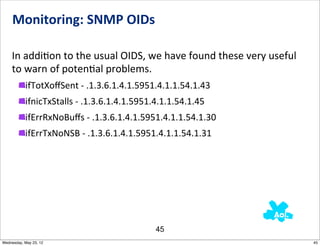

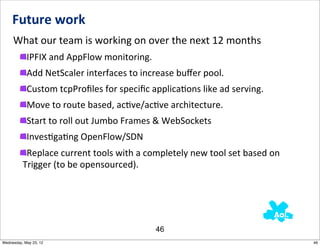

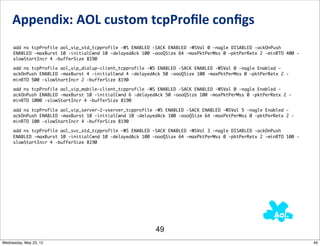

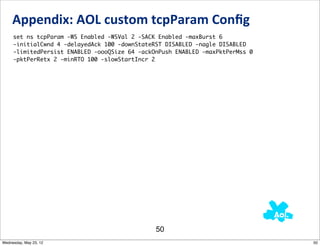

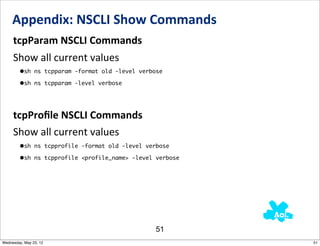

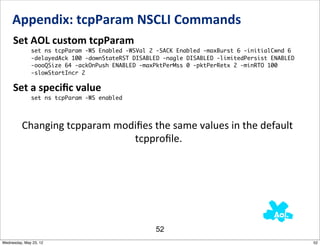

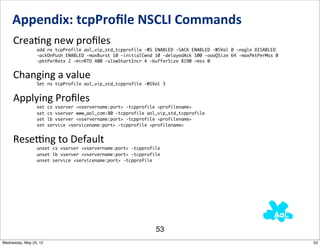

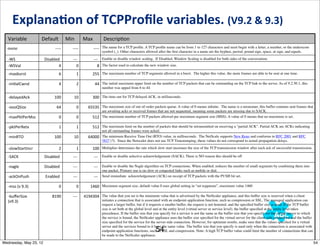

This document outlines the TCP performance tuning strategies implemented in the AOL network using NetScaler, with the goal of improving customer experience through more efficient network performance. It covers TCP tuning concepts, case studies showing significant improvements in throughput (bits per second) and retransmission rates, and the custom TCP profiles used for different connection types. The presentation also emphasizes monitoring and troubleshooting as essential to maintaining operational efficiency.
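The case studies judge each tuning change by two metrics: throughput in bits per second and retransmission rate. The sketch below shows one generic way to derive both from two snapshots of cumulative counters taken before and after a measurement window. It is illustrative only. `CounterSnapshot` and its fields are hypothetical and do not correspond to any NetScaler API or statistics output. The retransmission rate here uses one common definition: retransmitted segments divided by all segments sent in the window.

```python
from dataclasses import dataclass


@dataclass
class CounterSnapshot:
    """Hypothetical cumulative counters sampled at one point in time."""
    timestamp_s: float            # sample time in seconds
    bytes_sent: int               # cumulative payload bytes transmitted
    segments_sent: int            # cumulative TCP segments sent (incl. retransmissions)
    segments_retransmitted: int   # cumulative retransmitted segments


def throughput_bps(before: CounterSnapshot, after: CounterSnapshot) -> float:
    """Average throughput in bits per second over the window between snapshots."""
    elapsed = after.timestamp_s - before.timestamp_s
    if elapsed <= 0:
        raise ValueError("snapshots must be in increasing time order")
    return (after.bytes_sent - before.bytes_sent) * 8 / elapsed


def retransmission_rate(before: CounterSnapshot, after: CounterSnapshot) -> float:
    """Fraction of segments sent in the window that were retransmissions."""
    sent = after.segments_sent - before.segments_sent
    if sent == 0:
        return 0.0  # nothing sent, so nothing was retransmitted
    return (after.segments_retransmitted - before.segments_retransmitted) / sent


if __name__ == "__main__":
    start = CounterSnapshot(0.0, 0, 0, 0)
    end = CounterSnapshot(10.0, 12_500_000, 10_000, 150)
    print(f"throughput: {throughput_bps(start, end):,.0f} bps")
    print(f"retransmission rate: {retransmission_rate(start, end):.2%}")
```

Computing both metrics from counter deltas over the same window allows a before/after comparison of a profile change on equal terms. In practice the window should be long enough to smooth out short traffic bursts.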