Toward Automated Fact-Checking: Detecting Check-worthy Factual Claims by ClaimBuster

•

0 likes•48 views

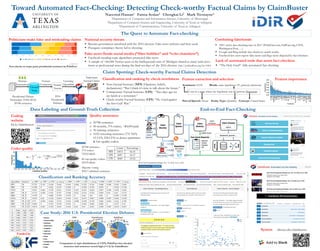

This paper describes how ClaimBuster, a fact-checking platform, uses natural language processing and supervised learning to detect important factual claims in political discourse. The claim-spotting model is trained on a human-labeled dataset of check-worthy factual claims drawn from U.S. general election debate transcripts. The paper explains the system's architecture and components, as well as the evaluation of the model. It presents a case study of how ClaimBuster provided live coverage of the 2016 U.S. presidential election debates and how it monitors social media and the Australian Hansard for factual claims. It also describes the current status and the long-term goals of ClaimBuster as we continue to develop and expand it.
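To make the supervised claim-spotting step concrete, here is a minimal sketch of a check-worthiness scorer. It assumes a toy binary labeling (1 = check-worthy factual claim, 0 = otherwise) and swaps in a multinomial Naive Bayes classifier over word tokens. It is not ClaimBuster's actual classifier (later work from the same lab compares against "predecessor SVM models"), and the training sentences, the `ClaimScorer` class, and the `tokenize` helper are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase word/number tokens; '%' is kept so '5%' stays one token."""
    return re.findall(r"[a-z0-9%]+", text.lower())


class ClaimScorer:
    """Toy multinomial Naive Bayes scorer: P(check-worthy | sentence)."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha  # Laplace smoothing constant

    def fit(self, sentences, labels):
        self.counts = {0: Counter(), 1: Counter()}  # token counts per class
        self.docs = Counter(labels)                  # sentence counts per class
        for sentence, label in zip(sentences, labels):
            self.counts[label].update(tokenize(sentence))
        self.vocab = set(self.counts[0]) | set(self.counts[1])
        return self

    def _log_joint(self, tokens, label):
        # log P(label) + sum of log P(token | label), with Laplace smoothing
        total = sum(self.counts[label].values())
        denom = total + self.alpha * len(self.vocab)
        log_p = math.log(self.docs[label] / sum(self.docs.values()))
        for tok in tokens:
            log_p += math.log((self.counts[label][tok] + self.alpha) / denom)
        return log_p

    def score(self, sentence):
        # Ignore tokens never seen in training, then normalize the two joints.
        tokens = [t for t in tokenize(sentence) if t in self.vocab]
        pos = self._log_joint(tokens, 1)
        neg = self._log_joint(tokens, 0)
        return 1.0 / (1.0 + math.exp(neg - pos))


# Invented training sentences, for illustration only.
train = [
    ("Unemployment fell to 5 percent last year", 1),
    ("We cut taxes for 90 percent of families", 1),
    ("The deficit has tripled since 2008", 1),
    ("Thank you all for being here tonight", 0),
    ("I think we need to come together", 0),
    ("God bless America", 0),
]
scorer = ClaimScorer().fit([s for s, _ in train], [y for _, y in train])
print(round(scorer.score("Taxes rose 20 percent last year"), 3))
print(round(scorer.score("Thank you and God bless"), 3))
```

A real claim spotter would need far more labeled data and richer features than bag-of-words counts; the point here is only the shape of the pipeline: label sentences, fit a classifier, and rank new sentences by a check-worthiness score.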



More from The Innovative Data Intelligence Research (IDIR) Laboratory, University of Texas at Arlington

Enabling Computational Journalism: Automated Fact-Checking and Story-Finding

The IDIR Lab at the University of Texas at Arlington has been at the frontier of computational journalism research over the past several years. This talk will discuss two systems developed in this thrust. (1) ClaimBuster, a fact-checking system, uses machine learning and natural language processing techniques to help journalists find political claims to fact-check. We will use it to find factual statements in the 2016 United States presidential debates that are worth checking, with the goal of expanding its use to other types of discourse, articles, social media, and non-political claims. ClaimBuster is still under development, but a near-real-time test during the recent August 6th Republican debate of the 2016 election suggests a statistically significant agreement between ClaimBuster and professional fact-checkers. This talk will discuss the ideas behind ClaimBuster, its data collection process, the experience with the Republican debate, and our quest for the "Holy Grail": a completely automated, live fact-checking machine that replaces human fact-checkers. (2) The talk will also briefly discuss FactWatcher, a system that helps journalists identify data-backed, attention-seizing facts that serve as leads to news stories. Given an append-only database, upon the arrival of a new tuple, FactWatcher checks whether the tuple triggers any new facts. Its algorithms efficiently search for facts without exhaustively testing all possible ones. Furthermore, FactWatcher provides multiple features in striving for an end-to-end system, including fact ranking, fact-to-statement translation, and keyword-based fact search. In 2014 the system won an Excellent Demonstration Award at VLDB, one of the two most prestigious conferences in the database area.

Restoring Trust by Computing: Data-driven Fact-checking and Exceptional Fact ...

Our society is struggling with an unprecedented amount of falsehood. Human fact-checkers cannot keep up with the volume of misinformation and disinformation and the speed at which they spread. This challenge creates an opportunity for automated fact-checking systems. We have been building ClaimBuster, an end-to-end system for data-driven fact-checking. ClaimBuster uses machine learning, natural language processing, and database query techniques to aid in the process of fact-checking. It monitors live discourse and social media to spot factual claims, detects matches between the claims and a curated repository of fact-checks, and delivers those matches instantly to the audience. For new claims of various types that have not been checked before, ClaimBuster automatically translates them into queries against knowledge databases and reports whether they check out. While development of the full-fledged system is still ongoing, several components of ClaimBuster are integrated and deployed at http://idir.uta.edu/claimbuster/. The project is part of the IDIR Lab's interdisciplinary research program in computational journalism. Under this program, we have also worked extensively on frameworks, algorithms, and systems for efficient discovery of data-backed facts. In particular, we developed Maverick, an extensible graph mining framework that discovers exceptional facts about entities in knowledge graphs, and FactWatcher (http://idir.uta.edu/factwatcher/), a suite of fact-finding algorithms that monitor structured event databases. The IDIR Lab is also conducting research on tackling usability challenges in querying, mining, and exploring entity graphs. This talk will first present a high-level overview of these projects, followed by a brief discussion of ClaimBuster's claim-spotting method and several ongoing directions. It will then provide more details about FactWatcher and Maverick, which represent a synergy of several research thrusts. Finally, the talk will discuss our plan for research on improving transparency and trust in fact-checking and improving the usability of fact-finding systems.
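The step of matching a spotted claim against a curated repository of fact-checks can be illustrated with a deliberately simple stand-in: token-set Jaccard similarity between the claim and each stored fact-check. The talk does not say how ClaimBuster measures similarity, so the `match_claim` function, the 0.3 threshold, and the example strings below are hypothetical.

```python
import re


def token_set(text):
    """Lowercased alphanumeric tokens; punctuation is dropped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def match_claim(claim, fact_checks, threshold=0.3):
    """Return (similarity, fact_check) pairs at or above threshold, best first."""
    claim_tokens = token_set(claim)
    scored = [(jaccard(claim_tokens, token_set(fc)), fc) for fc in fact_checks]
    hits = [pair for pair in scored if pair[0] >= threshold]
    return sorted(hits, key=lambda pair: pair[0], reverse=True)


# Hypothetical repository entries.
repository = [
    "Unemployment rate is 5 percent, says senator",
    "Crime rose in 2015",
]
print(match_claim("The unemployment rate is 5 percent", repository))
```

Lexical overlap misses paraphrases ("jobless rate" vs. "unemployment rate"), which is why a production matcher would likely use semantic representations instead; the sketch only shows where a similarity threshold sits in the pipeline.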

Tackling Usability Challenges in Querying Massive, Ultra-heterogeneous Graphs

There is a pressing need to tackle the usability challenges in querying massive, ultra-heterogeneous entity graphs, which use thousands of node and edge types to record millions to billions of entities (persons, products, organizations) and their relationships. Widely known instances of such graphs include Freebase, DBpedia, and YAGO. Applications in a variety of domains are tapping into such graphs for richer semantics and better intelligence. Both data workers and application developers are often overwhelmed by the daunting task of understanding and querying these data, due to their sheer size and complexity. To retrieve data from graph databases, the norm is to use structured query languages such as SQL, SPARQL, and the like. However, writing structured queries requires extensive experience with the query language and the data model. The database community has long recognized the importance of graphical query interfaces to the usability of data management systems, yet relatively little has been done. Existing visual query builders allow users to build queries by drawing query graphs, but they do not suggest which nodes and edges to include. At every step of query formulation, a user can be inundated with hundreds of options or more.

Towards improving the usability of graph query systems, Orion is a visual query interface that iteratively assists users in query graph construction by making suggestions using machine learning methods. In its active mode, Orion suggests the top-k edges to be added to a query graph, without being triggered by any user action. In its passive mode, the user adds a new edge manually, and Orion suggests a ranked list of labels for the edge. Orion's edge ranking algorithm, Random Correlation Paths (RCP), uses a query log to rank candidate edges by how likely they are to match the user's query intent. Extensive user studies using Freebase demonstrated that Orion users have a 70% success rate in constructing complex query graphs, a significant improvement over the 58% success rate of users of a baseline system that resembles existing visual query builders. Furthermore, using the active mode only, RCP was compared with several methods adapting other machine learning algorithms, such as random forests and a naive Bayes classifier, as well as with recommendation systems based on singular value decomposition. On average, RCP required only 40 suggestions to correctly reach a target query graph, while the other methods required 2 to 4 times as many.
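RCP itself is not detailed in this abstract, so the following is only a much simpler stand-in for query-log-based edge suggestion: rank candidate edge labels by how often they co-occur, in past queries, with every edge already in the user's query graph. The `suggest_edges` function and the toy query log are hypothetical, and edges are reduced to bare labels.

```python
from collections import Counter


def suggest_edges(query_edges, query_log, k):
    """Top-k labels that co-occur with all current edges in logged queries."""
    current = set(query_edges)
    counts = Counter()
    for past in query_log:
        if current <= past:  # past query contains every edge the user already has
            counts.update(past - current)
    # Most frequent first; ties broken alphabetically for determinism.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [label for label, _ in ranked[:k]]


# Hypothetical log of past query graphs, each as a set of edge labels.
log = [
    {"directed_by", "starring"},
    {"directed_by", "starring", "genre"},
    {"directed_by", "release_date"},
    {"starring", "genre"},
]
print(suggest_edges({"directed_by"}, log, k=2))
```

Requiring a past query to contain the whole current graph becomes very sparse as the graph grows; smoothing over partial matches is one obvious refinement, and handling that sparsity well is presumably part of what a dedicated algorithm such as RCP addresses.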

Dynamic Symbolic Database Application Testing

A database application differs from regular applications in that some of its inputs may be database queries. The program executes the queries on a database and may use any result values in its subsequent program logic. This means that a user-supplied query may determine the values the application uses in subsequent branching conditions. At the same time, a new database application is often required to work well on a body of existing data stored in some large database. For systematic testing of database applications, recent techniques replace the existing database with carefully crafted mock databases. Mock databases return values that trigger as many execution paths in the application as possible and thereby maximize overall code coverage of the database application.

In this paper we offer an alternative approach to database application testing. Our goal is to support software engineers in focusing testing on the existing body of data the application is required to work well on. To that end, we propose to side-step mock database generation and instead generate queries for the existing database. Our key insight is that we can use the information collected during previous program executions to systematically generate new queries that maximize the coverage of the application under test, while guaranteeing that the generated test cases focus on the existing data.
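A toy illustration of the idea, assuming a hypothetical application whose only branch depends on one queried value: after each run, flip the branch outcome that was observed and generate a new SQL query that asks the existing database for a row driving execution down the other side. It uses Python's built-in `sqlite3` module; `app_logic`, `BRANCH`, and the `accounts` table are invented for illustration, and real path conditions are far richer than a single predicate.

```python
import sqlite3

# Hypothetical branch predicate, written once in SQL and mirrored in app_logic.
BRANCH = "balance > 1000"


def app_logic(balance):
    """Hypothetical application code that branches on a queried value."""
    return "premium" if balance > 1000 else "standard"


def generate_tests(conn, initial_query):
    covered, attempted = set(), set()  # branch outcomes seen / already targeted
    pending = [initial_query]
    log = []
    while pending:
        query = pending.pop()
        row = conn.execute(query).fetchone()
        if row is None:
            continue  # the existing data cannot drive this path
        balance = row[0]
        taken = balance > 1000
        covered.add(taken)
        log.append((query, app_logic(balance)))
        # Target the opposite outcome with a query over the existing data.
        flipped = not taken
        if flipped not in covered and flipped not in attempted:
            attempted.add(flipped)
            predicate = BRANCH if flipped else f"NOT ({BRANCH})"
            pending.append(f"SELECT balance FROM accounts WHERE {predicate} LIMIT 1")
    return log


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (balance INTEGER)")
conn.executemany("INSERT INTO accounts VALUES (?)", [(500,), (2500,)])
for query, outcome in generate_tests(conn, "SELECT balance FROM accounts ORDER BY balance LIMIT 1"):
    print(outcome, "<-", query)
```

Note the design choice that distinguishes this from mock-database approaches: when no existing row satisfies the flipped predicate, the sketch simply records nothing for that path instead of fabricating data.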

Facetedpedia: Dynamic Generation of Query-Dependent Faceted Interfaces for Wi...

This paper proposes Facetedpedia, a faceted retrieval system for information discovery and exploration in Wikipedia. Given the set of Wikipedia articles resulting from a keyword query, Facetedpedia generates a faceted interface for navigating the result articles. Compared with other faceted retrieval systems, Facetedpedia is fully automatic and dynamic in both facet generation and hierarchy construction, and its facets are based on the rich semantic information in Wikipedia. The essence of our approach is to build upon the collaborative vocabulary in Wikipedia, more specifically its dense internal structure (hyperlinks) and folksonomy (category system). Given the sheer size and complexity of this corpus, the space of possible faceted interfaces is prohibitively large. We propose metrics for ranking individual facet hierarchies by users' navigational cost, and metrics for ranking interfaces (each with k facets) by both their average pairwise similarities and average navigational costs. We then develop faceted interface discovery algorithms that optimize these ranking metrics. Our experimental evaluation and user study verify the effectiveness of the system.

Entity-Relationship Queries over Wikipedia

Wikipedia is the largest user-generated knowledge base. We propose a structured query mechanism, entity-relationship query, for searching entities in the Wikipedia corpus by their properties and inter-relationships. An entity-relationship query consists of an arbitrary number of predicates on desired entities. The semantics of each predicate are specified with keywords. Entity-relationship queries search for entities directly over text rather than over pre-extracted structured data stores. This characteristic brings two benefits: (1) query semantics can be intuitively expressed by keywords; (2) it avoids the information loss that happens during extraction. We present a ranking framework for general entity-relationship queries and a position-based Bounded Cumulative Model for accurate ranking of query answers. Experiments on INEX benchmark queries and our own crafted queries show the effectiveness and accuracy of our ranking method.

Prominent Streak Discovery in Sequence Data

This paper studies the problem of prominent streak discovery in sequence data. Given a sequence of values, a prominent streak is a long consecutive subsequence consisting of only large (small) values. For finding prominent streaks, we make the observation that prominent streaks are skyline points in two dimensions: streak interval length and minimum value in the interval. Our solution thus hinges upon the idea of separating prominent streak discovery into two steps: candidate streak generation and a skyline operation over candidate streaks. For candidate generation, we propose the concept of local prominent streak (LPS). We prove that prominent streaks are a subset of LPSs and that the number of LPSs is less than the length of a data sequence, in comparison with the quadratic number of candidates produced by a brute-force baseline method. We develop efficient algorithms based on the concept of LPS. The non-linear LPS-based method (NLPS) considers a superset of LPSs as candidates, and the linear LPS-based method (LLPS) further guarantees to consider only LPSs. The results of experiments using multiple real datasets verified the effectiveness of the proposed methods and showed orders-of-magnitude performance improvement over the baseline method.
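The skyline view of streaks (large-value version) can be sketched in a few lines. The sketch below generates at most one candidate per position, namely the maximal interval in which that position holds the minimum, found with a monotonic stack, and then keeps the candidates not dominated in (length, minimum value). It is in the spirit of LPS-based candidate generation but is not the paper's NLPS or LLPS algorithm, and its final skyline step is a simple quadratic scan. The function names are hypothetical.

```python
def candidate_streaks(values):
    """For each position i, the maximal interval in which values[i] is the minimum."""
    n = len(values)
    left, right = [0] * n, [n - 1] * n
    stack = []
    for i, v in enumerate(values):  # nearest strictly smaller value to the left
        while stack and values[stack[-1]] >= v:
            stack.pop()
        left[i] = stack[-1] + 1 if stack else 0
        stack.append(i)
    stack = []
    for i in range(n - 1, -1, -1):  # nearest strictly smaller value to the right
        while stack and values[stack[-1]] >= values[i]:
            stack.pop()
        right[i] = stack[-1] - 1 if stack else n - 1
        stack.append(i)
    # Equal minima can yield the same interval; the set keeps one copy.
    return {(left[i], right[i], values[i]) for i in range(n)}


def prominent_streaks(values):
    """Skyline over (length, minimum): keep streaks no other candidate dominates."""
    cands = candidate_streaks(values)
    result = []
    for l, r, m in cands:
        length = r - l + 1
        dominated = any(
            (r2 - l2 + 1) >= length and m2 >= m and ((r2 - l2 + 1) > length or m2 > m)
            for l2, r2, m2 in cands
        )
        if not dominated:
            result.append((l, r, m))
    return sorted(result)


# Each result is (start index, end index inclusive, minimum value).
print(prominent_streaks([3, 5, 4, 6, 2]))
```

Restricting candidates to maximal intervals loses nothing: if a streak could be extended without lowering its minimum, the longer streak would dominate it, so every prominent streak is maximal for its own minimum.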

Anything You Can Do, I Can Do Better: Finding Expert Teams by CrewScout

CrewScout is an expert-team finding system based on the concept of skyline teams and efficient algorithms for finding such teams. Given a set of experts, CrewScout finds all k-expert skyline teams, which are not dominated by any other k-expert teams. The dominance between teams is governed by comparing their aggregated expertise vectors. The need for finding expert teams prevails in applications such as question answering, crowdsourcing, panel selection, and project team formation. The new contributions of this paper include an end-to-end system with an interactive user interface that assists users in choosing teams, and a demonstration of its application domains.
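A brute-force sketch of k-expert skyline teams, assuming expertise vectors are aggregated by element-wise sum (the abstract does not fix the aggregate) and that higher is better in every dimension. CrewScout's efficient algorithms avoid enumerating every team; this version enumerates all of them with `itertools.combinations` purely for illustration, and the experts and their vectors are hypothetical.

```python
from itertools import combinations


def dominates(u, v):
    """u dominates v: at least as good everywhere, strictly better somewhere."""
    return all(a >= b for a, b in zip(u, v)) and any(a > b for a, b in zip(u, v))


def skyline_teams(experts, k):
    teams = {}
    for team in combinations(sorted(experts), k):
        # Aggregate member vectors element-wise (assumed: sum).
        teams[team] = tuple(map(sum, zip(*(experts[name] for name in team))))
    return [t for t, vec in teams.items()
            if not any(dominates(other, vec) for other in teams.values())]


# Hypothetical experts with two expertise dimensions each.
experts = {"a": (3, 1), "b": (1, 3), "c": (2, 2), "d": (1, 1)}
print(skyline_teams(experts, 2))
```

The number of k-subsets grows combinatorially with the number of experts, which is exactly why pruning-based algorithms matter once the expert pool is realistic.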

Incremental Discovery of Prominent Situational Facts

We study the novel problem of finding new, prominent situational facts, which are emerging statements about objects that stand out within certain contexts. Many such facts are newsworthy, e.g., an athlete’s outstanding performance in a game or a viral video’s impressive popularity. Effective and efficient identification of these facts assists journalists in reporting, one of the main goals of computational journalism. Technically, we consider an ever-growing table of objects with dimension and measure attributes. A situational fact is a “contextual” skyline tuple that stands out against historical tuples in a context, specified by a conjunctive constraint involving dimension attributes, when a set of measure attributes is compared. New tuples are constantly added to the table, reflecting events happening in the real world. Our goal is to discover constraint-measure pairs that qualify a new tuple as a contextual skyline tuple, and to discover them quickly, before the event becomes yesterday’s news. A brute-force approach requires exhaustive comparison with every tuple, under every constraint, and in every measure subspace. We design algorithms in response to these challenges using three corresponding ideas: tuple reduction, constraint pruning, and sharing computation across measure subspaces. We also adopt a simple prominence measure to rank the discovered facts when they are numerous. Experiments over two real datasets validate the effectiveness and efficiency of our techniques.
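The definition above can be made concrete with the brute-force baseline it mentions, which is not the paper's optimized algorithm. For a new tuple, the sketch enumerates every conjunctive constraint built from the tuple's own dimension values and every non-empty measure subspace, and reports each pair under which no historical tuple in the context dominates the new one. Larger measure values are assumed to be better, and the table contents are hypothetical.

```python
from itertools import combinations


def dominates(t, u, measures):
    """t dominates u on the given measures (larger is assumed better)."""
    return (all(t[m] >= u[m] for m in measures)
            and any(t[m] > u[m] for m in measures))


def situational_facts(history, new, dims, measures):
    """Brute force: every (constraint, subspace) making `new` a contextual skyline tuple."""
    facts = []
    for r in range(len(dims) + 1):
        for dim_subset in combinations(dims, r):
            # Conjunctive constraint built from the new tuple's own dimension values.
            constraint = tuple((d, new[d]) for d in dim_subset)
            context = [t for t in history if all(t[d] == v for d, v in constraint)]
            for size in range(1, len(measures) + 1):
                for subspace in combinations(measures, size):
                    if not any(dominates(t, new, subspace) for t in context):
                        facts.append((constraint, subspace))
    return facts


history = [
    {"team": "A", "opponent": "X", "points": 30, "assists": 5},
    {"team": "B", "opponent": "X", "points": 25, "assists": 10},
]
new = {"team": "A", "opponent": "Y", "points": 28, "assists": 8}
for constraint, subspace in situational_facts(history, new, ["team", "opponent"], ["points", "assists"]):
    print(dict(constraint), subspace)
```

Even this tiny example reports facts for contexts with no history at all (any constraint involving opponent Y), which illustrates why the discovered facts can be numerous and benefit from ranking.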

Towards a Query-by-Example System for Knowledge Graphs

We are witnessing an unprecedented proliferation of knowledge graphs that record millions of heterogeneous entities and their diverse relationships. While knowledge graphs are structurally flexible and content-rich, they are difficult to query. The challenge lies in the gap between their overwhelming complexity and the limited database knowledge of non-professional users. If writing structured queries over “simple” tables is difficult, querying complex knowledge graphs is even harder. As an initial step toward improving the usability of knowledge graphs, we propose to query such data by example entity tuples, without requiring users to write complex graph queries. Our system, GQBE (Graph Query By Example), is a proof of concept showing that this querying paradigm can work in practice. The proposed framework automatically derives a hidden query graph from input query tuples and finds approximately matching answer graphs to obtain a ranked list of top-k answer tuples. It also lets users give feedback on the presented top-k answer tuples. The feedback is used to refine the query graph to better capture the user's intent. We conducted initial experiments on the real-world Freebase dataset and observed encouraging accuracy and efficiency. Our proposal of querying by example tuples provides a complementary approach to the existing keyword-based and query-graph-based methods, facilitating user-friendly graph querying. To the best of our knowledge, GQBE is among the first few emerging systems to query knowledge graphs by example entity tuples.
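GQBE's query-graph derivation and approximate matching are more involved than can be shown here. The sketch below only conveys the querying-by-example idea under a strong simplification: the "hidden query" is the set of edge labels directly linking the two entities of the example tuple, and other entity pairs are ranked by how many of those labels they share. The graph, entity names, and the `query_by_example` function are hypothetical.

```python
from collections import defaultdict


def query_by_example(edges, example):
    """Rank entity pairs by how many edge labels they share with the example pair."""
    labels_between = defaultdict(set)  # (subject, object) -> set of edge labels
    for subj, label, obj in edges:
        labels_between[(subj, obj)].add(label)
    pattern = labels_between[example]  # simplified stand-in for the hidden query graph
    ranked = []
    for pair, labels in labels_between.items():
        overlap = len(labels & pattern)
        if pair != example and overlap > 0:
            ranked.append((pair, overlap))
    # Best matches first; ties broken by pair name for determinism.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


edges = [
    ("alice", "founded", "acme"), ("alice", "ceo_of", "acme"),
    ("bob", "founded", "globex"), ("bob", "ceo_of", "globex"),
    ("carol", "founded", "initech"),
    ("dave", "works_at", "initech"),
]
print(query_by_example(edges, ("alice", "acme")))
```

Ranking partial matches (carol shares only one label) rather than discarding them mirrors the approximate-matching idea in the abstract, though GQBE considers multi-hop neighborhoods rather than only direct edges.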

Data In, Fact Out: Automated Monitoring of Facts by FactWatcher

Towards computational journalism, we present FactWatcher, a system that helps journalists identify data-backed, attention-seizing facts that serve as leads to news stories. FactWatcher discovers three types of facts, namely situational facts, one-of-the-few facts, and prominent streaks, through a unified suite of data model, algorithm framework, and fact ranking measure. Given an append-only database, upon the arrival of a new tuple, FactWatcher checks whether the tuple triggers any new facts. Its algorithms efficiently search for facts without exhaustively testing all possible ones. Furthermore, FactWatcher provides multiple features in striving for an end-to-end system, including fact ranking, fact-to-statement translation, and keyword-based fact search.


VIIQ: Auto-suggestion Enabled Visual Interface for Interactive Graph Query Fo...

We present VIIQ (pronounced “wick”), an interactive and iterative visual query formulation interface that helps users construct query graphs specifying their exact query intent. Heterogeneous graphs are increasingly used to represent complex relationships in schema-less data, which are usually queried using query graphs. Existing graph query systems offer little help to users in choosing the exact labels of the edges and vertices in the query graph. VIIQ helps users specify their exact query intent by providing a visual interface that lets them graphically add various query graph components, backed by an edge suggestion mechanism that suggests edges relevant to the user’s query intent. In this demo we present: 1) a detailed description of the various features and user-friendly graphical interface of VIIQ, 2) a brief description of the edge suggestion algorithm, and 3) a demonstration scenario that we intend to show the audience.

Crowdsourcing Pareto-Optimal Object Finding by Pairwise Comparisons

This is the first study of crowdsourcing Pareto-optimal object finding over partial orders and by pairwise comparisons, which has applications in public opinion collection, group decision making, and information exploration. Departing from prior studies on crowdsourcing skyline and ranking queries, it considers the case where objects do not have explicit attributes and preference relations on objects are strict partial orders. The partial orders are derived by aggregating crowdsourcers’ responses to pairwise comparison questions. The goal is to find all Pareto-optimal objects with the fewest possible questions. It employs an iterative question-selection framework. Guided by the principle of eagerly identifying non-Pareto-optimal objects, the framework only chooses candidate questions that satisfy three conditions. This design is both sufficient and efficient, as it is proven to find a short terminal question sequence. The framework is further steered by two ideas: macro-ordering and micro-ordering. By using different micro-ordering heuristics, the framework is instantiated into several algorithms with varying power in pruning questions. Experimental results using both a real crowdsourcing marketplace and simulations showed not only orders-of-magnitude reductions in questions compared with a brute-force approach, but also close-to-optimal performance from the most efficient instantiation.

Generating Preview Tables for Entity Graphs

Users are tapping into massive, heterogeneous entity graphs for many applications. It is challenging to select entity graphs for a particular need, given abundant datasets from many sources and the oftentimes scarce information about them. We propose methods to produce preview tables for compact presentation of important entity types and relationships in entity graphs. The preview tables help users attain a quick and rough preview of the data. They can be shown in a limited display space for a user to browse and explore, before she decides to spend time and resources to fetch and investigate the complete dataset. We formulate several optimization problems that look for previews with the highest scores according to intuitive goodness measures, under various constraints on preview size and distance between preview tables. The optimization problem under the distance constraint is NP-hard. We design a dynamic-programming algorithm and an Apriori-style algorithm for finding optimal previews. Results from experiments, comparison with related work, and user studies demonstrated the scoring measures’ accuracy and the discovery algorithms’ efficiency.

Comparing Automated Factual Claim Detection Against Judgments of Journalism O...

In this research, we deployed an automated fact-checking system (ClaimBuster) on the 2016 presidential primary debates and assessed its performance compared to professional news organizations. In real time, ClaimBuster scored the statements made by candidates on check-worthiness, the likelihood that a statement contained an important factual claim. Measured against the choices of professional journalists, its discrimination was high: statements chosen for fact-checking by CNN and PolitiFact had been scored much higher by ClaimBuster than those not selected. The topics of statements chosen for checking also mirrored the topics of those scored highly by ClaimBuster. Differences between the political parties and individual candidates in the use of factual claims are also presented.
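One common way to quantify this kind of discrimination, offered here as an illustration rather than as the study's reported metric, is the probability that a randomly chosen fact-checked statement received a higher check-worthiness score than a randomly chosen unchecked one. This is the area under the ROC curve, with ties counted as half. The scores below are made up.

```python
def discrimination_auc(selected_scores, other_scores):
    """P(score of a selected statement > score of a non-selected one); ties count 0.5."""
    wins = 0.0
    for s in selected_scores:
        for o in other_scores:
            if s > o:
                wins += 1.0
            elif s == o:
                wins += 0.5
    return wins / (len(selected_scores) * len(other_scores))


selected = [0.9, 0.7]         # hypothetical scores of statements that were fact-checked
unselected = [0.8, 0.3, 0.1]  # hypothetical scores of statements that were not
print(discrimination_auc(selected, unselected))
```

A value of 0.5 means the scores separate checked from unchecked statements no better than chance, and 1.0 means every checked statement outscored every unchecked one. The pairwise loop is quadratic; a rank-based formula gives the same value in O(n log n) for large debates.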

TableView: A Visual Interface for Generating Preview Tables of Entity Graphs

This paper introduces TableView, a tool that helps generate preview tables of massive heterogeneous entity graphs. Given the large number of datasets from many different sources, it is challenging to select entity graphs for a particular need. TableView produces preview tables to offer a compact presentation of important entity types and relationships in an entity graph. Users can quickly browse the preview in a limited space instead of spending precious time or resources to fetch and explore a complete dataset that turns out to be inappropriate for their needs. In this paper we present: 1) a detailed description of TableView’s graphical user interface and its various features, 2) a brief description of TableView’s preview scoring measures and algorithms, and 3) the demonstration scenarios we intend to present to the audience.

Maverick: Discovering Exceptional Facts from Knowledge Graphs
The Innovative Data Intelligence Research (IDIR) Laboratory, University of Texas at Arlington

We present Maverick, a general, extensible framework that discovers exceptional facts about entities in knowledge graphs. To the best of our knowledge, this problem has not been studied before. We model an exceptional fact about an entity of interest as a context-subspace pair, in which a subspace is a set of attributes and a context is defined by a graph query pattern of which the entity is a match. The entity is exceptional among the entities in the context with regard to the subspace. The search spaces of both patterns and subspaces are exponentially large. Maverick conducts a beam search over patterns, using a match-based pattern construction method to avoid evaluating invalid patterns. It applies two heuristics to select promising patterns to form the beam in each iteration. Maverick traverses and prunes the subspaces, organized as a set enumeration tree, by exploiting the upper-bound properties of exceptionality scoring functions. Experiments and user studies on real-world datasets demonstrated substantial performance improvements of the proposed framework over the baselines, as well as its effectiveness in discovering exceptional facts.
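The subspace search can be illustrated with a generic set-enumeration-tree traversal that uses bound-based pruning. This sketch rests on stated assumptions and is not Maverick's implementation. `score` and `upper_bound` are hypothetical callables supplied by the caller. `upper_bound(node, remaining)` is assumed to bound the score of `node` and of every superset formed by adding attributes from `remaining`. The toy additive score in the usage block is only for illustration and is not an exceptionality measure.

```python
from typing import Callable, FrozenSet, Sequence, Tuple

Subspace = FrozenSet[str]


def best_subspace(
    attributes: Sequence[str],
    score: Callable[[Subspace], float],
    upper_bound: Callable[[Subspace, Sequence[str]], float],
) -> Tuple[Subspace, float]:
    """Depth-first search of a set enumeration tree over `attributes`.

    A node is extended only with attributes that come later in the ordering,
    so each non-empty subset has exactly one node in the tree. A child's
    subtree is skipped when its upper bound cannot beat the best score
    found so far."""
    best: Tuple[Subspace, float] = (frozenset(), float("-inf"))

    def visit(node: Subspace, start: int) -> None:
        nonlocal best
        for i in range(start, len(attributes)):
            child = node | {attributes[i]}
            remaining = attributes[i + 1:]
            if upper_bound(child, remaining) <= best[1]:
                continue  # prune: nothing in this subtree can improve on best
            value = score(child)
            if value > best[1]:
                best = (child, value)
            visit(child, i + 1)

    visit(frozenset(), 0)
    return best


if __name__ == "__main__":
    # Toy additive score; the bound adds every positive weight still available.
    weights = {"a": 3.0, "b": -1.0, "c": 2.0}
    score = lambda s: sum(weights[x] for x in s)
    bound = lambda s, rest: score(s) + sum(max(weights[x], 0.0) for x in rest)
    subspace, value = best_subspace(["a", "b", "c"], score, bound)
    print(sorted(subspace), value)  # ['a', 'c'] 5.0
```

Pruning is only safe when the bound really holds for the whole subtree. The toy bound qualifies because adding attributes can at most add the remaining positive weights.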

An Empirical Study on Identifying Sentences with Salient Factual Statements
The Innovative Data Intelligence Research (IDIR) Laboratory, University of Texas at Arlington

In this paper, we show that by using a relatively simple neural network architecture and including edge (i.e., nonsensical) cases in the dataset, we can identify factual claims more reliably than predecessor SVM models. Doping the dataset with these nonsensical examples results in a more robust model overall, one that resists being tricked into assigning sentences to a category based on easily met criteria. Furthermore, we show that using multiple word embeddings makes little difference to the overall accuracy of the model, but particular embeddings perform differently on text that contains digits (i.e., 0-9). This can be leveraged by using multiple models to reach a conclusion on the score for a particular piece of text. Our results also show that, for our particular dataset, trying to differentiate sentences into more than two categories might hurt the overall accuracy of the models, or at least provide no substantial benefit over the binary classification scenario.
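One way to use models that behave differently on digits is to route each sentence according to whether it contains any digit 0-9. The sketch below is hypothetical. The two scorer callables stand in for classifiers built on different word embeddings, and which model handles numbers better would have to be measured. Simple routing is only one possible combination rule; averaging or voting are alternatives.

```python
import re
from typing import Callable

# A scorer maps a sentence to a check-worthiness score in [0, 1] (assumed).
Scorer = Callable[[str], float]

HAS_DIGIT = re.compile(r"[0-9]")


def routed_score(text: str, digit_model: Scorer, general_model: Scorer) -> float:
    """Score with `digit_model` when the text contains any digit 0-9,
    otherwise with `general_model`. Both models are hypothetical stand-ins."""
    model = digit_model if HAS_DIGIT.search(text) else general_model
    return model(text)


if __name__ == "__main__":
    digit_model = lambda text: 0.9    # stub scorers for illustration only
    general_model = lambda text: 0.2
    print(routed_score("My legislature was 87 percent Democrat.", digit_model, general_model))  # 0.9
    print(routed_score("It is time to talk about the future.", digit_model, general_model))  # 0.2
```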

More from The Innovative Data Intelligence Research (IDIR) Laboratory, University of Texas at Arlington

Enabling Computational Journalism: Automated Fact-Checking and Story-Finding

Restoring Trust by Computing: Data-driven Fact-checking and Exceptional Fact ...

Tackling Usability Challenges in Querying Massive, Ultra-heterogeneous Graphs

Facetedpedia: Dynamic Generation of Query-Dependent Faceted Interfaces for Wi...

Anything You Can Do, I Can Do Better: Finding Expert Teams by CrewScout

Incremental Discovery of Prominent Situational Facts

Towards a Query-by-Example System for Knowledge Graphs

Data In, Fact Out: Automated Monitoring of Facts by FactWatcher

Anything You Can Do, I Can Do Better: Finding Expert Teams by CrewScoutCrewsc...

VIIQ: Auto-suggestion Enabled Visual Interface for Interactive Graph Query Fo...

Crowdsourcing Pareto-Optimal Object Finding by Pairwise Comparisons

Comparing Automated Factual Claim Detection Against Judgments of Journalism O...

TableView: A Visual Interface for Generating Preview Tables of Entity Graphs

Maverick: Discovering Exceptional Facts from Knowledge Graphs

An Empirical Study on Identifying Sentences with Salient Factual Statements

Toward Automated Fact-Checking: Detecting Check-worthy Factual Claims by ClaimBuster

Naeemul Hassan¹, Fatma Arslan², Chengkai Li², Mark Tremayne³

¹Department of Computer and Information Science, University of Mississippi
²Department of Computer Science and Engineering, University of Texas at Arlington
³Department of Communication, University of Texas at Arlington

The Quest to Automate Fact-checking

- Fake news floods social media ("filter bubbles" and "echo chambers").
  - Facebook's trending-topic algorithms promoted fake news.
  - A sample of 140,000 Twitter users in the battleground state of Michigan shared as many junk news items as professional news items during the final ten days of the 2016 election. http://politicalbots.org/?p=1064
- Politicians make false and misleading claims.
  - Figure: Fact-checks on major-party presidential nominees by PolitiFact
- National security threats
  - The Russian government interfered with the 2016 election; fake-news websites and bots were used.
  - Pizzagate: a conspiracy theory led to a shooting.
- Combating falsehoods
  - 100+ active fact-checking sites in 2017 (PolitiFact.com, FullFact.org, CNN, Washington Post, …)
  - Google and Bing include fact-checks in search results.
  - Facebook lets users report false items and flags items disputed by fact-checkers.
- Lack of automated tools that assist fact-checkers

Claim Spotting: Check-worthy Factual Claims Detection

Pipeline: presidential debate transcripts (1960-2012, 20,788 sentences) → human annotation → ground truth → feature extraction → feature vectors → learning algorithm. The trained model then classifies and ranks sentences from the 2016 presidential debates by check-worthiness to surface important factual claims.

- Non-Factual Sentence (NFS) (opinions, beliefs, declarations): "But I think it's time to talk about the future."
- Unimportant Factual Sentence (UFS): "Two days ago we ate lunch at a restaurant."
- Check-worthy Factual Sentence (CFS): "He voted against the first Gulf War."

Feature extraction and selection, e.g., for "I was in a state where my legislature was 87 percent Democrat.":
- Entity type: Quantity
- Part of speech: Noun
- Concept: United States
- Sentiment: 0.032
- Words: state, legislature, 87, percent, democrat

Figure: Feature importance

Data Labeling and Ground-Truth Collection

- Coding website: bit.ly/claimbusters
- 20,788 sentences
- 20 months, 374 coders, ~$4,000 paid
- 30 training sentences
- 1,032 screening sentences (731 NFS, 63 UFS, 238 CFS) to detect spammers and low-quality coders

Quality assurance: 20,788 sentences, 374 coders, 76,552 labels → 86 top-quality coders, 52,333 labels → majority voting → 20,617 admitted sentences.

Figure: Coder quality

Figure: Classification and Ranking Accuracy

Case Study: 2016 U.S. Presidential Election Debates

Figure: Comparison of topic distributions of sentences fact-checked by CNN and PolitiFact and sentences scored high (≥ 0.5) by ClaimBuster

End-to-End Fact-Checking System: idir.uta.edu/claimbuster
- "The Holy Grail": fully automated fact-checking
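The quality-assurance flow above (screening sentences, top-quality coders, majority voting) can be sketched in Python. Several details are assumptions, because the poster does not state them. Coder quality is taken to be plain agreement with the reference labels on screening sentences. `min_quality=0.7` is an illustrative cutoff. A sentence is admitted only when a single label has the most votes, so ties are dropped. The function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# One label = (coder_id, sentence_id, label), label in {"NFS", "UFS", "CFS"}.
Label = Tuple[str, str, str]


def coder_quality(labels: List[Label], screening: Dict[str, str]) -> Dict[str, float]:
    """Fraction of screening sentences on which each coder matched the
    reference label (assumed measure; the poster gives no formula)."""
    seen: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for coder, sentence, label in labels:
        if sentence in screening:
            seen[coder] += 1
            hits[coder] += int(label == screening[sentence])
    return {coder: hits[coder] / seen[coder] for coder in seen}


def ground_truth(labels: List[Label], screening: Dict[str, str],
                 min_quality: float = 0.7) -> Dict[str, str]:
    """Majority-vote the labels of coders whose screening agreement is at
    least `min_quality` (illustrative cutoff). Tied sentences are not admitted."""
    quality = coder_quality(labels, screening)
    trusted = {coder for coder, q in quality.items() if q >= min_quality}
    votes: Dict[str, Counter] = defaultdict(Counter)
    for coder, sentence, label in labels:
        if coder in trusted:
            votes[sentence][label] += 1
    admitted: Dict[str, str] = {}
    for sentence, counts in votes.items():
        top = counts.most_common(2)
        if len(top) == 1 or top[0][1] > top[1][1]:
            admitted[sentence] = top[0][0]
    return admitted


if __name__ == "__main__":
    screening = {"s1": "CFS", "s2": "NFS"}  # toy reference labels
    labels = [
        ("alice", "s1", "CFS"), ("alice", "s2", "NFS"), ("alice", "s3", "CFS"), ("alice", "s4", "UFS"),
        ("bob", "s1", "CFS"), ("bob", "s2", "NFS"), ("bob", "s3", "CFS"), ("bob", "s4", "NFS"),
        ("eve", "s1", "NFS"), ("eve", "s2", "CFS"), ("eve", "s3", "NFS"),
    ]
    print(ground_truth(labels, screening))  # {'s1': 'CFS', 's2': 'NFS', 's3': 'CFS'}
```

In the toy run, "eve" fails the screening check and her labels are discarded. Sentence s4 is split 1-1 between the trusted coders and is not admitted. Dropping unresolved sentences in this way is one possible reason an admitted set could be smaller than the full sentence pool.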