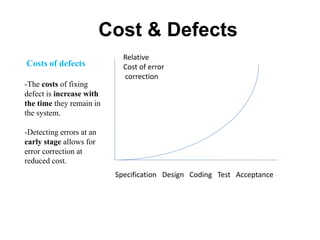

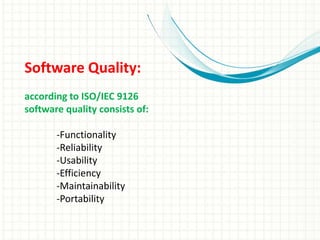

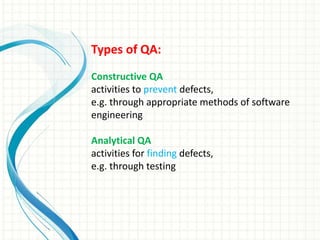

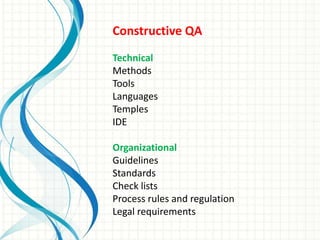

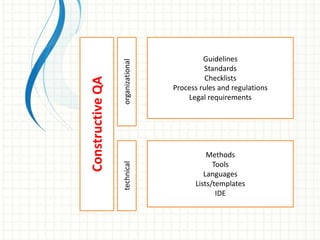

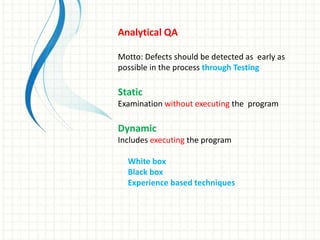

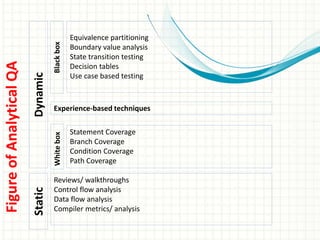

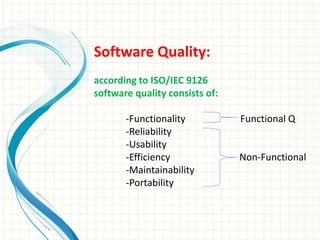

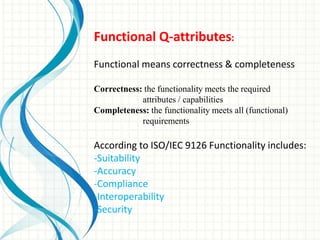

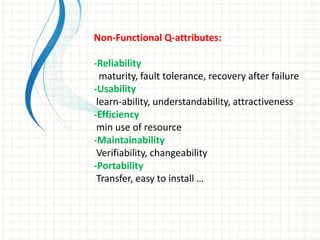

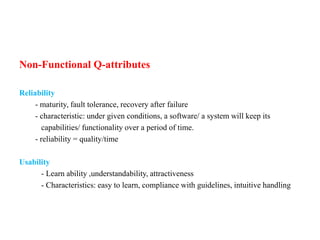

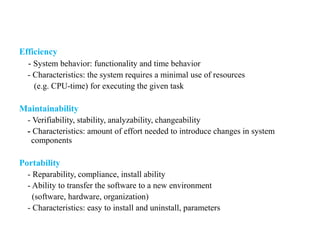

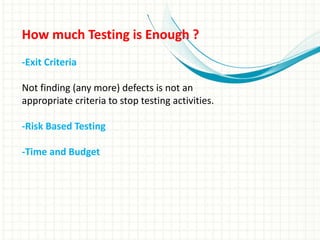

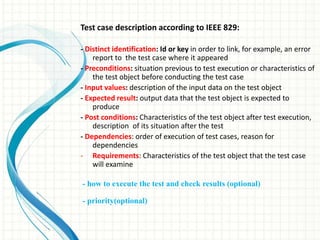

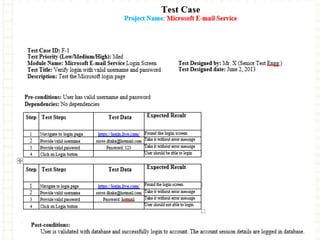

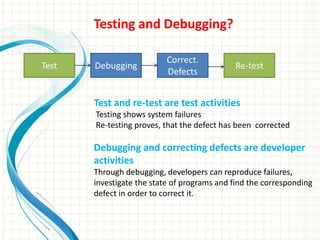

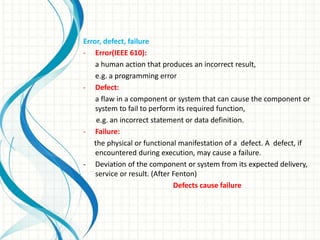

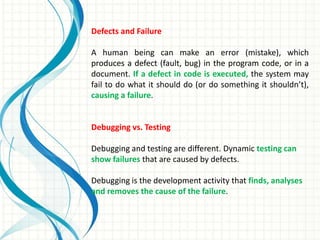

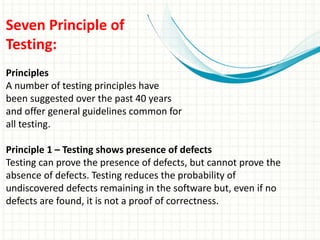

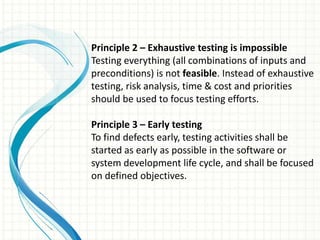

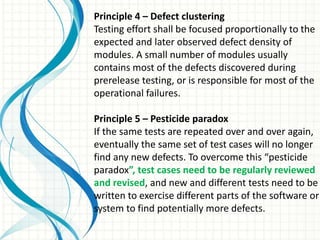

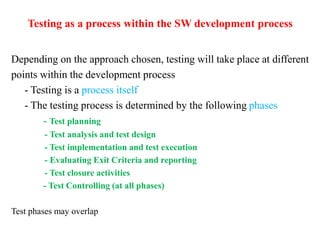

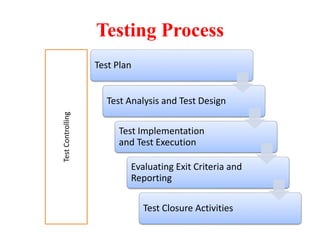

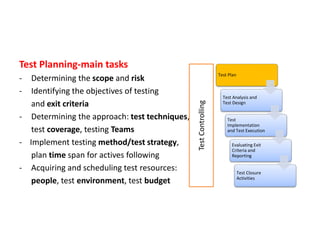

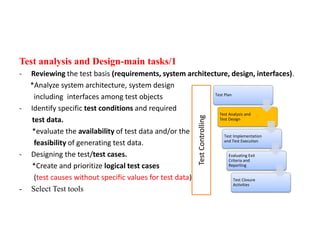

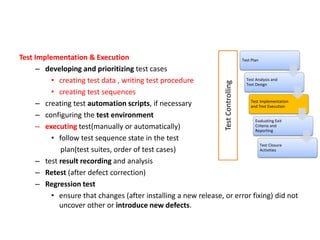

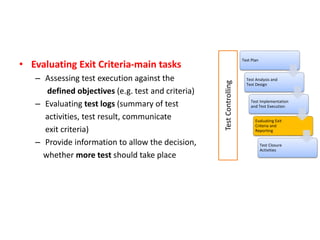

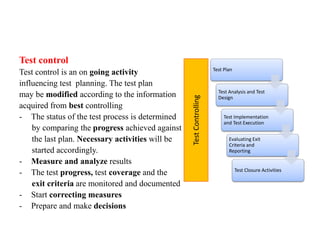

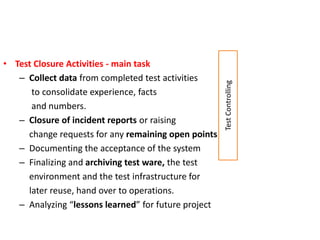

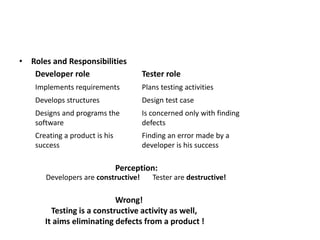

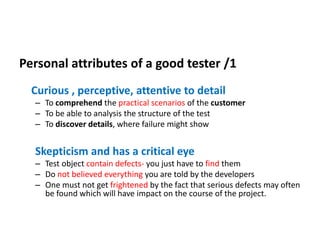

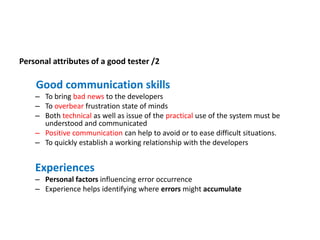

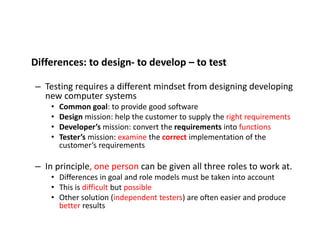

The presentation discusses software quality assurance and testing. It covers why software quality matters, the distinction between functional and non-functional quality, and the principles and process of software testing. The testing process consists of test planning, test analysis and design, test implementation and execution, evaluation of results, and test closure activities. The presentation emphasizes that testing is a critical part of the software development process, both for finding defects and for improving quality.
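To make the middle stages of the testing process more concrete, here is a minimal Python sketch. It is not taken from the presentation. The function under test (`apply_discount`), its requirement (a percentage between 0 and 100), the test cases, and the exit criterion ("all cases pass") are hypothetical assumptions chosen for illustration. The sketch shows analysis and design (deriving cases, including boundary values and an invalid input), implementation and execution (running each case), and evaluation of results against an exit criterion. Planning and closure are organisational activities and are not modelled here.

```python
from dataclasses import dataclass


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce price by percent (0-100)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


@dataclass
class Case:
    name: str
    price: float
    percent: float
    expected: object  # expected return value, or an exception type


# Analysis and design: derive cases from the assumed requirement,
# covering a typical value, both boundaries, and an invalid input.
CASES = [
    Case("no discount", 50.0, 0, 50.0),
    Case("typical discount", 80.0, 25, 60.0),
    Case("full discount", 80.0, 100, 0.0),
    Case("invalid percent", 80.0, 120, ValueError),
]


def run_case(case: Case) -> bool:
    """Implementation and execution: run one case and report pass/fail."""
    try:
        actual = apply_discount(case.price, case.percent)
    except Exception as exc:
        # Passes only if an exception was expected and it is the right type.
        return isinstance(case.expected, type) and isinstance(exc, case.expected)
    return actual == case.expected


def evaluate(cases: list) -> dict:
    """Evaluating results: summarise outcomes against a simple exit criterion."""
    results = {c.name: run_case(c) for c in cases}
    failed = [name for name, ok in results.items() if not ok]
    return {
        "passed": len(results) - len(failed),
        "failed": failed,
        "exit_criteria_met": not failed,  # assumed criterion: no failures
    }


if __name__ == "__main__":
    print(evaluate(CASES))
```

Running the script prints a summary with four passed cases, an empty failure list, and the exit criterion met. Changing an expected value (for example, expecting `10.0` for a 10% discount on `10.0`) makes that case appear in the `failed` list, which is the information the evaluation stage would feed into reporting and closure.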