Data science seminar - University of Tartu - SmartML

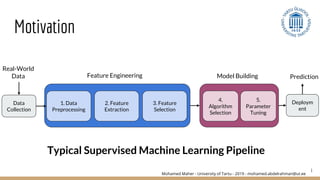

- 1. Mohamed Maher - University of Tartu - 2019 - mohamed.abdelrahman@ut.ee. Motivation: Typical Supervised Machine Learning Pipeline. Real-World Data → Data Collection → Feature Engineering (1. Data Preprocessing, 2. Feature Extraction, 3. Feature Selection) → Model Building (4. Algorithm Selection, 5. Parameter Tuning) → Deployment → Prediction.

- 2. Motivation: Model Building (4. Algorithm Selection, 5. Parameter Tuning). Examples of candidate algorithms:
  - Linear Classification (Simple Linear Classification, Ridge, Lasso, Simple Perceptron, …)
  - Support Vector Machines
  - Decision Tree (ID3, C4.5, C5.0, CART, …)
  - Nearest Neighbors
  - Gaussian Processes
  - Naive Bayes (Gaussian, Bernoulli, Complement, …)
  - Ensembling (Random Forest, GBM, AdaBoost, …)

- 3. Motivation: Model Building (4. Algorithm Selection, 5. Parameter Tuning). Example: Support Vector Machine hyperparameters:
  - Kernel: Linear, RBF, Polynomial
  - Gamma: [2^-15, 2^3]
  - Degree: 2, 3, …
  - C (penalty): [2^-5, 2^15]
  - …
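
To make the size of this search space concrete, here is a minimal sketch that draws random configurations from the ranges on the slide. Sampling on a log2 scale, restricting the degree to 2 and 3, and the name `sample_svm_config` are assumptions made for illustration; the slide does not say how SmartML samples.

```python
import random

# Ranges copied from the slide; log-uniform sampling is an assumption.
GAMMA_EXP = (-15, 3)   # gamma in [2^-15, 2^3]
C_EXP = (-5, 15)       # C in [2^-5, 2^15]
KERNELS = ("linear", "rbf", "polynomial")
DEGREES = (2, 3)       # the slide lists "2, 3, ..."; only these two are used here


def sample_svm_config(rng):
    """Draw one SVM configuration from the conditional search space."""
    kernel = rng.choice(KERNELS)
    config = {"kernel": kernel, "C": 2.0 ** rng.uniform(*C_EXP)}
    # gamma is only relevant for the non-linear kernels
    if kernel in ("rbf", "polynomial"):
        config["gamma"] = 2.0 ** rng.uniform(*GAMMA_EXP)
    # degree is only relevant for the polynomial kernel
    if kernel == "polynomial":
        config["degree"] = rng.choice(DEGREES)
    return config


rng = random.Random(0)
configs = [sample_svm_config(rng) for _ in range(5)]
for config in configs:
    print(config)
```

Even for a single algorithm, the space is conditional (some hyperparameters exist only for some kernels), which is part of why manual tuning is tedious.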

- 4. Motivation: Model Building (4. Algorithm Selection, 5. Parameter Tuning).

- 5. SmartML: A Meta Learning-Based Framework for Automated Selection and Hyperparameter Tuning for Machine Learning Algorithms
  1. First R package for automatic algorithm selection and hyper-parameter optimization.
  2. Built on top of 15 classifiers from different R packages.
  3. Collaborative knowledge base for meta-learning.
  4. Uses a modified version of SMAC that favors exploitation over exploration for hyper-parameter optimization.

- 6. Using SmartML more = a larger knowledge base for meta-learning.

- 7. Examples of Meta-Features:
  - Number of instances.
  - Ratio of numerical to categorical features.
  - Average skewness of numerical features.
  - Standard deviation of kurtosis of numerical features.
  - Mean number of symbols in categorical features.
  - Etc.
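
The meta-features listed above can be computed with a few lines of standard-library Python. This is a sketch only: the function names, the column-dictionary input format, the toy data, and the use of population moments are assumptions, not SmartML's actual implementation, and it assumes at least one numerical and one categorical column.

```python
from statistics import mean, pstdev


def skewness(xs):
    """Population skewness (third standardized moment)."""
    m, s = mean(xs), pstdev(xs)
    return 0.0 if s == 0 else mean([((x - m) / s) ** 3 for x in xs])


def kurtosis(xs):
    """Population excess kurtosis (fourth standardized moment minus 3)."""
    m, s = mean(xs), pstdev(xs)
    return 0.0 if s == 0 else mean([((x - m) / s) ** 4 for x in xs]) - 3.0


def meta_features(numeric, categorical):
    """numeric / categorical: dicts mapping column name -> list of values."""
    first_column = next(iter({**numeric, **categorical}.values()))
    return {
        "n_instances": len(first_column),
        "num_to_cat_ratio": len(numeric) / len(categorical),
        "mean_skewness": mean([skewness(v) for v in numeric.values()]),
        "std_kurtosis": pstdev([kurtosis(v) for v in numeric.values()]),
        "mean_symbols": mean([len(set(v)) for v in categorical.values()]),
    }


# Toy data, invented for illustration.
numeric = {"age": [20, 80, 45, 33], "blood_pressure": [120, 140, 130, 125]}
categorical = {"smoke": ["Never", "Never", "Occas", "Regul"]}
features = meta_features(numeric, categorical)
print(features)
```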

- 8. Meta-Learning. [Figure: the search space of algorithm selection and parameters, before and after meta-learning.]

- 9. Is that Everything? Forbes: "Cleaning Big Data: Most Time-Consuming, Least Enjoyable Data Science Task, Survey Says"

- 10. Motivation: Data Preprocessing (pipeline step 1, Data Preprocessing)
  - Different scale → Normalization?
  - Missing value → Imputation?
  - Non-numeric values → Encoding?

- 11. Motivation: Data Preprocessing (pipeline step 1, Data Preprocessing). Non-numeric values → Encoding? Example:

  | Example | Smoke |
  | --- | --- |
  | I1 | Never |
  | I2 | Never |
  | I3 | Occas |

- 12. Motivation: Data Preprocessing (pipeline step 1, Data Preprocessing). One-Hot-Encoding:

  | Example | Smoke | Smoke.Never | Smoke.Regul | Smoke.Occas |
  | --- | --- | --- | --- | --- |
  | I1 | Never | 1 | 0 | 0 |
  | I2 | Never | 1 | 0 | 0 |
  | I3 | Occas | 0 | 0 | 1 |
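
The one-hot encoding on this slide can be reproduced with a short sketch. The helper name `one_hot` is hypothetical; the category order (Never, Regul, Occas) follows the column order on the slide.

```python
def one_hot(values, categories):
    """Return one 0/1 indicator row per value, one column per category."""
    return [[1 if value == category else 0 for category in categories]
            for value in values]


smoke = ["Never", "Never", "Occas"]        # instances I1, I2, I3
categories = ["Never", "Regul", "Occas"]   # column order from the slide
encoded = one_hot(smoke, categories)
print(encoded)  # [[1, 0, 0], [1, 0, 0], [0, 0, 1]]
```

Each row contains exactly one 1, so the encoding adds one column per category without implying any order between categories.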

- 13. Motivation: Data Preprocessing (pipeline step 1, Data Preprocessing). Label Encoder:

  | Example | Smoke | Smoke (label-encoded) |
  | --- | --- | --- |
  | I1 | Never | 0 |
  | I2 | Never | 0 |
  | I3 | Occas | 2 |
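
A label encoder replaces each category with an integer code. The sketch below reproduces the slide's numbers under the assumption that the codes follow the order Never = 0, Regul = 1, Occas = 2; the helper name `label_encode` is hypothetical.

```python
def label_encode(values, categories):
    """Map each value to the index of its category."""
    code = {category: index for index, category in enumerate(categories)}
    return [code[value] for value in values]


smoke = ["Never", "Never", "Occas"]        # instances I1, I2, I3
categories = ["Never", "Regul", "Occas"]   # assumed code order
labels = label_encode(smoke, categories)
print(labels)  # [0, 0, 2]
```

Unlike one-hot encoding, this keeps a single column but imposes a numeric order on the categories, which some algorithms will interpret as meaningful.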

- 14. Motivation: Data Preprocessing (pipeline step 1, Data Preprocessing). Examples of data preprocessors:
  1. Scaling
  2. Normalization
  3. Standardization
  4. Binarization
  5. Imputation
  6. Deletion
  7. One-Hot-Encoding
  8. Hashing
  9. Discretization
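
Three of the preprocessors named above (imputation, scaling, standardization) can be sketched as follows. Mean imputation and min-max scaling are just one possible variant of each; the function names and data are invented for illustration.

```python
from statistics import mean, pstdev


def impute_mean(xs):
    """Replace missing values (None) with the mean of the observed ones."""
    fill = mean([x for x in xs if x is not None])
    return [fill if x is None else x for x in xs]


def min_max_scale(xs):
    """Rescale to [0, 1]; assumes the values are not all identical."""
    lo, hi = min(xs), max(xs)
    return [(x - lo) / (hi - lo) for x in xs]


def standardize(xs):
    """Shift to zero mean and unit variance; assumes non-zero variance."""
    m, s = mean(xs), pstdev(xs)
    return [(x - m) / s for x in xs]


raw = [20, None, 80, 50]
filled = impute_mean(raw)        # [20, 50, 80, 50]
scaled = min_max_scale(filled)   # [0.0, 0.5, 1.0, 0.5]
z_scores = standardize(filled)
print(filled, scaled, z_scores)
```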

- 15. Motivation: Dimensionality Reduction (pipeline step 2, Feature Extraction). Example: Principal Component Analysis, which reduces the number of dataset dimensions while keeping as much of the variation as possible.
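
As a rough illustration of "keeping as much variation as possible", the sketch below finds the first principal component of a small 2-D dataset with power iteration on the covariance matrix and reports the share of variance it explains. Power iteration is swapped in here to avoid external libraries; the data and the function name are invented.

```python
import math


def first_principal_component(rows, iterations=200):
    """Return (unit direction, explained-variance ratio) of the top component."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in rows]
    # Sample covariance matrix of the centered data.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration: repeatedly apply the covariance matrix and renormalize.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Variance along v, relative to the total variance (trace of cov).
    variance = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d))
                   for i in range(d))
    total = sum(cov[i][i] for i in range(d))
    return v, variance / total


# Two strongly correlated features: one component captures almost everything.
rows = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 8.0], [5.0, 9.8]]
direction, explained = first_principal_component(rows)
print(direction, explained)
```

Here the two columns are almost perfectly correlated, so projecting onto a single direction loses very little variance.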

- 16. Motivation: Dimensionality Reduction (pipeline step 3, Feature Selection). Example: Univariate Feature Selection (fast):

  | Age | Year of Birth | Diabetes | Blood Pressure | Early Bird / Night Owl | Smoker | Mortality (Class Labels) |
  | --- | --- | --- | --- | --- | --- | --- |
  | 20 | 1999 | Yes | Normal | Night Owl | No | Low |
  | 80 | 1939 | No | Normal | Early Bird | No | High |

  Best two features → they are the same!
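
The pitfall on this slide can be reproduced with a small sketch that scores each feature independently by its absolute Pearson correlation with the target. The six rows of data are invented for illustration (the slide shows only two), mortality is encoded as 0 = Low and 1 = High, and year of birth is derived as 2019 minus age, which matches the two rows on the slide.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


age = [20, 35, 50, 65, 80, 72]
features = {
    "age": age,
    "year_of_birth": [2019 - a for a in age],
    "smoker": [0, 1, 0, 1, 0, 0],
    "night_owl": [1, 0, 1, 0, 0, 1],
}
mortality = [0, 0, 0, 1, 1, 1]  # 0 = Low, 1 = High

# Univariate selection: score every feature on its own, keep the best two.
scores = {name: abs(pearson(column, mortality))
          for name, column in features.items()}
top_two = sorted(scores, key=scores.get, reverse=True)[:2]
redundancy = pearson(features["age"], features["year_of_birth"])
print(top_two, redundancy)
```

Both selected features are age in disguise (their mutual correlation is -1), so the second one adds no information. Univariate scoring cannot see this because it never compares features with each other.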

- 17. Motivation: Dimensionality Reduction (pipeline step 3, Feature Selection). Example: Multivariate Feature Selection (slow):

  | Age | Year of Birth | Diabetes | Blood Pressure | Early Bird / Night Owl | Smoker | Mortality (Class Labels) |
  | --- | --- | --- | --- | --- | --- | --- |
  | 20 | 1999 | Yes | Normal | Night Owl | No | Low |
  | 80 | 1939 | No | Normal | Early Bird | No | High |

  - Are we going to try every possible set of features?
  - How many features are enough?
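
To put numbers on the first question: with 6 features there are 2^6 - 1 = 63 non-empty subsets, and the count doubles with every added feature. Sequential Forward Selection is a greedy alternative that grows the subset one feature at a time. The sketch below uses a made-up scoring function (`toy_score`, with invented relevance values and a redundancy penalty) in place of a real model evaluation.

```python
def sequential_forward_selection(features, score, k):
    """Greedily add the feature that most improves score(subset), k times."""
    selected, evaluations = [], 0
    while len(selected) < k:
        best, best_score = None, float("-inf")
        for feature in features:
            if feature in selected:
                continue
            candidate_score = score(selected + [feature])
            evaluations += 1
            if candidate_score > best_score:
                best, best_score = feature, candidate_score
        selected.append(best)
    return selected, evaluations


# Hypothetical relevance values; a real system would train and validate a model.
RELEVANCE = {"age": 0.9, "year_of_birth": 0.9, "diabetes": 0.5,
             "blood_pressure": 0.4, "night_owl": 0.1, "smoker": 0.6}


def toy_score(subset):
    total = sum(RELEVANCE[f] for f in subset)
    # Penalize the redundant pair: together they are worth only one of them.
    if "age" in subset and "year_of_birth" in subset:
        total -= 0.9
    return total


names = list(RELEVANCE)
selected, evaluations = sequential_forward_selection(names, toy_score, k=2)
exhaustive = 2 ** len(names) - 1
print(selected, evaluations, exhaustive)  # ['age', 'smoker'] 11 63
```

Because subsets are scored jointly, the redundant pair is avoided, at the price of more evaluations than univariate ranking but far fewer than exhaustive search.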

- 18. Motivation: Dimensionality Reduction (pipeline steps 2, Feature Extraction, and 3, Feature Selection)
  - Examples of Feature Extraction:
    1. Principal Component Analysis
    2. Linear Discriminant Analysis
    3. Multiple Discriminant Analysis
    4. Independent Component Analysis
  - Examples of Multivariate Feature Selection:
    1. Relief
    2. Correlation Feature Selection
    3. Branch and Bound
    4. Sequential Forward Selection
    5. Plus L - Minus R
  - Examples of Univariate Feature Selection:
    1. Information Gain
    2. Fisher Score
    3. Correlation with Target

- 20. Skills Required by a Data Scientist. KDnuggets: "The Most in Demand Skills for Data Scientists"

- 21. Skills Required by a Data Scientist. KDnuggets: "The Most in Demand Skills for Data Scientists". Data Scientist for 21st Century.

- 23. Data Vs Data Scientist

- 27. Ongoing Work: A Framework for Automated Optimized Machine Learning Pipelines in the Big Data Era

- 28. Design Principles

- 29. Design Principles: 1. Meta-Learning

- 30. Design Principles: 1. Meta-Learning: Collaborative Knowledge Base. The meta-learning mechanism and the collaborative knowledge base play an effective role in dramatically reducing the search space and in quickly suggesting, as a warm-up step, initial pipelines that are likely to perform well.
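
One common way to realize such a warm start is a nearest-neighbor lookup: find the datasets in the knowledge base whose meta-features are closest to the new dataset and try the algorithms that worked best on them first. The sketch below illustrates that idea only. The knowledge-base entries, the meta-feature names, the Euclidean distance, and the assumption that meta-features are already on comparable scales are all invented, and the slides do not specify how SmartML performs the lookup.

```python
import math

# Hypothetical knowledge base: (meta-features, best-performing algorithm).
KNOWLEDGE_BASE = [
    ({"log_n_instances": 3.0, "num_to_cat_ratio": 2.0, "mean_skewness": 0.1}, "svm"),
    ({"log_n_instances": 3.2, "num_to_cat_ratio": 1.8, "mean_skewness": 0.2}, "random_forest"),
    ({"log_n_instances": 6.0, "num_to_cat_ratio": 0.2, "mean_skewness": 1.5}, "naive_bayes"),
    ({"log_n_instances": 5.8, "num_to_cat_ratio": 0.3, "mean_skewness": 1.2}, "decision_tree"),
]


def distance(a, b):
    """Euclidean distance between two meta-feature dictionaries."""
    return math.sqrt(sum((a[key] - b[key]) ** 2 for key in a))


def suggest_algorithms(meta, k=2):
    """Return the algorithms of the k most similar known datasets."""
    ranked = sorted(KNOWLEDGE_BASE, key=lambda entry: distance(meta, entry[0]))
    return [algorithm for _, algorithm in ranked[:k]]


new_dataset = {"log_n_instances": 3.05, "num_to_cat_ratio": 1.9, "mean_skewness": 0.15}
suggested = suggest_algorithms(new_dataset)
print(suggested)
```

Hyperparameter tuning then only has to cover the shortlisted algorithms rather than every algorithm in the framework.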

- 31. Design Principles: 1. Meta-Learning, 2. Distributed

- 32. Design Principles: 2. Distributed. We are extending our framework to remain agnostic towards the underlying machine learning platform and to make use of the distributed machine learning platforms that are becoming essential nowadays. (Figure labels: Data, Centralized Platforms.)

- 33. Design Principles. Experiment using LDA classifier:

  | Dataset | Metric | Machine (1): 24$/month, 2 cores, 4 GB memory | Machine (2): 194$/month, 8 cores, 32 GB memory | Cluster: 145$/month, 6 × Machine (1) |
  | --- | --- | --- | --- | --- |
  | SUSY (2.3 GB) | Time (s) | Memory Err | 392 | 87 |
  | SUSY (2.3 GB) | Accuracy | Memory Err | 76.0% | 76.0% |
  | SUSY (2.3 GB) | Cost | Memory Err | 2.93$ | 0.49$ |
  | HIGGS (7.8 GB) | Time (s) | Memory Err | 720 | 180 |
  | HIGGS (7.8 GB) | Accuracy | Memory Err | 64.0% | 63.9% |
  | HIGGS (7.8 GB) | Cost | Memory Err | 5.39$ | 1.0$ |
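
The slide does not say how the Cost figures were derived. One plausible reading, shown in the sketch below, is that the monthly price is pro-rated by running time. Assuming a 30-day month, this reproduces the slide's Cost numbers to the displayed precision when the result is expressed in cents rather than dollars. Both the pro-rating formula and the unit are inferences, not statements from the slide.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # assumption: 30-day billing month


def prorated_cost_cents(seconds, monthly_price_usd):
    """Share of the monthly price used by a run, in US cents."""
    return 100 * monthly_price_usd * seconds / SECONDS_PER_MONTH


# (dataset, platform) -> (time in seconds, monthly price in USD), from the slide.
runs = {
    ("SUSY", "Machine (2)"): (392, 194),
    ("SUSY", "Cluster"): (87, 145),
    ("HIGGS", "Machine (2)"): (720, 194),
    ("HIGGS", "Cluster"): (180, 145),
}

costs = {key: prorated_cost_cents(*value) for key, value in runs.items()}
for key, cents in costs.items():
    print(key, round(cents, 2))
```

Under this reading, the cluster is both faster (392 s versus 87 s on SUSY, about 4.5 times) and cheaper per run than the single large machine.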

- 34. Design Principles: 1. Meta-Learning, 2. Distributed, 3. Composability

- 35. Design Principles: 3. Composability. The SmartML framework will combine services from the different available machine learning frameworks, as these libraries vary in their capabilities.

  | | Weka | Scikit Learn | Spark MLlib | Mahout | … |
  | --- | --- | --- | --- | --- | --- |
  | # Data Preprocessors | 32 | 12 | 6 | 0 | |
  | # Feature Engineering | 14 | 12 | 5 | 5 | |
  | # Classification Algorithms | 23 | 15 | 7 | 3 | |
  | # Regression Algorithms | 14 | 10 | 6 | 0 | |

- 36. Design Principles: 1. Meta-Learning, 2. Distributed, 3. Composability, 4. Language Agnostic

- 37. Design Principles: 4. Language Agnostic. To ensure interoperability and integration with the different machine learning frameworks, we are designing our framework to remain agnostic towards the supported programming languages. In particular, we are providing API interfaces for various programming languages (e.g., Python, Java, Scala), as well as REST APIs that can be embedded in any programming language in addition to being used as a RESTful web service.

- 38. Architecture

- 39. Challenges: Optimization of the Pipeline Recommendation and Execution Process. The optimizer needs to exploit any available opportunity to share the execution of tasks across the recommended pipelines by establishing a joint execution graph. It also needs to make smart decisions based on intermediate results after executing the graph of tasks. For example, the optimizer can decide to stop some branches of the recommended pipelines early, based on their initial or intermediate results.
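
The benefit of a joint execution graph can be illustrated by counting step executions. In the sketch below, each pipeline is a tuple of step names and any common prefix is executed only once, as if the pipelines were merged into a prefix tree. The pipelines and the function name are invented, and early stopping of branches is not modeled.

```python
def count_shared_executions(pipelines):
    """Number of step executions when identical prefixes run only once."""
    executed = set()
    for pipeline in pipelines:
        # Every distinct prefix corresponds to one node in the joint graph.
        for length in range(1, len(pipeline) + 1):
            executed.add(pipeline[:length])
    return len(executed)


# Hypothetical recommended pipelines.
pipelines = [
    ("impute", "scale", "pca", "svm"),
    ("impute", "scale", "pca", "random_forest"),
    ("impute", "scale", "svm"),
    ("impute", "one_hot", "naive_bayes"),
]

naive = sum(len(pipeline) for pipeline in pipelines)  # run each pipeline separately
shared = count_shared_executions(pipelines)           # run the joint graph
print(naive, shared)  # 14 8
```

In this toy case sharing removes 6 of 14 step executions; the saving grows when the shared steps are the expensive ones, such as preprocessing a large dataset.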

- 40. Challenges: Optimization of the Pipeline Recommendation and Execution Process. The cost model of the framework's optimizer needs to consider several aspects, for example the time budget and the efficient scheduling and optimized distribution/parallelization of the pipeline's tasks (e.g., preprocessing, feature engineering, training, hyper-parameter tuning) over the available computing resources.

- 41. Challenges: Automated Preprocessing & Feature Engineering. It is very challenging to fully automate these steps because they depend heavily on the domain and the nature of the data. Human interpretation of the impact of the different features on the model prediction is still required. Our framework considers a wide range of data preprocessors that can be applied to the data using two main mechanisms:
  1. Pre-defined (hard-coded) rules, e.g. 20/03/2019 → Month: March, Day: 20, Year: 2019.
  2. A meta-learning study that analyzes the outcomes of applying different combinations of pipelines to various datasets.

  "What cannot be completely attained, should not be completely left."
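
The date example from the slide can be written as a hard-coded rule in a few lines. The function name `expand_date` and the fixed day/month/year input format are assumptions; month names are hard-coded so the result does not depend on the system locale.

```python
from datetime import datetime

MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]


def expand_date(text):
    """Split a DD/MM/YYYY string into separate Month, Day and Year features."""
    parsed = datetime.strptime(text, "%d/%m/%Y")
    return {"Month": MONTHS[parsed.month - 1], "Day": parsed.day, "Year": parsed.year}


expanded = expand_date("20/03/2019")
print(expanded)  # {'Month': 'March', 'Day': 20, 'Year': 2019}
```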

- 42. Challenges: Automating the Trade-offs. AutoML systems introduce new hyperparameters and decisions of their own that need to be optimized. Deciding on the optimal values of these parameters can be very challenging for non-expert end users. Examples:
  - Type of evaluation/hyperparameter optimization method to use.
  - Time budget to wait before getting the recommended pipeline.
